TensorFlow Single Layer Perceptron

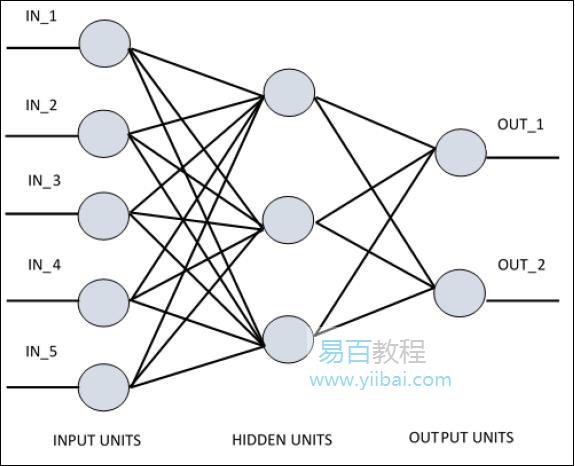

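To understand the single layer perceptron, it is important to first understand artificial neural networks (ANNs). An artificial neural network is an information processing system whose mechanism is inspired by the function of biological neural circuits. It consists of many processing units that are connected to one another. The schematic diagram of an artificial neural network is as follows -

The diagram shows that the hidden units communicate with the external layers, whereas the input and output units communicate only through the hidden layer of the network.

The pattern of connections between nodes, the total number of layers, the levels of nodes between input and output, and the number of neurons per layer together define the architecture of a neural network.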
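As a rough illustration of how layer and neuron counts pin down an architecture, the sketch below describes a network by its layer sizes and counts the trainable parameters. It assumes fully connected layers with one bias per unit; the helper name `count_parameters` and the hidden layer size of 256 are hypothetical choices made only for this example.

```python
def count_parameters(layer_sizes):
    """Count weights and biases of a fully connected network.

    layer_sizes lists the number of units per layer, input layer first.
    Assumption: every unit connects to every unit in the next layer,
    and every non-input unit has one bias.
    """
    total = 0
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        total += n_in * n_out  # one weight per connection
        total += n_out         # one bias per receiving unit
    return total


single_layer = [784, 10]      # inputs wired directly to the outputs
multi_layer = [784, 256, 10]  # 256 hidden units is an arbitrary illustrative size

print(count_parameters(single_layer))  # 7850
print(count_parameters(multi_layer))   # 203530
```

The sizes 784 and 10 match the 28*28 pixel inputs and the 10 digit classes of the MNIST listing later in this article.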

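There are two types of architecture. They differ in how the artificial neural network functions, and are as follows -

- Single layer perceptron

- Multilayer perceptron

Single Layer Perceptron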

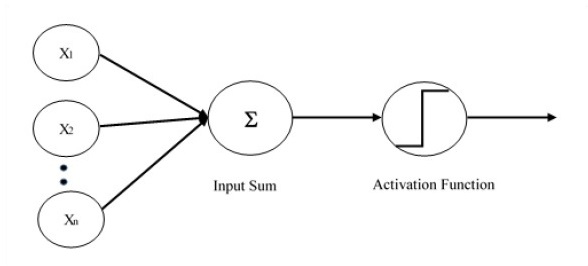

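The single layer perceptron was the first neural model to be proposed. The content of the neuron's local memory consists of a vector of weights. The perceptron computes a weighted sum: each element of the input vector is multiplied by the corresponding element of the weight vector, and the products are added together. The resulting value is the input to an activation function.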
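The computation described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the article's implementation: the function name `perceptron_output`, the bias term, the choice of a sigmoid activation, and the sample values are all assumptions made for the example.

```python
import math


def perceptron_output(inputs, weights, bias=0.0):
    """Weighted sum of the inputs followed by a sigmoid activation."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    # Multiply each input by its corresponding weight and add the products.
    weighted_sum = sum(x * w for x, w in zip(inputs, weights)) + bias
    # The weighted sum is the input to the activation function (sigmoid assumed).
    return 1.0 / (1.0 + math.exp(-weighted_sum))


inputs = [1.0, 0.5, -1.0]   # illustrative input vector
weights = [0.2, -0.4, 0.1]  # illustrative weight vector
print(perceptron_output(inputs, weights))
```

Here the weighted sum is 0.2 - 0.2 - 0.1 = -0.1, so the sigmoid returns a value slightly below 0.5.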

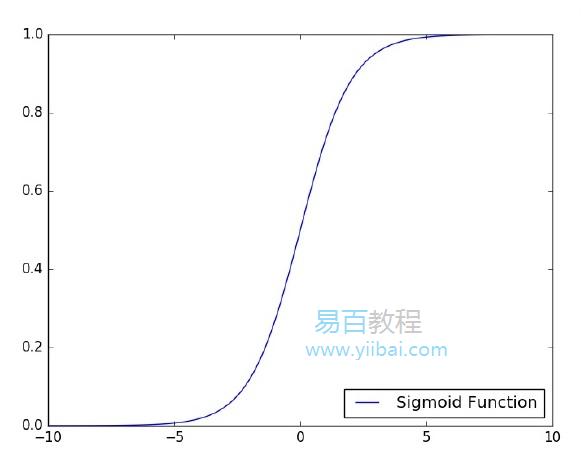

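Let us now see how to implement a single layer perceptron for an image classification problem using TensorFlow. The best way to illustrate the single layer perceptron is through its representation as logistic regression.

Now, consider the following basic steps for training logistic regression -

- The weights are initialized with random values at the beginning of training.

- For each element of the training set, the error is calculated from the difference between the desired output and the actual output. The calculated error is used to adjust the weights.

- The process is repeated until the error on the entire training set falls below the specified threshold, or until the maximum number of iterations is reached.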
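The three steps above can be sketched without TensorFlow as follows. This is only an illustrative sketch under stated assumptions: the function name `train_logistic`, the learning rate, the threshold, the use of mean squared error as the stopping measure, and the tiny logical-AND data set are all hypothetical choices that do not come from the article.

```python
import math
import random


def train_logistic(samples, learning_rate=0.5, threshold=0.05,
                   max_iterations=10000, seed=0):
    """Train one sigmoid unit; returns (weights, bias, iterations, mean_error)."""
    rng = random.Random(seed)
    n_features = len(samples[0][0])
    # Step 1: initialize the weights with random values.
    weights = [rng.uniform(-0.5, 0.5) for _ in range(n_features)]
    bias = rng.uniform(-0.5, 0.5)

    mean_error = float("inf")
    iteration = 0
    for iteration in range(1, max_iterations + 1):
        squared_error = 0.0
        for features, target in samples:
            z = sum(w * x for w, x in zip(weights, features)) + bias
            output = 1.0 / (1.0 + math.exp(-z))
            # Step 2: error = desired output - actual output ...
            error = target - output
            squared_error += error * error
            # ... and the error is used to adjust the weights.
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, features)]
            bias += learning_rate * error
        mean_error = squared_error / len(samples)
        # Step 3: stop once the error over the whole training set is below
        # the threshold; otherwise continue until max_iterations is reached.
        if mean_error < threshold:
            break
    return weights, bias, iteration, mean_error


# Hypothetical training set: the logical AND of two binary inputs.
and_samples = [([0.0, 0.0], 0.0), ([0.0, 1.0], 0.0),
               ([1.0, 0.0], 0.0), ([1.0, 1.0], 1.0)]
weights, bias, iterations, error = train_logistic(and_samples)
print("stopped after", iterations, "iterations, mean squared error", round(error, 4))
```

The loop has two exits, matching the last step in the list: an early `break` when the error drops below the threshold, and the natural end of the `for` loop when the iteration budget is used up.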

The complete code for training and evaluating logistic regression is given below -

# Import MNIST data (requires TensorFlow 1.x)
from tensorflow.examples.tutorials.mnist import input_data
mnist = input_data.read_data_sets("/tmp/data/", one_hot=True)

import tensorflow as tf
import matplotlib.pyplot as plt

# Parameters
learning_rate = 0.01
training_epochs = 25
batch_size = 100
display_step = 1

# tf Graph Input
x = tf.placeholder("float", [None, 784])  # MNIST image of shape 28*28 = 784
y = tf.placeholder("float", [None, 10])   # 0-9 digit recognition => 10 classes

# Create model
# Set model weights (initialized to zeros in this example)
W = tf.Variable(tf.zeros([784, 10]))
b = tf.Variable(tf.zeros([10]))

# Construct model
activation = tf.nn.softmax(tf.matmul(x, W) + b)  # Softmax

# Minimize error using cross entropy
cross_entropy = y * tf.log(activation)
cost = tf.reduce_mean(-tf.reduce_sum(cross_entropy, axis=1))
optimizer = tf.train.GradientDescentOptimizer(learning_rate).minimize(cost)

# Plot settings
avg_set = []
epoch_set = []

# Initializing the variables
init = tf.global_variables_initializer()

# Launch the graph
with tf.Session() as sess:
    sess.run(init)

    # Training cycle
    for epoch in range(training_epochs):
        avg_cost = 0.
        total_batch = int(mnist.train.num_examples / batch_size)

        # Loop over all batches
        for i in range(total_batch):
            batch_xs, batch_ys = mnist.train.next_batch(batch_size)
            # Fit training using batch data
            sess.run(optimizer, feed_dict={x: batch_xs, y: batch_ys})
            # Compute average loss
            avg_cost += sess.run(cost, feed_dict={x: batch_xs, y: batch_ys}) / total_batch

        # Display logs per epoch step
        if epoch % display_step == 0:
            print("Epoch:", '%04d' % (epoch + 1), "cost=", "{:.9f}".format(avg_cost))
            avg_set.append(avg_cost)
            epoch_set.append(epoch + 1)

    print("Training phase finished")

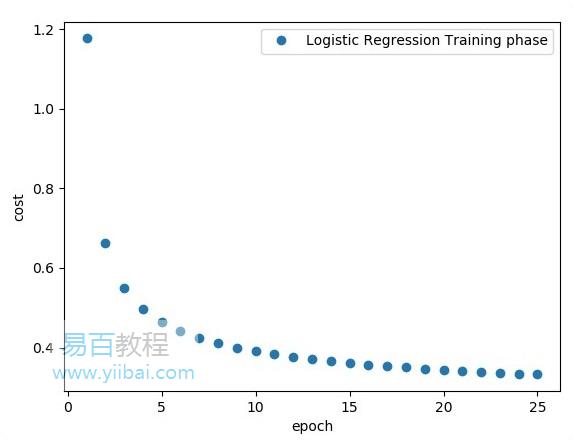

    # Still inside the `with tf.Session() as sess:` block, so the session is open
    plt.plot(epoch_set, avg_set, 'o', label='Logistic Regression Training phase')
    plt.ylabel('cost')
    plt.xlabel('epoch')
    plt.legend()
    plt.show()

    # Test model
    correct_prediction = tf.equal(tf.argmax(activation, 1), tf.argmax(y, 1))

    # Calculate accuracy
    accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))
    print("Model accuracy:", accuracy.eval({x: mnist.test.images, y: mnist.test.labels}))

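Running the code above produces the following output -

Logistic regression is also considered a form of predictive analysis. It is used to describe data and to explain the relationship between one dependent binary variable and one or more nominal or independent variables.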
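The softmax activation, cross-entropy cost, and accuracy check used in the TensorFlow listing can be traced by hand for a single example. The plain Python sketch below is an illustration only: the helper names `softmax`, `cross_entropy` and `argmax`, and the three-class scores, are assumptions made for this example and are not part of the listing.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    largest = max(logits)
    # Subtracting the largest score avoids overflow and leaves the result unchanged.
    exps = [math.exp(v - largest) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(one_hot_label, probabilities):
    """Cross entropy for one example: -sum(y * log(p))."""
    return -sum(y * math.log(p)
                for y, p in zip(one_hot_label, probabilities) if y > 0)


def argmax(values):
    """Index of the largest value, playing the role of tf.argmax along one row."""
    return max(range(len(values)), key=lambda i: values[i])


logits = [2.0, 1.0, 0.1]  # illustrative scores for 3 classes (x*W + b in the listing)
label = [1.0, 0.0, 0.0]   # one-hot label: class 0 is the correct class

probs = softmax(logits)
loss = cross_entropy(label, probs)
is_correct = argmax(probs) == argmax(label)
print(probs, loss, is_correct)
```

Averaging `loss` over a batch corresponds to `cost` in the listing, and averaging `is_correct` over the test set corresponds to `accuracy`.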