TensorFlow - Convolutional Neural Networks

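Having covered the concepts of machine learning, we can now turn to deep learning. Deep learning is a branch of machine learning, and its applications include image recognition and speech recognition.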

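The following are two important types of deep neural networks -

- Convolutional neural networks

- Recurrent neural networks

In this chapter, we focus on the CNN, the convolutional neural network.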

Convolutional Neural Networks

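Convolutional neural networks are designed to process data through multiple layers of arrays. This type of neural network is used in applications such as image recognition and face recognition. The main difference between a CNN and an ordinary neural network is that a CNN takes its input as a two-dimensional array and operates directly on the image, instead of relying on a separate feature-extraction step as other neural networks do.

CNNs are the dominant approach to recognition problems. Top companies such as Google and Facebook have invested in research and development of recognition projects so that these tasks can be completed faster.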

A convolutional neural network uses three basic ideas -

- Local receptive fields

- Convolution

- Pooling

Let us look at these ideas in detail.

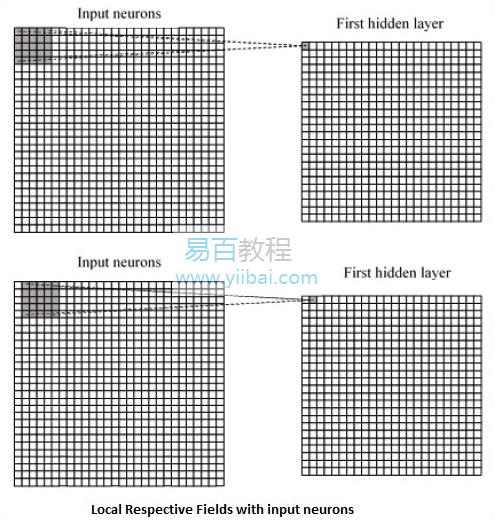

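A CNN exploits the spatial correlation that exists within the input data. Each successive layer of the network connects to only a small region of input neurons. This region is called the local receptive field. Each local receptive field feeds one hidden neuron, which processes the input data inside that field and is unaffected by changes outside its boundary.

The diagram above illustrates how local receptive fields are generated.

In that diagram, each connection learns a weight for the hidden neuron, and the connections move with the receptive field as it slides from one position to the next across the input layer. This sliding process is called "convolution".

Because every position of the receptive field reuses the same connections from the input layer to the hidden feature map, these weights are called "shared weights", and the accompanying bias is called the "shared bias".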
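As a minimal sketch of the sliding-window idea described above, the following plain-Python function applies one shared kernel and one shared bias to every local receptive field of a small input. The function name `convolve2d`, the 4 x 4 input and the 2 x 2 kernel are illustrative assumptions, not part of the tutorial's code. Like `tf.nn.conv2d`, it does not flip the kernel, and for simplicity it only visits positions where the kernel fits entirely inside the input.

```python
def convolve2d(image, kernel, bias=0.0):
    """Slide one shared kernel over the image (stride 1, no padding)."""
    k_h, k_w = len(kernel), len(kernel[0])
    out_h = len(image) - k_h + 1
    out_w = len(image[0]) - k_w + 1
    feature_map = []
    for row in range(out_h):
        out_row = []
        for col in range(out_w):
            # The local receptive field is the k_h x k_w patch at (row, col).
            total = bias  # the same (shared) bias at every position
            for i in range(k_h):
                for j in range(k_w):
                    # The same (shared) weights at every position.
                    total += image[row + i][col + j] * kernel[i][j]
            out_row.append(total)
        feature_map.append(out_row)
    return feature_map


# Hypothetical 4 x 4 input and 2 x 2 kernel, chosen only for illustration.
image = [
    [1, 2, 3, 0],
    [0, 1, 2, 3],
    [3, 0, 1, 2],
    [2, 3, 0, 1],
]
kernel = [
    [1, 0],
    [0, 1],
]
feature_map = convolve2d(image, kernel, bias=0.5)
for row in feature_map:
    print(row)
```

Each output value depends only on one 2 x 2 patch of the input, and all nine outputs are computed with the same four weights and the same bias.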

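A CNN also uses pooling layers, which are placed immediately after the convolutional layers. A pooling layer takes the feature map produced by the convolutional layer as its input and produces a condensed feature map, summarizing the neurons of the previous layer.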
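To make the condensing step concrete, here is a small sketch of max pooling in plain Python. The function name `max_pool` and the sample values are assumptions made for illustration. It uses a 2 x 2 window with stride 2 and ignores padding, which gives the same result as 'SAME' padding whenever the input size is even.

```python
def max_pool(feature_map, pool=2, stride=2):
    """Keep the largest value in each pool x pool window."""
    pooled = []
    for r in range(0, len(feature_map) - pool + 1, stride):
        out_row = []
        for c in range(0, len(feature_map[0]) - pool + 1, stride):
            window = [
                feature_map[r + i][c + j]
                for i in range(pool)
                for j in range(pool)
            ]
            out_row.append(max(window))
        pooled.append(out_row)
    return pooled


# Hypothetical 4 x 4 feature map, chosen only for illustration.
feature_map = [
    [1, 3, 2, 4],
    [5, 6, 1, 2],
    [7, 2, 9, 1],
    [3, 4, 5, 8],
]
pooled = max_pool(feature_map)
print(pooled)
```

The 4 x 4 map shrinks to 2 x 2, so each pooling layer with stride 2 halves the width and height of its input.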

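TensorFlow Implementation of CNN

In this section, we walk through a TensorFlow implementation of a CNN. The code uses the TensorFlow 1.x API (placeholders and sessions). The steps needed to build the whole network with the appropriate dimensions are shown below -

Step 1 - Import TensorFlow, NumPy and the dataset module needed to build the CNN model.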

import tensorflow as tf

import numpy as np

from tensorflow.examples.tutorials.mnist import input_data

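Step 2 - Declare a function called run_cnn(), which holds the hyperparameters, the optimization variables and the data placeholders. The optimization variables define how training proceeds.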

def run_cnn():
    mnist = input_data.read_data_sets("MNIST_data/", one_hot = True)
    learning_rate = 0.0001
    epochs = 10
    batch_size = 50
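The `one_hot = True` argument above asks for each digit label as a 10-element vector rather than a single integer. The following sketch shows that encoding in plain Python; the helper name `one_hot` is an assumption for illustration and is not a TensorFlow function.

```python
def one_hot(label, num_classes=10):
    """Return a vector with 1.0 at the label's index and 0.0 elsewhere."""
    vector = [0.0] * num_classes
    vector[label] = 1.0
    return vector


encoded = one_hot(3)
# Taking the index of the largest entry recovers the original label.
decoded = encoded.index(max(encoded))
print(encoded, decoded)
```

This is why the label placeholder in the next step has shape [None, 10], and why the accuracy calculation later compares argmax values.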

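Step 3 - In this step, we declare the placeholder for the training data. Its second dimension is 784, because each image has 28 x 28 = 784 pixels. This is the flattened image data returned by mnist.train.next_batch().

We can reshape the tensor as required. The first value (-1) tells the function to size that dimension dynamically, based on the amount of data passed to it. The two middle dimensions are set to the image size (28 x 28), and the last dimension is the number of channels (1 for grayscale).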

    x = tf.placeholder(tf.float32, [None, 784])
    x_shaped = tf.reshape(x, [-1, 28, 28, 1])
    y = tf.placeholder(tf.float32, [None, 10])
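The -1 passed to tf.reshape above is resolved from the total number of elements. The sketch below reproduces that arithmetic in plain Python, assuming a batch of 50 flattened images; `infer_shape` is a hypothetical helper written for this illustration, not a TensorFlow function.

```python
def infer_shape(num_elements, shape):
    """Replace a single -1 in shape with the size implied by num_elements."""
    if shape.count(-1) > 1:
        raise ValueError("only one dimension may be -1")
    known = 1
    for dim in shape:
        if dim != -1:
            known *= dim
    if num_elements % known != 0:
        raise ValueError("shape does not divide the number of elements")
    return [num_elements // known if dim == -1 else dim for dim in shape]


batch_size = 50
flat_elements = batch_size * 784  # 50 images of 28 x 28 pixels each
resolved = infer_shape(flat_elements, [-1, 28, 28, 1])
print(resolved)
```

With 50 images in the batch, the first dimension resolves to 50; a different batch size changes only that dimension.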

Step 4 - Now create the convolutional layers, using the create_new_conv_layer() helper defined further below -

    layer1 = create_new_conv_layer(x_shaped, 1, 32, [5, 5], [2, 2], name = 'layer1')
    layer2 = create_new_conv_layer(layer1, 32, 64, [5, 5], [2, 2], name = 'layer2')

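Step 5 - Flatten the output so that it can feed the fully connected stage. After two pooling layers with stride 2, the 28 x 28 image shrinks to 14 x 14 and then to 7 x 7 in its x and y coordinates, but it now has 64 output channels. To connect it to a fully connected ("dense") layer, the new shape needs to be [-1, 7 x 7 x 64]. We then set up weights and bias values for this layer and apply a ReLU activation.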

    flattened = tf.reshape(layer2, [-1, 7 * 7 * 64])
    wd1 = tf.Variable(tf.truncated_normal([7 * 7 * 64, 1000], stddev = 0.03), name = 'wd1')
    bd1 = tf.Variable(tf.truncated_normal([1000], stddev = 0.01), name = 'bd1')
    dense_layer1 = tf.matmul(flattened, wd1) + bd1
    dense_layer1 = tf.nn.relu(dense_layer1)
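The 7 * 7 * 64 figure used above can be checked with a few lines of arithmetic. With 'SAME' padding, the output length along each axis is the input length divided by the stride, rounded up. The sketch below applies that rule to the two layers; the helper name `same_padding_output` is an assumption for illustration.

```python
import math


def same_padding_output(size, stride):
    """Output length along one axis for a 'SAME'-padded operation."""
    return math.ceil(size / stride)


size = 28  # MNIST images are 28 x 28
sizes_after_each_layer = []
for _ in range(2):
    size = same_padding_output(size, 1)  # convolution with stride 1 keeps the size
    size = same_padding_output(size, 2)  # pooling with stride 2 halves it
    sizes_after_each_layer.append(size)

num_filters = 64  # output channels of the second convolutional layer
flattened_length = size * size * num_filters
print(sizes_after_each_layer, flattened_length)
```

Each flattened example therefore has 3136 values, which is the number of rows in the wd1 weight matrix.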

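Step 6 - Add a second dense layer with a softmax activation, then define the cross-entropy loss, the optimizer, the accuracy measure and the variable initialization operator.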

    wd2 = tf.Variable(tf.truncated_normal([1000, 10], stddev = 0.03), name = 'wd2')
    bd2 = tf.Variable(tf.truncated_normal([10], stddev = 0.01), name = 'bd2')
    dense_layer2 = tf.matmul(dense_layer1, wd2) + bd2
    y_ = tf.nn.softmax(dense_layer2)
    cross_entropy = tf.reduce_mean(
        tf.nn.softmax_cross_entropy_with_logits(logits = dense_layer2, labels = y))
    optimiser = tf.train.AdamOptimizer(learning_rate = learning_rate).minimize(cross_entropy)
    correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))
    accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
    init_op = tf.global_variables_initializer()
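The loss and accuracy defined above can be illustrated without TensorFlow. The sketch below computes softmax, the cross-entropy against a one-hot label, and the argmax-based accuracy for a tiny made-up batch. The function names and the three-class logits are assumptions for illustration. Note that the tutorial passes the raw logits (dense_layer2), not the softmax output, to the loss function, and this sketch does the same.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    shift = max(logits)  # subtracting the max avoids overflow in exp
    exps = [math.exp(value - shift) for value in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(logits, one_hot_label):
    """Cross-entropy between softmax(logits) and a one-hot label."""
    probs = softmax(logits)
    return -sum(t * math.log(p) for t, p in zip(one_hot_label, probs))


def accuracy(batch_logits, batch_labels):
    """Fraction of rows where the argmax of the logits matches the label."""
    correct = 0
    for logits, label in zip(batch_logits, batch_labels):
        if logits.index(max(logits)) == label.index(max(label)):
            correct += 1
    return correct / len(batch_logits)


# Hypothetical three-class batch, chosen only for illustration.
batch_logits = [[2.0, 1.0, 0.1], [0.2, 0.3, 3.0]]
batch_labels = [[1, 0, 0], [0, 1, 0]]

probs = softmax(batch_logits[0])
loss = cross_entropy(batch_logits[0], batch_labels[0])
acc = accuracy(batch_logits, batch_labels)
print(probs, loss, acc)
```

The first example is classified correctly and the second is not, so the accuracy of this batch is 0.5.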

Step 7 - Set up the recording variables. This adds a summary that stores the accuracy, and then runs the training loop.

    tf.summary.scalar('accuracy', accuracy)
    merged = tf.summary.merge_all()
    # Raw string so the backslash in the Windows path is taken literally.
    writer = tf.summary.FileWriter(r'E:\TensorFlowProject')

    with tf.Session() as sess:
        sess.run(init_op)
        total_batch = int(len(mnist.train.labels) / batch_size)

        for epoch in range(epochs):
            avg_cost = 0
            for i in range(total_batch):
                batch_x, batch_y = mnist.train.next_batch(batch_size = batch_size)
                _, c = sess.run([optimiser, cross_entropy],
                                feed_dict = {x: batch_x, y: batch_y})
                avg_cost += c / total_batch

            # Evaluate on the full test set once per epoch.
            test_acc = sess.run(accuracy,
                                feed_dict = {x: mnist.test.images, y: mnist.test.labels})
            print("Epoch:", (epoch + 1), "cost =", "{:.3f}".format(avg_cost),
                  "test accuracy: {:.3f}".format(test_acc))
            summary = sess.run(merged,
                               feed_dict = {x: mnist.test.images, y: mnist.test.labels})
            writer.add_summary(summary, epoch)

        print("\nTraining complete!")
        writer.add_graph(sess.graph)
        print(sess.run(accuracy,
                       feed_dict = {x: mnist.test.images, y: mnist.test.labels}))

def create_new_conv_layer(
        input_data, num_input_channels, num_filters, filter_shape, pool_shape, name):
    # Filter shape expected by tf.nn.conv2d: [height, width, in_channels, out_channels].
    conv_filt_shape = [
        filter_shape[0], filter_shape[1], num_input_channels, num_filters]

    weights = tf.Variable(
        tf.truncated_normal(conv_filt_shape, stddev = 0.03), name = name+'_W')
    bias = tf.Variable(tf.truncated_normal([num_filters]), name = name+'_b')

    # Convolution with stride 1, followed by the shared bias and a ReLU activation.
    out_layer = tf.nn.conv2d(input_data, weights, [1, 1, 1, 1], padding = 'SAME')
    out_layer += bias
    out_layer = tf.nn.relu(out_layer)

    # Max pooling with stride 2 halves the width and height.
    ksize = [1, pool_shape[0], pool_shape[1], 1]
    strides = [1, 2, 2, 1]
    out_layer = tf.nn.max_pool(
        out_layer, ksize = ksize, strides = strides, padding = 'SAME')

    return out_layer

if __name__ == "__main__":
    run_cnn()

The following is the output generated by running the above code -

See @{tf.nn.softmax_cross_entropy_with_logits_v2}.

2019-09-19 17:22:58.802268: I T:\src\github\tensorflow\tensorflow\core\platform\cpu_feature_guard.cc:140] Your CPU supports instructions that this TensorFlow binary was not compiled to use: AVX2
2019-09-19 17:25:41.522845: W T:\src\github\tensorflow\tensorflow\core\framework\allocator.cc:101] Allocation of 1003520000 exceeds 10% of system memory.
2019-09-19 17:25:44.630941: W T:\src\github\tensorflow\tensorflow\core\framework\allocator.cc:101] Allocation of 501760000 exceeds 10% of system memory.
Epoch: 1 cost = 0.676 test accuracy: 0.940
2019-09-19 17:26:51.987554: W T:\src\github\tensorflow\tensorflow\core\framework\allocator.cc:101] Allocation of 1003520000 exceeds 10% of system memory.
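The allocation sizes in the warnings above can be reproduced with simple arithmetic. The log itself does not say which tensors triggered them, so the mapping in the sketch below is an inference: it assumes the whole 10,000-image MNIST test set is evaluated in one pass with 4-byte float32 values, and that the allocations are the convolution outputs of the two layers before pooling.

```python
BYTES_PER_FLOAT32 = 4
TEST_IMAGES = 10000  # size of the MNIST test set


def activation_bytes(images, height, width, channels):
    """Memory needed to hold one float32 activation tensor."""
    return images * height * width * channels * BYTES_PER_FLOAT32


# Assumed mapping: first layer convolution output, 28 x 28 with 32 filters.
layer1_conv_bytes = activation_bytes(TEST_IMAGES, 28, 28, 32)
# Assumed mapping: second layer convolution output, 14 x 14 with 64 filters.
layer2_conv_bytes = activation_bytes(TEST_IMAGES, 14, 14, 64)

print(layer1_conv_bytes, layer2_conv_bytes)
```

Both figures match the logged values, which suggests the warnings come from feeding the full test set at once. Evaluating the test set in smaller batches would be one way to lower peak memory use.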