[Paper Translation] Deep Residual Learning for Image Recognition

Paper title: Deep Residual Learning for Image Recognition

Source: Deep Residual Learning for Image Recognition

Translator: BDML@CQUT Lab

Deep Residual Learning for Image Recognition

Kaiming He Xiangyu Zhang Shaoqing Ren Jian Sun Microsoft Research {kahe, v-xiangz, v-shren, jiansun}@microsoft.com

Abstract

Deeper neural networks are more difficult to train. We present a residual learning framework to ease the training of networks that are substantially deeper than those used previously. We explicitly reformulate the layers as learning residual functions with reference to the layer inputs, instead of learning unreferenced functions. We provide comprehensive empirical evidence showing that these residual networks are easier to optimize, and can gain accuracy from considerably increased depth. On the ImageNet dataset we evaluate residual nets with a depth of up to 152 layers, 8× deeper than VGG nets but still having lower complexity. An ensemble of these residual nets achieves 3.57% error on the ImageNet test set. This result won the 1st place on the ILSVRC 2015 classification task. We also present analysis on CIFAR-10 with 100 and 1000 layers.

The depth of representations is of central importance for many visual recognition tasks. Solely due to our extremely deep representations, we obtain a 28% relative improvement on the COCO object detection dataset. Deep residual nets are foundations of our submissions to ILSVRC & COCO 2015 competitions¹, where we also won the 1st places on the tasks of ImageNet detection, ImageNet localization, COCO detection, and COCO segmentation.

1. Introduction

Deep convolutional neural networks have led to a series of breakthroughs for image classification. Deep networks naturally integrate low/mid/high-level features and classifiers in an end-to-end multilayer fashion, and the "levels" of features can be enriched by the number of stacked layers (depth). Recent evidence reveals that network depth is of crucial importance, and the leading results on the challenging ImageNet dataset all exploit "very deep" models, with a depth of sixteen to thirty. Many other nontrivial visual recognition tasks have also greatly benefited from very deep models.

Driven by the significance of depth, a question arises: Is learning better networks as easy as stacking more layers? An obstacle to answering this question was the notorious problem of vanishing/exploding gradients, which hamper convergence from the beginning. This problem, however, has been largely addressed by normalized initialization and intermediate normalization layers, which enable networks with tens of layers to start converging for stochastic gradient descent (SGD) with backpropagation.

When deeper networks are able to start converging, a degradation problem has been exposed: with the network depth increasing, accuracy gets saturated (which might be unsurprising) and then degrades rapidly. Unexpectedly, such degradation is not caused by overfitting, and adding more layers to a suitably deep model leads to higher training error, as reported in prior work and thoroughly verified by our experiments. Fig. 1 shows a typical example.

The degradation (of training accuracy) indicates that not all systems are similarly easy to optimize. Let us consider a shallower architecture and its deeper counterpart that adds more layers onto it. There exists a solution by construction to the deeper model: the added layers are identity mappings, and the other layers are copied from the learned shallower model. The existence of this constructed solution indicates that a deeper model should produce no higher training error than its shallower counterpart. But experiments show that our current solvers on hand are unable to find solutions that are comparably good or better than the constructed solution (or unable to do so in feasible time).

In this paper, we address the degradation problem by introducing a deep residual learning framework. Instead of hoping each few stacked layers directly fit a desired underlying mapping, we explicitly let these layers fit a residual mapping. Formally, denoting the desired underlying mapping as $\mathcal{H}(x)$, we let the stacked nonlinear layers fit another mapping of $\mathcal{F}(x) := \mathcal{H}(x) - x$. The original mapping is recast into $\mathcal{F}(x) + x$. We hypothesize that it is easier to optimize the residual mapping than to optimize the original, unreferenced mapping. To the extreme, if an identity mapping were optimal, it would be easier to push the residual to zero than to fit an identity mapping by a stack of nonlinear layers.
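
A short worked restatement of the reformulation above, using the same symbols $\mathcal{H}$ and $\mathcal{F}$. It only rearranges the definitions, and shows why an identity target corresponds to a zero residual:

$$
\mathcal{F}(x) := \mathcal{H}(x) - x
\quad\Longleftrightarrow\quad
\mathcal{H}(x) = \mathcal{F}(x) + x,
\qquad\text{so}\qquad
\mathcal{H}(x) = x \;\Longrightarrow\; \mathcal{F}(x) = 0 .
$$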

The formulation of $\mathcal{F}(x) + x$ can be realized by feedforward neural networks with "shortcut connections" (Fig. 2). Shortcut connections are those skipping one or more layers. In our case, the shortcut connections simply perform identity mapping, and their outputs are added to the outputs of the stacked layers (Fig. 2). Identity shortcut connections add neither extra parameters nor computational complexity. The entire network can still be trained end-to-end by SGD with backpropagation, and can be easily implemented using common libraries (e.g., Caffe [19]) without modifying the solvers.
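
As an illustration of the shortcut idea, the following Python sketch wraps an arbitrary stack of layers `f` so that the block returns `f(x) + x`. The names `with_identity_shortcut` and `halve` are hypothetical, and vectors are plain lists rather than tensors; the point is only that the identity shortcut reuses `x` and owns no weights.

```python
from typing import Callable, List

Vector = List[float]


def with_identity_shortcut(f: Callable[[Vector], Vector]) -> Callable[[Vector], Vector]:
    """Wrap a stack of layers `f` so the block computes f(x) + x.

    The shortcut owns no weights: it reuses the input x and performs an
    element-wise addition, so it adds no parameters.
    """
    def block(x: Vector) -> Vector:
        fx = f(x)
        if len(fx) != len(x):
            raise ValueError("an identity shortcut needs matching dimensions")
        return [a + b for a, b in zip(fx, x)]

    return block


# Hypothetical residual branch standing in for the stacked layers.
def halve(x: Vector) -> Vector:
    return [0.5 * v for v in x]


block = with_identity_shortcut(halve)
print(block([2.0, -4.0]))  # [3.0, -6.0]
```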

We present comprehensive experiments on ImageNet to show the degradation problem and evaluate our method. We show that: 1) our extremely deep residual nets are easy to optimize, but the counterpart "plain" nets (that simply stack layers) exhibit higher training error when the depth increases; 2) our deep residual nets can easily enjoy accuracy gains from greatly increased depth, producing results substantially better than previous networks.

Similar phenomena are also shown on the CIFAR-10 set [20], suggesting that the optimization difficulties and the effects of our method are not specific to a particular dataset. We present successfully trained models on this dataset with over 100 layers, and explore models with over 1000 layers.

On the ImageNet classification dataset, we obtain excellent results by extremely deep residual nets. Our 152-layer residual net is the deepest network ever presented on ImageNet, while still having lower complexity than VGG nets. Our ensemble has 3.57% top-5 error on the ImageNet test set, and won the 1st place in the ILSVRC 2015 classification competition. The extremely deep representations also have excellent generalization performance on other recognition tasks, and lead us to further win the 1st places on: ImageNet detection, ImageNet localization, COCO detection, and COCO segmentation in ILSVRC & COCO 2015 competitions. This strong evidence shows that the residual learning principle is generic, and we expect that it is applicable in other vision and non-vision problems.

2. Related Work

Residual Representations. In image recognition, VLAD is a representation that encodes features by their residual vectors with respect to a dictionary, and Fisher Vector can be formulated as a probabilistic version of VLAD. Both of them are powerful shallow representations for image retrieval and classification. For vector quantization, encoding residual vectors is shown to be more effective than encoding original vectors.
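
To make "encoding by residual vectors" concrete, here is a minimal, unnormalized VLAD-style aggregation sketch in Python. The helper names are hypothetical, and the power and L2 normalization commonly applied to VLAD are deliberately omitted. Each descriptor is assigned to its nearest codeword, and the residual `x - c` is accumulated per codeword:

```python
from typing import List

Vector = List[float]


def nearest_codeword(codebook: List[Vector], x: Vector) -> int:
    """Index of the codeword closest to x in squared Euclidean distance."""
    return min(
        range(len(codebook)),
        key=lambda k: sum((a - c) ** 2 for a, c in zip(x, codebook[k])),
    )


def vlad(descriptors: List[Vector], codebook: List[Vector]) -> Vector:
    """Unnormalized VLAD: per-codeword sum of residuals x - c, concatenated."""
    dim = len(codebook[0])
    sums = [[0.0] * dim for _ in codebook]
    for x in descriptors:
        k = nearest_codeword(codebook, x)
        for j in range(dim):
            sums[k][j] += x[j] - codebook[k][j]
    return [v for row in sums for v in row]


codebook = [[0.0, 0.0], [10.0, 10.0]]
descriptors = [[1.0, 2.0], [9.0, 11.0], [0.0, -1.0]]
print(vlad(descriptors, codebook))  # [1.0, 1.0, -1.0, 1.0]
```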

In low-level vision and computer graphics, for solving Partial Differential Equations (PDEs), the widely used Multigrid method reformulates the system as subproblems at multiple scales, where each subproblem is responsible for the residual solution between a coarser and a finer scale. An alternative to Multigrid is hierarchical basis preconditioning, which relies on variables that represent residual vectors between two scales. It has been shown that these solvers converge much faster than standard solvers that are unaware of the residual nature of the solutions. These methods suggest that a good reformulation or preconditioning can simplify the optimization.

Shortcut Connections. Practices and theories that lead to shortcut connections have been studied for a long time. An early practice of training multi-layer perceptrons (MLPs) is to add a linear layer connected from the network input to the output. In other work, a few intermediate layers are directly connected to auxiliary classifiers for addressing vanishing/exploding gradients. Several papers propose methods for centering layer responses, gradients, and propagated errors, implemented by shortcut connections. In the Inception architecture, an "inception" layer is composed of a shortcut branch and a few deeper branches.

Concurrent with our work, "highway networks" present shortcut connections with gating functions. These gates are data-dependent and have parameters, in contrast to our identity shortcuts that are parameter-free. When a gated shortcut is "closed" (approaching zero), the layers in highway networks represent non-residual functions. On the contrary, our formulation always learns residual functions; our identity shortcuts are never closed, and all information is always passed through, with additional residual functions to be learned. In addition, highway networks have not demonstrated accuracy gains with extremely increased depth (e.g., over 100 layers).

3. Deep Residual Learning

3.1. Residual Learning

Let us consider $\mathcal{H}(x)$ as an underlying mapping to be fit by a few stacked layers (not necessarily the entire net), with $x$ denoting the inputs to the first of these layers. If one hypothesizes that multiple nonlinear layers can asymptotically approximate complicated functions², then it is equivalent to hypothesize that they can asymptotically approximate the residual functions, i.e., $\mathcal{H}(x) - x$ (assuming that the input and output are of the same dimensions). So rather than expect stacked layers to approximate $\mathcal{H}(x)$, we explicitly let these layers approximate a residual function $\mathcal{F}(x) := \mathcal{H}(x) - x$. The original function thus becomes $\mathcal{F}(x) + x$. Although both forms should be able to asymptotically approximate the desired functions (as hypothesized), the ease of learning might be different.

This reformulation is motivated by the counterintuitive phenomena about the degradation problem (Fig. 1, left). As we discussed in the introduction, if the added layers can be constructed as identity mappings, a deeper model should have training error no greater than its shallower counterpart. The degradation problem suggests that the solvers might have difficulties in approximating identity mappings by multiple nonlinear layers. With the residual learning reformulation, if identity mappings are optimal, the solvers may simply drive the weights of the multiple nonlinear layers toward zero to approach identity mappings.
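
A tiny numerical check of this argument, written as a plain-Python sketch with hypothetical helpers `plain_stack` and `residual_stack` (biases and the nonlinearity after the addition are omitted for brevity). When all weights are zero, the plain stack collapses to the zero mapping, while the residual stack reduces exactly to the identity:

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def relu(v: Vector) -> Vector:
    return [max(0.0, a) for a in v]


def matvec(w: Matrix, v: Vector) -> Vector:
    return [sum(wij * vj for wij, vj in zip(row, v)) for row in w]


def zeros(rows: int, cols: int) -> Matrix:
    return [[0.0] * cols for _ in range(rows)]


def plain_stack(x: Vector, w1: Matrix, w2: Matrix) -> Vector:
    """Two weight layers with a ReLU in between: W2 relu(W1 x)."""
    return matvec(w2, relu(matvec(w1, x)))


def residual_stack(x: Vector, w1: Matrix, w2: Matrix) -> Vector:
    """The same layers plus an identity shortcut: W2 relu(W1 x) + x."""
    return [f + a for f, a in zip(plain_stack(x, w1, w2), x)]


x = [1.5, -2.0, 0.25]
w1, w2 = zeros(3, 3), zeros(3, 3)
print(plain_stack(x, w1, w2))     # [0.0, 0.0, 0.0]
print(residual_stack(x, w1, w2))  # [1.5, -2.0, 0.25]
```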

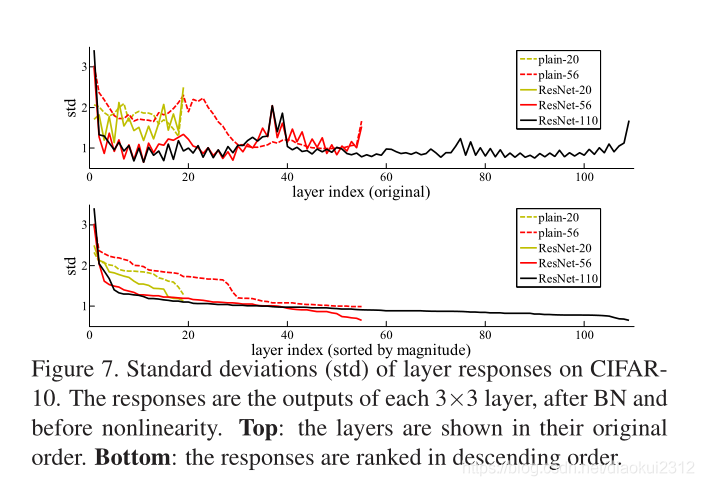

In real cases, it is unlikely that identity mappings are optimal, but our reformulation may help to precondition the problem. If the optimal function is closer to an identity mapping than to a zero mapping, it should be easier for the solver to find the perturbations with reference to an identity mapping, than to learn the function as a new one. We show by experiments (Fig. 7) that the learned residual functions in general have small responses, suggesting that identity mappings provide reasonable preconditioning.

3.2. Identity Mapping by Shortcuts

We adopt residual learning to every few stacked layers. A building block is shown in Fig. 2. Formally, in this paper we consider a building block defined as:

$$y = \mathcal{F}(x, \{W_i\}) + x. \qquad (1)$$

Here $x$ and $y$ are the input and output vectors of the layers considered. The function $\mathcal{F}(x, \{W_i\})$ represents the residual mapping to be learned. For the example in Fig. 2 that has two layers, $\mathcal{F} = W_2\,\sigma(W_1 x)$, in which $\sigma$ denotes ReLU [29] and the biases are omitted to simplify notation. The operation $\mathcal{F} + x$ is performed by a shortcut connection and element-wise addition. We adopt the second nonlinearity after the addition (i.e., $\sigma(y)$, see Fig. 2).
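
The two-layer building block can be sketched in plain Python as follows, assuming list-based vectors and row-major weight matrices (`matvec`, `relu` and `residual_block` are illustrative names, not from the paper). It computes $\mathcal{F} = W_2\sigma(W_1 x)$, adds the shortcut, and applies the second nonlinearity after the addition:

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def relu(v: Vector) -> Vector:
    return [max(0.0, a) for a in v]


def matvec(w: Matrix, v: Vector) -> Vector:
    return [sum(wij * vj for wij, vj in zip(row, v)) for row in w]


def residual_block(x: Vector, w1: Matrix, w2: Matrix) -> Vector:
    """Two-layer building block with biases omitted.

    F(x, {W1, W2}) = W2 relu(W1 x); y = F + x; the block outputs relu(y).
    """
    f = matvec(w2, relu(matvec(w1, x)))
    y = [fi + xi for fi, xi in zip(f, x)]  # shortcut + element-wise addition
    return relu(y)                         # second nonlinearity after the addition


x = [1.0, -2.0]
w1 = [[1.0, 0.0], [0.0, 1.0]]
w2 = [[0.5, 0.0], [0.0, 0.5]]
print(residual_block(x, w1, w2))  # [1.5, 0.0]
```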

The shortcut connections in Eqn. (1) introduce neither extra parameters nor computational complexity. This is not only attractive in practice but also important in our comparisons between plain and residual networks. We can fairly compare plain/residual networks that simultaneously have the same number of parameters, depth, width, and computational cost (except for the negligible element-wise addition).

The dimensions of $x$ and $\mathcal{F}$ must be equal in Eqn. (1). If this is not the case (e.g., when changing the input/output channels), we can perform a linear projection $W_s$ by the shortcut connections to match the dimensions:

$$y = \mathcal{F}(x, \{W_i\}) + W_s x. \qquad (2)$$

We can also use a square matrix $W_s$ in Eqn. (1). But we will show by experiments that the identity mapping is sufficient for addressing the degradation problem and is economical, and thus $W_s$ is only used when matching dimensions.
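
A minimal sketch of the projection shortcut in plain Python. It assumes the residual branch maps a 2-dimensional input to 3 dimensions, so a 3×2 matrix `ws` is needed on the shortcut; all shapes, values and function names are made up for illustration:

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def relu(v: Vector) -> Vector:
    return [max(0.0, a) for a in v]


def matvec(w: Matrix, v: Vector) -> Vector:
    return [sum(wij * vj for wij, vj in zip(row, v)) for row in w]


def projection_block(x: Vector, w1: Matrix, w2: Matrix, ws: Matrix) -> Vector:
    """y = F(x, {W1, W2}) + Ws x with F = W2 relu(W1 x), biases omitted.

    Ws is a linear projection used only to match dimensions.
    """
    f = matvec(w2, relu(matvec(w1, x)))
    shortcut = matvec(ws, x)
    if len(f) != len(shortcut):
        raise ValueError("Ws must map x to the output dimension of F")
    return [a + b for a, b in zip(f, shortcut)]


x = [1.0, 2.0]                                            # 2-d input
w1 = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]                 # 3x2: lifts to 3-d
w2 = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]  # 3x3
ws = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]]                 # 3x2 projection shortcut
print(projection_block(x, w1, w2, ws))  # [2.0, 4.0, 3.0]
```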

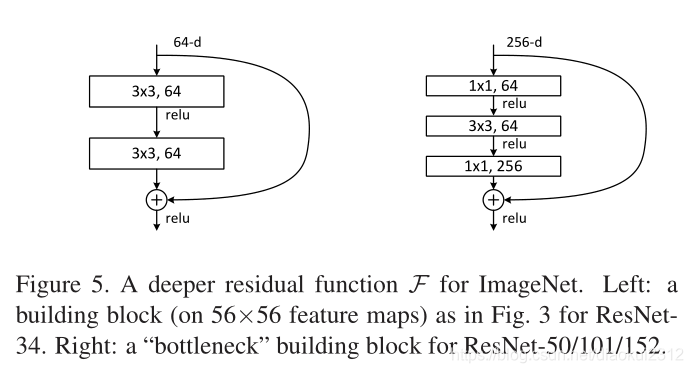

The form of the residual function $\mathcal{F}$ is flexible. Experiments in this paper involve a function $\mathcal{F}$ that has two or three layers (Fig. 5), while more layers are possible. But if $\mathcal{F}$ has only a single layer, Eqn. (1) is similar to a linear layer: $y = W_1 x + x$, for which we have not observed advantages.
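
Expanding the single-layer case makes the observation explicit. With one layer inside $\mathcal{F}$ (no inner nonlinearity, biases omitted), the block is just a linear map with a shifted weight matrix, so it offers nothing beyond an ordinary linear layer:

$$
y = W_1 x + x = (W_1 + I)\,x .
$$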

We also note that although the above notations are about fully-connected layers for simplicity, they are applicable to convolutional layers. The function $\mathcal{F}(x, \{W_i\})$ can represent multiple convolutional layers. The element-wise addition is performed on two feature maps, channel by channel.
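
For convolutional feature maps the addition is applied per channel and per spatial position. A plain-Python sketch, assuming maps are stored as nested lists indexed `[channel][row][col]` (the layout and the name `add_feature_maps` are illustrative assumptions):

```python
from typing import List

FeatureMaps = List[List[List[float]]]  # indexed [channel][row][col]


def add_feature_maps(a: FeatureMaps, b: FeatureMaps) -> FeatureMaps:
    """Element-wise sum of two stacks of feature maps, channel by channel."""
    if len(a) != len(b):
        raise ValueError("channel counts differ; a projection shortcut would be needed")
    return [
        [[p + q for p, q in zip(row_a, row_b)] for row_a, row_b in zip(ch_a, ch_b)]
        for ch_a, ch_b in zip(a, b)
    ]


residual = [[[1.0, 0.0], [0.0, 1.0]], [[0.5, 0.5], [0.5, 0.5]]]  # F(x): 2 channels, 2x2
shortcut = [[[1.0, 2.0], [3.0, 4.0]], [[0.0, 0.0], [1.0, 1.0]]]  # x:    2 channels, 2x2
print(add_feature_maps(residual, shortcut))
# [[[2.0, 2.0], [3.0, 5.0]], [[0.5, 0.5], [1.5, 1.5]]]
```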

3.3. Network Architectures

We have tested various plain/residual nets, and have observed consistent phenomena. To provide instances for discussion, we describe two models for ImageNet as follows.

Plain Network. Our plain baselines (Fig. 3, middle) are mainly inspired by the philosophy of VGG nets (Fig. 3, left). The convolutional layers mostly have 3×3 filters and follow two simple design rules: (i) for the same output feature map size, the layers have the same number of filters; and (ii) if the feature map size is halved, the number of filters is doubled so as to preserve the time complexity per layer. We perform downsampling directly by convolutional layers that have a stride of 2. The network ends with a global average pooling layer and a 1000-way fully-connected layer with softmax. The total number of weighted layers is 34 in Fig. 3 (middle).
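
Rule (ii) can be checked with a rough multiply-add count for a 3×3 convolution, H · W · C_in · C_out · 9. In this sketch the 56×56 map with 64 filters and the 28×28 map with 128 filters are assumed example sizes, not values from the text above: halving both spatial sides divides the count by 4, and doubling both channel counts multiplies it by 4.

```python
def conv3x3_mult_adds(height: int, width: int, c_in: int, c_out: int) -> int:
    """Multiply-adds of one 3x3 convolution (stride 1, 'same' padding, no bias)."""
    return height * width * c_in * c_out * 3 * 3


# Illustrative stage sizes (assumed): halve the map, double the filters.
before = conv3x3_mult_adds(56, 56, 64, 64)
after = conv3x3_mult_adds(28, 28, 128, 128)
print(before, after)  # 115605504 115605504
```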

Figure 3. Example network architectures for ImageNet. Left: the VGG-19 model [40] (19.6 billion FLOPs) as a reference. Middle: a plain network with 34 parameter layers (3.6 billion FLOPs). Right: a residual network with 34 parameter layers (3.6 billion FLOPs). The dotted shortcuts increase dimensions. Table 1 shows more details and other variants.

It is worth noticing that our model has fewer filters and lower complexity than VGG nets (Fig. 3, left). Our 34-layer baseline has 3.6 billion FLOPs (multiply-adds), which is only 18% of VGG-19 (19.6 billion FLOPs).
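
The 18% figure follows directly from the two FLOP counts:

$$
\frac{3.6 \times 10^{9}}{19.6 \times 10^{9}} \approx 0.184 \approx 18\% .
$$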

Residual Network. Based on the above plain network, we insert shortcut connections (Fig. 3, right) which turn the network into its counterpart residual version. The identity shortcuts (Eqn. (1)) can be directly used when the input and output are of the same dimensions (solid line shortcuts in Fig. 3). When the dimensions increase (dotted line shortcuts in Fig. 3), we consider two options: (A) The shortcut still performs identity mapping, with extra zero entries padded for increasing dimensions. This option introduces no extra parameter; (B) The projection shortcut in Eqn. (2) is used to match dimensions (done by 1×1 convolutions). For both options, when the shortcuts go across feature maps of two sizes, they are performed with a stride of 2.
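
Option (A) can be sketched without any weights. In this plain-Python sketch the stride-2 subsampling simply keeps every second row and column, which is an assumption of the sketch; option (B) would instead apply a learned 1×1 convolution. The function name is hypothetical:

```python
from typing import List

FeatureMaps = List[List[List[float]]]  # indexed [channel][row][col]


def option_a_shortcut(x: FeatureMaps, out_channels: int, stride: int = 2) -> FeatureMaps:
    """Parameter-free shortcut for a dimension-increasing block.

    Spatial size is reduced by taking every `stride`-th row and column, and
    the missing channels are filled with zeros (no learned weights).
    """
    if out_channels < len(x):
        raise ValueError("option A can only add channels")
    sub = [[row[::stride] for row in ch[::stride]] for ch in x]
    h, w = len(sub[0]), len(sub[0][0])
    padding = [[[0.0] * w for _ in range(h)] for _ in range(out_channels - len(x))]
    return sub + padding


x = [[[float(4 * r + c) for c in range(4)] for r in range(4)]]  # 1 channel, 4x4
print(option_a_shortcut(x, out_channels=2))
# [[[0.0, 2.0], [8.0, 10.0]], [[0.0, 0.0], [0.0, 0.0]]]
```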

3.4. Implementation

Our implementation for ImageNet follows the practice in [21, 40]. The image is resized with its shorter side randomly sampled in [256, 480] for scale augmentation [40]. A 224×224 crop is randomly sampled from an image or its horizontal flip, with the per-pixel mean subtracted [21]. The standard color augmentation in [21] is used. We adopt batch normalization (BN) [16] right after each convolution and before activation, following [16]. We initialize the weights as in [12] and train all plain/residual nets from scratch. We use SGD with a mini-batch size of 256. The learning rate starts from 0.1 and is divided by 10 when the error plateaus, and the models are trained for up to 60×10⁴ iterations. We use a weight decay of 0.0001 and a momentum of 0.9. We do not use dropout [13], following the practice in [16].
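
A sketch of the scale augmentation and random cropping described above, in plain Python. It only computes the resize target and crop box and does not touch pixels, so mean subtraction and color augmentation are not shown. Sampling the shorter side as an integer, rounding the longer side, and the 50% flip probability are assumptions of this sketch, and the function name is hypothetical:

```python
import random
from typing import Tuple


def sample_scale_and_crop(
    width: int,
    height: int,
    rng: random.Random,
    short_min: int = 256,
    short_max: int = 480,
    crop: int = 224,
) -> Tuple[Tuple[int, int], Tuple[int, int, int, int], bool]:
    """Return (resized size, crop box (left, top, right, bottom), horizontal flip)."""
    short_side = rng.randint(short_min, short_max)  # inclusive range
    scale = short_side / min(width, height)
    new_w, new_h = round(width * scale), round(height * scale)
    left = rng.randint(0, new_w - crop)
    top = rng.randint(0, new_h - crop)
    flip = rng.random() < 0.5
    return (new_w, new_h), (left, top, left + crop, top + crop), flip


rng = random.Random(0)
print(sample_scale_and_crop(500, 375, rng))
```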

In testing, for comparison studies we adopt the standard 10-crop testing [21]. For best results, we adopt the fully-convolutional form as in [40, 12], and average the scores at multiple scales (images are resized such that the shorter side is in {224, 256, 384, 480, 640}).

4. Experiments

4.1. ImageNet Classification

We evaluate our method on the ImageNet 2012 classification dataset [35] that consists of 1000 classes. The models are trained on the 1.28 million training images, and evaluated on the 50k validation images. We also obtain a final result on the 100k test images, reported by the test server. We evaluate both top-1 and top-5 error rates.

Plain Networks. We first evaluate 18-layer and 34-layer plain nets. The 34-layer plain net is in Fig. 3 (middle). The 18-layer plain net is of a similar form. See Table 1 for detailed architectures.

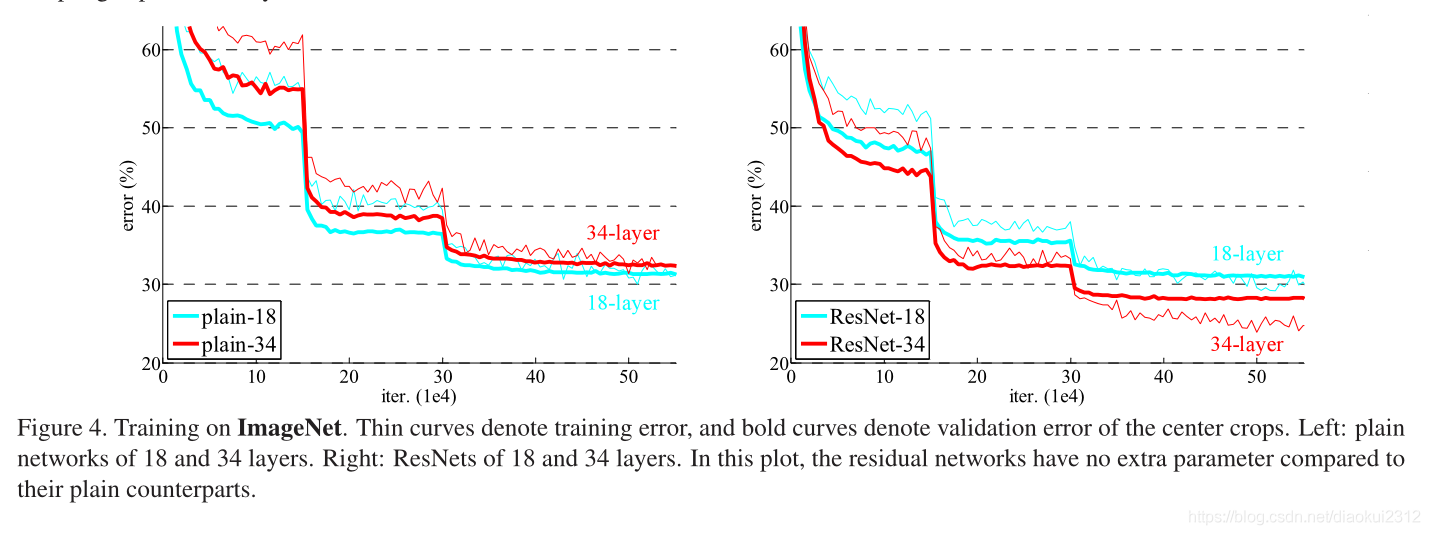

The results in Table 2 show that the deeper 34-layer plain net has higher validation error than the shallower 18-layer plain net. To reveal the reasons, in Fig. 4 (left) we compare their training/validation errors during the training procedure. We have observed the degradation problem: the 34-layer plain net has higher training error throughout the whole training procedure, even though the solution space of the 18-layer plain network is a subspace of that of the 34-layer one.

We argue that this optimization difficulty is unlikely to be caused by vanishing gradients. These plain networks are trained with BN [16], which ensures forward propagated signals to have non-zero variances. We also verify that the backward propagated gradients exhibit healthy norms with BN. So neither forward nor backward signals vanish. In fact, the 34-layer plain net is still able to achieve competitive accuracy (Table 3), suggesting that the solver works to some extent. We conjecture that the deep plain nets may have exponentially low convergence rates, which hinder the reduction of the training error³. The reason for such optimization difficulties will be studied in the future.

Residual Networks. Next we evaluate 18-layer and 34-layer residual nets (ResNets). The baseline architectures are the same as the above plain nets, except that a shortcut connection is added to each pair of 3×3 filters as in Fig. 3 (right). In the first comparison (Table 2 and Fig. 4 right), we use identity mapping for all shortcuts and zero-padding for increasing dimensions (option A). So they have no extra parameters compared to the plain counterparts.

We have three major observations from Table 2 and Fig. 4. First, the situation is reversed with residual learning: the 34-layer ResNet is better than the 18-layer ResNet (by 2.8%). More importantly, the 34-layer ResNet exhibits considerably lower training error and is generalizable to the validation data. This indicates that the degradation problem is well addressed in this setting and we manage to obtain accuracy gains from increased depth.

Second, compared to its plain counterpart, the 34-layer ResNet reduces the top-1 error by 3.5% (Table 2), resulting from the successfully reduced training error (Fig. 4 right vs. left). This comparison verifies the effectiveness of residual learning on extremely deep systems.

Last, we also note that the 18-layer plain/residual nets are comparably accurate (Table 2), but the 18-layer ResNet converges faster (Fig. 4 right vs. left). When the net is "not overly deep" (18 layers here), the current SGD solver is still able to find good solutions to the plain net. In this case, the ResNet eases the optimization by providing faster convergence at the early stage.

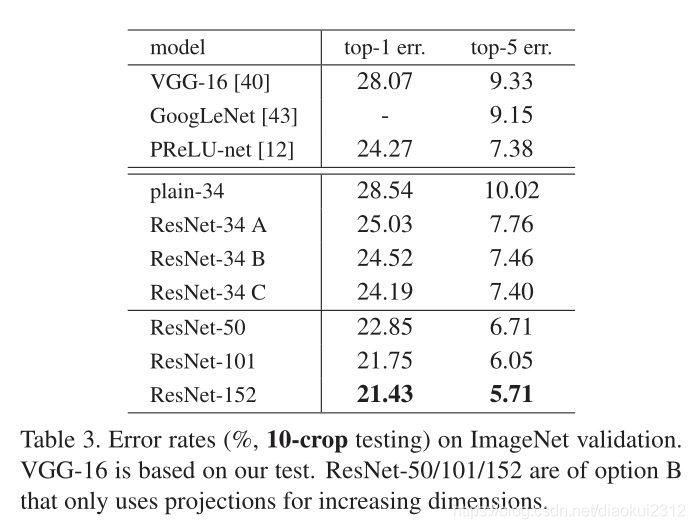

Identity vs. Projection Shortcuts. We have shown that parameter-free, identity shortcuts help with training. Next we investigate projection shortcuts (Eqn. (2)). In Table 3 we compare three options: (A) zero-padding shortcuts are used for increasing dimensions, and all shortcuts are parameter-free (the same as Table 2 and Fig. 4 right); (B) projection shortcuts are used for increasing dimensions, and other shortcuts are identity; and (C) all shortcuts are projections.

Table 3 shows that all three options are considerably better than the plain counterpart. B is slightly better than A. We argue that this is because the zero-padded dimensions in A indeed have no residual learning. C is marginally better than B, and we attribute this to the extra parameters introduced by many (thirteen) projection shortcuts. But the small differences among A/B/C indicate that projection shortcuts are not essential for addressing the degradation problem. So we do not use option C in the rest of this paper, to reduce memory/time complexity and model sizes. Identity shortcuts are particularly important for not increasing the complexity of the bottleneck architectures that are introduced below.
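
The "thirteen" in this paragraph can be reproduced by counting shortcuts in the 34-layer network. This sketch assumes a stage layout of 3, 4, 6 and 3 two-layer blocks (taken from the original paper's Table 1, which is not reproduced in this text) and that dimensions increase at the first block of each of the last three stages; the function names are hypothetical:

```python
from typing import List

# Two-layer blocks per stage of the 34-layer network (assumed from the paper's Table 1).
BLOCKS_34 = [3, 4, 6, 3]


def depth_basic(blocks_per_stage: List[int]) -> int:
    """First conv + two layers per basic block + final fully-connected layer."""
    return 1 + 2 * sum(blocks_per_stage) + 1


def projection_shortcuts(blocks_per_stage: List[int], option: str) -> int:
    """Number of shortcuts that use a projection W_s under options A/B/C."""
    total = sum(blocks_per_stage)
    # Dimensions increase at the first block of every stage after the first.
    increasing = len(blocks_per_stage) - 1
    counts = {"A": 0, "B": increasing, "C": total}
    return counts[option]


print(depth_basic(BLOCKS_34))  # 34
print(projection_shortcuts(BLOCKS_34, "C") - projection_shortcuts(BLOCKS_34, "B"))  # 13
```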

Deeper Bottleneck Architectures. Next we describe our deeper nets for ImageNet. Because of concerns on the training time that we can afford, we modify the building block as a bottleneck design⁴. For each residual function $\mathcal{F}$, we use a stack of 3 layers instead of 2 (Fig. 5). The three layers are 1×1, 3×3, and 1×1 convolutions, where the 1×1 layers are responsible for reducing and then increasing (restoring) dimensions, leaving the 3×3 layer a bottleneck with smaller input/output dimensions. Fig. 5 shows an example, where both designs have similar time complexity.
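
A weight count (biases omitted) shows why the two designs have similar cost. The widths used here, a 64-d basic block versus a 256-d bottleneck block with a 64-d 3×3 layer, are assumed from the original paper's Fig. 5, which is not reproduced in this text. Both blocks run at the same spatial resolution, so multiply-adds scale with these counts:

```python
def conv_weights(c_in: int, c_out: int, k: int) -> int:
    """Number of weights in a k x k convolution (biases omitted)."""
    return c_in * c_out * k * k


# Assumed widths: 64-d basic block vs. 256-d bottleneck with a 64-d 3x3 layer.
basic = conv_weights(64, 64, 3) + conv_weights(64, 64, 3)
bottleneck = (
    conv_weights(256, 64, 1)    # 1x1: reduce 256 -> 64
    + conv_weights(64, 64, 3)   # 3x3 bottleneck
    + conv_weights(64, 256, 1)  # 1x1: restore 64 -> 256
)
print(basic, bottleneck)  # 73728 69632
```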

The parameter-free identity shortcuts are particularly important for the bottleneck architectures. If the identity shortcut in Fig. 5 (right) is replaced with projection, one can show that the time complexity and model size are doubled, as the shortcut is connected to the two high-dimensional ends. So identity shortcuts lead to more efficient models for the bottleneck designs.
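
Continuing the same weight count under the same assumed widths (256-d ends, 64-d inner layer), a 1×1 projection joining the two 256-d ends is almost as large as the whole bottleneck block, which is where the "doubled" estimate comes from:

```python
def conv_weights(c_in: int, c_out: int, k: int) -> int:
    """Number of weights in a k x k convolution (biases omitted)."""
    return c_in * c_out * k * k


block = conv_weights(256, 64, 1) + conv_weights(64, 64, 3) + conv_weights(64, 256, 1)
projection = conv_weights(256, 256, 1)  # 1x1 W_s joining the two 256-d ends
ratio = (block + projection) / block
print(block, projection, round(ratio, 2))  # 69632 65536 1.94
```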

50-layer ResNet: We replace each 2-layer block in the 34-layer net with this 3-layer bottleneck block, resulting in a 50-layer ResNet (Table 1). We use option B for increasing dimensions. This model has 3.8 billion FLOPs.

101-layer and 152-layer ResNets: We construct 101-layer and 152-layer ResNets by using more 3-layer blocks (Table 1). Remarkably, although the depth is significantly increased, the 152-layer ResNet (11.3 billion FLOPs) still has lower complexity than VGG-16/19 nets (15.3/19.6 billion FLOPs).
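
Assuming the per-stage bottleneck block counts from the original paper's Table 1 (3-4-6-3, 3-4-23-3 and 3-8-36-3; the table itself is not reproduced in this text), the depths follow from one initial convolution, three layers per bottleneck block, and the final fully-connected layer:

```python
from typing import Dict, List

# Bottleneck blocks per stage (assumed from the paper's Table 1).
BOTTLENECK_STAGES: Dict[int, List[int]] = {
    50: [3, 4, 6, 3],
    101: [3, 4, 23, 3],
    152: [3, 8, 36, 3],
}


def depth_bottleneck(blocks_per_stage: List[int]) -> int:
    """First conv + three layers per bottleneck block + final fully-connected layer."""
    return 1 + 3 * sum(blocks_per_stage) + 1


for name, blocks in BOTTLENECK_STAGES.items():
    print(name, depth_bottleneck(blocks))
```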

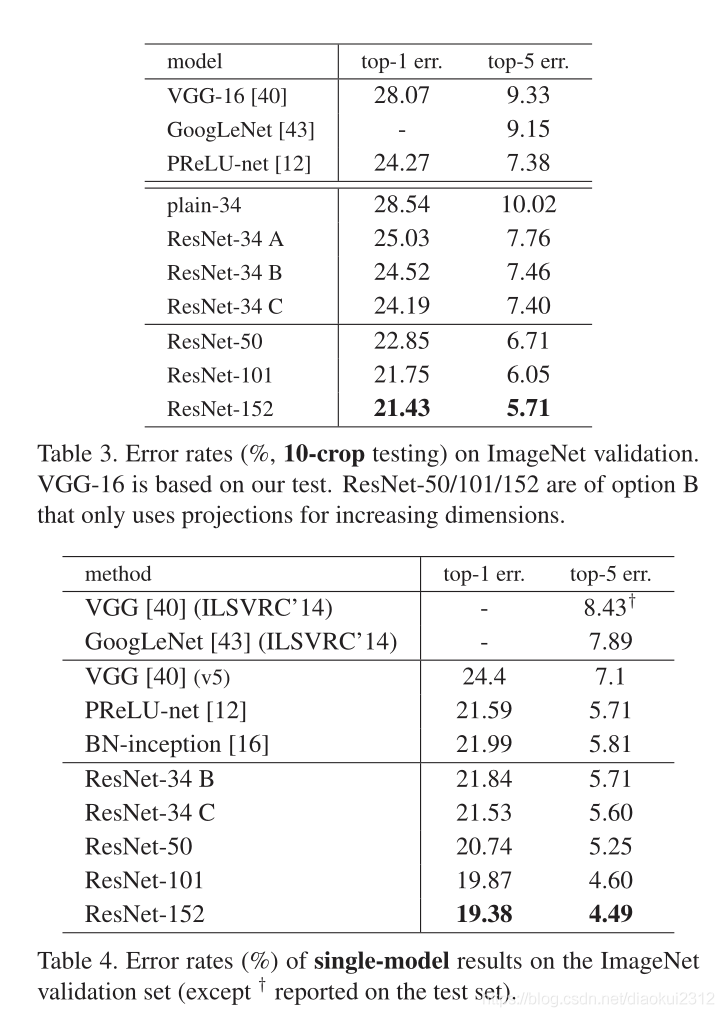

The 50/101/152-layer ResNets are more accurate than the 34-layer ones by considerable margins (Table 3 and 4). We do not observe the degradation problem and thus enjoy significant accuracy gains from considerably increased depth. The benefits of depth are witnessed for all evaluation metrics (Table 3 and 4).

Comparisons with State-of-the-art Methods. In Table 4 we compare with the previous best single-model results. Our baseline 34-layer ResNets have achieved very competitive accuracy. Our 152-layer ResNet has a single-model top-5 validation error of 4.49%. This single-model result outperforms all previous ensemble results (Table 5). We combine six models of different depth to form an ensemble (only with two 152-layer ones at the time of submitting). This leads to 3.57% top-5 error on the test set (Table 5). This entry won the 1st place in ILSVRC 2015.

4.2. CIFAR-10 and Analysis

We conducted more studies on the CIFAR-10 dataset, which consists of 50k training images and 10k testing images in 10 classes. We present experiments trained on the training set and evaluated on the test set. Our focus is on the behaviors of extremely deep networks, but not on pushing the state-of-the-art results, so we intentionally use simple architectures as follows.

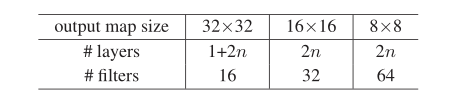

The plain/residual architectures follow the form in Fig. 3 (middle/right). The network inputs are 32×32 images, with the per-pixel mean subtracted. The first layer is a 3×3 convolution. Then we use a stack of 6n layers with 3×3 convolutions on the feature maps of sizes {32, 16, 8} respectively, with 2n layers for each feature map size. The numbers of filters are {16, 32, 64} respectively. The subsampling is performed by convolutions with a stride of 2. The network ends with a global average pooling, a 10-way fully-connected layer, and softmax. There are 6n+2 stacked weighted layers in total. The following table summarizes the architecture:

| Output map size | 32×32 | 16×16 | 8×8 |
|---|---|---|---|
| # layers | 1+2n | 2n | 2n |
| # filters | 16 | 32 | 64 |

When shortcut connections are used, they are connected to the pairs of 3×3 layers (3n shortcuts in total). On this dataset we use identity shortcuts in all cases (i.e., option A), so our residual models have exactly the same depth, width, and number of parameters as the plain counterparts.
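
The CIFAR-10 layout can be summarized by a small helper that derives the depth and the number of shortcuts from n. This is a sketch; the function name and the returned tuple layout are illustrative choices:

```python
from typing import List, Tuple


def cifar_resnet_plan(n: int) -> Tuple[int, int, List[Tuple[int, int, int]]]:
    """Depth, shortcut count, and (map size, filters, 3x3 layers) per stage."""
    stages = [(32, 16, 2 * n), (16, 32, 2 * n), (8, 64, 2 * n)]
    conv_layers = sum(layers for _, _, layers in stages)  # 6n
    depth = 1 + conv_layers + 1      # first 3x3 conv + 6n layers + fully-connected layer
    shortcuts = conv_layers // 2     # one shortcut per pair of 3x3 layers: 3n
    return depth, shortcuts, stages


for n in (3, 5, 7, 9, 18):
    depth, shortcuts, _ = cifar_resnet_plan(n)
    print(n, depth, shortcuts)
```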

We use a weight decay of 0.0001 and momentum of 0.9, and adopt the weight initialization in [12] and BN [16] but with no dropout. These models are trained with a mini-batch size of 128 on two GPUs. We start with a learning rate of 0.1, divide it by 10 at 32k and 48k iterations, and terminate training at 64k iterations, which is determined on a 45k/5k train/val split. We follow the simple data augmentation in [24] for training: 4 pixels are padded on each side, and a 32×32 crop is randomly sampled from the padded image or its horizontal flip. For testing, we only evaluate the single view of the original 32×32 image.
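
The step schedule described above can be written as a small function of the iteration count. This is a sketch with a hypothetical function name; only the milestones and the stopping point come from the text:

```python
MAX_ITERATIONS = 64_000  # training terminates here


def cifar_learning_rate(iteration: int, base_lr: float = 0.1,
                        milestones: tuple = (32_000, 48_000)) -> float:
    """Step schedule: start at base_lr and divide by 10 at each milestone."""
    lr = base_lr
    for milestone in milestones:
        if iteration >= milestone:
            lr /= 10
    return lr


for it in (0, 31_999, 32_000, 48_000, MAX_ITERATIONS - 1):
    print(it, cifar_learning_rate(it))
```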

我們使用0.0001的權重衰減和0.9的動量,並採用[12]中的權重初始化和BN[16],但不使用dropout。這些模型在兩個GPU上以128的小批次大小訓練。我們以0.1的學習率開始,在第32k和48k次迭代時將其除以10,並在第64k次迭代時終止訓練,這是根據45k/5k的訓練/驗證集劃分確定的。訓練時我們遵循[24]中簡單的數據擴增方法:每邊填充4個畫素,並從填充後的影像或其水平翻轉中隨機採樣一個32×32的裁剪。測試時,我們只評估原始32×32影像的單一視圖。
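The step schedule and the pad-crop-flip augmentation can be sketched in a few lines of Python/NumPy. This is an illustration under stated assumptions, not the authors' pipeline: the function names are hypothetical, images are taken as (H, W, C) arrays, and the padding value is assumed to be zero (the text only says 4 pixels are padded on each side). At test time the original 32×32 image is used without augmentation.

```python
import numpy as np


def learning_rate(iteration: int, base_lr: float = 0.1) -> float:
    """Step schedule: divide by 10 at 32k and 48k iterations; training stops at 64k."""
    if iteration >= 64_000:
        raise ValueError("training terminates at 64k iterations")
    if iteration >= 48_000:
        return base_lr / 100
    if iteration >= 32_000:
        return base_lr / 10
    return base_lr


def pad_crop_flip(img: np.ndarray, top: int, left: int, flip: bool) -> np.ndarray:
    """Pad 4 pixels per side, take the 32x32 crop at (top, left), optionally mirror it.

    img has shape (32, 32, C); top and left lie in [0, 8].
    """
    padded = np.pad(img, ((4, 4), (4, 4), (0, 0)))  # zero padding is an assumption
    crop = padded[top:top + 32, left:left + 32]
    return crop[:, ::-1] if flip else crop  # mirror along the width axis


def augment(img: np.ndarray, rng: np.random.Generator) -> np.ndarray:
    """Random 32x32 crop from the padded image or its horizontal flip (training only)."""
    top, left = rng.integers(0, 9, size=2)
    return pad_crop_flip(img, int(top), int(left), bool(rng.integers(0, 2)))
```

With `top = left = 4` and no flip, the crop recovers the original image exactly, which is a convenient sanity check.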

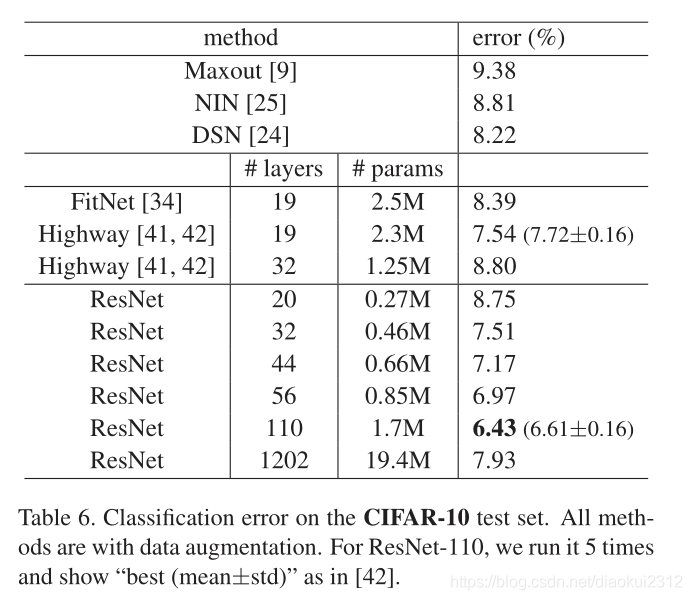

We compare n = {3, 5, 7, 9}, leading to 20-, 32-, 44-, and 56-layer networks. Fig. 6 (left) shows the behaviors of the plain nets. The deep plain nets suffer from increased depth, and exhibit higher training error when going deeper. This phenomenon is similar to that on ImageNet (Fig. 4, left) and on MNIST (see [41]), suggesting that such an optimization difficulty is a fundamental problem.

我們比較n = {3, 5, 7, 9},得到20、32、44和56層的網路。圖6(左)顯示了普通網路的行爲。深層普通網路受深度增加的影響,越深訓練誤差越大。這種現象與ImageNet(圖4,左)和MNIST(見[41])上的現象類似,表明這種優化困難是一個根本性的問題。

We further explore n = 18, which leads to a 110-layer ResNet. In this case, we find that the initial learning rate of 0.1 is slightly too large to start converging⁵. So we use 0.01 to warm up the training until the training error is below 80% (about 400 iterations), and then go back to 0.1 and continue training. The rest of the learning schedule is as done previously. This 110-layer network converges well (Fig. 6, middle). It has fewer parameters than other deep and thin networks such as FitNet and Highway (Table 6), yet is among the state-of-the-art results (6.43%, Table 6).

我們進一步研究n = 18,得到110層的ResNet。在這種情況下,我們發現0.1的初始學習率稍大,難以開始收斂⁵。因此我們用0.01預熱訓練,直到訓練誤差低於80%(大約400次迭代),然後回到0.1繼續訓練。其餘的學習計劃與之前相同。這個110層網路收斂良好(圖6,中)。它的參數比FitNet和Highway(表6)等其他深而窄的網路更少,但仍屬於最先進的結果之一(6.43%,表6)。
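The warm-up rule is triggered by the training error rather than by a fixed iteration count, so it is naturally expressed as a small stateful object. The class below is a hypothetical sketch: it assumes the iteration counter keeps running through the warm-up phase and that, once the error has dropped below 80%, the rate never returns to 0.01; after that it follows the same step schedule as before (divide by 10 at 32k and 48k).

```python
class WarmupThenStep:
    """Learning-rate rule for the 110-layer model (a sketch, names hypothetical).

    Use 0.01 until the training error first drops below 80%, then switch
    to 0.1 and follow the usual step schedule (divide by 10 at 32k and 48k).
    """

    def __init__(self, warmup_lr: float = 0.01, base_lr: float = 0.1,
                 error_threshold: float = 0.80) -> None:
        self.warmup_lr = warmup_lr
        self.base_lr = base_lr
        self.error_threshold = error_threshold
        self.warmed_up = False

    def lr(self, iteration: int, train_error: float) -> float:
        # Once the threshold is crossed, never go back to the warm-up rate.
        if not self.warmed_up and train_error < self.error_threshold:
            self.warmed_up = True
        if not self.warmed_up:
            return self.warmup_lr
        if iteration >= 48_000:
            return self.base_lr / 100
        if iteration >= 32_000:
            return self.base_lr / 10
        return self.base_lr
```

In a training loop one would call `schedule.lr(it, current_train_error)` each iteration; with the behavior reported above, the switch to 0.1 happens after roughly 400 iterations.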

Analysis of Layer Responses. Fig. 7 shows the standard deviations (std) of the layer responses. The responses are the outputs of each 3×3 layer, after BN and before other nonlinearities (ReLU/addition). For ResNets, this analysis reveals the response strength of the residual functions. Fig. 7 shows that ResNets have generally smaller responses than their plain counterparts. These results support our basic motivation (Sec. 3.1) that the residual functions might be generally closer to zero than the non-residual functions. We also notice that the deeper ResNet has smaller magnitudes of responses, as evidenced by the comparisons among ResNet-20, 56, and 110 in Fig. 7. When there are more layers, an individual layer of ResNets tends to modify the signal less.

層響應分析。圖7顯示了層響應的標準差(std)。響應是每個3×3層在BN之後、其他非線性(ReLU/加法)之前的輸出。對於ResNet,這個分析揭示了殘差函數的響應強度。圖7顯示,ResNet的響應通常比對應的普通網路小。這些結果支持我們的基本動機(第3.1節),即殘差函數通常可能比非殘差函數更接近於零。我們還注意到,更深的ResNet具有更小的響應幅度,圖7中ResNet-20、56和110之間的比較證明了這一點。層數越多,ResNet中單個層對信號的修改往往越小。
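Once the per-layer responses have been collected (for example with forward hooks in whatever framework is used, not shown), the statistic itself is simple. The NumPy sketch below is an illustration, not the authors' analysis code: the name `response_stds` is hypothetical, and taking the std over all elements of each layer's output is an assumption. It returns the stds in layer order and ranked in descending order, the two views one would plot to compare plain and residual nets.

```python
import numpy as np


def response_stds(responses: list[np.ndarray]) -> tuple[np.ndarray, np.ndarray]:
    """Std of each 3x3 layer's response (after BN, before ReLU/addition).

    `responses` holds one array per layer, e.g. of shape (N, C, H, W),
    collected on test images. Returns the stds in original layer order
    and the same values sorted in descending order.
    """
    stds = np.array([float(r.std()) for r in responses])
    return stds, np.sort(stds)[::-1]


# Synthetic stand-in for three layers with different response strengths.
rng = np.random.default_rng(0)
layers = [rng.normal(0.0, s, size=(8, 4, 5, 5)) for s in (1.0, 0.5, 2.0)]
by_layer, ranked = response_stds(layers)
```

With real activations, the residual net's curves would be expected to sit below the plain net's if the observation above holds.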

Exploring Over 1000 layers. We explore an aggressively deep model of over 1000 layers. We set n = 200, which leads to a 1202-layer network that is trained as described above. Our method shows no optimization difficulty, and this 10³-layer network is able to achieve training error <0.1% (Fig. 6, right). Its test error is still fairly good (7.93%, Table 6).

探索超過1000層。我們探索了一個超過1000層的極深模型。我們設定n = 200,得到一個1202層的網路,並按上述方式訓練。我們的方法沒有表現出優化困難,這個10³層的網路能夠達到<0.1%的訓練誤差(圖6,右)。其測試誤差仍相當好(7.93%,表6)。

But there are still open problems on such aggressively deep models. The testing result of this 1202-layer network is worse than that of our 110-layer network, although both have similar training error. We argue that this is because of overfitting. The 1202-layer network may be unnecessarily large (19.4M parameters) for this small dataset. The best results on this dataset are obtained with strong regularization such as maxout [9] or dropout. In this paper, we use no maxout/dropout and simply impose regularization via deep and thin architectures by design, without distracting from the focus on the difficulties of optimization. Combining our models with stronger regularization may improve results, which we will study in the future.

但在這種激進的極深模型上,仍存在一些開放性問題。這個1202層網路的測試結果比我們的110層網路差,儘管兩者的訓練誤差相近。我們認爲這是過擬合造成的。對於這個小數據集來說,1202層網路(19.4M個參數)可能過大了。在這個數據集上,最佳結果是藉助maxout[9]或dropout等強正則化取得的。在本文中,我們沒有使用maxout/dropout,只是透過深而窄的架構設計來施加正則化,以免分散對優化困難這一重點的關注。但結合更強的正則化可能會改善結果,我們將在未來研究。
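The 19.4M figure can be sanity-checked by counting weights layer by layer. The function below is a sketch under explicit assumptions (it is not taken from the paper): 3×3 convolutions have no bias because each is followed by BN, every BN layer learns a scale and a shift per channel (running statistics are not counted), option-A shortcuts add no parameters, and the 10-way fully-connected layer has a bias. Under these assumptions, n = 200 gives about 19.4M parameters, and n = 18 (the 110-layer model) gives about 1.7M.

```python
def cifar_resnet_params(n: int, num_classes: int = 10) -> int:
    """Count trainable parameters of the 6n+2-layer CIFAR-10 net (a sketch).

    Assumptions: bias-free 3x3 convs, BN with 2 learned params per channel,
    parameter-free option-A shortcuts, and an FC layer with bias.
    """
    def conv_bn(c_in: int, c_out: int) -> int:
        # 3x3 kernel weights plus BN scale and shift for each output channel.
        return 3 * 3 * c_in * c_out + 2 * c_out

    total = conv_bn(3, 16)  # first 3x3 layer on the RGB input
    c_in = 16
    for filters in (16, 32, 64):
        for _ in range(2 * n):
            total += conv_bn(c_in, filters)
            c_in = filters
    total += 64 * num_classes + num_classes  # 10-way fully-connected layer
    return total


print(cifar_resnet_params(200), cifar_resnet_params(18))
```

Almost all of the weight sits in the last stage (64 filters), which is why the count grows roughly linearly with n.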

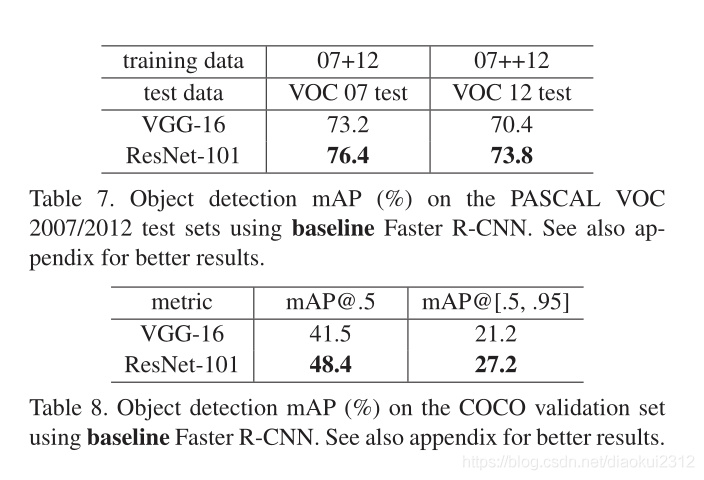

4.3. Object Detection on PASCAL and MS COCO

Our method has good generalization performance on other recognition tasks. Tables 7 and 8 show the object detection baseline results on PASCAL VOC 2007 and 2012 [5] and COCO [26]. We adopt Faster R-CNN [32] as the detection method. Here we are interested in the improvements of replacing VGG-16 [40] with ResNet-101. The detection implementation (see appendix) of using both models is the same, so the gains can only be attributed to better networks. Most remarkably, on the challenging COCO dataset we obtain an absolute 6.0% increase in COCO's standard metric (mAP@[.5, .95]), which is a 28% relative improvement. This gain is solely due to the learned representations.

我們的方法在其他識別任務上具有良好的泛化效能。表7和表8顯示了在PASCAL VOC 2007和2012[5]以及COCO[26]上的目標檢測基線結果。我們採用Faster R-CNN[32]作爲檢測方法。這裏我們關注用ResNet-101替換VGG-16[40]帶來的改進。兩種模型的檢測實現(見附錄)相同,因此增益只能歸因於更好的網路。最值得注意的是,在具有挑戰性的COCO數據集上,我們在COCO的標準指標(mAP@[.5, .95])上獲得了6.0%的絕對提升,即28%的相對改進。這一增益完全來自學習到的表徵。
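Two pieces of arithmetic sit behind the COCO claim: the metric mAP@[.5, .95] averages AP over the ten IoU thresholds 0.50, 0.55, ..., 0.95, and an absolute gain together with its relative gain pins down the baseline. The helpers below are hypothetical illustrations. `map_50_95` takes AP values that are already averaged over categories, one per threshold. From the text alone, a 6.0-point gain that is a 28% relative improvement implies a VGG-16 baseline of about 6.0 / 0.28 ≈ 21.4 mAP@[.5, .95]. This is approximate because 28% is rounded.

```python
def coco_iou_thresholds() -> list[float]:
    """IoU thresholds behind mAP@[.5, .95]: 0.50, 0.55, ..., 0.95 (10 values)."""
    return [round(0.5 + 0.05 * i, 2) for i in range(10)]


def map_50_95(ap_at_threshold: dict[float, float]) -> float:
    """Average category-averaged AP over the 10 COCO IoU thresholds."""
    return sum(ap_at_threshold[t] for t in coco_iou_thresholds()) / 10


def implied_baseline(absolute_gain: float, relative_gain: float) -> float:
    """Baseline score implied by an absolute gain and the matching relative gain."""
    return absolute_gain / relative_gain


print(round(implied_baseline(6.0, 0.28), 1))
```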

Based on deep residual nets, we won the 1st places in several tracks in ILSVRC & COCO 2015 competitions: ImageNet detection, ImageNet localization, COCO detection, and COCO segmentation. The details are in the appendix.

基於深度殘差網路,我們在ILSVRC & COCO 2015競賽的多個項目中獲得了第一名:ImageNet檢測、ImageNet定位、COCO檢測和COCO分割。詳情見附錄。