Firing Up the Furnace in the Cloud: Training and Inference with Bert-vits2-v2.2 Online (Based on Google Colab)

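If getting started with deep learning has a real barrier to entry, hardware cost is the part you cannot avoid. Deep learning models usually need substantial compute for both training and inference, and larger models and more complex datasets need even more to train effectively. Training them therefore typically calls for high-performance hardware such as a graphics processing unit (GPU) or a dedicated deep learning accelerator (such as a TPU). That puts off many people who don't have an NVIDIA card at home.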

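Google Colab is a free, cloud-based Jupyter notebook environment provided by Google. It makes it easy for beginners to experiment with machine learning and deep learning.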

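Although Google Colab offers a lot of convenient, free features, it also has limits. For example, the compute available to each session is limited, and sessions are disconnected automatically after a while. Colab usage is also subject to Google's restrictions and policies.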

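For a broke guy like me, Google Colab is a ray of light in the dark. Even with a cap on training time it gets the job done, and beggars can't be choosers. In this post we use Google Colab to train and run inference with the Bert-vits2-v2.2 project in the cloud, cloning the voice of Lilith from Diablo.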

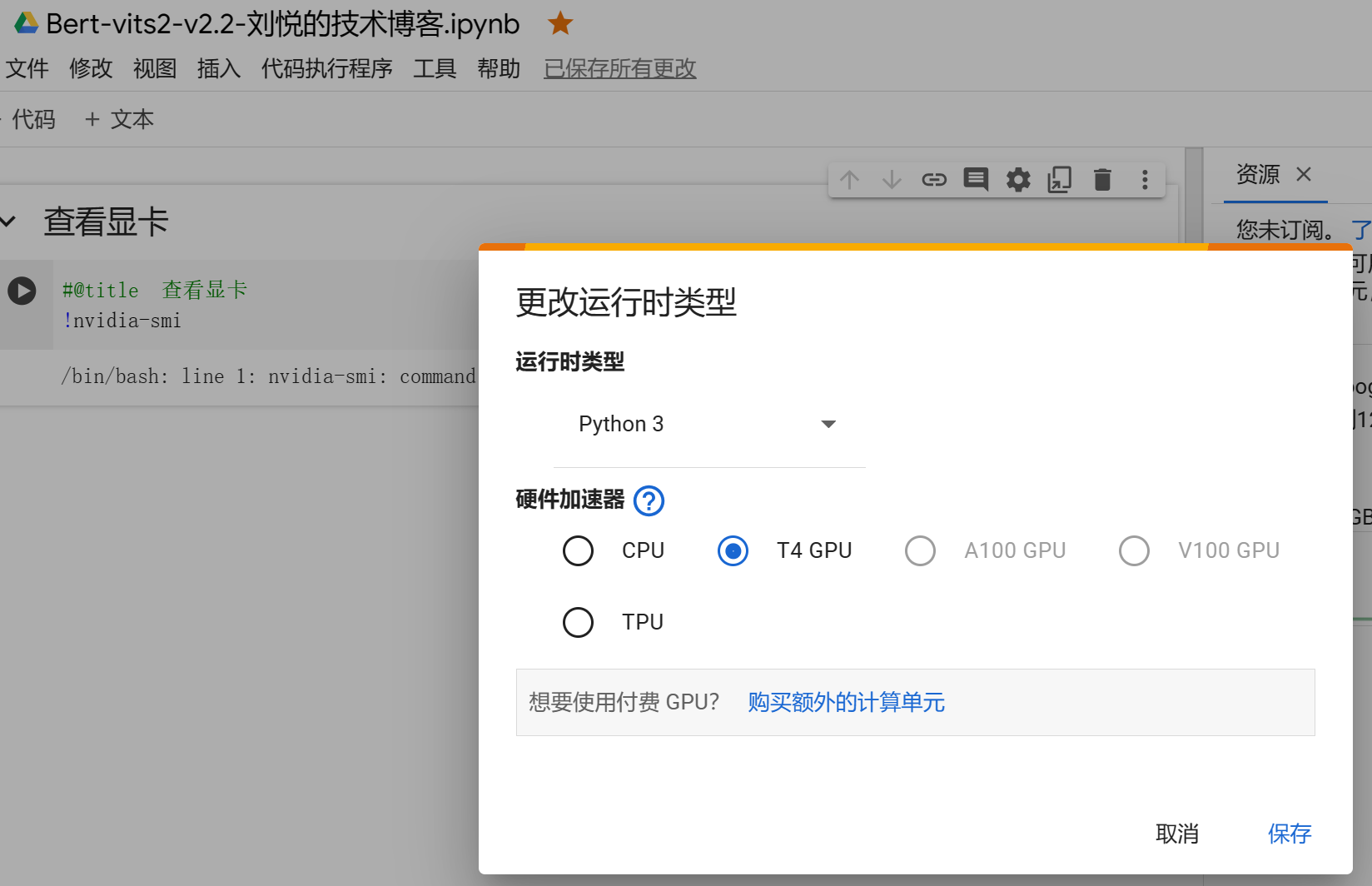

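Set up the cloud hardware

First, go to the Google Colab website:

https://colab.research.google.com/

Click New notebook and connect to a runtime.

Select T4 GPU as the hardware accelerator.

Then add a new code cell:

```
#@title Check the GPU
!nvidia-smi
```

Run the cell. It returns: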

```
Tue Dec 19 03:07:21 2023
+---------------------------------------------------------------------------------------+
| NVIDIA-SMI 535.104.05 Driver Version: 535.104.05 CUDA Version: 12.2 |
|-----------------------------------------+----------------------+----------------------+
| GPU Name Persistence-M | Bus-Id Disp.A | Volatile Uncorr. ECC |
| Fan Temp Perf Pwr:Usage/Cap | Memory-Usage | GPU-Util Compute M. |
| | | MIG M. |
|=========================================+======================+======================|
| 0 Tesla T4 Off | 00000000:00:04.0 Off | 0 |
| N/A 54C P8 10W / 70W | 0MiB / 15360MiB | 0% Default |
| | | N/A |
+-----------------------------------------+----------------------+----------------------+
+---------------------------------------------------------------------------------------+
| Processes: |
| GPU GI CI PID Type Process name GPU Memory |
| ID ID Usage |
|=======================================================================================|
| No running processes found |
+---------------------------------------------------------------------------------------+
```

A Turing-architecture Tesla T4 with 16 GB of VRAM (15360 MiB as reported above): for a free GPU, that is impressively generous.
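If you would rather check the GPU from Python, here is a minimal sketch that calls nvidia-smi in its CSV query mode (`--query-gpu=name,memory.total --format=csv,noheader,nounits`) and parses the result. The function names are hypothetical helpers, not part of Bert-vits2.

```python
import subprocess


def parse_gpu_csv(csv_text: str) -> list[tuple[str, int]]:
    """Parse `name, memory.total` rows (MiB, no units) into (name, MiB) tuples."""
    gpus = []
    for line in csv_text.strip().splitlines():
        name, mem_mib = (field.strip() for field in line.split(","))
        gpus.append((name, int(mem_mib)))
    return gpus


def query_gpus() -> list[tuple[str, int]]:
    """Run nvidia-smi in CSV mode; raises if nvidia-smi is missing or fails."""
    out = subprocess.run(
        ["nvidia-smi", "--query-gpu=name,memory.total",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return parse_gpu_csv(out)
```

In a Colab cell, `query_gpus()` on the T4 above should report the same 15360 MiB shown in the table, which is 15.0 GiB.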

Clone the code repository

Next, add a new cell:

```
#@title Clone the code repository
!git clone https://github.com/v3ucn/Bert-vits2-V2.2.git
```

Output:

```
Cloning into 'Bert-vits2-V2.2'...
remote: Enumerating objects: 310, done.
remote: Counting objects: 100% (310/310), done.
remote: Compressing objects: 100% (210/210), done.
remote: Total 310 (delta 97), reused 294 (delta 81), pack-reused 0
Receiving objects: 100% (310/310), 12.84 MiB | 18.95 MiB/s, done.
Resolving deltas: 100% (97/97), done.
```

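Install the dependencies

Add a cell that installs the dependencies:

```
#@title Install the dependencies
%cd /content/Bert-vits2-V2.2
!pip install -r requirements.txt
```

Installing the dependencies takes a while, so be patient.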
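Before moving on, you can confirm the install actually succeeded. This is a rough sketch, not something the repo provides: it pulls package names out of simple `name==version`-style lines in requirements.txt and asks `importlib.metadata` whether each one is installed. Lines it cannot interpret simply (URL installs, pip options such as `-r`) are skipped.

```python
import re
from importlib.metadata import PackageNotFoundError, version


def requirement_names(lines):
    """Extract bare package names from simple requirements.txt lines."""
    names = []
    for raw in lines:
        line = raw.split("#", 1)[0].strip()  # drop inline comments
        if not line or line.startswith("-") or "://" in line:
            continue  # skip blanks, pip options (-r, -e) and URL installs
        # The name ends at the first version specifier, extra, marker or space.
        match = re.match(r"[A-Za-z0-9][A-Za-z0-9._-]*", line)
        if match:
            names.append(match.group(0))
    return names


def missing_packages(names):
    """Return the names importlib.metadata cannot find among installed distributions."""
    missing = []
    for name in names:
        try:
            version(name)
        except PackageNotFoundError:
            missing.append(name)
    return missing
```

Opening requirements.txt and passing the file to `requirement_names`, then the result to `missing_packages`, should give an empty list after a successful install.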

Download the required models

Next, download the required models, which include the BERT models and the emotion models:

```
#@title Download the required models
!wget -P emotional/clap-htsat-fused/ https://huggingface.co/laion/clap-htsat-fused/resolve/main/pytorch_model.bin
!wget -P emotional/wav2vec2-large-robust-12-ft-emotion-msp-dim/ https://huggingface.co/audeering/wav2vec2-large-robust-12-ft-emotion-msp-dim/resolve/main/pytorch_model.bin
!wget -P bert/chinese-roberta-wwm-ext-large/ https://huggingface.co/hfl/chinese-roberta-wwm-ext-large/resolve/main/pytorch_model.bin
!wget -P bert/bert-base-japanese-v3/ https://huggingface.co/cl-tohoku/bert-base-japanese-v3/resolve/main/pytorch_model.bin
!wget -P bert/deberta-v3-large/ https://huggingface.co/microsoft/deberta-v3-large/resolve/main/pytorch_model.bin
!wget -P bert/deberta-v3-large/ https://huggingface.co/microsoft/deberta-v3-large/resolve/main/pytorch_model.generator.bin
!wget -P bert/deberta-v2-large-japanese/ https://huggingface.co/ku-nlp/deberta-v2-large-japanese/resolve/main/pytorch_model.bin
```

If you only need Chinese speech for inference, the first three models (clap-htsat-fused, wav2vec2-large-robust-12-ft-emotion-msp-dim and chinese-roberta-wwm-ext-large) are enough.
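Every wget call above follows Hugging Face's `https://huggingface.co/<repo>/resolve/main/<file>` download pattern. As an alternative sketch using only the standard library, the table below restates those seven downloads, and a hypothetical `chinese_only` flag keeps just the first three, per the note above. The helper names are illustrative, not part of the project.

```python
import os
import urllib.request

# (target directory, Hugging Face repo id, file name), in the same order as the wget cell.
MODELS = [
    ("emotional/clap-htsat-fused", "laion/clap-htsat-fused", "pytorch_model.bin"),
    ("emotional/wav2vec2-large-robust-12-ft-emotion-msp-dim",
     "audeering/wav2vec2-large-robust-12-ft-emotion-msp-dim", "pytorch_model.bin"),
    ("bert/chinese-roberta-wwm-ext-large", "hfl/chinese-roberta-wwm-ext-large", "pytorch_model.bin"),
    ("bert/bert-base-japanese-v3", "cl-tohoku/bert-base-japanese-v3", "pytorch_model.bin"),
    ("bert/deberta-v3-large", "microsoft/deberta-v3-large", "pytorch_model.bin"),
    ("bert/deberta-v3-large", "microsoft/deberta-v3-large", "pytorch_model.generator.bin"),
    ("bert/deberta-v2-large-japanese", "ku-nlp/deberta-v2-large-japanese", "pytorch_model.bin"),
]


def resolve_url(repo_id: str, filename: str, revision: str = "main") -> str:
    """Build the direct-download URL used by the wget commands."""
    return f"https://huggingface.co/{repo_id}/resolve/{revision}/{filename}"


def download_models(chinese_only: bool = False) -> None:
    """Fetch each file into its directory, skipping files that already exist."""
    entries = MODELS[:3] if chinese_only else MODELS
    for target_dir, repo_id, filename in entries:
        os.makedirs(target_dir, exist_ok=True)
        dest = os.path.join(target_dir, filename)
        if os.path.exists(dest):
            # Plain wget would save a second copy as pytorch_model.bin.1 instead.
            continue
        urllib.request.urlretrieve(resolve_url(repo_id, filename), dest)
```

Run it from /content/Bert-vits2-V2.2 so the relative directories match the wget version.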

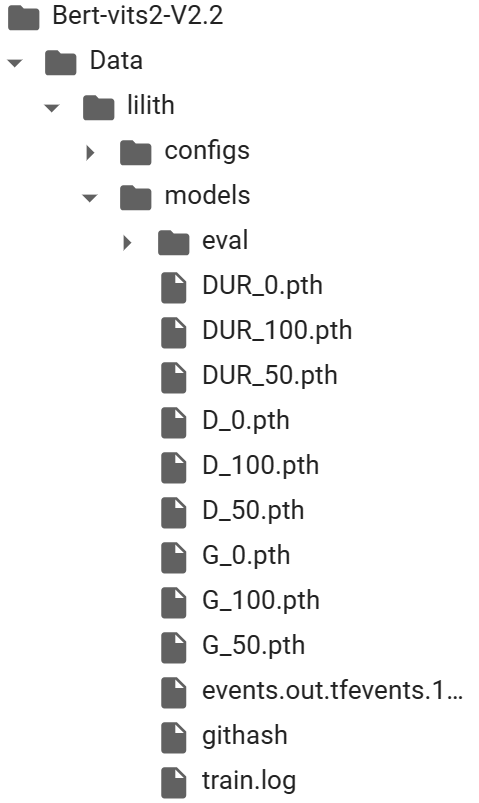

Download the base model files

Then download the Bert-vits2-v2.2 base models:

```
#@title Download the base model files
!wget -P Data/lilith/models/ https://huggingface.co/OedoSoldier/Bert-VITS2-2.2-CLAP/resolve/main/DUR_0.pth
!wget -P Data/lilith/models/ https://huggingface.co/OedoSoldier/Bert-VITS2-2.2-CLAP/resolve/main/D_0.pth
!wget -P Data/lilith/models/ https://huggingface.co/OedoSoldier/Bert-VITS2-2.2-CLAP/resolve/main/G_0.pth
```

Note that the base models must go in the character's models directory (Data/lilith/models/ here), and that they must be the 2.2 version.
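A small check, assuming the layout above, that all three base models actually landed in `Data/lilith/models/` before training starts. `missing_base_models` is a hypothetical helper; it treats an empty file as missing, on the assumption that such a file came from an interrupted download.

```python
from pathlib import Path

BASE_MODELS = ("DUR_0.pth", "D_0.pth", "G_0.pth")


def missing_base_models(models_dir: str) -> list[str]:
    """List the base-model files that are absent (or empty) in the models directory."""
    folder = Path(models_dir)
    missing = []
    for name in BASE_MODELS:
        path = folder / name
        # Treat an empty file (e.g. from an interrupted download) as missing too.
        if not path.is_file() or path.stat().st_size == 0:
            missing.append(name)
    return missing
```

`missing_base_models("Data/lilith/models")` should return `[]` once the three downloads have finished.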

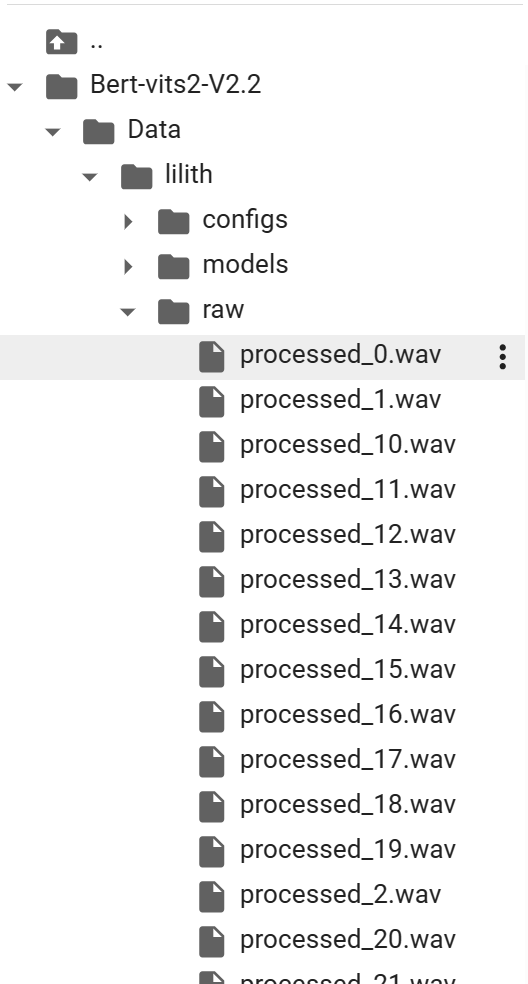

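Upload the audio clips and resample

Next, open the file browser, right-click the lilith directory and create a new folder named raw, then right-click raw and choose Upload to put the audio clips in the cloud.

Also right-click the project's lilith directory (Data/lilith) and upload the transcription file esd.list. Each line has the form audio path|speaker|language|text: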

```
./Data/lilith/wavs/processed_0.wav|lilith|ZH|信仰,叫你們要否定心中的慾望。
./Data/lilith/wavs/processed_1.wav|lilith|ZH|把你們囚禁在自己的身體裡
./Data/lilith/wavs/processed_2.wav|lilith|ZH|聖修雅瑞之母
./Data/lilith/wavs/processed_3.wav|lilith|ZH|我有你要的東西
./Data/lilith/wavs/processed_4.wav|lilith|ZH|你渴望知識
./Data/lilith/wavs/processed_5.wav|lilith|ZH|不惜帶著孩子尋遍聖修雅瑞
./Data/lilith/wavs/processed_6.wav|lilith|ZH|這話你真的相信嗎
./Data/lilith/wavs/processed_7.wav|lilith|ZH|不必再裝了
./Data/lilith/wavs/processed_8.wav|lilith|ZH|你有問題,我有答案
./Data/lilith/wavs/processed_9.wav|lilith|ZH|我洞悉整個宇宙的真理
./Data/lilith/wavs/processed_10.wav|lilith|ZH|你看了那麼多
./Data/lilith/wavs/processed_11.wav|lilith|ZH|知道的卻那麼少
./Data/lilith/wavs/processed_12.wav|lilith|ZH|打碎枷鎖
./Data/lilith/wavs/processed_13.wav|lilith|ZH|你願意接受我的提議嗎?
./Data/lilith/wavs/processed_14.wav|lilith|ZH|你很好奇想知道我
./Data/lilith/wavs/processed_15.wav|lilith|ZH|為什麼饒了你的命
./Data/lilith/wavs/processed_16.wav|lilith|ZH|你相信我嗎
./Data/lilith/wavs/processed_17.wav|lilith|ZH|很好,現在你只需要知道。
./Data/lilith/wavs/processed_18.wav|lilith|ZH|我們要去見我兒子
./Data/lilith/wavs/processed_19.wav|lilith|ZH|是的,但不止如此。
./Data/lilith/wavs/processed_20.wav|lilith|ZH|他還是我計劃的關鍵
./Data/lilith/wavs/processed_21.wav|lilith|ZH|雖然我無法預料
./Data/lilith/wavs/processed_22.wav|lilith|ZH|在新世界裡你是否願意站在我身邊
./Data/lilith/wavs/processed_23.wav|lilith|ZH|找出自己真正的本性
./Data/lilith/wavs/processed_25.wav|lilith|ZH|可是我還是會為你
./Data/lilith/wavs/processed_27.wav|lilith|ZH|但現在所有的可能性
./Data/lilith/wavs/processed_28.wav|lilith|ZH|統統被奪走了
./Data/lilith/wavs/processed_29.wav|lilith|ZH|奪走啊
./Data/lilith/wavs/processed_30.wav|lilith|ZH|這把鑰匙能開啟的不僅是地獄的大門
./Data/lilith/wavs/processed_31.wav|lilith|ZH|也會開啟我們的未來
./Data/lilith/wavs/processed_32.wav|lilith|ZH|因為你的犧牲才得以實現到未來
./Data/lilith/wavs/processed_33.wav|lilith|ZH|打碎枷鎖
./Data/lilith/wavs/processed_34.wav|lilith|ZH|接受美麗的罪惡
./Data/lilith/wavs/processed_35.wav|lilith|ZH|這就是第一批
./Data/lilith/wavs/processed_36.wav|lilith|ZH|腦筋動得很快
./Data/lilith/wavs/processed_37.wav|lilith|ZH|沒錯,我正是莉莉絲。
```
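Because every entry must follow the `path|speaker|language|text` layout, a malformed line is easy to catch before preprocessing. This is a hypothetical validator, not code from the repo; the accepted language tags `ZH`, `JP` and `EN` are an assumption about what Bert-vits2 expects, so adjust them if your version differs.

```python
from typing import NamedTuple


class Utterance(NamedTuple):
    wav_path: str
    speaker: str
    language: str
    text: str


# Assumed set of language tags; ZH is the one used in this post.
LANGUAGES = {"ZH", "JP", "EN"}


def parse_esd_line(line: str) -> Utterance:
    """Split one `path|speaker|language|text` line and sanity-check its fields."""
    parts = line.rstrip("\n").split("|")
    if len(parts) != 4:
        raise ValueError(f"expected 4 '|'-separated fields, got {len(parts)}: {line!r}")
    wav_path, speaker, language, text = (p.strip() for p in parts)
    if not wav_path.endswith(".wav"):
        raise ValueError(f"not a .wav path: {wav_path!r}")
    if language not in LANGUAGES:
        raise ValueError(f"unexpected language tag: {language!r}")
    return Utterance(wav_path, speaker, language, text)


def load_esd(path: str) -> list[Utterance]:
    """Parse every non-blank line of an esd.list-style file."""
    with open(path, encoding="utf-8") as f:
        return [parse_esd_line(line) for line in f if line.strip()]
```

`load_esd("Data/lilith/esd.list")` should return 36 entries for the list above, or raise on the first bad line.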

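For how to slice, transcribe and label the audio, see my earlier post, "Local Training, Ready While You Wait: Cloning Taylor Swift's Voice Speaking Chinese from 30 Seconds of Audio with Bert-VITS2 V2.0.2". For reasons of space I won't go over it again here.

Once the audio slices and the transcription file have uploaded successfully, add a new cell:

```
#@title Resample
!python3 resample.py --sr 44100 --in_dir ./Data/lilith/raw/ --out_dir ./Data/lilith/wavs/
```

This resamples the clips in ./Data/lilith/raw/ to 44.1 kHz and writes them to ./Data/lilith/wavs/, which is where the paths in esd.list point.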
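To confirm the resampling worked, you can read each output file's header with the standard-library `wave` module. A sketch with hypothetical helper names; it assumes resample.py writes plain PCM WAV, since `wave` rejects some other encodings such as floating-point WAV.

```python
import wave
from pathlib import Path


def sample_rates(wav_dir: str) -> dict[str, int]:
    """Map each .wav file in wav_dir to the sample rate stored in its header."""
    rates = {}
    for path in sorted(Path(wav_dir).glob("*.wav")):
        with wave.open(str(path), "rb") as wf:
            rates[path.name] = wf.getframerate()
    return rates


def wrong_rate(wav_dir: str, expected: int = 44100) -> list[str]:
    """List files whose rate differs from `expected` (44100 matches --sr above)."""
    return [name for name, sr in sample_rates(wav_dir).items() if sr != expected]
```

After the cell above, `wrong_rate("Data/lilith/wavs")` should come back empty.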

Preprocess the label file

Next, add a new cell:

```
#@title Preprocess the label file
!python3 preprocess_text.py --transcription-path ./Data/lilith/esd.list --train-path ./Data/lilith/train.list --val-path ./Data/lilith/val.list --config-path ./Data/lilith/configs/config.json
```

Output:

```
pytorch_model.bin: 100% 1.32G/1.32G [00:26<00:00, 49.4MB/s]
spm.model: 100% 2.46M/2.46M [00:00<00:00, 131MB/s]
The cache for model files in Transformers v4.22.0 has been updated. Migrating your old cache. This is a one-time only operation. You can interrupt this and resume the migration later on by calling `transformers.utils.move_cache()`.
0it [00:00, ?it/s]
[nltk_data] Downloading package averaged_perceptron_tagger to
[nltk_data] /root/nltk_data...
[nltk_data] Unzipping taggers/averaged_perceptron_tagger.zip.
[nltk_data] Downloading package cmudict to /root/nltk_data...
[nltk_data] Unzipping corpora/cmudict.zip.
Ignored unknown kwarg option normalize
Ignored unknown kwarg option normalize
Ignored unknown kwarg option normalize
Ignored unknown kwarg option normalize
Some weights of EmotionModel were not initialized from the model checkpoint at ./emotional/wav2vec2-large-robust-12-ft-emotion-msp-dim and are newly initialized: ['wav2vec2.encoder.pos_conv_embed.conv.parametrizations.weight.original1', 'wav2vec2.encoder.pos_conv_embed.conv.parametrizations.weight.original0']
You should probably TRAIN this model on a down-stream task to be able to use it for predictions and inference.
0% 0/36 [00:00<?, ?it/s]Building prefix dict from the default dictionary ...
Dumping model to file cache /tmp/jieba.cache
Loading model cost 0.686 seconds.
Prefix dict has been built successfully.
100% 36/36 [00:00<00:00, 40.28it/s]
總重複音訊數:0,總未找到的音訊數:0
訓練集和驗證集生成完成!
```

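The last two log lines report 0 duplicate and 0 missing audio files and confirm that the splits were written: the training and validation sets, train.list and val.list, now exist in the lilith directory.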
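The log's two counters (duplicate audio, missing audio) hint at what this step does. The sketch below is a simplified, hypothetical illustration, not preprocess_text.py's actual code: it drops duplicate paths and lines whose audio file is missing, then holds out a fixed number of utterances per speaker for validation. The default of 4 per speaker is an assumption that happens to match the 32/4 split the training log reports.

```python
import os
import random


def split_train_val(lines, val_per_speaker=4, seed=1234, check_files=True):
    """Hypothetical split: dedupe, drop missing audio, hold out N lines per speaker.

    Each line is `path|speaker|language|text`. Returns (train, val, n_dup, n_missing).
    """
    seen, by_speaker = set(), {}
    n_dup = n_missing = 0
    for line in lines:
        line = line.strip()
        if not line:
            continue
        wav_path, speaker = line.split("|")[:2]
        if wav_path in seen:
            n_dup += 1  # same audio listed twice
            continue
        seen.add(wav_path)
        if check_files and not os.path.isfile(wav_path):
            n_missing += 1  # transcription points at audio that does not exist
            continue
        by_speaker.setdefault(speaker, []).append(line)

    rng = random.Random(seed)  # fixed seed so the split is reproducible
    train, val = [], []
    for speaker_lines in by_speaker.values():
        rng.shuffle(speaker_lines)
        val.extend(speaker_lines[:val_per_speaker])
        train.extend(speaker_lines[val_per_speaker:])
    return train, val, n_dup, n_missing
```

With the 36 lines of esd.list and one speaker, this yields 32 training and 4 validation entries.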

Generate the BERT feature files

Next, add a new cell:

```
#@title Generate BERT feature files
!python3 bert_gen.py --config-path ./Data/lilith/configs/config.json
```

Output:

```
0% 0/36 [00:00<?, ?it/s]Some weights of the model checkpoint at ./bert/chinese-roberta-wwm-ext-large were not used when initializing BertForMaskedLM: ['bert.pooler.dense.bias', 'bert.pooler.dense.weight', 'cls.seq_relationship.bias', 'cls.seq_relationship.weight']
- This IS expected if you are initializing BertForMaskedLM from the checkpoint of a model trained on another task or with another architecture (e.g. initializing a BertForSequenceClassification model from a BertForPreTraining model).
- This IS NOT expected if you are initializing BertForMaskedLM from the checkpoint of a model that you expect to be exactly identical (initializing a BertForSequenceClassification model from a BertForSequenceClassification model).
Some weights of the model checkpoint at ./bert/chinese-roberta-wwm-ext-large were not used when initializing BertForMaskedLM: ['bert.pooler.dense.bias', 'bert.pooler.dense.weight', 'cls.seq_relationship.bias', 'cls.seq_relationship.weight']
- This IS expected if you are initializing BertForMaskedLM from the checkpoint of a model trained on another task or with another architecture (e.g. initializing a BertForSequenceClassification model from a BertForPreTraining model).
- This IS NOT expected if you are initializing BertForMaskedLM from the checkpoint of a model that you expect to be exactly identical (initializing a BertForSequenceClassification model from a BertForSequenceClassification model).
100% 36/36 [00:21<00:00, 1.67it/s]
bert生成完畢!, 共有36個bert.pt生成!
```

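The last line reports 36 bert.pt files in total, matching the number of audio clips.

Generate the CLAP feature files

Finally, generate the CLAP emotion feature files:

```
#@title Generate CLAP feature files
#!wget -P emotional/clap-htsat-fused/ https://huggingface.co/laion/clap-htsat-fused/resolve/main/pytorch_model.bin
!python3 clap_gen.py --config-path ./Data/lilith/configs/config.json
```

Output: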

```
/content/Bert-vits2-V2.2/clap_gen.py:34: FutureWarning: Pass sr=48000 as keyword args. From version 0.10 passing these as positional arguments will result in an error
audio = librosa.load(wav_path, 48000)[0]
0% 0/36 [00:00<?, ?it/s]/content/Bert-vits2-V2.2/clap_gen.py:34: FutureWarning: Pass sr=48000 as keyword args. From version 0.10 passing these as positional arguments will result in an error
audio = librosa.load(wav_path, 48000)[0]
/content/Bert-vits2-V2.2/clap_gen.py:34: FutureWarning: Pass sr=48000 as keyword args. From version 0.10 passing these as positional arguments will result in an error
audio = librosa.load(wav_path, 48000)[0]
/content/Bert-vits2-V2.2/clap_gen.py:34: FutureWarning: Pass sr=48000 as keyword args. From version 0.10 passing these as positional arguments will result in an error
audio = librosa.load(wav_path, 48000)[0]
100% 36/36 [00:44<00:00, 1.23s/it]
clap生成完畢!, 共有36個emo.pt生成!
```

Again 36, so each clip needs exactly one BERT feature file (bert.pt) and one CLAP feature file (emo.pt).
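A quick way to verify that one-to-one pairing. This sketch assumes each feature file sits next to its wav as `<name>.bert.pt` and `<name>.emo.pt`; the log only mentions bert.pt and emo.pt, so check the actual file names in `Data/lilith/wavs/` first. `missing_features` is a hypothetical helper.

```python
from pathlib import Path


def missing_features(wav_dir: str, suffixes=(".bert.pt", ".emo.pt")) -> dict[str, list[str]]:
    """For each feature suffix, list the .wav files that have no matching feature file."""
    wavs = sorted(Path(wav_dir).glob("*.wav"))
    report = {}
    for suffix in suffixes:
        # Assumed naming: processed_0.wav -> processed_0.bert.pt / processed_0.emo.pt
        report[suffix] = [w.name for w in wavs if not w.with_name(w.stem + suffix).is_file()]
    return report
```

If both lists in the returned dictionary are empty, every clip has its BERT and CLAP features.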

Start training

With everything in place, start training:

```
#@title Start training
!python3 train_ms.py
```

Output:

```
2023-12-19 03:17:48.852966: E external/local_xla/xla/stream_executor/cuda/cuda_dnn.cc:9261] Unable to register cuDNN factory: Attempting to register factory for plugin cuDNN when one has already been registered
2023-12-19 03:17:48.853057: E external/local_xla/xla/stream_executor/cuda/cuda_fft.cc:607] Unable to register cuFFT factory: Attempting to register factory for plugin cuFFT when one has already been registered
2023-12-19 03:17:48.992178: E external/local_xla/xla/stream_executor/cuda/cuda_blas.cc:1515] Unable to register cuBLAS factory: Attempting to register factory for plugin cuBLAS when one has already been registered
2023-12-19 03:17:49.268092: I tensorflow/core/platform/cpu_feature_guard.cc:182] This TensorFlow binary is optimized to use available CPU instructions in performance-critical operations.
To enable the following instructions: AVX2 FMA, in other operations, rebuild TensorFlow with the appropriate compiler flags.
2023-12-19 03:17:51.369993: W tensorflow/compiler/tf2tensorrt/utils/py_utils.cc:38] TF-TRT Warning: Could not find TensorRT
載入config中的設定localhost
載入config中的設定10086
載入config中的設定1
載入config中的設定0
載入config中的設定0
載入環境變數
MASTER_ADDR: localhost,
MASTER_PORT: 10086,
WORLD_SIZE: 1,
RANK: 0,
LOCAL_RANK: 0
12-19 03:17:55 INFO | data_utils.py:66 | Init dataset...
100% 32/32 [00:00<00:00, 51901.67it/s]
12-19 03:17:55 INFO | data_utils.py:81 | skipped: 0, total: 32
12-19 03:17:55 INFO | data_utils.py:66 | Init dataset...
100% 4/4 [00:00<00:00, 34100.03it/s]
12-19 03:17:55 INFO | data_utils.py:81 | skipped: 0, total: 4
Using noise scaled MAS for VITS2
Using duration discriminator for VITS2
INFO:models:Loaded checkpoint 'Data/lilith/models/DUR_0.pth' (iteration 0)
ERROR:models:emb_g.weight is not in the checkpoint
INFO:models:Loaded checkpoint 'Data/lilith/models/G_0.pth' (iteration 0)
INFO:models:Loaded checkpoint 'Data/lilith/models/D_0.pth' (iteration 0)
******************檢測到模型存在,epoch為 1,gloabl step為 0*********************
0% 0/8 [00:00<?, ?it/s][W reducer.cpp:1346] Warning: find_unused_parameters=True was specified in DDP constructor, but did not find any unused parameters in the forward pass. This flag results in an extra traversal of the autograd graph every iteration, which can adversely affect performance. If your model indeed never has any unused parameters in the forward pass, consider turning this flag off. Note that this warning may be a false positive if your model has flow control causing later iterations to have unused parameters. (function operator())
INFO:models:Train Epoch: 1 [0%]
INFO:models:[2.78941011428833, 2.49017596244812, 5.66870641708374, 25.731149673461914, 4.624840259552002, 3.6382224559783936, 0, 0.0002]
Evaluating ...
INFO:models:Saving model and optimizer state at iteration 1 to Data/lilith/models/G_0.pth
INFO:models:Saving model and optimizer state at iteration 1 to Data/lilith/models/D_0.pth
INFO:models:Saving model and optimizer state at iteration 1 to Data/lilith/models/DUR_0.pth
100% 8/8 [00:40<00:00, 5.05s/it]
INFO:models:====> Epoch: 1
100% 8/8 [00:09<00:00, 1.20s/it]
INFO:models:====> Epoch: 2
100% 8/8 [00:09<00:00, 1.23s/it]
INFO:models:====> Epoch: 3
100% 8/8 [00:09<00:00, 1.24s/it]
INFO:models:====> Epoch: 4
100% 8/8 [00:09<00:00, 1.25s/it]
INFO:models:====> Epoch: 5
100% 8/8 [00:10<00:00, 1.26s/it]
INFO:models:====> Epoch: 6
25% 2/8 [00:02<00:08, 1.41s/it]INFO:models:Train Epoch: 7 [25%]
```

Training has now started on top of the base model.
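The log lets us sanity-check the step counts. With 32 training utterances each epoch has 8 steps, which implies an effective batch size of 4 (inferred from the log, not read from config.json). A minimal sketch of the arithmetic, assuming a fixed batch size with the last partial batch kept; Bert-vits2's bucketing sampler can shift the exact count, so treat the result as an estimate.

```python
import math


def steps_per_epoch(n_train: int, batch_size: int) -> int:
    """Batches per epoch when the final partial batch is kept."""
    return math.ceil(n_train / batch_size)


def epoch_of_step(global_step: int, n_train: int, batch_size: int) -> int:
    """1-based epoch in which a 0-based global step falls (the log starts at step 0, epoch 1)."""
    return global_step // steps_per_epoch(n_train, batch_size) + 1
```

With these numbers, global step 100 falls in epoch 100 // 8 + 1 = 13, consistent with the `(iteration 13)` printed when G_100.pth is loaded for inference. At roughly 1.2 s per step in the log above, 100 steps take about two minutes of pure training time.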

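Online inference

After 100 training steps we can check how the voice sounds so far.

First, make sure the model path in config.yml in the project root matches the checkpoint you want to load: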

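```yaml
# webui settings
# Note: a space is required after ":"
webui:
  # Inference device
  device: "cuda"
  # Model path
  model: "models/G_100.pth"
  # Config file path
  config_path: "configs/config.json"
  # Port number
  port: 7860
  # Whether to deploy publicly (open to the internet)
  share: false
  # Whether to enable debug mode
  debug: false
  # Language identification library: langid or fastlid
  language_identification_library: "langid"
```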

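Here the model parameter is set to models/G_100.pth. The path is relative to the character's directory: the log below shows it loading Data/lilith/models/G_100.pth.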
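Rather than editing the path by hand after every run, a small helper could pick the newest generator checkpoint and write it into the `webui` section. This is a hypothetical sketch using plain line processing so that config.yml's comments survive; it only rewrites a `model:` line inside the top-level `webui:` block, because other sections of config.yml may have their own `model` key.

```python
import re
from pathlib import Path
from typing import Optional


def latest_generator(models_dir: str) -> Optional[str]:
    """Return 'models/G_<highest step>.pth' for the newest generator, or None."""
    steps = []
    for path in Path(models_dir).glob("G_*.pth"):
        match = re.fullmatch(r"G_(\d+)\.pth", path.name)
        if match:
            steps.append(int(match.group(1)))
    # Written relative to the character directory, like the config above.
    return f"models/G_{max(steps)}.pth" if steps else None


def set_webui_model(yml_text: str, model_path: str) -> str:
    """Rewrite the `model:` line inside the top-level `webui:` block, keeping comments."""
    out, in_webui = [], False
    for line in yml_text.splitlines(keepends=True):
        stripped = line.lstrip()
        is_top_level_key = (
            bool(line.strip()) and not line[0].isspace() and not stripped.startswith("#")
        )
        if is_top_level_key:
            # A new top-level section starts; only `webui:` enables rewriting.
            in_webui = stripped.startswith("webui:")
        elif in_webui and stripped.startswith("model:"):
            indent = line[: len(line) - len(stripped)]
            ending = "\n" if line.endswith("\n") else ""
            line = f'{indent}model: "{model_path}"{ending}'
        out.append(line)
    return "".join(out)
```

For example, read config.yml, check that `latest_generator("Data/lilith/models")` did not return `None`, pass both to `set_webui_model`, and write the result back before launching webui.py.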

Then add a new cell:

```
#@title Start inference
!python3 webui.py
```

Output:

```
Ignored unknown kwarg option normalize
Ignored unknown kwarg option normalize
Ignored unknown kwarg option normalize
Ignored unknown kwarg option normalize
Some weights of EmotionModel were not initialized from the model checkpoint at ./emotional/wav2vec2-large-robust-12-ft-emotion-msp-dim and are newly initialized: ['wav2vec2.encoder.pos_conv_embed.conv.parametrizations.weight.original0', 'wav2vec2.encoder.pos_conv_embed.conv.parametrizations.weight.original1']
You should probably TRAIN this model on a down-stream task to be able to use it for predictions and inference.
| numexpr.utils | INFO | NumExpr defaulting to 2 threads.
/usr/local/lib/python3.10/dist-packages/torch/nn/utils/weight_norm.py:30: UserWarning: torch.nn.utils.weight_norm is deprecated in favor of torch.nn.utils.parametrizations.weight_norm.
warnings.warn("torch.nn.utils.weight_norm is deprecated in favor of torch.nn.utils.parametrizations.weight_norm.")
| utils | INFO | Loaded checkpoint 'Data/lilith/models/G_100.pth' (iteration 13)
推理頁面已開啟!
Running on local URL: http://127.0.0.1:7860
Running on public URL: https://40b8695e0a18b0e2eb.gradio.live
```

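Two addresses are printed, a local one and a public one. Open the public address, https://40b8695e0a18b0e2eb.gradio.live, to run inference; the public link is generated fresh on each launch, so yours will be different.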

Finally, here is the Google Colab notebook:

https://colab.research.google.com/drive/1LgewU9jevSovP9NTuqTtoxDop3qeWWKK?usp=sharing

Cheers to you all.