OpenCV4之特徵提取與物件檢測

2023-07-27 18:00:53

1、影象特徵概述

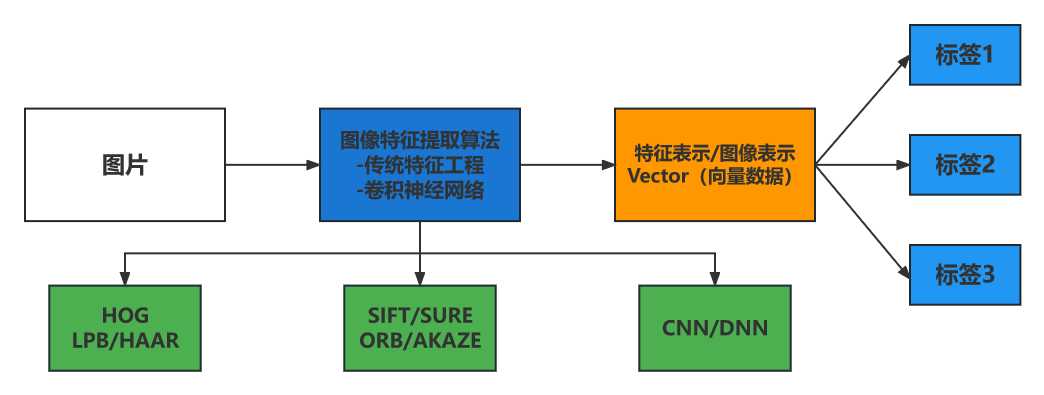

影象特徵的定義與表示

影象特徵表示是該影象唯一的表述,是影象的DNA

影象特徵提取概述

- 傳統影象特徵提取 - 主要基於紋理、角點、顏色分佈、梯度、邊緣等

- 深度折積神經網路特徵提取 - 基於監督學習、自動提取特徵

- 特徵資料/特徵屬性

-

- 尺度空間不變性

- 畫素遷移不變性

- 光照一致性原則

- 旋轉不變性原則

影象特徵應用

影象分類、物件識別、特徵檢測、影象對齊/匹配、物件檢測、影象搜尋/比對

- 影象處理:從影象到影象

- 特徵提取:從影象到向量(資料)

2、角點檢測

-

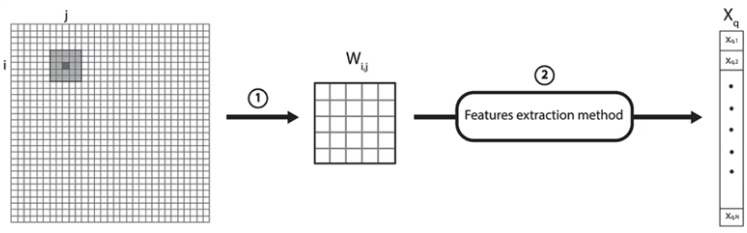

什麼是角點

-

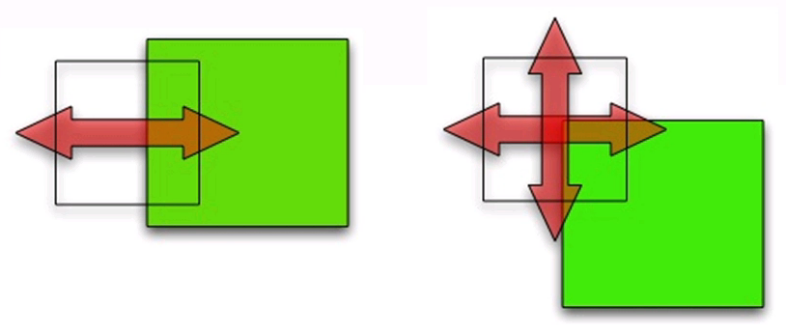

各個方向的梯度變化

-

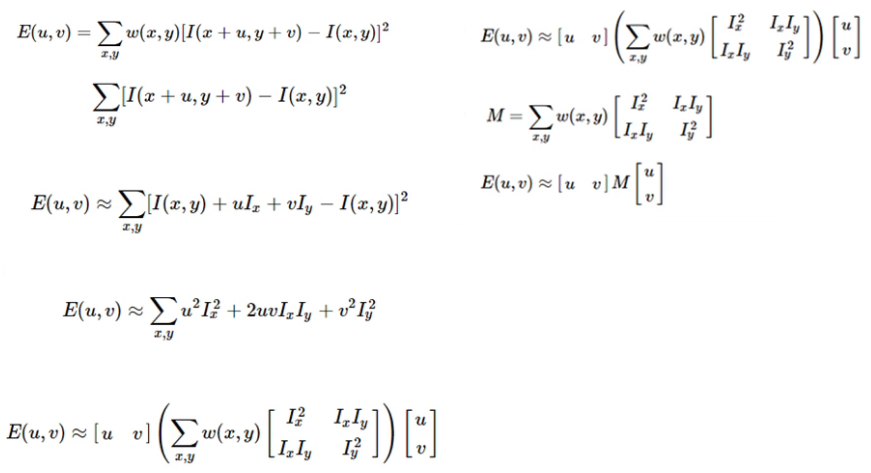

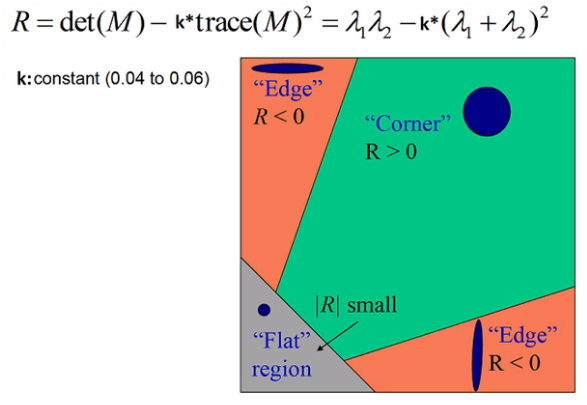

Harris角點檢測演演算法

//函數說明: void cv::cornerHarris( InputArray src, //輸入 OutputArray dst, //輸出 int blockSize, //塊大小 int ksize, //Sobel double k, //常數係數 int borderType = BORDER_DEFAULT // ) -

Shi-tomas角點檢測演演算法

//函數說明: void cv::goodFeaturesToTrack( InputArray image, //輸入影象 OutputArray corners, //輸出的角點座標 int maxCorners, //最大角點數目 double qualityLevel, //質量控制,即λ1與λ2的最小閾值 double minDistance, //重疊控制,忽略多少畫素值範圍內重疊的角點 InputArray mask = noArray(), int blockSize = 3, bool useHarrisDetector = false, double k = 0.04 ) -

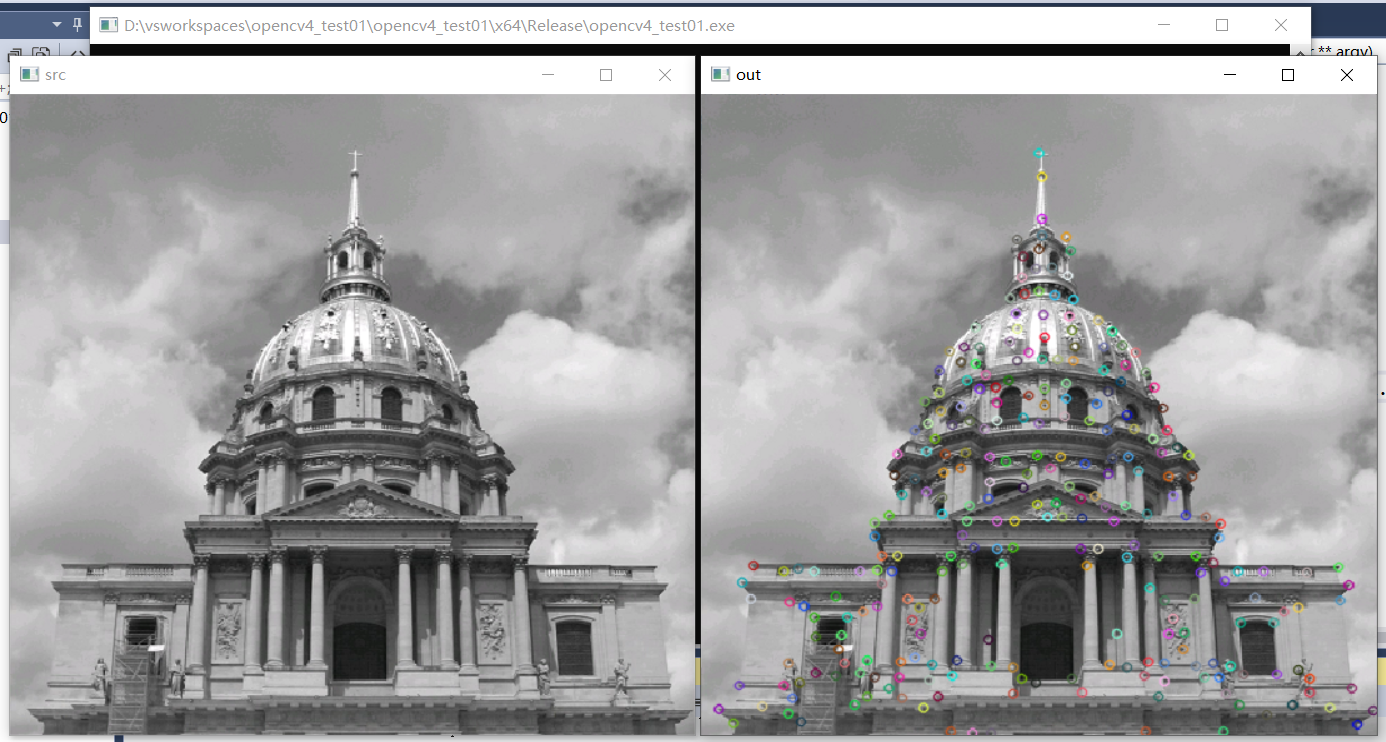

程式碼實現

#include <opencv2/opencv.hpp> #include <iostream> using namespace cv; using namespace std; int main(int argc, char** argv) { Mat src = imread("D:/images/building.png"); Mat gray; cvtColor(src, gray, COLOR_BGR2GRAY); namedWindow("src", WINDOW_FREERATIO); imshow("src", src); RNG rng(12345); vector<Point> points; goodFeaturesToTrack(gray, points, 400, 0.05, 10); for (size_t t = 0; t < points.size(); t++) { circle(src, points[t], 3, Scalar(rng.uniform(0, 255), rng.uniform(0, 255), rng.uniform(0, 255)), 1.5, LINE_AA); } namedWindow("out", WINDOW_FREERATIO); imshow("out", src); waitKey(0); destroyAllWindows(); return 0; } -

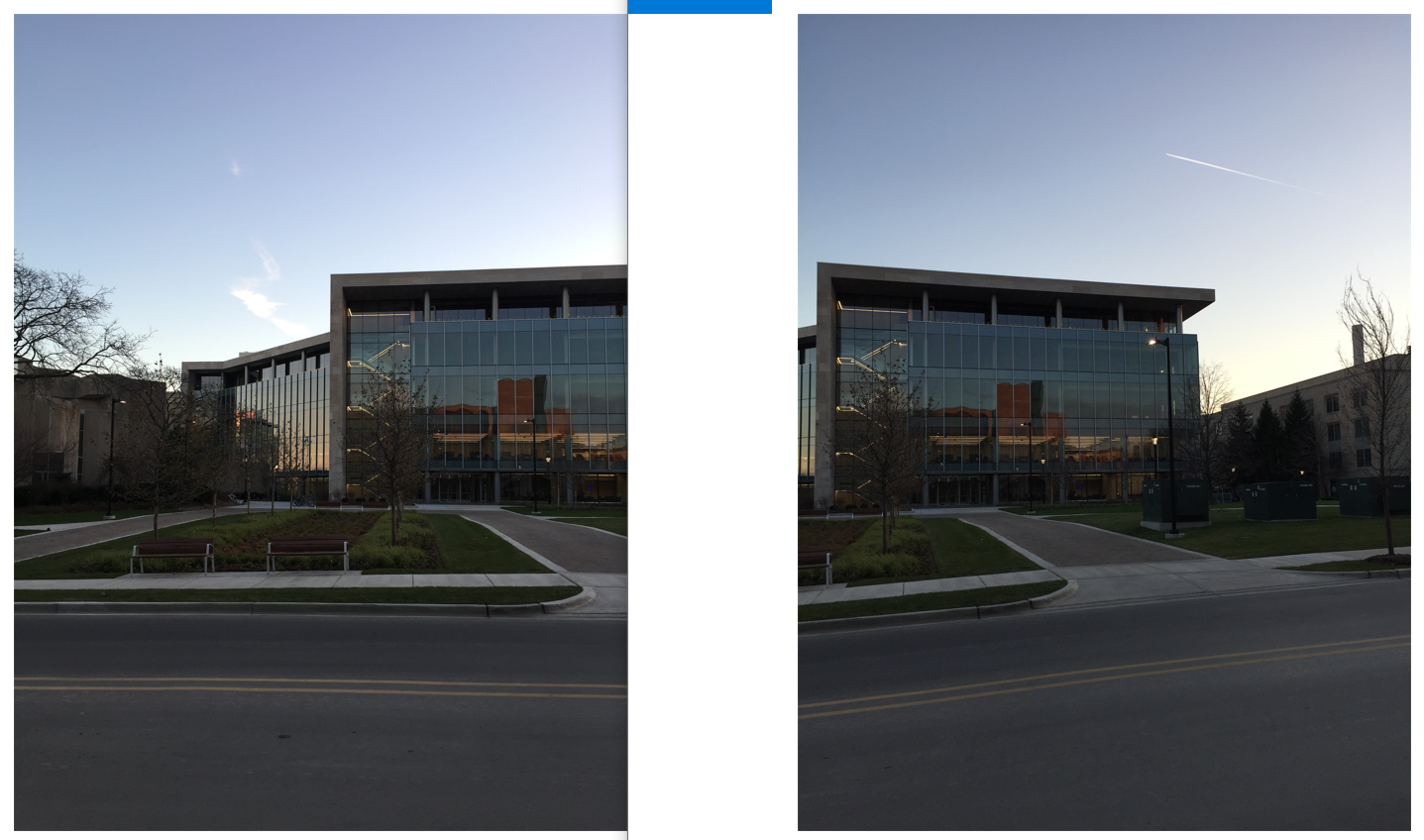

效果:

3、關鍵點檢測

- 影象特徵點/關鍵點

- 關鍵點檢測函數

- 程式碼演示

ORB關鍵點檢測(快速)

-

ORB演演算法由兩個部分組成:快速關鍵點定位+BRIEF描述子生成

-

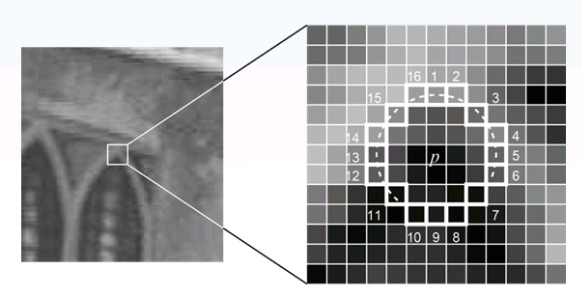

Fast關鍵點檢測:選擇當前畫素點P,閾值T,周圍16個畫素點,超過連續N=12個畫素點大於或者小於P,Fast1:優先檢測1、5、9、13,迴圈所有畫素點

關鍵點檢測函數

//ORB物件建立

Orb = cv::ORB::create(500)

virtual void cv::Feature2D::detect(

InputArray image, //輸入影象

std::vector<KeyPoint>& keypoints, //關鍵點

InputArray mask = noArray() //支援mask

)

KeyPoint資料結構-四個最重要屬性:

- pt

- angle

- response

- size

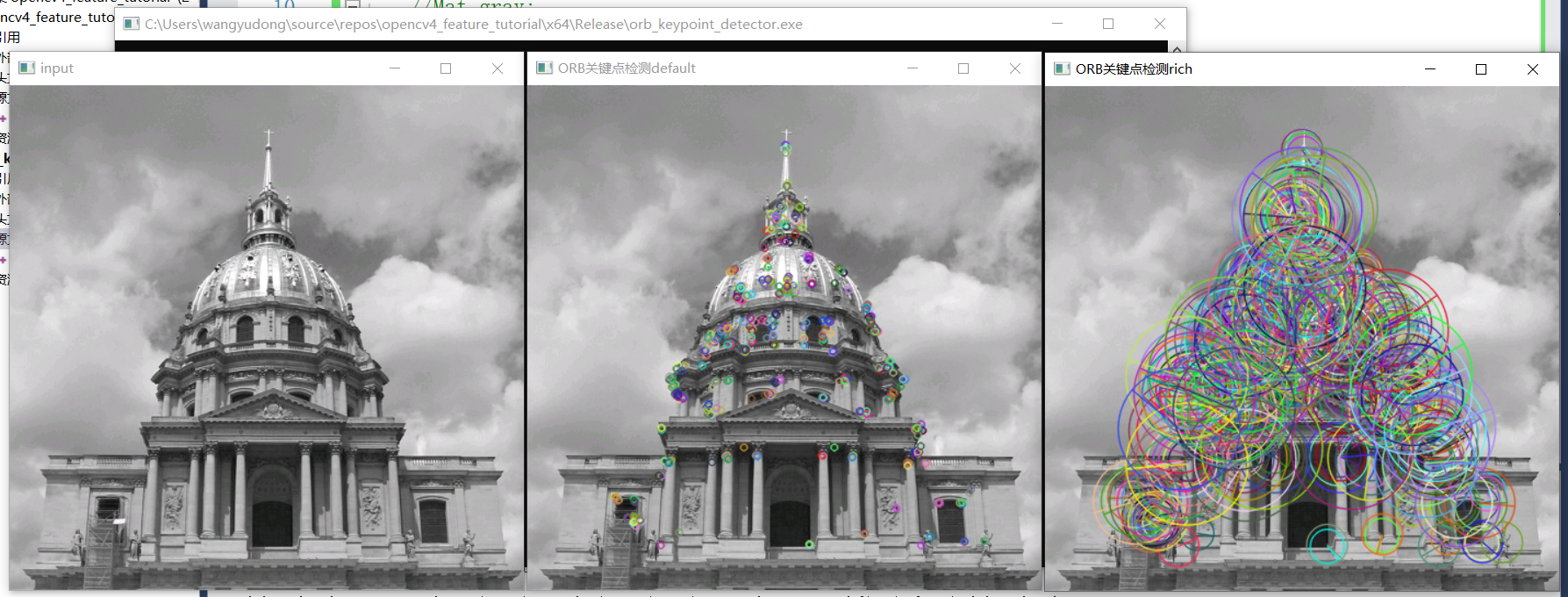

程式碼實現:

#include <opencv2/opencv.hpp>

#include <iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src = imread("D:/images/building.png");

imshow("input", src);

//Mat gray;

//cvtColor(src, gray, COLOR_BGR2GRAY);

auto orb = ORB::create(500);

vector<KeyPoint> kypts;

orb->detect(src, kypts);

Mat result01, result02, result03;

drawKeypoints(src, kypts, result01, Scalar::all(-1), DrawMatchesFlags::DEFAULT);

drawKeypoints(src, kypts, result02, Scalar::all(-1), DrawMatchesFlags::DRAW_RICH_KEYPOINTS);

imshow("ORB關鍵點檢測default", result01);

imshow("ORB關鍵點檢測rich", result02);

waitKey(0);

destroyAllWindows();

return 0;

}

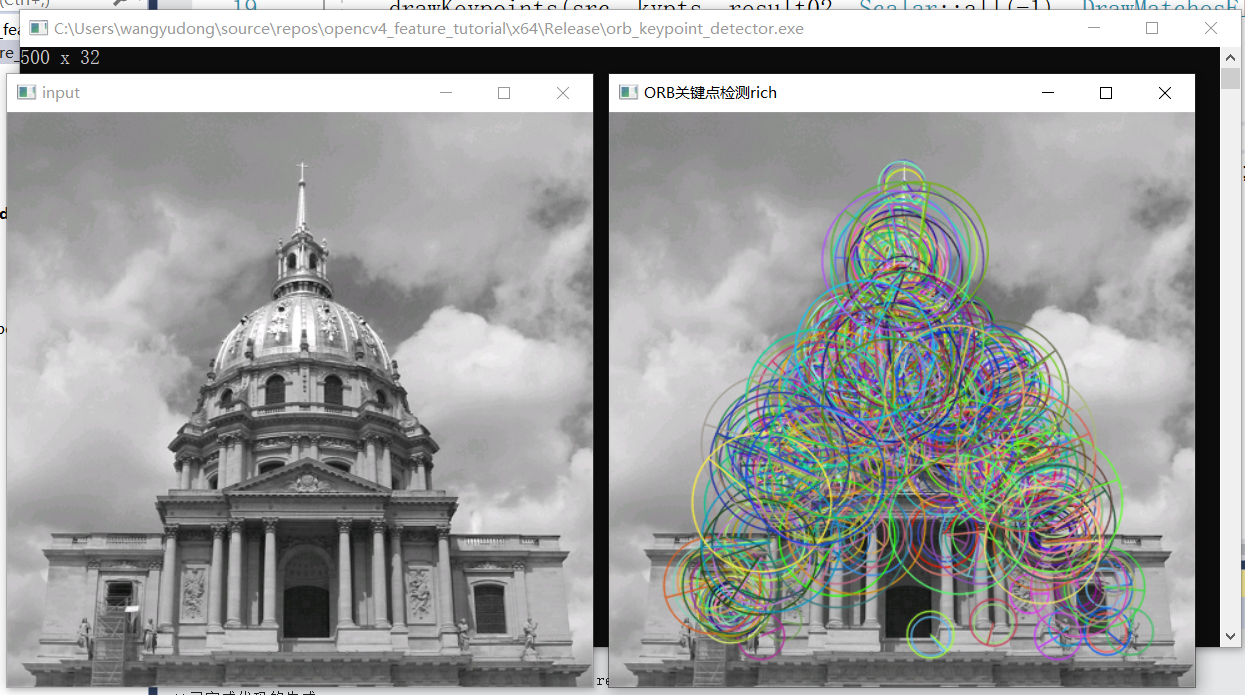

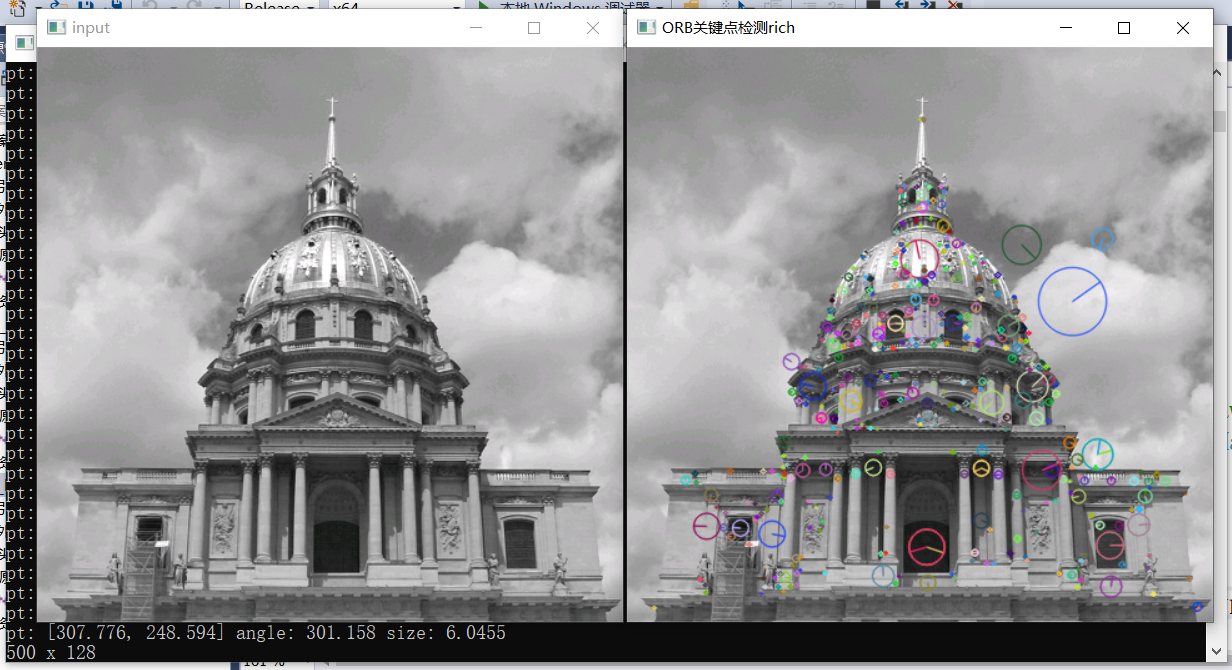

效果:

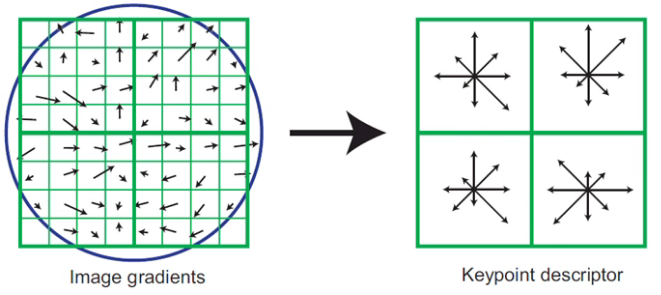

4、特徵描述子

- 基於關鍵點周圍區域

- 浮點數表示與二值編碼

- 描述子長度

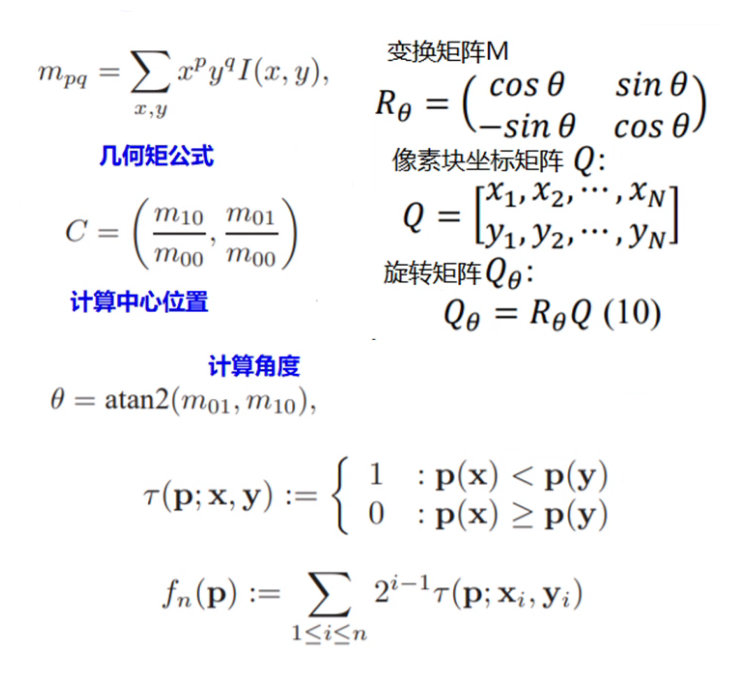

ORB特徵描述子生成步驟:

- 提取特徵關鍵點

- 描述子方向指派

- 特徵描述子編碼(二值編碼32位元)

程式碼實現:

#include <opencv2/opencv.hpp>

#include <iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src = imread("D:/images/building.png");

imshow("input", src);

//Mat gray;

//cvtColor(src, gray, COLOR_BGR2GRAY);

auto orb = ORB::create(500); //獲取500個關鍵點,每個關鍵點計算一個orb特徵描述子

vector<KeyPoint> kypts;

orb->detect(src, kypts);

Mat result01, result02, result03;

//drawKeypoints(src, kypts, result01, Scalar::all(-1), DrawMatchesFlags::DEFAULT);

//不同半徑代表不同層級高斯金字塔中的關鍵點,即影象不同尺度中的關鍵點

drawKeypoints(src, kypts, result02, Scalar::all(-1), DrawMatchesFlags::DRAW_RICH_KEYPOINTS);

Mat desc_orb;

orb->compute(src, kypts, desc_orb);

std::cout << desc_orb.rows << " x " << desc_orb.cols << std::endl;

//imshow("ORB關鍵點檢測default", result01);

imshow("ORB關鍵點檢測rich", result02);

waitKey(0);

destroyAllWindows();

return 0;

}

效果:

SIFT(尺度不變特徵轉換,Scale-invariant feature transform)特徵描述子

- 尺度空間不變性

- 畫素遷移不變性

- 角度旋轉不變性

SIFT特徵提取步驟

- 尺度空間極值檢測

- 關鍵點定位

- 方向指派

- 特徵描述子

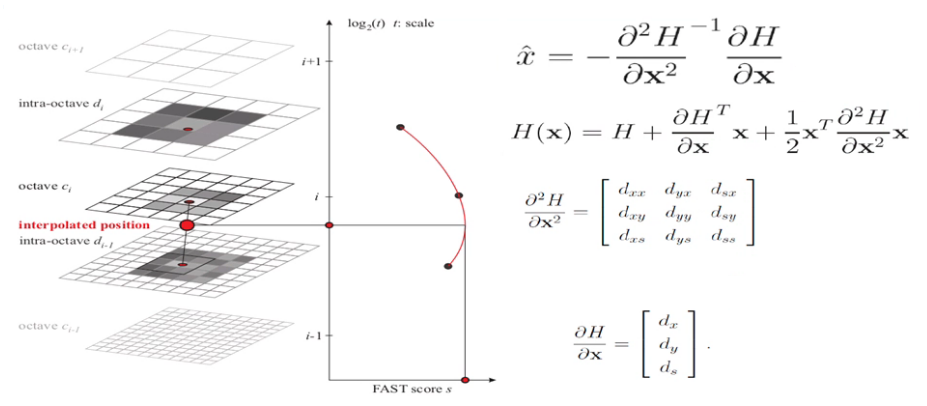

尺度空間極值檢測

- 構建尺度空間 -- 影象金字塔 + 高斯尺度空間

- 三層空間中的極值查詢

關鍵點定位

- 極值點定位 - 求導擬合

- 刪除低對比度與低響應候選點

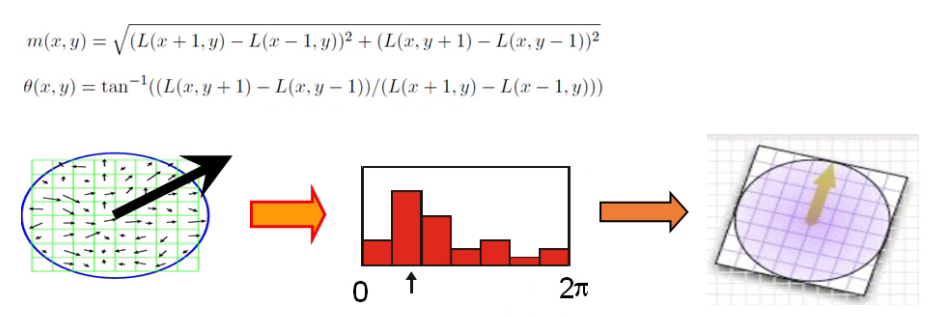

方向指派

- 關鍵點方向指派

- Scale尺度最近的影象,1.5倍大小的高斯視窗

特徵描述子

- 128維向量/特徵描述子

- 描述子編碼方式

程式碼實現:

#include <opencv2/opencv.hpp>

#include <iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src = imread("D:/images/building.png");

imshow("input", src);

//Mat gray;

//cvtColor(src, gray, COLOR_BGR2GRAY);

auto sift = SIFT::create(500);

vector<KeyPoint> kypts;

sift->detect(src, kypts);

Mat result01, result02, result03;

//drawKeypoints(src, kypts, result01, Scalar::all(-1), DrawMatchesFlags::DEFAULT);

drawKeypoints(src, kypts, result02, Scalar::all(-1), DrawMatchesFlags::DRAW_RICH_KEYPOINTS);

std::cout << kypts.size() << std::endl;

for (int i = 0; i < kypts.size(); i++) {

std::cout << "pt: " << kypts[i].pt << " angle: " << kypts[i].angle << " size: " << kypts[i].size << std::endl;

}

Mat desc_orb;

sift->compute(src, kypts, desc_orb);

std::cout << desc_orb.rows << " x " << desc_orb.cols << std::endl;

//imshow("ORB關鍵點檢測default", result01);

imshow("ORB關鍵點檢測rich", result02);

waitKey(0);

destroyAllWindows();

return 0;

}

效果:

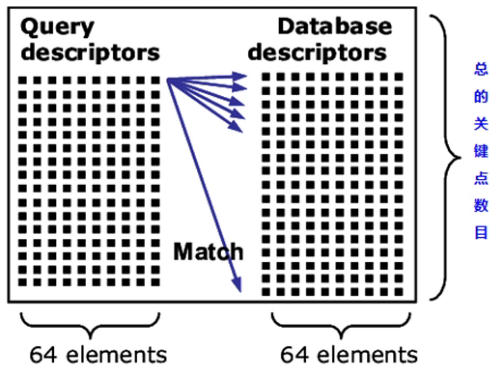

5、特徵匹配

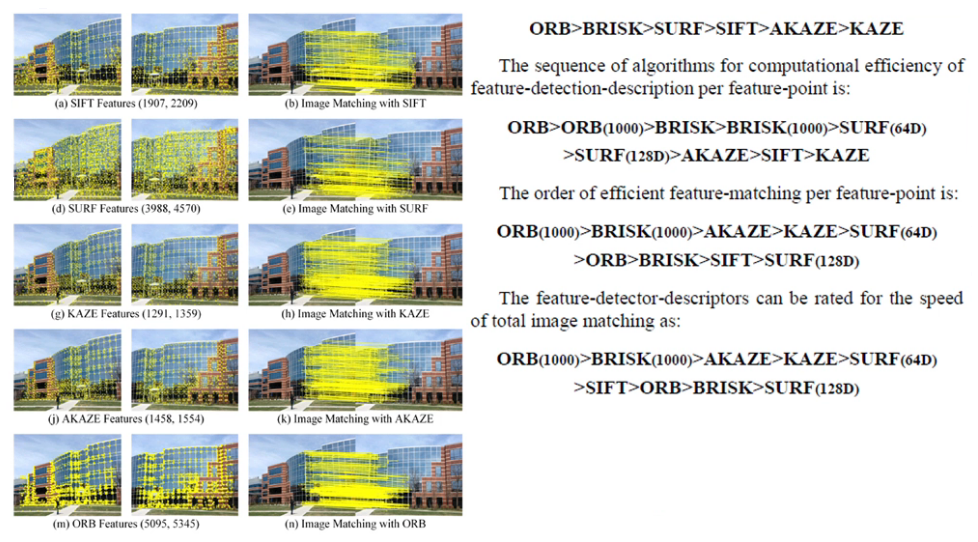

- 特徵匹配演演算法

- 特徵匹配函數

- 特徵匹配方法對比

特徵匹配演演算法

-

暴力匹配,全域性搜尋,計算最小距離,返回相似描述子合集

-

FLANN匹配,2009年釋出的開源高維資料匹配演演算法庫,全稱Fast Library for Approximate Nearest Neighbors

-

支援KMeans、KDTree、KNN、多探針LSH等搜尋與匹配演演算法

| 描述子 | 匹配方法 |

|---|---|

| SIFT, SURF, and KAZE | L1 Norm |

| AKAZE, ORB, and BRISK | Hamming distance(二值編碼) |

// 暴力匹配

auto bfMatcher = BFMatcher::create(NORM_HAMMING, false);

std::vector<DMatch> matches;

bfMatcher->match(box_descriptors, scene_descriptors, matches);

Mat img_orb_matches;

drawMatches(box, box_kpts, box_in_scene, scene_kpts, matches, img_orb_matches);

imshow("ORB暴力匹配演示", img_orb_matches);

// FLANN匹配

auto flannMatcher = FlannBasedMatcher(new flann::LshIndexParams(6, 12, 2));

flannMatcher.match(box_descriptors, scene_descriptors, matches);

Mat img_flann_matches;

drawMatches(box, box_kpts, box_in_scene, scene_kpts, matches, img_flann_matches);

namedWindow("FLANN匹配演示", WINDOW_FREERATIO);

imshow("FLANN匹配演示", img_flann_matches);

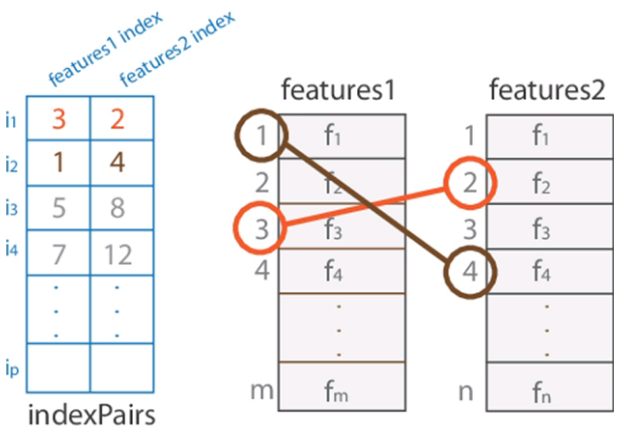

特徵匹配DMatch資料結構

DMatch資料結構:

- queryIdx

- trainIdx

- distance

distance表示距離,值越小表示匹配程度越高。

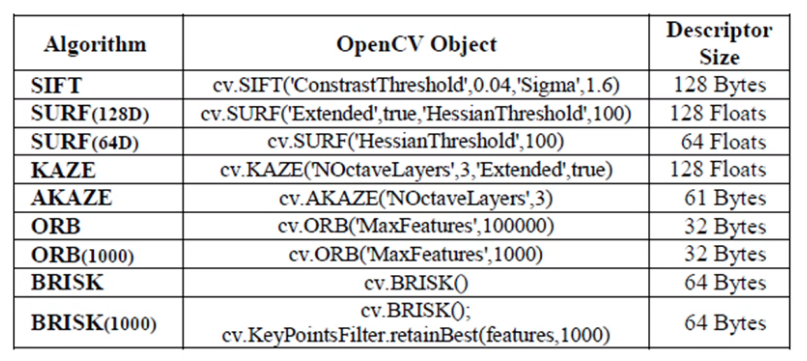

OpenCV特徵匹配方法對比

程式碼實現:

#include <opencv2/opencv.hpp>

#include <iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat book = imread("D:/images/book.jpg");

Mat book_on_desk = imread("D:/images/book_on_desk.jpg");

imshow("book", book);

//Mat gray;

//cvtColor(src, gray, COLOR_BGR2GRAY);

vector<KeyPoint> kypts_book;

vector<KeyPoint> kypts_book_on_desk;

Mat desc_book, desc_book_on_desk;

//auto orb = ORB::create(500);

//orb->detectAndCompute(book, Mat(), kypts_book, desc_book);

//orb->detectAndCompute(book_on_desk, Mat(), kypts_book_on_desk, desc_book_on_desk);

auto sift = SIFT::create(500);

sift->detectAndCompute(book, Mat(), kypts_book, desc_book);

sift->detectAndCompute(book_on_desk, Mat(), kypts_book_on_desk, desc_book_on_desk);

Mat result;

vector<DMatch> matches;

//// 暴力匹配

//auto bf_matcher = BFMatcher::create(NORM_HAMMING, false);

//bf_matcher->match(desc_book, desc_book_on_desk, matches);

//drawMatches(book, kypts_book, book_on_desk, kypts_book_on_desk, matches, result);

// FLANN匹配

//auto flannMatcher = FlannBasedMatcher(new flann::LshIndexParams(6, 12, 2));

auto flannMatcher = FlannBasedMatcher();

flannMatcher.match(desc_book, desc_book_on_desk, matches);

Mat img_flann_matches;

drawMatches(book, kypts_book, book_on_desk, kypts_book_on_desk, matches, img_flann_matches);

namedWindow("SIFT-FLANN匹配演示", WINDOW_FREERATIO);

imshow("SIFT-FLANN匹配演示", img_flann_matches);

//namedWindow("ORB暴力匹配演示", WINDOW_FREERATIO);

//imshow("ORB暴力匹配演示", result);

waitKey(0);

destroyAllWindows();

return 0;

}

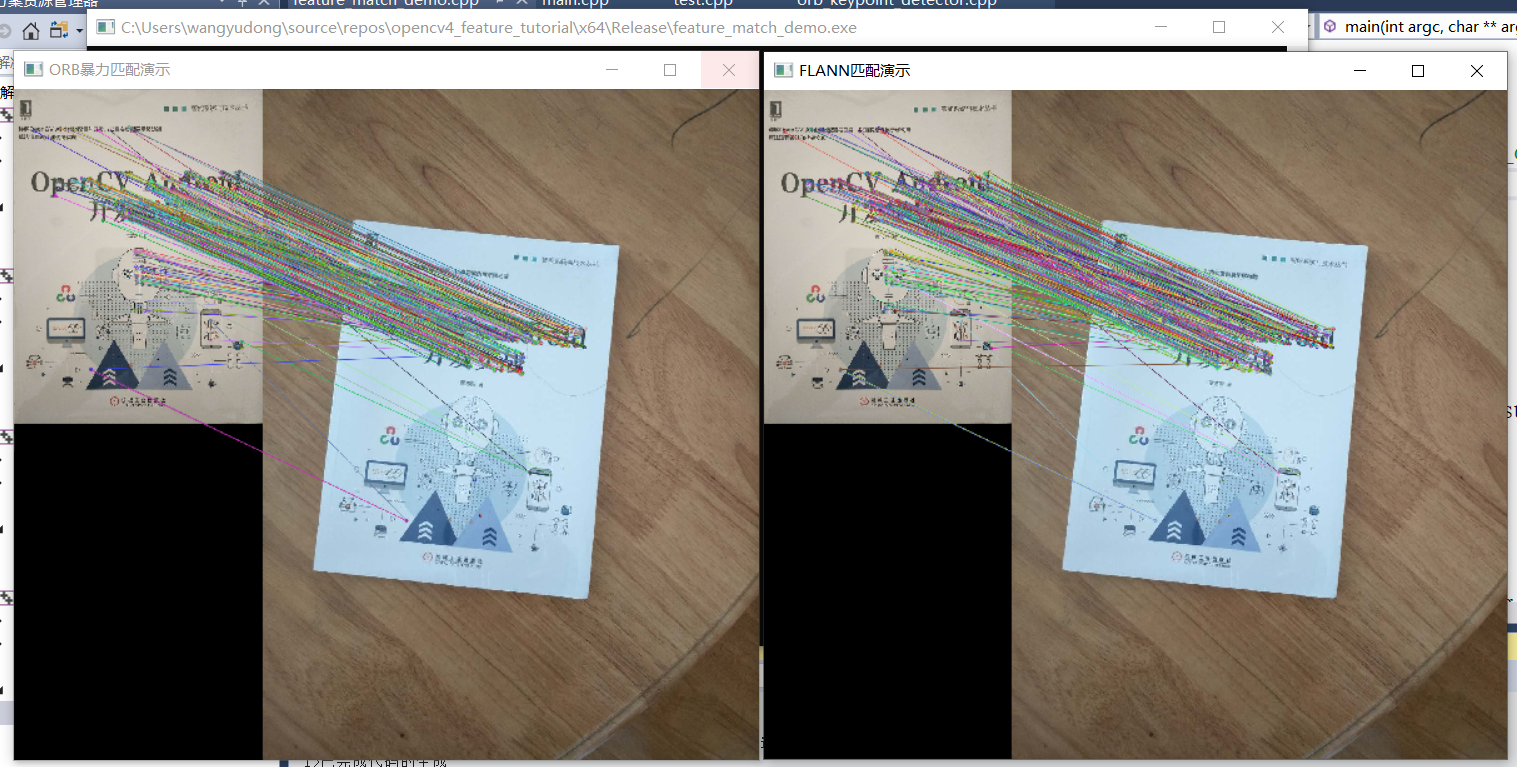

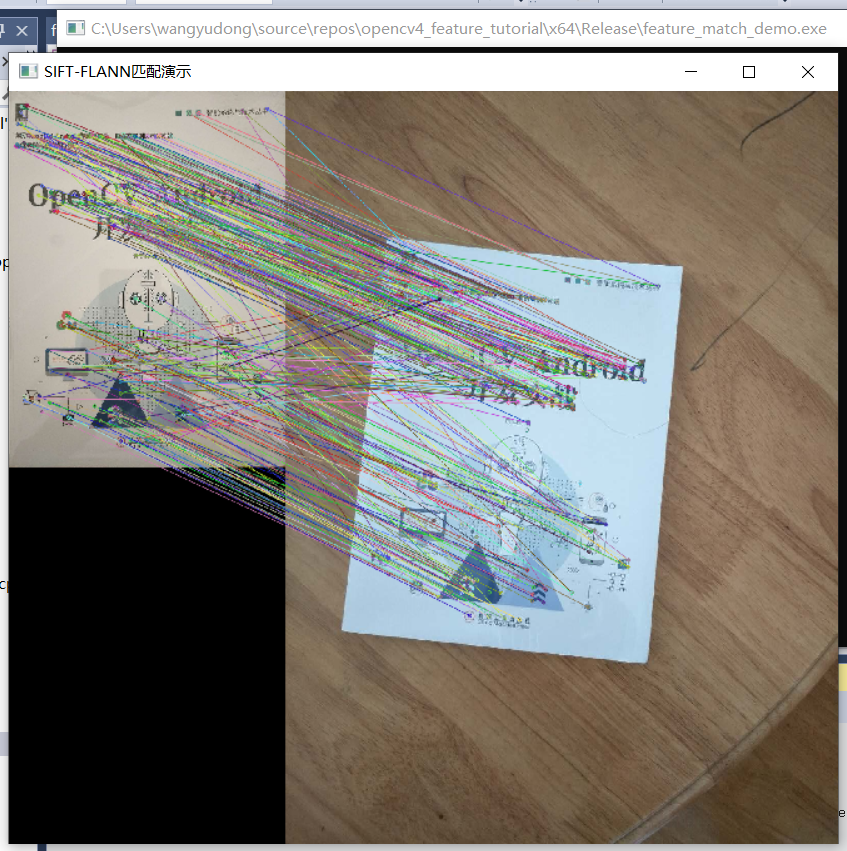

效果:

1、ORB描述子匹配效果

2、SIFT描述子匹配效果

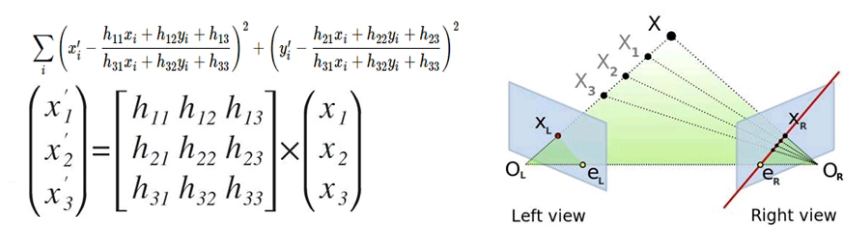

6、單應性變換/透視變換

Mat cv::findHomography(

InputArray srcPoints, // 輸入

InputArray dstPoints, // 輸出

int method = 0,

double ransacReprojThreshold = 3,

OuputArray mask = noArray(),

const int maxIters = 2000,

const double confidence = 0.995

)

擬合方法:

- 最小二乘法(0)

- 隨機取樣一致性(RANSC)

- 漸進取樣一致性(RHO)

程式碼實現:

#include <opencv2/opencv.hpp>

#include <iostream>

using namespace std;

using namespace cv;

int main(int argc, char** argv) {

Mat input = imread("D:/images/book_on_desk.jpg");

Mat gray, binary;

cvtColor(input, gray, COLOR_BGR2GRAY);

threshold(gray, binary, 0, 255, THRESH_BINARY | THRESH_OTSU);

vector<vector<Point>> contours;

vector<Vec4i> hierachy;

findContours(binary, contours, hierachy, RETR_EXTERNAL, CHAIN_APPROX_SIMPLE);

int index = 0;

for (size_t i = 0; i < contours.size(); i++) {

if (contourArea(contours[i]) > contourArea(contours[index])) {

index = i;

}

}

Mat approxCurve;

approxPolyDP(contours[index], approxCurve, contours[index].size() / 10, true);

//imshow("approx", approxCurve);

//std::cout << contours.size() << std::endl;

vector<Point2f> srcPts;

vector<Point2f> dstPts;

for (int i = 0; i < approxCurve.rows; i++) {

Vec2i pt = approxCurve.at<Vec2i>(i, 0);

srcPts.push_back(Point(pt[0], pt[1]));

circle(input, Point(pt[0], pt[1]), 12, Scalar(0, 0, 255), 2, 8, 0);

putText(input, std::to_string(i), Point(pt[0], pt[1]), FONT_HERSHEY_SIMPLEX, 0.5, Scalar(255, 0, 0), 1);

}

dstPts.push_back(Point2f(0, 0));

dstPts.push_back(Point2f(0, 760));

dstPts.push_back(Point2f(585, 760));

dstPts.push_back(Point2f(585, 0));

Mat h = findHomography(srcPts, dstPts, RANSAC); //計算單應性矩陣

Mat result;

warpPerspective(input, result, h, Size(585, 760)); //對原圖進行透視變換獲得校正後的目標區域

namedWindow("result", WINDOW_FREERATIO);

imshow("result", result);

drawContours(input, contours, index, Scalar(0, 255, 0), 2, 8);

namedWindow("輪廓", WINDOW_FREERATIO);

imshow("輪廓", input);

waitKey(0);

return 0;

}

效果:

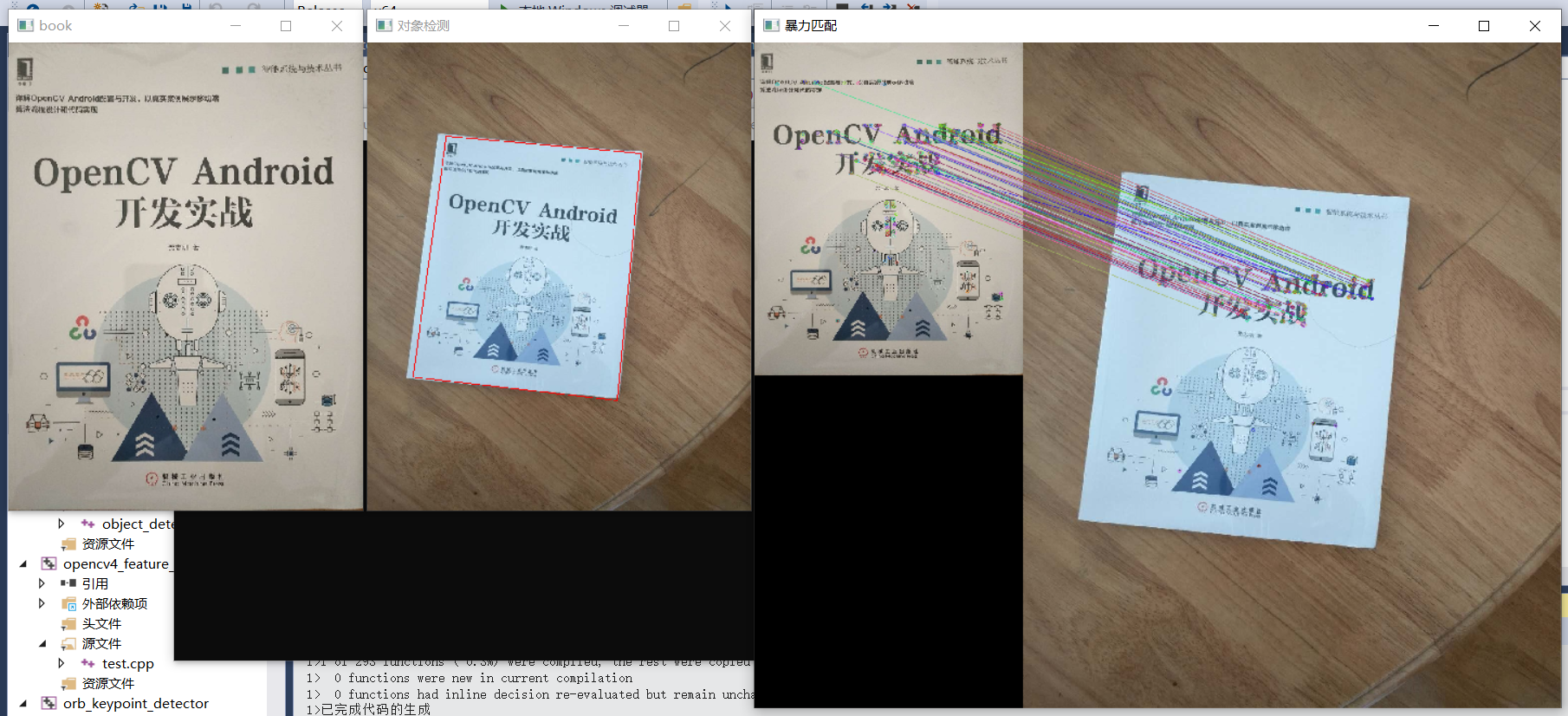

7、基於匹配的物件檢測

- 基於特徵的匹配與物件檢測

- ORB/AKAZE/SIFT

- 暴力/FLANN

- 透視變換

- 檢測框

程式碼實現:

#include <opencv2/opencv.hpp>

#include <iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

// Mat image = imread("D:/images/butterfly.jpg");

Mat book = imread("D:/images/book.jpg");

Mat book_on_desk = imread("D:/images/book_on_desk.jpg");

namedWindow("book", WINDOW_FREERATIO);

imshow("book", book);

auto orb = ORB::create(500);

vector<KeyPoint> kypts_book;

vector<KeyPoint> kypts_book_on_desk;

Mat desc_book, desc_book_on_desk;

orb->detectAndCompute(book, Mat(), kypts_book, desc_book);

orb->detectAndCompute(book_on_desk, Mat(), kypts_book_on_desk, desc_book_on_desk);

Mat result;

auto bf_matcher = BFMatcher::create(NORM_HAMMING, false);

vector<DMatch> matches;

bf_matcher->match(desc_book, desc_book_on_desk, matches);

float good_rate = 0.15f;

int num_good_matches = matches.size() * good_rate;

std::cout << num_good_matches << std::endl;

std::sort(matches.begin(), matches.end());

matches.erase(matches.begin() + num_good_matches, matches.end());

drawMatches(book, kypts_book, book_on_desk, kypts_book_on_desk, matches, result);

vector<Point2f> obj_pts;

vector<Point2f> scene_pts;

for (size_t t = 0; t < matches.size(); t++) {

obj_pts.push_back(kypts_book[matches[t].queryIdx].pt);

scene_pts.push_back(kypts_book_on_desk[matches[t].trainIdx].pt);

}

Mat h = findHomography(obj_pts, scene_pts, RANSAC); // 計算單應性矩陣h

vector<Point2f> srcPts;

srcPts.push_back(Point2f(0, 0));

srcPts.push_back(Point2f(book.cols, 0));

srcPts.push_back(Point2f(book.cols, book.rows));

srcPts.push_back(Point2f(0, book.rows));

std::vector<Point2f> dstPts(4);

perspectiveTransform(srcPts, dstPts, h); // 計算轉換後書的四個頂點

for (int i = 0; i < 4; i++) {

line(book_on_desk, dstPts[i], dstPts[(i + 1) % 4], Scalar(0, 0, 255), 2, 8, 0);

}

namedWindow("暴力匹配", WINDOW_FREERATIO);

imshow("暴力匹配", result);

namedWindow("物件檢測", WINDOW_FREERATIO);

imshow("物件檢測", book_on_desk);

//imwrite("D:/object_find.png", book_on_desk);

waitKey(0);

return 0;

}

效果:

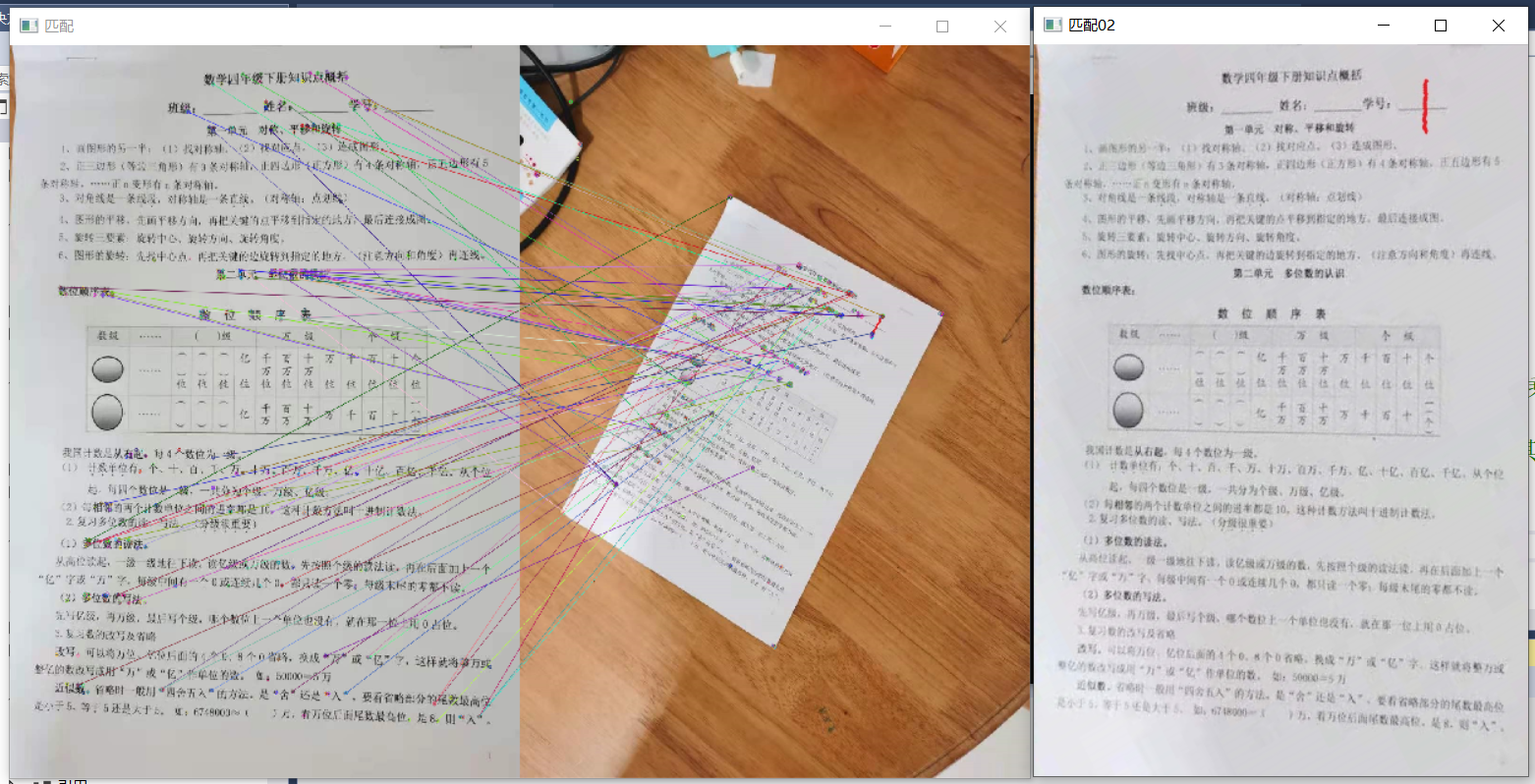

8、檔案對齊

- 模板表單/檔案

- 特徵匹配與對齊

程式碼實現:

#include <opencv2/opencv.hpp>

#include <iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat ref_img = imread("D:/images/form.png");

Mat img = imread("D:/images/form_in_doc.jpg");

imshow("表單模板", ref_img);

auto orb = ORB::create(500);

vector<KeyPoint> kypts_ref;

vector<KeyPoint> kypts_img;

Mat desc_book, desc_book_on_desk;

orb->detectAndCompute(ref_img, Mat(), kypts_ref, desc_book);

orb->detectAndCompute(img, Mat(), kypts_img, desc_book_on_desk);

Mat result;

auto bf_matcher = BFMatcher::create(NORM_HAMMING, false);

vector<DMatch> matches;

bf_matcher->match(desc_book_on_desk, desc_book, matches);

float good_rate = 0.15f;

int num_good_matches = matches.size() * good_rate;

std::cout << num_good_matches << std::endl;

std::sort(matches.begin(), matches.end());

matches.erase(matches.begin() + num_good_matches, matches.end());

drawMatches(ref_img, kypts_ref, img, kypts_img, matches, result);

imshow("匹配", result);

imwrite("D:/images/result_doc.png", result);

// Extract location of good matches

std::vector<Point2f> points1, points2;

for (size_t i = 0; i < matches.size(); i++)

{

points1.push_back(kypts_img[matches[i].queryIdx].pt);

points2.push_back(kypts_ref[matches[i].trainIdx].pt);

}

Mat h = findHomography(points1, points2, RANSAC); // 儘量用RANSAC,比最小二乘法效果好一些

Mat aligned_doc;

warpPerspective(img, aligned_doc, h, ref_img.size()); // 單應性矩陣h決定了其他無效區域不會被變換,只會變換target區域

imwrite("D:/images/aligned_doc.png", aligned_doc);

waitKey(0);

destroyAllWindows();

return 0;

}

效果:

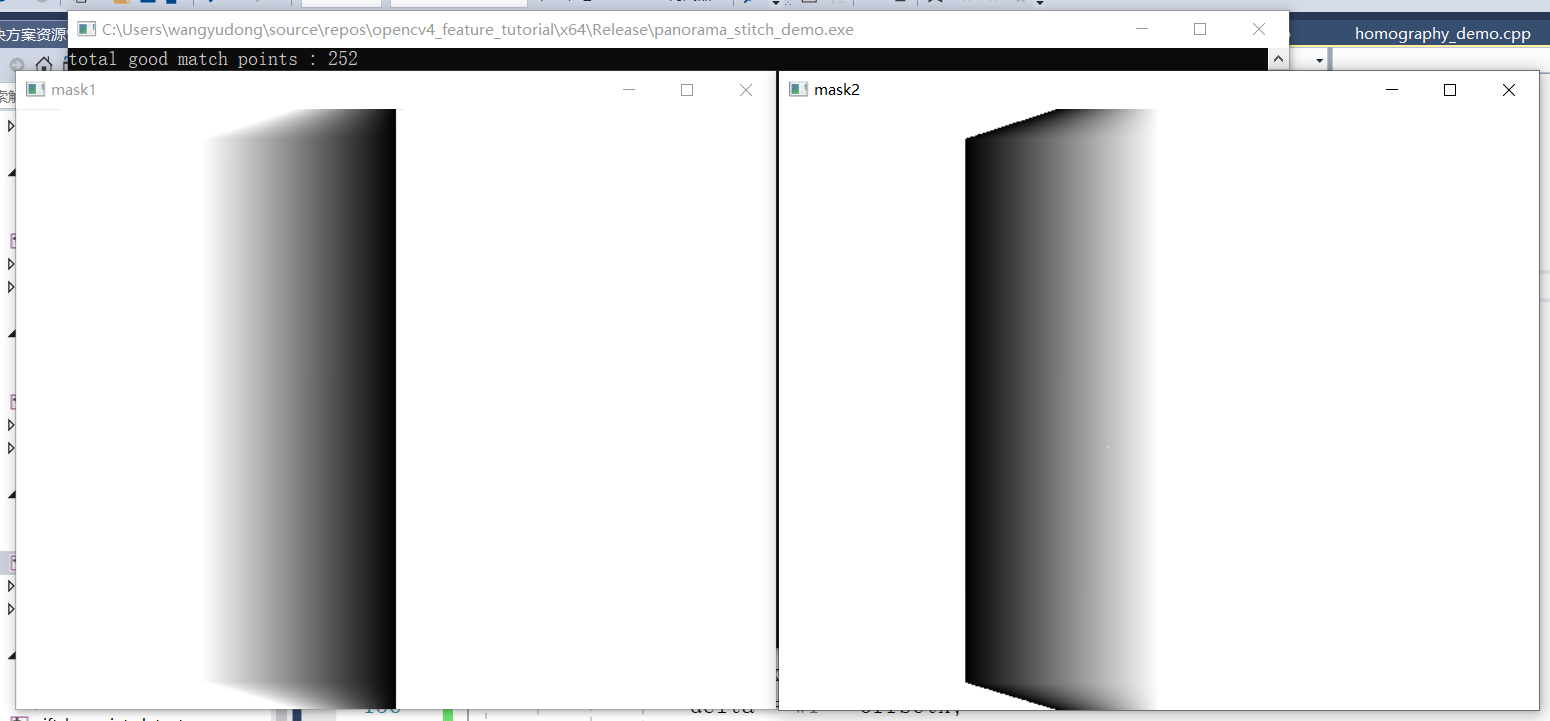

9、影象拼接

- 特徵檢測與匹配

- 影象對齊與變換

- 影象邊緣融合

程式碼實現:

#include <opencv2/opencv.hpp>

#include <iostream>

#define RATIO 0.8

using namespace std;

using namespace cv;

void linspace(Mat& image, float begin, float finish, int number, Mat &mask);

void generate_mask(Mat &img, Mat &mask);

int main(int argc, char** argv) {

Mat left = imread("D:/images/q11.jpg");

Mat right = imread("D:/images/q22.jpg");

if (left.empty() || right.empty()) {

printf("could not load image...\n");

return -1;

}

// 提取特徵點與描述子

vector<KeyPoint> keypoints_right, keypoints_left;

Mat descriptors_right, descriptors_left;

auto detector = AKAZE::create();

detector->detectAndCompute(left, Mat(), keypoints_left, descriptors_left);

detector->detectAndCompute(right, Mat(), keypoints_right, descriptors_right);

// 暴力匹配

vector<DMatch> matches;

auto matcher = DescriptorMatcher::create(DescriptorMatcher::BRUTEFORCE);

// 發現匹配

std::vector< std::vector<DMatch> > knn_matches;

matcher->knnMatch(descriptors_left, descriptors_right, knn_matches, 2);

const float ratio_thresh = 0.7f;

std::vector<DMatch> good_matches;

for (size_t i = 0; i < knn_matches.size(); i++)

{

if (knn_matches[i][0].distance < ratio_thresh * knn_matches[i][1].distance)

{

good_matches.push_back(knn_matches[i][0]);

}

}

printf("total good match points : %d\n", good_matches.size());

std::cout << std::endl;

Mat dst;

drawMatches(left, keypoints_left, right, keypoints_right, good_matches, dst);

imshow("output", dst);

imwrite("D:/images/good_matches.png", dst);

//-- Localize the object

std::vector<Point2f> left_pts;

std::vector<Point2f> right_pts;

for (size_t i = 0; i < good_matches.size(); i++)

{

// 收集所有好的匹配點

left_pts.push_back(keypoints_left[good_matches[i].queryIdx].pt);

right_pts.push_back(keypoints_right[good_matches[i].trainIdx].pt);

}

// 配準與對齊,對齊到第一張

Mat H = findHomography(right_pts, left_pts, RANSAC);

// 獲取全景圖大小

int h = max(left.rows, right.rows);

int w = left.cols + right.cols;

Mat panorama_01 = Mat::zeros(Size(w, h), CV_8UC3);

Rect roi;

roi.x = 0;

roi.y = 0;

roi.width = left.cols;

roi.height = left.rows;

// 獲取左側與右側對齊影象

left.copyTo(panorama_01(roi));

imwrite("D:/images/panorama_01.png", panorama_01);

Mat panorama_02;

warpPerspective(right, panorama_02, H, Size(w, h));

imwrite("D:/images/panorama_02.png", panorama_02);

// 計算融合重疊區域mask

Mat mask = Mat::zeros(Size(w, h), CV_8UC1);

generate_mask(panorama_02, mask);

// 建立遮罩層並根據mask完成權重初始化

Mat mask1 = Mat::ones(Size(w, h), CV_32FC1);

Mat mask2 = Mat::ones(Size(w, h), CV_32FC1);

// left mask

linspace(mask1, 1, 0, left.cols, mask);

// right mask

linspace(mask2, 0, 1, left.cols, mask);

namedWindow("mask1", WINDOW_FREERATIO);

imshow("mask1", mask1);

namedWindow("mask2", WINDOW_FREERATIO);

imshow("mask2", mask2);

// 左側融合

Mat m1;

vector<Mat> mv;

mv.push_back(mask1);

mv.push_back(mask1);

mv.push_back(mask1);

merge(mv, m1);

panorama_01.convertTo(panorama_01, CV_32F);

multiply(panorama_01, m1, panorama_01);

// 右側融合

mv.clear();

mv.push_back(mask2);

mv.push_back(mask2);

mv.push_back(mask2);

Mat m2;

merge(mv, m2);

panorama_02.convertTo(panorama_02, CV_32F);

multiply(panorama_02, m2, panorama_02);

// 合併全景圖

Mat panorama;

add(panorama_01, panorama_02, panorama);

panorama.convertTo(panorama, CV_8U);

imwrite("D:/images/panorama.png", panorama);

waitKey(0);

return 0;

}

void generate_mask(Mat &img, Mat &mask) {

int w = img.cols;

int h = img.rows;

for (int row = 0; row < h; row++) {

for (int col = 0; col < w; col++) {

Vec3b p = img.at<Vec3b>(row, col);

int b = p[0];

int g = p[1];

int r = p[2];

if (b == g && g == r && r == 0) {

mask.at<uchar>(row, col) = 255;

}

}

}

imwrite("D:/images/mask.png", mask);

}

// 對mask中的0區域,進行逐行計算每個畫素的權重值

void linspace(Mat& image, float begin, float finish, int w1, Mat &mask) {

int offsetx = 0;

float interval = 0;

float delta = 0;

for (int i = 0; i < image.rows; i++) {

offsetx = 0;

interval = 0;

delta = 0;

for (int j = 0; j < image.cols; j++) {

int pv = mask.at<uchar>(i, j);

if (pv == 0 && offsetx == 0) {

offsetx = j;

delta = w1 - offsetx;

interval = (finish - begin) / (delta - 1); // 計算每個畫素變化的大小

image.at<float>(i, j) = begin + (j - offsetx)*interval;

}

else if (pv == 0 && offsetx > 0 && (j - offsetx) < delta) {

image.at<float>(i, j) = begin + (j - offsetx)*interval;

}

}

}

}

效果:

1、影象拼接重合區域mask

2、拼接前影象

3、影象拼接效果圖

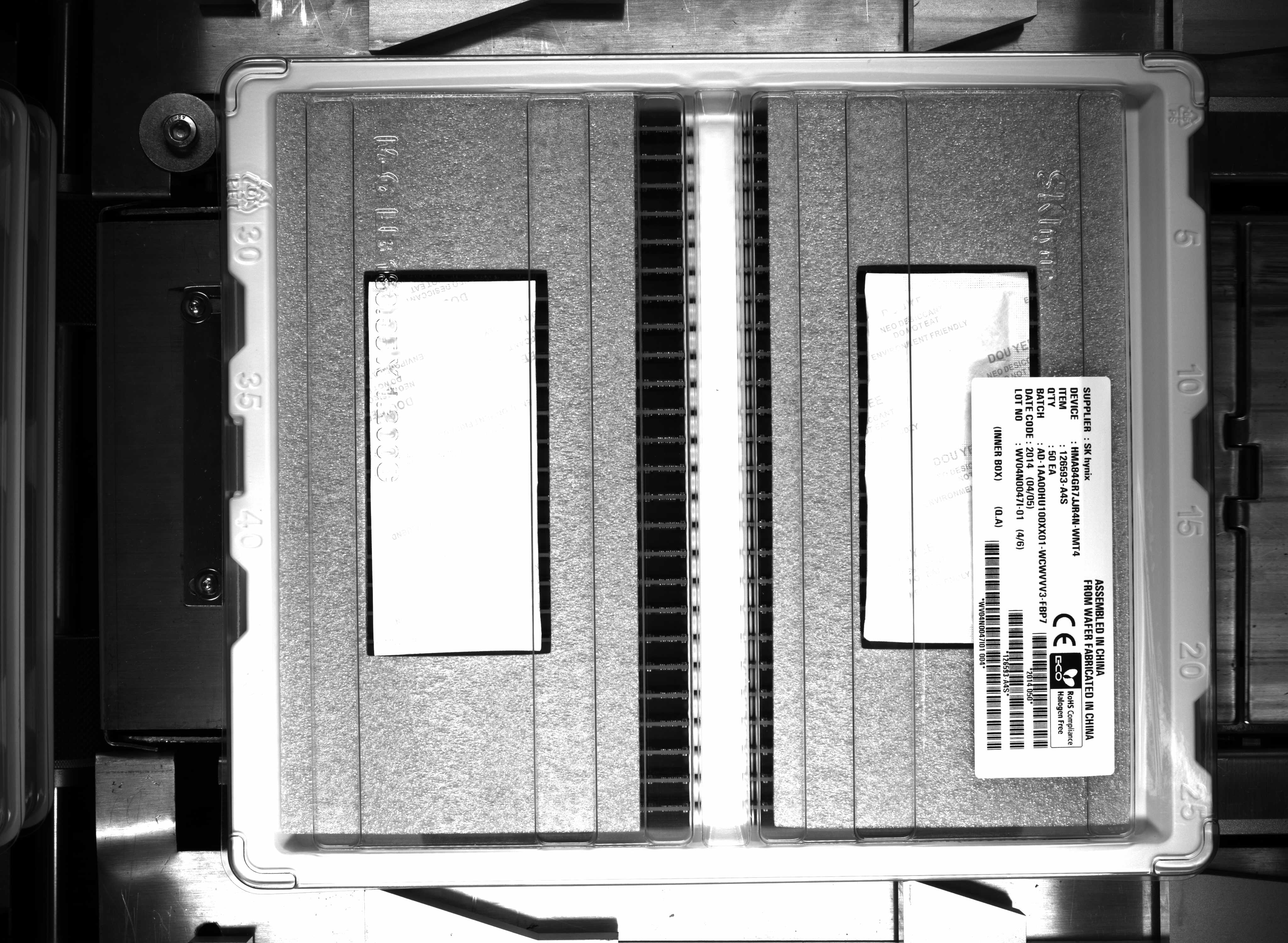

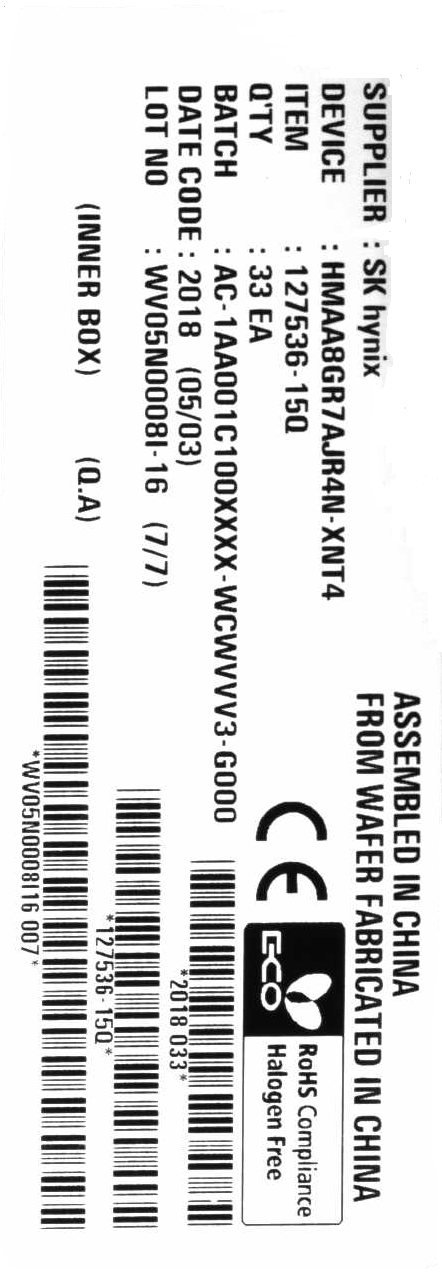

10、條碼標籤定位與有無判定

程式碼實現:

ORBDetector.h

#pragma once

#include <opencv2/opencv.hpp>

class ORBDetector {

public:

ORBDetector(void);

~ORBDetector(void);

void initORB(cv::Mat &refImg);

bool detect_and_analysis(cv::Mat &image, cv::Mat &aligned);

private:

cv::Ptr<cv::ORB> orb = cv::ORB::create(500);

std::vector<cv::KeyPoint> tpl_kps;

cv::Mat tpl_descriptors;

cv::Mat tpl;

};

ORBDetector.cpp

#include "ORBDetector.h"

ORBDetector::ORBDetector() {

std::cout << "create orb detector..." << std::endl;

}

ORBDetector::~ORBDetector() {

this->tpl_descriptors.release();

this->tpl_kps.clear();

this->orb.release();

this->tpl.release();

std::cout << "destory instance..." << std::endl;

}

void ORBDetector::initORB(cv::Mat &refImg) {

if (!refImg.empty()) {

cv::Mat tplGray;

cv::cvtColor(refImg, tplGray, cv::COLOR_BGR2GRAY);

orb->detectAndCompute(tplGray, cv::Mat(), this->tpl_kps, this->tpl_descriptors);

tplGray.copyTo(this->tpl);

}

}

bool ORBDetector::detect_and_analysis(cv::Mat &image, cv::Mat &aligned) {

// keypoints and match threshold

float GOOD_MATCH_PERCENT = 0.15f;

bool found = true;

// 處理資料集中每一張資料

cv::Mat img2Gray;

cv::cvtColor(image, img2Gray, cv::COLOR_BGR2GRAY);

std::vector<cv::KeyPoint> img_kps;

cv::Mat img_descriptors;

orb->detectAndCompute(img2Gray, cv::Mat(), img_kps, img_descriptors);

std::vector<cv::DMatch> matches;

cv::Ptr<cv::DescriptorMatcher> matcher = cv::DescriptorMatcher::create("BruteForce-Hamming");

// auto flann_matcher = cv::FlannBasedMatcher(new cv::flann::LshIndexParams(6, 12, 2));

matcher->match(img_descriptors, this->tpl_descriptors, matches, cv::Mat());

// Sort matches by score

std::sort(matches.begin(), matches.end());

// Remove not so good matches

const int numGoodMatches = matches.size() * GOOD_MATCH_PERCENT;

matches.erase(matches.begin() + numGoodMatches, matches.end());

// std::cout << numGoodMatches <<"distance:"<<matches [0].distance<< std::endl;

if (matches[0].distance > 30) {

found = false;

}

// Extract location of good matches

std::vector<cv::Point2f> points1, points2;

for (size_t i = 0; i < matches.size(); i++)

{

points1.push_back(img_kps[matches[i].queryIdx].pt);

points2.push_back(tpl_kps[matches[i].trainIdx].pt);

}

cv::Mat H = findHomography(points1, points2, cv::RANSAC);

cv::Mat im2Reg;

warpPerspective(image, im2Reg, H, tpl.size());

// 逆時針旋轉90度

cv::Mat result;

cv::rotate(im2Reg, result, cv::ROTATE_90_COUNTERCLOCKWISE);

result.copyTo(aligned);

return found;

}

object_analysis.cpp

#include "ORBDetector.h"

#include <iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat refImg = imread("D:/facedb/tiaoma/tpl2.png");

ORBDetector orb_detector;

orb_detector.initORB(refImg);

vector<std::string> files;

glob("D:/facedb/orb_barcode", files);

cv::Mat temp;

for (auto file : files) {

std::cout << file << std::endl;

cv::Mat image = imread(file);

int64 start = getTickCount();

bool OK = orb_detector.detect_and_analysis(image, temp);

double ct = (getTickCount() - start) / getTickFrequency();

printf("decode time: %.5f ms\n", ct * 1000);

std::cout << "標籤: " << (OK == true) << std::endl;

imshow("temp", temp);

waitKey(0);

}

}

效果:

1、檢測圖片

2、模板圖片

11、DNN概述

DNN模組介紹:

- DNN - Deep Neutal Network

- OpenCV3.3 開始釋出

- 支援VOC與COCO資料集的物件檢測模型

- 包括SSD/Faster-RCNN/YOLOv4等

- 支援自定義物件檢測

- 支援人臉檢測

函數知識:

- 讀取模型

- 轉換資料與設定

- 推理輸出

Net net = readNetFromTensorflow(model, config); // 支援tensorflow

Net net = readNetFromCaffe(config, model); // 支援caffe

Net net = readNetFromONNX(onnxfile);

// 讀取資料

Mat image = imread("D:/images/example.png");

Mat blob_img = blobFromImage(image, scalefactor, size, mean, swapRB);

net.setInput(blob_img);

// 推理輸出

Mat result = net.forward();

後處理/輸出解析:

- 不同網路的輸出不同

- 如何解析要根據模型輸出

- 物件檢測網路SSD/Faster-RCNN解析

SSD的輸出解析:

- http://download.tensorflow.org/models/object_detection/ssd_mobilenet_v1_coco_2017_11_17.tar.gz

- 1 x 1 x N x 7 - DetectOutput

- [image_id, label, conf, x_min, y_min, x_max, y_max]

Faster-RCNN輸出解析:

- http://download.tensorflow.org/models/object_detection/faster_rcnn_inception_v2_coco_2018_01_28.tar.gz

- 1 x 1 x N x 7 - DetectOutput

- [image_id, label, conf, x_min, y_min, x_max, y_max]

YOLOv4輸出解析:

- 解析多個輸出層,80個類別 - N x W x H x D

- 4 + 80 預測,三個輸出層

- centerx,centery,width,height

- 13 x 13 x 255 = 13 x 13 x 3 x 85

- NMS

- https://github.com/AlexeyAB/darknet/wiki/YOLOv4-model-zoo