mongodb.使用自帶命令工具匯出匯入資料

記錄 mongo 資料庫用原生自帶的命令工具使用 json 檔案方式進行匯入、匯出的操作!

在一次資料更新中,同事把老資料進行了清空操作,但是新的邏輯資料由於某種原因(好像是她的電腦中病毒了),一直無法正常連線資料庫進行資料插入,然後下午2點左右要給甲方演示,所以要緊急恢復原生的部分資料到生產庫。

在此之前我只用過 mongo 自帶的命令 mongoexport 進行過匯出操作,把資料庫的某個 collection 匯出為 json 檔案,那麼這次是要先匯出再匯入,實現了一個完整的資料遷移閉環,所以在此記錄一下,以備不時之需。

一、下載 mongo 工具包

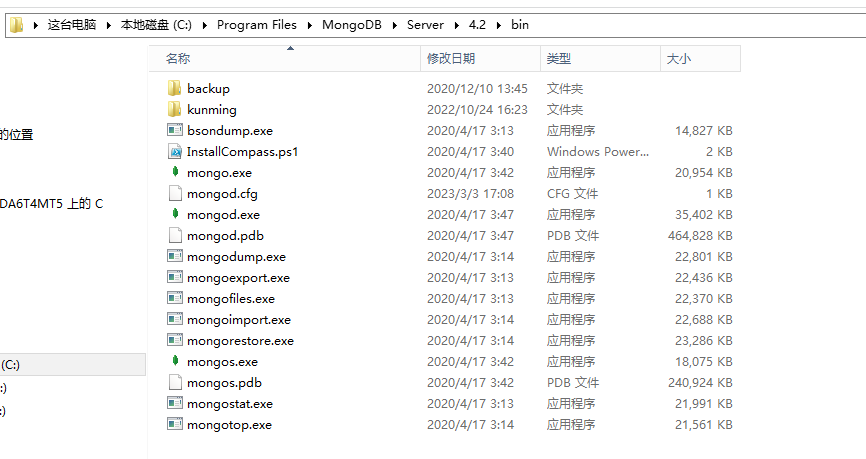

mongo工具包包括管理資料的一些工具 exe 檔案,具體如下:

- mongoexport.exe:匯出資料命令工具

- mongoimport.exe:匯入資料命令工具

- bsondump.exe: 用於將匯出的BSON檔案格式轉換為JSON格式

- mongodump.exe: 用於從mongodb資料庫中匯出BSON格式的檔案,類似於mysql的dump工具mysqldump

- mongofiles.exe: 用於和mongoDB的GridFS檔案系統互動的命令,並可操作其中的檔案,它提供了我們本地系統與GridFS檔案系統之間的儲存物件介面

- mongorestore.exe: 用於恢復匯出的BSON檔案到 mongodb 資料庫中

- mongostat.exe: 當前 mongod 狀態監控工具,像linux中監控linux的vmstat

- mongotop.exe: 提供了一個跟蹤mongod資料庫花費在讀寫資料的時間,為每個collection都會記錄,預設記錄時間是按秒記錄

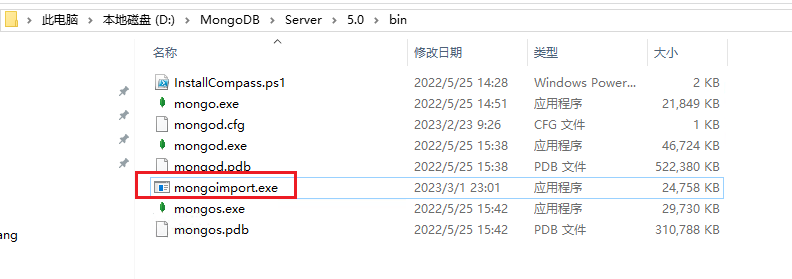

這個工具跟 mongo 的版本有關係,部分版本自帶該工具包,比如下圖的 4.x 版本,我用的 5.0 版本沒有自帶工具包,所以我需要先去官網下載工具包檔案,然後把 bin 目錄下的工具複製到 5.0 版本的 bin 目錄下,才能進行資料的匯出、匯入操作。

工具包的下載地址為:mongo工具包下載地址,解壓後把bin資料夾裡的檔案全部拷貝到 MongoDB 安裝目錄bin資料夾下。

二、匯出資料

進入到 mongo 的安裝目錄 bin 下,使用 mongoexport 工具進行資料的 匯出 操作

1、無密碼匯出操作:

mongoexport.exe -h localhost:28007 -d database -c result -o D:/project/result.json

2、有密碼的匯出操作:

mongoexport.exe -h localhost:28007 -d database -u admin -p 123456 -c result -o D:/project/result.json

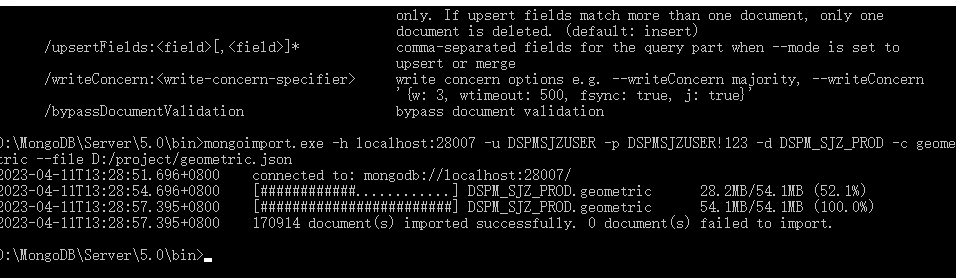

三、匯入資料

進入到 mongo 的安裝目錄 bin 下,使用 mongoimport 工具進行資料的 匯入 操作

mongoimport.exe -h localhost:28007 -u admin -p 123456 -d database -c result --file D:/project/result.json

執行結果如下表示匯入成功

D:\MongoDB\Server\5.0\bin>mongoimport.exe -h localhost:28007 -u admin -p 123456 -d database -c result --file D:/project/result.json

2023-04-11T13:34:39.799+0800 connected to: mongodb://localhost:28007/

2023-04-11T13:34:42.799+0800 [#######.................] database.result 20.2MB/66.4MB (30.4%)

2023-04-11T13:34:45.799+0800 [##############..........] database.result 40.5MB/66.4MB (61.1%)

2023-04-11T13:34:48.799+0800 [#####################...] database.result 60.4MB/66.4MB (91.0%)

2023-04-11T13:34:49.660+0800 [########################] database.result 66.4MB/66.4MB (100.0%)

2023-04-11T13:34:49.660+0800 386810 document(s) imported successfully. 0 document(s) failed to import.

引數釋義:

-h :指的是 host 主機地址

-u :指的是使用者賬號

-p :指的是賬戶密碼

-d :指的是資料庫 database 簡稱

-c :指的是表 collection 簡稱

-o :指的是匯出路徑 output 簡稱

--file :指的是需要匯入的檔案

四、其他

使用過程中可以使用 --help 進行引數意思的檢視

D:\MongoDB\Server\5.0\bin>mongoimport --help

Usage:

mongoimport <options> <connection-string> <file>

Import CSV, TSV or JSON data into MongoDB. If no file is provided, mongoimport reads from stdin.

Connection strings must begin with mongodb:// or mongodb+srv://.

See http://docs.mongodb.com/database-tools/mongoimport/ for more information.

general options:

/help print usage

/version print the tool version and exit

/config: path to a configuration file

verbosity options:

/v, /verbose:<level> more detailed log output (include multiple times for more verbosity,

e.g. -vvvvv, or specify a numeric value, e.g. --verbose=N)

/quiet hide all log output

connection options:

/h, /host:<hostname> mongodb host to connect to (setname/host1,host2 for replica sets)

/port:<port> server port (can also use --host hostname:port)

ssl options:

/ssl connect to a mongod or mongos that has ssl enabled

/sslCAFile:<filename> the .pem file containing the root certificate chain from the

certificate authority

/sslPEMKeyFile:<filename> the .pem file containing the certificate and key

/sslPEMKeyPassword:<password> the password to decrypt the sslPEMKeyFile, if necessary

/sslCRLFile:<filename> the .pem file containing the certificate revocation list

/sslFIPSMode use FIPS mode of the installed openssl library

/tlsInsecure bypass the validation for server's certificate chain and host name

authentication options:

/u, /username:<username> username for authentication

/p, /password:<password> password for authentication

/authenticationDatabase:<database-name> database that holds the user's credentials

/authenticationMechanism:<mechanism> authentication mechanism to use

/awsSessionToken:<aws-session-token> session token to authenticate via AWS IAM

kerberos options:

/gssapiServiceName:<service-name> service name to use when authenticating using GSSAPI/Kerberos

(default: mongodb)

/gssapiHostName:<host-name> hostname to use when authenticating using GSSAPI/Kerberos (default:

<remote server's address>)

namespace options:

/d, /db:<database-name> database to use

/c, /collection:<collection-name> collection to use

uri options:

/uri:mongodb-uri mongodb uri connection string

input options:

/f, /fields:<field>[,<field>]* comma separated list of fields, e.g. -f name,age

/fieldFile:<filename> file with field names - 1 per line

/file:<filename> file to import from; if not specified, stdin is used

/headerline use first line in input source as the field list (CSV and TSV only)

/jsonArray treat input source as a JSON array

/parseGrace:<grace> controls behavior when type coercion fails - one of: autoCast,

skipField, skipRow, stop (default: stop)

/type:<type> input format to import: json, csv, or tsv

/columnsHaveTypes indicates that the field list (from --fields, --fieldsFile, or

--headerline) specifies types; They must be in the form of

'<colName>.<type>(<arg>)'. The type can be one of: auto, binary,

boolean, date, date_go, date_ms, date_oracle, decimal, double, int32,

int64, string. For each of the date types, the argument is a datetime

layout string. For the binary type, the argument can be one of:

base32, base64, hex. All other types take an empty argument. Only

valid for CSV and TSV imports. e.g. zipcode.string(),

thumbnail.binary(base64)

/legacy use the legacy extended JSON format

/useArrayIndexFields indicates that field names may include array indexes that should be

used to construct arrays during import (e.g. foo.0,foo.1). Indexes

must start from 0 and increase sequentially (foo.1,foo.0 would fail).

ingest options:

/drop drop collection before inserting documents

/ignoreBlanks ignore fields with empty values in CSV and TSV

/maintainInsertionOrder insert the documents in the order of their appearance in the input

source. By default the insertions will be performed in an arbitrary

order. Setting this flag also enables the behavior of --stopOnError

and restricts NumInsertionWorkers to 1.

/j, /numInsertionWorkers:<number> number of insert operations to run concurrently

/stopOnError halt after encountering any error during importing. By default,

mongoimport will attempt to continue through document validation and

DuplicateKey errors, but with this option enabled, the tool will stop

instead. A small number of documents may be inserted after

encountering an error even with this option enabled; use

--maintainInsertionOrder to halt immediately after an error

/mode:[insert|upsert|merge|delete] insert: insert only, skips matching documents. upsert: insert new

documents or replace existing documents. merge: insert new documents

or modify existing documents. delete: deletes matching documents

only. If upsert fields match more than one document, only one

document is deleted. (default: insert)

/upsertFields:<field>[,<field>]* comma-separated fields for the query part when --mode is set to

upsert or merge

/writeConcern:<write-concern-specifier> write concern options e.g. --writeConcern majority, --writeConcern

'{w: 3, wtimeout: 500, fsync: true, j: true}'

/bypassDocumentValidation bypass document validation