Service Mesh: Deploying bookinfo on Istio

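The previous post covered service mesh, distributed service governance, and deploying Istio; for a recap see https://www.cnblogs.com/qiuhom-1874/p/17281541.html. In this post we deploy bookinfo, the official sample project, into the Istio environment and use it to get to know Istio.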

Deploying the Istio addons

    root@k8s-master01:/usr/local# cd istio
    root@k8s-master01:/usr/local/istio# ls samples/addons/
    extras  grafana.yaml  jaeger.yaml  kiali.yaml  prometheus.yaml  README.md
    root@k8s-master01:/usr/local/istio# kubectl apply -f samples/addons/
    serviceaccount/grafana created
    configmap/grafana created
    service/grafana created
    deployment.apps/grafana created
    configmap/istio-grafana-dashboards created
    configmap/istio-services-grafana-dashboards created
    deployment.apps/jaeger created
    service/tracing created
    service/zipkin created
    service/jaeger-collector created
    serviceaccount/kiali created
    configmap/kiali created
    clusterrole.rbac.authorization.k8s.io/kiali-viewer created
    clusterrole.rbac.authorization.k8s.io/kiali created
    clusterrolebinding.rbac.authorization.k8s.io/kiali created
    role.rbac.authorization.k8s.io/kiali-controlplane created
    rolebinding.rbac.authorization.k8s.io/kiali-controlplane created
    service/kiali created
    deployment.apps/kiali created
    serviceaccount/prometheus created
    configmap/prometheus created
    clusterrole.rbac.authorization.k8s.io/prometheus created
    clusterrolebinding.rbac.authorization.k8s.io/prometheus created
    service/prometheus created
    deployment.apps/prometheus created
    root@k8s-master01:/usr/local/istio#

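Note: the addon manifests live under istio/samples/addons/, which contains manifests for grafana, jaeger, kiali, and prometheus. jaeger handles distributed tracing; kiali is a web UI for Istio from which we can observe and manage the mesh; prometheus scrapes the metrics; grafana visualizes them. After applying every manifest in that directory, the corresponding pods and svc resources run in the istio-system namespace.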
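Rather than polling `kubectl get pods` by hand, the rollout of each addon can be awaited. The following is a minimal sketch, assuming the Deployment names printed by `kubectl apply` above; the function name `wait_for_addons` and the 120-second timeout are illustrative choices, not part of the original setup.

```shell
# Wait until each addon Deployment in istio-system reports a completed rollout.
# Deployment names come from the `kubectl apply` output above; the timeout is
# an arbitrary value chosen for this sketch.
wait_for_addons() {
  for name in grafana jaeger kiali prometheus; do
    kubectl -n istio-system rollout status "deployment/$name" --timeout=120s || return 1
  done
}

# Usage (requires access to the cluster):
#   wait_for_addons && echo "all addons are ready"
```

The function stops at the first addon that fails to become ready, so its exit status can gate later steps in a script.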

Verify: in the istio-system namespace, check that the pods are running and that the svc resources were created.

    root@k8s-master01:/usr/local/istio# kubectl get pods -n istio-system
    NAME                                   READY   STATUS    RESTARTS       AGE
    grafana-69f9b6bfdc-cm966               1/1     Running   0              12m
    istio-egressgateway-774d6846df-fv97t   1/1     Running   3 (144m ago)   22h
    istio-ingressgateway-69499dc-pdgld     1/1     Running   3 (144m ago)   22h
    istiod-65dcb8497-9skn9                 1/1     Running   3 (145m ago)   22h
    jaeger-cc4688b98-wzfph                 1/1     Running   0              12m
    kiali-594965b98c-kbllg                 1/1     Running   0              64s
    prometheus-5f84bbfcfd-62nwc            2/2     Running   0              12m
    root@k8s-master01:/usr/local/istio# kubectl get svc -n istio-system
    NAME                   TYPE           CLUSTER-IP       EXTERNAL-IP     PORT(S)                                                                      AGE
    grafana                ClusterIP      10.107.10.186    <none>          3000/TCP                                                                     12m
    istio-egressgateway    ClusterIP      10.106.179.126   <none>          80/TCP,443/TCP                                                               22h
    istio-ingressgateway   LoadBalancer   10.102.211.120   192.168.0.252   15021:32639/TCP,80:31338/TCP,443:30597/TCP,31400:31714/TCP,15443:32154/TCP   22h
    istiod                 ClusterIP      10.96.6.69       <none>          15010/TCP,15012/TCP,443/TCP,15014/TCP                                        22h
    jaeger-collector       ClusterIP      10.100.138.187   <none>          14268/TCP,14250/TCP,9411/TCP                                                 12m
    kiali                  ClusterIP      10.99.88.50      <none>          20001/TCP,9090/TCP                                                           12m
    prometheus             ClusterIP      10.108.131.84    <none>          9090/TCP                                                                     12m
    tracing                ClusterIP      10.100.53.36     <none>          80/TCP,16685/TCP                                                             12m
    zipkin                 ClusterIP      10.110.231.233   <none>          9411/TCP                                                                     12m
    root@k8s-master01:/usr/local/istio#

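Note: all the pods are Running and Ready, and the svc resources were created as expected. To reach these services we can use their ClusterIP from inside the cluster, or change the svc type to NodePort or LoadBalancer. Besides changing the svc type, we can also reach them from outside the cluster through Istio's ingress gateway. That approach requires us to first tell istiod, through configuration, to expose the service on the ingressgateway's external IP address.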
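The first two options can be sketched with plain kubectl. This is only an illustration: the helper names `set_addon_service_type` and `forward_kiali` are made up for this post, and the port 20001 is taken from the svc listing above.

```shell
# Change the type of a Service in istio-system.
# $1 = Service name, $2 = NodePort or LoadBalancer
set_addon_service_type() {
  kubectl -n istio-system patch svc "$1" -p "{\"spec\":{\"type\":\"$2\"}}"
}

# Alternative that leaves the Service untouched: forward local port 20001
# to the kiali Service for the lifetime of the command.
forward_kiali() {
  kubectl -n istio-system port-forward svc/kiali 20001:20001
}

# Usage (requires access to the cluster):
#   set_addon_service_type kiali NodePort
#   forward_kiali    # then browse to http://localhost:20001
```

Port forwarding is the less invasive of the two, since nothing in the cluster is modified.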

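A word on how exposing a service through the ingressgateway works. Installing Istio creates a set of CRDs on k8s, and these CRDs are what we use to define how traffic is managed. In other words, we create resources of these CRD types to tell istiod how a service should be exposed. Once such resources exist in the k8s cluster, istiod collects them, converts them into Envoy's configuration format, and pushes the result to the sidecars injected by Istio, which is how Envoy gets configured (Envoy is the sidecar that Istio injects into application pods).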
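The configuration that istiod has pushed to a given Envoy can be inspected with `istioctl proxy-config`. The sketch below loops over the four configuration types; the function name `show_proxy_config` is hypothetical, and the pod name in the usage comment is simply the ingressgateway pod from the listing above.

```shell
# Dump the Envoy configuration that istiod pushed to one proxy.
# $1 = pod name, $2 = namespace
# cluster/listener/route/endpoint correspond to CDS/LDS/RDS/EDS respectively.
show_proxy_config() {
  for kind in cluster listener route endpoint; do
    echo "== $kind =="
    istioctl proxy-config "$kind" "$1.$2"
  done
}

# Usage (requires istioctl and access to the cluster):
#   show_proxy_config istio-ingressgateway-69499dc-pdgld istio-system
```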

Viewing the CRDs

    root@k8s-master01:~# kubectl get crds
    NAME                                                  CREATED AT
    authorizationpolicies.security.istio.io               2023-04-02T16:28:24Z
    bgpconfigurations.crd.projectcalico.org               2023-04-02T02:26:34Z
    bgppeers.crd.projectcalico.org                        2023-04-02T02:26:34Z
    blockaffinities.crd.projectcalico.org                 2023-04-02T02:26:34Z
    caliconodestatuses.crd.projectcalico.org              2023-04-02T02:26:34Z
    clusterinformations.crd.projectcalico.org             2023-04-02T02:26:34Z
    destinationrules.networking.istio.io                  2023-04-02T16:28:24Z
    envoyfilters.networking.istio.io                      2023-04-02T16:28:24Z
    felixconfigurations.crd.projectcalico.org             2023-04-02T02:26:34Z
    gateways.networking.istio.io                          2023-04-02T16:28:24Z
    globalnetworkpolicies.crd.projectcalico.org           2023-04-02T02:26:34Z
    globalnetworksets.crd.projectcalico.org               2023-04-02T02:26:34Z
    hostendpoints.crd.projectcalico.org                   2023-04-02T02:26:34Z
    ipamblocks.crd.projectcalico.org                      2023-04-02T02:26:34Z
    ipamconfigs.crd.projectcalico.org                     2023-04-02T02:26:34Z
    ipamhandles.crd.projectcalico.org                     2023-04-02T02:26:34Z
    ippools.crd.projectcalico.org                         2023-04-02T02:26:34Z
    ipreservations.crd.projectcalico.org                  2023-04-02T02:26:34Z
    istiooperators.install.istio.io                       2023-04-02T16:28:24Z
    kubecontrollersconfigurations.crd.projectcalico.org   2023-04-02T02:26:34Z
    networkpolicies.crd.projectcalico.org                 2023-04-02T02:26:34Z
    networksets.crd.projectcalico.org                     2023-04-02T02:26:34Z
    peerauthentications.security.istio.io                 2023-04-02T16:28:24Z
    proxyconfigs.networking.istio.io                      2023-04-02T16:28:24Z
    requestauthentications.security.istio.io              2023-04-02T16:28:24Z
    serviceentries.networking.istio.io                    2023-04-02T16:28:24Z
    sidecars.networking.istio.io                          2023-04-02T16:28:24Z
    telemetries.telemetry.istio.io                        2023-04-02T16:28:24Z
    virtualservices.networking.istio.io                   2023-04-02T16:28:24Z
    wasmplugins.extensions.istio.io                       2023-04-02T16:28:24Z
    workloadentries.networking.istio.io                   2023-04-02T16:28:24Z
    workloadgroups.networking.istio.io                    2023-04-02T16:28:24Z
    root@k8s-master01:~# kubectl api-resources --api-group=networking.istio.io
    NAME               SHORTNAMES   APIVERSION                     NAMESPACED   KIND
    destinationrules   dr           networking.istio.io/v1beta1    true         DestinationRule
    envoyfilters                    networking.istio.io/v1alpha3   true         EnvoyFilter
    gateways           gw           networking.istio.io/v1beta1    true         Gateway
    proxyconfigs                    networking.istio.io/v1beta1    true         ProxyConfig
    serviceentries     se           networking.istio.io/v1beta1    true         ServiceEntry
    sidecars                        networking.istio.io/v1beta1    true         Sidecar
    virtualservices    vs           networking.istio.io/v1beta1    true         VirtualService
    workloadentries    we           networking.istio.io/v1beta1    true         WorkloadEntry
    workloadgroups     wg           networking.istio.io/v1beta1    true         WorkloadGroup
    root@k8s-master01:~#

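Note: the networking.istio.io group contains many CRD types. Gateway defines how external traffic is admitted into the mesh. VirtualService defines virtual hosts and their routing rules (similar to virtual hosts in apache). DestinationRule defines how the target service handles the traffic once it has come in through the gateway and been routed by the VirtualService. After we create resources of these types in the k8s cluster, istiod collects them, converts them into configuration that Envoy understands, and pushes it to every Envoy sidecar in the mesh and to the Envoy gateway pods in the istio-system namespace.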

Exposing the kiali service through the Istio ingressgateway

Define the kiali-gateway resource to match traffic

    # cat kiali-gateway.yaml
    apiVersion: networking.istio.io/v1beta1
    kind: Gateway
    metadata:
      name: kiali-gateway
      namespace: istio-system
    spec:
      selector:
        app: istio-ingressgateway
      servers:
      - port:
          number: 80
          name: http-kiali
          protocol: HTTP
        hosts:
        - "kiali.ik8s.cc"

Note: this resource matches traffic entering through the istio-ingressgateway whose Host header is kiali.ik8s.cc, whose protocol is HTTP, and whose port is 80.

Define a VirtualService resource to route the traffic

    # cat kiali-virtualservice.yaml
    apiVersion: networking.istio.io/v1beta1
    kind: VirtualService
    metadata:
      name: kiali-virtualservice
      namespace: istio-system
    spec:
      hosts:
      - "kiali.ik8s.cc"
      gateways:
      - kiali-gateway
      http:
      - match:
        - uri:
            prefix: /
        route:
        - destination:
            host: kiali
            port:
              number: 20001

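Note: this resource takes traffic arriving through kiali-gateway whose Host header is kiali.ik8s.cc and whose URI starts with "/", and routes it to port 20001 of the service named kiali.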

Define a DestinationRule to control how the destination receives the traffic

    # cat kiali-destinationrule.yaml
    apiVersion: networking.istio.io/v1beta1
    kind: DestinationRule
    metadata:
      name: kiali
      namespace: istio-system
    spec:
      host: kiali
      trafficPolicy:
        tls:
          mode: DISABLE

Note: this resource tells the proxies not to use TLS when connecting to the kiali service, so kiali receives the traffic as plain text.

Apply the manifests above

    root@k8s-master01:~/istio-in-practise/Traffic-Management-Basics/kiali-port-80# kubectl apply -f .
    destinationrule.networking.istio.io/kiali created
    gateway.networking.istio.io/kiali-gateway created
    virtualservice.networking.istio.io/kiali-virtualservice created
    root@k8s-master01:~/istio-in-practise/Traffic-Management-Basics/kiali-port-80# kubectl get gw -n istio-system
    NAME            AGE
    kiali-gateway   27s
    root@k8s-master01:~/istio-in-practise/Traffic-Management-Basics/kiali-port-80# kubectl get vs -n istio-system
    NAME                   GATEWAYS            HOSTS               AGE
    kiali-virtualservice   ["kiali-gateway"]   ["kiali.ik8s.cc"]   33s
    root@k8s-master01:~/istio-in-practise/Traffic-Management-Basics/kiali-port-80# kubectl get dr -n istio-system
    NAME    HOST    AGE
    kiali   kiali   38s
    root@k8s-master01:~/istio-in-practise/Traffic-Management-Basics/kiali-port-80#

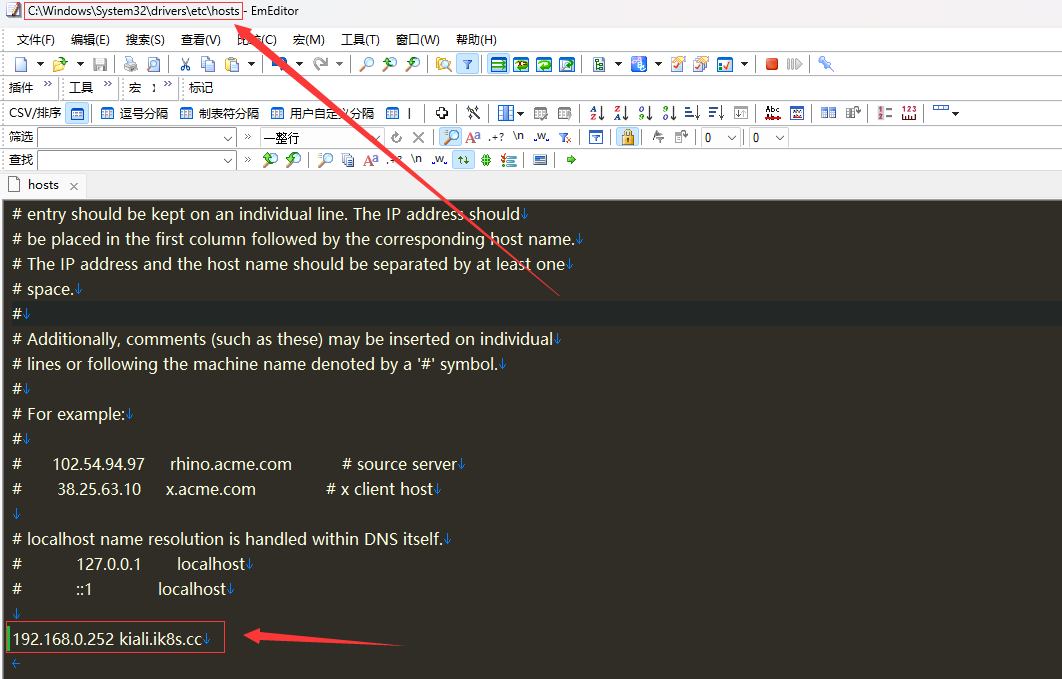

On a client outside the cluster, use the hosts file to resolve kiali.ik8s.cc to the external address of the istio-ingressgateway

Note: my client is a Windows 11 machine, so I edit C:\Windows\System32\drivers\etc\hosts to provide the name resolution.
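For reference, here is a small sketch of the hosts entry and of a way to test the route from a Linux client without editing any hosts file, by sending the Host header explicitly with curl. The helper names are invented for this example; 192.168.0.252 is the ingressgateway EXTERNAL-IP shown earlier.

```shell
# Print a hosts-file line mapping a hostname to an IP address.
# $1 = IP address, $2 = hostname
hosts_entry() {
  printf '%s %s\n' "$1" "$2"
}

# Request "/" through the gateway with an explicit Host header and print
# only the HTTP status code. $1 = gateway IP, $2 = hostname
probe_via_gateway() {
  curl -s -o /dev/null -w '%{http_code}\n' -H "Host: $2" "http://$1/"
}

# Usage:
#   hosts_entry 192.168.0.252 kiali.ik8s.cc     # line to append to the hosts file
#   probe_via_gateway 192.168.0.252 kiali.ik8s.cc
```

Because the Gateway and VirtualService match on the Host header, a request sent to the bare IP without that header would not match the kiali route.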

Test: open kiali.ik8s.cc in a browser and check whether the kiali service is reachable.

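Note: by creating the three resources Gateway, VirtualService, and DestinationRule, we have exposed the in-cluster kiali service on the external address of the ingressgateway. Other services can be exposed with the same logic. That said, I recommend not exposing kiali directly to clients outside the cluster: the kiali deployed here has no authentication, yet it can manage Istio.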
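To illustrate "the same logic" for another addon, the sketch below renders a Gateway and a VirtualService from three parameters, following the kiali manifests above. The function name `render_addon_exposure` and the hostname grafana.ik8s.cc are assumptions made for this example; port 3000 is grafana's Service port from the earlier listing.

```shell
# Print a Gateway plus VirtualService that expose one istio-system Service
# on port 80 of the ingressgateway.
# $1 = Service name, $2 = external hostname, $3 = Service port
render_addon_exposure() {
  cat <<EOF
apiVersion: networking.istio.io/v1beta1
kind: Gateway
metadata:
  name: $1-gateway
  namespace: istio-system
spec:
  selector:
    app: istio-ingressgateway
  servers:
  - port:
      number: 80
      name: http-$1
      protocol: HTTP
    hosts:
    - "$2"
---
apiVersion: networking.istio.io/v1beta1
kind: VirtualService
metadata:
  name: $1-virtualservice
  namespace: istio-system
spec:
  hosts:
  - "$2"
  gateways:
  - $1-gateway
  http:
  - match:
    - uri:
        prefix: /
    route:
    - destination:
        host: $1
        port:
          number: $3
EOF
}

# Usage (hypothetical hostname; requires access to the cluster):
#   render_addon_exposure grafana grafana.ik8s.cc 3000 | kubectl apply -f -
```

Rendering to stdout first makes it easy to review the manifest before piping it to `kubectl apply`.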

Deploying bookinfo

    root@k8s-master01:/usr/local/istio# kubectl apply -f samples/bookinfo/platform/kube/bookinfo.yaml
    service/details created
    serviceaccount/bookinfo-details created
    deployment.apps/details-v1 created
    service/ratings created
    serviceaccount/bookinfo-ratings created
    deployment.apps/ratings-v1 created
    service/reviews created
    serviceaccount/bookinfo-reviews created
    deployment.apps/reviews-v1 created
    deployment.apps/reviews-v2 created
    deployment.apps/reviews-v3 created
    service/productpage created
    serviceaccount/bookinfo-productpage created
    deployment.apps/productpage-v1 created
    root@k8s-master01:/usr/local/istio#

Note: once Istio is installed, the bookinfo manifest is available under istio/samples/bookinfo/platform/kube/. It starts the pods bookinfo needs in the default namespace and creates the matching svc resources.

Verify: list the pods and svc resources in the default namespace and check that they were created correctly.

    root@k8s-master01:/usr/local/istio# kubectl get pods
    NAME                             READY   STATUS    RESTARTS   AGE
    details-v1-6997d94bb9-4jssp      2/2     Running   0          2m56s
    productpage-v1-d4f8dfd97-z2pcz   2/2     Running   0          2m55s
    ratings-v1-b8f8fcf49-j8l44       2/2     Running   0          2m56s
    reviews-v1-5896f547f5-v2h92      2/2     Running   0          2m56s
    reviews-v2-5d99885bc9-dhjdk      2/2     Running   0          2m55s
    reviews-v3-589cb4d56c-rw6rw      2/2     Running   0          2m55s
    root@k8s-master01:/usr/local/istio# kubectl get svc
    NAME          TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)    AGE
    details       ClusterIP   10.109.96.34    <none>        9080/TCP   3m2s
    kubernetes    ClusterIP   10.96.0.1       <none>        443/TCP    38h
    productpage   ClusterIP   10.101.76.112   <none>        9080/TCP   3m1s
    ratings       ClusterIP   10.97.209.163   <none>        9080/TCP   3m2s
    reviews       ClusterIP   10.108.1.117    <none>        9080/TCP   3m2s
    root@k8s-master01:/usr/local/istio#

Note: several pods are now running in the default namespace, each with two containers: the bookinfo application container and the sidecar injected by Istio. The entry point of bookinfo is productpage.
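The 2/2 in the READY column can be confirmed by listing the container names of a pod. This is a minimal sketch with hypothetical helper names; it relies on the fact that Istio names the injected sidecar container `istio-proxy`, and it presumes automatic injection was already enabled for the default namespace (typically via the `istio-injection=enabled` namespace label), which this post does not show.

```shell
# Print the container names of a pod, space separated. $1 = pod name
list_containers() {
  kubectl get pod "$1" -o jsonpath='{.spec.containers[*].name}'
}

# Succeed when the space-separated names in $1 include the istio-proxy sidecar.
has_sidecar() {
  case " $1 " in
    *" istio-proxy "*) return 0 ;;
    *) return 1 ;;
  esac
}

# Usage (requires access to the cluster):
#   has_sidecar "$(list_containers details-v1-6997d94bb9-4jssp)" && echo injected
```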

Verify: check whether istiod has pushed configuration to the sidecars injected into the bookinfo pods we just deployed.

    root@k8s-master01:/usr/local/istio# istioctl ps
    NAME                                                  CLUSTER      CDS      LDS      EDS      RDS        ECDS       ISTIOD                   VERSION
    details-v1-6997d94bb9-4jssp.default                   Kubernetes   SYNCED   SYNCED   SYNCED   SYNCED     NOT SENT   istiod-65dcb8497-9skn9   1.17.1
    istio-egressgateway-774d6846df-fv97t.istio-system     Kubernetes   SYNCED   SYNCED   SYNCED   NOT SENT   NOT SENT   istiod-65dcb8497-9skn9   1.17.1
    istio-ingressgateway-69499dc-pdgld.istio-system       Kubernetes   SYNCED   SYNCED   SYNCED   SYNCED     NOT SENT   istiod-65dcb8497-9skn9   1.17.1
    productpage-v1-d4f8dfd97-z2pcz.default                Kubernetes   SYNCED   SYNCED   SYNCED   SYNCED     NOT SENT   istiod-65dcb8497-9skn9   1.17.1
    ratings-v1-b8f8fcf49-j8l44.default                    Kubernetes   SYNCED   SYNCED   SYNCED   SYNCED     NOT SENT   istiod-65dcb8497-9skn9   1.17.1
    reviews-v1-5896f547f5-v2h92.default                   Kubernetes   SYNCED   SYNCED   SYNCED   SYNCED     NOT SENT   istiod-65dcb8497-9skn9   1.17.1
    reviews-v2-5d99885bc9-dhjdk.default                   Kubernetes   SYNCED   SYNCED   SYNCED   SYNCED     NOT SENT   istiod-65dcb8497-9skn9   1.17.1
    reviews-v3-589cb4d56c-rw6rw.default                   Kubernetes   SYNCED   SYNCED   SYNCED   SYNCED     NOT SENT   istiod-65dcb8497-9skn9   1.17.1
    root@k8s-master01:/usr/local/istio#

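Note: here we only need to look at the CDS, LDS, EDS, and RDS columns. SYNCED means the corresponding configuration has been pushed and acknowledged. Once the configuration has been pushed, the services can be reached from inside the cluster.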
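With many proxies, scanning this table by eye gets tedious. The filter below is a sketch that assumes the exact column layout shown above (Istio 1.17.1; other versions may print the table differently) and reports proxies whose CDS, LDS, EDS, or RDS column reads STALE. The function name `stale_proxies` is made up for this post.

```shell
# Read `istioctl ps` output on stdin and print the names of proxies that
# have a STALE value in the CDS/LDS/EDS/RDS columns (fields 3 to 6).
# "NOT SENT" is joined into one token first so the fields line up.
stale_proxies() {
  sed 's/NOT SENT/NOT_SENT/g' |
    awk 'NR > 1 {
      for (i = 3; i <= 6; i++) {
        if ($i == "STALE") { print $1; break }
      }
    }'
}

# Usage (requires istioctl and access to the cluster):
#   istioctl ps | stale_proxies
```

NOT SENT is deliberately not reported, since it only means istiod had nothing to send for that type, as with RDS on the egress gateway above.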

Verify: deploy a client pod inside the cluster and request productpage:9080 to see whether bookinfo responds.

    root@k8s-master01:/usr/local/istio# kubectl apply -f samples/sleep/sleep.yaml
    serviceaccount/sleep created
    service/sleep created
    deployment.apps/sleep created
    root@k8s-master01:/usr/local/istio# kubectl get pods
    NAME                             READY   STATUS    RESTARTS   AGE
    details-v1-6997d94bb9-4jssp      2/2     Running   0          12m
    productpage-v1-d4f8dfd97-z2pcz   2/2     Running   0          12m
    ratings-v1-b8f8fcf49-j8l44       2/2     Running   0          12m
    reviews-v1-5896f547f5-v2h92      2/2     Running   0          12m
    reviews-v2-5d99885bc9-dhjdk      2/2     Running   0          12m
    reviews-v3-589cb4d56c-rw6rw      2/2     Running   0          12m
    sleep-bc9998558-vjc48            2/2     Running   0          50s
    root@k8s-master01:/usr/local/istio#

Enter the sleep pod and request productpage:9080 to see whether it is reachable.

    root@k8s-master01:/usr/local/istio# kubectl exec -it sleep-bc9998558-vjc48 -- /bin/sh
    / $ cd
    ~ $ curl productpage:9080
    <!DOCTYPE html>
    <html>
      <head>
        <title>Simple Bookstore App</title>
        <meta charset="utf-8">
        <meta http-equiv="X-UA-Compatible" content="IE=edge">
        <meta name="viewport" content="width=device-width, initial-scale=1">
        <!-- Latest compiled and minified CSS -->
        <link rel="stylesheet" href="static/bootstrap/css/bootstrap.min.css">
        <!-- Optional theme -->
        <link rel="stylesheet" href="static/bootstrap/css/bootstrap-theme.min.css">
      </head>
      <body>
        <p>
          <h3>Hello! This is a simple bookstore application consisting of three services as shown below</h3>
        </p>
        <table class="table table-condensed table-bordered table-hover"><tr><th>name</th><td>http://details:9080</td></tr><tr><th>endpoint</th><td>details</td></tr><tr><th>children</th><td><table class="table table-condensed table-bordered table-hover"><tr><th>name</th><th>endpoint</th><th>children</th></tr><tr><td>http://details:9080</td><td>details</td><td></td></tr><tr><td>http://reviews:9080</td><td>reviews</td><td><table class="table table-condensed table-bordered table-hover"><tr><th>name</th><th>endpoint</th><th>children</th></tr><tr><td>http://ratings:9080</td><td>ratings</td><td></td></tr></table></td></tr></table></td></tr></table>
        <p>
          <h4>Click on one of the links below to auto generate a request to the backend as a real user or a tester
          </h4>
        </p>
        <p><a href="/productpage?u=normal">Normal user</a></p>
        <p><a href="/productpage?u=test">Test user</a></p>
        <!-- Latest compiled and minified JavaScript -->
        <script src="static/jquery.min.js"></script>
        <!-- Latest compiled and minified JavaScript -->
        <script src="static/bootstrap/js/bootstrap.min.js"></script>
      </body>
    </html>
    ~ $

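Note: the client pod can reach productpage:9080 without problems. Inside a pod in the cluster, the short name productpage works because coredns resolves it to the corresponding svc, which then answers the request.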
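The short name works because the client pod is in the same namespace as the svc. From another namespace the fully qualified name is needed. The sketch below builds that name and runs curl inside the sleep deployment without an interactive shell; the helper names are invented here, and `cluster.local` is assumed to be the cluster domain (the Kubernetes default, not confirmed for this cluster).

```shell
# Build the fully qualified DNS name of a Service.
# $1 = Service name, $2 = namespace, $3 = cluster domain (default cluster.local)
svc_fqdn() {
  printf '%s.%s.svc.%s\n' "$1" "$2" "${3:-cluster.local}"
}

# Run curl inside the sleep container of the sleep deployment. $1 = URL
curl_from_sleep() {
  kubectl exec deploy/sleep -c sleep -- curl -sS "$1"
}

# Usage (requires access to the cluster):
#   curl_from_sleep "http://$(svc_fqdn productpage default):9080/productpage"
```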

Exposing bookinfo to clients outside the cluster

    root@k8s-master01:~# cat /usr/local/istio/samples/bookinfo/networking/bookinfo-gateway.yaml
    apiVersion: networking.istio.io/v1alpha3
    kind: Gateway
    metadata:
      name: bookinfo-gateway
    spec:
      selector:
        istio: ingressgateway # use istio default controller
      servers:
      - port:
          number: 80
          name: http
          protocol: HTTP
        hosts:
        - "*"
    ---
    apiVersion: networking.istio.io/v1alpha3
    kind: VirtualService
    metadata:
      name: bookinfo
    spec:
      hosts:
      - "*"
      gateways:
      - bookinfo-gateway
      http:
      - match:
        - uri:
            exact: /productpage
        - uri:
            prefix: /static
        - uri:
            exact: /login
        - uri:
            exact: /logout
        - uri:
            prefix: /api/v1/products
        route:
        - destination:
            host: productpage
            port:
              number: 9080
    root@k8s-master01:~#

Note: this manifest binds bookinfo to port 80 on the external address of the ingressgateway, for any Host header, so requesting the ingressgateway's external address reaches bookinfo.

Apply the manifest

    root@k8s-master01:~# kubectl apply -f /usr/local/istio/samples/bookinfo/networking/bookinfo-gateway.yaml
    gateway.networking.istio.io/bookinfo-gateway created
    virtualservice.networking.istio.io/bookinfo created
    root@k8s-master01:~#

Verify: request the external address of the ingressgateway and check whether bookinfo is reachable.

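Note: from outside the cluster, bookinfo is now reachable through the external address of the ingressgateway. Reloading the page several times shows different results, because requests are spread across the three versions of reviews.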
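The external address does not have to be copied by hand from `kubectl get svc`. As a sketch, assuming the ingressgateway Service is of type LoadBalancer and reports an IP (as it does in this cluster), it can be read with a jsonpath query; the function names are illustrative.

```shell
# Print the external IP of the Istio ingress gateway Service.
ingress_ip() {
  kubectl -n istio-system get svc istio-ingressgateway \
    -o jsonpath='{.status.loadBalancer.ingress[0].ip}'
}

# Print the URL of the bookinfo product page behind the gateway.
bookinfo_url() {
  printf 'http://%s/productpage\n' "$(ingress_ip)"
}

# Usage (requires access to the cluster):
#   curl -s "$(bookinfo_url)" | grep -o '<title>.*</title>'
```

On load balancers that hand out a hostname instead of an IP, the `.ip` field would be empty and `.hostname` would have to be queried instead.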

Simulating client traffic to bookinfo

    root@k8s-node03:~# while true ; do curl 192.168.0.252/productpage;sleep 0.$RANDOM;done
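The loop above runs until interrupted and prints every page to the terminal. A bounded variant that records only status codes can be easier to read; the following is a sketch with made-up function names, not what was used to produce the kiali graphs in this post.

```shell
# Send a fixed number of requests and print one HTTP status code per line.
# $1 = URL, $2 = number of requests
generate_load() {
  i=0
  while [ "$i" -lt "$2" ]; do
    curl -s -o /dev/null -w '%{http_code}\n' "$1"
    i=$((i + 1))
  done
}

# Summarize status codes read from stdin as "code: count" lines.
tally_status_codes() {
  sort | uniq -c | awk '{ print $2 ": " $1 }'
}

# Usage:
#   generate_load http://192.168.0.252/productpage 100 | tally_status_codes
```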

Viewing the graph in kiali

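Note: the graph shown in kiali is built from the traffic generated by the simulated client. It visualizes the topology of the service traffic and dynamically shows what share of the traffic reaches each application version. By applying configuration we can change at runtime which version of bookinfo clients reach, and the graph redraws the traffic paths from the collected metrics.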

Testing traffic management with bookinfo

Create the DestinationRules

    root@k8s-master01:/usr/local/istio# cat samples/bookinfo/networking/destination-rule-all.yaml
    apiVersion: networking.istio.io/v1alpha3
    kind: DestinationRule
    metadata:
      name: productpage
    spec:
      host: productpage
      subsets:
      - name: v1
        labels:
          version: v1
    ---
    apiVersion: networking.istio.io/v1alpha3
    kind: DestinationRule
    metadata:
      name: reviews
    spec:
      host: reviews
      subsets:
      - name: v1
        labels:
          version: v1
      - name: v2
        labels:
          version: v2
      - name: v3
        labels:
          version: v3
    ---
    apiVersion: networking.istio.io/v1alpha3
    kind: DestinationRule
    metadata:
      name: ratings
    spec:
      host: ratings
      subsets:
      - name: v1
        labels:
          version: v1
      - name: v2
        labels:
          version: v2
      - name: v2-mysql
        labels:
          version: v2-mysql
      - name: v2-mysql-vm
        labels:
          version: v2-mysql-vm
    ---
    apiVersion: networking.istio.io/v1alpha3
    kind: DestinationRule
    metadata:
      name: details
    spec:
      host: details
      subsets:
      - name: v1
        labels:
          version: v1
      - name: v2
        labels:
          version: v2
    ---
    root@k8s-master01:/usr/local/istio#

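Note: for each service, the manifest above defines named subsets, one per version, and each subset selects the pods that carry the matching version label.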

Apply the manifest

    root@k8s-master01:/usr/local/istio# kubectl apply -f samples/bookinfo/networking/destination-rule-all.yaml
    destinationrule.networking.istio.io/productpage created
    destinationrule.networking.istio.io/reviews created
    destinationrule.networking.istio.io/ratings created
    destinationrule.networking.istio.io/details created
    root@k8s-master01:/usr/local/istio#

Route all traffic to v1

    root@k8s-master01:/usr/local/istio# kubectl apply -f samples/bookinfo/networking/virtual-service-all-v1.yaml
    virtualservice.networking.istio.io/productpage created
    virtualservice.networking.istio.io/reviews created
    virtualservice.networking.istio.io/ratings created
    virtualservice.networking.istio.io/details created
    root@k8s-master01:/usr/local/istio#

Verify: check in kiali whether only v1 receives traffic now.

Note: the graph drawn by kiali now shows traffic to v1 only; v2 and v3 no longer receive any.
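The contents of virtual-service-all-v1.yaml are not printed in this post. As a rough sketch of the idea, a VirtualService that pins one service to one subset can be rendered as follows; the function name `render_pinned_vs` is made up, and the output is meant to illustrate the shape of such a rule rather than to reproduce the sample file verbatim.

```shell
# Print a VirtualService that sends all mesh traffic for a service to one
# subset. The subset must exist in that service's DestinationRule.
# $1 = service name, $2 = subset name
render_pinned_vs() {
  cat <<EOF
apiVersion: networking.istio.io/v1alpha3
kind: VirtualService
metadata:
  name: $1
spec:
  hosts:
  - $1
  http:
  - route:
    - destination:
        host: $1
        subset: $2
EOF
}

# Usage (requires access to the cluster):
#   render_pinned_vs reviews v1 | kubectl apply -f -
```

Since no `gateways` field is set, the rule applies to sidecars inside the mesh, which is where productpage calls reviews.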

Routing by the identity of the logged-in user

    root@k8s-master01:/usr/local/istio# cat samples/bookinfo/networking/virtual-service-reviews-test-v2.yaml
    apiVersion: networking.istio.io/v1alpha3
    kind: VirtualService
    metadata:
      name: reviews
    spec:
      hosts:
      - reviews
      http:
      - match:
        - headers:
            end-user:
              exact: jason
        route:
        - destination:
            host: reviews
            subset: v2
      - route:
        - destination:
            host: reviews
            subset: v1
    root@k8s-master01:/usr/local/istio#

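Note: the manifest above routes requests whose end-user header is exactly jason (that is, requests made while logged in as jason) to v2. All other clients, including those that are not logged in, are still served by v1.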

Verify: apply the manifest, log in as jason, and check whether v2 responds.

Note: after the manifest is applied, the simulated client is still served by v1 throughout. Once we log in as jason, the page we get back is v2, and after logging out we are served by v1 again.
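The same check can be made without a browser by calling reviews from the sleep pod and setting the header directly. This sketch assumes the sleep deployment from earlier is still running and that the reviews service answers on the path /reviews/0, which is what productpage requests for the sample book; the function name `fetch_reviews` is hypothetical.

```shell
# Call the reviews service from the sleep pod, optionally as a named user.
# $1 = value for the end-user header; pass "" to send no header
fetch_reviews() {
  if [ -n "$1" ]; then
    kubectl exec deploy/sleep -c sleep -- \
      curl -s -H "end-user: $1" reviews:9080/reviews/0
  else
    kubectl exec deploy/sleep -c sleep -- \
      curl -s reviews:9080/reviews/0
  fi
}

# Usage (requires access to the cluster):
#   fetch_reviews jason   # should be answered by v2, which includes star ratings
#   fetch_reviews ""      # should be answered by v1, which has no ratings
```

The sidecar in the sleep pod applies the reviews VirtualService to outgoing requests, so the header decides the subset before the request ever leaves the client pod.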

That is how bookinfo, running in the Istio service mesh, achieves advanced traffic management purely by applying different configurations.