Setting Up a Distributed Hadoop 3.3.4 Cluster on CentOS 7

- 1. Background
- 2. Cluster planning
- 2.1 HDFS cluster plan
- 2.2 YARN cluster plan
- 3. Cluster setup steps
- 3.1 Install the JDK

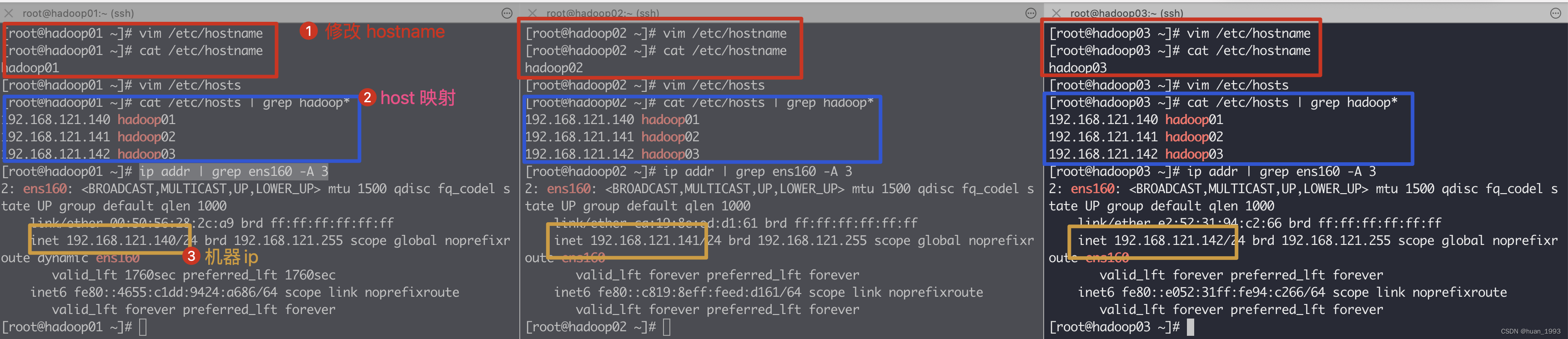

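- 3.2 Set hostnames and host mappings
- 3.3 Configure time synchronization
- 3.4 Disable the firewall
- 3.5 Configure passwordless SSH login
- 3.7 Configure Hadoop
- 3.7.1 Create the directory (on all 3 machines)
- 3.7.2 Download and extract Hadoop (on hadoop01)
- 3.7.3 Set the Hadoop environment variables (on hadoop01)
- 3.7.4 Types of Hadoop configuration files (on hadoop01)
- 3.7.5 Configure hadoop-env.sh (on hadoop01)
- 3.7.6 Configure core-site.xml (on hadoop01) (core configuration file)
- 3.7.7 Configure hdfs-site.xml (on hadoop01) (HDFS configuration file)
- 3.7.8 Configure yarn-site.xml (on hadoop01) (YARN configuration file)
- 3.7.9 Configure mapred-site.xml (on hadoop01) (MapReduce configuration file)
- 3.7.10 Configure the workers file (on hadoop01)
- 3.7.11 Sync the Hadoop configuration to all 3 machines (on hadoop01)
- 3. Start the cluster
- 4. References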

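1. Background

I have been learning Hadoop recently. This article records how to set up a 3-node Hadoop cluster on CentOS 7.

2. Cluster planning

A Hadoop cluster consists of two clusters, the HDFS cluster and the YARN cluster. Both use a master/worker architecture.

2.1 HDFS cluster plan

| IP address | Hostname | Services |
|---|---|---|
| 192.168.121.140 | hadoop01 | NameNode, DataNode, JobHistoryServer |
| 192.168.121.141 | hadoop02 | DataNode |
| 192.168.121.142 | hadoop03 | DataNode, SecondaryNameNode |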

2.2 YARN cluster plan

| IP address | Hostname | Services |
|---|---|---|
| 192.168.121.140 | hadoop01 | NodeManager |
| 192.168.121.141 | hadoop02 | ResourceManager, NodeManager |
| 192.168.121.142 | hadoop03 | NodeManager |

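3. Cluster setup steps

3.1 Install the JDK

Installing the JDK is straightforward, so the steps are omitted here. Do check which JDK versions Hadoop supports: https://cwiki.apache.org/confluence/display/HADOOP/Hadoop+Java+Versions

3.2 Set hostnames and host mappings

| IP address | Hostname |
|---|---|
| 192.168.121.140 | hadoop01 |
| 192.168.121.141 | hadoop02 |
| 192.168.121.142 | hadoop03 |

Run the following commands on all 3 machines.

# Set the hostname here; each of the 3 machines needs a different hostname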

[root@hadoop01 ~]# vim /etc/hostname

[root@hadoop01 ~]# cat /etc/hostname

hadoop01

[root@hadoop01 ~]# vim /etc/hosts

[root@hadoop01 ~]# grep hadoop /etc/hosts

192.168.121.140 hadoop01

192.168.121.141 hadoop02

192.168.121.142 hadoop03
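The same mappings can also be added without opening an editor. The helper below is a minimal sketch and not part of the original steps: the name `add_host_entry` is hypothetical, and it assumes it runs as root when pointed at /etc/hosts. It skips a hostname that is already mapped, so running it twice is harmless.

```shell
# Append "IP HOSTNAME" to a hosts file unless the hostname is already mapped.
# add_host_entry is a hypothetical helper name, not a system command.
add_host_entry() {
  local file=$1 ip=$2 name=$3
  # -w matches the hostname as a whole word, so hadoop01 does not match hadoop011
  if ! grep -qw -- "$name" "$file" 2>/dev/null; then
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}

# Example (as root, on every node):
# add_host_entry /etc/hosts 192.168.121.140 hadoop01
# add_host_entry /etc/hosts 192.168.121.141 hadoop02
# add_host_entry /etc/hosts 192.168.121.142 hadoop03
```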

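3.3 Configure time synchronization

Clocks across the cluster should stay consistent, otherwise problems may occur. My virtual machines run locally and can reach the internet, so I sync them directly against public time servers. If the machines cannot reach the internet, pick one of the 3 servers as the time source and sync the other 2 against it.

Run the following commands on all 3 machines.

# Set the CentOS 7 time zone to Asia/Shanghai

[root@hadoop01 ~]# ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

# Install ntp

[root@hadoop01 ~]# yum install ntp

Loaded plugins: fastestmirror

Loading mirror speeds from cached hostfile

base | 3.6 kB 00:00

extras | 2.9 kB 00:00

updates | 2.9 kB 00:00

Package ntp-4.2.6p5-29.el7.centos.2.aarch64 already installed and latest version

Nothing to do

# Enable ntpd at boot

[root@hadoop01 ~]# systemctl enable ntpd

# Restart the ntp service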

[root@hadoop01 ~]# service ntpd restart

Redirecting to /bin/systemctl restart ntpd.service

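# Sync the clock once. ntpdate cannot bind the NTP port while ntpd is running, which is why it exits with the error below; stop ntpd first (systemctl stop ntpd) if a one-off sync is needed

[root@hadoop01 ~]# ntpdate asia.pool.ntp.org

19 Feb 12:36:22 ntpdate[1904]: the NTP socket is in use, exiting

# Write the system time to the hardware clock

[root@hadoop01 ~]# /sbin/hwclock --systohc

# Check the time

[root@hadoop01 ~]# timedatectl

Local time: Sun 2023-02-19 12:36:35 CST

Universal time: Sun 2023-02-19 04:36:35 UTC

RTC time: Sun 2023-02-19 04:36:35

Time zone: Asia/Shanghai (CST, +0800)

NTP enabled: yes

NTP synchronized: no

RTC in local TZ: no

DST active: n/a

# Enable automatic synchronization with remote NTP servers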

[root@hadoop01 ~]# timedatectl set-ntp true
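The timedatectl output above still shows "NTP synchronized: no" right after the service restart, and it can take a while to flip. A small check like the following can confirm the result on each node. It is a sketch only: `clock_is_synced` is a hypothetical name, and the polling interval in the example is an arbitrary choice.

```shell
# Return 0 if timedatectl-style text on stdin reports a synchronized clock.
# CentOS 7 prints "NTP synchronized: yes"; newer systemd releases print
# "System clock synchronized: yes", so both labels are accepted.
clock_is_synced() {
  grep -Eq '^[[:space:]]*(NTP|System clock) synchronized:[[:space:]]*yes'
}

# Example: poll up to 10 times, 30 seconds apart, until the clock is in sync.
# for i in $(seq 1 10); do
#   timedatectl | clock_is_synced && { echo "clock synchronized"; break; }
#   sleep 30
# done
```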

3.4 Disable the firewall

Disable the firewall on all 3 machines. If it stays enabled, every port that Hadoop may use has to be opened.

# Stop the firewall

[root@hadoop01 ~]# systemctl stop firewalld

# Disable the firewall at boot

[root@hadoop01 ~]# systemctl disable firewalld.service

Removed symlink /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed symlink /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

[root@hadoop01 ~]#

3.5 設定ssh免密登入

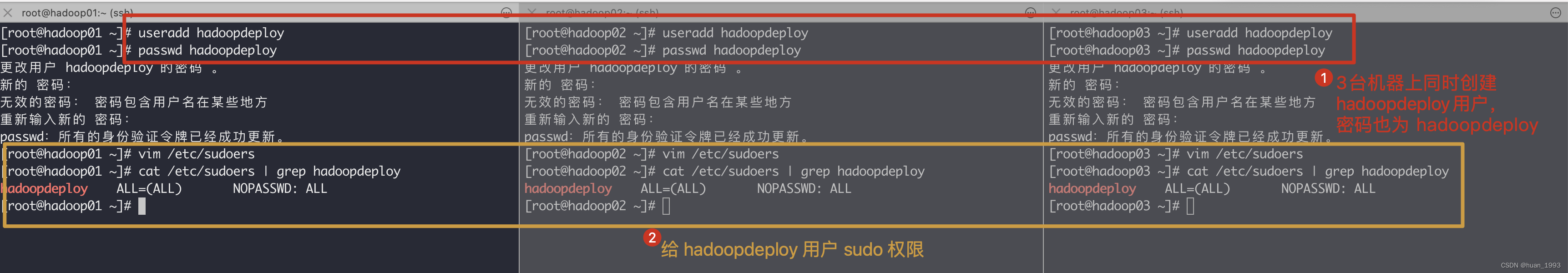

3.5.1 新建hadoop部署使用者

[root@hadoop01 ~]# useradd hadoopdeploy

[root@hadoop01 ~]# passwd hadoopdeploy

更改使用者 hadoopdeploy 的密碼 。

新的 密碼:

無效的密碼: 密碼包含使用者名稱在某些地方

重新輸入新的 密碼:

passwd:所有的身份驗證令牌已經成功更新。

[root@hadoop01 ~]# vim /etc/sudoers

[root@hadoop01 ~]# cat /etc/sudoers | grep hadoopdeploy

hadoopdeploy ALL=(ALL) NOPASSWD: ALL

[root@hadoop01 ~]#

3.5.2 Set up passwordless login for hadoopdeploy between all machines

On each of the 3 machines, set up passwordless login to itself and to the other 2.

| Current machine | Current user | Target machines | Target user |
|---|---|---|---|
| hadoop01 | hadoopdeploy | hadoop01, hadoop02, hadoop03 | hadoopdeploy |
| hadoop02 | hadoopdeploy | hadoop01, hadoop02, hadoop03 | hadoopdeploy |
| hadoop03 | hadoopdeploy | hadoop01, hadoop02, hadoop03 | hadoopdeploy |

The shell session below shows the setup from hadoop01 to hadoop01, hadoop02, and hadoop03.

# Switch to the hadoopdeploy user

[root@hadoop01 ~]# su - hadoopdeploy

Last login: Sun Feb 19 13:05:43 CST 2023 on pts/0

# Generate the key pair; press Enter at each of the 3 prompts below

[hadoopdeploy@hadoop01 ~]$ ssh-keygen -t rsa

Generating public/private rsa key pair.

Enter file in which to save the key (/home/hadoopdeploy/.ssh/id_rsa):

Created directory '/home/hadoopdeploy/.ssh'.

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /home/hadoopdeploy/.ssh/id_rsa.

Your public key has been saved in /home/hadoopdeploy/.ssh/id_rsa.pub.

The key fingerprint is:

SHA256:PFvgTUirtNLwzDIDs+SD0RIzMPt0y1km5B7rY16h1/E hadoopdeploy@hadoop01

The key's randomart image is:

+---[RSA 2048]----+

|B . . |

| B o . o |

|+ * * + + . |

| O B / = + |

|. = @ O S o |

| o * o * |

| = o o E |

| o + |

| . |

+----[SHA256]-----+

[hadoopdeploy@hadoop01 ~]$ ssh-copy-id hadoop01

...

[hadoopdeploy@hadoop01 ~]$ ssh-copy-id hadoop02

...

[hadoopdeploy@hadoop01 ~]$ ssh-copy-id hadoop03
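Since the start scripts later rely on these logins, it may be worth confirming them before moving on. The following is a minimal sketch under the assumption that the keys were copied as shown above; `check_passwordless_ssh` is a hypothetical helper name.

```shell
# Check that key-based login works from this node to every listed host.
# BatchMode=yes makes ssh fail instead of prompting for a password.
check_passwordless_ssh() {
  local host failed=0
  for host in "$@"; do
    if ssh -o BatchMode=yes -o ConnectTimeout=5 "$host" true; then
      echo "OK   $host"
    else
      echo "FAIL $host"
      failed=1
    fi
  done
  return "$failed"
}

# Example (as hadoopdeploy, on each of the three nodes):
# check_passwordless_ssh hadoop01 hadoop02 hadoop03
```

The function returns a non-zero status if any host fails, so it can gate a later step in a script.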

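3.7 Configure Hadoop

Unless stated otherwise, all steps below are run as the hadoopdeploy user.

3.7.1 Create the directory (on all 3 machines)

# Create the /opt/bigdata directory

[hadoopdeploy@hadoop01 ~]$ sudo mkdir /opt/bigdata

# Give ownership (user and group) of /opt/bigdata/ and everything below it to hadoopdeploy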

[hadoopdeploy@hadoop01 ~]$ sudo chown -R hadoopdeploy:hadoopdeploy /opt/bigdata/

[hadoopdeploy@hadoop01 ~]$ ll /opt

total 0

drwxr-xr-x. 2 hadoopdeploy hadoopdeploy 6 Feb 19 13:15 bigdata

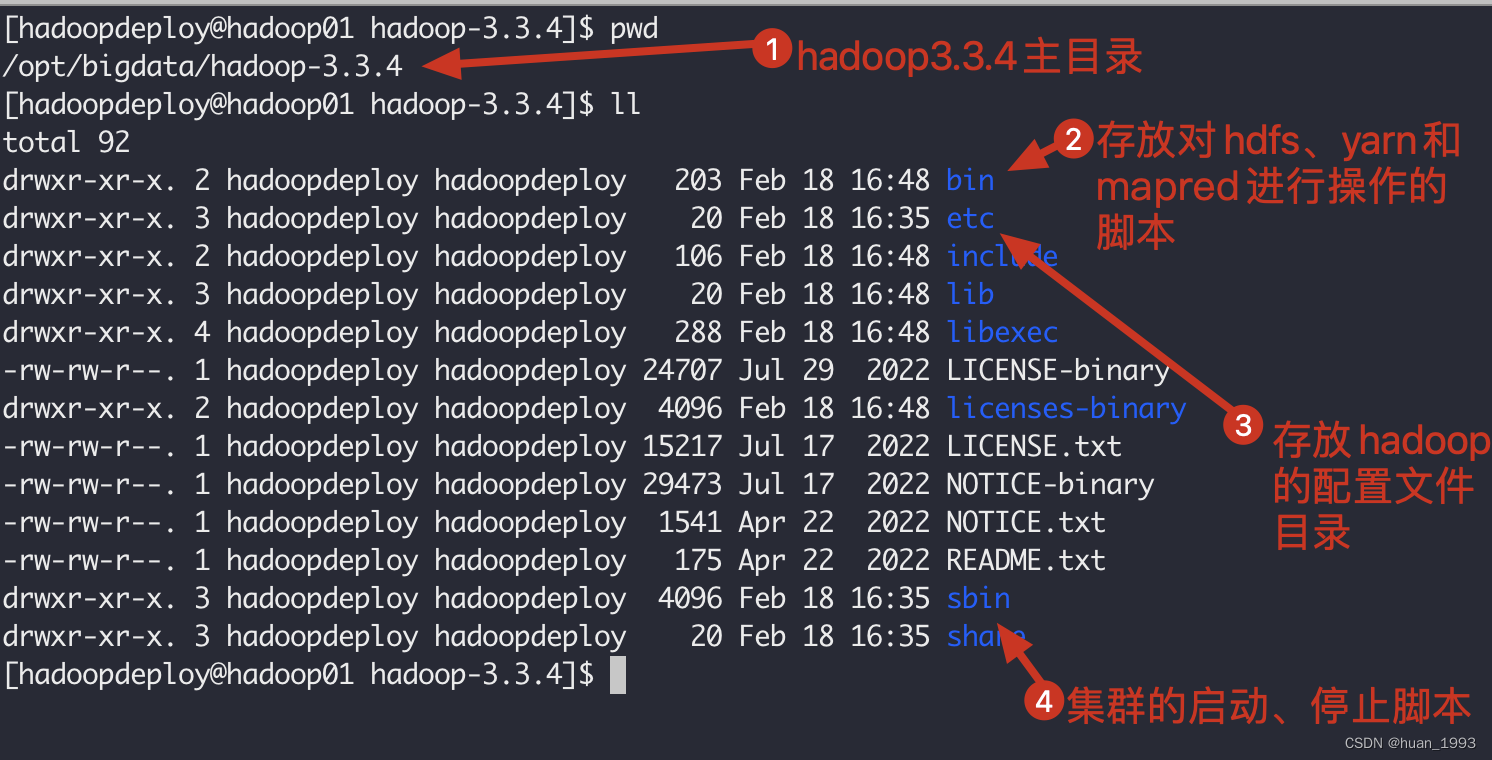

3.7.2 Download and extract Hadoop (on hadoop01)

# Enter the directory

[hadoopdeploy@hadoop01 ~]$ cd /opt/bigdata/

# Download

[hadoopdeploy@hadoop01 bigdata]$ wget https://archive.apache.org/dist/hadoop/common/hadoop-3.3.4/hadoop-3.3.4.tar.gz

# Extract, then delete the archive

[hadoopdeploy@hadoop01 bigdata]$ tar -zxvf hadoop-3.3.4.tar.gz && rm -rvf hadoop-3.3.4.tar.gz
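Apache publishes a .sha512 file next to each release archive, so the download can be checked before it is extracted. The helper below is a sketch, not part of the original steps: `verify_sha512` is a hypothetical name, and the expected digest is assumed to be copied by hand from the published hadoop-3.3.4.tar.gz.sha512 file.

```shell
# Compare a file's SHA-512 digest with an expected hex digest.
# The expected value must be copied by hand from the published .sha512 file.
verify_sha512() {
  local file=$1 expected actual
  # Normalize both digests to lower case before comparing
  expected=$(printf '%s' "$2" | tr 'A-F' 'a-f')
  actual=$(sha512sum "$file" | awk '{print tolower($1)}')
  if [ "$actual" = "$expected" ]; then
    echo "checksum OK: $file"
  else
    echo "checksum MISMATCH: $file" >&2
    return 1
  fi
}

# Example (run before extracting and deleting the archive):
# verify_sha512 hadoop-3.3.4.tar.gz "<digest from hadoop-3.3.4.tar.gz.sha512>"
```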

3.7.3 Set the Hadoop environment variables (on hadoop01)

# Enter the Hadoop directory

[hadoopdeploy@hadoop01 hadoop-3.3.4]$ cd /opt/bigdata/hadoop-3.3.4/

# Switch to the root user

[hadoopdeploy@hadoop01 hadoop-3.3.4]$ su - root

Password:

Last login: Sun Feb 19 13:06:41 CST 2023 on pts/0

[root@hadoop01 ~]# vim /etc/profile

# Check the Hadoop environment variable settings

[root@hadoop01 ~]# tail -n 3 /etc/profile

# Hadoop settings

export HADOOP_HOME=/opt/bigdata/hadoop-3.3.4/

export PATH=${HADOOP_HOME}/bin:${HADOOP_HOME}/sbin:$PATH

# Apply the environment variables

[root@hadoop01 ~]# source /etc/profile
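An alternative to editing /etc/profile by hand is to write the two export lines to a separate script, which is easier to repeat on the other nodes later. This is a sketch under assumptions: `write_hadoop_profile` is a hypothetical helper, and /etc/profile.d/hadoop.sh is an assumed file name (login shells on CentOS source the *.sh files in /etc/profile.d).

```shell
# Write the Hadoop environment settings to a standalone profile script.
# write_hadoop_profile is a hypothetical helper; the target path is up to the caller.
write_hadoop_profile() {
  local target=$1 home=$2
  cat > "$target" <<EOF
# Hadoop settings
export HADOOP_HOME=$home
export PATH=\${HADOOP_HOME}/bin:\${HADOOP_HOME}/sbin:\$PATH
EOF
}

# Example (as root, on every node):
# write_hadoop_profile /etc/profile.d/hadoop.sh /opt/bigdata/hadoop-3.3.4
# source /etc/profile.d/hadoop.sh && hadoop version
```

If `hadoop version` prints the 3.3.4 banner, both the PATH entry and HADOOP_HOME are in effect for the current shell.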

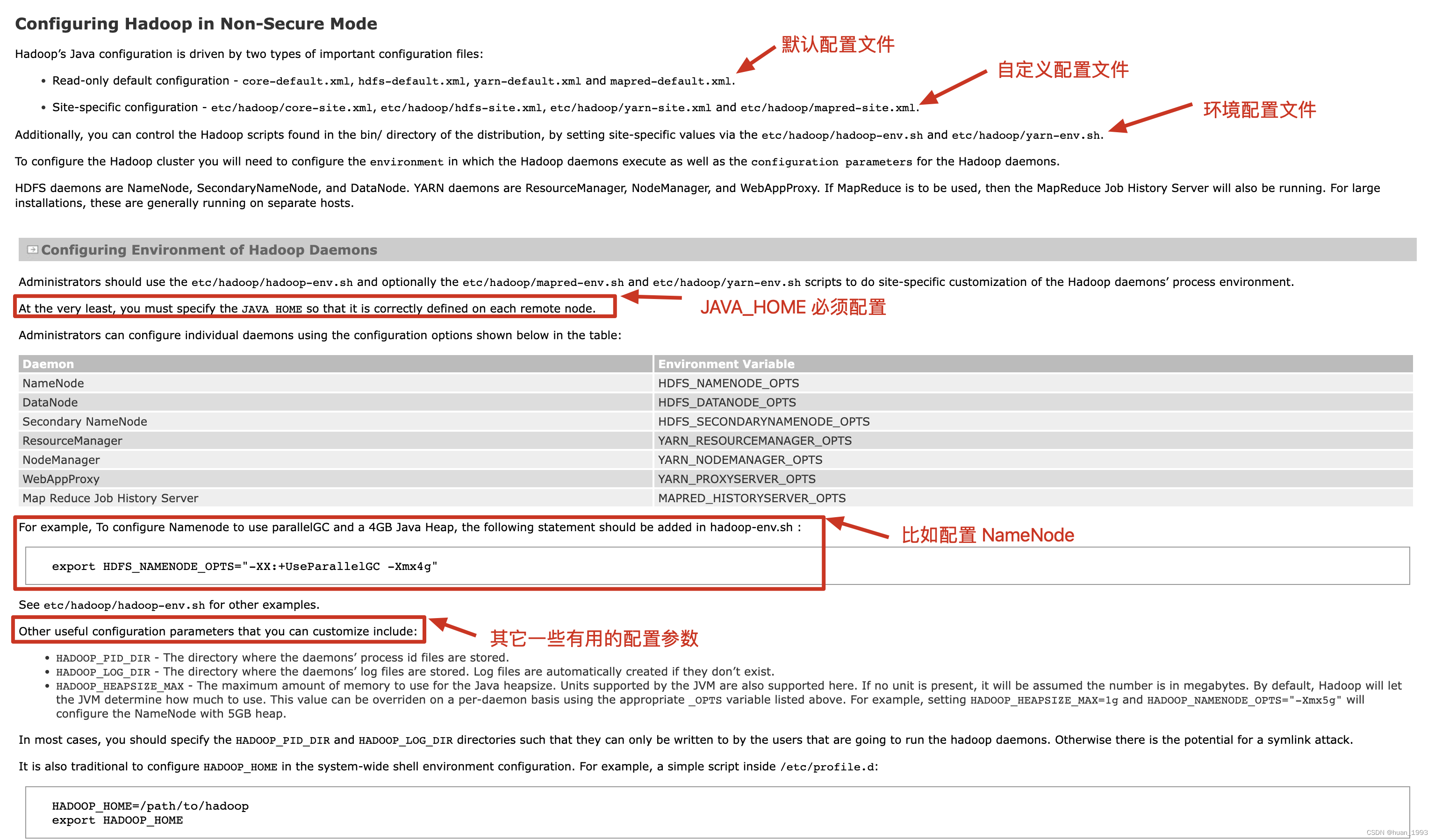

3.7.4 Types of Hadoop configuration files (on hadoop01)

Hadoop configuration files fall into roughly 3 categories.

- Read-only default configuration files: core-default.xml, hdfs-default.xml, yarn-default.xml and mapred-default.xml.
- Site-specific configuration files: etc/hadoop/core-site.xml, etc/hadoop/hdfs-site.xml, etc/hadoop/yarn-site.xml and etc/hadoop/mapred-site.xml, which override the defaults.
- Environment configuration files: etc/hadoop/hadoop-env.sh and optionally etc/hadoop/mapred-env.sh and etc/hadoop/yarn-env.sh, used for example to set NameNode startup options such as HDFS_NAMENODE_OPTS.

3.7.5 Configure hadoop-env.sh (on hadoop01)

# Switch to the hadoopdeploy user

[root@hadoop01 ~]# su - hadoopdeploy

Last login: Sun Feb 19 14:22:50 CST 2023 on pts/0

# Enter the Hadoop configuration directory

[hadoopdeploy@hadoop01 ~]$ cd /opt/bigdata/hadoop-3.3.4/etc/hadoop/

[hadoopdeploy@hadoop01 hadoop]$ vim hadoop-env.sh

# Add the following

export JAVA_HOME=/usr/local/jdk8

export HDFS_NAMENODE_USER=hadoopdeploy

export HDFS_DATANODE_USER=hadoopdeploy

export HDFS_SECONDARYNAMENODE_USER=hadoopdeploy

export YARN_RESOURCEMANAGER_USER=hadoopdeploy

export YARN_NODEMANAGER_USER=hadoopdeploy

3.7.6 Configure core-site.xml (on hadoop01) (core configuration file)

Default configuration reference: https://hadoop.apache.org/docs/current/hadoop-project-dist/hadoop-common/core-default.xml

vim /opt/bigdata/hadoop-3.3.4/etc/hadoop/core-site.xml

<configuration>

<!-- Address of the NameNode -->

<property>

<name>fs.defaultFS</name>

<value>hdfs://hadoop01:8020</value>

</property>

<!-- Base directory for Hadoop data -->

<property>

<name>hadoop.tmp.dir</name>

<value>/opt/bigdata/hadoop-3.3.4/data</value>

</property>

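<!-- Static user for the HDFS web UI, set to hadoopdeploy. Without it the web UI acts as the default user dr.who, and operations such as deleting a file fail with a permission error -->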

<property>

<name>hadoop.http.staticuser.user</name>

<value>hadoopdeploy</value>

</property>

<!-- How long deleted files stay in the trash, in minutes (1440 = 1 day) -->

<property>

<name>fs.trash.interval</name>

<value>1440</value>

</property>

</configuration>
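After editing a site file it can help to read a value back and confirm it is what was intended. Once the environment variables are in place, `hdfs getconf -confKey fs.defaultFS` reports the effective value. For a check that only needs the file itself, a small awk-based reader works as a rough sketch. It assumes one `<name>` and one `<value>` tag per line, as in the file above, and `get_site_property` is a hypothetical name.

```shell
# Print the value of one property from a Hadoop *-site.xml file.
# Assumes each <name> and <value> sits on its own line, as in the files in this article.
get_site_property() {
  local file=$1 key=$2
  awk -v key="$key" '
    /<name>/ { n = $0; gsub(/.*<name>|<\/name>.*/, "", n); found = (n == key) }
    /<value>/ && found { v = $0; gsub(/.*<value>|<\/value>.*/, "", v); print v; exit }
  ' "$file"
}

# Example:
# get_site_property /opt/bigdata/hadoop-3.3.4/etc/hadoop/core-site.xml fs.defaultFS
```

This is not a general XML parser; for anything beyond a quick sanity check, the `hdfs getconf` command is the safer choice.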

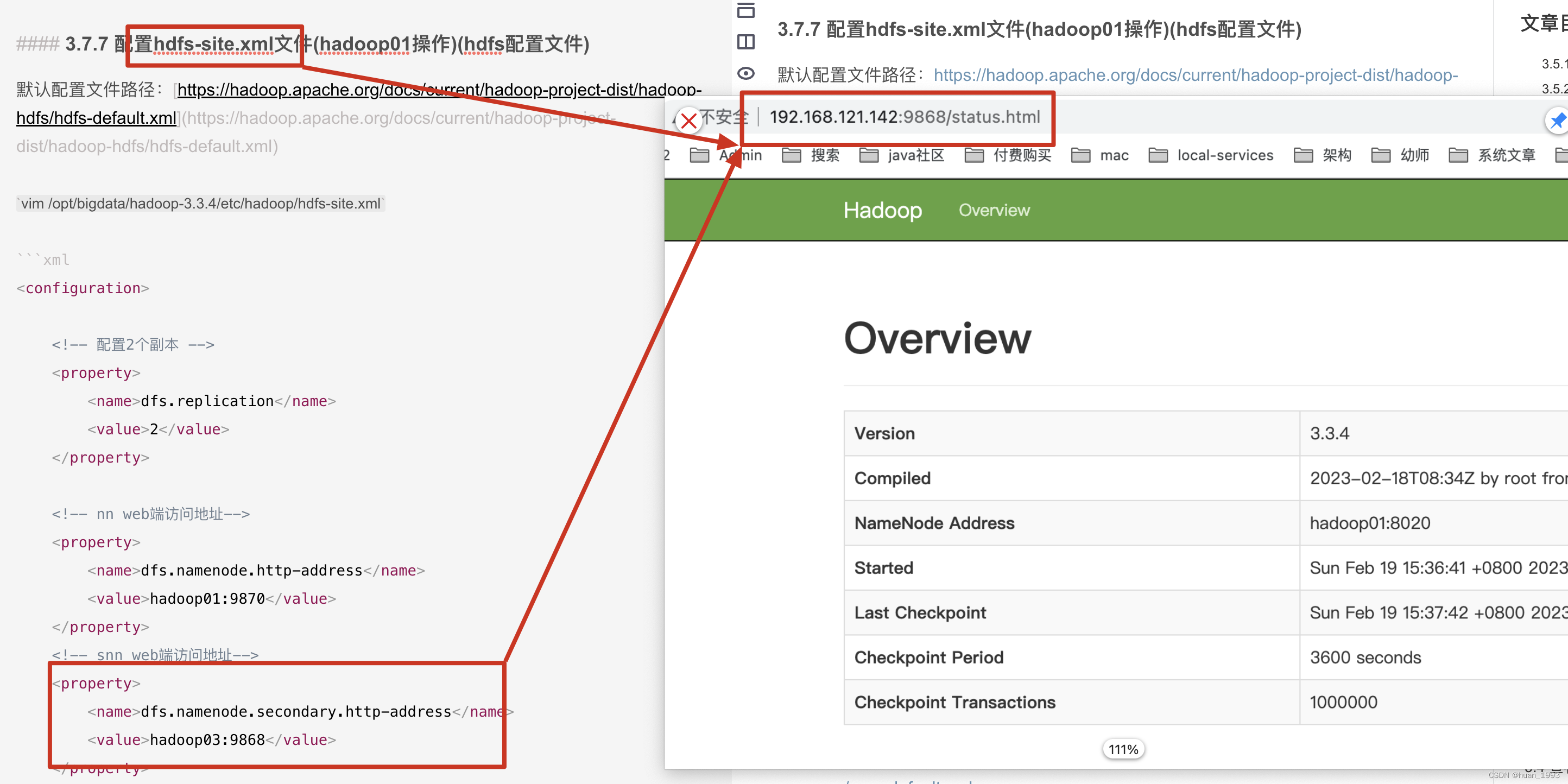

3.7.7 Configure hdfs-site.xml (on hadoop01) (HDFS configuration file)

Default configuration reference: https://hadoop.apache.org/docs/current/hadoop-project-dist/hadoop-hdfs/hdfs-default.xml

vim /opt/bigdata/hadoop-3.3.4/etc/hadoop/hdfs-site.xml

<configuration>

<!-- Keep 2 replicas of each block -->

<property>

<name>dfs.replication</name>

<value>2</value>

</property>

<!-- NameNode web UI address -->

<property>

<name>dfs.namenode.http-address</name>

<value>hadoop01:9870</value>

</property>

<!-- SecondaryNameNode web UI address -->

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>hadoop03:9868</value>

</property>

</configuration>

3.7.8 Configure yarn-site.xml (on hadoop01) (YARN configuration file)

Default configuration reference: https://hadoop.apache.org/docs/current/hadoop-yarn/hadoop-yarn-common/yarn-default.xml

vim /opt/bigdata/hadoop-3.3.4/etc/hadoop/yarn-site.xml

<configuration>

<!-- Site specific YARN configuration properties -->

<!-- Address of the ResourceManager -->

<property>

<name>yarn.resourcemanager.hostname</name>

<value>hadoop02</value>

</property>

<!-- Enable the shuffle auxiliary service that MapReduce needs -->

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<!-- Whether to enforce physical memory limits on containers -->

<property>

<name>yarn.nodemanager.pmem-check-enabled</name>

<value>false</value>

</property>

<!-- Whether to enforce virtual memory limits on containers -->

<property>

<name>yarn.nodemanager.vmem-check-enabled</name>

<value>false</value>

</property>

<!-- URL of the log server; the JobHistoryServer runs on hadoop01 (see mapred-site.xml) -->

<property>

<name>yarn.log.server.url</name>

<value>http://hadoop01:19888/jobhistory/logs</value>

</property>

<!-- Enable log aggregation -->

<property>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property>

<!-- Keep aggregated logs for 7 days -->

<property>

<name>yarn.log-aggregation.retain-seconds</name>

<value>604800</value>

</property>

</configuration>
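The time-based values in these files use different units: yarn.log-aggregation.retain-seconds is in seconds, while fs.trash.interval in core-site.xml is in minutes. A quick arithmetic check of the two values used in this article, with hypothetical helper names:

```shell
# Convert a number of days into the unit each property expects.
days_to_seconds() { echo $(( $1 * 24 * 60 * 60 )); }  # yarn.log-aggregation.retain-seconds
days_to_minutes() { echo $(( $1 * 24 * 60 )); }       # fs.trash.interval

days_to_seconds 7   # prints 604800
days_to_minutes 1   # prints 1440
```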

3.7.9 Configure mapred-site.xml (on hadoop01) (MapReduce configuration file)

vim /opt/bigdata/hadoop-3.3.4/etc/hadoop/mapred-site.xml

<configuration>

<!-- Default execution mode for MapReduce jobs: yarn for cluster mode, local for local mode -->

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<!-- JobHistoryServer address -->

<property>

<name>mapreduce.jobhistory.address</name>

<value>hadoop01:10020</value>

</property>

<!-- JobHistoryServer web UI address -->

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>hadoop01:19888</value>

</property>

<property>

<name>yarn.app.mapreduce.am.env</name>

<value>HADOOP_MAPRED_HOME=${HADOOP_HOME}</value>

</property>

<property>

<name>mapreduce.map.env</name>

<value>HADOOP_MAPRED_HOME=${HADOOP_HOME}</value>

</property>

<property>

<name>mapreduce.reduce.env</name>

<value>HADOOP_MAPRED_HOME=${HADOOP_HOME}</value>

</property>

</configuration>

3.7.10 Configure the workers file (on hadoop01)

vim /opt/bigdata/hadoop-3.3.4/etc/hadoop/workers

hadoop01

hadoop02

hadoop03

The workers file must not contain extra spaces or blank lines.
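Stray whitespace is hard to spot in an editor, so a mechanical check can help. This is a minimal sketch; `check_workers_file` is a hypothetical name.

```shell
# Report lines in a workers file that are blank or carry leading/trailing whitespace.
# Returns 0 when the file is clean, 1 otherwise.
check_workers_file() {
  local file=$1
  # grep -n prints the offending lines with their line numbers
  if grep -nE '^[[:space:]]*$|^[[:space:]]|[[:space:]]$' "$file"; then
    echo "workers file has blank lines or stray whitespace (listed above)" >&2
    return 1
  fi
  echo "workers file looks clean"
}

# Example:
# check_workers_file /opt/bigdata/hadoop-3.3.4/etc/hadoop/workers
```

Because a carriage return counts as whitespace, the check also flags files saved with Windows line endings.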

3.7.11 Sync the Hadoop configuration to all 3 machines (on hadoop01)

1. Sync the Hadoop files

# Sync the Hadoop files

[hadoopdeploy@hadoop01 hadoop]$ scp -r /opt/bigdata/hadoop-3.3.4/ hadoopdeploy@hadoop02:/opt/bigdata/hadoop-3.3.4

[hadoopdeploy@hadoop01 hadoop]$ scp -r /opt/bigdata/hadoop-3.3.4/ hadoopdeploy@hadoop03:/opt/bigdata/hadoop-3.3.4
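With more than a couple of nodes, the copy is easier to repeat as a loop. The sketch below is an illustration rather than the author's method: `sync_hadoop` and the `DRY_RUN` switch are hypothetical. It copies into the parent directory /opt/bigdata/ so that a second run overwrites the existing tree instead of nesting a new copy inside it.

```shell
# Copy the Hadoop directory from this node to each listed host.
# With DRY_RUN=1 the scp commands are printed instead of executed.
sync_hadoop() {
  local src=/opt/bigdata/hadoop-3.3.4 host
  for host in "$@"; do
    if [ "${DRY_RUN:-0}" = "1" ]; then
      echo "scp -r $src hadoopdeploy@$host:/opt/bigdata/"
    else
      scp -r "$src" "hadoopdeploy@$host:/opt/bigdata/" || return 1
    fi
  done
}

# Example (as hadoopdeploy on hadoop01):
# DRY_RUN=1 sync_hadoop hadoop02 hadoop03   # preview the commands
# sync_hadoop hadoop02 hadoop03             # copy
```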

2. Set the Hadoop environment variables on hadoop02 and hadoop03

[hadoopdeploy@hadoop03 bigdata]$ su - root

Password:

Last login: Sun Feb 19 13:07:40 CST 2023 on pts/0

[root@hadoop03 ~]# vim /etc/profile

[root@hadoop03 ~]# tail -n 4 /etc/profile

# Hadoop settings

export HADOOP_HOME=/opt/bigdata/hadoop-3.3.4/

export PATH=${HADOOP_HOME}/bin:${HADOOP_HOME}/sbin:$PATH

[root@hadoop03 ~]# source /etc/profile

3. Start the cluster

3.1 Format the cluster

The first time the cluster is started, HDFS must be formatted. Run this on the NameNode node.

[hadoopdeploy@hadoop01 hadoop]$ hdfs namenode -format
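Formatting is a one-time step. Formatting again creates a new cluster ID, and DataNodes that still hold data from the earlier format will then refuse to register. A guard like the following can prevent an accidental second format. It is a sketch under assumptions: `namenode_is_formatted` is a hypothetical name, and the path relies on the hadoop.tmp.dir set in core-site.xml together with the default dfs.namenode.name.dir of ${hadoop.tmp.dir}/dfs/name.

```shell
# Return 0 if the NameNode metadata directory already holds a formatted namespace.
# A formatted NameNode directory contains current/VERSION.
namenode_is_formatted() {
  local name_dir=$1
  [ -f "$name_dir/current/VERSION" ]
}

# Example (as hadoopdeploy on hadoop01):
# NAME_DIR=/opt/bigdata/hadoop-3.3.4/data/dfs/name
# if namenode_is_formatted "$NAME_DIR"; then
#   echo "already formatted, skipping"
# else
#   hdfs namenode -format
# fi
```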

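3.2 Ways to start the cluster

There are 2 ways to start the cluster.

- Method 1: start the processes one by one on each machine, for example the NameNode, then a DataNode. This gives precise control over each process.
- Method 2: with passwordless login between the machines and the workers file configured, start everything with the provided scripts.

3.2.1 Start processes one by one

# HDFS cluster: run each command on the node that hosts that daemon

[hadoopdeploy@hadoop01 hadoop]$ hdfs --daemon start namenode

[hadoopdeploy@hadoop01 hadoop]$ hdfs --daemon start datanode

[hadoopdeploy@hadoop03 hadoop]$ hdfs --daemon start secondarynamenode

# YARN cluster: same rule; proxyserver is optional and not part of the plan above

[hadoopdeploy@hadoop02 hadoop]$ yarn --daemon start resourcemanager

[hadoopdeploy@hadoop02 hadoop]$ yarn --daemon start nodemanager

[hadoopdeploy@hadoop02 hadoop]$ yarn --daemon start proxyserver

3.2.2 Start everything with scripts

- start-dfs.sh starts all processes of the HDFS cluster.
- start-yarn.sh starts all processes of the YARN cluster.
- start-all.sh starts all processes of both the HDFS and YARN clusters.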

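3.3 Start the cluster

3.3.1 Start the HDFS cluster

Run this on the NameNode machine.

# This script starts the NameNode, DataNodes, and SecondaryNameNode of the cluster

[hadoopdeploy@hadoop01 hadoop]$ start-dfs.sh

3.3.2 Start the YARN cluster

Run this on the ResourceManager machine.

# This script starts the ResourceManager and NodeManager processes of the cluster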

[hadoopdeploy@hadoop02 hadoop]$ start-yarn.sh

3.3.3 Start the JobHistoryServer

[hadoopdeploy@hadoop01 hadoop]$ mapred --daemon start historyserver

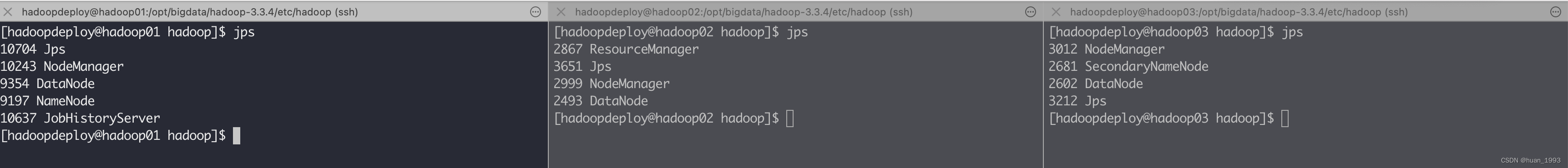

3.4 Check that the services running on each machine match the plan

The running services match the plan.
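One way to make this comparison repeatable is to feed the output of `jps` (shipped with the JDK) into a small filter. The sketch below assumes `jps` is on the PATH of the hadoopdeploy user; `missing_daemons` is a hypothetical helper name, and the expected daemon lists come from the plan in section 2.

```shell
# Read `jps` output on stdin and print each expected daemon that is not running.
# Returns 0 when every expected daemon is present.
missing_daemons() {
  local running name missing=0
  # jps prints "<pid> <class name>"; keep only the class names
  running=$(awk '{print $2}')
  for name in "$@"; do
    # -x requires a whole-line match, so NameNode does not match SecondaryNameNode
    if ! printf '%s\n' "$running" | grep -qx -- "$name"; then
      echo "missing: $name"
      missing=1
    fi
  done
  return "$missing"
}

# Example, following the plan in section 2:
# on hadoop01: jps | missing_daemons NameNode DataNode NodeManager JobHistoryServer
# on hadoop02: jps | missing_daemons DataNode ResourceManager NodeManager
# on hadoop03: jps | missing_daemons DataNode SecondaryNameNode NodeManager
```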

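3.5 Access the web pages

3.5.1 Access the NameNode UI (HDFS cluster)

The NameNode UI is served at http://hadoop01:9870, the dfs.namenode.http-address configured above.

If uploading files with the hadoop fs command works, and the web UI can create directories but reports an error when uploading files, the machine running the browser cannot resolve the cluster hostnames. Add the IP-to-hostname mappings of the Hadoop machines to the hosts file on that machine.

3.5.2 Access the SecondaryNameNode UI

The SecondaryNameNode UI is served at http://hadoop03:9868, the dfs.namenode.secondary.http-address configured above.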

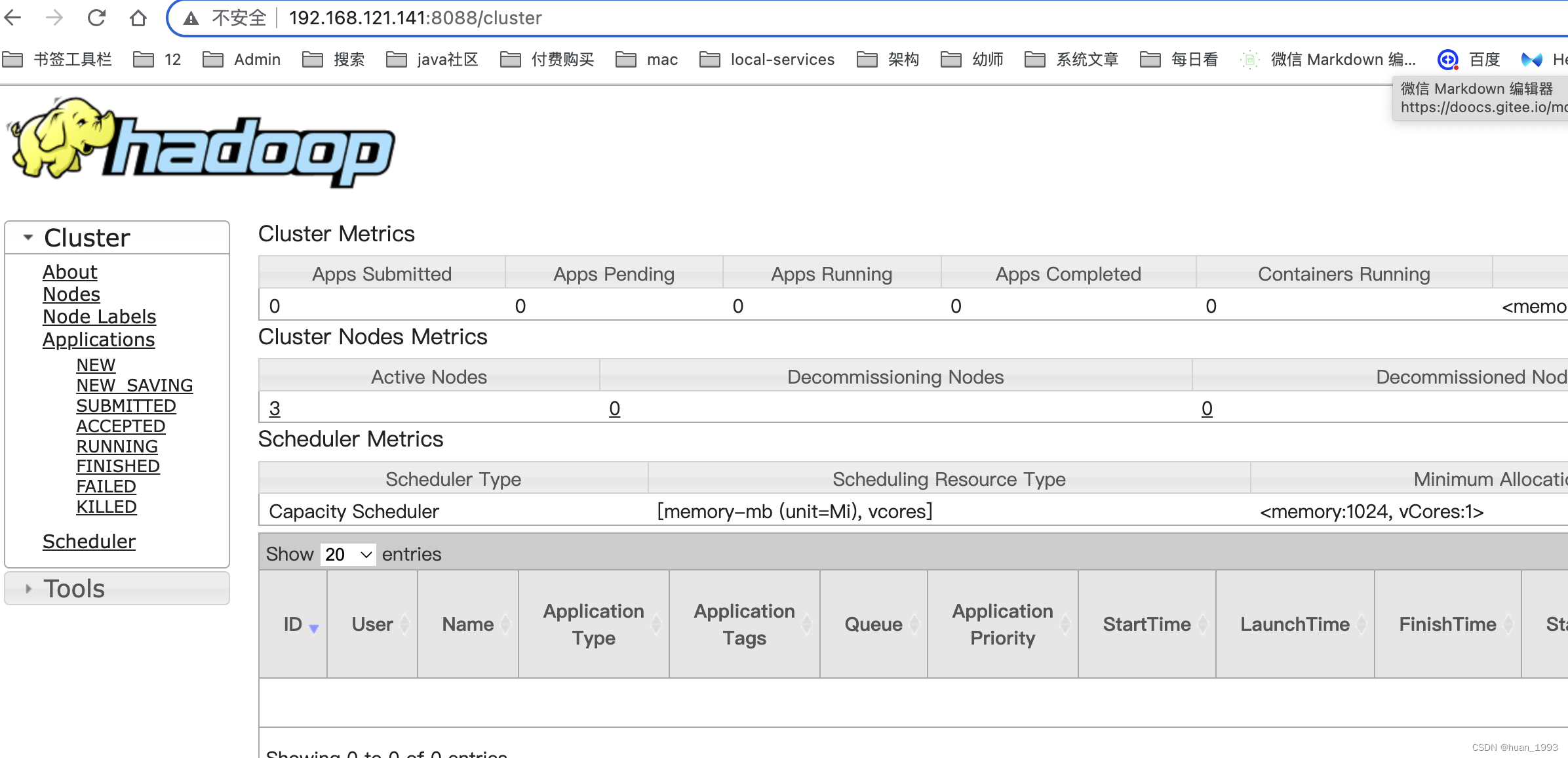

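3.5.3 View the ResourceManager UI (YARN cluster)

The ResourceManager UI is served at http://hadoop02:8088 (8088 is the default web UI port).

3.5.4 Access the JobHistory UI

The JobHistory UI is served at http://hadoop01:19888, the mapreduce.jobhistory.webapp.address configured above.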

4. References

1. https://cwiki.apache.org/confluence/display/HADOOP/Hadoop+Java+Versions

2. https://hadoop.apache.org/docs/current/hadoop-project-dist/hadoop-common/ClusterSetup.html

This article is from cnblogs (博客園). Author: huan1993. When reposting, please cite the original link: https://www.cnblogs.com/huan1993/p/17140572.html