Longhorn + K8S + KubeSphere Cloud Data Management: Hands-On Incremental Snapshots, Backup, and Restore of the Sentry PostgreSQL Data Volume

2023-01-22 15:00:21

Cloud lab environment setup

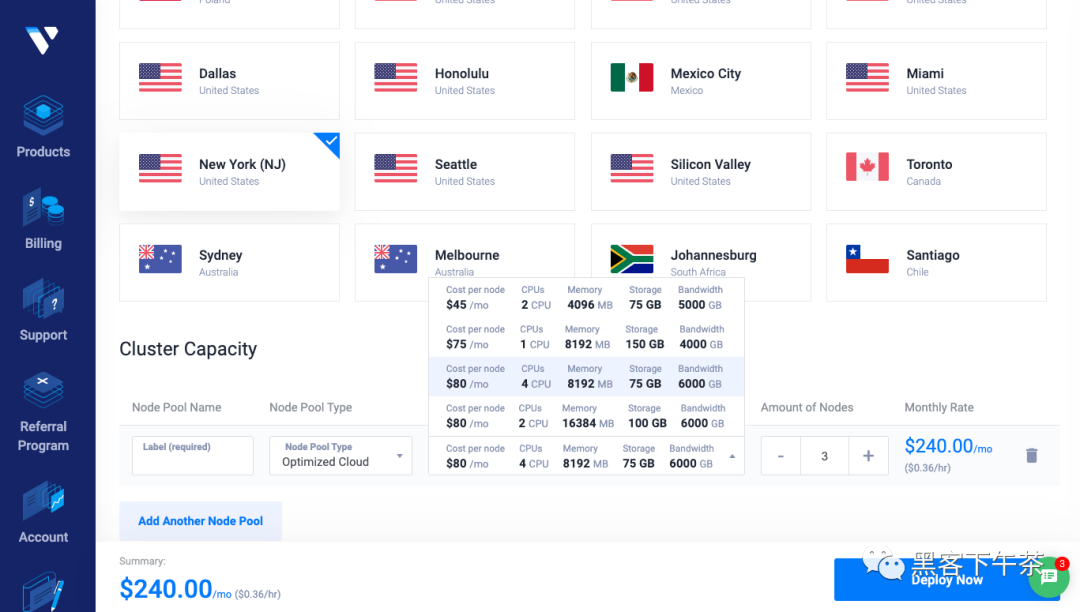

VKE K8S Cluster

A managed cluster hosted on Vultr.

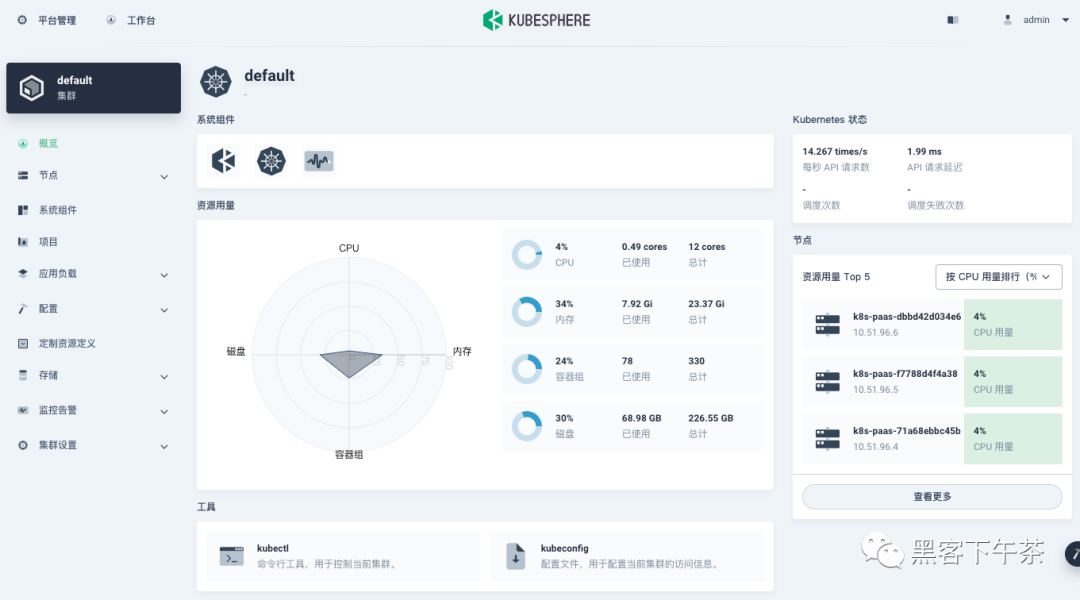

Three worker nodes, as reported by kubectl get nodes:

    k8s-paas-71a68ebbc45b   Ready   <none>   12d   v1.23.14
    k8s-paas-dbbd42d034e6   Ready   <none>   12d   v1.23.14
    k8s-paas-f7788d4f4a38   Ready   <none>   12d   v1.23.14

KubeSphere v3.3.1 for visual cluster management

A full-stack Kubernetes container cloud PaaS solution.

Longhorn 1.4

Cloud-native distributed block storage for Kubernetes.

Sentry Helm Charts

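Unofficial Kubernetes Helm charts. For high-throughput deployments you need to build out dedicated microservice, middleware, and edge storage clusters.

    helm repo add sentry https://sentry-kubernetes.github.io/charts
    kubectl create ns sentry
    helm install sentry sentry/sentry -f values.yaml -n sentry
    # Alternative without a custom values file:
    # helm install sentry sentry/sentry -n sentry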

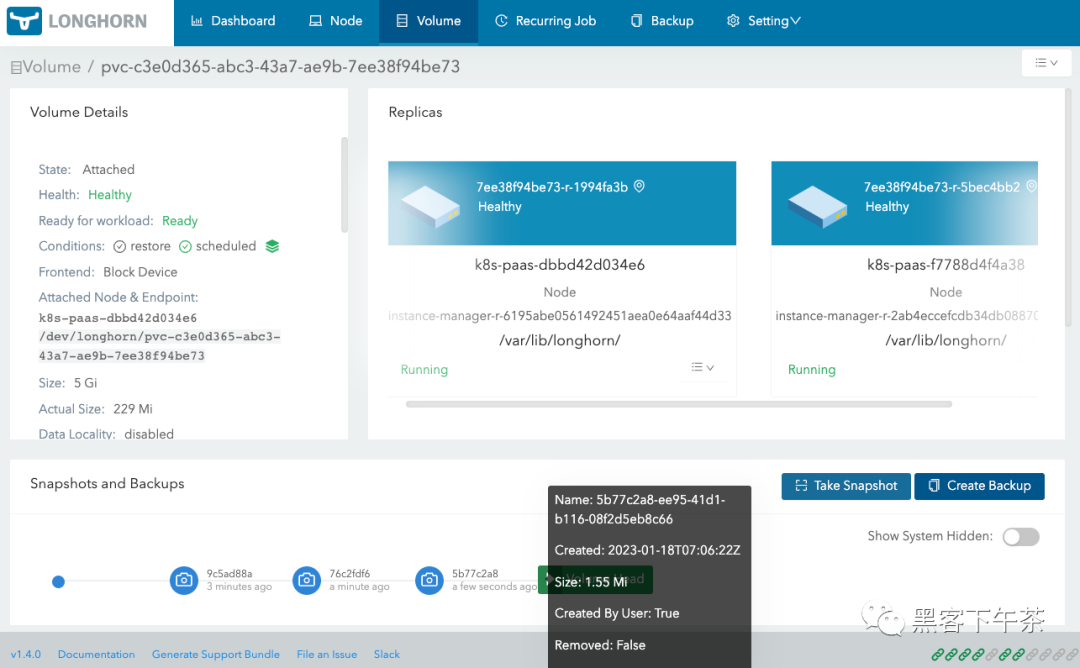

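Creating snapshots of the Sentry PostgreSQL data volume in different states

Create snapshots

Here we create three snapshots of the PostgreSQL data volume, each corresponding to a different state of the Sentry dashboard.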

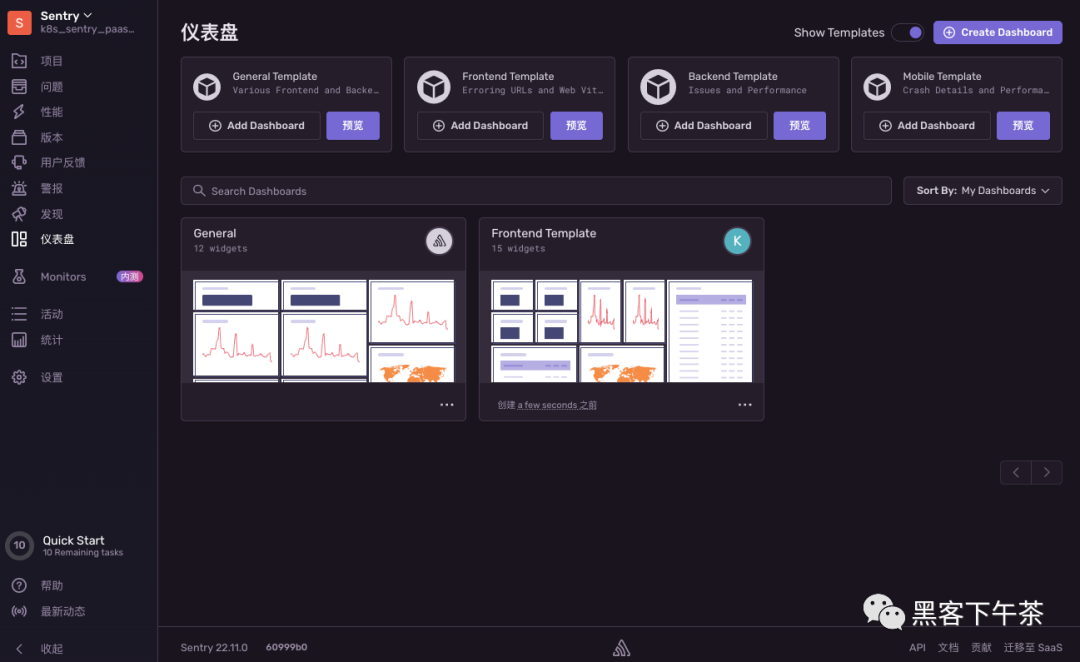

Sentry dashboard, state 1

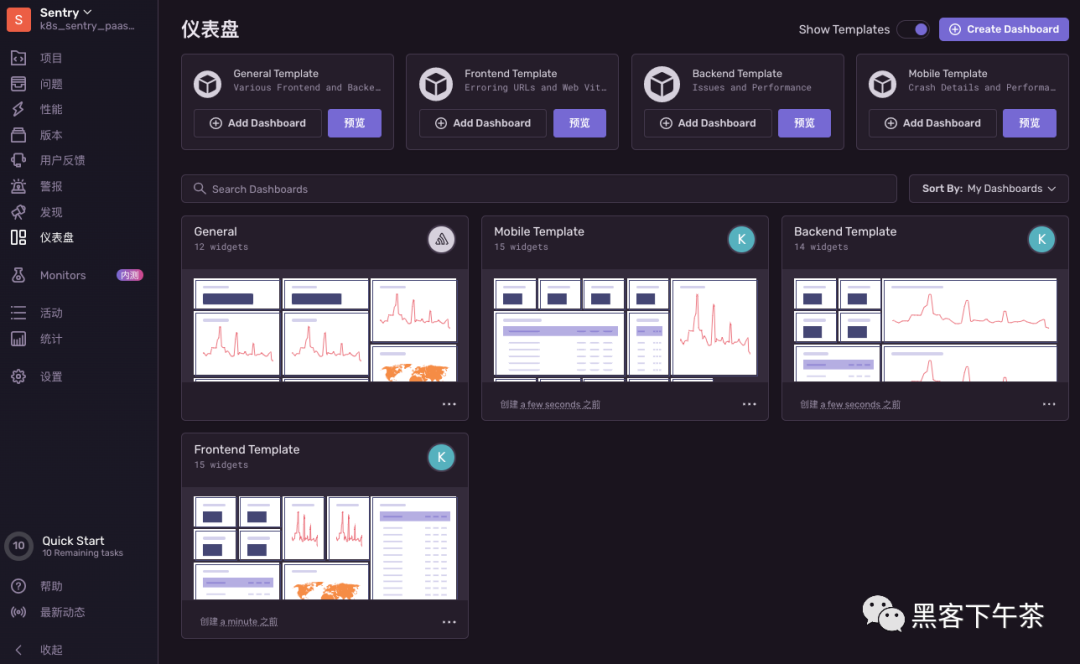

Sentry dashboard, state 2

Sentry dashboard, state 3

Create one snapshot for each of the three states

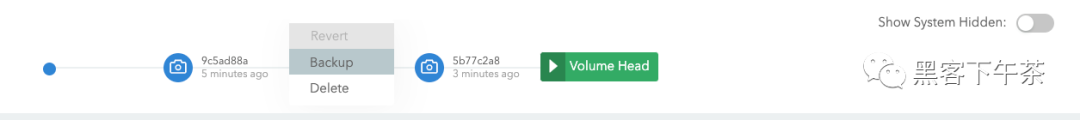

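Create a backup

Configure the backup target server

The backup target is the endpoint used to access the backup store. Longhorn supports servers that speak NFS or S3.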
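As a rough illustration of what such an endpoint looks like, the sketch below builds backup target URLs in the two forms Longhorn accepts, `s3://<bucket>@<region>/<path>` and `nfs://<server>:<export path>`. The bucket, region, host, and path values are placeholders rather than the ones used in this lab, and the helper function names are made up for this example.

```shell
#!/bin/sh
# Build a Longhorn backup target URL for an S3 bucket.
# $1 = bucket, $2 = region, $3 = path inside the bucket (all placeholders)
s3_backup_target() {
  printf 's3://%s@%s/%s\n' "$1" "$2" "$3"
}

# Build a Longhorn backup target URL for an NFS export.
# $1 = NFS server host, $2 = absolute export path (both placeholders)
nfs_backup_target() {
  printf 'nfs://%s:%s\n' "$1" "$2"
}

# Hypothetical values, for illustration only.
s3_backup_target sentry-backups us-east-1 longhorn
nfs_backup_target nfs.example.internal /opt/backupstore
```

Either string goes into the backup target field in the Longhorn settings. For an S3 target, Longhorn also expects a credential Secret in the longhorn-system namespace.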

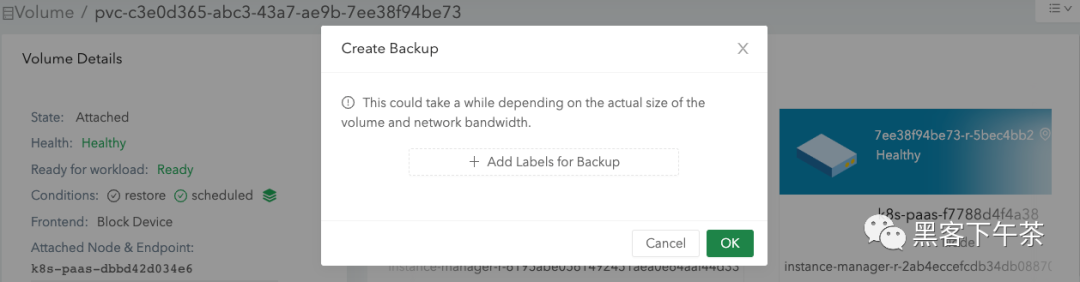

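Create a backup from snapshot 2

View the backup volume

How long the backup takes depends on the size of your volume and your network bandwidth.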

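Example: restoring volumes for Kubernetes StatefulSets with Longhorn

Official documentation: https://longhorn.io/docs/1.4.0/snapshots-and-backups/backup-and-restore/restore-statefulset/

Longhorn supports restoring backups. One use case is restoring the data of a Kubernetes StatefulSet, which requires restoring one volume for each replica that was backed up.

To restore, follow the instructions below. The example uses a StatefulSet with two replicas and one volume attached to each Pod.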

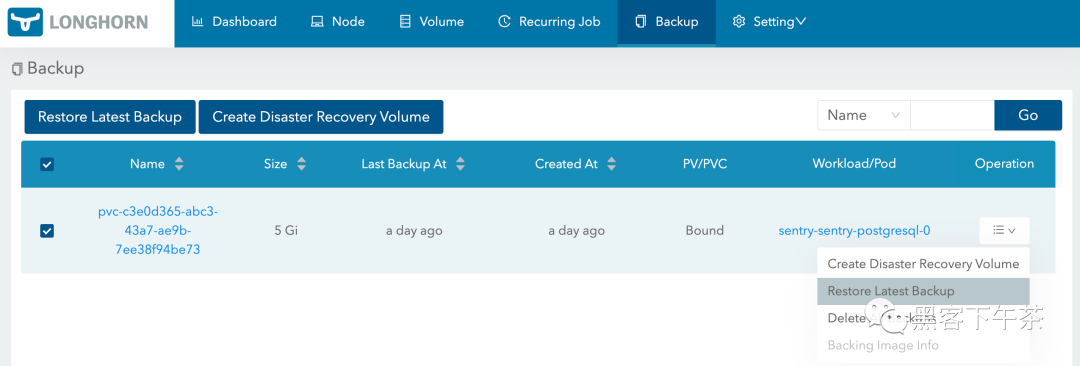

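- In your web browser, connect to the Longhorn UI. Under the Backup tab, select the name of the StatefulSet volume. Click the dropdown menu of the volume entry and restore it. Give the restored volume a name that is easy to reference later when you create the Persistent Volumes.

- Repeat this step for each volume that needs to be restored.

- For example, when restoring a StatefulSet with two replicas whose volumes were named pvc-01a and pvc-02b, the restore could look like this:

| Backup Name | Restored Volume |
|---|---|
| pvc-01a | statefulset-vol-0 |
| pvc-02b | statefulset-vol-1 |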

- In Kubernetes, create a Persistent Volume for each Longhorn volume that was created. Give the Persistent Volumes names that are easy to reference later from the Persistent Volume Claims. In the manifests below you must replace the storage capacity, numberOfReplicas, storageClassName, and volumeHandle. This example references statefulset-vol-0 and statefulset-vol-1 in Longhorn and uses longhorn as the storageClassName.

      apiVersion: v1
      kind: PersistentVolume
      metadata:
        name: statefulset-vol-0
      spec:
        capacity:
          storage: <size> # must match size of Longhorn volume
        volumeMode: Filesystem
        accessModes:
          - ReadWriteOnce
        persistentVolumeReclaimPolicy: Delete
        csi:
          driver: driver.longhorn.io # driver must match this
          fsType: ext4
          volumeAttributes:
            numberOfReplicas: <replicas> # must match Longhorn volume value; quote it as a string, e.g. '2'
            staleReplicaTimeout: '30' # in minutes
          volumeHandle: statefulset-vol-0 # must match volume name from Longhorn
        storageClassName: longhorn # must be same name that we will use later
      ---
      apiVersion: v1
      kind: PersistentVolume
      metadata:
        name: statefulset-vol-1
      spec:
        capacity:
          storage: <size> # must match size of Longhorn volume
        volumeMode: Filesystem
        accessModes:
          - ReadWriteOnce
        persistentVolumeReclaimPolicy: Delete
        csi:
          driver: driver.longhorn.io # driver must match this
          fsType: ext4
          volumeAttributes:
            numberOfReplicas: <replicas> # must match Longhorn volume value; quote it as a string, e.g. '2'
            staleReplicaTimeout: '30' # in minutes
          volumeHandle: statefulset-vol-1 # must match volume name from Longhorn
        storageClassName: longhorn # must be same name that we will use later
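The two Persistent Volume manifests differ only in the volume name, so they can be generated instead of copied by hand. The following is a minimal sketch under that assumption: the function name `longhorn_pv_manifest` is made up for this example, and the size `2Gi` and replica count `2` are sample values that must be replaced with those of your restored Longhorn volumes.

```shell
#!/bin/sh
# Print a PersistentVolume manifest for one restored Longhorn volume.
# $1 = Longhorn volume name (also used as the PV name)
# $2 = volume size, e.g. 2Gi
# $3 = number of replicas configured on the Longhorn volume
longhorn_pv_manifest() {
  cat <<EOF
apiVersion: v1
kind: PersistentVolume
metadata:
  name: $1
spec:
  capacity:
    storage: $2
  volumeMode: Filesystem
  accessModes:
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: Delete
  csi:
    driver: driver.longhorn.io
    fsType: ext4
    volumeAttributes:
      numberOfReplicas: '$3'
      staleReplicaTimeout: '30'
    volumeHandle: $1
  storageClassName: longhorn
EOF
}

# Emit both manifests as one multi-document YAML stream (sample values).
longhorn_pv_manifest statefulset-vol-0 2Gi 2
echo '---'
longhorn_pv_manifest statefulset-vol-1 2Gi 2
```

The output can be reviewed first and then piped into `kubectl apply -f -`.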

- In the namespace where the StatefulSet will be deployed, create a Persistent Volume Claim for each Persistent Volume. The name of each Persistent Volume Claim must follow this naming scheme:

      <name of Volume Claim Template>-<name of StatefulSet>-<index>

StatefulSet Pods are zero-indexed. In this example, the Volume Claim Template is named data, the StatefulSet is named webapp, and there are two replicas, with indexes 0 and 1.

      apiVersion: v1
      kind: PersistentVolumeClaim
      metadata:
        name: data-webapp-0
      spec:
        accessModes:
          - ReadWriteOnce
        resources:
          requests:
            storage: 2Gi # must match size from earlier
        storageClassName: longhorn # must match name from earlier
        volumeName: statefulset-vol-0 # must reference Persistent Volume
      ---
      apiVersion: v1
      kind: PersistentVolumeClaim
      metadata:
        name: data-webapp-1
      spec:
        accessModes:
          - ReadWriteOnce
        resources:
          requests:
            storage: 2Gi # must match size from earlier
        storageClassName: longhorn # must match name from earlier
        volumeName: statefulset-vol-1 # must reference Persistent Volume
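To make the naming scheme concrete, here is a small sketch that prints the claim names a StatefulSet will look for, given the Volume Claim Template name, the StatefulSet name, and the replica count. The function names are hypothetical helpers written for this example.

```shell
#!/bin/sh
# Compose one claim name:
# <volume claim template>-<StatefulSet name>-<ordinal>
statefulset_pvc_name() {
  printf '%s-%s-%s\n' "$1" "$2" "$3"
}

# Print the claim names for every replica of a StatefulSet.
# $1 = volume claim template name, $2 = StatefulSet name, $3 = replica count
statefulset_pvc_names() {
  template=$1
  sts=$2
  replicas=$3
  i=0
  # Ordinals are zero-based, so N replicas use indexes 0..N-1.
  while [ "$i" -lt "$replicas" ]; do
    statefulset_pvc_name "$template" "$sts" "$i"
    i=$((i + 1))
  done
}

# Values from the example: template "data", StatefulSet "webapp", 2 replicas.
statefulset_pvc_names data webapp 2
```

With the example values this prints data-webapp-0 and data-webapp-1, matching the two claims defined in the manifests.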

- Create the StatefulSet:

      apiVersion: apps/v1 # apps/v1beta2 was removed in Kubernetes 1.16
      kind: StatefulSet
      metadata:
        name: webapp # match this with the PersistentVolumeClaim naming scheme
      spec:
        selector:
          matchLabels:
            app: nginx # has to match .spec.template.metadata.labels
        serviceName: "nginx"
        replicas: 2 # by default is 1
        template:
          metadata:
            labels:
              app: nginx # has to match .spec.selector.matchLabels
          spec:
            terminationGracePeriodSeconds: 10
            containers:
              - name: nginx
                image: k8s.gcr.io/nginx-slim:0.8
                ports:
                  - containerPort: 80
                    name: web
                volumeMounts:
                  - name: data
                    mountPath: /usr/share/nginx/html
        volumeClaimTemplates:
          - metadata:
              name: data # match this with the PersistentVolumeClaim naming scheme
            spec:
              accessModes: [ "ReadWriteOnce" ]
              storageClassName: longhorn # must match name from earlier
              resources:
                requests:
                  storage: 2Gi # must match size from earlier

Result: the restored data should now be accessible from inside the StatefulSet Pods.
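One way to confirm this is to list the claims and look at the mount path inside each Pod. The sketch below only prints the commands so they can be reviewed before running them; the function name is made up for this example, and the StatefulSet name, replica count, and mount path are the ones from the webapp example above.

```shell
#!/bin/sh
# Print the kubectl commands that check a restored StatefulSet.
# $1 = StatefulSet name, $2 = replica count, $3 = mount path in the container
print_restore_checks() {
  sts=$1
  replicas=$2
  mount_path=$3
  # The claims should show STATUS Bound once they match their volumes.
  echo "kubectl get pvc"
  i=0
  while [ "$i" -lt "$replicas" ]; do
    # Pod names follow <StatefulSet name>-<ordinal>.
    echo "kubectl exec ${sts}-${i} -- ls -la ${mount_path}"
    i=$((i + 1))
  done
}

print_restore_checks webapp 2 /usr/share/nginx/html
```

Piping the output into `sh` runs the checks against the current kubectl context and namespace.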

Restoring the Sentry PostgreSQL data volume through the Longhorn UI

Uninstall everything in the sentry namespace, then delete the namespace

    # Delete the release
    helm uninstall sentry -n sentry
    # Delete the namespace
    kubectl delete ns sentry

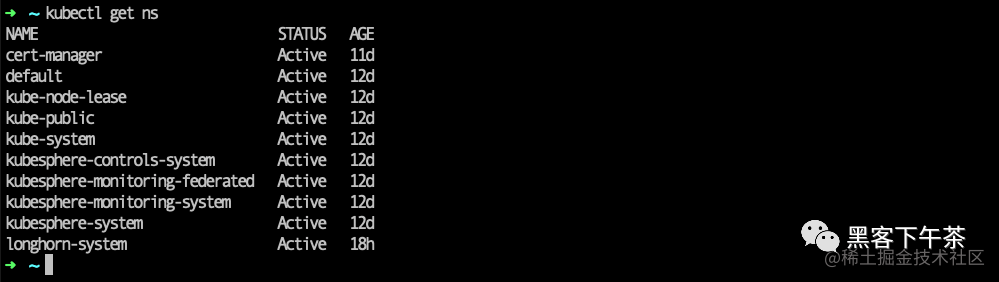

Check the current namespaces

Run kubectl get ns; the sentry namespace is gone.

Restore the PostgreSQL data volume from the backup server

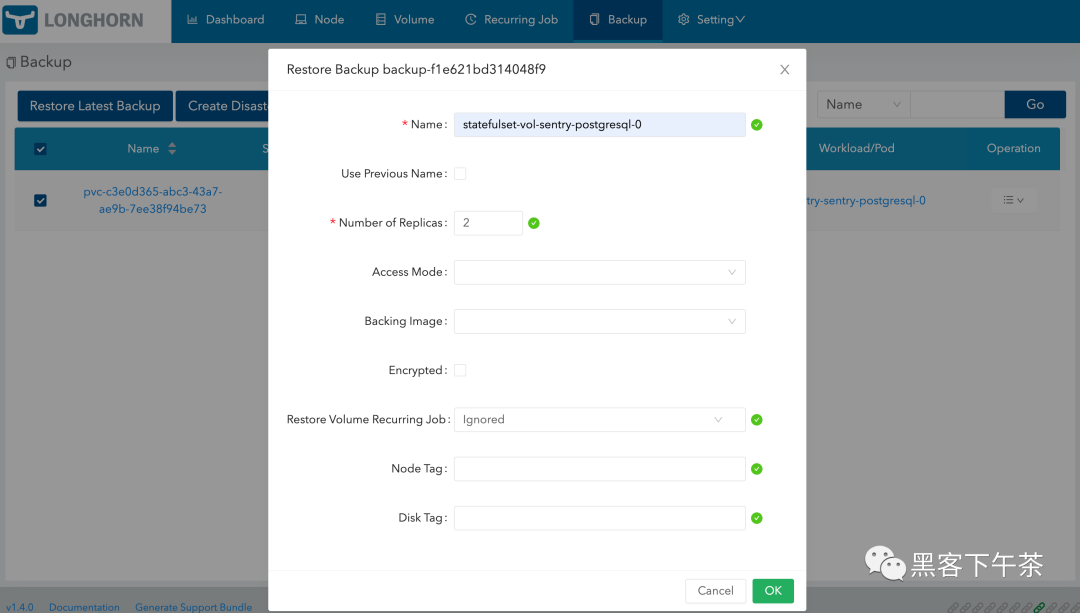

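Restore the latest backup

Configure multiple volume replicas across different machines for high availability

- Set the volume name to statefulset-vol-sentry-postgresql-0.

- Set the number of replicas to at least 2. The replicas are automatically scheduled onto different nodes, which keeps the volume highly available.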

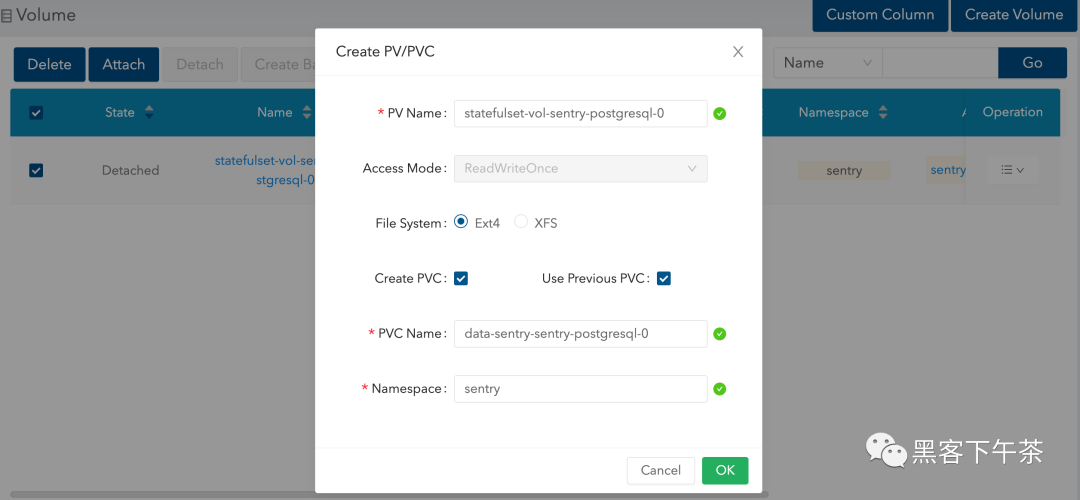

Create a PV/PVC for the restored Longhorn volume

Note: the sentry namespace has to be created again first.

    kubectl create ns sentry
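For readers who prefer a manifest over the UI, the claim can be sketched as follows. Everything passed to the function is an assumption to verify against your own cluster: the claim name `data-sentry-sentry-postgresql-0` is a guess at what the chart's PostgreSQL StatefulSet expects (check the name from a previous install with `kubectl get pvc -n sentry`), `8Gi` is a sample size, and the storage class must be whatever the Persistent Volume for statefulset-vol-sentry-postgresql-0 actually uses. The function name is hypothetical.

```shell
#!/bin/sh
# Print a PersistentVolumeClaim that binds to an existing PersistentVolume.
# $1 = namespace, $2 = claim name, $3 = PV name, $4 = size, $5 = storage class
restored_pvc_manifest() {
  cat <<EOF
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: $2
  namespace: $1
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: $4
  storageClassName: $5
  volumeName: $3
EOF
}

# Sample values only; the claim name, size, and storage class are assumptions.
restored_pvc_manifest sentry data-sentry-sentry-postgresql-0 \
  statefulset-vol-sentry-postgresql-0 8Gi longhorn
```

The claim has to exist before the chart is installed again, because a StatefulSet reuses an existing claim with the expected name instead of provisioning a new, empty volume.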

Reinstall Sentry

    helm install sentry sentry/sentry -f values.yaml -n sentry

Check the replicas of statefulset-vol-sentry-postgresql-0

Access Sentry again

OK, the restore succeeded.
