(4) Elasticsearch Source Code: Analysis of the Indexing Flow

2023-01-06 12:00:38

1. Overview

The previous post covered how ES starts up. This post looks at how ES indexes a document.

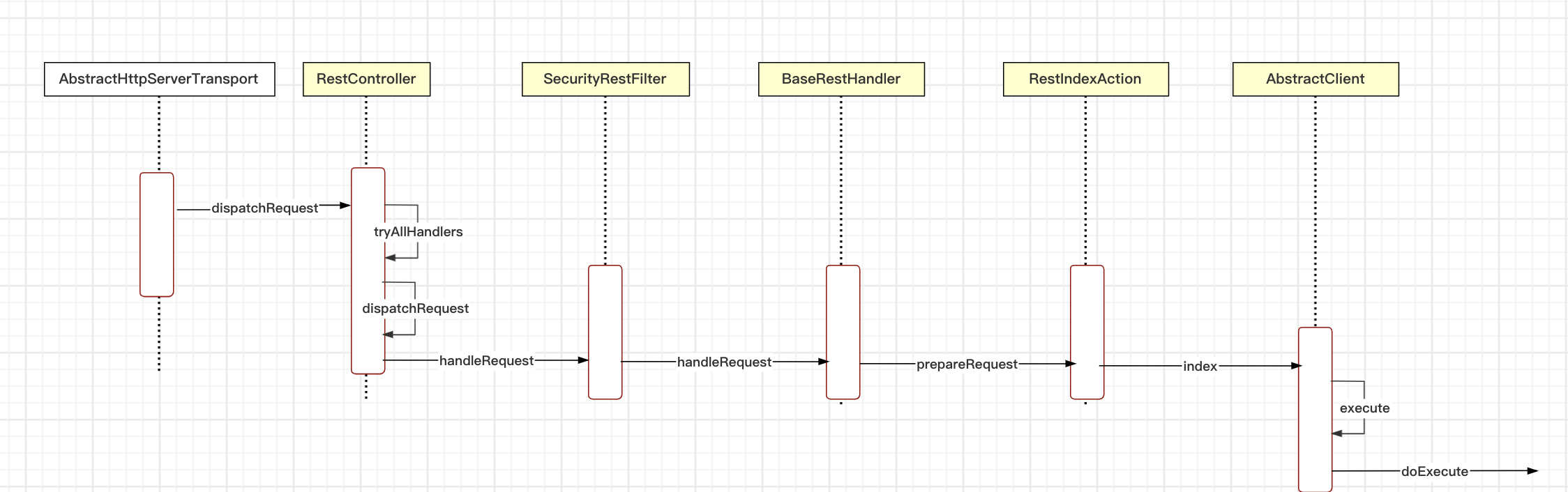

Below is the flow chart of the indexing process; the rest of this post walks through it in order.

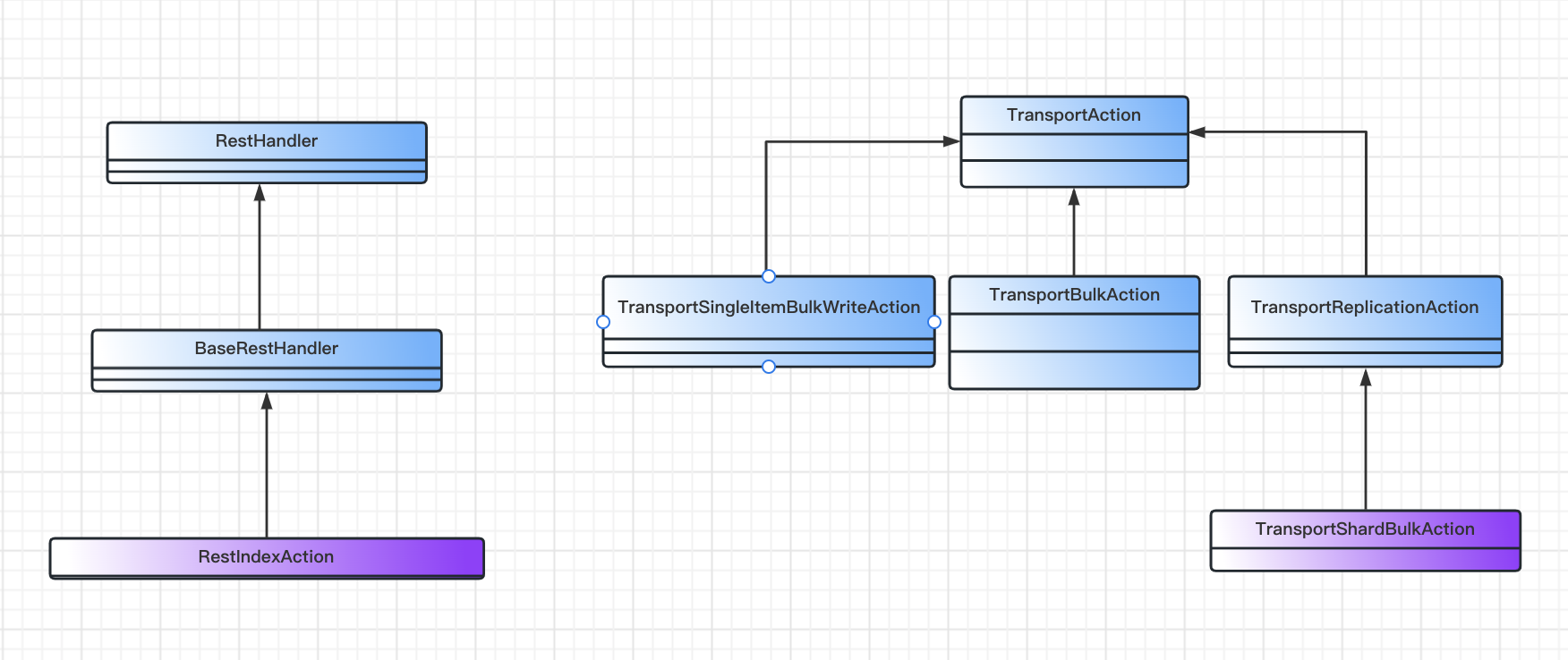

The main classes are related as follows:

2. Indexing flow

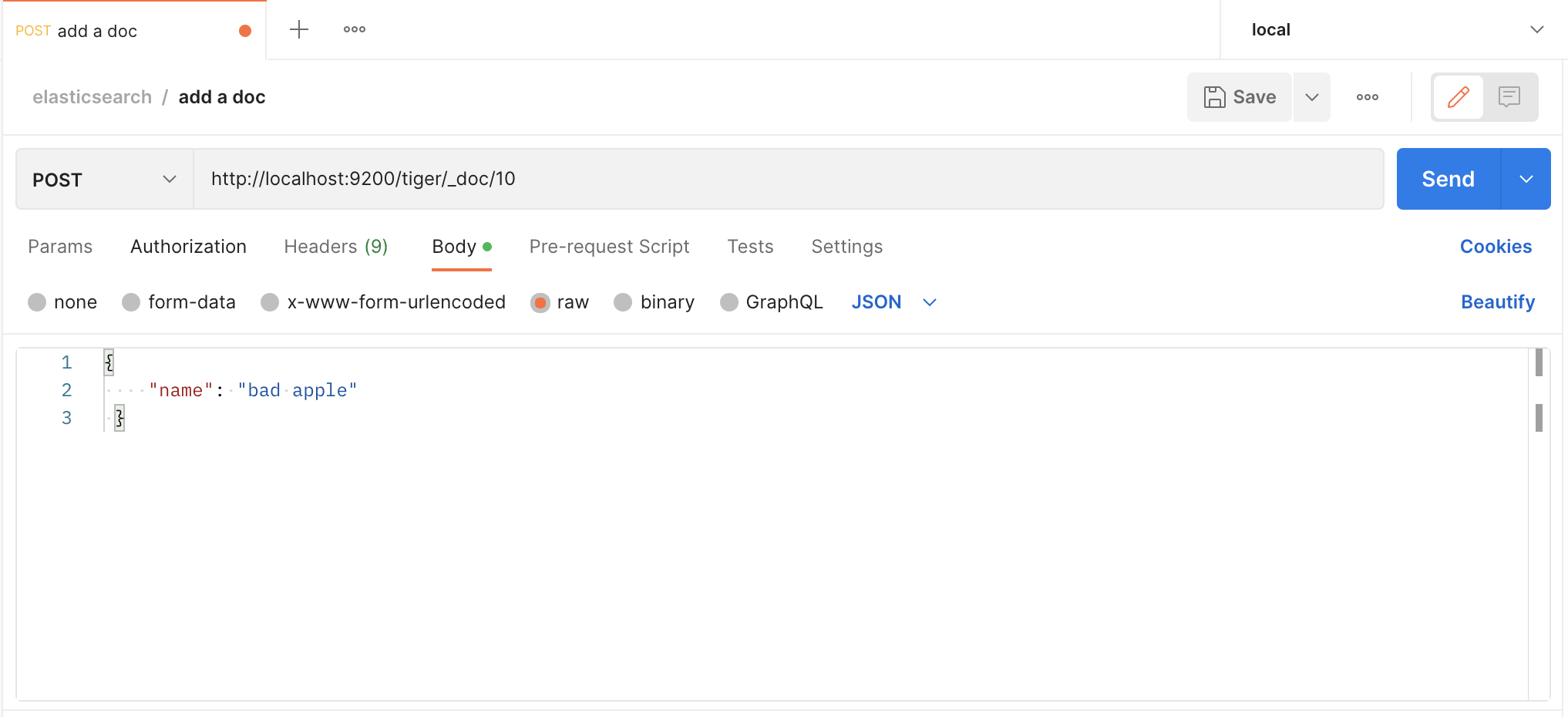

We use Postman to send a request that creates a document.

The request is an HTTP request, and ES has its own HTTP request-handling logic, similar to Spring MVC.
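To make the Spring MVC comparison concrete, here is a minimal sketch of route-to-handler dispatch. `MiniRestController`, `register`, and `dispatch` are hypothetical names for illustration, not Elasticsearch classes; the real `RestController` shown next additionally validates the content type and accounts for request size in a circuit breaker.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.function.Function;

// Hypothetical, simplified dispatcher: maps "METHOD path" to a handler function.
final class MiniRestController {
    private final Map<String, Function<String, String>> handlers = new HashMap<>();

    // Register a handler for an exact method + path pair (no wildcards in this sketch).
    void register(String method, String path, Function<String, String> handler) {
        handlers.put(method + " " + path, handler);
    }

    // Look up the handler and run it; failures are turned into an error response.
    String dispatch(String method, String path, String body) {
        Function<String, String> handler = handlers.get(method + " " + path);
        if (handler == null) {
            return "400 no handler for " + method + " " + path;
        }
        try {
            return handler.apply(body);
        } catch (RuntimeException e) {
            return "500 " + e.getMessage();
        }
    }

    public static void main(String[] args) {
        MiniRestController controller = new MiniRestController();
        controller.register("PUT", "/my-index/_doc/1", body -> "201 indexed " + body);
        System.out.println(controller.dispatch("PUT", "/my-index/_doc/1", "{\"user\":\"kimchy\"}"));
        System.out.println(controller.dispatch("GET", "/unknown", ""));
    }
}
```

The point of the sketch is only the shape: a registry keyed by route, a lookup, and a single place where handler exceptions become responses.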

// org.elasticsearch.rest.RestController

private void dispatchRequest(RestRequest request, RestChannel channel, RestHandler handler) throws Exception {

final int contentLength = request.content().length();

if (contentLength > 0) {

final XContentType xContentType = request.getXContentType(); // validate the Content-Type

if (xContentType == null) {

sendContentTypeErrorMessage(request.getAllHeaderValues("Content-Type"), channel);

return;

}

if (handler.supportsContentStream() && xContentType != XContentType.JSON && xContentType != XContentType.SMILE) {

channel.sendResponse(BytesRestResponse.createSimpleErrorResponse(channel, RestStatus.NOT_ACCEPTABLE,

"Content-Type [" + xContentType + "] does not support stream parsing. Use JSON or SMILE instead"));

return;

}

}

RestChannel responseChannel = channel;

try {

if (handler.canTripCircuitBreaker()) {

inFlightRequestsBreaker(circuitBreakerService).addEstimateBytesAndMaybeBreak(contentLength, "<http_request>");

} else {

inFlightRequestsBreaker(circuitBreakerService).addWithoutBreaking(contentLength);

}

// iff we could reserve bytes for the request we need to send the response also over this channel

responseChannel = new ResourceHandlingHttpChannel(channel, circuitBreakerService, contentLength);

handler.handleRequest(request, responseChannel, client);

} catch (Exception e) {

responseChannel.sendResponse(new BytesRestResponse(responseChannel, e));

}

}

// org.elasticsearch.rest.BaseRestHandler

@Override

public final void handleRequest(RestRequest request, RestChannel channel, NodeClient client) throws Exception {

// prepare the request for execution; has the side effect of touching the request parameters

final RestChannelConsumer action = prepareRequest(request, client);

// validate unconsumed params, but we must exclude params used to format the response

// use a sorted set so the unconsumed parameters appear in a reliable sorted order

final SortedSet<String> unconsumedParams =

request.unconsumedParams().stream().filter(p -> !responseParams().contains(p)).collect(Collectors.toCollection(TreeSet::new));

// validate the non-response params

if (!unconsumedParams.isEmpty()) {

final Set<String> candidateParams = new HashSet<>();

candidateParams.addAll(request.consumedParams());

candidateParams.addAll(responseParams());

throw new IllegalArgumentException(unrecognized(request, unconsumedParams, candidateParams, "parameter"));

}

if (request.hasContent() && request.isContentConsumed() == false) {

throw new IllegalArgumentException("request [" + request.method() + " " + request.path() + "] does not support having a body");

}

usageCount.increment();

// execute the action

action.accept(channel); // run the prepared action

}

// org.elasticsearch.rest.action.document.RestIndexAction

public RestChannelConsumer prepareRequest(final RestRequest request, final NodeClient client) throws IOException {

IndexRequest indexRequest;

final String type = request.param("type");

if (type != null && type.equals(MapperService.SINGLE_MAPPING_NAME) == false) {

deprecationLogger.deprecatedAndMaybeLog("index_with_types", TYPES_DEPRECATION_MESSAGE); // types are deprecated

indexRequest = new IndexRequest(request.param("index"), type, request.param("id"));

} else {

indexRequest = new IndexRequest(request.param("index"));

indexRequest.id(request.param("id"));

}

indexRequest.routing(request.param("routing"));

indexRequest.setPipeline(request.param("pipeline"));

indexRequest.source(request.requiredContent(), request.getXContentType());

indexRequest.timeout(request.paramAsTime("timeout", IndexRequest.DEFAULT_TIMEOUT));

indexRequest.setRefreshPolicy(request.param("refresh"));

indexRequest.version(RestActions.parseVersion(request));

indexRequest.versionType(VersionType.fromString(request.param("version_type"), indexRequest.versionType()));

indexRequest.setIfSeqNo(request.paramAsLong("if_seq_no", indexRequest.ifSeqNo()));

indexRequest.setIfPrimaryTerm(request.paramAsLong("if_primary_term", indexRequest.ifPrimaryTerm()));

String sOpType = request.param("op_type");

String waitForActiveShards = request.param("wait_for_active_shards");

if (waitForActiveShards != null) {

indexRequest.waitForActiveShards(ActiveShardCount.parseString(waitForActiveShards));

}

if (sOpType != null) {

indexRequest.opType(sOpType);

}

return channel ->

client.index(indexRequest, new RestStatusToXContentListener<>(channel, r -> r.getLocation(indexRequest.routing()))); // 執行index操作的consumer

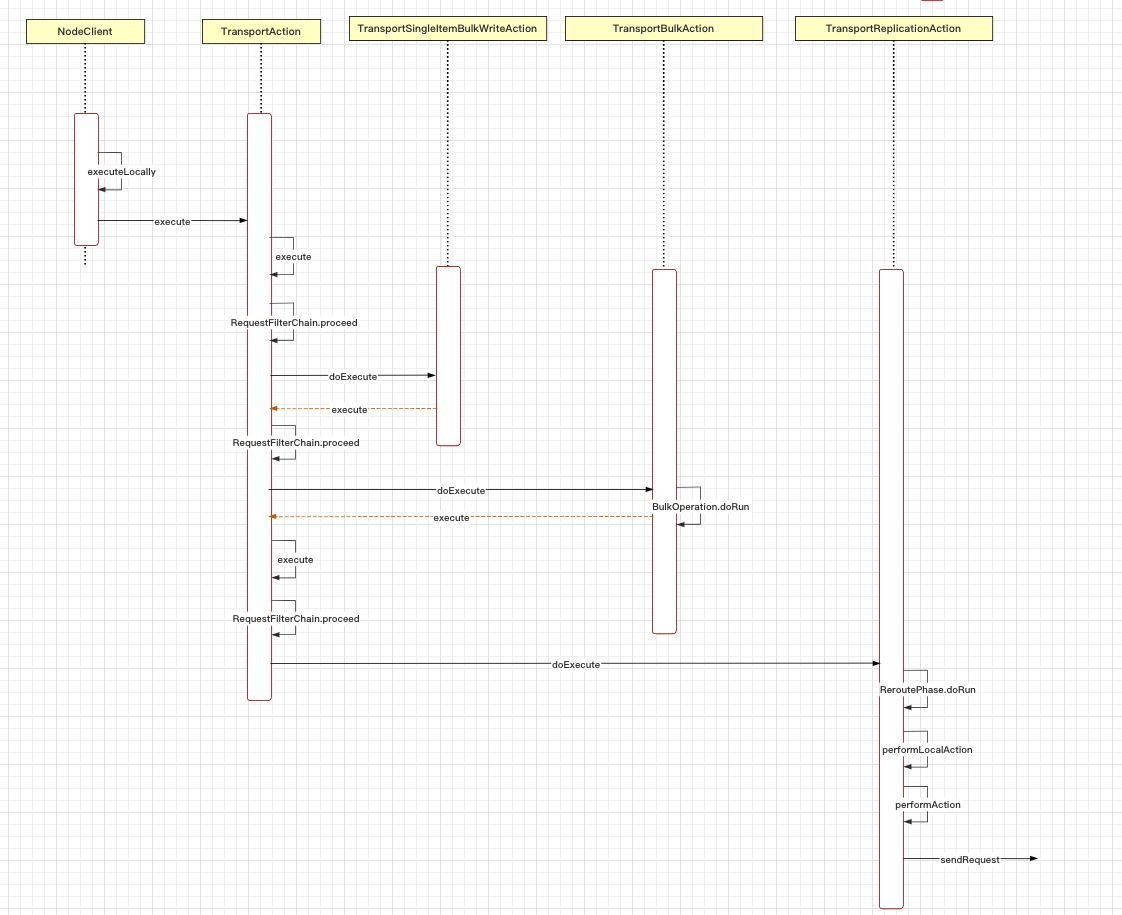

}然後我們來看index操作具體是怎麼處理的,主要由TransportAction管理
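Before reading the source, it may help to have a mental model of the filter chain that `TransportAction` runs a request through: each filter decides whether to call `proceed` again, and the action itself runs once all filters have passed. The sketch below uses hypothetical `Filter` and `Chain` types with plain strings as requests; it is not the Elasticsearch implementation.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.Consumer;

final class FilterChainSketch {
    // Hypothetical filter: may inspect the request, then hands control back to the chain.
    interface Filter {
        void apply(String request, Chain chain);
    }

    static final class Chain {
        private final Filter[] filters;
        private final Consumer<String> action;
        private final AtomicInteger index = new AtomicInteger();

        Chain(Filter[] filters, Consumer<String> action) {
            this.filters = filters;
            this.action = action;
        }

        // Each call advances one step: filters first, then the action exactly once.
        void proceed(String request) {
            int i = index.getAndIncrement();
            if (i < filters.length) {
                filters[i].apply(request, this);
            } else if (i == filters.length) {
                action.accept(request);
            } else {
                throw new IllegalStateException("proceed was called too many times");
            }
        }
    }

    // Runs one request through two filters and records the order of execution.
    static List<String> run(String request) {
        List<String> trace = new ArrayList<>();
        Filter first = (req, chain) -> { trace.add("filter-1:" + req); chain.proceed(req); };
        Filter second = (req, chain) -> { trace.add("filter-2:" + req); chain.proceed(req); };
        Chain chain = new Chain(new Filter[] {first, second}, req -> trace.add("action:" + req));
        chain.proceed(request);
        return trace;
    }

    public static void main(String[] args) {
        System.out.println(run("index"));
    }
}
```

A filter that never calls `proceed` stops the request, which is what makes this pattern useful for cross-cutting checks.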

// org.elasticsearch.action.support.TransportAction

public final Task execute(Request request, ActionListener<Response> listener) {

/*

* While this version of execute could delegate to the TaskListener

* version of execute that'd add yet another layer of wrapping on the

* listener and prevent us from using the listener bare if there isn't a

* task. That just seems like too many objects. Thus the two versions of

* this method.

*/

Task task = taskManager.register("transport", actionName, request); // register with the task manager: call -> task

execute(task, request, new ActionListener<Response>() { // wrap the ActionListener so the task is unregistered on completion

@Override

public void onResponse(Response response) {

try {

taskManager.unregister(task);

} finally {

listener.onResponse(response);

}

}

@Override

public void onFailure(Exception e) {

try {

taskManager.unregister(task);

} finally {

listener.onFailure(e);

}

}

});

return task;

}

...

public final void execute(Task task, Request request, ActionListener<Response> listener) {

ActionRequestValidationException validationException = request.validate();

if (validationException != null) {

listener.onFailure(validationException);

return;

}

if (task != null && request.getShouldStoreResult()) {

listener = new TaskResultStoringActionListener<>(taskManager, task, listener);

}

RequestFilterChain<Request, Response> requestFilterChain = new RequestFilterChain<>(this, logger); // chained processing

requestFilterChain.proceed(task, actionName, request, listener);

}

...

public void proceed(Task task, String actionName, Request request, ActionListener<Response> listener) {

int i = index.getAndIncrement();

try {

if (i < this.action.filters.length) {

this.action.filters[i].apply(task, actionName, request, listener, this); // apply the filters first

} else if (i == this.action.filters.length) {

this.action.doExecute(task, request, listener); // then execute the action itself

} else {

listener.onFailure(new IllegalStateException("proceed was called too many times"));

}

} catch(Exception e) {

logger.trace("Error during transport action execution.", e);

listener.onFailure(e);

}

}

The actual work is done by TransportBulkAction.
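A single-document request ends up in the bulk action because, as far as I can tell from the call chain in this version, the index action wraps it into a bulk request with exactly one item and unwraps the single item response afterwards. The sketch below illustrates only that idea; `BulkRequest`, `BulkResponse`, and `executeBulk` here are hypothetical stand-ins, not the Elasticsearch classes of the same name.

```java
import java.util.ArrayList;
import java.util.List;

final class SingleItemBulkSketch {
    // Hypothetical bulk request: just a list of documents.
    static final class BulkRequest {
        final List<String> items = new ArrayList<>();
    }

    // Hypothetical bulk response: one result per item, in request order.
    static final class BulkResponse {
        final List<String> itemResults = new ArrayList<>();
    }

    // Stand-in for the bulk execution path.
    static BulkResponse executeBulk(BulkRequest bulk) {
        BulkResponse response = new BulkResponse();
        for (String item : bulk.items) {
            response.itemResults.add("indexed:" + item);
        }
        return response;
    }

    // Single-document entry point: wrap into a one-item bulk, then unwrap the only result.
    static String index(String document) {
        BulkRequest bulk = new BulkRequest();
        bulk.items.add(document);
        BulkResponse response = executeBulk(bulk);
        return response.itemResults.get(0);
    }

    public static void main(String[] args) {
        System.out.println(index("doc-1"));
    }
}
```

Funnelling single writes through the bulk path means pipelines, index auto-creation, and shard routing only need to be implemented once.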

// org.elasticsearch.action.bulk.TransportBulkAction

protected void doExecute(Task task, BulkRequest bulkRequest, ActionListener<BulkResponse> listener) {

final long startTime = relativeTime();

final AtomicArray<BulkItemResponse> responses = new AtomicArray<>(bulkRequest.requests.size());

boolean hasIndexRequestsWithPipelines = false;

final MetaData metaData = clusterService.state().getMetaData();

ImmutableOpenMap<String, IndexMetaData> indicesMetaData = metaData.indices();

for (DocWriteRequest<?> actionRequest : bulkRequest.requests) {

IndexRequest indexRequest = getIndexWriteRequest(actionRequest);

if (indexRequest != null) {

// get pipeline from request

String pipeline = indexRequest.getPipeline();

if (pipeline == null) { // no pipeline set on the request

// start to look for default pipeline via settings found in the index meta data

IndexMetaData indexMetaData = indicesMetaData.get(actionRequest.index());

// check the alias for the index request (this is how normal index requests are modeled)

if (indexMetaData == null && indexRequest.index() != null) {

AliasOrIndex indexOrAlias = metaData.getAliasAndIndexLookup().get(indexRequest.index()); // the request may target an alias

if (indexOrAlias != null && indexOrAlias.isAlias()) {

AliasOrIndex.Alias alias = (AliasOrIndex.Alias) indexOrAlias;

indexMetaData = alias.getWriteIndex();

}

}

// check the alias for the action request (this is how upserts are modeled)

if (indexMetaData == null && actionRequest.index() != null) {

AliasOrIndex indexOrAlias = metaData.getAliasAndIndexLookup().get(actionRequest.index());

if (indexOrAlias != null && indexOrAlias.isAlias()) {

AliasOrIndex.Alias alias = (AliasOrIndex.Alias) indexOrAlias;

indexMetaData = alias.getWriteIndex();

}

}

if (indexMetaData != null) {

// Find the default pipeline if one is defined from and existing index.

String defaultPipeline = IndexSettings.DEFAULT_PIPELINE.get(indexMetaData.getSettings());

indexRequest.setPipeline(defaultPipeline);

if (IngestService.NOOP_PIPELINE_NAME.equals(defaultPipeline) == false) {

hasIndexRequestsWithPipelines = true;

}

} else if (indexRequest.index() != null) {

// No index exists yet (and is valid request), so matching index templates to look for a default pipeline

List<IndexTemplateMetaData> templates = MetaDataIndexTemplateService.findTemplates(metaData, indexRequest.index());

assert (templates != null);

String defaultPipeline = IngestService.NOOP_PIPELINE_NAME;

// order of templates are highest order first, break if we find a default_pipeline

for (IndexTemplateMetaData template : templates) {

final Settings settings = template.settings();

if (IndexSettings.DEFAULT_PIPELINE.exists(settings)) {

defaultPipeline = IndexSettings.DEFAULT_PIPELINE.get(settings);

break;

}

}

indexRequest.setPipeline(defaultPipeline);

if (IngestService.NOOP_PIPELINE_NAME.equals(defaultPipeline) == false) {

hasIndexRequestsWithPipelines = true;

}

}

} else if (IngestService.NOOP_PIPELINE_NAME.equals(pipeline) == false) {

hasIndexRequestsWithPipelines = true;

}

}

}

if (hasIndexRequestsWithPipelines) {

// this method (doExecute) will be called again, but with the bulk requests updated from the ingest node processing but

// also with IngestService.NOOP_PIPELINE_NAME on each request. This ensures that this on the second time through this method,

// this path is never taken.

try {

if (clusterService.localNode().isIngestNode()) {

processBulkIndexIngestRequest(task, bulkRequest, listener);

} else {

ingestForwarder.forwardIngestRequest(BulkAction.INSTANCE, bulkRequest, listener);

}

} catch (Exception e) {

listener.onFailure(e);

}

return;

}

if (needToCheck()) { // auto-create the indices the bulk request needs so the later writes can proceed

// Attempt to create all the indices that we're going to need during the bulk before we start.

// Step 1: collect all the indices in the request

final Set<String> indices = bulkRequest.requests.stream()

// delete requests should not attempt to create the index (if the index does not

// exists), unless an external versioning is used

.filter(request -> request.opType() != DocWriteRequest.OpType.DELETE

|| request.versionType() == VersionType.EXTERNAL

|| request.versionType() == VersionType.EXTERNAL_GTE)

.map(DocWriteRequest::index)

.collect(Collectors.toSet());

/* Step 2: filter that to indices that don't exist and we can create. At the same time build a map of indices we can't create

* that we'll use when we try to run the requests. */

final Map<String, IndexNotFoundException> indicesThatCannotBeCreated = new HashMap<>();

Set<String> autoCreateIndices = new HashSet<>();

ClusterState state = clusterService.state();

for (String index : indices) {

boolean shouldAutoCreate;

try {

shouldAutoCreate = shouldAutoCreate(index, state);

} catch (IndexNotFoundException e) {

shouldAutoCreate = false;

indicesThatCannotBeCreated.put(index, e);

}

if (shouldAutoCreate) {

autoCreateIndices.add(index);

}

}

// Step 3: create all the indices that are missing, if there are any missing. start the bulk after all the creates come back.

if (autoCreateIndices.isEmpty()) {

executeBulk(task, bulkRequest, startTime, listener, responses, indicesThatCannotBeCreated); // index the documents

} else {

final AtomicInteger counter = new AtomicInteger(autoCreateIndices.size());

for (String index : autoCreateIndices) {

createIndex(index, bulkRequest.timeout(), new ActionListener<CreateIndexResponse>() {

@Override

public void onResponse(CreateIndexResponse result) {

if (counter.decrementAndGet() == 0) {

threadPool.executor(ThreadPool.Names.WRITE).execute(

() -> executeBulk(task, bulkRequest, startTime, listener, responses, indicesThatCannotBeCreated));

}

}

@Override

public void onFailure(Exception e) {

if (!(ExceptionsHelper.unwrapCause(e) instanceof ResourceAlreadyExistsException)) {

// fail all requests involving this index, if create didn't work

for (int i = 0; i < bulkRequest.requests.size(); i++) {

DocWriteRequest<?> request = bulkRequest.requests.get(i);

if (request != null && setResponseFailureIfIndexMatches(responses, i, request, index, e)) {

bulkRequest.requests.set(i, null);

}

}

}

if (counter.decrementAndGet() == 0) {

executeBulk(task, bulkRequest, startTime, ActionListener.wrap(listener::onResponse, inner -> {

inner.addSuppressed(e);

listener.onFailure(inner);

}), responses, indicesThatCannotBeCreated);

}

}

});

}

}

} else {

executeBulk(task, bulkRequest, startTime, listener, responses, emptyMap());

}

}

Next, BulkOperation converts the BulkRequest into BulkShardRequests, i.e. it determines on which shard each operation runs.
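The core of this step is "compute a shard for every item, then group items by shard". A minimal sketch of that grouping follows. It is an assumption-laden simplification: `String.hashCode()` stands in for whatever hash Elasticsearch applies to the routing value, and `shardFor` and `groupByShard` are hypothetical helper names.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

final class ShardGroupingSketch {
    // Assumption: String.hashCode() replaces the real routing hash; floorMod keeps the result non-negative.
    static int shardFor(String id, String routing, int numberOfShards) {
        String effectiveRouting = routing != null ? routing : id; // an explicit routing value wins over the id
        return Math.floorMod(effectiveRouting.hashCode(), numberOfShards);
    }

    // Groups document ids by the shard they map to, similar in spirit to requestsByShard in the source.
    static Map<Integer, List<String>> groupByShard(List<String> ids, int numberOfShards) {
        Map<Integer, List<String>> byShard = new TreeMap<>();
        for (String id : ids) {
            int shard = shardFor(id, null, numberOfShards);
            byShard.computeIfAbsent(shard, s -> new ArrayList<>()).add(id);
        }
        return byShard;
    }

    public static void main(String[] args) {
        List<String> ids = new ArrayList<>();
        ids.add("user-1");
        ids.add("user-2");
        ids.add("user-3");
        System.out.println(groupByShard(ids, 5));
    }
}
```

Because the shard is a pure function of the routing value and the shard count, the same document id always lands on the same shard, which is why the number of primary shards cannot simply be changed afterwards.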

// org.elasticsearch.action.bulk.TransportBulkAction (inner class BulkOperation)

protected void doRun() {

final ClusterState clusterState = observer.setAndGetObservedState();

if (handleBlockExceptions(clusterState)) {

return;

}

final ConcreteIndices concreteIndices = new ConcreteIndices(clusterState, indexNameExpressionResolver);

MetaData metaData = clusterState.metaData();

for (int i = 0; i < bulkRequest.requests.size(); i++) {

DocWriteRequest<?> docWriteRequest = bulkRequest.requests.get(i);

//the request can only be null because we set it to null in the previous step, so it gets ignored

if (docWriteRequest == null) {

continue;

}

if (addFailureIfIndexIsUnavailable(docWriteRequest, i, concreteIndices, metaData)) {

continue;

}

Index concreteIndex = concreteIndices.resolveIfAbsent(docWriteRequest); // resolve the concrete index

try {

switch (docWriteRequest.opType()) {

case CREATE:

case INDEX:

IndexRequest indexRequest = (IndexRequest) docWriteRequest;

final IndexMetaData indexMetaData = metaData.index(concreteIndex);

MappingMetaData mappingMd = indexMetaData.mappingOrDefault();

Version indexCreated = indexMetaData.getCreationVersion();

indexRequest.resolveRouting(metaData);

indexRequest.process(indexCreated, mappingMd, concreteIndex.getName()); // validate the indexRequest and auto-generate an id if needed

break;

case UPDATE:

TransportUpdateAction.resolveAndValidateRouting(metaData, concreteIndex.getName(),

(UpdateRequest) docWriteRequest);

break;

case DELETE:

docWriteRequest.routing(metaData.resolveWriteIndexRouting(docWriteRequest.routing(), docWriteRequest.index()));

// check if routing is required, if so, throw error if routing wasn't specified

if (docWriteRequest.routing() == null && metaData.routingRequired(concreteIndex.getName())) {

throw new RoutingMissingException(concreteIndex.getName(), docWriteRequest.type(), docWriteRequest.id());

}

break;

default: throw new AssertionError("request type not supported: [" + docWriteRequest.opType() + "]");

}

} catch (ElasticsearchParseException | IllegalArgumentException | RoutingMissingException e) {

BulkItemResponse.Failure failure = new BulkItemResponse.Failure(concreteIndex.getName(), docWriteRequest.type(),

docWriteRequest.id(), e);

BulkItemResponse bulkItemResponse = new BulkItemResponse(i, docWriteRequest.opType(), failure);

responses.set(i, bulkItemResponse);

// make sure the request gets never processed again

bulkRequest.requests.set(i, null);

}

}

// first, go over all the requests and create a ShardId -> Operations mapping

Map<ShardId, List<BulkItemRequest>> requestsByShard = new HashMap<>();

for (int i = 0; i < bulkRequest.requests.size(); i++) {

DocWriteRequest<?> request = bulkRequest.requests.get(i);

if (request == null) {

continue;

}

String concreteIndex = concreteIndices.getConcreteIndex(request.index()).getName();

ShardId shardId = clusterService.operationRouting().indexShards(clusterState, concreteIndex, request.id(),

request.routing()).shardId();

List<BulkItemRequest> shardRequests = requestsByShard.computeIfAbsent(shardId, shard -> new ArrayList<>());

shardRequests.add(new BulkItemRequest(i, request));

}

if (requestsByShard.isEmpty()) {

listener.onResponse(new BulkResponse(responses.toArray(new BulkItemResponse[responses.length()]),

buildTookInMillis(startTimeNanos)));

return;

}

final AtomicInteger counter = new AtomicInteger(requestsByShard.size());

String nodeId = clusterService.localNode().getId();

for (Map.Entry<ShardId, List<BulkItemRequest>> entry : requestsByShard.entrySet()) {

final ShardId shardId = entry.getKey();

final List<BulkItemRequest> requests = entry.getValue();

BulkShardRequest bulkShardRequest = new BulkShardRequest(shardId, bulkRequest.getRefreshPolicy(), // build the BulkShardRequest

requests.toArray(new BulkItemRequest[requests.size()]));

bulkShardRequest.waitForActiveShards(bulkRequest.waitForActiveShards());

bulkShardRequest.timeout(bulkRequest.timeout());

if (task != null) {

bulkShardRequest.setParentTask(nodeId, task.getId());

}

shardBulkAction.execute(bulkShardRequest, new ActionListener<BulkShardResponse>() {

@Override

public void onResponse(BulkShardResponse bulkShardResponse) {

for (BulkItemResponse bulkItemResponse : bulkShardResponse.getResponses()) {

// we may have no response if item failed

if (bulkItemResponse.getResponse() != null) {

bulkItemResponse.getResponse().setShardInfo(bulkShardResponse.getShardInfo());

}

responses.set(bulkItemResponse.getItemId(), bulkItemResponse);

}

if (counter.decrementAndGet() == 0) {

finishHim();

}

}

@Override

public void onFailure(Exception e) {

// create failures for all relevant requests

for (BulkItemRequest request : requests) {

final String indexName = concreteIndices.getConcreteIndex(request.index()).getName();

DocWriteRequest<?> docWriteRequest = request.request();

responses.set(request.id(), new BulkItemResponse(request.id(), docWriteRequest.opType(),

new BulkItemResponse.Failure(indexName, docWriteRequest.type(), docWriteRequest.id(), e)));

}

if (counter.decrementAndGet() == 0) {

finishHim();

}

}

private void finishHim() {

listener.onResponse(new BulkResponse(responses.toArray(new BulkItemResponse[responses.length()]),

buildTookInMillis(startTimeNanos)));

}

});

}

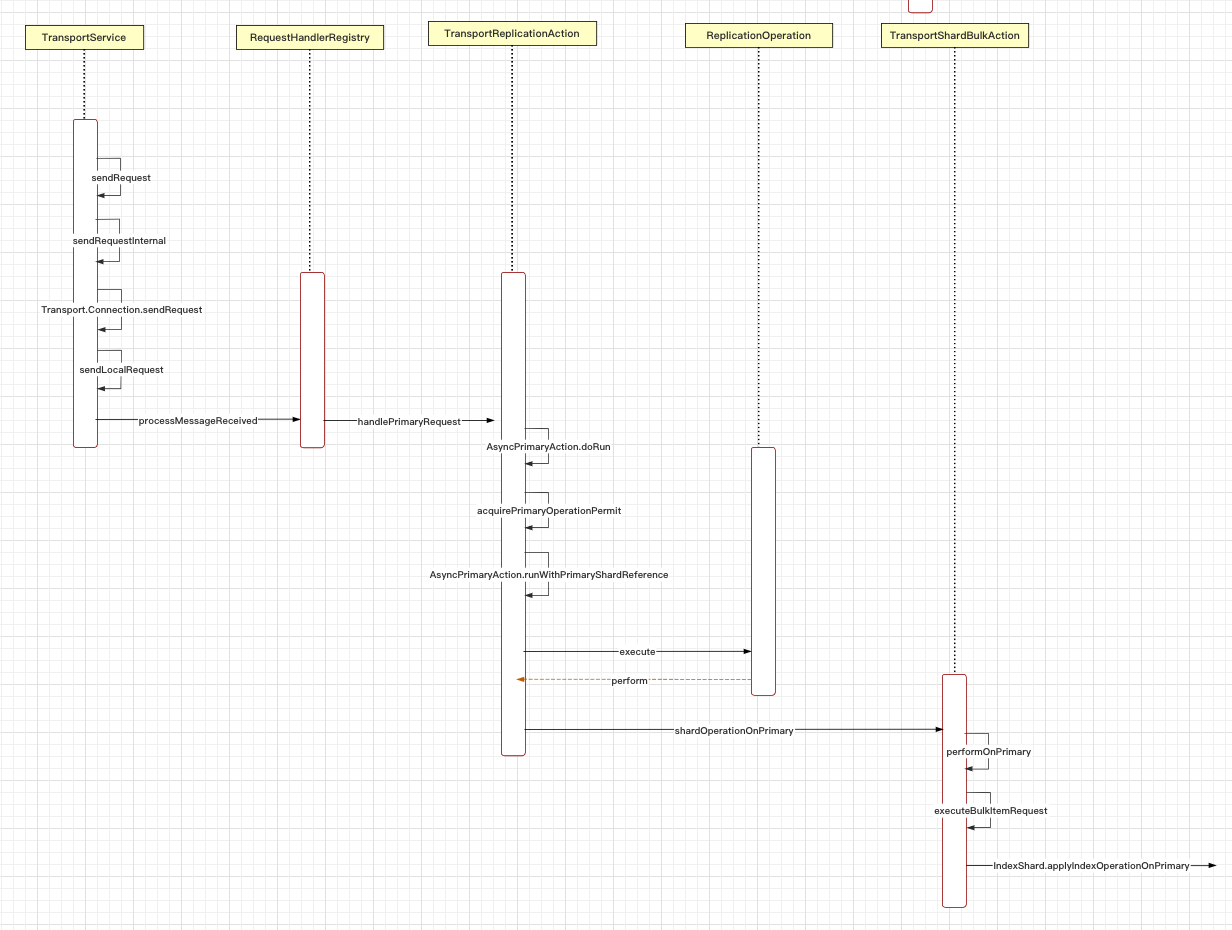

}然後看看此分片是在當前節點,還是遠端節點上,現在進入routing階段。(筆者這裡只啟動了一個節點,我們就看下本地節點的邏輯)
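The decision made in the routing phase can be reduced to a small lookup: find the node that holds the primary and compare it with the local node. The sketch below shows just that decision, with a hypothetical `route` helper and a plain map in place of the cluster state's routing table.

```java
import java.util.HashMap;
import java.util.Map;

final class RoutingPhaseSketch {
    // Hypothetical routing decision: returns "local", "remote:<node>", or a retry message.
    static String route(Map<Integer, String> primaryNodeByShard, int shard, String localNodeId) {
        String primaryNode = primaryNodeByShard.get(shard);
        if (primaryNode == null) {
            // No assigned primary yet: the real code schedules a retry on a newer cluster state.
            return "retry: primary for shard " + shard + " is not assigned";
        }
        if (primaryNode.equals(localNodeId)) {
            return "local";
        }
        return "remote:" + primaryNode;
    }

    public static void main(String[] args) {
        Map<Integer, String> table = new HashMap<>();
        table.put(0, "node-1");
        table.put(1, "node-2");
        System.out.println(route(table, 0, "node-1"));
        System.out.println(route(table, 1, "node-1"));
        System.out.println(route(table, 2, "node-1"));
    }
}
```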

// org.elasticsearch.action.support.replication.TransportReplicationAction

protected void doRun() {

setPhase(task, "routing");

final ClusterState state = observer.setAndGetObservedState();

final String concreteIndex = concreteIndex(state, request);

final ClusterBlockException blockException = blockExceptions(state, concreteIndex);

if (blockException != null) {

if (blockException.retryable()) {

logger.trace("cluster is blocked, scheduling a retry", blockException);

retry(blockException);

} else {

finishAsFailed(blockException);

}

} else {

// request does not have a shardId yet, we need to pass the concrete index to resolve shardId

final IndexMetaData indexMetaData = state.metaData().index(concreteIndex);

if (indexMetaData == null) {

retry(new IndexNotFoundException(concreteIndex));

return;

}

if (indexMetaData.getState() == IndexMetaData.State.CLOSE) {

throw new IndexClosedException(indexMetaData.getIndex());

}

// resolve all derived request fields, so we can route and apply it

resolveRequest(indexMetaData, request);

assert request.waitForActiveShards() != ActiveShardCount.DEFAULT :

"request waitForActiveShards must be set in resolveRequest";

final ShardRouting primary = primary(state);

if (retryIfUnavailable(state, primary)) {

return;

}

final DiscoveryNode node = state.nodes().get(primary.currentNodeId());

if (primary.currentNodeId().equals(state.nodes().getLocalNodeId())) { // find the node holding the primary from the routing table and compare it with the local node

performLocalAction(state, primary, node, indexMetaData);

} else {

performRemoteAction(state, primary, node);

}

}

}

Since the primary is on the current node, the request is sent as an internal (local) request.
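A local request skips the network but still goes through the same "action name to handler" registry, and the handler runs either on the calling thread or on a named thread pool. The following sketch mirrors that shape under simplifying assumptions: `LocalTransportSketch`, `Registration`, and the string-based request and response are all hypothetical, and a `CompletableFuture` stands in for the response channel.

```java
import java.util.Map;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.function.Function;

final class LocalTransportSketch {
    static final String SAME = "same"; // run on the calling thread

    // Hypothetical registry entry: which executor to use and how to handle the request.
    static final class Registration {
        final String executor;
        final Function<String, String> handler;

        Registration(String executor, Function<String, String> handler) {
            this.executor = executor;
            this.handler = handler;
        }
    }

    private final Map<String, Registration> registry = new ConcurrentHashMap<>();
    private final ExecutorService pool = Executors.newFixedThreadPool(2);

    void register(String action, String executor, Function<String, String> handler) {
        registry.put(action, new Registration(executor, handler));
    }

    // Looks up the handler by action name and runs it inline or on the pool.
    CompletableFuture<String> sendLocalRequest(String action, String request) {
        CompletableFuture<String> response = new CompletableFuture<>();
        Registration reg = registry.get(action);
        if (reg == null) {
            response.completeExceptionally(new IllegalArgumentException("Action [" + action + "] not found"));
            return response;
        }
        Runnable work = () -> {
            try {
                response.complete(reg.handler.apply(request));
            } catch (RuntimeException e) {
                response.completeExceptionally(e); // failures are reported through the same channel
            }
        };
        if (SAME.equals(reg.executor)) {
            work.run();
        } else {
            pool.execute(work);
        }
        return response;
    }

    void close() {
        pool.shutdown();
    }

    public static void main(String[] args) {
        LocalTransportSketch transport = new LocalTransportSketch();
        transport.register("indices:data/write/bulk[s]", "write", req -> "handled " + req);
        System.out.println(transport.sendLocalRequest("indices:data/write/bulk[s]", "shard-0").join());
        transport.close();
    }
}
```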

// org.elasticsearch.transport.TransportService

private <T extends TransportResponse> void sendRequestInternal(final Transport.Connection connection, final String action,

final TransportRequest request,

final TransportRequestOptions options,

TransportResponseHandler<T> handler) {

if (connection == null) {

throw new IllegalStateException("can't send request to a null connection");

}

DiscoveryNode node = connection.getNode();

Supplier<ThreadContext.StoredContext> storedContextSupplier = threadPool.getThreadContext().newRestorableContext(true);

ContextRestoreResponseHandler<T> responseHandler = new ContextRestoreResponseHandler<>(storedContextSupplier, handler);

// TODO we can probably fold this entire request ID dance into connection.sendReqeust but it will be a bigger refactoring

final long requestId = responseHandlers.add(new Transport.ResponseContext<>(responseHandler, connection, action));

final TimeoutHandler timeoutHandler;

if (options.timeout() != null) {

timeoutHandler = new TimeoutHandler(requestId, connection.getNode(), action);

responseHandler.setTimeoutHandler(timeoutHandler);

} else {

timeoutHandler = null;

}

try {

if (lifecycle.stoppedOrClosed()) {

/*

* If we are not started the exception handling will remove the request holder again and calls the handler to notify the

* caller. It will only notify if toStop hasn't done the work yet.

*

* Do not edit this exception message, it is currently relied upon in production code!

*/

// TODO: make a dedicated exception for a stopped transport service? cf. ExceptionsHelper#isTransportStoppedForAction

throw new TransportException("TransportService is closed stopped can't send request");

}

if (timeoutHandler != null) {

assert options.timeout() != null;

timeoutHandler.scheduleTimeout(options.timeout());

}

connection.sendRequest(requestId, action, request, options); // local node optimization happens upstream

...

private void sendLocalRequest(long requestId, final String action, final TransportRequest request, TransportRequestOptions options) {

final DirectResponseChannel channel = new DirectResponseChannel(localNode, action, requestId, this, threadPool);

try {

onRequestSent(localNode, requestId, action, request, options);

onRequestReceived(requestId, action);

final RequestHandlerRegistry reg = getRequestHandler(action); // registry pattern: action -> handler

if (reg == null) {

throw new ActionNotFoundTransportException("Action [" + action + "] not found");

}

final String executor = reg.getExecutor();

if (ThreadPool.Names.SAME.equals(executor)) {

//noinspection unchecked

reg.processMessageReceived(request, channel);

} else {

threadPool.executor(executor).execute(new AbstractRunnable() {

@Override

protected void doRun() throws Exception {

//noinspection unchecked

reg.processMessageReceived(request, channel); // handle the request

}

@Override

public boolean isForceExecution() {

return reg.isForceExecution();

}

@Override

public void onFailure(Exception e) {

try {

channel.sendResponse(e);

} catch (Exception inner) {

inner.addSuppressed(e);

logger.warn(() -> new ParameterizedMessage(

"failed to notify channel of error message for action [{}]", action), inner);

}

}

@Override

public String toString() {

return "processing of [" + requestId + "][" + action + "]: " + request;

}

});

}

Then a permit to execute the request on the shard is acquired.
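The permit works like a counted lock: an operation may only touch the shard while it holds a permit, and the permit is handed back through a releasable handle. A minimal sketch of that idea follows, assuming a plain `java.util.concurrent.Semaphore` and hypothetical `OperationPermitSketch` and `Releasable` types; the real shard logic also validates the allocation id, the primary term, and primary mode, as the source below shows.

```java
import java.util.concurrent.Semaphore;
import java.util.concurrent.atomic.AtomicBoolean;

final class OperationPermitSketch {
    // Hypothetical handle; close() gives the permit back and never throws.
    interface Releasable extends AutoCloseable {
        @Override
        void close();
    }

    private final Semaphore permits;

    OperationPermitSketch(int totalPermits) {
        this.permits = new Semaphore(totalPermits);
    }

    // Takes one permit or fails immediately; the returned handle releases it at most once.
    Releasable acquire() {
        if (!permits.tryAcquire()) {
            throw new IllegalStateException("no operation permit available");
        }
        AtomicBoolean released = new AtomicBoolean(false);
        return () -> {
            if (released.compareAndSet(false, true)) {
                permits.release();
            }
        };
    }

    int available() {
        return permits.availablePermits();
    }

    public static void main(String[] args) {
        OperationPermitSketch shard = new OperationPermitSketch(2);
        try (Releasable permit = shard.acquire()) {
            System.out.println("permits left while indexing: " + shard.available());
        }
        System.out.println("permits left afterwards: " + shard.available());
    }
}
```

Guarding the release with an `AtomicBoolean` makes a double `close()` harmless, which matters when both success and failure paths try to release.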

// org.elasticsearch.action.support.replication.TransportReplicationAction

protected void doRun() throws Exception {

final ShardId shardId = primaryRequest.getRequest().shardId();

final IndexShard indexShard = getIndexShard(shardId);

final ShardRouting shardRouting = indexShard.routingEntry();

// we may end up here if the cluster state used to route the primary is so stale that the underlying

// index shard was replaced with a replica. For example - in a two node cluster, if the primary fails

// the replica will take over and a replica will be assigned to the first node.

if (shardRouting.primary() == false) {

throw new ReplicationOperation.RetryOnPrimaryException(shardId, "actual shard is not a primary " + shardRouting);

}

final String actualAllocationId = shardRouting.allocationId().getId();

if (actualAllocationId.equals(primaryRequest.getTargetAllocationID()) == false) {

throw new ShardNotFoundException(shardId, "expected allocation id [{}] but found [{}]",

primaryRequest.getTargetAllocationID(), actualAllocationId);

}

final long actualTerm = indexShard.getPendingPrimaryTerm();

if (actualTerm != primaryRequest.getPrimaryTerm()) {

throw new ShardNotFoundException(shardId, "expected allocation id [{}] with term [{}] but found [{}]",

primaryRequest.getTargetAllocationID(), primaryRequest.getPrimaryTerm(), actualTerm);

}

acquirePrimaryOperationPermit( // acquire a permit to run the operation on the primary shard

indexShard,

primaryRequest.getRequest(),

ActionListener.wrap(

releasable -> runWithPrimaryShardReference(new PrimaryShardReference(indexShard, releasable)),

e -> {

if (e instanceof ShardNotInPrimaryModeException) {

onFailure(new ReplicationOperation.RetryOnPrimaryException(shardId, "shard is not in primary mode", e));

} else {

onFailure(e);

}

}));

}

Now we enter the primary phase.
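Conceptually, the primary phase applies the operation on the primary shard first and only then forwards it to the replicas. The sketch below shows that ordering in a deliberately synchronous form; `Shard` and `execute` are hypothetical, and the real `ReplicationOperation` is asynchronous and does considerably more bookkeeping.

```java
import java.util.ArrayList;
import java.util.List;

final class PrimaryPhaseSketch {
    // Hypothetical shard copy: applies an operation and returns a short result string.
    interface Shard {
        String apply(String operation);
    }

    // Primary first; replicas only see the operation if the primary accepted it.
    static List<String> execute(String operation, Shard primary, List<Shard> replicas) {
        List<String> results = new ArrayList<>();
        results.add(primary.apply(operation)); // an exception here aborts before any replica is touched
        for (Shard replica : replicas) {
            try {
                results.add(replica.apply(operation));
            } catch (RuntimeException e) {
                // A failing replica does not undo the primary write in this sketch; it is only recorded.
                results.add("replica failed: " + e.getMessage());
            }
        }
        return results;
    }

    public static void main(String[] args) {
        List<Shard> replicas = new ArrayList<>();
        replicas.add(op -> "replica ok: " + op);
        replicas.add(op -> { throw new IllegalStateException("disk full"); });
        System.out.println(execute("index doc-1", op -> "primary ok: " + op, replicas));
    }
}
```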

// org.elasticsearch.action.support.replication.TransportReplicationAction

setPhase(replicationTask, "primary");

final ActionListener<Response> referenceClosingListener = ActionListener.wrap(response -> {

primaryShardReference.close(); // release shard operation lock before responding to caller

setPhase(replicationTask, "finished");

onCompletionListener.onResponse(response);

}, e -> handleException(primaryShardReference, e));

final ActionListener<Response> globalCheckpointSyncingListener = ActionListener.wrap(response -> {

if (syncGlobalCheckpointAfterOperation) {

final IndexShard shard = primaryShardReference.indexShard;

try {

shard.maybeSyncGlobalCheckpoint("post-operation");

} catch (final Exception e) {

// only log non-closed exceptions

if (ExceptionsHelper.unwrap(

e, AlreadyClosedException.class, IndexShardClosedException.class) == null) {

// intentionally swallow, a missed global checkpoint sync should not fail this operation

logger.info(

new ParameterizedMessage(

"{} failed to execute post-operation global checkpoint sync", shard.shardId()), e);

}

}

}

referenceClosingListener.onResponse(response);

}, referenceClosingListener::onFailure);

new ReplicationOperation<>(primaryRequest.getRequest(), primaryShardReference,

ActionListener.wrap(result -> result.respond(globalCheckpointSyncingListener), referenceClosingListener::onFailure),

newReplicasProxy(), logger, actionName, primaryRequest.getPrimaryTerm()).execute();

Skipping the intermediate calls, TransportShardBulkAction finally invokes the index engine.

// org.elasticsearch.action.bulk.TransportShardBulkAction

static boolean executeBulkItemRequest(BulkPrimaryExecutionContext context, UpdateHelper updateHelper, LongSupplier nowInMillisSupplier,

MappingUpdatePerformer mappingUpdater, Consumer<ActionListener<Void>> waitForMappingUpdate,

ActionListener<Void> itemDoneListener) throws Exception {

final DocWriteRequest.OpType opType = context.getCurrent().opType();

final UpdateHelper.Result updateResult;

if (opType == DocWriteRequest.OpType.UPDATE) {

final UpdateRequest updateRequest = (UpdateRequest) context.getCurrent();

try {

updateResult = updateHelper.prepare(updateRequest, context.getPrimary(), nowInMillisSupplier);

} catch (Exception failure) {

// we may fail translating a update to index or delete operation

// we use index result to communicate failure while translating update request

final Engine.Result result =

new Engine.IndexResult(failure, updateRequest.version());

context.setRequestToExecute(updateRequest);

context.markOperationAsExecuted(result);

context.markAsCompleted(context.getExecutionResult());

return true;

}

// execute translated update request

switch (updateResult.getResponseResult()) {

case CREATED:

case UPDATED:

IndexRequest indexRequest = updateResult.action();

IndexMetaData metaData = context.getPrimary().indexSettings().getIndexMetaData();

MappingMetaData mappingMd = metaData.mappingOrDefault();

indexRequest.process(metaData.getCreationVersion(), mappingMd, updateRequest.concreteIndex());

context.setRequestToExecute(indexRequest);

break;

case DELETED:

context.setRequestToExecute(updateResult.action());

break;

case NOOP:

context.markOperationAsNoOp(updateResult.action());

context.markAsCompleted(context.getExecutionResult());

return true;

default:

throw new IllegalStateException("Illegal update operation " + updateResult.getResponseResult());

}

} else {

context.setRequestToExecute(context.getCurrent());

updateResult = null;

}

assert context.getRequestToExecute() != null; // also checks that we're in TRANSLATED state

final IndexShard primary = context.getPrimary();

final long version = context.getRequestToExecute().version();

final boolean isDelete = context.getRequestToExecute().opType() == DocWriteRequest.OpType.DELETE;

final Engine.Result result;

if (isDelete) {

final DeleteRequest request = context.getRequestToExecute();

result = primary.applyDeleteOperationOnPrimary(version, request.type(), request.id(), request.versionType(),

request.ifSeqNo(), request.ifPrimaryTerm());

} else {

final IndexRequest request = context.getRequestToExecute();

result = primary.applyIndexOperationOnPrimary(version, request.versionType(), new SourceToParse( // hand off to the Lucene-backed engine

request.index(), request.type(), request.id(), request.source(), request.getContentType(), request.routing()),

request.ifSeqNo(), request.ifPrimaryTerm(), request.getAutoGeneratedTimestamp(), request.isRetry());

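}

3. Summary

This post gave a brief walkthrough of the ES indexing flow, including how the HTTP request is parsed and how the target shard is determined. It still leaves a lot out, for example how remote nodes handle the request and the details of the Lucene-backed engine; later posts will continue with these topics.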