OpenCV Image Processing and Video Analysis in Detail

1、OpenCV4環境搭建

-

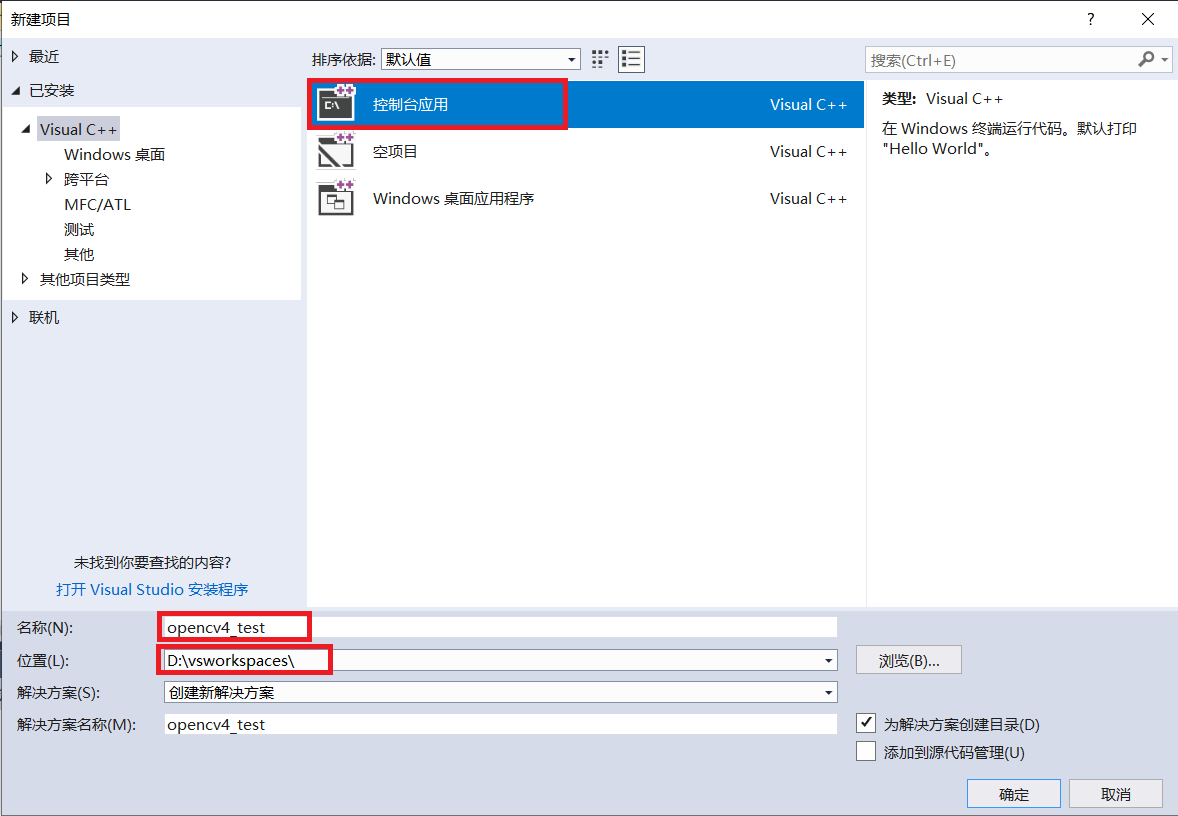

VS2017新建一個控制檯專案

-

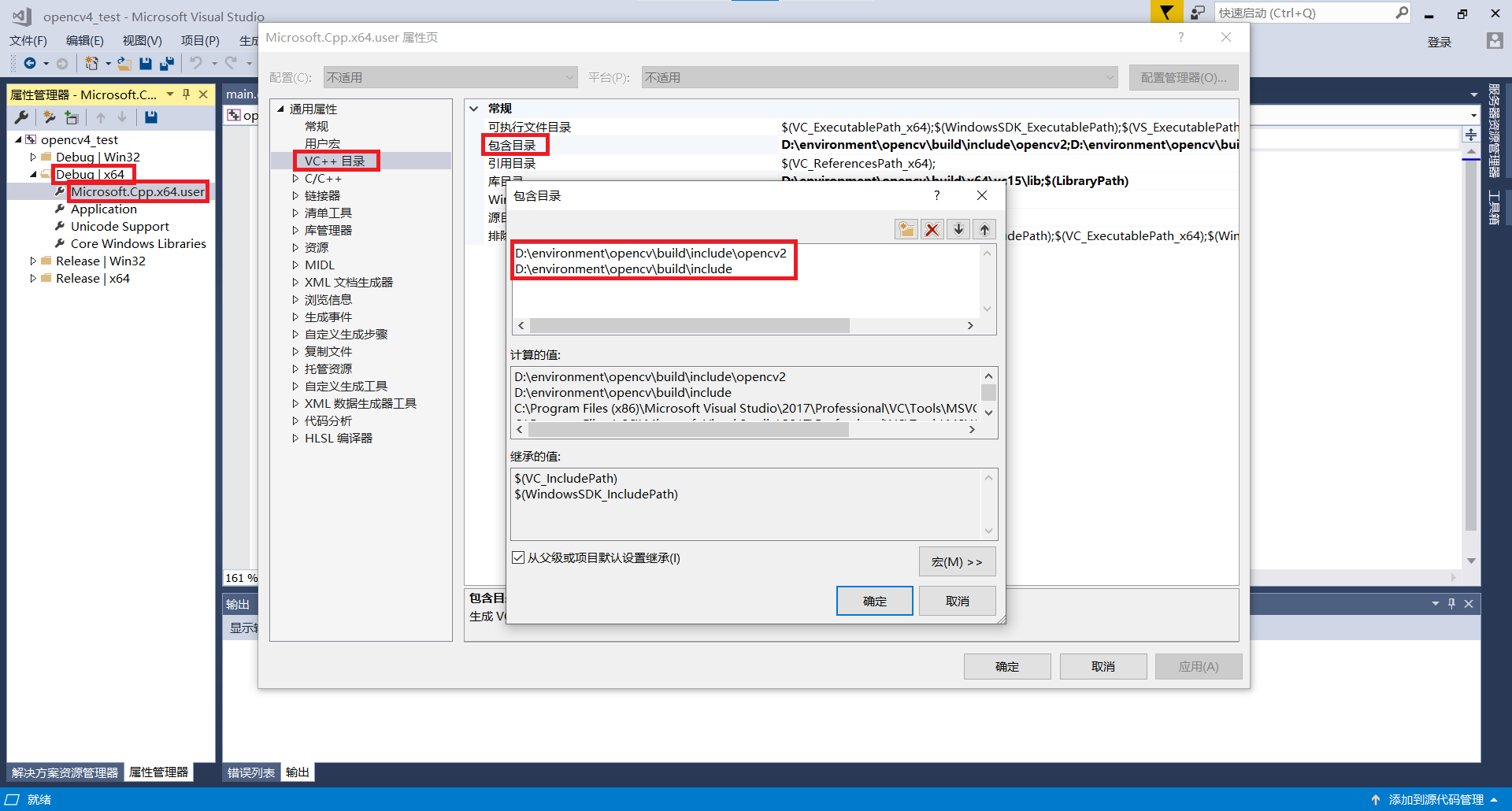

設定包含目錄

-

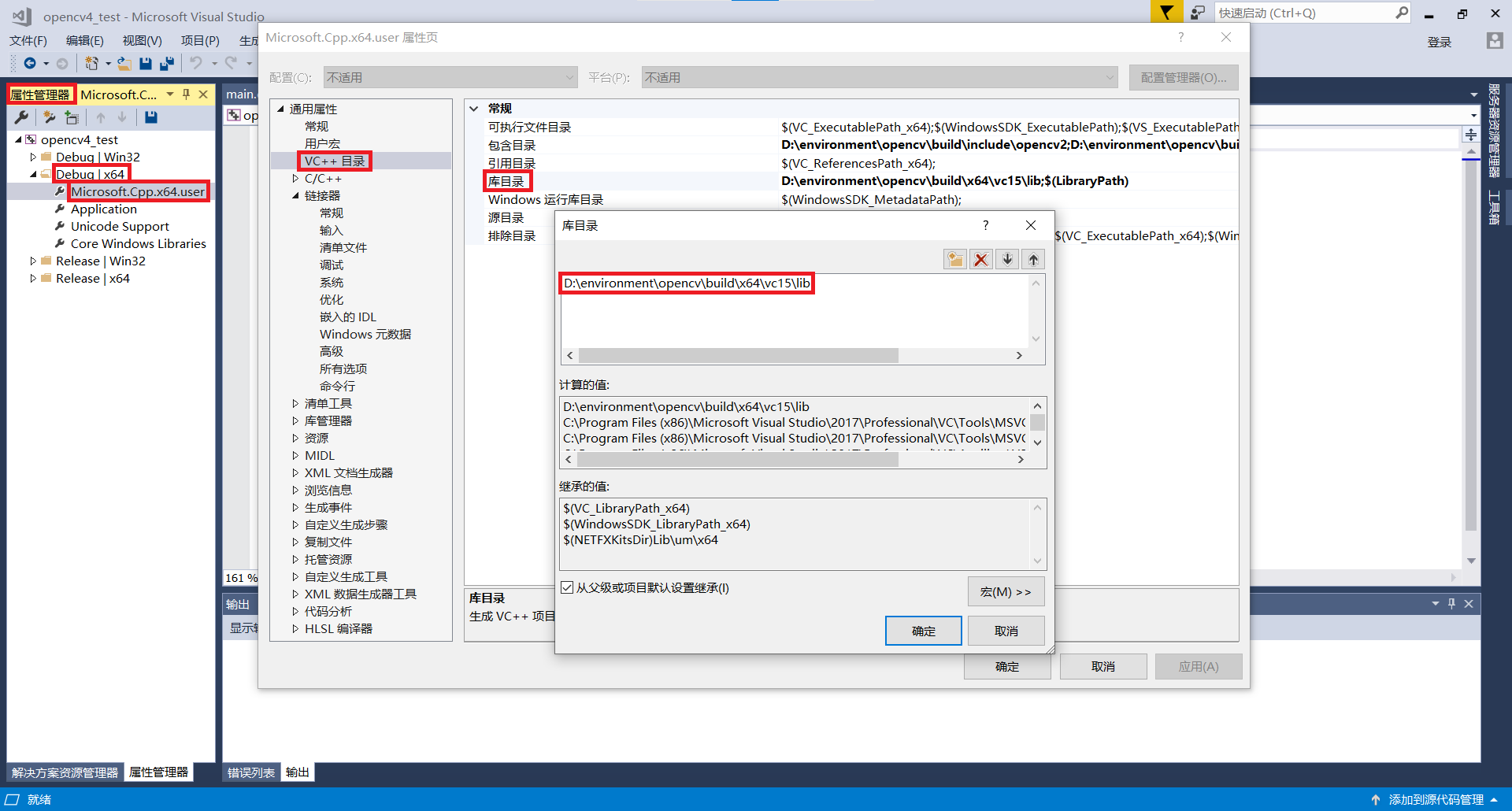

設定庫目錄

-

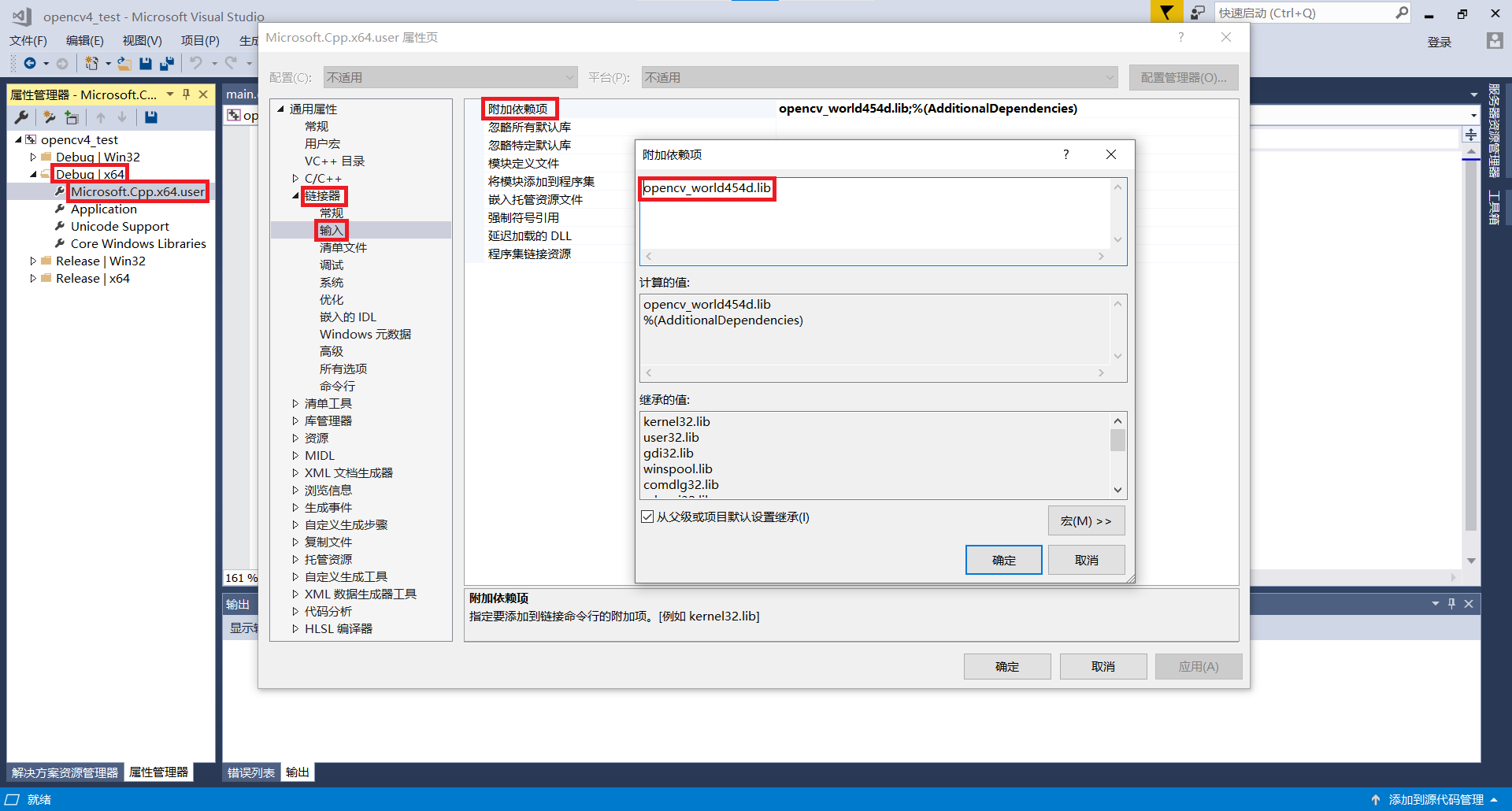

設定連結器

-

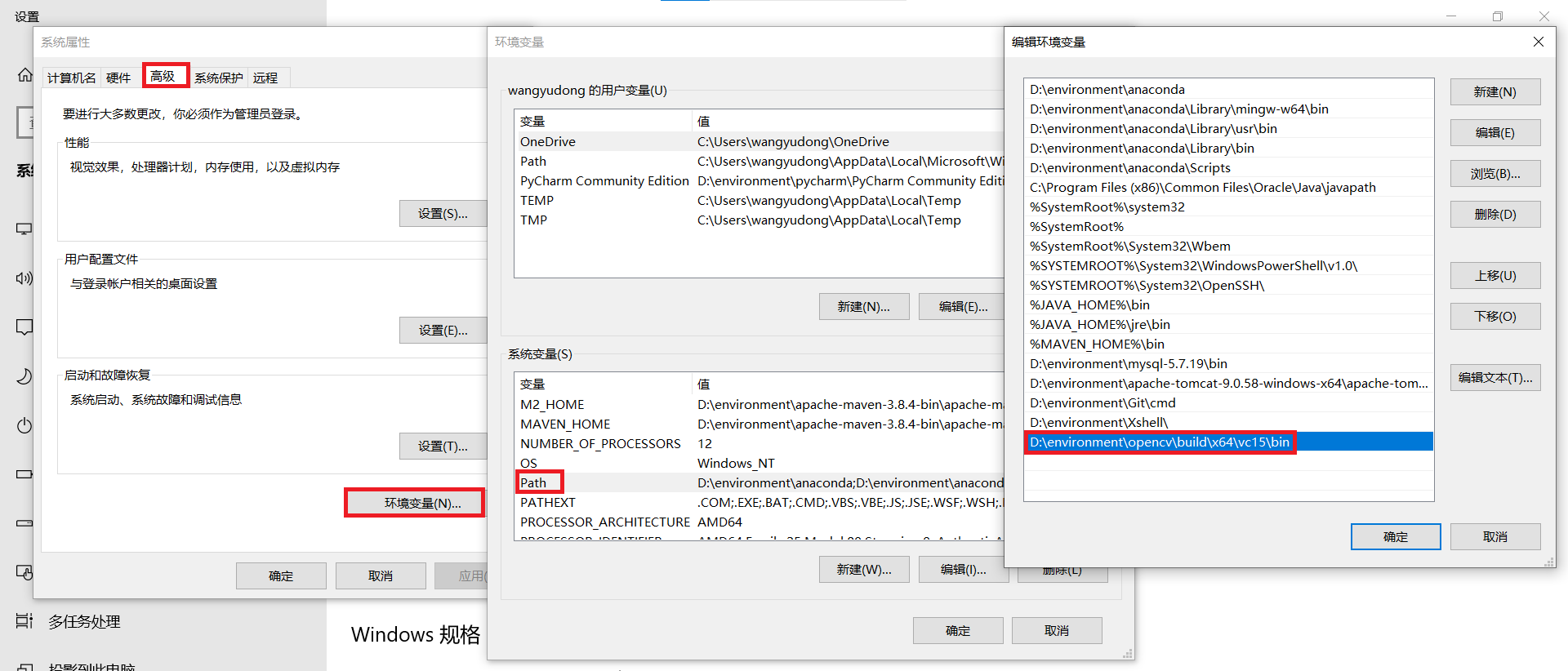

設定環境變數

-

重新啟動VS2017

2. A first image display program

main.cpp

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src = imread("D:/images/lena.jpg");

if (src.empty()) {

printf("could not find image file");

return -1;

}

namedWindow("input", WINDOW_AUTOSIZE);

imshow("input", src);

waitKey(0);

destroyAllWindows();

return 0;

}

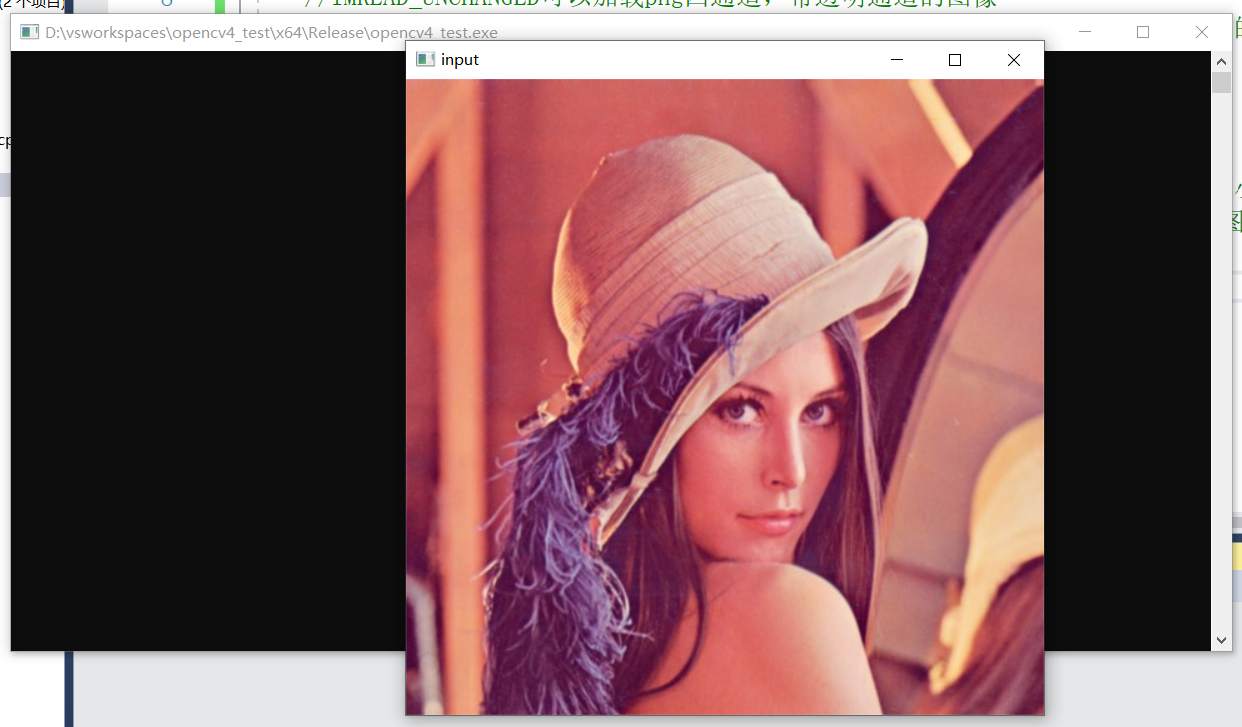

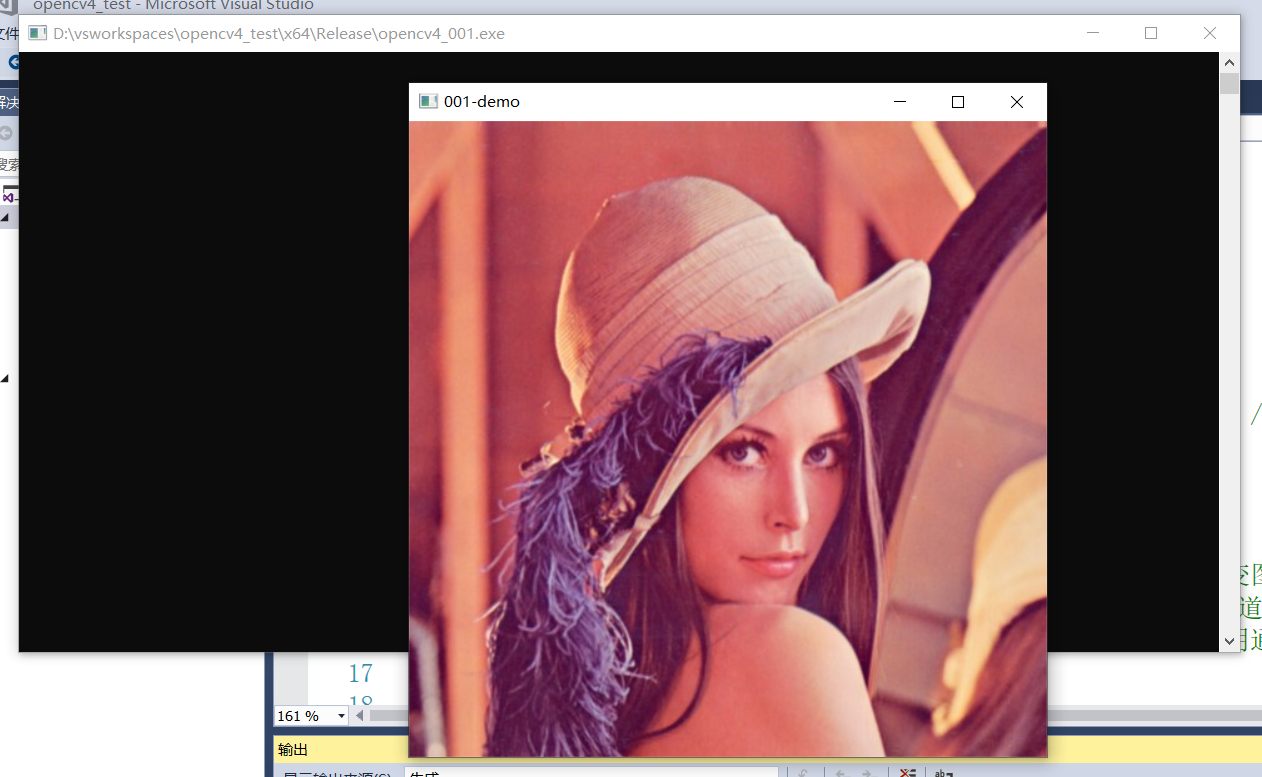

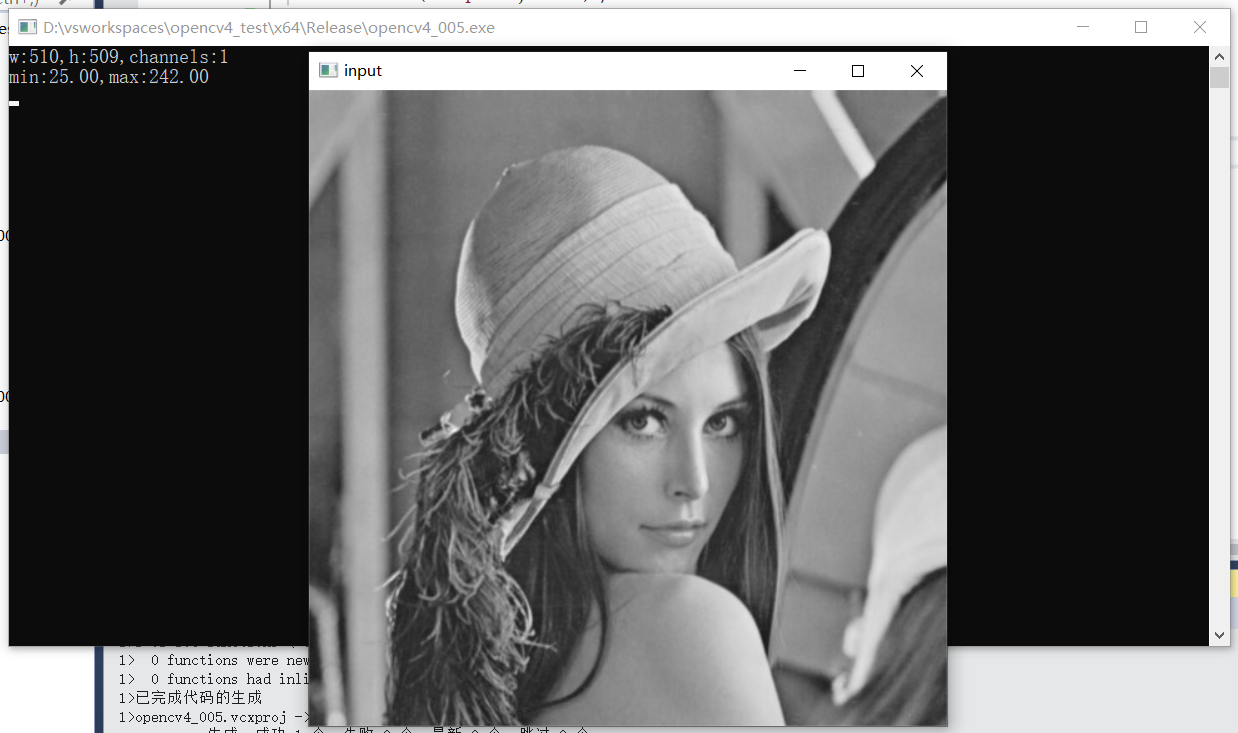

Result:

3、影象載入與儲存

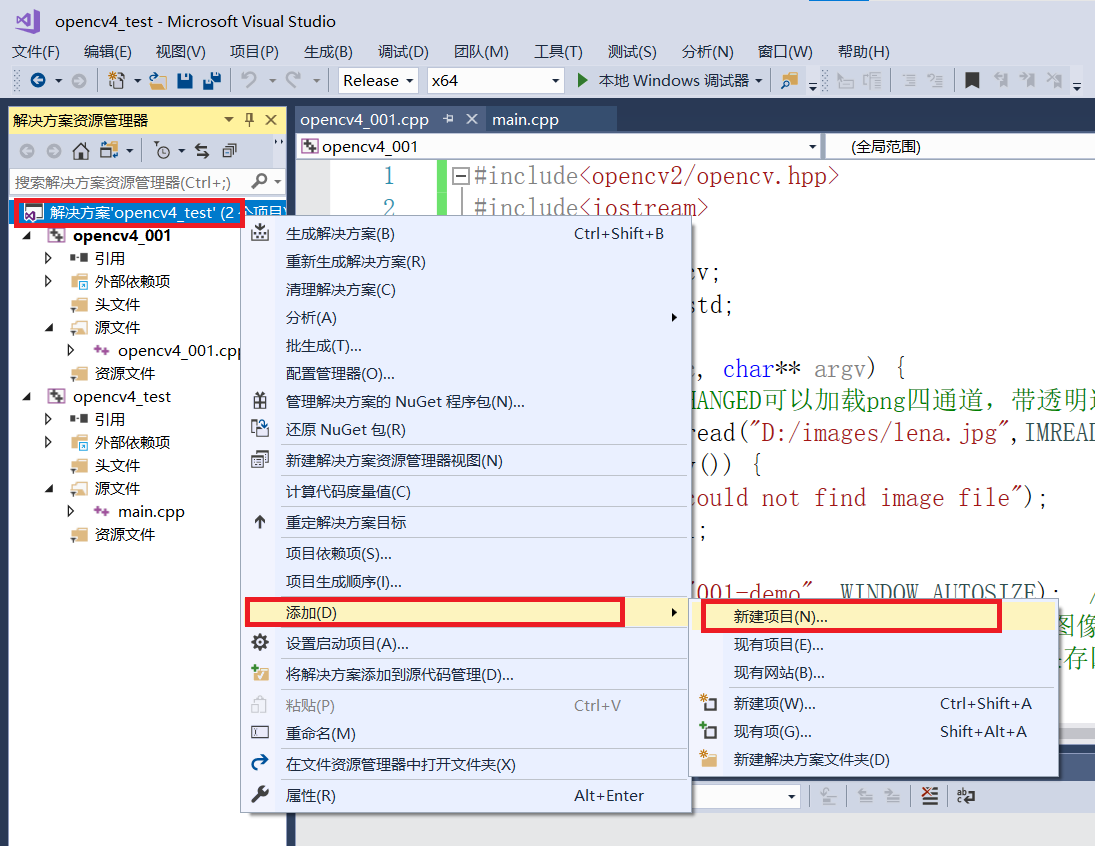

新增一個專案opencv4_001

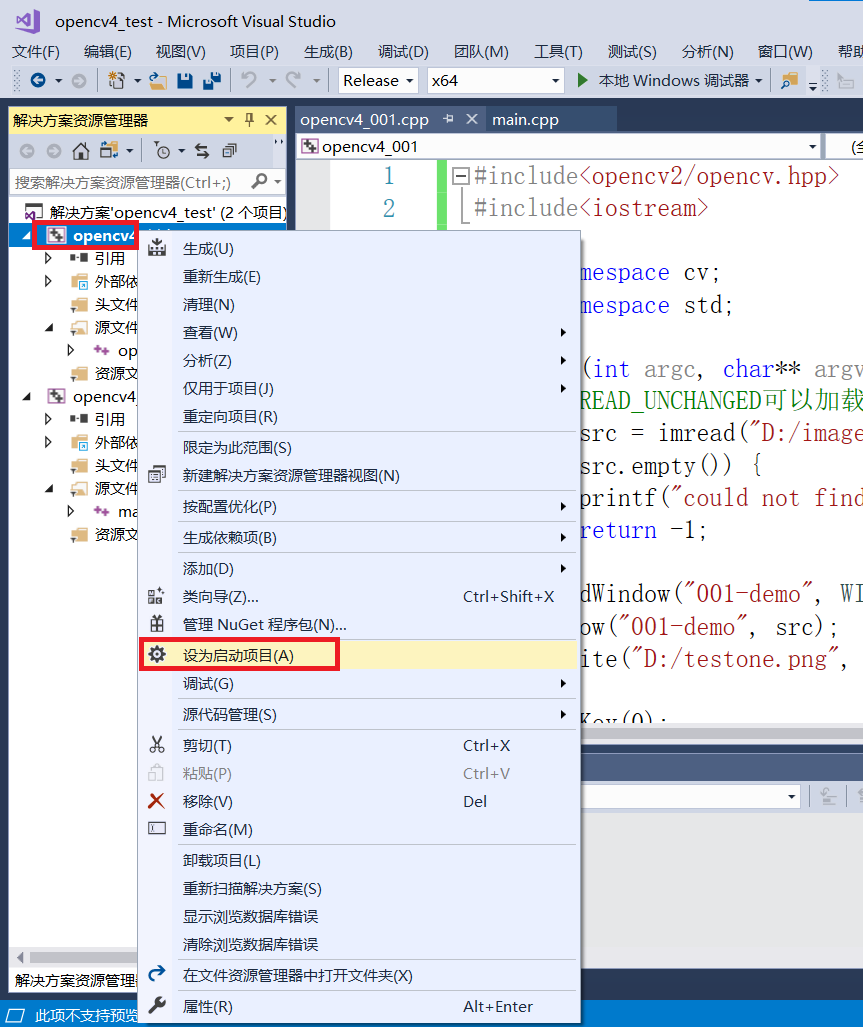

將該專案設為啟動專案

opencv4_001.cpp

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

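//IMREAD_UNCHANGED loads four-channel PNG images, including the alpha (transparency) channel

Mat src = imread("D:/images/lena.jpg",IMREAD_ANYCOLOR); //the flag controls the color format the image is loaded in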

if (src.empty()) {

printf("could not find image file");

return -1;

}

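namedWindow("001-demo", WINDOW_AUTOSIZE); //use WINDOW_FREERATIO instead to get a resizable window

imshow("001-demo", src); //imshow() displays color images as three channels only; to inspect a four-channel image, save it to disk

imwrite("D:/testone.png", src); //PNG can store four channels, including the alpha channel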

waitKey(0);

destroyAllWindows();

return 0;

}

Result:

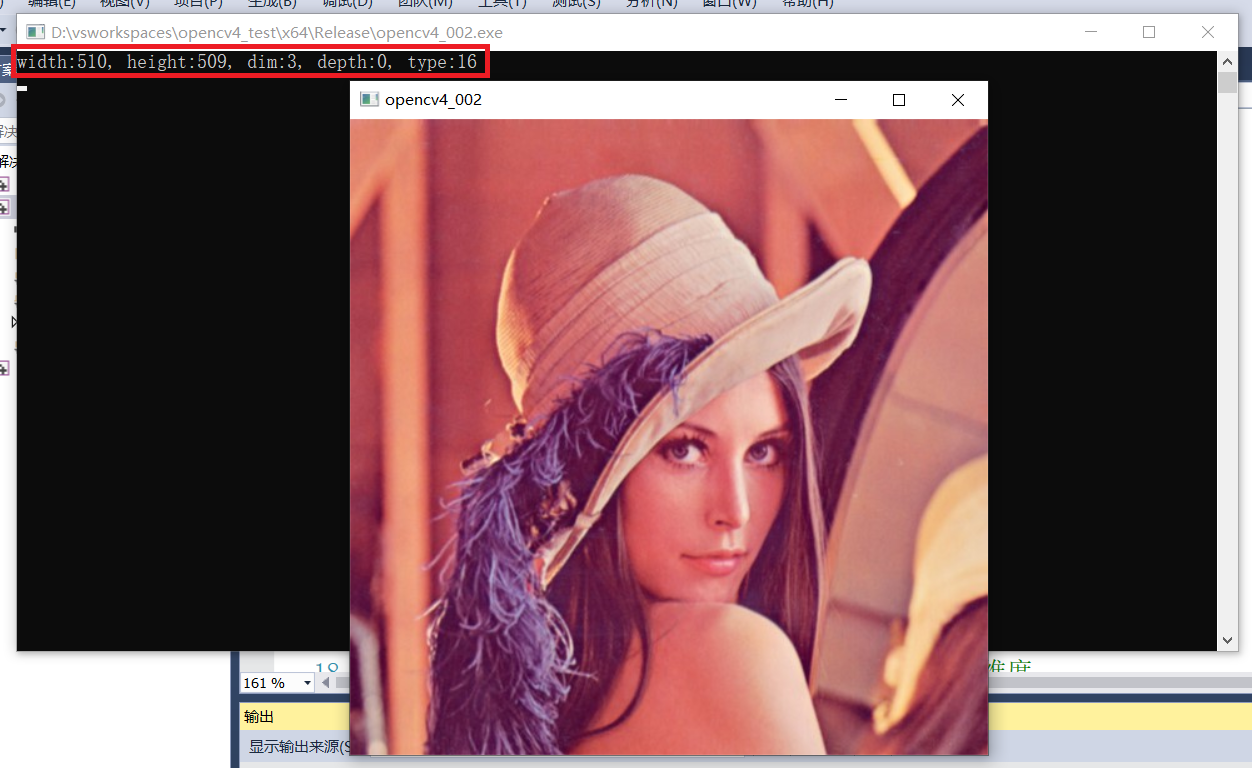

4. Understanding the Mat object

depth: the number of bits in each channel element of a pixel. In OpenCV, Mat.depth() returns a number from 0 to 6, each standing for an element type: enum{ CV_8U=0,CV_8S=1,CV_16U=2,CV_16S=3,CV_32S=4,CV_32F=5,CV_64F=6 }; so 0 and 1 are 8-bit, 2 and 3 are 16-bit, 4 and 5 are 32-bit, and 6 is 64-bit.

Because the type given when a Mat is created fixes the element type, it also fixes the depth of that Mat.

Image depth (depth)

| Number | Depth | Data type |
|---|---|---|
| 0 | CV_8U | 8-bit unsigned (byte) |
| 1 | CV_8S | 8-bit signed (byte) |
| 2 | CV_16U | 16-bit unsigned |
| 3 | CV_16S | 16-bit signed |
| 4 | CV_32S | 32-bit signed integer |
| 5 | CV_32F | 32-bit floating point |
| 6 | CV_64F | 64-bit double precision |

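An image type is the combination of image depth and channel count. A single-channel type has the same number as its depth; each additional channel adds 8 to the single-channel number. For example: single-channel CV_8UC1=0, two-channel CV_8UC2=CV_8UC1+8=8, three-channel CV_8UC3=CV_8UC1+8+8=16.

Image types (type, with the corresponding number in parentheses)

| One channel | Two channels | Three channels | Four channels |
|---|---|---|---|
| CV_8UC1(0) | CV_8UC2(8) | CV_8UC3(16) | CV_8UC4(24) |
| CV_32SC1(4) | CV_32SC2(12) | CV_32SC3(20) | CV_32SC4(28) |
| CV_32FC1(5) | CV_32FC2(13) | CV_32FC3(21) | CV_32FC4(29) |
| CV_64FC1(6) | CV_64FC2(14) | CV_64FC3(22) | CV_64FC4(30) |
| CV_16SC1(3) | CV_16SC2(11) | CV_16SC3(19) | CV_16SC4(27) |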
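The numbering rule in the table can be written as a one-line formula. The following is a minimal sketch in plain C++ with no OpenCV dependency; the function name `mat_type_code` is made up for this illustration and is not an OpenCV API.

```cpp
#include <cassert>

// Hypothetical helper (not an OpenCV function): combines a depth code (0-6)
// and a channel count (1-4) into the type number listed in the table.
int mat_type_code(int depth, int channels) {
    assert(depth >= 0 && depth <= 6);
    assert(channels >= 1 && channels <= 4);
    // Each extra channel adds 8 to the single-channel number.
    return depth + (channels - 1) * 8;
}
```

For instance, depth 0 (CV_8U) with three channels gives 16, which matches CV_8UC3 in the table.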

opencv4_002.cpp

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src = imread("D:/images/lena.jpg");

if (src.empty()) {

printf("could not find image file");

return -1;

}

namedWindow("opencv4_002", WINDOW_AUTOSIZE);

imshow("opencv4_002", src);

int width = src.cols; //image width

int height = src.rows; //image height

int dim = src.channels(); //number of channels

int d = src.depth(); //image depth

int t = src.type(); //image type

printf("width:%d, height:%d, dim:%d, depth:%d, type:%d \n", width, height, dim, d, t);

waitKey(0);

destroyAllWindows();

return 0;

}

效果:

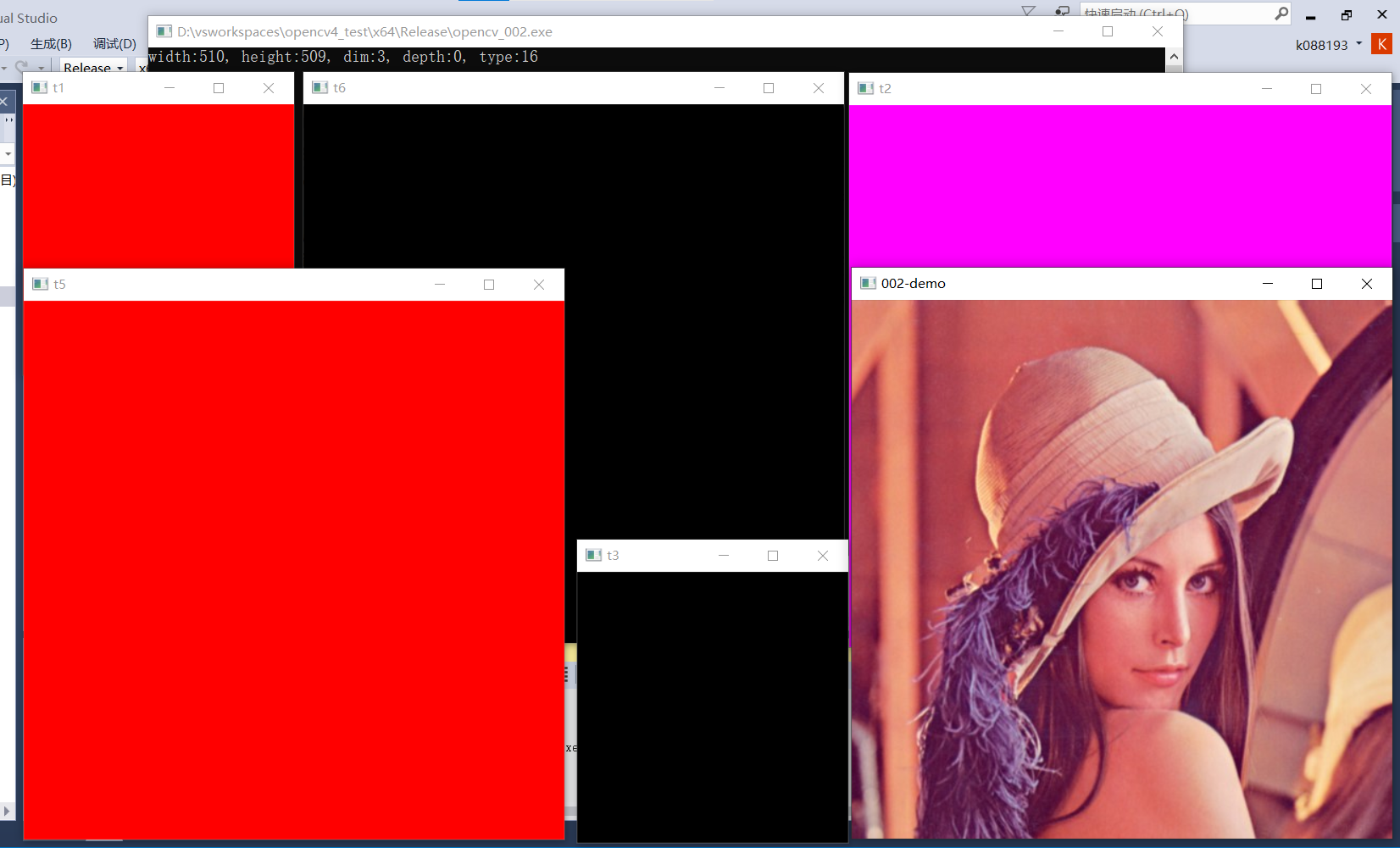

5、Mat物件建立與使用

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src = imread("D:/images/lena.jpg");

if (src.empty()) {

printf("could not find image file");

return -1;

}

//namedWindow("opencv4_002", WINDOW_AUTOSIZE);

//imshow("opencv4_002", src);

int width = src.cols; //image width

int height = src.rows; //image height

int dim = src.channels(); //number of channels

int d = src.depth(); //image depth

int t = src.type(); //image type

if (t == CV_8UC3) {

printf("width:%d, height:%d, dim:%d, depth:%d, type:%d \n", width, height, dim, d, t);

}

//create one

Mat t1 = Mat(256, 256, CV_8UC3);

t1 = Scalar(0, 0, 255);

imshow("t1", t1);

//create two

Mat t2 = Mat(Size(512, 512), CV_8UC3);

t2 = Scalar(255, 0, 255);

imshow("t2", t2);

//create three

Mat t3 = Mat::zeros(Size(256, 256), CV_8UC3);

imshow("t3", t3);

//create from source

Mat t4 = src;

Mat t5;

//Mat t5 = src.clone();

src.copyTo(t5);

t5 = Scalar(0, 0, 255);

imshow("t5", t5);

imshow("002-demo", src);

Mat t6 = Mat::zeros(src.size(), src.type());

imshow("t6", t6);

waitKey(0);

destroyAllWindows();

return 0;

}

效果:

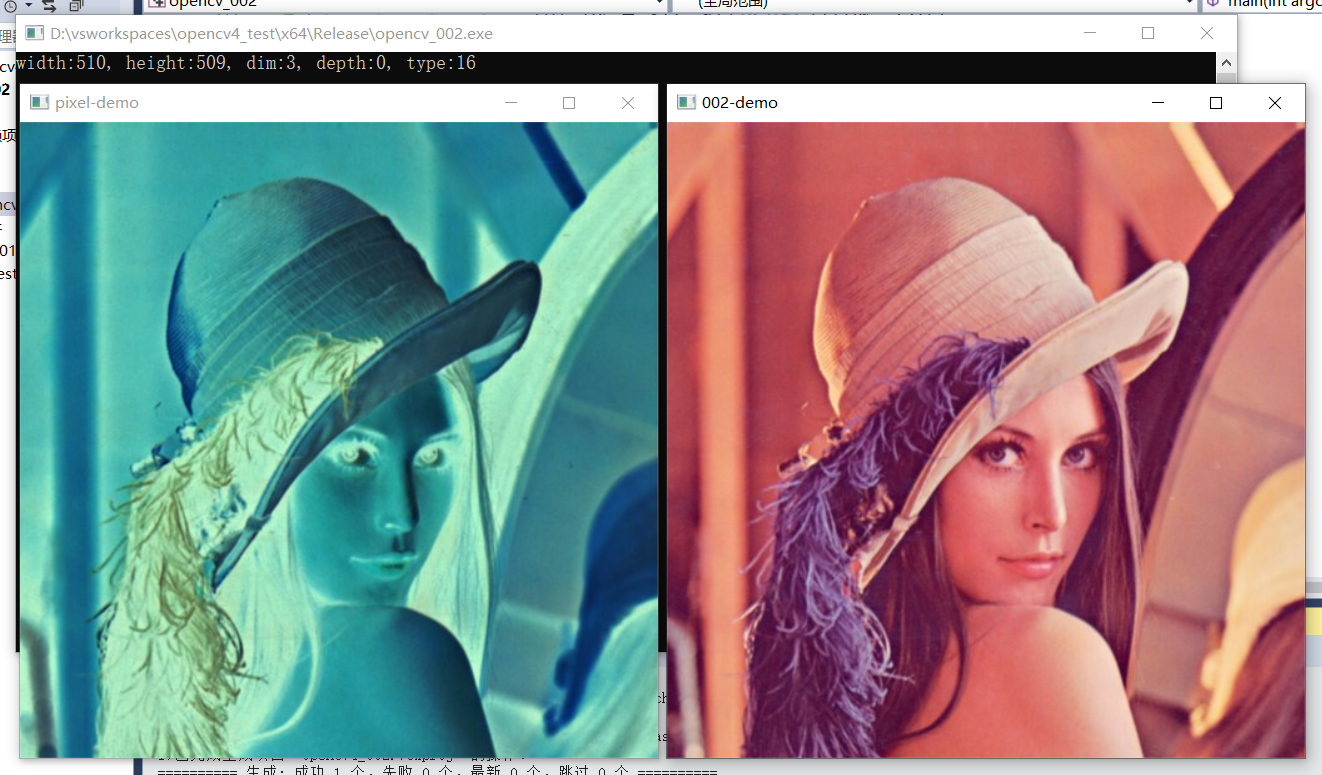

6、遍歷與存取每個畫素

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src = imread("D:/images/lena.jpg");

if (src.empty()) {

printf("could not find image file");

return -1;

}

//namedWindow("opencv4_002", WINDOW_AUTOSIZE);

//imshow("opencv4_002", src);

int width = src.cols; //image width

int height = src.rows; //image height

int dim = src.channels(); //number of channels

int d = src.depth(); //image depth

int t = src.type(); //image type

if (t == CV_8UC3) {

printf("width:%d, height:%d, dim:%d, depth:%d, type:%d \n", width, height, dim, d, t);

}

//create one

Mat t1 = Mat(256, 256, CV_8UC3);

t1 = Scalar(0, 0, 255);

//imshow("t1", t1);

//create two

Mat t2 = Mat(Size(512, 512), CV_8UC3);

t2 = Scalar(255, 0, 255);

//imshow("t2", t2);

//create three

Mat t3 = Mat::zeros(Size(256, 256), CV_8UC3);

//imshow("t3", t3);

//create from source

Mat t4 = src;

Mat t5;

//Mat t5 = src.clone();

src.copyTo(t5);

t5 = Scalar(0, 0, 255);

//imshow("t5", t5);

imshow("002-demo", src);

Mat t6 = Mat::zeros(src.size(), src.type());

//imshow("t6", t6);

//int height = src.rows;

//int width = src.cols;

/*int ch = src.channels();

for (int row = 0; row < height; row++) { //visit every pixel, row by row

for (int col = 0; col < width; col++) {

if (ch == 3) {

Vec3b pixel = src.at<Vec3b>(row, col); //use Vec3i or Vec3f for images with int or float elements

int blue = pixel[0];

int green = pixel[1];

int red = pixel[2];

src.at<Vec3b>(row, col)[0] = 255 - blue; //invert the BGR image

src.at<Vec3b>(row, col)[1] = 255 - green;

src.at<Vec3b>(row, col)[2] = 255 - red;

}

if (ch == 1) {

int pv = src.at<uchar>(row, col);

src.at<uchar>(row, col) = 255 - pv;

}

}

}*/

Mat result = Mat::zeros(src.size(), src.type());

for (int row = 0; row < height; row++) { //traverse with row pointers

uchar* current_row = src.ptr<uchar>(row);

uchar* result_row = result.ptr<uchar>(row); //write the copy through a pointer into the output row

for (int col = 0; col < width; col++) {

if (dim == 3) {

int blue = *current_row++;

int green = *current_row++;

int red = *current_row++;

*result_row++ = blue;

*result_row++ = green;

*result_row++ = red;

}

if (dim == 1) {

int pv = *current_row++;

*result_row++ = pv;

}

}

}

//imshow("pixel-demo", src);

imshow("pixel-demo", result);

waitKey(0);

destroyAllWindows();

return 0;

}

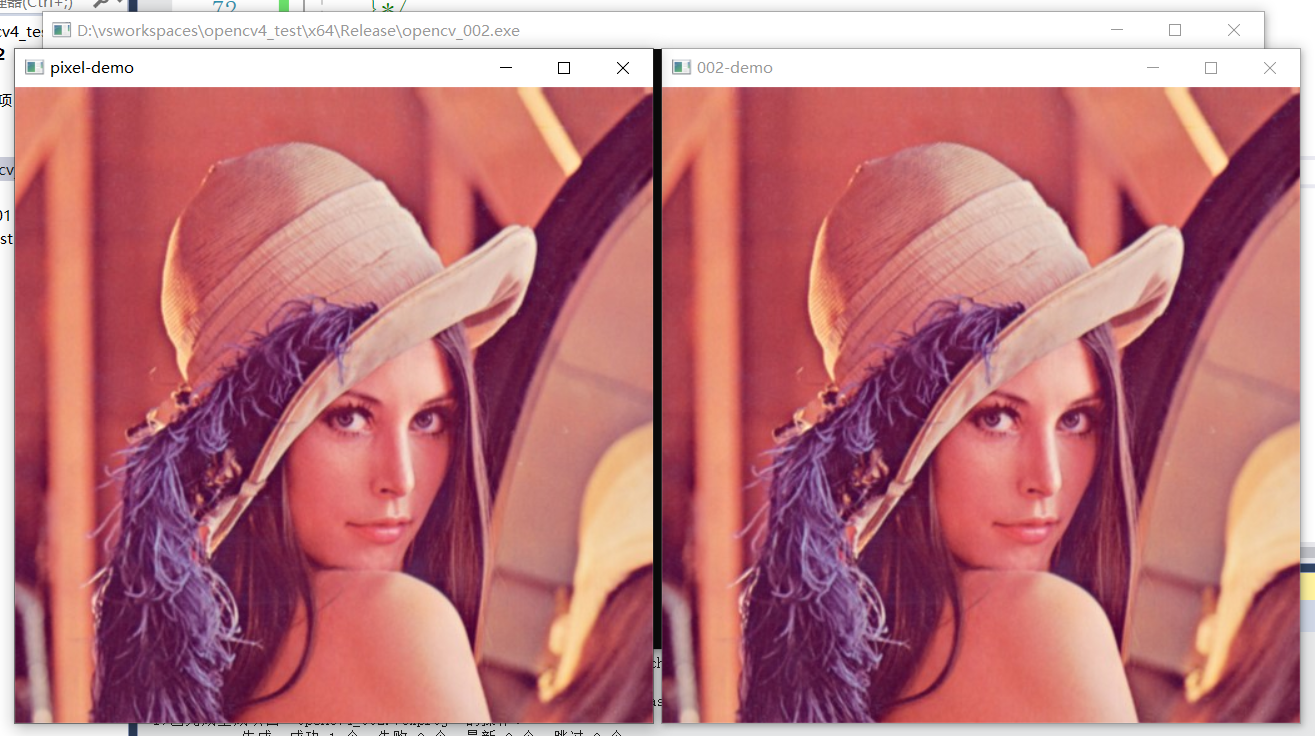

Result:

1. Traversing every pixel by row and column

2. Traversing the image with pointers

7. Image arithmetic

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src1 = imread("D:/environment/opencv/sources/samples/data/WindowsLogo.jpg");

Mat src2 = imread("D:/environment/opencv/sources/samples/data/LinuxLogo.jpg");

if (src1.empty() || src2.empty()) {

printf("could not find image file");

return -1;

}

//imshow("input1", src1);

//imshow("input2", src2);

/*

//API demo

Mat dst1;

add(src1, src2, dst1);

imshow("add-demo", dst1);

Mat dst2;

subtract(src1, src2, dst2);

imshow("subtract-demo", dst2);

Mat dst3;

multiply(src1, src2, dst3);

imshow("multiply-demo", dst3);

Mat dst4;

divide(src1, src2, dst4);

imshow("divide-demo", dst4);

*/

Mat src = imread("D:/images/lena.jpg");

imshow("input", src);

Mat black = Mat::zeros(src.size(), src.type());

black = Scalar(40, 40, 40);

Mat dst_add,dst_subtract,dst_addWeight;

add(src, black, dst_add); //increase brightness

subtract(src, black, dst_subtract); //decrease brightness

addWeighted(src, 1.5, black, 0.5, 0.0, dst_addWeight); //increase contrast; addWeighted(image 1, weight 1, image 2, weight 2, added constant, output)

imshow("result_add", dst_add);

imshow("result_subtract", dst_subtract);

imshow("result_addWeight", dst_addWeight);

waitKey(0);

destroyAllWindows();

return 0;

}

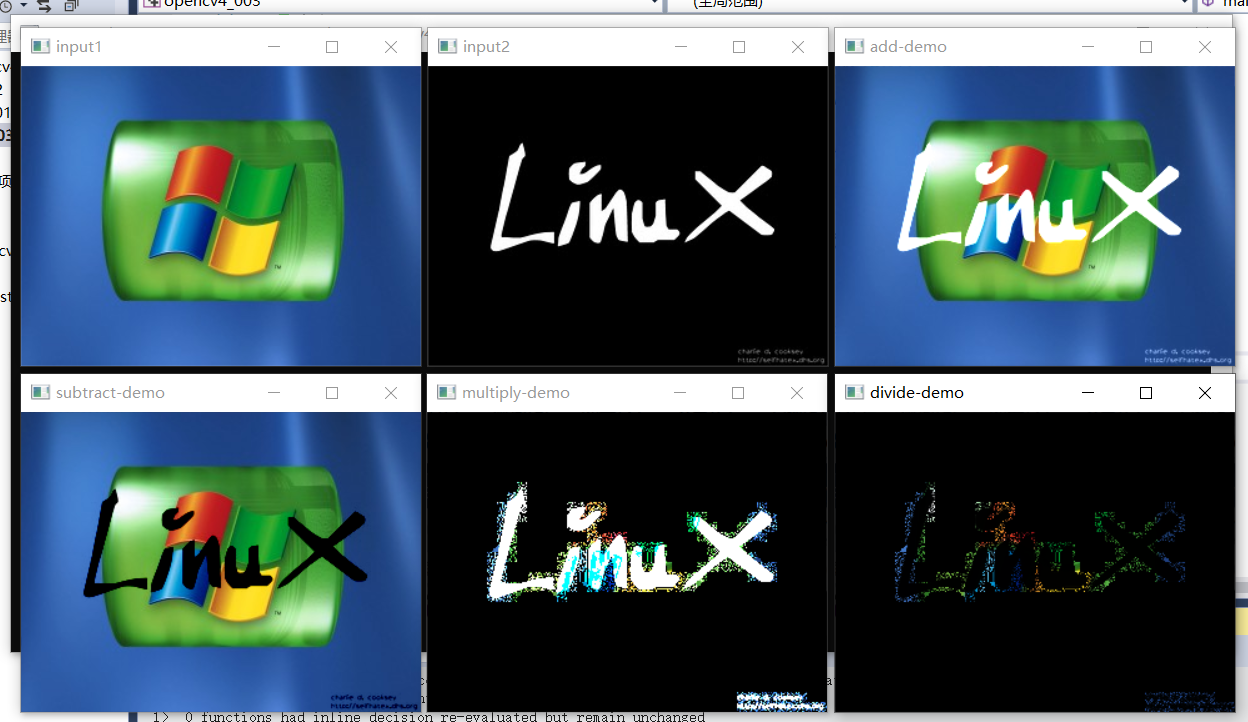

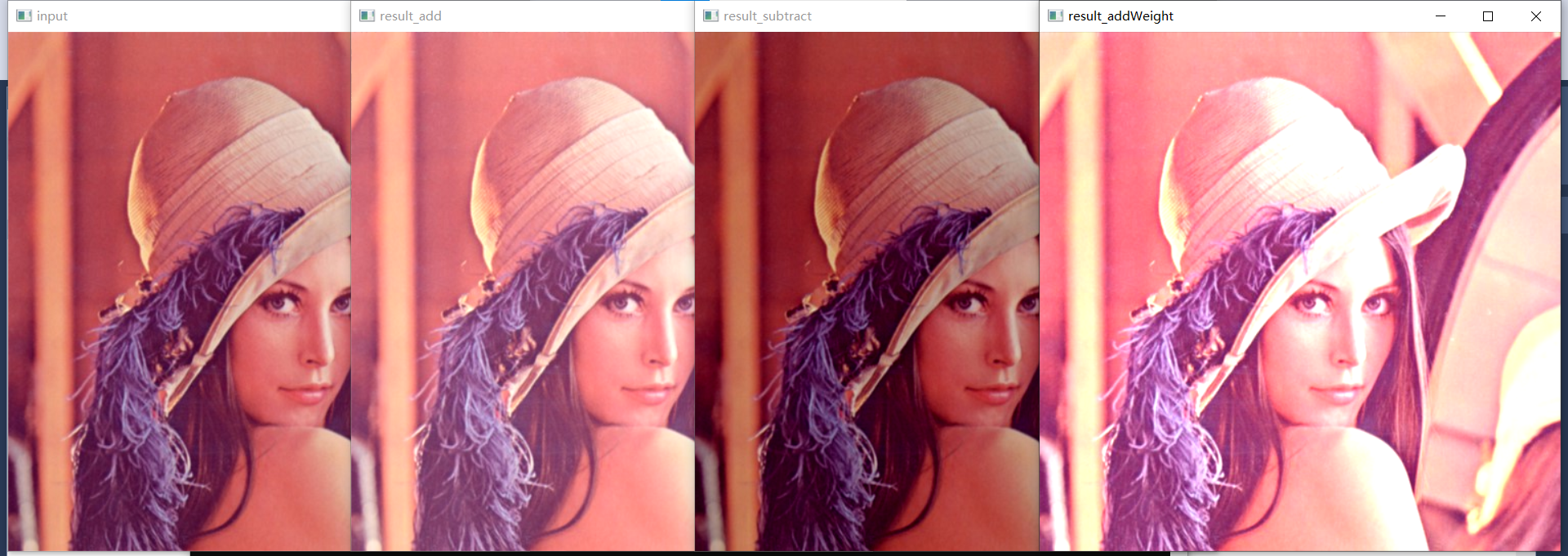

效果:

1、不同影象加減乘除運算

2、影象亮度及對比度調整

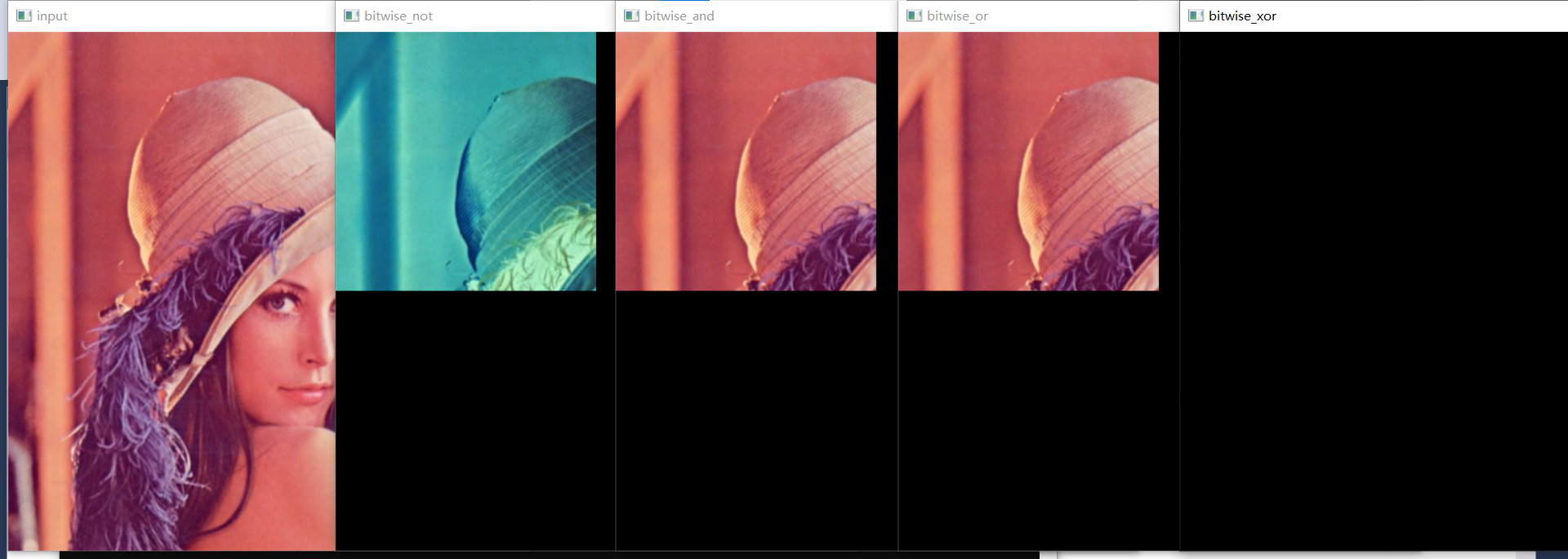

8、影象位元運算

位元運算包括:與、或、非、互斥或,進行影象取反等操作時效率較高。
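To make the masked NOT concrete, here is a small sketch on a flat byte array in plain C++. The helper `masked_not` is hypothetical and only mimics the idea of a mask-limited inversion; in this sketch the output starts as zeros, which is an assumption of the example rather than a statement about OpenCV.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper: inverts src[i] only where mask[i] is non-zero.
// Positions outside the mask are left at 0 in the output.
std::vector<std::uint8_t> masked_not(const std::vector<std::uint8_t>& src,
                                     const std::vector<std::uint8_t>& mask) {
    std::vector<std::uint8_t> dst(src.size(), 0);
    for (std::size_t i = 0; i < src.size() && i < mask.size(); ++i) {
        if (mask[i] != 0) {
            // Bitwise NOT on 8 bits is the same as 255 - value.
            dst[i] = static_cast<std::uint8_t>(~src[i]);
        }
    }
    return dst;
}
```

Any non-zero mask value selects a pixel, so a mask value of 127 behaves the same as 255 here.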

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src = imread("D:/images/lena.jpg");

if (src.empty()) {

printf("could not find image file");

return -1;

}

imshow("input", src);

//create a mask matrix that limits the ROI

Mat mask = Mat::zeros(src.size(), CV_8UC1);

int width = src.cols / 2;

int height = src.rows / 2;

for (int row = 0; row < height; row++) {

for (int col = 0; col < width; col++) {

mask.at<uchar>(row, col) = 127;

}

}

Mat dst; //inverted image

//mask marks the ROI

//NOT

bitwise_not(src, dst, mask); //bitwise_not(input, output, mask): only pixels where mask is non-zero are inverted

imshow("bitwise_not", dst);

//AND

bitwise_and(src, src, dst, mask);

imshow("bitwise_and", dst);

//OR

bitwise_or(src, src, dst, mask);

imshow("bitwise_or", dst);

//XOR: keeps the bits that differ between the two inputs (all zero here, because both inputs are src)

bitwise_xor(src, src, dst, mask);

imshow("bitwise_xor", dst);

waitKey(0);

destroyAllWindows();

return 0;

}

效果:

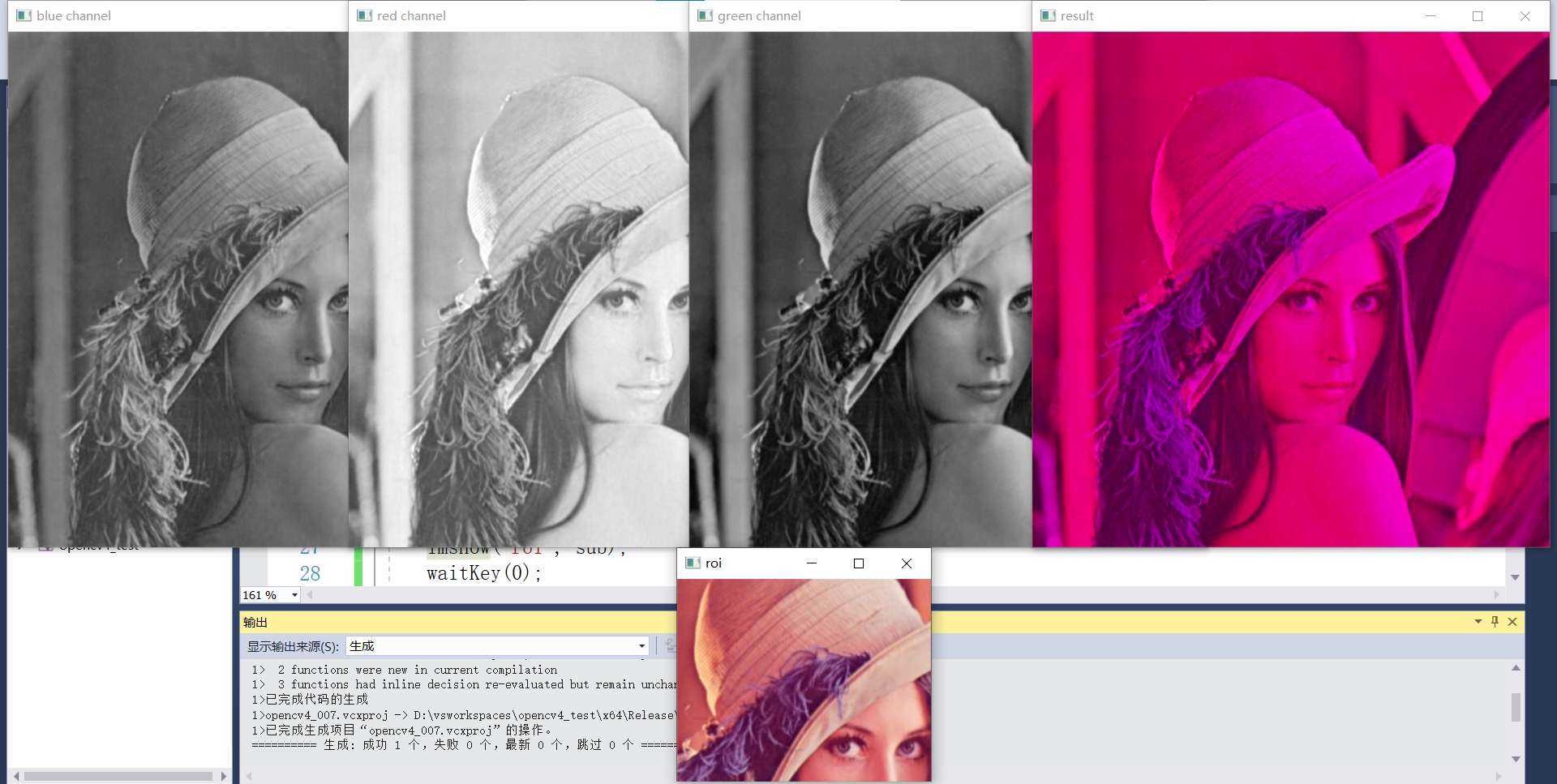

9、畫素資訊統計

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

//Mat src = imread("D:/images/lena.jpg",IMREAD_GRAYSCALE);

Mat src = imread("D:/images/lena.jpg");

if (src.empty()) {

printf("could not find image file");

return -1;

}

namedWindow("input", WINDOW_AUTOSIZE);

imshow("input", src);

int h = src.rows;

int w = src.cols;

int ch = src.channels();

printf("w:%d,h:%d,channels:%d\n", w, h, ch);

//minimum and maximum (minMaxLoc expects a single-channel image, so load with IMREAD_GRAYSCALE first)

//double min_val;

//double max_val;

//Point minloc;

//Point maxloc;

//minMaxLoc(src, &min_val, &max_val, &minloc, &maxloc);

//printf("min:%.2f,max:%.2f\n", min_val, max_val);

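//pixel value statistics: count how many pixels take each value

vector<int> hist(256);

for (int i = 0; i < 256; i++) {

hist[i] = 0;

}

for (int row = 0; row < h; row++) {

for (int col = 0; col < w; col++) {

int pv = src.ptr<uchar>(row)[col * ch]; //first channel of each pixel; at<uchar>(row, col) is only valid for single-channel images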

hist[pv]++;

}

}

//mean and standard deviation

Scalar s = mean(src);

printf("mean:%.2f,%.2f,%.2f\n", s[0], s[1], s[2]);

Mat mm, mstd;

meanStdDev(src, mm, mstd);

int rows = mstd.rows;

printf("rows:%d\n", rows);

printf("stddev:%.2f,%.2f,%.2f\n", mstd.at<double>(0, 0), mstd.at<double>(1, 0), mstd.at<double>(2, 0));

waitKey(0);

destroyAllWindows();

return 0;

}

效果一(最大最小值):

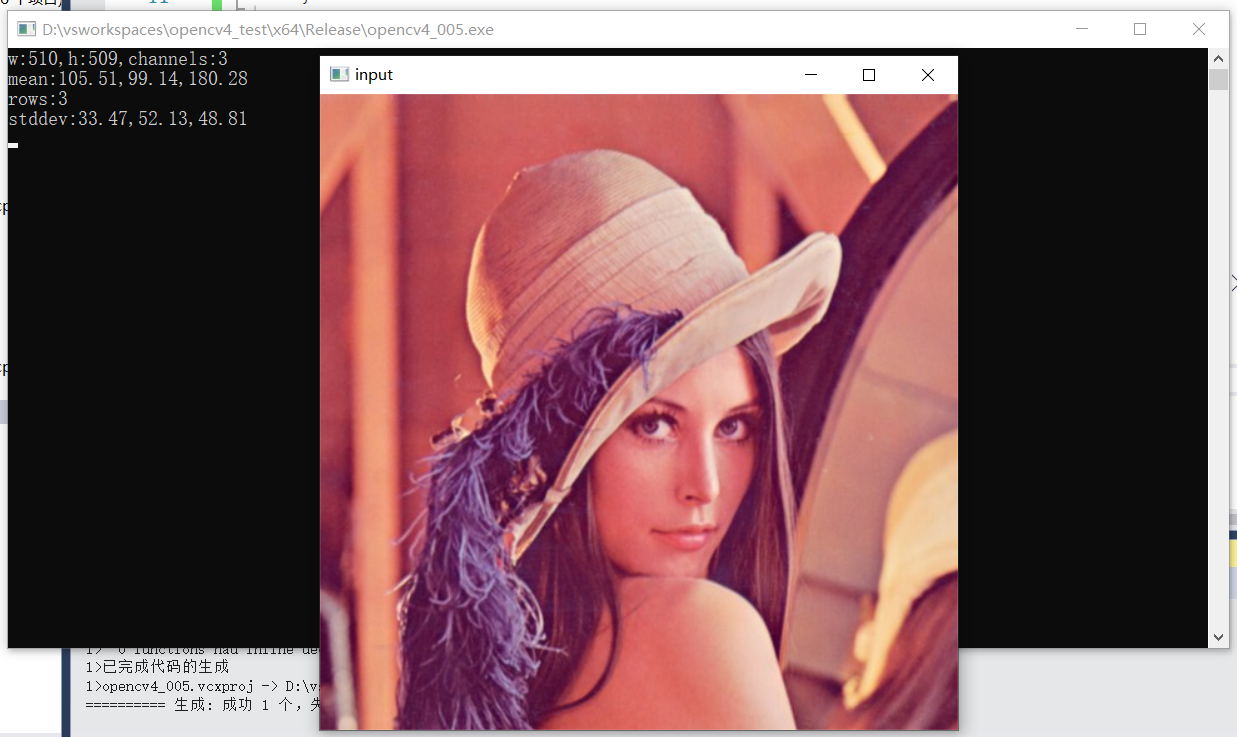

效果二(均值方差):

10、圖形繪製與填充

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat canvas = Mat::zeros(Size(512, 512), CV_8UC3);

namedWindow("canvas", WINDOW_AUTOSIZE);

imshow("canvas", canvas);

//drawing API demo

line(canvas, Point(10, 10), Point(400, 400), Scalar(0, 0, 255), 1, LINE_8); //start and end points of the segment

Rect rect(100, 100, 200, 200); //draw a rectangle

rectangle(canvas, rect, Scalar(255, 0, 0), 1, 8);

circle(canvas, Point(256, 256), 100, Scalar(0, 255, 255), -1, 8); //draw a circle

RotatedRect rrt; //draw an ellipse

rrt.center = Point2f(256, 256);

rrt.angle = 45.0;

rrt.size = Size(100, 200);

ellipse(canvas, rrt, Scalar(0, 255, 255), -1, 8); //a negative thickness fills the shape; a positive integer is the line width

imshow("canvas", canvas);

Mat image = Mat::zeros(Size(512, 512), CV_8UC3);

int x1 = 0, y1 = 0;

int x2 = 0, y2 = 0;

RNG rng(12345); //random number generator

while (true) {

x1 = (int)rng.uniform(0, 512); //uniform random integer in [0, 512)

x2 = (int)rng.uniform(0, 512);

y1 = (int)rng.uniform(0, 512);

y2 = (int)rng.uniform(0, 512);

int w = abs(x2 - x1);

int h = abs(y2 - y1);

rect.x = x1; //top-left corner of the rectangle

rect.y = y1;

rect.width = w;

rect.height = h;

//image = Scalar(0, 0, 0); //clear the canvas so that only one shape is visible at a time

rectangle(image, rect, Scalar((int)rng.uniform(0, 255), (int)rng.uniform(0, 255), (int)rng.uniform(0, 255)), 1, LINE_8);

//line(image, Point(x1, y1), Point(x2, y2), Scalar((int)rng.uniform(0, 255), (int)rng.uniform(0, 255), (int)rng.uniform(0, 255)), 1, LINE_8);

imshow("image", image);

char c = waitKey(10);

if (c == 27) {

break;

}

}

waitKey(0);

destroyAllWindows();

return 0;

}

Result:

11. Splitting and merging image channels

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src = imread("D:/images/lena.jpg");

vector<Mat> mv; //container for the separated channels

split(src, mv);

int size = mv.size();

printf("number of channels:%d\n", size);

imshow("blue channel", mv[0]);

imshow("green channel", mv[1]);

imshow("red channel", mv[2]);

mv[1] = Scalar(0); //set every pixel of channel mv[1] to 0

Mat dst;

merge(mv, dst);

imshow("result", dst);

Rect roi; //select an ROI in the image and display it

roi.x = 100;

roi.y = 100;

roi.width = 250;

roi.height = 200;

Mat sub = src(roi);

imshow("roi", sub);

waitKey(0);

destroyAllWindows();

return 0;

}

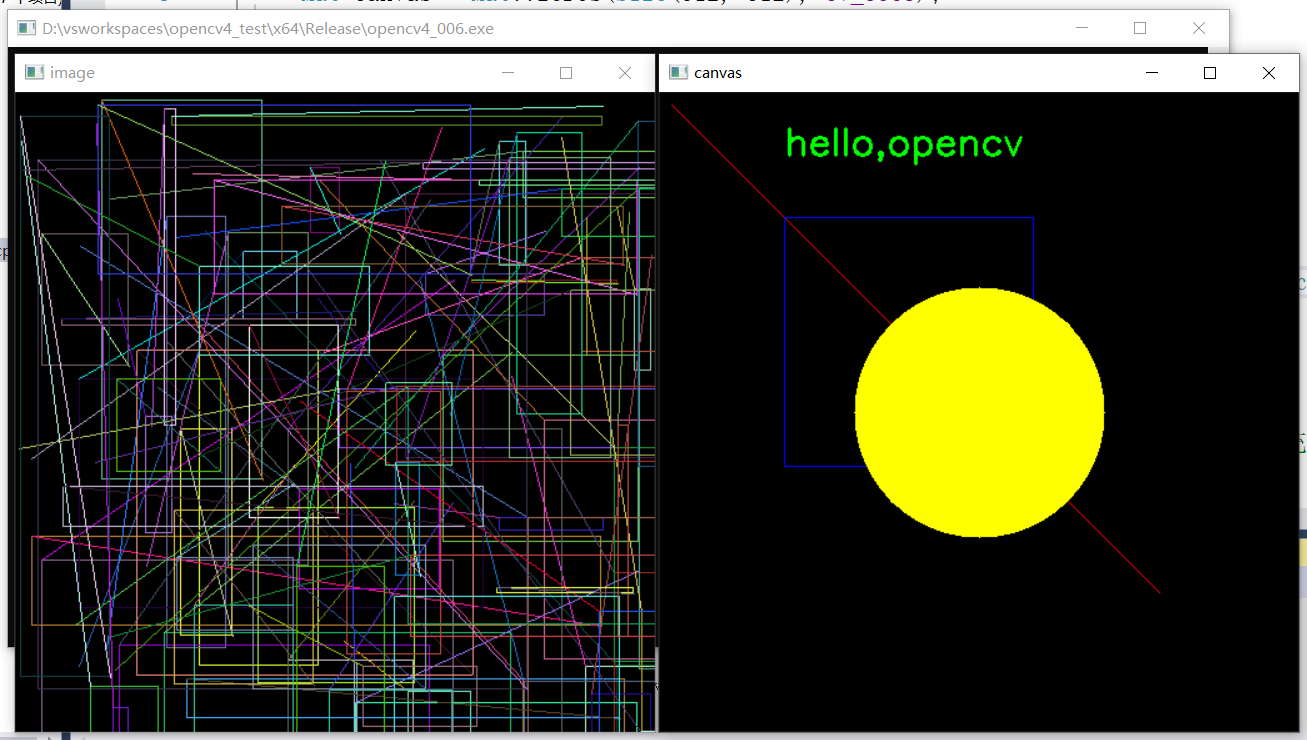

Result:

12. Image histogram

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src = imread("D:/images/lena.jpg");

vector<Mat> mv; //container for the separated channels

split(src, mv);

int size = mv.size();

//compute the histograms

int histSize = 256;

float range[] = { 0,256 }; //the upper bound is exclusive, so 256 is needed to count value 255

const float* histRanges = { range };

Mat blue_hist, green_hist, red_hist;

calcHist(&mv[0], 1, 0, Mat(), blue_hist, 1, &histSize, &histRanges, true, false); //histogram of each channel

calcHist(&mv[1], 1, 0, Mat(), green_hist, 1, &histSize, &histRanges, true, false);

calcHist(&mv[2], 1, 0, Mat(), red_hist, 1, &histSize, &histRanges, true, false);

Mat result = Mat::zeros(Size(600, 400), CV_8UC3);

int margin = 50;

int nm = result.rows - 2 * margin;

normalize(blue_hist, blue_hist, 0, nm, NORM_MINMAX, -1, Mat()); //normalize the histogram into the drawing range

normalize(green_hist, green_hist, 0, nm, NORM_MINMAX, -1, Mat());

normalize(red_hist, red_hist, 0, nm, NORM_MINMAX, -1, Mat());

float step = 500.0 / 256.0;

for (int i = 0; i < 255; i++) { //connect the counts of each pair of adjacent pixel values with a line

line(result, Point(step*i + 50, 50 + nm - blue_hist.at<float>(i, 0)), Point(step*(i + 1) + 50, 50 + nm - blue_hist.at<float>(i + 1, 0)), Scalar(255, 0, 0), 2, LINE_AA, 0);

line(result, Point(step*i + 50, 50 + nm - green_hist.at<float>(i, 0)), Point(step*(i + 1) + 50, 50 + nm - green_hist.at<float>(i + 1, 0)), Scalar(0, 255, 0), 2, LINE_AA, 0);

line(result, Point(step*i + 50, 50 + nm - red_hist.at<float>(i, 0)), Point(step*(i + 1) + 50, 50 + nm - red_hist.at<float>(i + 1, 0)), Scalar(0, 0, 255), 2, 8, 0);

}

imshow("histogram-demo", result);

waitKey(0);

destroyAllWindows();

return 0;

}

Result:

13. Histogram equalization

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

void eh_demo();

int main(int argc, char** argv) {

eh_demo();

}

void eh_demo() {

//Mat src = imread("D:/images/box_in_scene.png");

Mat src = imread("D:/images/gray.png");

Mat gray, dst;

cvtColor(src, gray, COLOR_BGR2GRAY);

imshow("gray", gray);

equalizeHist(gray, dst);

imshow("eh-demo", dst);

//compute the histograms

int histSize = 256;

float range[] = { 0,256 }; //the upper bound is exclusive

const float* histRanges = { range };

Mat blue_hist, green_hist, red_hist;

calcHist(&gray, 1, 0, Mat(), blue_hist, 1, &histSize, &histRanges, true, false); //blue_hist: histogram of the original grayscale image

calcHist(&dst, 1, 0, Mat(), green_hist, 1, &histSize, &histRanges, true, false); //green_hist: histogram after equalization

Mat result = Mat::zeros(Size(600, 400), CV_8UC3);

int margin = 50;

int nm = result.rows - 2 * margin;

normalize(blue_hist, blue_hist, 0, nm, NORM_MINMAX, -1, Mat()); //normalize the histogram into the drawing range

normalize(green_hist, green_hist, 0, nm, NORM_MINMAX, -1, Mat());

float step = 500.0 / 256.0;

for (int i = 0; i < 255; i++) { //connect the counts of each pair of adjacent pixel values with a line

line(result, Point(step*i + 50, 50 + nm - blue_hist.at<float>(i, 0)), Point(step*(i + 1) + 50, 50 + nm - blue_hist.at<float>(i + 1, 0)), Scalar(0, 0, 255), 2, LINE_AA, 0);

line(result, Point(step*i + 50, 50 + nm - green_hist.at<float>(i, 0)), Point(step*(i + 1) + 50, 50 + nm - green_hist.at<float>(i + 1, 0)), Scalar(0, 255, 255), 2, LINE_AA, 0);

}

imshow("result", result);

waitKey(0);

destroyAllWindows();

}

Result:

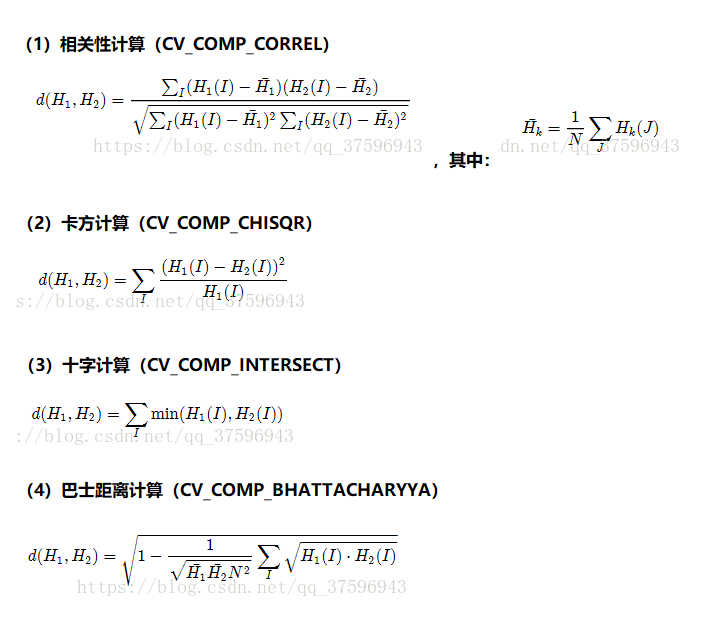

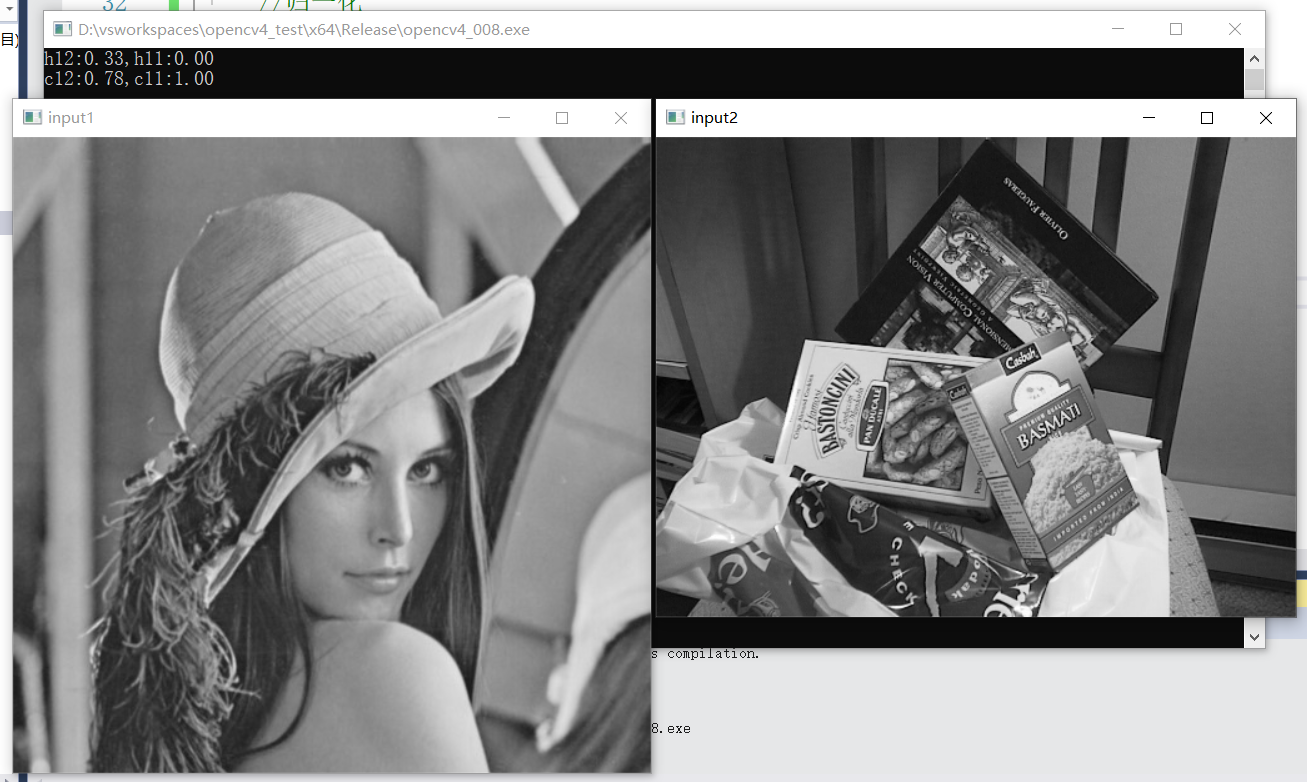

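14. Comparing image histograms

Compute histograms H1 and H2 for the two input images and normalize them to the same scale. The distance between H1 and H2 then gives the similarity of the two histograms, which in turn indicates how similar the images themselves are.

Commonly used comparison methods in OpenCV include these four:

- Correlation

- Chi-Square

- Intersection

- Bhattacharyya distance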
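As an illustration of two of these measures, the sketch below computes a correlation and a Bhattacharyya distance on plain `std::vector<double>` histograms. It follows the textbook definitions and assumes both histograms are non-empty, have the same length, and hold non-negative counts; the function names are made up for this example, and OpenCV's compareHist may differ in implementation details.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

// Pearson correlation of two equally sized histograms; 1.0 means identical shape.
double hist_correlation(const std::vector<double>& h1, const std::vector<double>& h2) {
    const std::size_t n = h1.size();
    double m1 = 0.0, m2 = 0.0;
    for (std::size_t i = 0; i < n; ++i) { m1 += h1[i]; m2 += h2[i]; }
    m1 /= static_cast<double>(n);
    m2 /= static_cast<double>(n);
    double num = 0.0, d1 = 0.0, d2 = 0.0;
    for (std::size_t i = 0; i < n; ++i) {
        const double a = h1[i] - m1;
        const double b = h2[i] - m2;
        num += a * b;
        d1 += a * a;
        d2 += b * b;
    }
    const double denom = std::sqrt(d1 * d2);
    return denom > 0.0 ? num / denom : 1.0; // flat histograms are treated as identical
}

// Bhattacharyya distance; 0.0 means identical, 1.0 means no overlap.
double hist_bhattacharyya(const std::vector<double>& h1, const std::vector<double>& h2) {
    double s1 = 0.0, s2 = 0.0, overlap = 0.0;
    for (std::size_t i = 0; i < h1.size(); ++i) {
        s1 += h1[i];
        s2 += h2[i];
        overlap += std::sqrt(h1[i] * h2[i]);
    }
    const double norm = std::sqrt(s1 * s2);
    if (norm <= 0.0) return 1.0; // an empty histogram overlaps with nothing
    // Clamp at 0 to guard against tiny negative values from rounding.
    return std::sqrt(std::max(0.0, 1.0 - overlap / norm));
}
```

Comparing a histogram with itself gives a correlation of 1 and a Bhattacharyya distance of 0, which is the same pattern the h11 and c11 values in the listing below are meant to show.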

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

void hist_compare();

int main(int argc, char** argv) {

hist_compare();

}

void hist_compare() {

Mat src1 = imread("D:/images/gray.png");

Mat src2 = imread("D:/images/box_in_scene.png");

imshow("input1", src1);

imshow("input2", src2);

//compute the histograms

int histSize[] = { 256,256,256 };

int channels[] = { 0,1,2 };

Mat hist1, hist2;

float c1[] = { 0,256 }; //upper bounds are exclusive

float c2[] = { 0,256 };

float c3[] = { 0,256 };

const float* histRanges[] = { c1,c2,c3 };

Mat blue_hist, green_hist, red_hist;

calcHist(&src1, 1, channels, Mat(), hist1, 3, histSize, histRanges, true, false); //joint 3D histogram over the B, G and R channels

calcHist(&src2, 1, channels, Mat(), hist2, 3, histSize, histRanges, true, false);

//normalize

normalize(hist1, hist1, 0, 1.0, NORM_MINMAX, -1, Mat());

normalize(hist2, hist2, 0, 1.0, NORM_MINMAX, -1, Mat());

//Bhattacharyya distance: smaller means more similar (negatively related to similarity); the minimum is 0.00

double h12 = compareHist(hist1, hist2, HISTCMP_BHATTACHARYYA);

double h11 = compareHist(hist1, hist1, HISTCMP_BHATTACHARYYA);

printf("h12:%.2f,h11:%.2f\n", h12, h11);

//correlation: larger means more similar (positively related to similarity); the maximum is 1.00

double c12 = compareHist(hist1, hist2, HISTCMP_CORREL);

double c11 = compareHist(hist1, hist1, HISTCMP_CORREL);

printf("c12:%.2f,c11:%.2f\n", c12, c11);

waitKey(0);

destroyAllWindows();

}

Result:

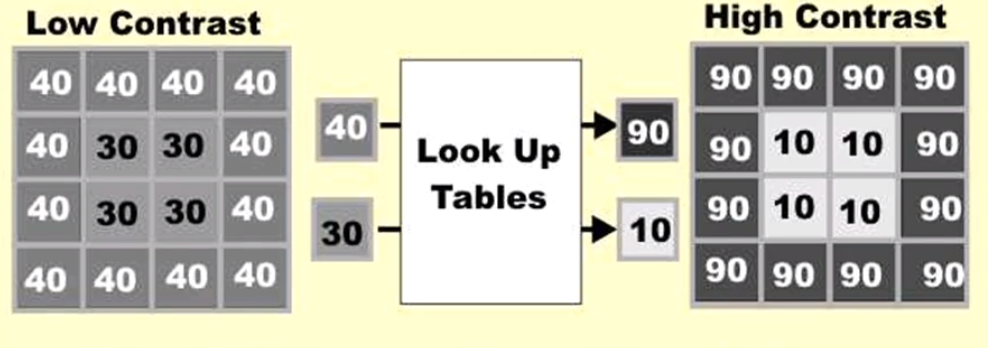

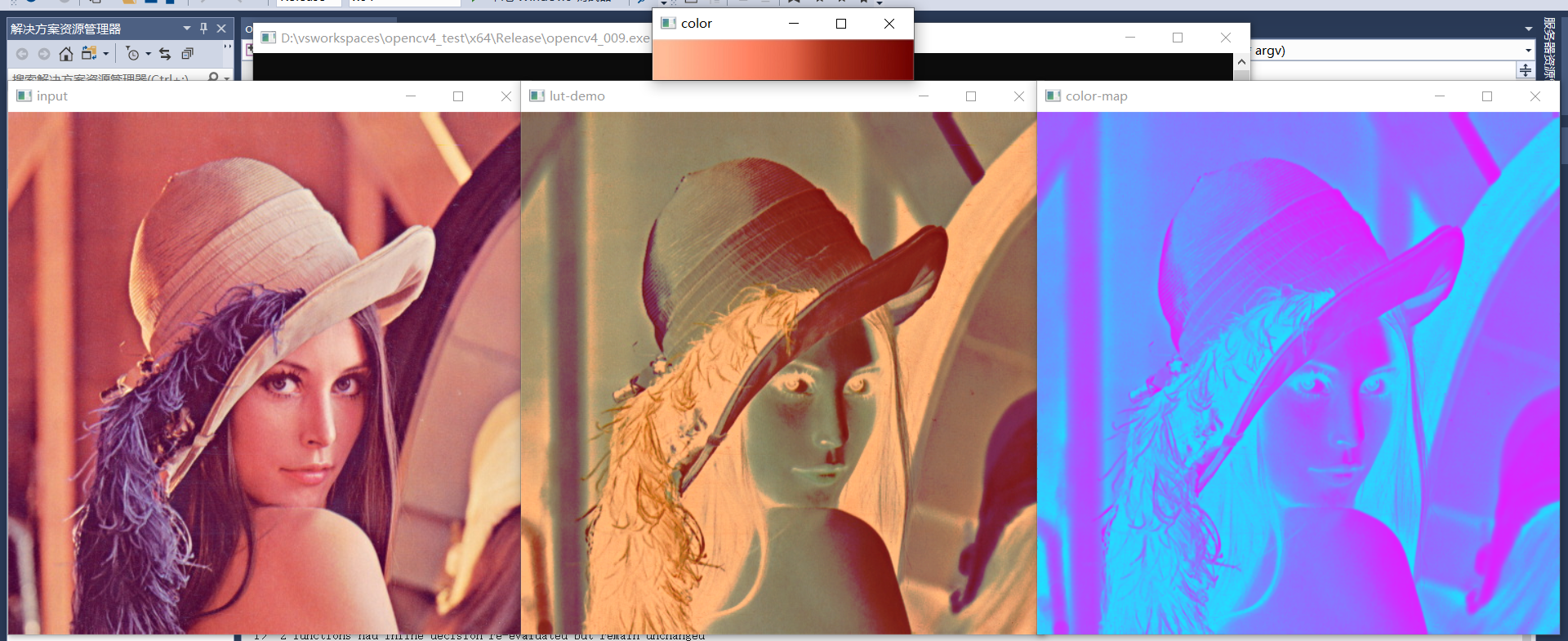

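15. Image lookup tables and color maps

LUT (Look-Up Table): the result for every possible pixel value is computed once in advance and stored in the table. During actual processing, each pixel's result is simply looked up in the table, which avoids repeating the same computation many times.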
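The idea can be sketched without OpenCV: build a 256-entry table once, then map every pixel through it. The inversion table and the function names below are assumptions chosen for illustration, not the table used in the listing that follows.

```cpp
#include <array>
#include <cstdint>
#include <vector>

// Precompute the result for every possible 8-bit value (here: inversion).
std::array<std::uint8_t, 256> build_invert_lut() {
    std::array<std::uint8_t, 256> lut{};
    for (int v = 0; v < 256; ++v) {
        lut[v] = static_cast<std::uint8_t>(255 - v);
    }
    return lut;
}

// Apply the table: one array lookup per pixel, no per-pixel arithmetic.
std::vector<std::uint8_t> apply_lut(const std::vector<std::uint8_t>& pixels,
                                    const std::array<std::uint8_t, 256>& lut) {
    std::vector<std::uint8_t> out;
    out.reserve(pixels.size());
    for (std::uint8_t p : pixels) {
        out.push_back(lut[p]);
    }
    return out;
}
```

The more expensive the per-value computation is (gamma curves, color mapping), the more a table like this saves.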

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src = imread("D:/images/lena.jpg");

if (src.empty()) {

printf("could not find image file");

return -1;

}

namedWindow("input", WINDOW_AUTOSIZE);

imshow("input", src);

Mat color = imread("D:/images/lut.png");

Mat lut = Mat::zeros(256, 1, CV_8UC3); //first argument is the number of rows, second the number of columns

for (int i = 0; i < 256; i++) {

lut.at<Vec3b>(i, 0) = color.at<Vec3b>(10, i);

}

imshow("color", color);

Mat dst;

LUT(src, lut, dst); //apply the custom lookup table

imshow("lut-demo", dst);

applyColorMap(src, dst, COLORMAP_COOL); //apply one of OpenCV's built-in color maps

imshow("color-map", dst);

waitKey(0);

destroyAllWindows();

return 0;

}

效果:

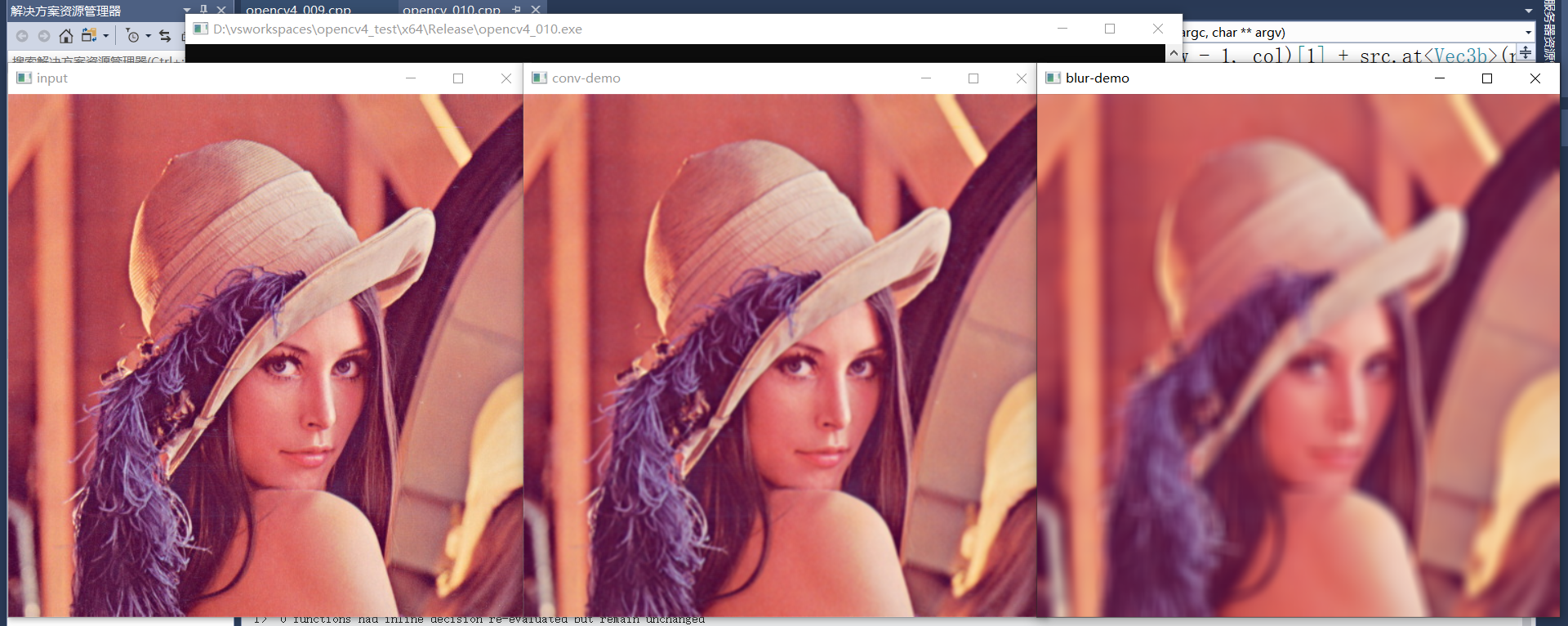

16、影象折積

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src = imread("D:/images/lena.jpg");

Mat result = src.clone();

if (src.empty()) {

printf("could not find image file");

return -1;

}

namedWindow("input", WINDOW_AUTOSIZE);

imshow("input", src);

int h = src.rows;

int w = src.cols;

for (int row = 1; row < h - 1; row++) {

for (int col = 1; col < w - 1; col++) {

int sb = src.at<Vec3b>(row - 1, col - 1)[0] + src.at<Vec3b>(row - 1, col)[0] + src.at<Vec3b>(row - 1, col + 1)[0] +

src.at<Vec3b>(row, col - 1)[0] + src.at<Vec3b>(row, col)[0] + src.at<Vec3b>(row, col + 1)[0] +

src.at<Vec3b>(row + 1, col - 1)[0] + src.at<Vec3b>(row + 1, col)[0] + src.at<Vec3b>(row + 1, col + 1)[0];

int sg = src.at<Vec3b>(row - 1, col - 1)[1] + src.at<Vec3b>(row - 1, col)[1] + src.at<Vec3b>(row - 1, col + 1)[1] +

src.at<Vec3b>(row, col - 1)[1] + src.at<Vec3b>(row, col)[1] + src.at<Vec3b>(row, col + 1)[1] +

src.at<Vec3b>(row + 1, col - 1)[1] + src.at<Vec3b>(row + 1, col)[1] + src.at<Vec3b>(row + 1, col + 1)[1];

int sr = src.at<Vec3b>(row - 1, col - 1)[2] + src.at<Vec3b>(row - 1, col)[2] + src.at<Vec3b>(row - 1, col + 1)[2] +

src.at<Vec3b>(row, col - 1)[2] + src.at<Vec3b>(row, col)[2] + src.at<Vec3b>(row, col + 1)[2] +

src.at<Vec3b>(row + 1, col - 1)[2] + src.at<Vec3b>(row + 1, col)[2] + src.at<Vec3b>(row + 1, col + 1)[2];

result.at<Vec3b>(row, col)[0] = sb / 9;

result.at<Vec3b>(row, col)[1] = sg / 9;

result.at<Vec3b>(row, col)[2] = sr / 9;

}

}

imshow("conv-demo", result);

Mat dst;

blur(src, dst, Size(13, 13), Point(-1, -1), BORDER_DEFAULT); //a negative anchor point means the kernel center is used

imshow("blur-demo", dst);

waitKey(0);

destroyAllWindows();

return 0;

}

Result:

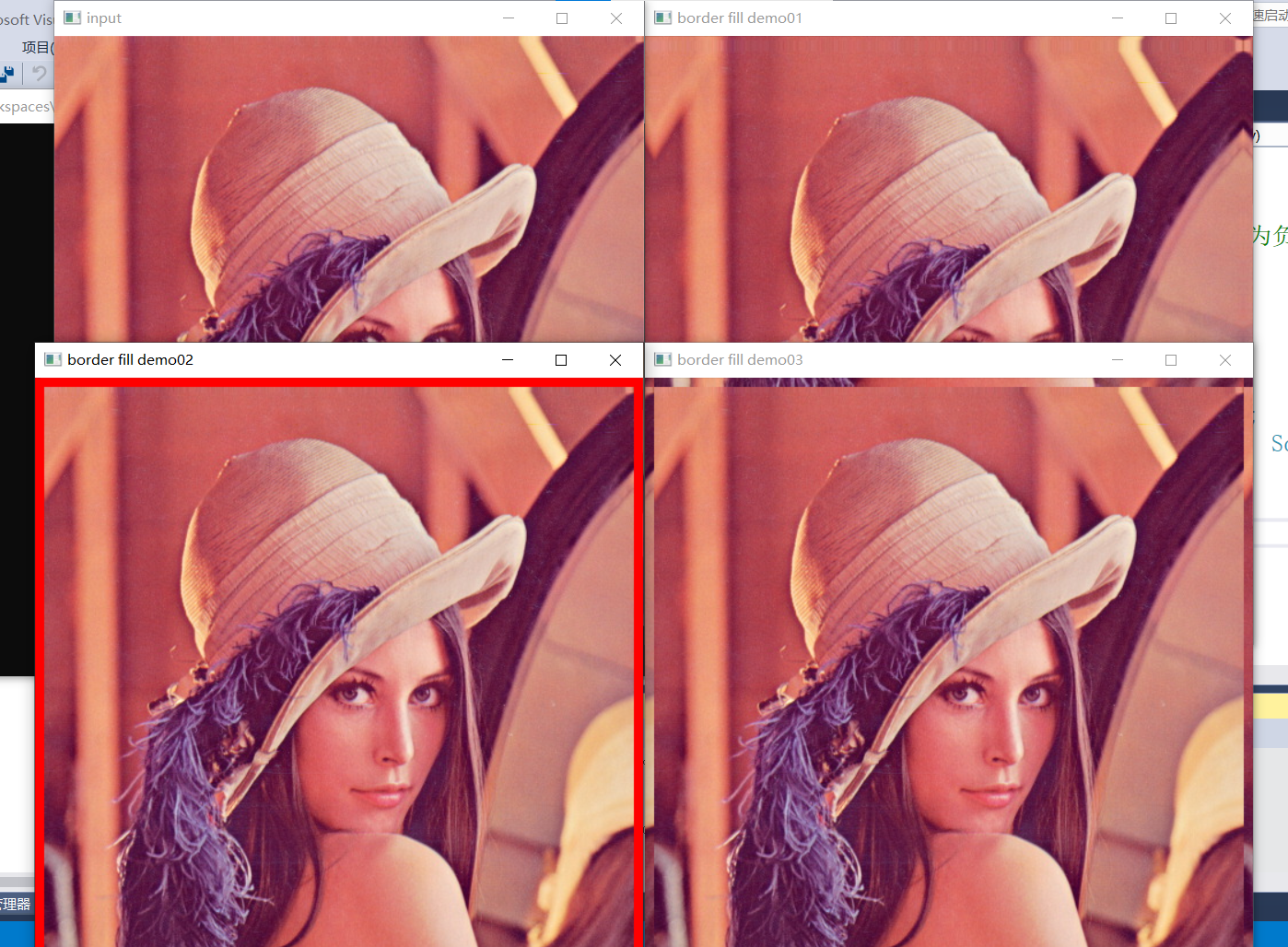

17. Border handling in convolution

- Border pixel padding methods used during convolution

| Border type | Padding pattern |
|---|---|
| BORDER_CONSTANT | iiiiii\|abcdefgh\|iiiiiii |
| BORDER_REPLICATE | aaaaaa\|abcdefgh\|hhhhhhh |
| BORDER_WRAP | cdefgh\|abcdefgh\|abcdefg |
| BORDER_REFLECT_101 (mirror about the edge pixel) | gfedcb\|abcdefgh\|gfedcba |
| BORDER_DEFAULT | gfedcb\|abcdefgh\|gfedcba |
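The patterns in the table can be read as a rule that maps an out-of-range coordinate back to a valid one. The sketch below implements three of them for a single axis in plain C++; the enum and the function `border_index` are hypothetical names for this illustration, and BORDER_CONSTANT is left out because it returns a fixed value instead of an index.

```cpp
enum class Border { Replicate, Wrap, Reflect101 };

// Maps coordinate p (possibly outside [0, len)) to a valid index in [0, len).
int border_index(int p, int len, Border mode) {
    if (p >= 0 && p < len) return p;
    switch (mode) {
    case Border::Replicate:
        // aaaaaa|abcdefgh|hhhhhhh : clamp to the nearest edge pixel
        return p < 0 ? 0 : len - 1;
    case Border::Wrap: {
        // cdefgh|abcdefgh|abcdefg : continue from the opposite side
        const int m = p % len;
        return m < 0 ? m + len : m;
    }
    case Border::Reflect101: {
        // gfedcb|abcdefgh|gfedcba : mirror without repeating the edge pixel
        if (len == 1) return 0;
        const int period = 2 * (len - 1);
        int m = p % period;
        if (m < 0) m += period;
        return m < len ? m : period - m;
    }
    }
    return -1; // unreachable for the modes above
}
```

With the eight-pixel row "abcdefgh" from the table, index -1 maps to 'b' under Reflect101, to 'a' under Replicate, and to 'h' under Wrap.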

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src = imread("D:/images/lena.jpg");

Mat result = src.clone();

if (src.empty()) {

printf("could not find image file");

return -1;

}

namedWindow("input", WINDOW_AUTOSIZE);

imshow("input", src);

int h = src.rows;

int w = src.cols;

for (int row = 1; row < h - 1; row++) {

for (int col = 1; col < w - 1; col++) {

int sb = src.at<Vec3b>(row - 1, col - 1)[0] + src.at<Vec3b>(row - 1, col)[0] + src.at<Vec3b>(row - 1, col + 1)[0] +

src.at<Vec3b>(row, col - 1)[0] + src.at<Vec3b>(row, col)[0] + src.at<Vec3b>(row, col + 1)[0] +

src.at<Vec3b>(row + 1, col - 1)[0] + src.at<Vec3b>(row + 1, col)[0] + src.at<Vec3b>(row + 1, col + 1)[0];

int sg = src.at<Vec3b>(row - 1, col - 1)[1] + src.at<Vec3b>(row - 1, col)[1] + src.at<Vec3b>(row - 1, col + 1)[1] +

src.at<Vec3b>(row, col - 1)[1] + src.at<Vec3b>(row, col)[1] + src.at<Vec3b>(row, col + 1)[1] +

src.at<Vec3b>(row + 1, col - 1)[1] + src.at<Vec3b>(row + 1, col)[1] + src.at<Vec3b>(row + 1, col + 1)[1];

int sr = src.at<Vec3b>(row - 1, col - 1)[2] + src.at<Vec3b>(row - 1, col)[2] + src.at<Vec3b>(row - 1, col + 1)[2] +

src.at<Vec3b>(row, col - 1)[2] + src.at<Vec3b>(row, col)[2] + src.at<Vec3b>(row, col + 1)[2] +

src.at<Vec3b>(row + 1, col - 1)[2] + src.at<Vec3b>(row + 1, col)[2] + src.at<Vec3b>(row + 1, col + 1)[2];

result.at<Vec3b>(row, col)[0] = sb / 9;

result.at<Vec3b>(row, col)[1] = sg / 9;

result.at<Vec3b>(row, col)[2] = sr / 9;

}

}

imshow("conv-demo", result);

Mat dst;

blur(src, dst, Size(13, 13), Point(-1, -1), BORDER_DEFAULT); //a negative anchor point means the kernel center is used

imshow("blur-demo", dst);

//border padding

int border = 8;

Mat border_m01, border_m02, border_m03;

copyMakeBorder(src, border_m01, border, border, border, border, BORDER_DEFAULT);

copyMakeBorder(src, border_m02, border, border, border, border, BORDER_CONSTANT, Scalar(0, 0, 255));

copyMakeBorder(src, border_m03, border, border, border, border, BORDER_WRAP);

imshow("border fill demo01", border_m01);

imshow("border fill demo02", border_m02);

imshow("border fill demo03", border_m03);

waitKey(0);

destroyAllWindows();

return 0;

}

效果:

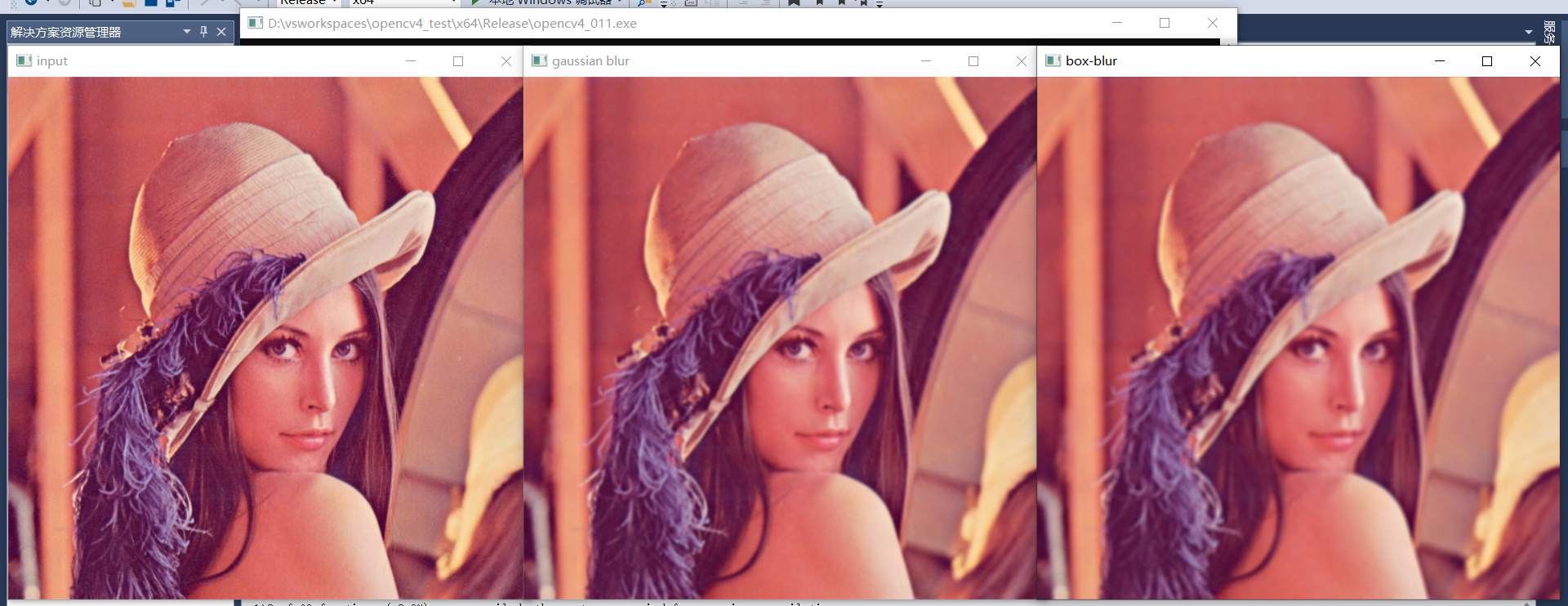

18、影象模糊

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src = imread("D:/images/lena.jpg");

if (src.empty()) {

printf("could not find image file");

return -1;

}

namedWindow("input", WINDOW_AUTOSIZE);

imshow("input", src);

//Gaussian blur

Mat dst;

GaussianBlur(src, dst, Size(5, 5), 0);

imshow("gaussian blur", dst);

//box blur (mean blur)

Mat box_dst;

boxFilter(src, box_dst, -1, Size(5, 5), Point(-1, -1), true, BORDER_DEFAULT);

imshow("box-blur", box_dst);

waitKey(0);

destroyAllWindows();

return 0;

}

效果:

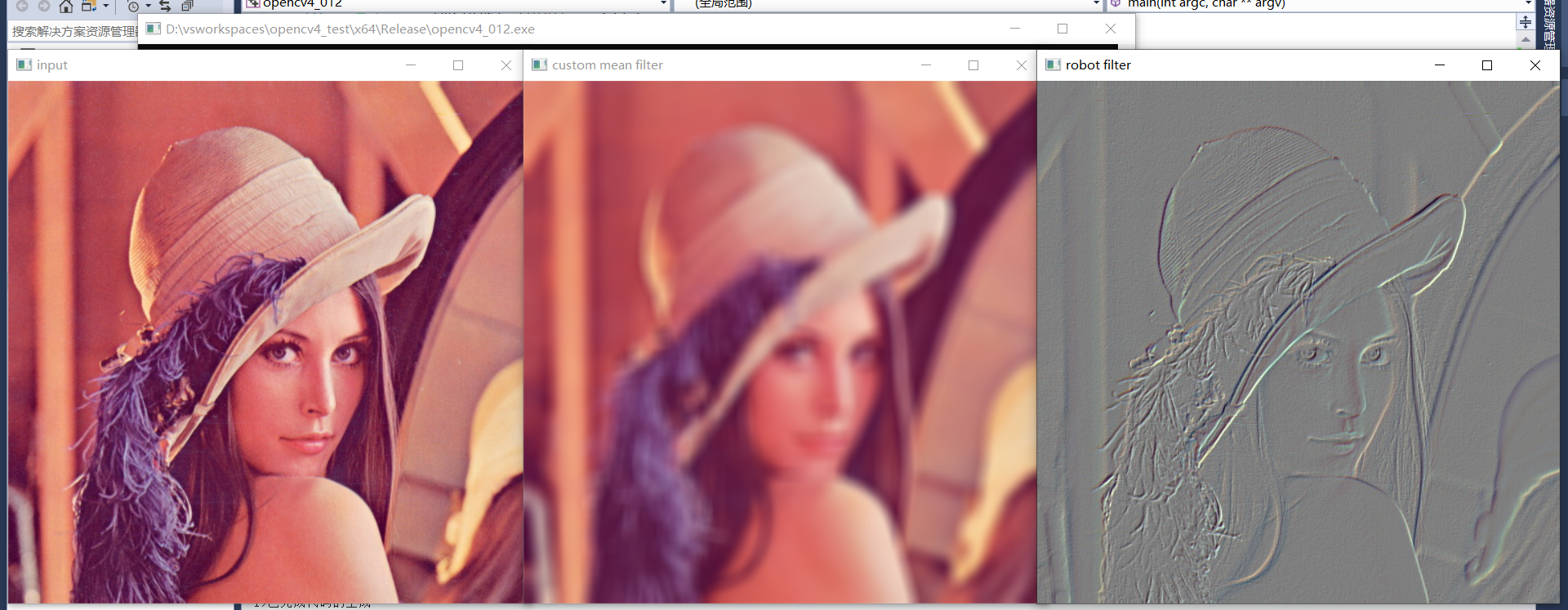

19、自定義濾波

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src = imread("D:/images/lena.jpg");

if (src.empty()) {

printf("could not find image file");

return -1;

}

namedWindow("input", WINDOW_AUTOSIZE);

imshow("input", src);

//custom filter: mean convolution

int k = 15;

Mat mkernel = Mat::ones(k, k, CV_32F) / (float)(k*k);

Mat dst;

filter2D(src, dst, -1, mkernel, Point(-1, -1), 0, BORDER_DEFAULT); //delta can be used to raise brightness

imshow("custom mean filter", dst);

//non-mean filter: image gradient, which makes edge information easier to see

Mat robot = (Mat_<int>(2, 2) << 1, 0, 0, -1); //Roberts kernel

Mat result;

filter2D(src, result, CV_32F, robot, Point(-1, -1), 127, BORDER_DEFAULT); //add an offset after filtering so the result is easier to see

convertScaleAbs(result, result); //take absolute values so that negative responses are not lost when displayed

imshow("robot filter", result);

waitKey(0);

destroyAllWindows();

return 0;

}

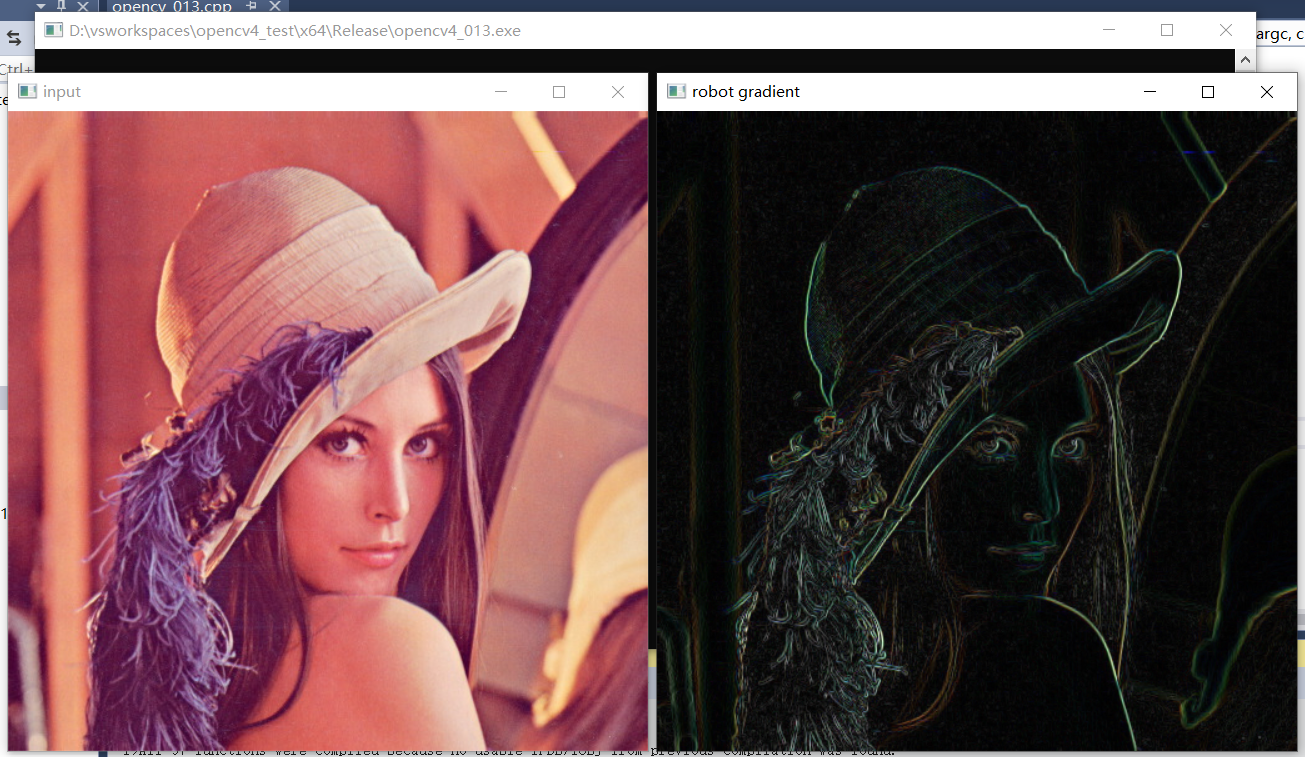

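Result:

20. Image gradients

- Image convolution

- Blurring

- Gradients: Sobel operator, Scharr operator, Roberts operator

- Edges

- Sharpening

Each operator comes as a pair: one for the x direction and one for the y direction.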

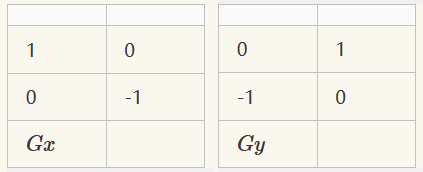

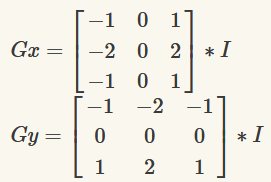

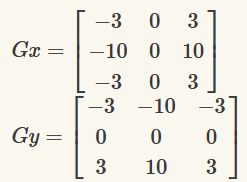

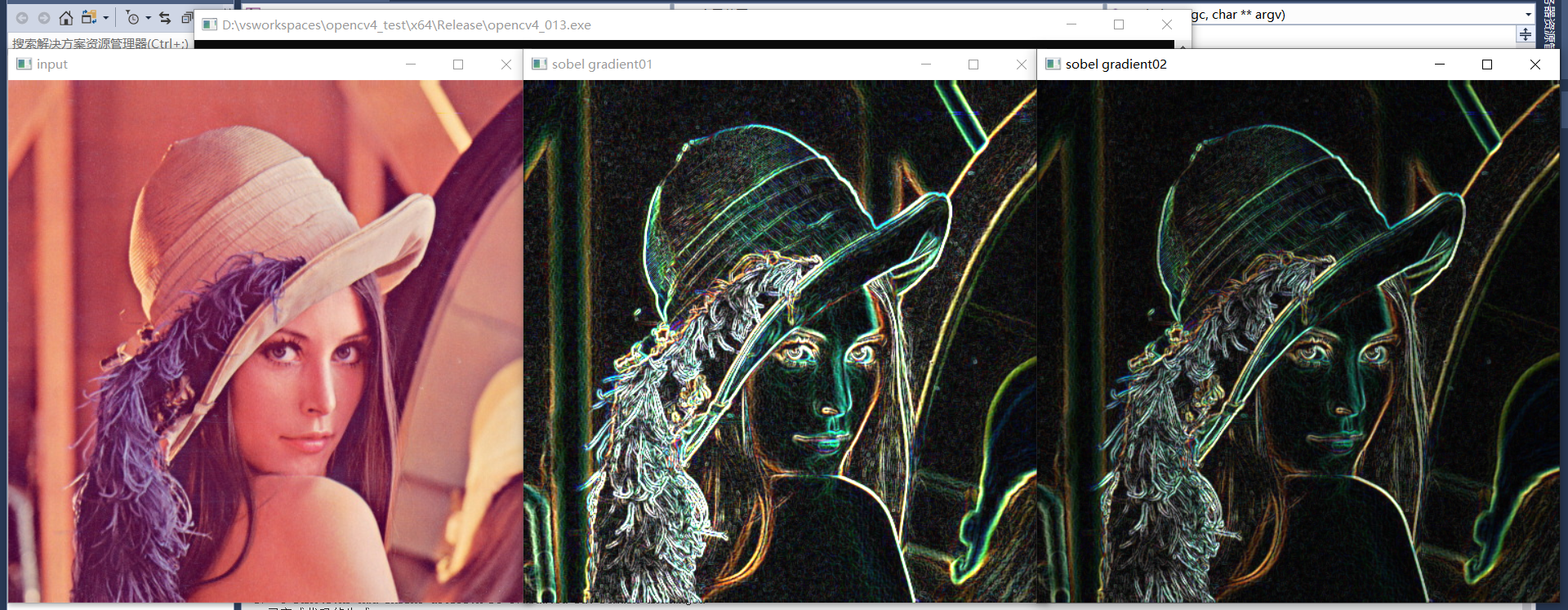

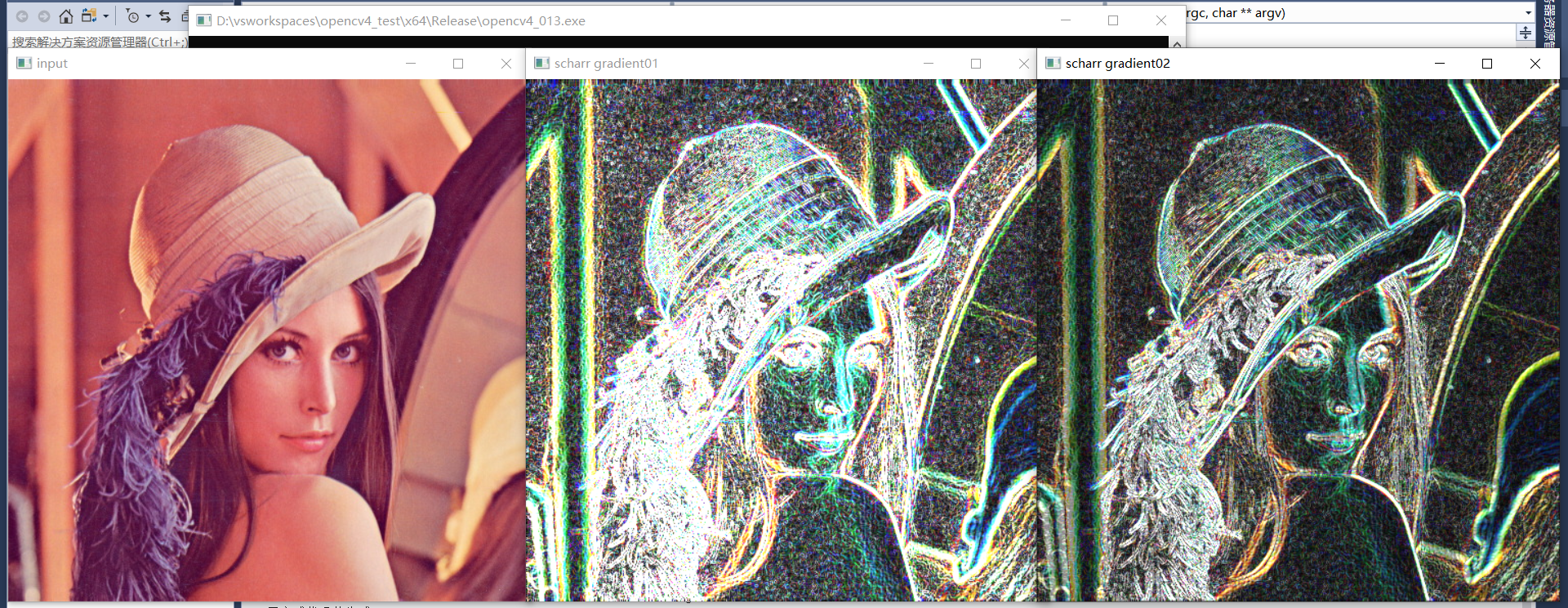

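1. Roberts operator: the simplest, but gives the weakest results

2. Sobel operator: detects gradients well, but is less robust to noise than the Scharr operator

3. Scharr operator: more robust to noise than the Sobel operator, but produces more spurious detail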
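To show what an x/y operator pair computes, the sketch below applies the standard 3x3 Sobel kernels to a single 3x3 patch in plain C++. The helper `apply_kernel3` is a made-up name; it computes one output value (the sum of element-wise products), which is what a filter produces at one pixel.

```cpp
// Standard 3x3 Sobel kernels for the x and y directions.
const int kSobelX[3][3] = {{-1, 0, 1}, {-2, 0, 2}, {-1, 0, 1}};
const int kSobelY[3][3] = {{-1, -2, -1}, {0, 0, 0}, {1, 2, 1}};

// Hypothetical helper: response of a kernel on one 3x3 patch.
int apply_kernel3(const int patch[3][3], const int kernel[3][3]) {
    int sum = 0;
    for (int r = 0; r < 3; ++r) {
        for (int c = 0; c < 3; ++c) {
            sum += patch[r][c] * kernel[r][c];
        }
    }
    return sum;
}
```

On a patch with a vertical edge (dark columns on the left, a bright column on the right) the x kernel responds strongly while the y kernel gives 0, which is why the two results are combined afterwards.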

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src = imread("D:/images/lena.jpg");

if (src.empty()) {

printf("could not find image file");

return -1;

}

namedWindow("input", WINDOW_AUTOSIZE);

imshow("input", src);

//Roberts gradient

Mat robot_x = (Mat_<int>(2, 2) << 1, 0, 0, -1);

Mat robot_y = (Mat_<int>(2, 2) << 0, 1, -1, 0);

Mat grad_x,grad_y;

filter2D(src, grad_x, CV_32F, robot_x, Point(-1, -1), 0, BORDER_DEFAULT);

filter2D(src, grad_y, CV_32F, robot_y, Point(-1, -1), 0, BORDER_DEFAULT);

convertScaleAbs(grad_x, grad_x); //take absolute values; otherwise negative responses cannot be displayed and the result looks wrong

convertScaleAbs(grad_y, grad_y);

Mat result;

add(grad_x, grad_y, result);

//imshow("robot gradient", result);

//Sobel operator

Sobel(src, grad_x, CV_32F, 1, 0);

Sobel(src, grad_y, CV_32F, 0, 1);

convertScaleAbs(grad_x, grad_x);

convertScaleAbs(grad_y, grad_y);

Mat result2,result3;

add(grad_x, grad_y, result2);

addWeighted(grad_x, 0.5, grad_y, 0.5, 0, result3);

imshow("sobel gradient01", result2);

imshow("sobel gradient02", result3);

//Scharr operator

Scharr(src, grad_x, CV_32F, 1, 0);

Scharr(src, grad_y, CV_32F, 0, 1);

convertScaleAbs(grad_x, grad_x);

convertScaleAbs(grad_y, grad_y);

Mat result4, result5;

add(grad_x, grad_y, result4);

addWeighted(grad_x, 0.5, grad_y, 0.5, 0, result5);

imshow("scharr gradient01", result4);

imshow("scharr gradient02", result5);

waitKey(0);

destroyAllWindows();

return 0;

}

Result:

1. Roberts operator

2. Sobel operator

3. Scharr operator

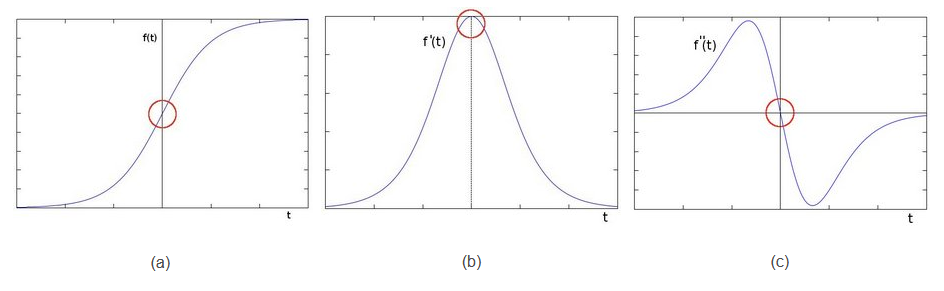

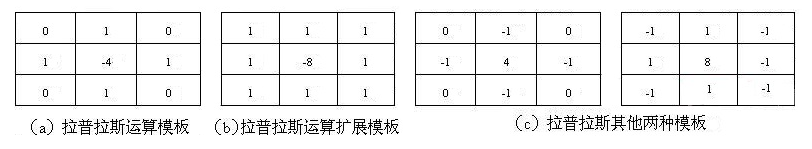

21、影象邊緣發現

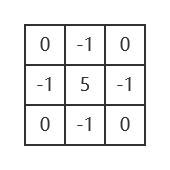

拉普拉斯運算元(二階導數運算元),對噪聲敏感!

拉普拉斯運算模板

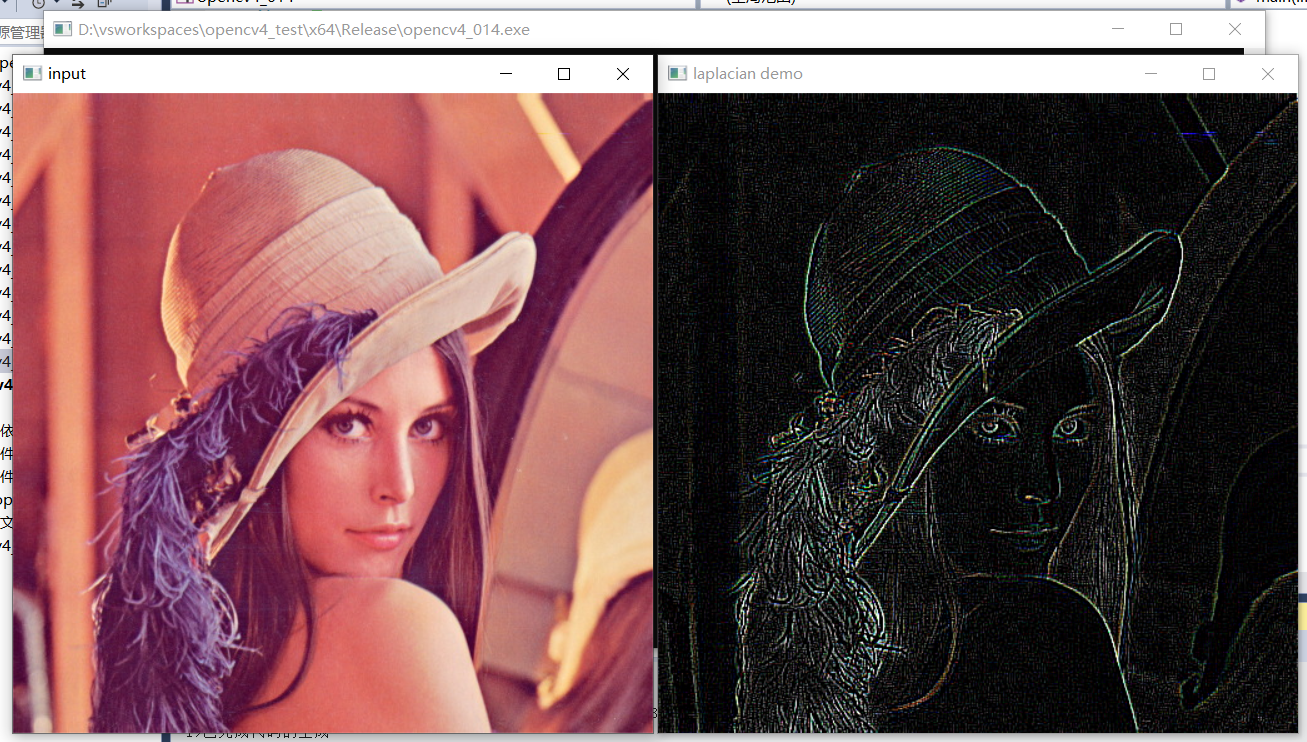

影象銳化

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src = imread("D:/images/lena.jpg");

if (src.empty()) {

printf("could not find image file");

return -1;

}

namedWindow("input", WINDOW_AUTOSIZE);

imshow("input", src);

Mat dst;

Laplacian(src, dst, -1, 3, 1.0, 0, BORDER_DEFAULT); //the Laplacian operator is sensitive to noise

imshow("laplacian demo", dst);

//sharpening

Mat sh_op = (Mat_<int>(3, 3) << 0, -1, 0, //sharpening amounts to a Laplacian response added to the original image

-1, 5, -1,

0, -1, 0);

Mat result;

filter2D(src, result, CV_32F, sh_op, Point(-1, -1), 0, BORDER_DEFAULT);

convertScaleAbs(result, result);

imshow("sharp filter", result);

waitKey(0);

destroyAllWindows();

return 0;

}

Result:

Laplacian edge detection:

Image sharpening:

22. USM sharpening (Unsharp Mask)

Sharpening enhancement: sharp_image = blur - laplacian

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src = imread("D:/images/lena.jpg");

if (src.empty()) {

printf("could not find image file");

return -1;

}

namedWindow("input", WINDOW_AUTOSIZE);

imshow("input", src);

Mat blur_image, dst;

GaussianBlur(src, blur_image, Size(3, 3), 0); //apply a Gaussian blur first, then use the Laplacian operator to pick up edges and noise

Laplacian(src, dst, -1, 1, 1.0, 0, BORDER_DEFAULT);

imshow("laplacian demo", dst);

Mat usm_image;

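//subtract one image from the other with a weighted sum: this removes noise and large boundaries and enhances fine detail and small edges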

addWeighted(blur_image, 1.0, dst, -1.0, 0, usm_image);

imshow("usm filter", usm_image);

waitKey(0);

destroyAllWindows();

return 0;

}

效果:

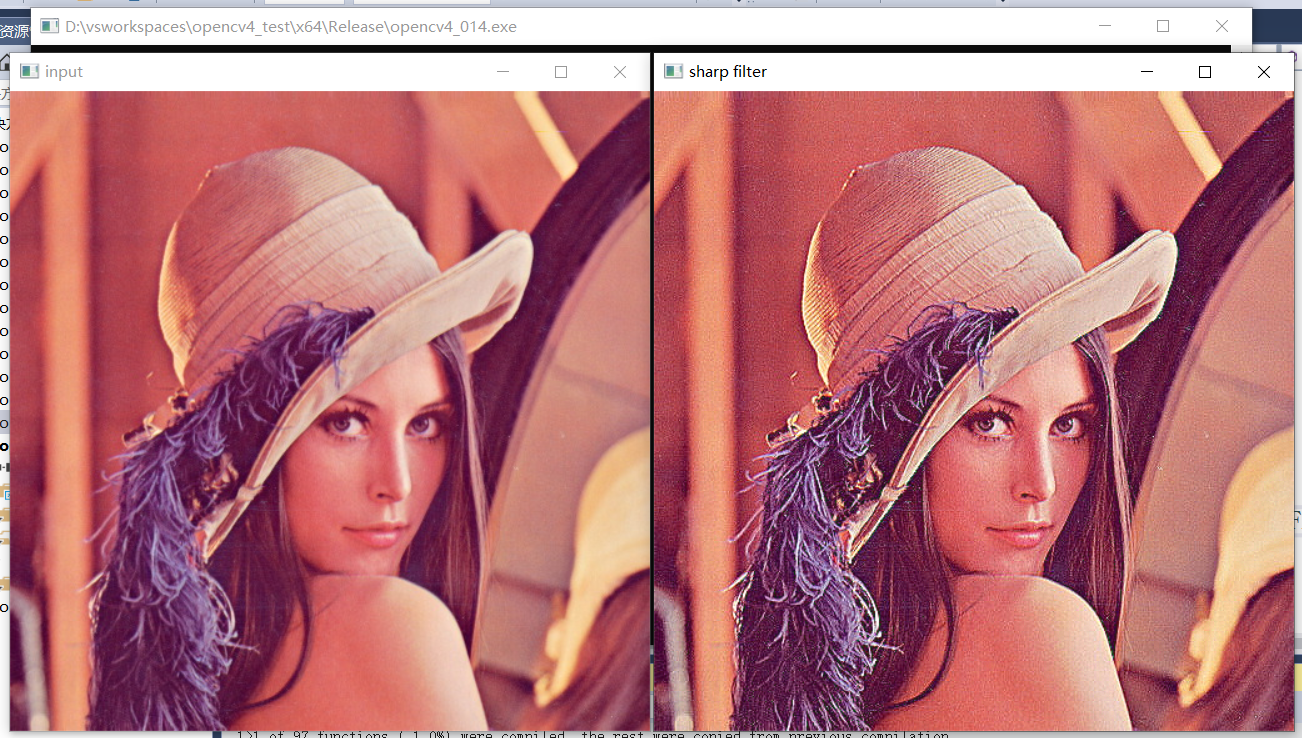

23、影象噪聲

噪聲型別:

- 椒鹽噪聲

- 高斯噪聲

- 其他噪聲...

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src = imread("D:/images/lena.jpg");

if (src.empty()) {

printf("could not find image file");

return -1;

}

namedWindow("input", WINDOW_AUTOSIZE);

imshow("input", src);

// salt and pepper noise: randomly scattered black and white dots

RNG rng(12345);

Mat image = src.clone();

int h = src.rows;

int w = src.cols;

int nums = 10000;

for (int i = 0; i < nums; i++) {

int x = rng.uniform(0, w);

int y = rng.uniform(0, h);

if (i % 2 == 1) {

src.at<Vec3b>(y, x) = Vec3b(255, 255, 255);

}

else {

src.at<Vec3b>(y, x) = Vec3b(0, 0, 0);

}

}

imshow("salt and pepper noise", src);

//Gaussian noise: normally distributed dots of varying color

Mat noise = Mat::zeros(image.size(), image.type());

randn(noise, Scalar(25, 25, 25), Scalar(30, 30, 30));

Mat dst;

add(image, noise, dst);

imshow("gaussian noise", dst);

waitKey(0);

destroyAllWindows();

return 0;

}

效果:

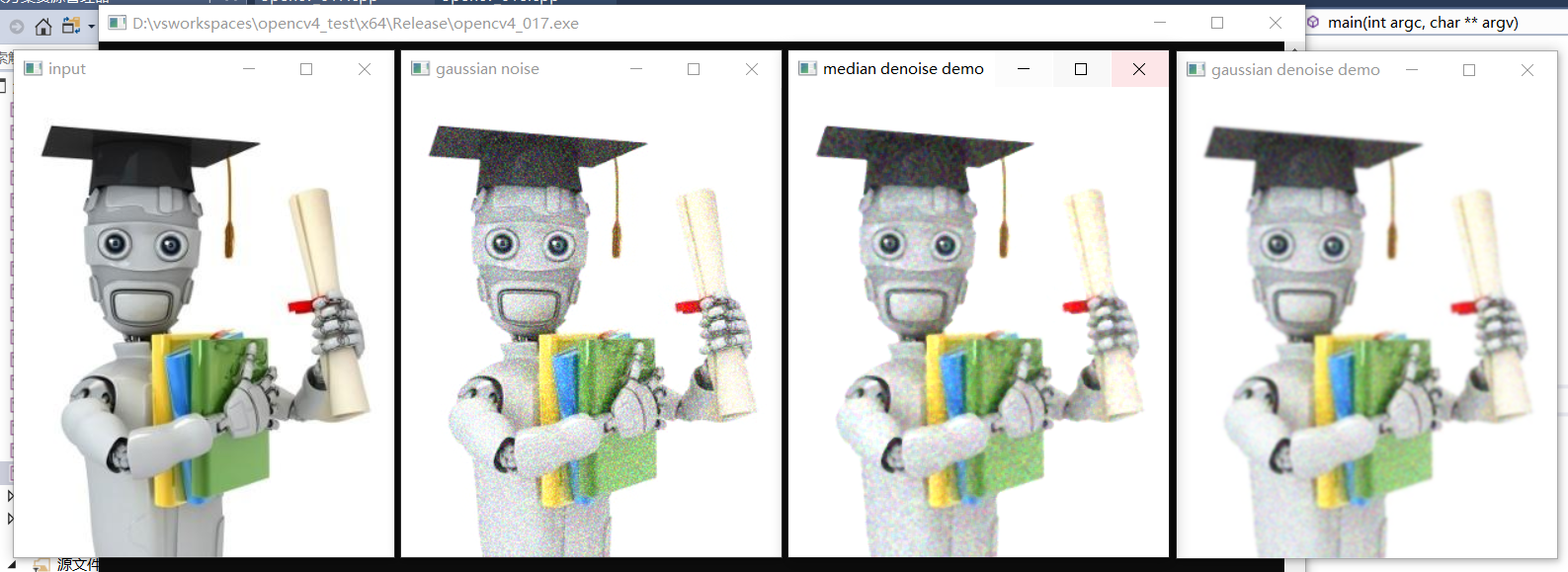

24、影象去噪聲

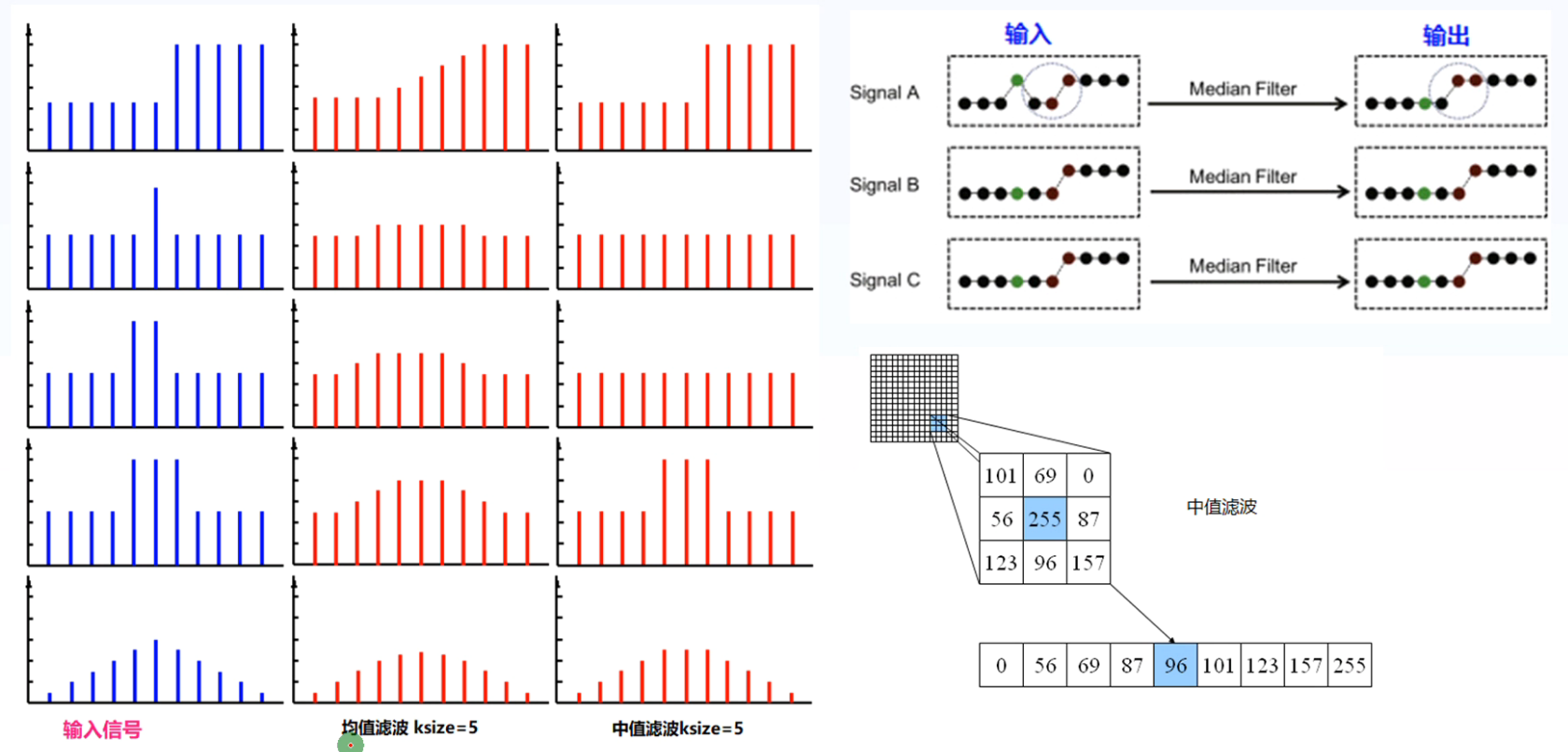

中值濾波與均值濾波示意圖:
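The difference between the two filters shows up clearly on a single 3x3 window that contains one outlier. The sketch below is plain C++ with made-up helper names; it processes one window only, not a whole image.

```cpp
#include <algorithm>
#include <array>
#include <numeric>

// Mean of a 3x3 window (integer division, as with 8-bit pixels).
int mean9(const std::array<int, 9>& window) {
    return std::accumulate(window.begin(), window.end(), 0) / 9;
}

// Median of a 3x3 window: the 5th smallest of the 9 values.
int median9(std::array<int, 9> window) {
    std::nth_element(window.begin(), window.begin() + 4, window.end());
    return window[4];
}
```

For a window of eight 10s and a single 255 (a "salt" pixel), the median stays at 10 while the mean is pulled up to 37, which suggests why a median filter copes better with salt-and-pepper noise.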

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

void add_salt_and_pepper_noise(Mat &image);

void add_gaussian_noise(Mat &image);

int main(int argc, char** argv) {

Mat src = imread("D:/images/ml.png");

if (src.empty()) {

printf("could not find image file");

return -1;

}

namedWindow("input", WINDOW_AUTOSIZE);

imshow("input", src);

add_gaussian_noise(src);

//median filter

Mat dst;

medianBlur(src, dst, 3);

imshow("median denoise demo", dst);

//Gaussian filter

GaussianBlur(src, dst, Size(5, 5), 0);

imshow("gaussian denoise demo", dst);

waitKey(0);

destroyAllWindows();

return 0;

}

void add_salt_and_pepper_noise(Mat &image) {

// salt and pepper noise: randomly scattered black and white dots

RNG rng(12345);

int h = image.rows;

int w = image.cols;

int nums = 10000;

for (int i = 0; i < nums; i++) {

int x = rng.uniform(0, w);

int y = rng.uniform(0, h);

if (i % 2 == 1) {

image.at<Vec3b>(y, x) = Vec3b(255, 255, 255);

}

else {

image.at<Vec3b>(y, x) = Vec3b(0, 0, 0);

}

}

imshow("salt and pepper noise", image);

}

void add_gaussian_noise(Mat &image) {

//Gaussian noise: normally distributed dots of varying color

Mat noise = Mat::zeros(image.size(), image.type());

randn(noise, Scalar(25, 25, 25), Scalar(30, 30, 30));

Mat dst;

add(image, noise, dst);

imshow("gaussian noise", dst);

dst.copyTo(image);

}

Result:

Salt-and-pepper noise:

Gaussian noise:

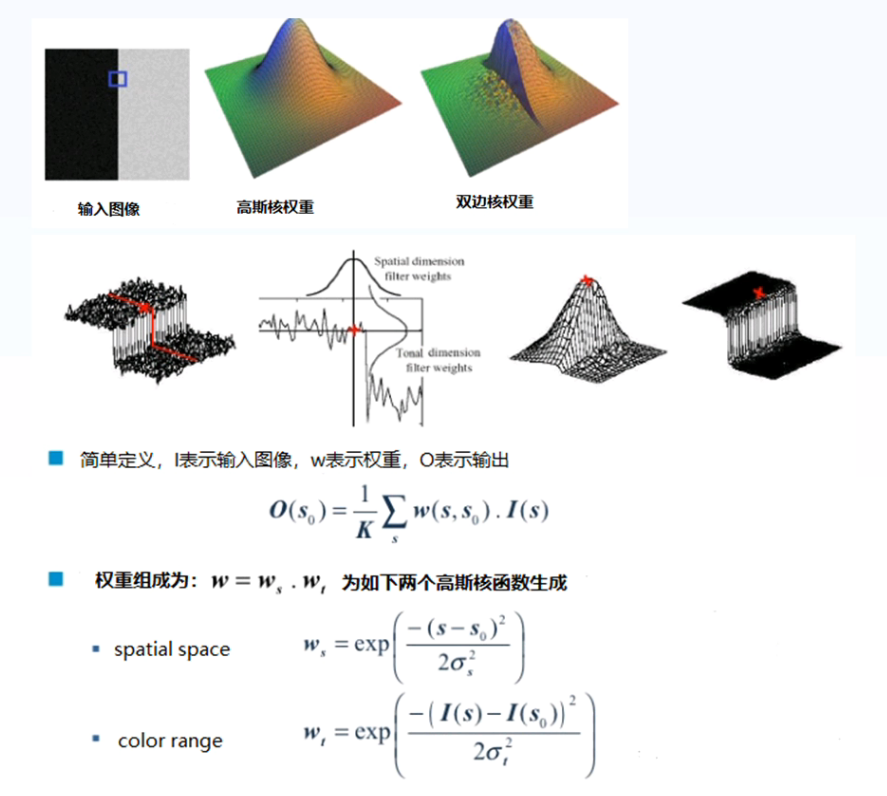

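25. Edge-preserving filtering (EPF) on Gaussian noise

- Gaussian bilateral filtering

  - In addition to the spatial kernel, it adds a color-difference kernel. When the color difference between the center pixel and another pixel is too large, the weight w approaches 0 and that pixel is ignored, so Gaussian filtering only uses pixels whose color is close to the center pixel.

  - Its main effect is to reduce the influence of high-valued pixels on a low-valued center pixel: high-valued pixels get low weights, so the center pixel stays low and the difference between pixels is preserved. Low-valued pixels, whatever their weight, have little influence on a high-valued center pixel to begin with.

- Mean-shift filtering

- Non-local means filtering

  - Similar pixel patches get large weights; dissimilar patches get small weights

  - Good results, but computationally heavy and slow

- Local mean square error filtering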
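The weight described for the bilateral filter can be sketched as the product of two Gaussians, one over spatial distance and one over color difference. This is the usual textbook form, written in plain C++; the function name and parameter names are assumptions for this illustration and are not taken from OpenCV.

```cpp
#include <cmath>

// Hypothetical helper: bilateral weight of a neighbor relative to the center pixel.
// spatial_dist: distance in pixels; color_diff: intensity difference.
double bilateral_weight(double spatial_dist, double color_diff,
                        double sigma_space, double sigma_color) {
    // Spatial kernel: nearby pixels count more.
    const double ws = std::exp(-(spatial_dist * spatial_dist) /
                               (2.0 * sigma_space * sigma_space));
    // Color kernel: pixels with a very different color get a weight near 0.
    const double wc = std::exp(-(color_diff * color_diff) /
                               (2.0 * sigma_color * sigma_color));
    return ws * wc;
}
```

A neighbor at the same distance but across a strong edge (large color difference) contributes almost nothing, so the edge is not smoothed away.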

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

void add_salt_and_pepper_noise(Mat &image);

void add_gaussian_noise(Mat &image);

int main(int argc, char** argv) {

//Mat src = imread("D:/images/ml.png");

Mat src = imread("D:/images/lena.jpg");

if (src.empty()) {

printf("could not find image file");

return -1;

}

namedWindow("input", WINDOW_AUTOSIZE);

imshow("input", src);

add_gaussian_noise(src);

/*

//median filter

Mat dst;

medianBlur(src, dst, 3);

imshow("median denoise demo", dst);

//Gaussian filter

GaussianBlur(src, dst, Size(5, 5), 0);

imshow("gaussian denoise demo", dst);

*/

//bilateral filter

Mat dst;

bilateralFilter(src, dst, 0, 50, 10);

imshow("bilateral denoise demo", dst);

//non-local means filter

fastNlMeansDenoisingColored(src, dst, 7., 7., 15, 45);

imshow("NLM denoise", dst);

waitKey(0);

destroyAllWindows();

return 0;

}

void add_salt_and_pepper_noise(Mat &image) {

// salt and pepper noise: randomly scattered black and white dots

RNG rng(12345);

int h = image.rows;

int w = image.cols;

int nums = 10000;

for (int i = 0; i < nums; i++) {

int x = rng.uniform(0, w);

int y = rng.uniform(0, h);

if (i % 2 == 1) {

image.at<Vec3b>(y, x) = Vec3b(255, 255, 255);

}

else {

image.at<Vec3b>(y, x) = Vec3b(0, 0, 0);

}

}

imshow("salt and pepper noise", image);

}

void add_gaussian_noise(Mat &image) {

//Gaussian noise: normally distributed dots of varying color

Mat noise = Mat::zeros(image.size(), image.type());

randn(noise, Scalar(25, 25, 25), Scalar(30, 30, 30));

Mat dst;

add(image, noise, dst);

imshow("gaussian noise", dst);

dst.copyTo(image);

}

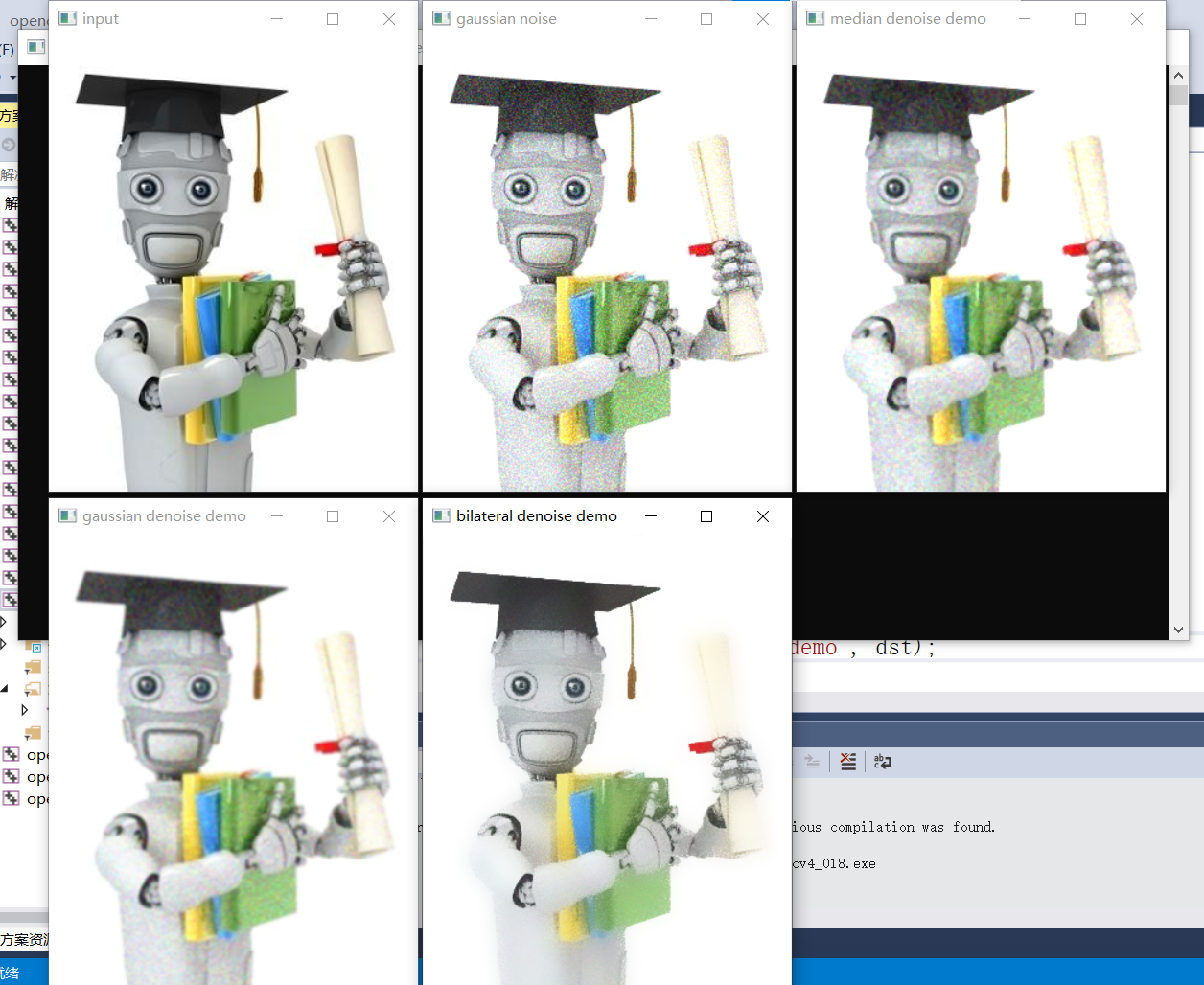

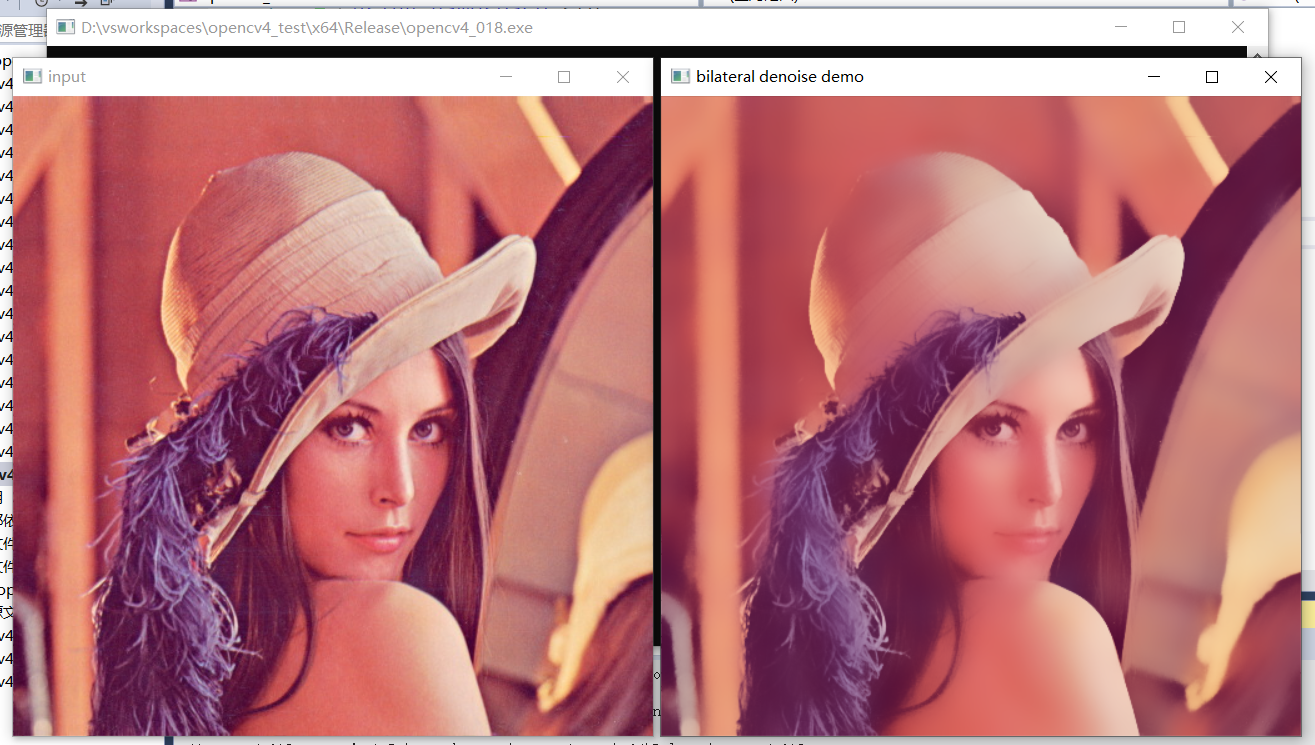

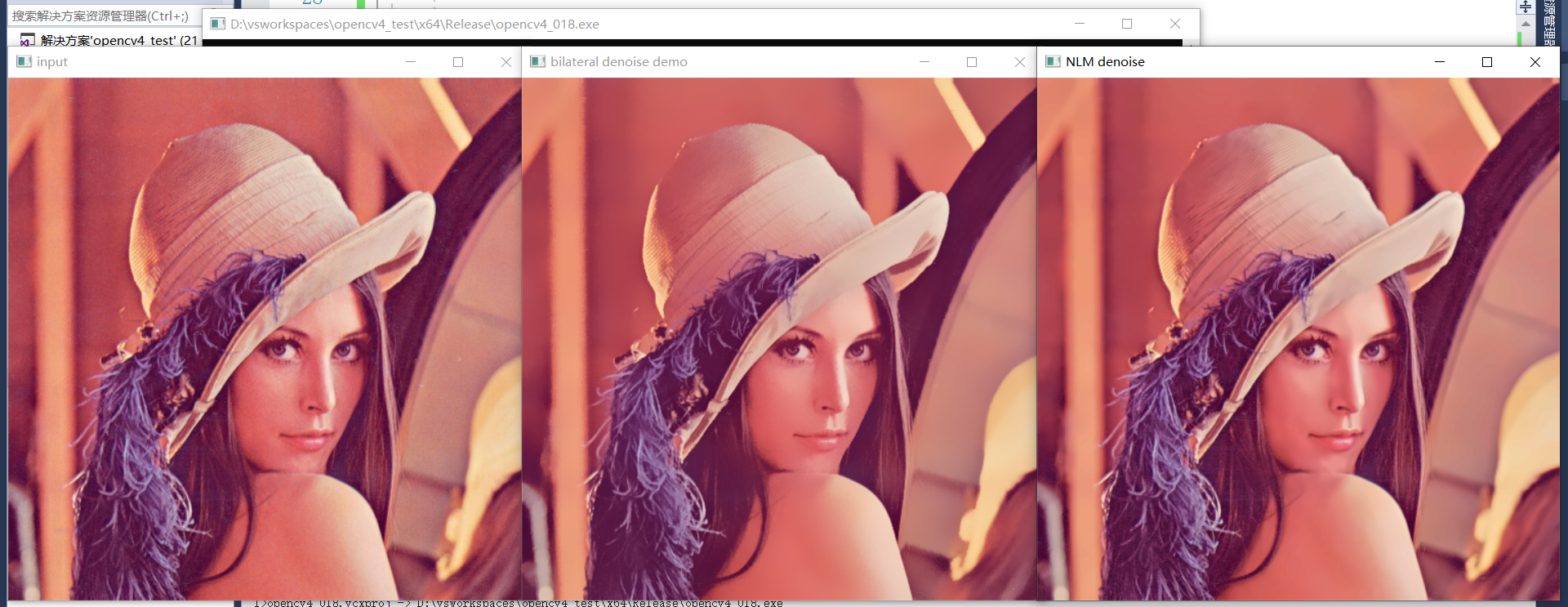

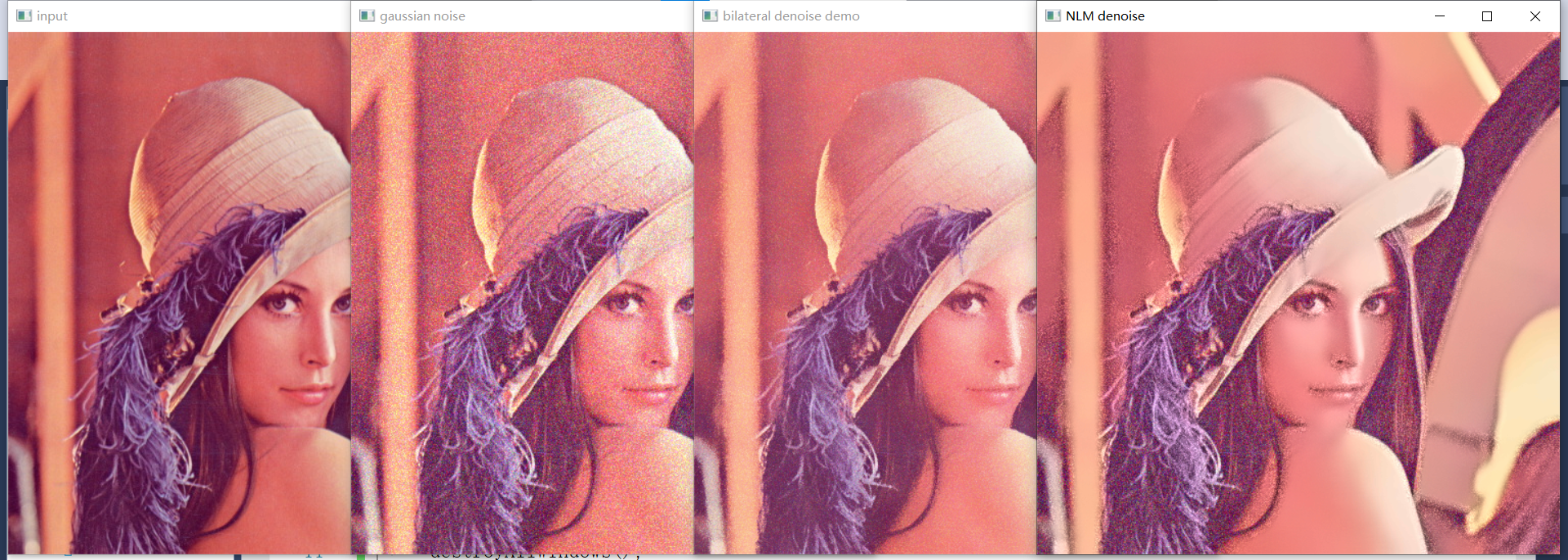

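Result:

Median, Gaussian, and bilateral filtering applied to Gaussian noise:

Bilateral filtering applied to the original image:

Tuned bilateral filtering and non-local means filtering applied to the original image:

Bilateral filtering and non-local means filtering applied to Gaussian noise: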

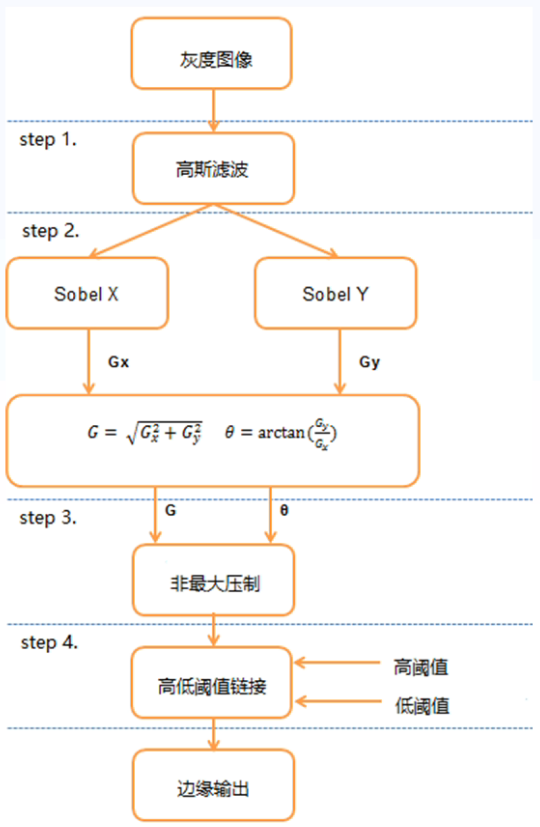

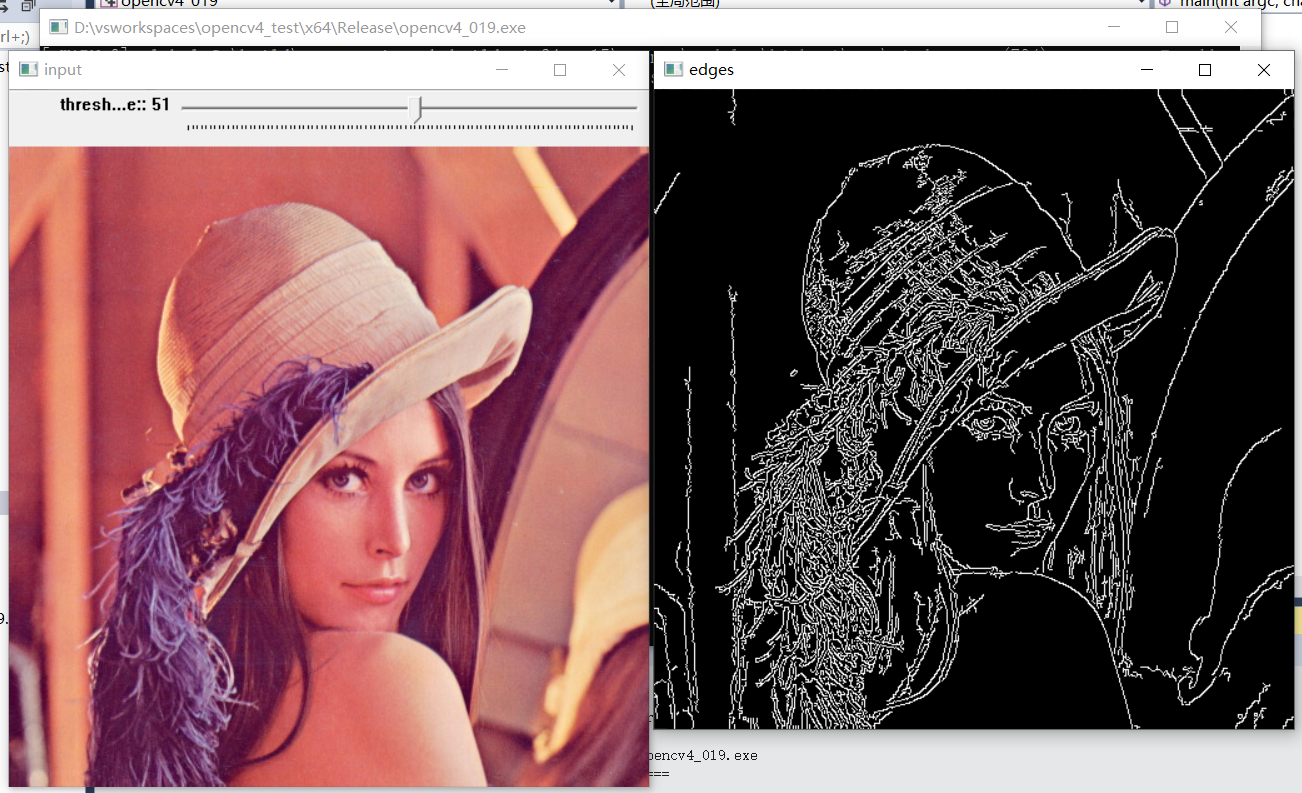

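26. Edge Extraction

- Edge normal: the unit vector along which the image intensity changes fastest.

- Edge direction: the direction perpendicular to the edge normal.

- Edge position or center: where the edge lies in the image.

- Edge strength: related to the local contrast along the normal; the higher the contrast, the stronger the edge.

Canny edge extraction:

- Denoise with a Gaussian blur

- Compute gradient magnitude and direction

- Non-maximum suppression (keep a pixel only if its gradient magnitude is larger than both neighbors along the gradient direction)

- Hysteresis linking with a high threshold T1 and a low threshold T2, where T1/T2 = 2~3:

  - Pixels above the high threshold T1 are all kept

  - Pixels below the low threshold T2 are all discarded

  - Pixels between T2 and T1 are kept if they connect (8-neighborhood) to a pixel above the high threshold; otherwise they are discarded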
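The double-threshold linking step can be illustrated on a one-dimensional row of gradient magnitudes. The sketch below is a simplification, assuming 1-D adjacency instead of the 8-neighborhood; `hysteresis1D` is a hypothetical name, not an OpenCV call.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// 1-D sketch of Canny hysteresis: values above `high` are strong edges,
// values in [low, high] are weak and survive only if they touch a kept pixel.
std::vector<bool> hysteresis1D(const std::vector<double>& mag, double high, double low) {
    assert(high >= low);
    const std::size_t n = mag.size();
    std::vector<bool> keep(n, false);
    for (std::size_t i = 0; i < n; ++i) keep[i] = mag[i] > high;  // strong pixels
    auto weak = [&](std::size_t i) { return mag[i] >= low && mag[i] <= high; };
    // Forward pass: link weak pixels to a kept pixel on their left.
    for (std::size_t i = 1; i < n; ++i) {
        if (!keep[i] && weak(i) && keep[i - 1]) keep[i] = true;
    }
    // Backward pass: link weak pixels to a kept pixel on their right.
    for (std::size_t i = n; i-- > 0;) {
        if (i + 1 < n && !keep[i] && weak(i) && keep[i + 1]) keep[i] = true;
    }
    return keep;
}
```

In two dimensions the same idea is usually implemented as a flood fill that starts from the strong pixels, since two linear passes are no longer enough to follow arbitrary chains of weak pixels.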

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int t1 = 50;

Mat src;

void canny_demo(int, void*) {

Mat edges, dst;

Canny(src, edges, t1, t1 * 3, 3, false);

bitwise_and(src, src, dst, edges); //Keep the original colors only at edge pixels

imshow("edges", dst);

}

int main(int argc, char** argv) {

src = imread("D:/images/lena.jpg");

if (src.empty()) {

printf("could not find image file");

return -1;

}

namedWindow("input", WINDOW_AUTOSIZE);

imshow("input", src);

//Canny edge extraction

createTrackbar("threshold value:", "input", &t1, 100, canny_demo);

canny_demo(0, 0); //Draw once up front; otherwise the result may not appear until the trackbar is moved

waitKey(0);

destroyAllWindows();

return 0;

}

Result:

Canny edge extraction:

Canny color edge extraction:

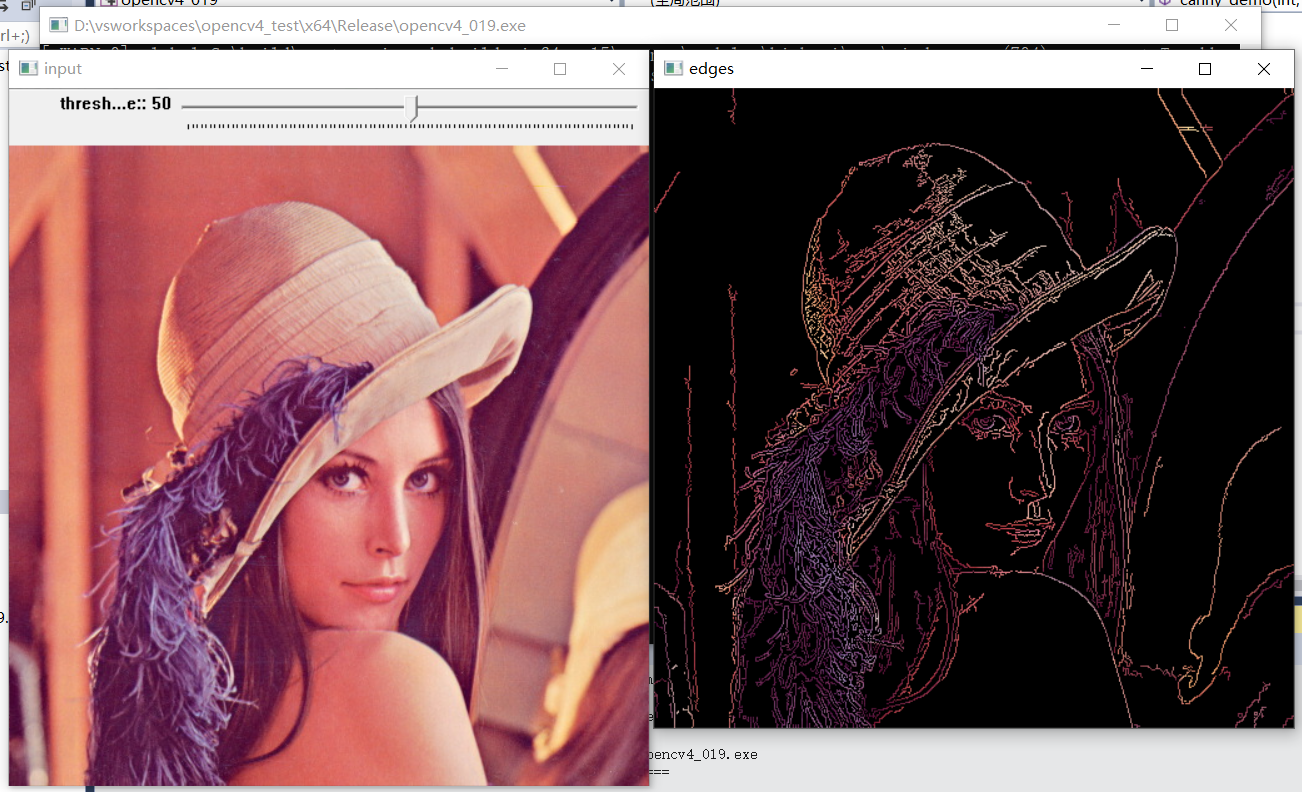

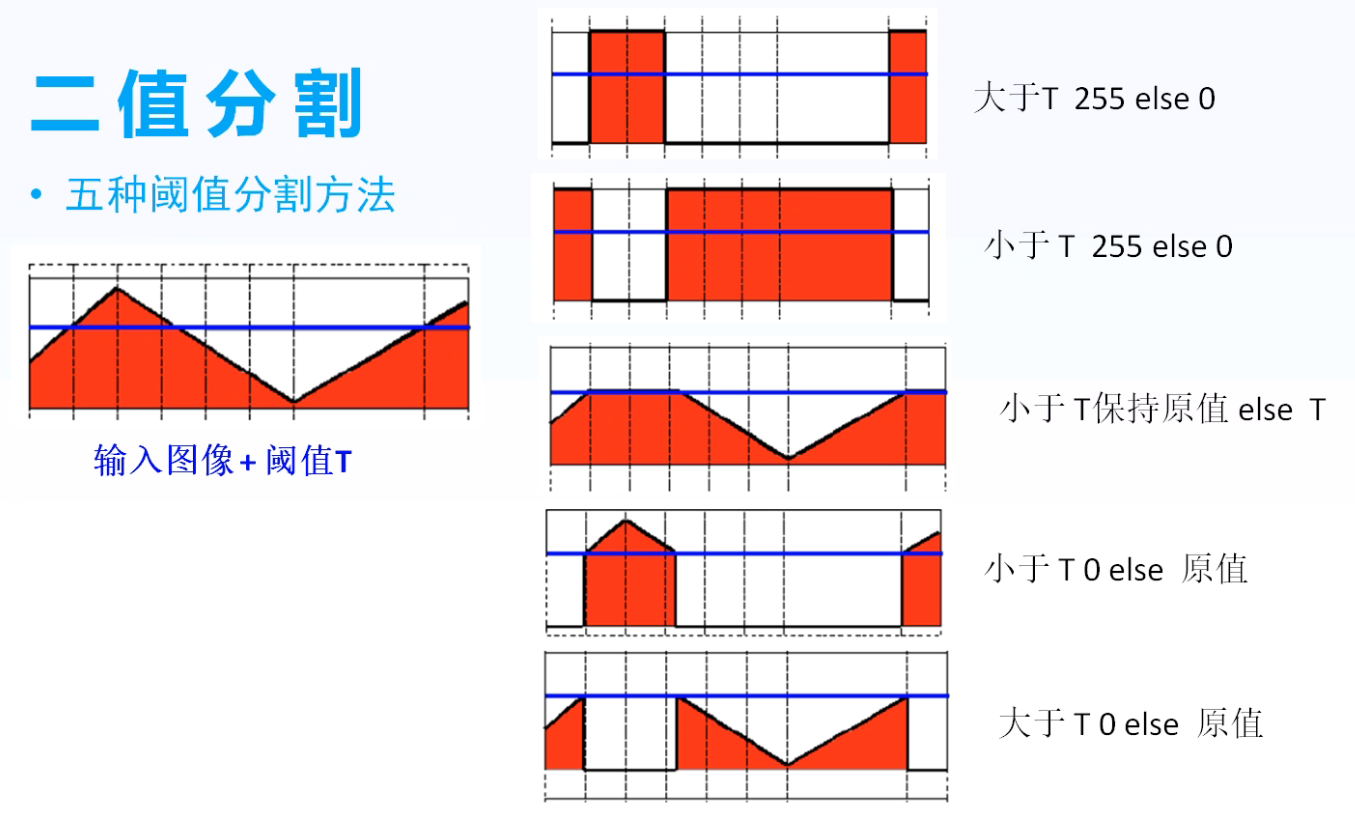

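27. Binary Image Concepts

Grayscale and binary images:

- Grayscale image - single channel, values in 0~255

- Binary image - single channel, values 0 (black) and 255 (white)

Binary segmentation:

- Five thresholding modes (THRESH_BINARY, THRESH_BINARY_INV, THRESH_TRUNC, THRESH_TOZERO, THRESH_TOZERO_INV)

Binarization vs. thresholding:

- Binarization sets pixels above the threshold to 255 and all other pixels to 0. Thresholding changes only one side of the threshold (sets it to 0, or clips it to the threshold value for THRESH_TRUNC) and leaves the remaining pixels unchanged.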
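The five modes differ only in what happens on each side of the threshold. A per-pixel sketch in plain C++ is shown below; the `Mode` enum and `applyThreshold` are illustrative names that mirror the behavior of the `THRESH_*` flags used in the listing, not OpenCV identifiers.

```cpp
#include <cassert>

// Illustrative stand-ins for THRESH_BINARY, THRESH_BINARY_INV, THRESH_TRUNC,
// THRESH_TOZERO and THRESH_TOZERO_INV.
enum class Mode { Binary, BinaryInv, Trunc, ToZero, ToZeroInv };

// Apply one thresholding mode to a single 8-bit pixel value.
int applyThreshold(int v, int thresh, int maxval, Mode mode) {
    assert(v >= 0 && v <= 255);
    switch (mode) {
        case Mode::Binary:    return v > thresh ? maxval : 0;  // binarize
        case Mode::BinaryInv: return v > thresh ? 0 : maxval;  // inverted binarize
        case Mode::Trunc:     return v > thresh ? thresh : v;  // clip from above
        case Mode::ToZero:    return v > thresh ? v : 0;       // zero the dark side
        case Mode::ToZeroInv: return v > thresh ? 0 : v;       // zero the bright side
    }
    return v;
}
```

Only the first two modes produce a binary image; the other three keep part of the grayscale range intact.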

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

//Mat src = imread("D:/images/lena.jpg");

Mat src = imread("D:/images/ml.png");

if (src.empty()) {

printf("could not find image file");

return -1;

}

namedWindow("input", WINDOW_AUTOSIZE);

imshow("input", src);

Mat gray, binary;

cvtColor(src, gray, COLOR_BGR2GRAY);

imshow("gray", gray);

threshold(gray, binary, 127, 255, THRESH_BINARY);

imshow("threshold binary", binary);

threshold(gray, binary, 127, 255, THRESH_BINARY_INV);

imshow("threshold binary invert", binary);

threshold(gray, binary, 127, 255, THRESH_TRUNC);

imshow("threshold TRUNC", binary);

threshold(gray, binary, 127, 255, THRESH_TOZERO);

imshow("threshold to zero", binary);

threshold(gray, binary, 127, 255, THRESH_TOZERO_INV);

imshow("threshold to zero invert", binary);

waitKey(0);

destroyAllWindows();

return 0;

}

Result:

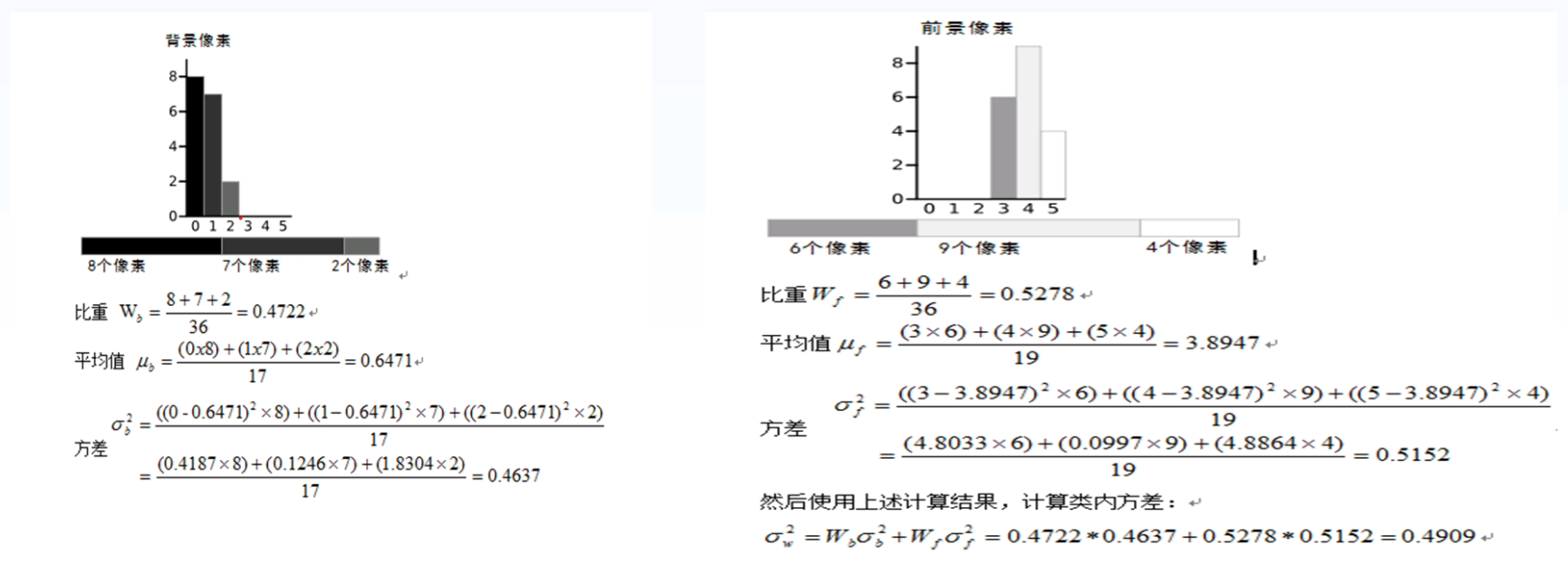

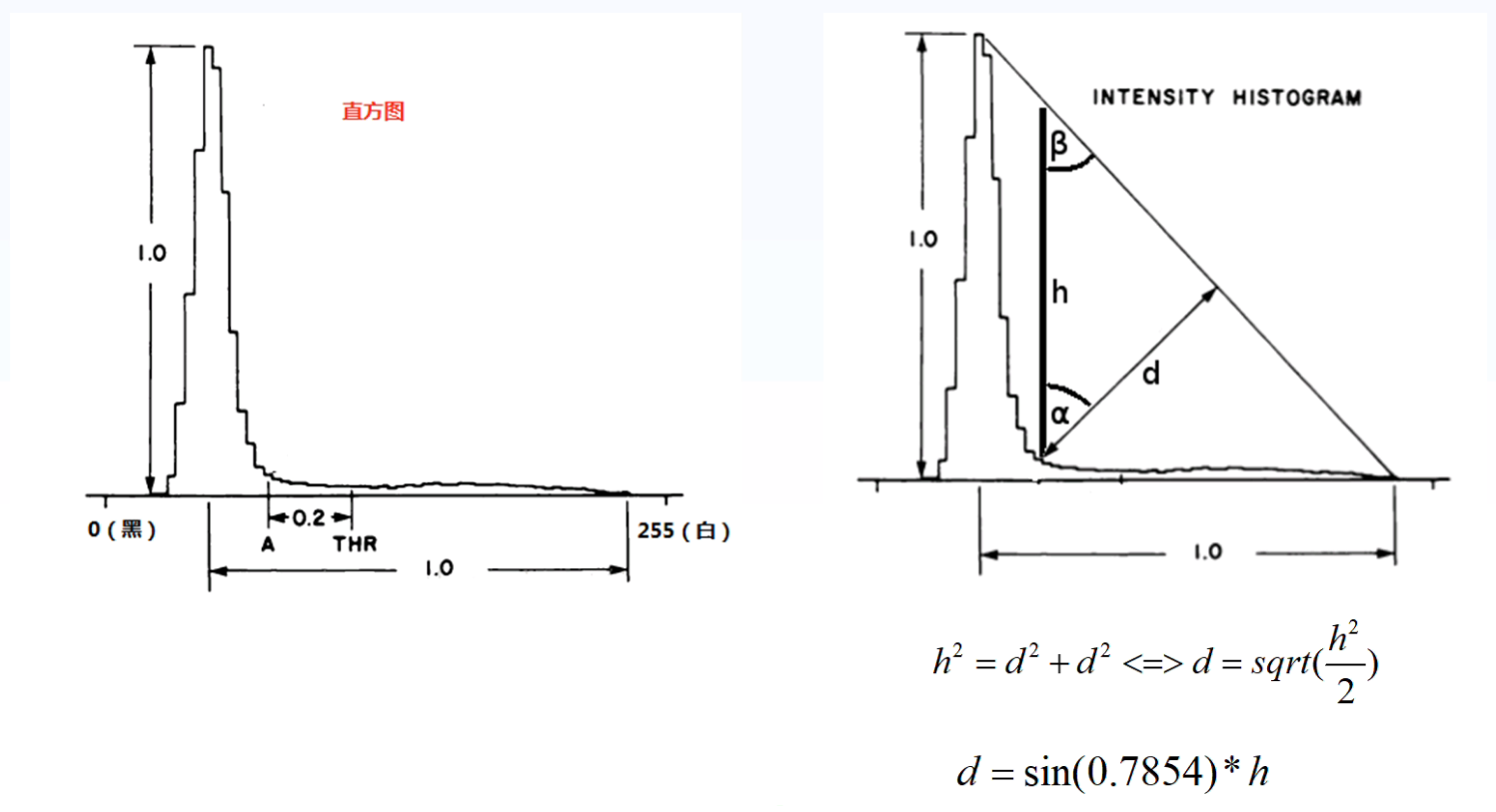

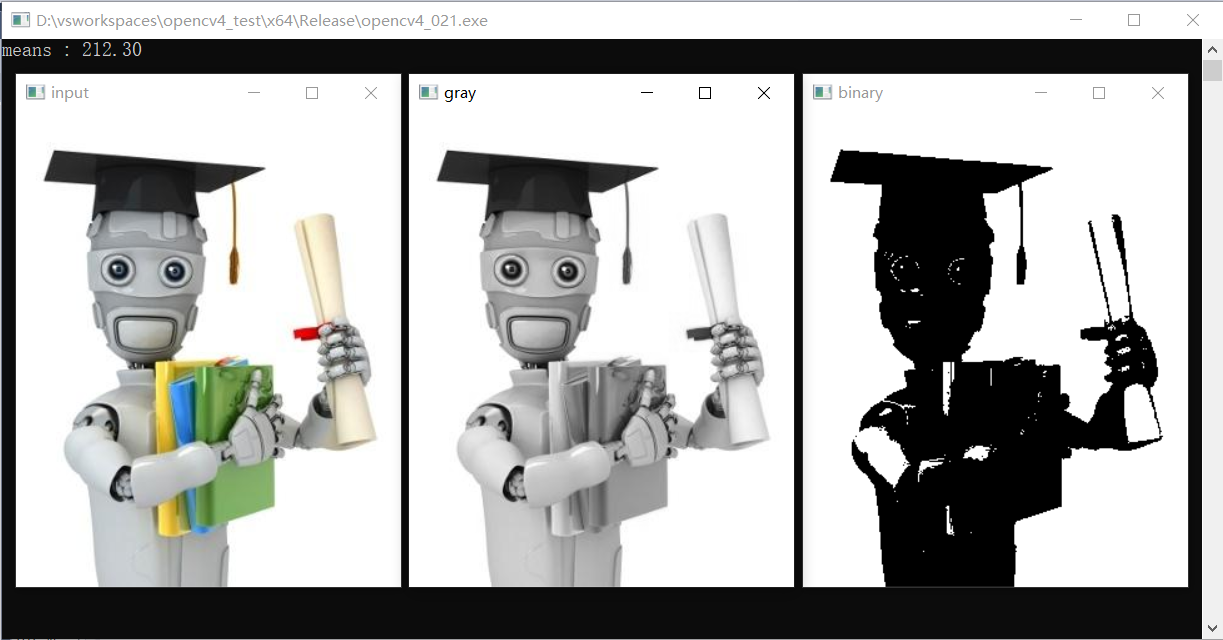

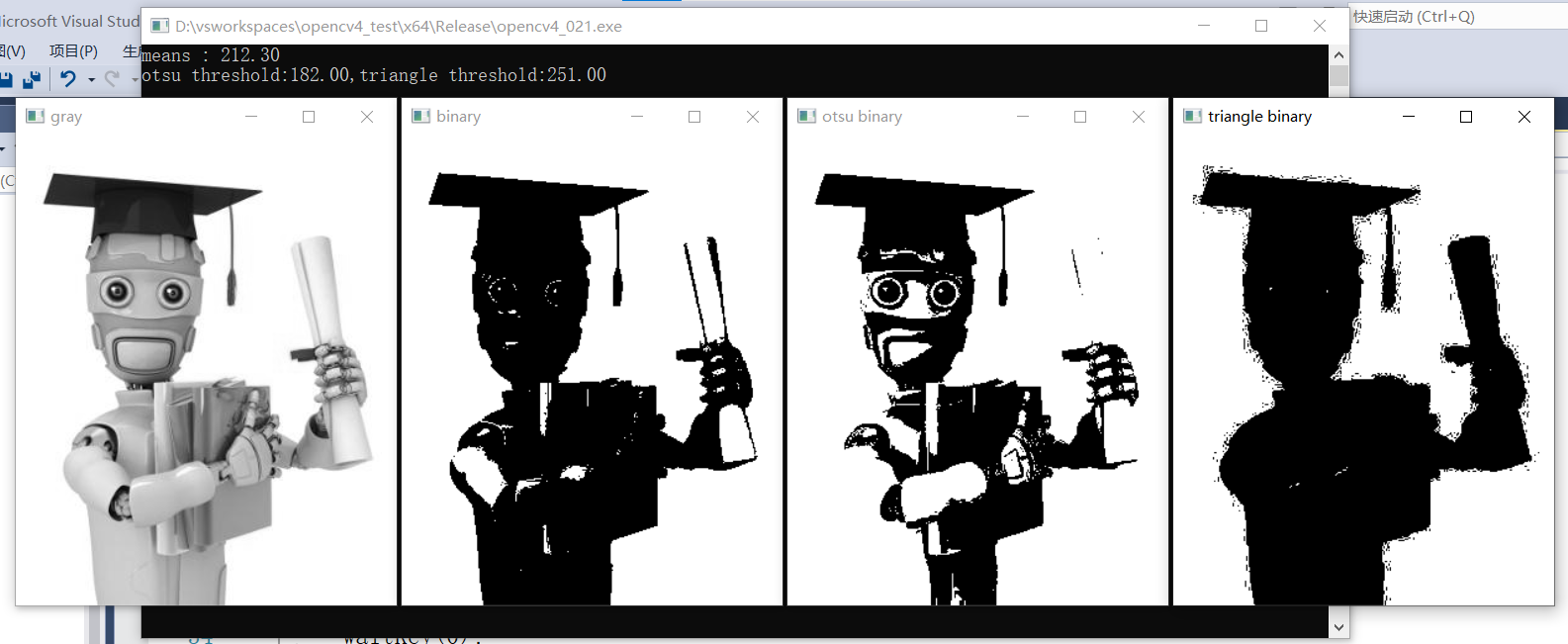

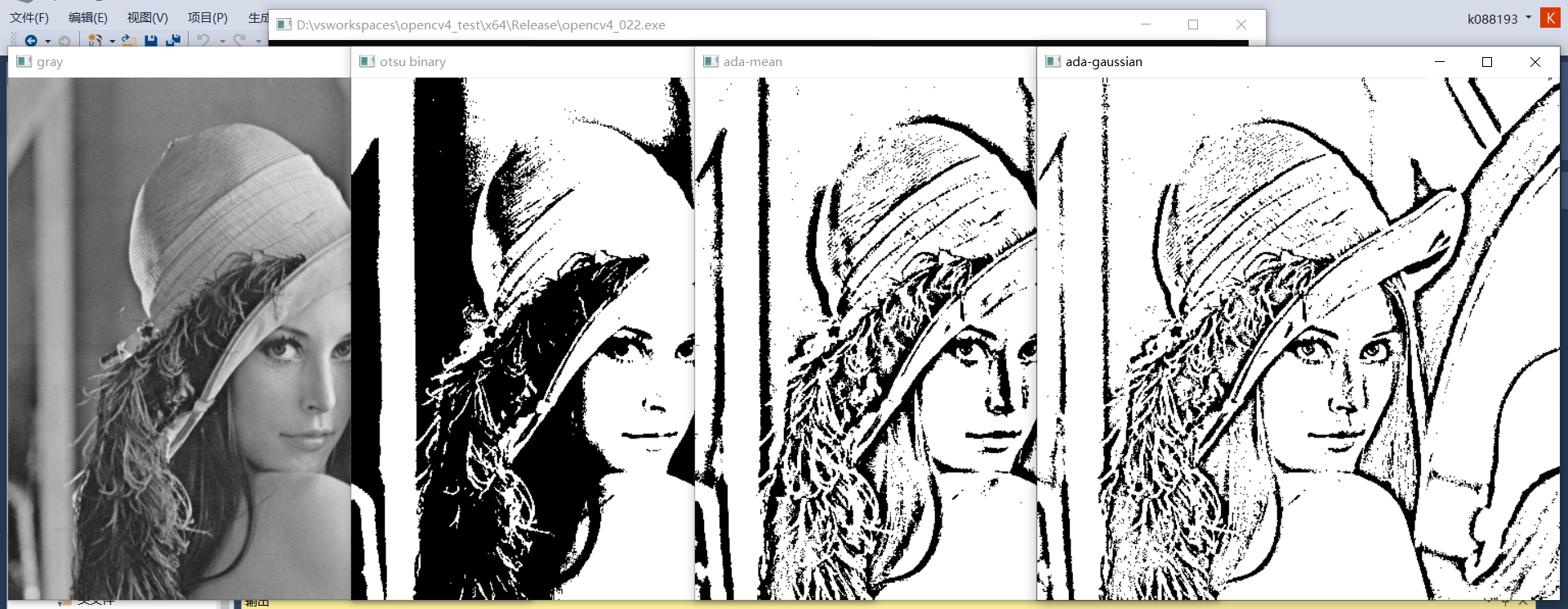

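28. Global Threshold Segmentation

- In binary segmentation, the most important step is computing the threshold

- There are many threshold algorithms; they fall into two families, global thresholds and adaptive thresholds

- Otsu (picks the threshold that minimizes the sum of within-class variances); works well when the histogram has clearly separated peaks, ideally two

- Triangle (triangle method); works well for single-peak images such as medical images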
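To make the Otsu criterion concrete, here is a minimal sketch that searches a 256-bin histogram for the threshold with the largest between-class variance, which is equivalent to the smallest within-class variance. The function name `otsuThreshold` is an assumption for this example; when several thresholds tie, OpenCV's own implementation may pick a different one.

```cpp
#include <array>
#include <cassert>
#include <cstdint>

// Return the threshold t (pixels <= t form class 0, pixels > t form class 1)
// that maximizes the between-class variance w0 * w1 * (mu0 - mu1)^2.
int otsuThreshold(const std::array<std::uint64_t, 256>& hist) {
    std::uint64_t total = 0;
    double sumAll = 0.0;
    for (int i = 0; i < 256; ++i) {
        total += hist[i];
        sumAll += static_cast<double>(i) * static_cast<double>(hist[i]);
    }
    assert(total > 0);
    std::uint64_t w0 = 0;   // pixel count of class 0
    double sum0 = 0.0;      // intensity sum of class 0
    double best = -1.0;
    int bestT = 0;
    for (int t = 0; t < 256; ++t) {
        w0 += hist[t];
        sum0 += static_cast<double>(t) * static_cast<double>(hist[t]);
        if (w0 == 0) continue;          // class 0 still empty
        std::uint64_t w1 = total - w0;
        if (w1 == 0) break;             // class 1 empty from here on
        double mu0 = sum0 / static_cast<double>(w0);
        double mu1 = (sumAll - sum0) / static_cast<double>(w1);
        double between = static_cast<double>(w0) * static_cast<double>(w1) * (mu0 - mu1) * (mu0 - mu1);
        if (between > best) { best = between; bestT = t; }
    }
    return bestT;
}
```

For a histogram with one cluster at gray level 10 and another at 200, any threshold between the two clusters separates them, and the search returns one of those values.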

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src = imread("D:/images/ml.png");

if (src.empty()) {

printf("could not find image file");

return -1;

}

namedWindow("input", WINDOW_AUTOSIZE);

imshow("input", src);

Mat gray, binary;

cvtColor(src, gray, COLOR_BGR2GRAY);

imshow("gray", gray);

Scalar m = mean(gray);

printf("means : %.2f\n", m[0]);

threshold(gray, binary, m[0], 255, THRESH_BINARY);

imshow("binary", binary);

double t1 = threshold(gray, binary, 0, 255, THRESH_BINARY | THRESH_OTSU);

imshow("otsu binary", binary);

double t2 = threshold(gray, binary, 0, 255, THRESH_BINARY | THRESH_TRIANGLE);

imshow("triangle binary", binary);

printf("otsu threshold:%.2f,triangle threshold:%.2f\n", t1, t2);

waitKey(0);

destroyAllWindows();

return 0;

}

Result:

1. Segmentation using the mean pixel value

2. Segmentation using Otsu and Triangle

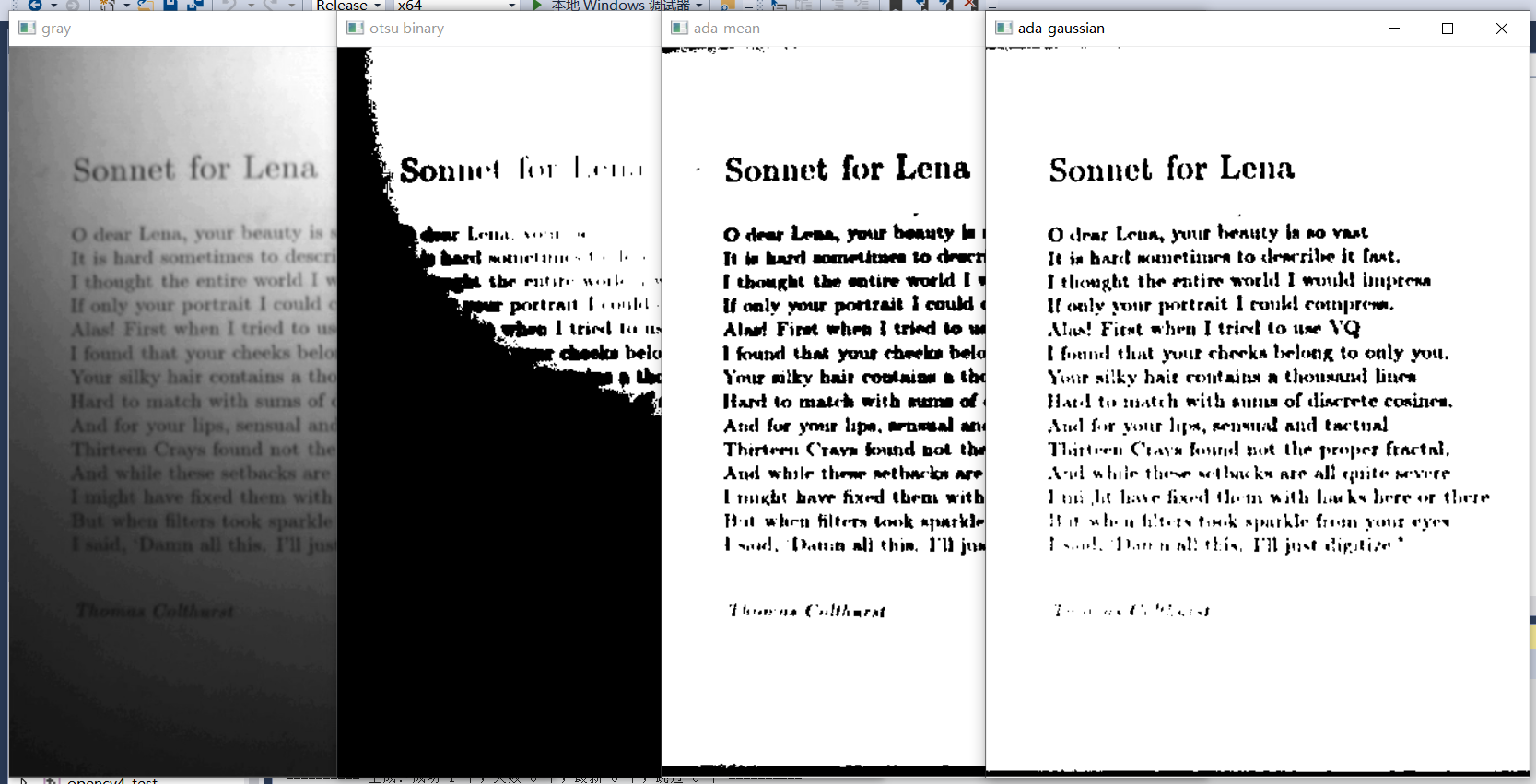

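29. Adaptive Threshold Segmentation

- Limitation of a global threshold: images with uneven illumination are easily segmented incorrectly

- Adaptive thresholding blurs the image, takes the difference from the original, and binarizes the result

- Algorithm: (original + C) - D > 0 ? 255 : 0

  - Blur the image (mean/box blur or Gaussian blur) → D

  - Add the offset constant C to the original image → S

  - Output 255 where S - D > 0, otherwise 0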
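The flow above can be sketched with a mean (box) blur on a small grayscale array. This is an illustration under stated assumptions: `adaptiveMeanThreshold` and the `Image` alias are hypothetical names, and borders are handled by replicating the edge pixels.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

using Image = std::vector<std::vector<int>>;

// Mean adaptive threshold: a pixel becomes 255 when (pixel + C) exceeds the
// mean of its blockSize x blockSize neighborhood, otherwise 0.
Image adaptiveMeanThreshold(const Image& src, int blockSize, int C) {
    assert(!src.empty() && !src[0].empty());
    assert(blockSize >= 3 && blockSize % 2 == 1);
    const int rows = static_cast<int>(src.size());
    const int cols = static_cast<int>(src[0].size());
    const int r = blockSize / 2;
    Image dst(rows, std::vector<int>(cols, 0));
    for (int y = 0; y < rows; ++y) {
        for (int x = 0; x < cols; ++x) {
            long sum = 0;
            for (int dy = -r; dy <= r; ++dy) {
                for (int dx = -r; dx <= r; ++dx) {
                    // Clamp coordinates so border pixels are replicated.
                    int yy = std::min(std::max(y + dy, 0), rows - 1);
                    int xx = std::min(std::max(x + dx, 0), cols - 1);
                    sum += src[yy][xx];
                }
            }
            double D = static_cast<double>(sum) / (blockSize * blockSize);  // blurred value
            dst[y][x] = (src[y][x] + C - D > 0.0) ? 255 : 0;               // S - D > 0 ?
        }
    }
    return dst;
}
```

Because each pixel is compared with its own neighborhood mean, a slow brightness gradient across the image cancels out, which is what a single global threshold cannot do.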

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

//Mat src = imread("D:/images/sonnet for lena.png");

Mat src = imread("D:/images/lena.jpg");

if (src.empty()) {

printf("could not find image file");

return -1;

}

namedWindow("input", WINDOW_AUTOSIZE);

imshow("input", src);

Mat gray, binary;

cvtColor(src, gray, COLOR_BGR2GRAY);

imshow("gray", gray);

double t1 = threshold(gray, binary, 0, 255, THRESH_BINARY | THRESH_OTSU);

imshow("otsu binary", binary);

adaptiveThreshold(gray, binary, 255, ADAPTIVE_THRESH_GAUSSIAN_C, THRESH_BINARY, 25, 10);

imshow("ada-gaussian", binary);

adaptiveThreshold(gray, binary, 255, ADAPTIVE_THRESH_MEAN_C, THRESH_BINARY, 25, 10);

imshow("ada-mean", binary);

waitKey(0);

destroyAllWindows();

return 0;

}

Result:

1. Text:

2. Other images:

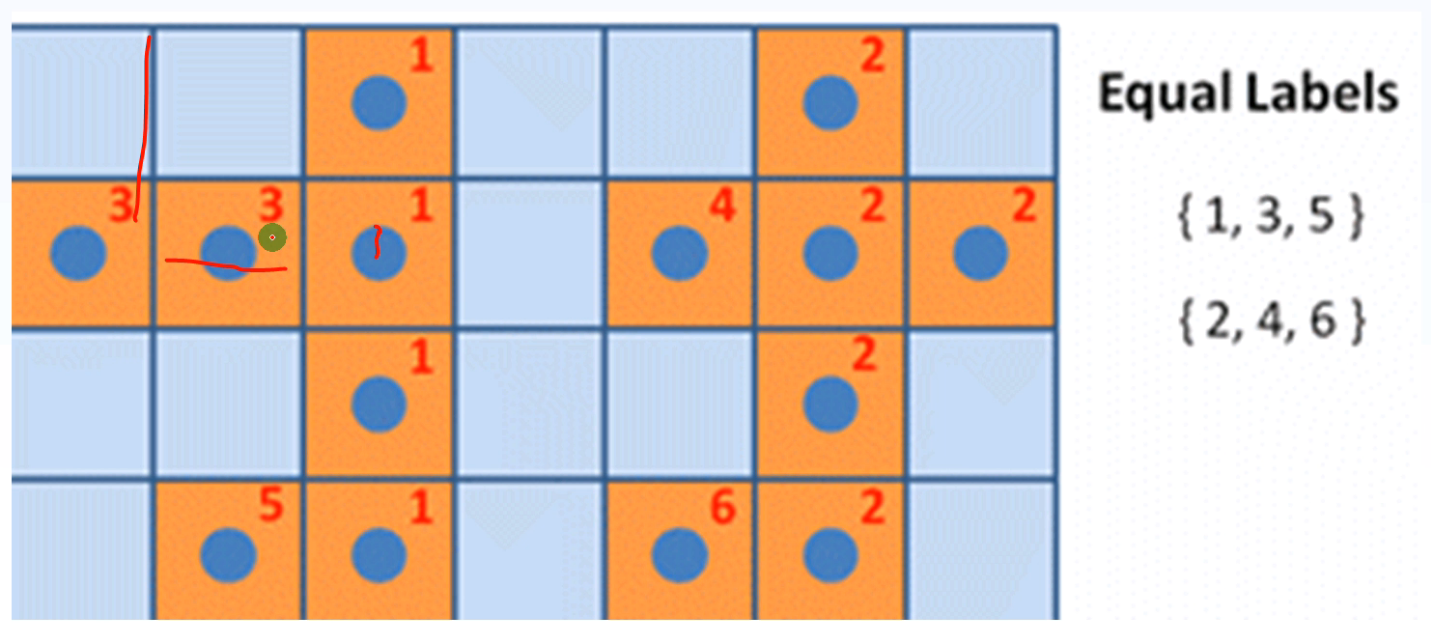

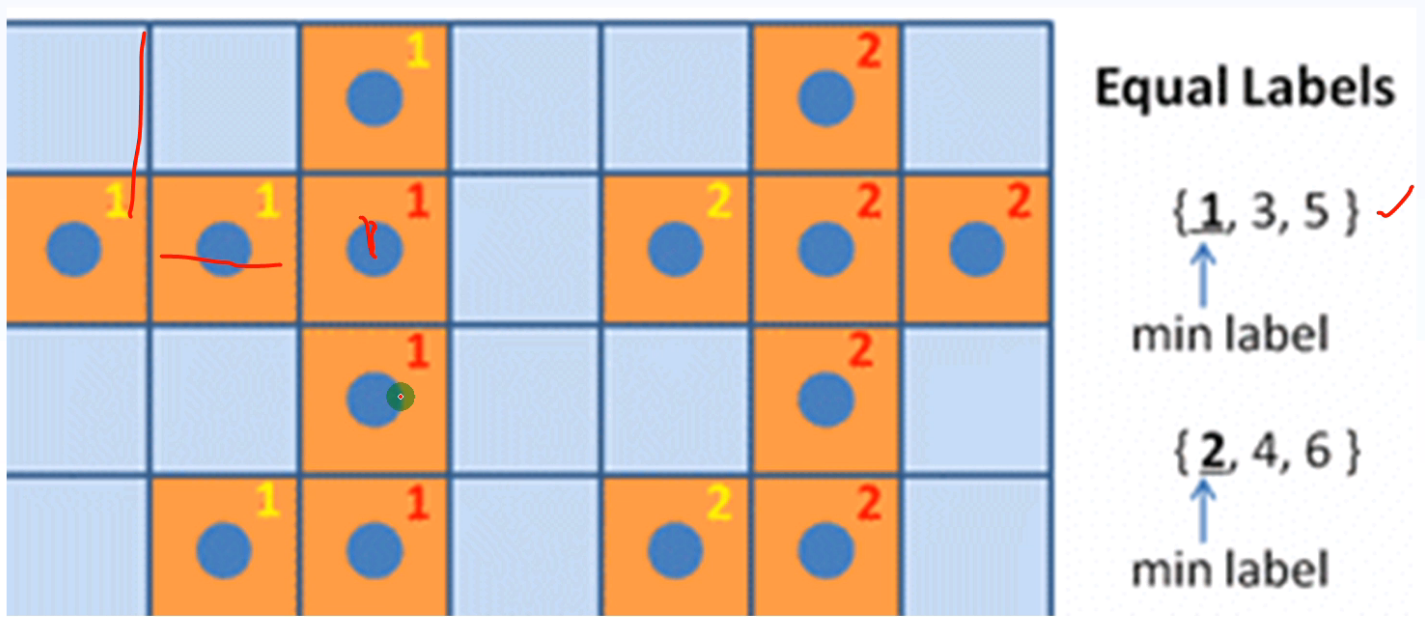

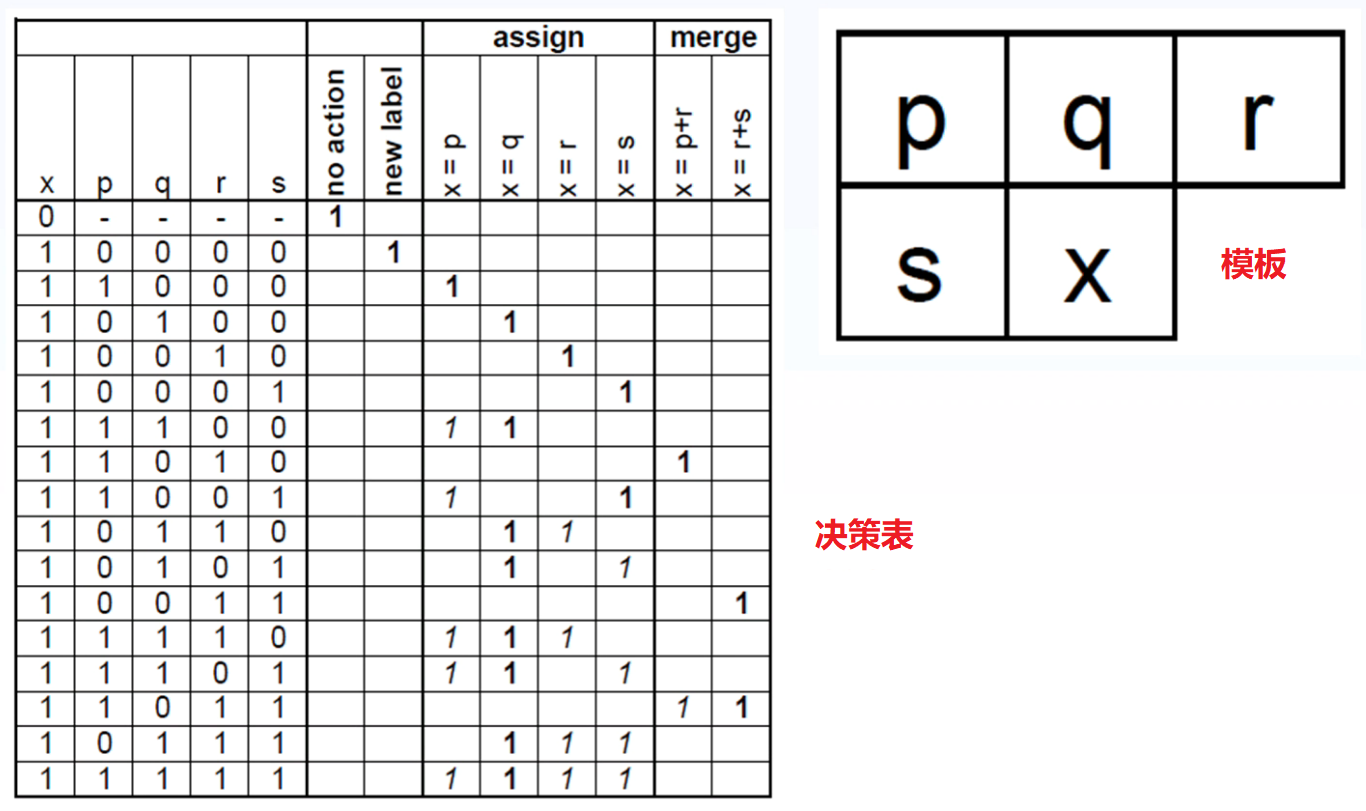

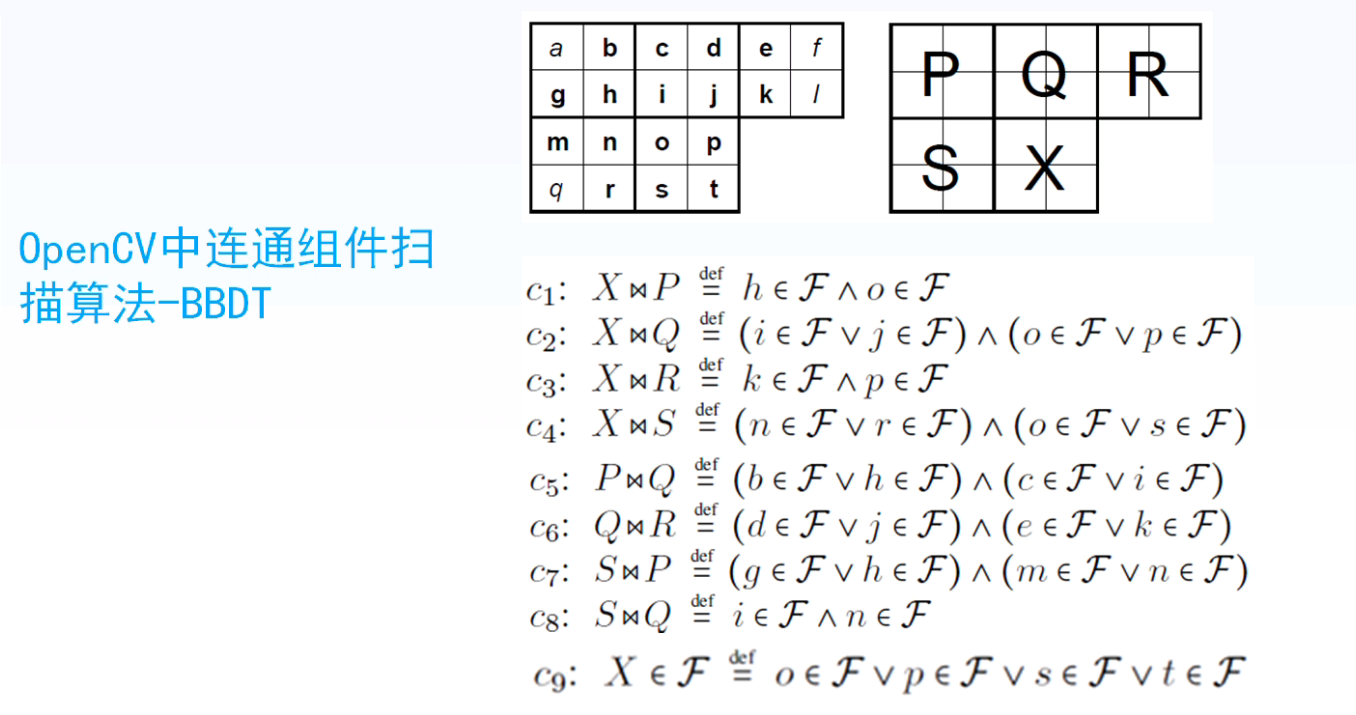

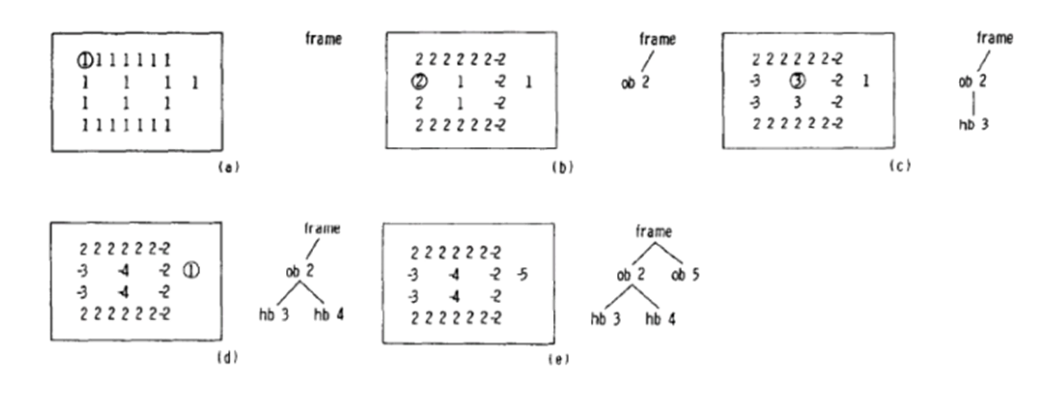

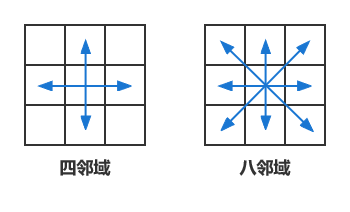

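30. Connected-Component Labeling

4-neighborhood and 8-neighborhood connectivity:

Common algorithm: BBDT (Block-Based Decision Table)

- Pixel-based scanning

- Block-based scanning

- Two-pass scanning:

  - First pass:

  - Second pass:

Scan mask:

Decision table:

Connected-component labeling algorithm in OpenCV - BBDT: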

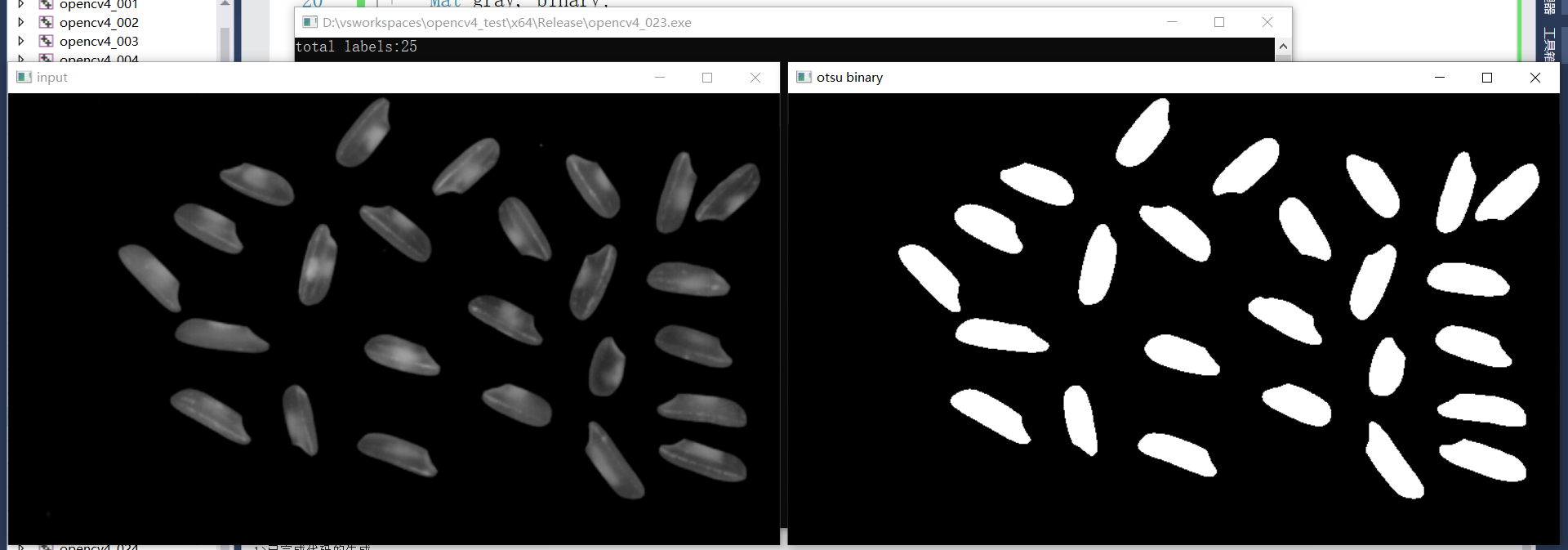
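As a reference point for the two-pass idea, the following sketch labels a binary grid with 4-connectivity using a union-find table. It is the classic pixel-based two-pass method, not the block-based BBDT algorithm; `labelComponents4`, `findRoot`, and `Grid` are names invented for this example.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

using Grid = std::vector<std::vector<int>>;

// Follow parent links to the representative label, halving paths on the way.
int findRoot(std::vector<int>& parent, int x) {
    while (parent[x] != x) {
        parent[x] = parent[parent[x]];
        x = parent[x];
    }
    return x;
}

// Two-pass labeling, 4-connectivity. Non-zero cells are foreground.
// Fills `labels` (0 = background, 1..N = components) and returns N.
int labelComponents4(const Grid& binary, Grid& labels) {
    assert(!binary.empty() && !binary[0].empty());
    const int rows = static_cast<int>(binary.size());
    const int cols = static_cast<int>(binary[0].size());
    labels.assign(rows, std::vector<int>(cols, 0));
    std::vector<int> parent(1, 0);  // index 0 is reserved for the background
    // Pass 1: assign provisional labels and record equivalences.
    for (int y = 0; y < rows; ++y) {
        for (int x = 0; x < cols; ++x) {
            if (binary[y][x] == 0) continue;
            int up = (y > 0) ? labels[y - 1][x] : 0;
            int left = (x > 0) ? labels[y][x - 1] : 0;
            if (up == 0 && left == 0) {
                int next = static_cast<int>(parent.size());
                parent.push_back(next);
                labels[y][x] = next;
            } else if (up != 0 && left != 0) {
                int ru = findRoot(parent, up);
                int rl = findRoot(parent, left);
                int lo = std::min(ru, rl);
                int hi = std::max(ru, rl);
                parent[hi] = lo;  // merge the two provisional labels
                labels[y][x] = lo;
            } else {
                labels[y][x] = (up != 0) ? up : left;
            }
        }
    }
    // Pass 2: replace provisional labels with compact final labels.
    std::vector<int> remap(parent.size(), 0);
    int count = 0;
    for (int y = 0; y < rows; ++y) {
        for (int x = 0; x < cols; ++x) {
            if (labels[y][x] == 0) continue;
            int root = findRoot(parent, labels[y][x]);
            if (remap[root] == 0) remap[root] = ++count;
            labels[y][x] = remap[root];
        }
    }
    return count;
}
```

Block-based methods reach the same result while examining 2x2 blocks of pixels at a time and using a decision table to skip unnecessary neighbor checks.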

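31. Connected-Component Labeling with Statistics

API notes:

- connectedComponentsWithStats

- Black background

| Output | Content |
|---|---|
| 1 | Number of connected components |
| 2 | Label matrix |
| 3 | Statistics matrix |
| 4 | Centroid coordinates |

Statistics matrix [label, COLUMN]

| Label index | Column 0 | Column 1 | Column 2 | Column 3 | Column 4 |
|---|---|---|---|---|---|
| 1 | CC_STAT_LEFT | CC_STAT_TOP | CC_STAT_WIDTH | CC_STAT_HEIGHT | CC_STAT_AREA |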

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

RNG rng(12345);

void ccl_stats_demo(Mat &image);

int main(int argc, char** argv) {

//Mat src = imread("D:/images/lena.jpg");

Mat src = imread("D:/images/rice.png");

if (src.empty()) {

printf("could not find image file");

return -1;

}

namedWindow("input", WINDOW_AUTOSIZE);

imshow("input", src);

GaussianBlur(src, src, Size(3, 3), 0); //Denoise with a Gaussian blur before segmentation

Mat gray, binary;

cvtColor(src, gray, COLOR_BGR2GRAY);

//adaptiveThreshold(gray, binary, 255, ADAPTIVE_THRESH_GAUSSIAN_C, THRESH_BINARY, 81, 10);

threshold(gray, binary, 0, 255, THRESH_BINARY | THRESH_OTSU);

imshow("otsu binary", binary);

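ccl_stats_demo(binary); //Connected components with statistics

/* Connected components without statistics

Mat labels = Mat::zeros(binary.size(), CV_32S);

int num_labels = connectedComponents(binary, labels, 8, CV_32S, CCL_DEFAULT); //In earlier OpenCV 4 releases CCL_DEFAULT selects BBDT (CCL_GRANA) for 8-connectivity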

vector<Vec3b> colorTable(num_labels);

//background color

colorTable[0] = Vec3b(0, 0, 0);

for (int i = 1; i < num_labels; i++) { //Label 0 is the background, so start from 1

colorTable[i] = Vec3b(rng.uniform(0, 256), rng.uniform(0, 256), rng.uniform(0, 256));

}

Mat result = Mat::zeros(src.size(), src.type());

int w = result.cols;

int h = result.rows;

for (int row = 0; row < h; row++) {

for (int col = 0; col < w; col++) {

int label = labels.at<int>(row, col); //Look up the label of each pixel

result.at<Vec3b>(row, col) = colorTable[label]; //Color the pixel according to its label

}

}

putText(result, format("number:%d", num_labels - 1), Point(50, 50), FONT_HERSHEY_PLAIN, 1.0, Scalar(0, 255, 0), 1);

printf("total labels:%d\n", (num_labels - 1)); //num_labels includes the background; num_labels - 1 is the number of foreground objects

imshow("CCL demo", result);

*/

waitKey(0);

destroyAllWindows();

return 0;

}

void ccl_stats_demo(Mat &image) {

Mat labels = Mat::zeros(image.size(), CV_32S);

Mat stats, centroids;

int num_labels = connectedComponentsWithStats(image, labels, stats, centroids, 8, CV_32S, CCL_DEFAULT);

vector<Vec3b> colorTable(num_labels);

//background color

colorTable[0] = Vec3b(0, 0, 0);

for (int i = 1; i < num_labels; i++) { //Label 0 is the background, so start from 1

colorTable[i] = Vec3b(rng.uniform(0, 256), rng.uniform(0, 256), rng.uniform(0, 256));

}

Mat result = Mat::zeros(image.size(), CV_8UC3);

int w = result.cols;

int h = result.rows;

for (int row = 0; row < h; row++) {

for (int col = 0; col < w; col++) {

int label = labels.at<int>(row, col); //Look up the label of each pixel

result.at<Vec3b>(row, col) = colorTable[label]; //Color the pixel according to its label

}

}

for (int i = 1; i < num_labels; i++) {

//center

int cx = centroids.at<double>(i, 0);

int cy = centroids.at<double>(i, 1);

//rectangle and area

int x = stats.at<int>(i, CC_STAT_LEFT);

int y = stats.at<int>(i, CC_STAT_TOP);

int width = stats.at<int>(i, CC_STAT_WIDTH);

int height = stats.at<int>(i, CC_STAT_HEIGHT);

int area = stats.at<int>(i, CC_STAT_AREA);

//Draw the centroid

circle(result, Point(cx, cy), 3, Scalar(0, 0, 255), 2, 8, 0);

//Bounding rectangle

Rect box(x, y, width, height);

rectangle(result, box, Scalar(0, 255, 0), 2, 8);

putText(result, format("%d", area), Point(x, y), FONT_HERSHEY_PLAIN, 1.0, Scalar(0, 255, 0), 1);

}

putText(result, format("number:%d", num_labels - 1), Point(10, 10), FONT_HERSHEY_PLAIN, 1.0, Scalar(0, 255, 0), 1);

printf("total labels:%d\n", (num_labels - 1)); //num_labels includes the background; num_labels - 1 is the number of foreground objects

imshow("CCL demo", result);

}

Result:

1. Otsu binary segmentation

2. Connected components with statistics

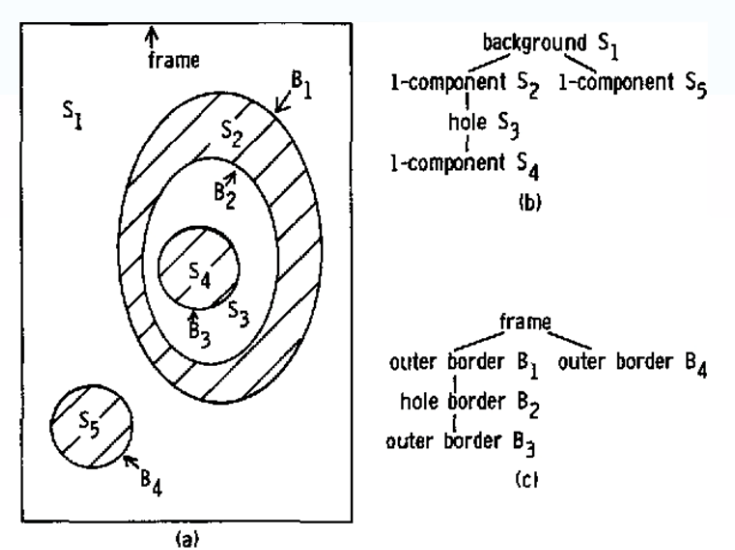

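32. Finding Image Contours

- Image contour - the boundary of a region in the image

- Mainly applies to binary images; a contour is a sequence of points

Contour finding

- Based on connected components

- Reflects the topological structure of the image (during border following, the last pixel is marked with a negative number, and pixels adjacent to a negative mark receive the same negative number)

API notes

- findContours

- drawContours

Hierarchy information: vector<Vec4i>

| Next contour at the same level | Previous contour at the same level | First child contour | Parent contour |
|---|---|---|---|
| hierarchy [i] [0] | hierarchy [i] [1] | hierarchy [i] [2] | hierarchy [i] [3] |

| Parameter | Description |
|---|---|
| image | Binary image |
| mode | Retrieval mode / topology (Tree, List, External) |
| method | Contour encoding (approximation) method |
| contours | Output contours; each contour is a sequence of points |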

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

RNG rng(12345);

int main(int argc, char** argv) {

//Mat src = imread("D:/images/lena.jpg");

Mat src = imread("D:/images/morph3.png");

if (src.empty()) {

printf("could not find image file");

return -1;

}

namedWindow("input", WINDOW_AUTOSIZE);

imshow("input", src);

//Binarize

GaussianBlur(src, src, Size(3, 3), 0); //Denoise with a Gaussian blur before segmentation

Mat gray, binary;

cvtColor(src, gray, COLOR_BGR2GRAY);

threshold(gray, binary, 0, 255, THRESH_BINARY | THRESH_OTSU);

imshow("otsu binary", binary);

//Find contours

vector<vector<Point>> contours;

vector<Vec4i> hirearchy;

//RETR_EXTERNAL retrieves only the outermost contours (contours nested inside them are skipped); RETR_TREE retrieves all contours with the full hierarchy

findContours(binary, contours, hirearchy, RETR_EXTERNAL, CHAIN_APPROX_SIMPLE, Point());

drawContours(src, contours, -1, Scalar(0, 0, 255), 2, 8);

imshow("find contours external demo", src);

findContours(binary, contours, hirearchy, RETR_TREE, CHAIN_APPROX_SIMPLE, Point()); //CHAIN_APPROX_SIMPLE keeps only segment end points, e.g. four points for a rectangle

drawContours(src, contours, -1, Scalar(0, 0, 255), 2, 8);

imshow("find contours tree demo", src);

waitKey(0);

destroyAllWindows();

return 0;

}

Result:

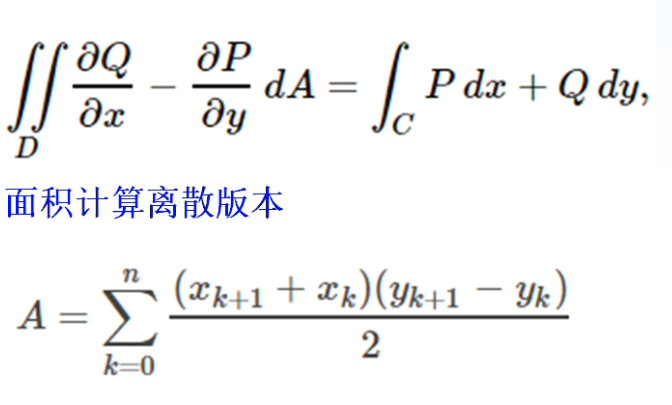

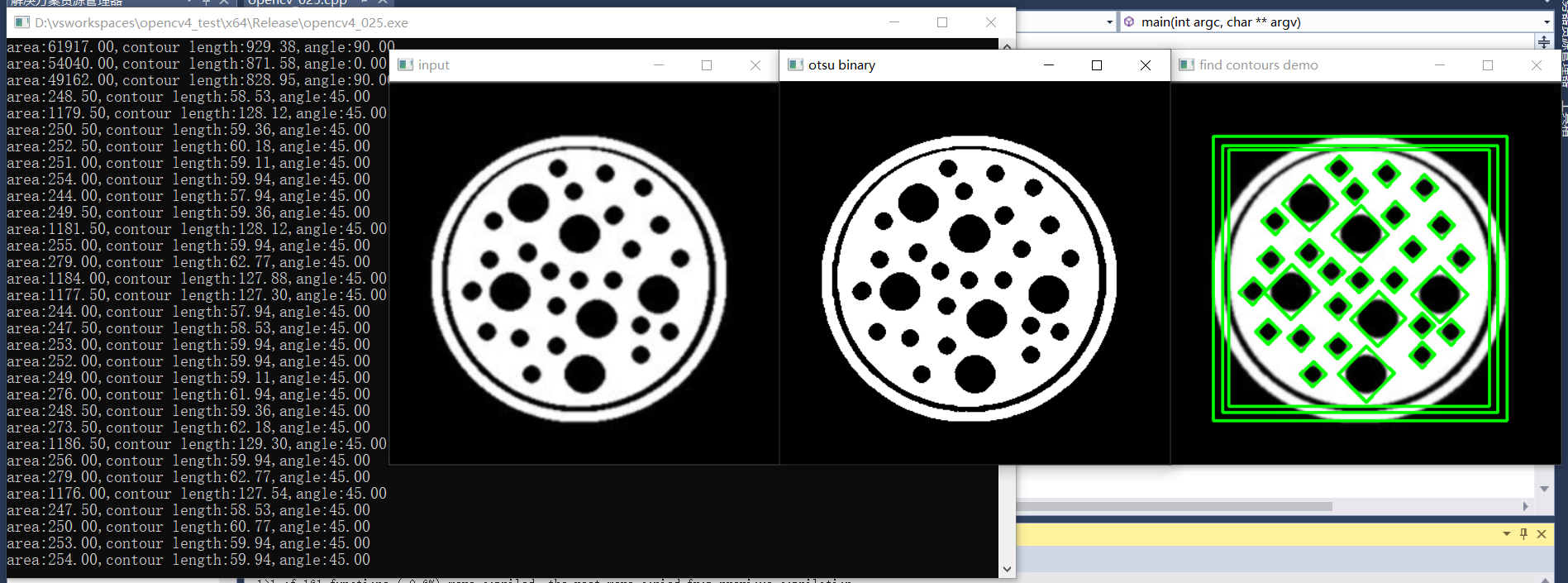

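33. Contour Measurements

Contour area and perimeter:

- Area is computed with Green's formula

  - Green's formula:

- Understanding the double integral:

(1) Volume of a curved-top cylinder

What is a curved-top cylinder? Take a solid whose base is a closed region D in the xy-plane, whose side is the cylindrical surface generated by lines through the boundary of D parallel to the z-axis, and whose top is a curved surface z = f(x, y). Because the top is a curved surface, the solid is called a curved-top cylinder.

How do we find its volume? For an ordinary cylinder, volume = base × height, but that does not apply directly here because the top is curved. Recall the idea behind the definite integral: split the region under a curve into many thin slices, treat each slice as a rectangle, and the sum of the rectangle areas approximates the area under the curve. The same idea works here. Split the solid into many thin columns; each one is approximately a flat-top column, and the sum of their volumes approximates the volume of the curved-top cylinder.

More precisely, as the largest diameter of the column bases tends to 0, the limit of this sum is the volume of the curved-top cylinder.

As a formula: V = ∬_D f(x, y) dσ = lim Σ f(ξᵢ, ηᵢ) Δσᵢ, where f(x, y) is the height of a flat-top column and Δσ is the area of its base.

(2) Mass of a thin plate

How do we compute the mass of a thin plate whose density is not uniform? You could simply weigh it, but here is a less conventional approach. As in the previous example, split the plate into many pieces. When there are enough pieces, each small piece can be treated as having uniform density, so its mass is easy to compute, and the sum over all pieces is the mass of the whole plate.

As a formula: M = ∬_D f(x, y) dσ = lim Σ f(ξᵢ, ηᵢ) Δσᵢ, where f(x, y) is the surface density of the plate at the point (x, y) and Δσ is the area of a small piece.

- Perimeter is computed from L2 (Euclidean) distances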
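For a polygon, Green's formula in the form A = ½∮(x dy − y dx) reduces to the shoelace sum over the vertices, and the perimeter is the sum of the Euclidean (L2) lengths of the edges. The sketch below shows both; `Pt`, `polygonArea`, and `polygonPerimeter` are hypothetical names and only illustrate what `contourArea` and `arcLength` compute conceptually.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

struct Pt { double x, y; };

// Area of a simple closed polygon via the shoelace formula
// (the discrete form of Green's formula).
double polygonArea(const std::vector<Pt>& p) {
    assert(p.size() >= 3);
    double s = 0.0;
    for (std::size_t i = 0; i < p.size(); ++i) {
        std::size_t j = (i + 1) % p.size();
        s += p[i].x * p[j].y - p[j].x * p[i].y;
    }
    return std::fabs(s) / 2.0;
}

// Perimeter of the closed polygon: sum of L2 distances between neighbors.
double polygonPerimeter(const std::vector<Pt>& p) {
    assert(p.size() >= 2);
    double len = 0.0;
    for (std::size_t i = 0; i < p.size(); ++i) {
        std::size_t j = (i + 1) % p.size();
        len += std::hypot(p[j].x - p[i].x, p[j].y - p[i].y);
    }
    return len;
}
```

Because the area depends only on the vertex coordinates, it can differ slightly from the number of pixels enclosed by the contour.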

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

RNG rng(12345);

int main(int argc, char** argv) {

//Mat src = imread("D:/images/lena.jpg");

Mat src = imread("D:/images/morph3.png");

if (src.empty()) {

printf("could not find image file");

return -1;

}

namedWindow("input", WINDOW_AUTOSIZE);

imshow("input", src);

//Binarize

GaussianBlur(src, src, Size(3, 3), 0); //Denoise with a Gaussian blur before segmentation

Mat gray, binary;

cvtColor(src, gray, COLOR_BGR2GRAY);

threshold(gray, binary, 0, 255, THRESH_BINARY | THRESH_OTSU);

imshow("otsu binary", binary);

//Find contours

vector<vector<Point>> contours;

vector<Vec4i> hirearchy;

//RETR_EXTERNAL retrieves only the outermost contours (contours nested inside them are skipped); RETR_TREE retrieves all contours with the full hierarchy

findContours(binary, contours, hirearchy, RETR_TREE, CHAIN_APPROX_SIMPLE, Point());

for (size_t t = 0; t < contours.size(); t++) {

double area = contourArea(contours[t]); //Area

double length = arcLength(contours[t], true); //Perimeter

if (area < 100 || length < 10) continue; //Skip small contours when drawing

Rect box = boundingRect(contours[t]);

//rectangle(src, box, Scalar(0, 0, 255), 2, 8, 0); //Draw the axis-aligned bounding rectangle

RotatedRect rrt = minAreaRect(contours[t]);

//ellipse(src, rrt, Scalar(255, 0, 0), 2, 8);

Point2f pts[4];

rrt.points(pts);

for (int i = 0; i < 4; i++) {

line(src, pts[i], pts[(i + 1) % 4], Scalar(0, 255, 0), 2, 8); //Draw the minimum-area (rotated) bounding rectangle

}

printf("area:%.2f,contour length:%.2f,angle:%.2f\n", area, length, rrt.angle);

//drawContours(src, contours, t, Scalar(0, 0, 255), 2, 8);

}

imshow("find contours demo", src);

waitKey(0);

destroyAllWindows();

return 0;

}

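Result:

1. Axis-aligned bounding rectangle with perimeter and area

2. Minimum-area bounding rectangle with perimeter, area, and angle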

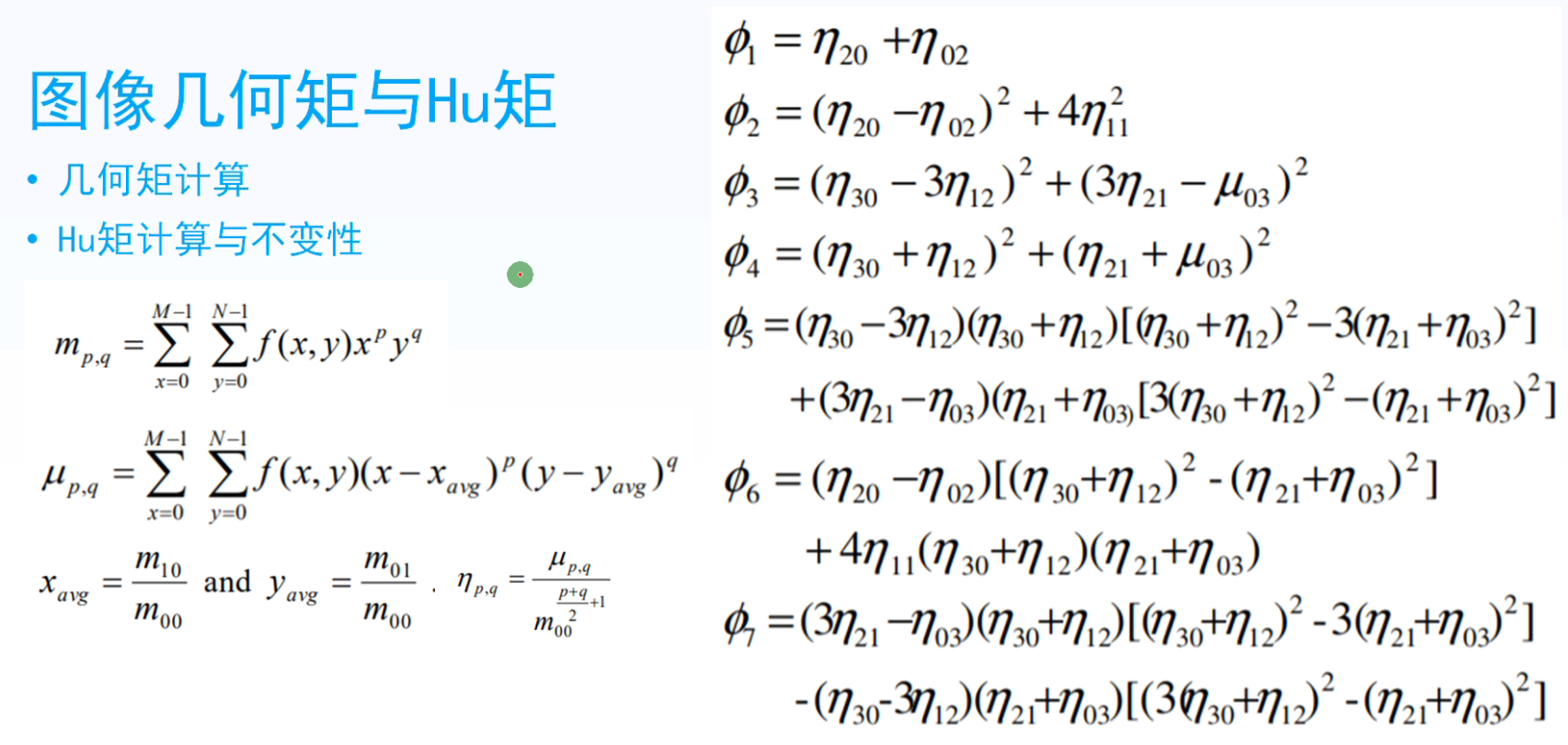

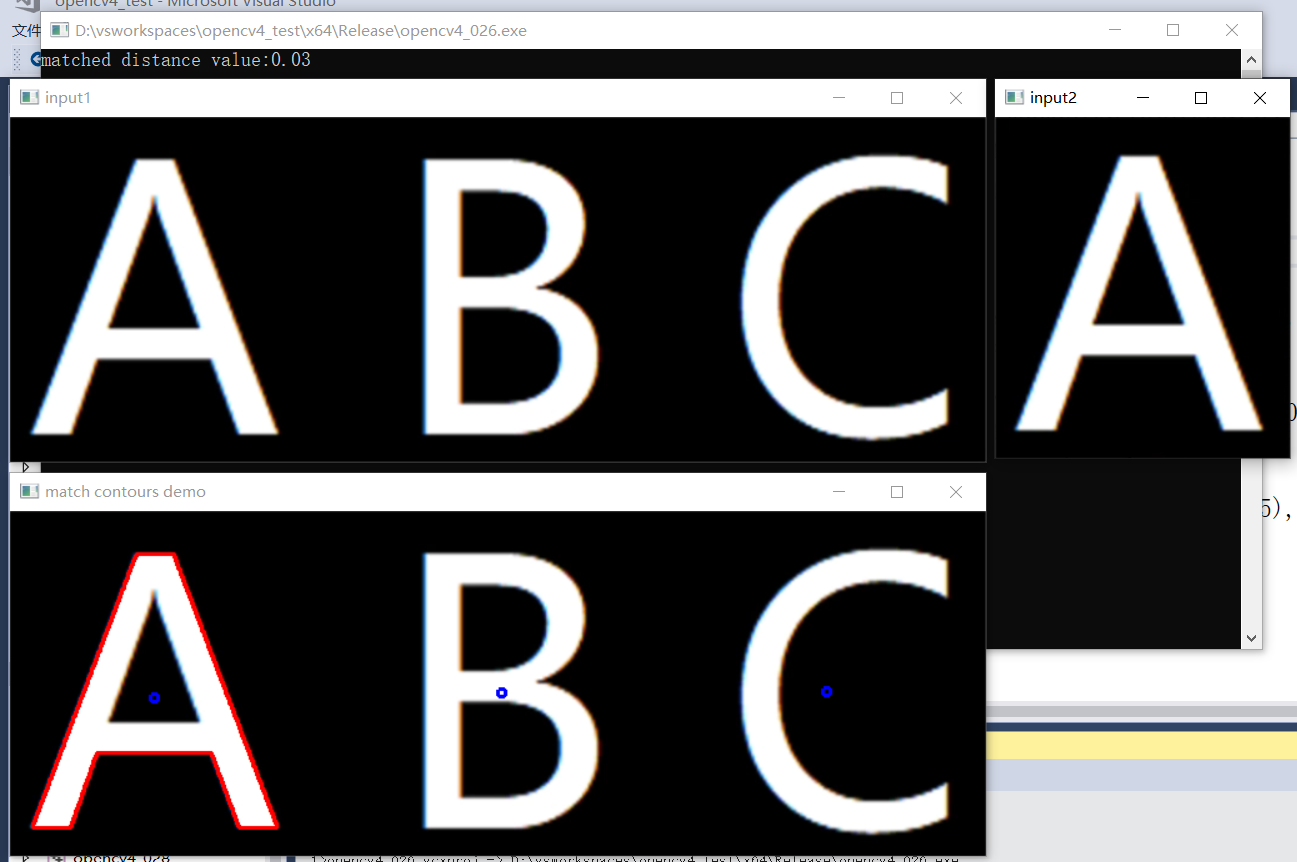

34. Contour Matching

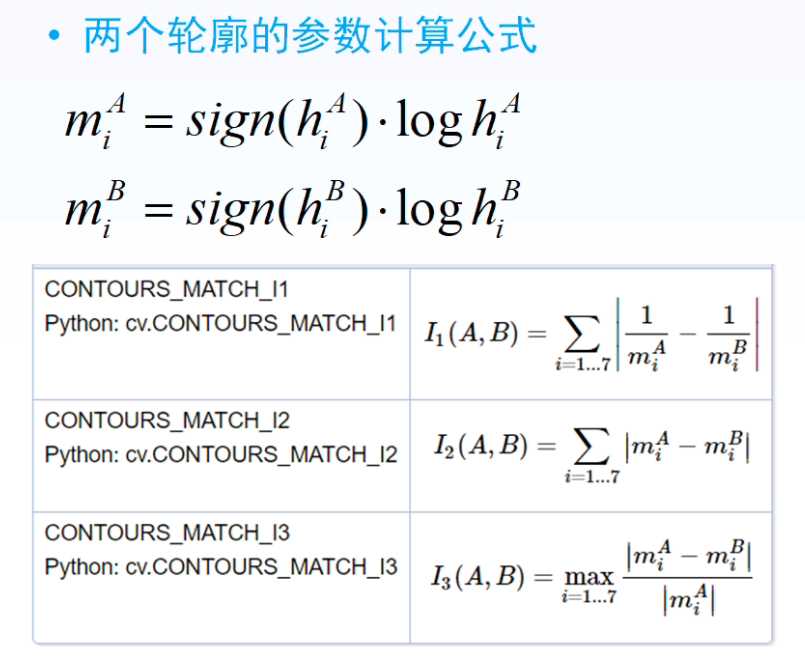

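- Image geometric moments and Hu moments (the seven resulting values are invariant to scale, rotation, and translation)

- First take the moments with the exponent of y or x set to 0 (m10 and m01) and divide by m00 to get the mean x and y (the centroid), then compute the Hu moments from the central moments about that centroid

- Contour matching based on Hu moments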
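Matching on Hu moments comes down to comparing two seven-element vectors on a logarithmic scale. The sketch below follows the formula documented for `CONTOURS_MATCH_I1`, the sum of |1/mA − 1/mB| with m = sign(h)·log|h|. The function name `matchI1`, the base-10 logarithm, and the small-value cutoff are assumptions made for this example.

```cpp
#include <array>
#include <cassert>
#include <cmath>

// Distance between two sets of Hu moments in the style of CONTOURS_MATCH_I1.
// Smaller values mean more similar shapes; identical inputs give 0.
double matchI1(const std::array<double, 7>& ha, const std::array<double, 7>& hb) {
    const double eps = 1e-5;  // assumed cutoff: skip moments that are effectively zero
    double result = 0.0;
    for (int i = 0; i < 7; ++i) {
        double ama = std::fabs(ha[i]);
        double amb = std::fabs(hb[i]);
        if (ama > eps && amb > eps) {
            assert(ama != 1.0 && amb != 1.0);  // log10(1) = 0 would divide by zero
            double sa = ha[i] > 0 ? 1.0 : -1.0;
            double sb = hb[i] > 0 ? 1.0 : -1.0;
            double ma = 1.0 / (sa * std::log10(ama));
            double mb = 1.0 / (sb * std::log10(amb));
            result += std::fabs(mb - ma);
        }
    }
    return result;
}
```

The logarithm is used because Hu moments span many orders of magnitude; comparing them directly would let the first moment dominate the distance.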

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

RNG rng(12345);

void contour_info(Mat &image, vector<vector<Point>> &contours);

int main(int argc, char** argv) {

Mat src1 = imread("D:/images/abc.png");

Mat src2 = imread("D:/images/a.png");

if (src1.empty() || src2.empty()) {

printf("could not find image file");

return -1;

}

imshow("input1", src1);

imshow("input2", src2);

vector<vector<Point>> contours1;

vector<vector<Point>> contours2;

contour_info(src1, contours1);

contour_info(src2, contours2);

Moments mm2 = moments(contours2[0]); //Compute geometric moments

Mat hu2;

HuMoments(mm2, hu2); //Compute Hu moments

for (size_t t = 0; t < contours1.size(); t++) {

Moments mm = moments(contours1[t]);

double cx = mm.m10 / mm.m00; //Centroid of each contour, drawn below

double cy = mm.m01 / mm.m00;

circle(src1, Point(cx, cy), 3, Scalar(255, 0, 0), 2, 8, 0);

Mat hu;

HuMoments(mm, hu);

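double dist = matchShapes(contours1[t], contours2[0], CONTOURS_MATCH_I1, 0); //matchShapes takes contours (or grayscale images) and computes the Hu moments internally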

if (dist < 1.0) {

printf("matched distance value:%.2f\n", dist);

drawContours(src1, contours1, t, Scalar(0, 0, 255), 2, 8);

}

}

imshow("match contours demo", src1);

waitKey(0);

destroyAllWindows();

return 0;

}

void contour_info(Mat &image, vector<vector<Point>> &contours) {

//Binarize

Mat dst;

GaussianBlur(image, dst, Size(3, 3), 0); //Denoise with a Gaussian blur before segmentation

Mat gray, binary;

cvtColor(dst, gray, COLOR_BGR2GRAY);

threshold(gray, binary, 0, 255, THRESH_BINARY | THRESH_OTSU);

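//Find contours

vector<Vec4i> hirearchy;

//RETR_EXTERNAL retrieves only the outermost contours (contours nested inside them are skipped); RETR_TREE retrieves all contours with the full hierarchy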

findContours(binary, contours, hirearchy, RETR_EXTERNAL, CHAIN_APPROX_SIMPLE, Point());

}

Result:

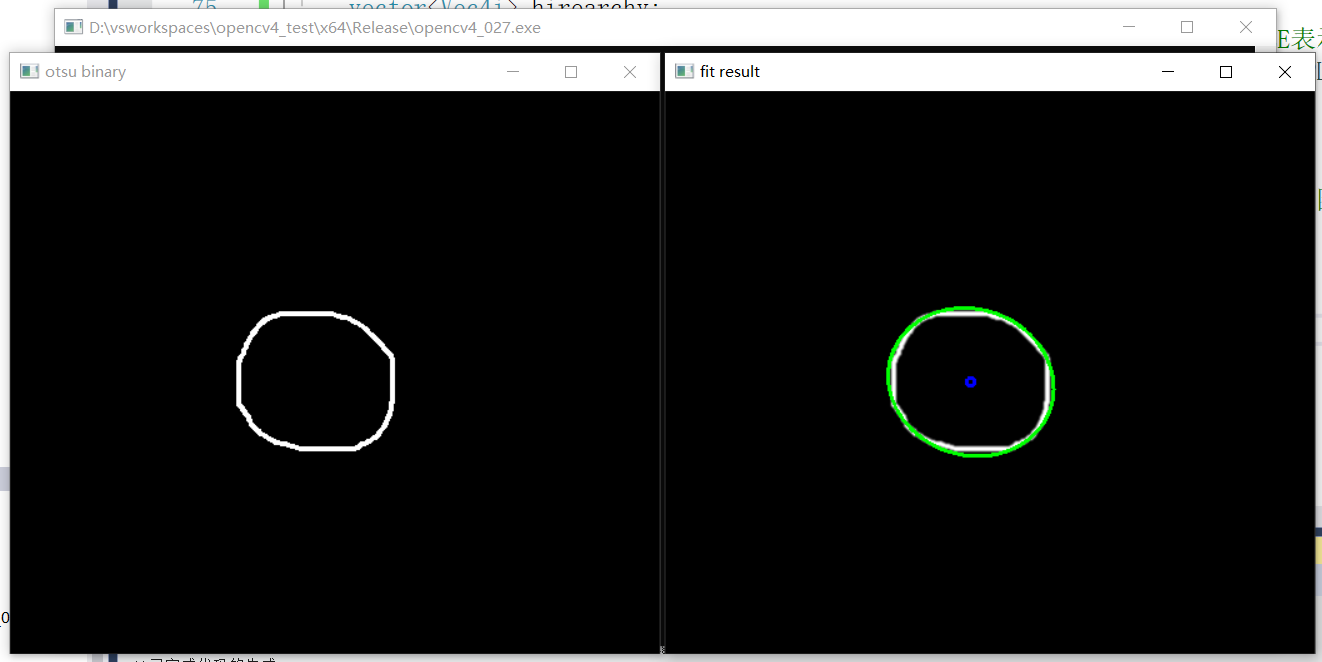

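35. Contour Approximation and Fitting

- Contour approximation essentially reduces the number of encoded points

- Circle fitting produces the circle or ellipse that best matches the contour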
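Point reduction of this kind is commonly done with the Douglas-Peucker algorithm, which is the method the OpenCV documentation names for `approxPolyDP`. The sketch below handles an open polyline only; closed contours need extra care in choosing the starting points. `Pt`, `distanceToLine`, `markKept`, and `simplifyPolyline` are names made up for this illustration.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

struct Pt { double x, y; };

// Perpendicular distance from p to the line through a and b.
double distanceToLine(const Pt& p, const Pt& a, const Pt& b) {
    double dx = b.x - a.x, dy = b.y - a.y;
    double len = std::hypot(dx, dy);
    if (len == 0.0) return std::hypot(p.x - a.x, p.y - a.y);
    return std::fabs(dy * (p.x - a.x) - dx * (p.y - a.y)) / len;
}

// Recursively keep the point farthest from the chord first-last
// whenever it lies farther away than eps.
void markKept(const std::vector<Pt>& pts, std::size_t first, std::size_t last,
              double eps, std::vector<bool>& keep) {
    if (last <= first + 1) return;
    double maxD = 0.0;
    std::size_t idx = first;
    for (std::size_t i = first + 1; i < last; ++i) {
        double d = distanceToLine(pts[i], pts[first], pts[last]);
        if (d > maxD) { maxD = d; idx = i; }
    }
    if (maxD > eps) {
        keep[idx] = true;
        markKept(pts, first, idx, eps, keep);
        markKept(pts, idx, last, eps, keep);
    }
}

// Douglas-Peucker simplification of an open polyline.
std::vector<Pt> simplifyPolyline(const std::vector<Pt>& pts, double eps) {
    assert(eps >= 0.0);
    if (pts.size() < 3) return pts;
    std::vector<bool> keep(pts.size(), false);
    keep.front() = true;
    keep.back() = true;
    markKept(pts, 0, pts.size() - 1, eps, keep);
    std::vector<Pt> out;
    for (std::size_t i = 0; i < pts.size(); ++i) {
        if (keep[i]) out.push_back(pts[i]);
    }
    return out;
}
```

A larger `eps` removes more points. In the listing for this section, the value 4 passed to `approxPolyDP` plays the same role.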

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

void fit_circle_demo(Mat &image);

int main(int argc, char** argv) {

Mat src = imread("D:/images/stuff.png");

if (src.empty()) {

printf("could not find image file");

return -1;

}

fit_circle_demo(src);

/*

//Binarize

GaussianBlur(src, src, Size(3, 3), 0); //Denoise with a Gaussian blur before segmentation

Mat gray, binary;

cvtColor(src, gray, COLOR_BGR2GRAY);

threshold(gray, binary, 0, 255, THRESH_BINARY | THRESH_OTSU);

imshow("otsu binary", binary);

//Find contours

vector<vector<Point>> contours;

vector<Vec4i> hirearchy;

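//RETR_EXTERNAL retrieves only the outermost contours (contours nested inside them are skipped); RETR_TREE retrieves all contours with the full hierarchy

findContours(binary, contours, hirearchy, RETR_EXTERNAL, CHAIN_APPROX_SIMPLE, Point());

//Polygon approximation demo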

for (size_t t = 0; t < contours.size(); t++) {

Moments mm = moments(contours[t]);

double cx = mm.m10 / mm.m00; //Centroid of each contour, drawn below

double cy = mm.m01 / mm.m00;

circle(src, Point(cx, cy), 3, Scalar(255, 0, 0), 2, 8, 0);

double area = contourArea(contours[t]);

double clen = arcLength(contours[t], true);

Mat result;

approxPolyDP(contours[t], result, 4, true);

printf("corners:%d,contour area:%.2f,contour length:%.2f\n", result.rows, area, clen);

if (result.rows == 6) {

putText(src, "poly", Point(cx, cy - 10), FONT_HERSHEY_PLAIN, 1.0, Scalar(0, 0, 255), 1, 8);

}

if (result.rows == 4) {

putText(src, "rectangle", Point(cx, cy - 10), FONT_HERSHEY_PLAIN, 1.0, Scalar(0, 255, 255), 1, 8);

}

if (result.rows == 3) {

putText(src, "triangle", Point(cx, cy - 10), FONT_HERSHEY_PLAIN, 1.0, Scalar(255, 0, 255), 1, 8);

}

if (result.rows > 10) {

putText(src, "circle", Point(cx, cy - 10), FONT_HERSHEY_PLAIN, 1.0, Scalar(255, 255, 0), 1, 8);

}

}

imshow("find contours demo", src);

*/

waitKey(0);

destroyAllWindows();

return 0;

}

void fit_circle_demo(Mat &image) {

//Binarize

GaussianBlur(image, image, Size(3, 3), 0); //Denoise with a Gaussian blur before segmentation

Mat gray, binary;

cvtColor(image, gray, COLOR_BGR2GRAY);

threshold(gray, binary, 0, 255, THRESH_BINARY | THRESH_OTSU);

imshow("otsu binary", binary);

//Find contours

vector<vector<Point>> contours;

vector<Vec4i> hirearchy;

//RETR_EXTERNAL retrieves only the outermost contours (contours nested inside them are skipped); RETR_TREE retrieves all contours with the full hierarchy

findContours(binary, contours, hirearchy, RETR_EXTERNAL, CHAIN_APPROX_SIMPLE, Point());

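//Fit a circle or an ellipse

for (size_t t = 0; t < contours.size(); t++) {

//drawContours(image, contours, t, Scalar(0, 0, 255), 2, 8); //Draw the original contour

if (contours[t].size() < 5) continue; //fitEllipse needs at least 5 points

RotatedRect rrt = fitEllipse(contours[t]); //Get the fitted ellipse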

float w = rrt.size.width;

float h = rrt.size.height;

Point center = rrt.center;

circle(image, center, 3, Scalar(255, 0, 0), 2, 8, 0); //Draw the center

ellipse(image, rrt, Scalar(0, 255, 0), 2, 8); //Draw the fitted ellipse

}

imshow("fit result", image);

}

Result:

1. Polygon approximation

2. Ellipse fitting

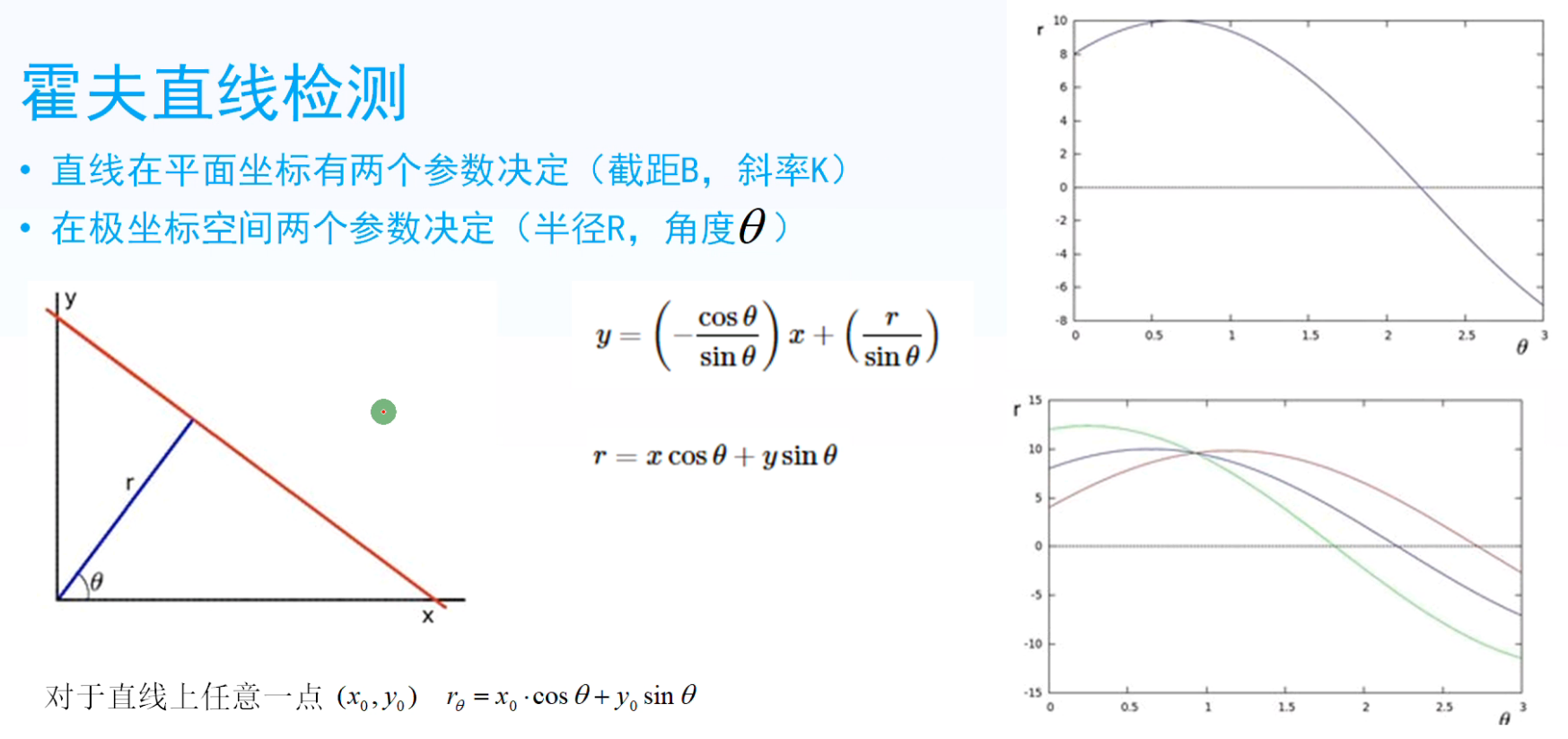

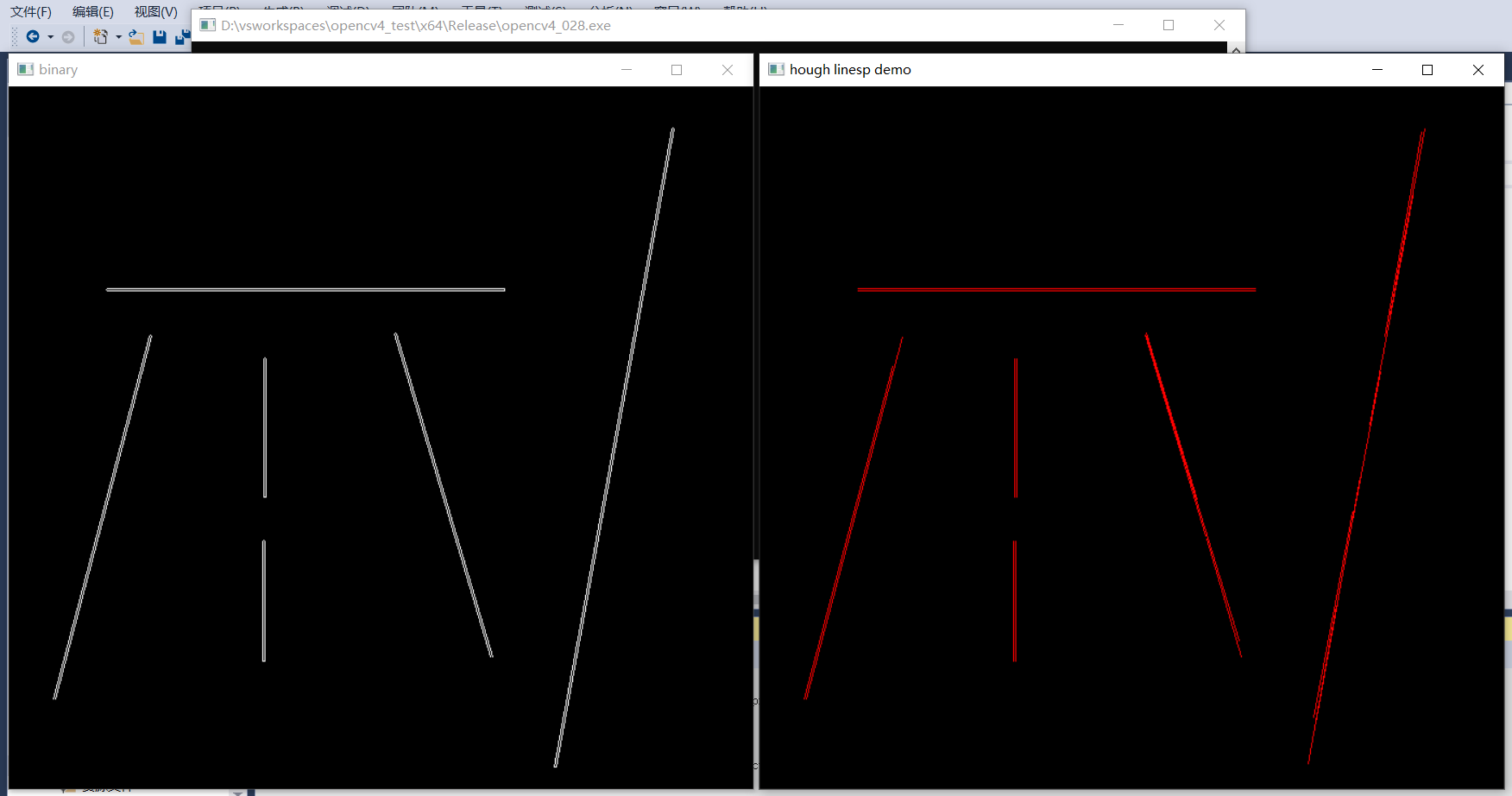

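36. Hough Line Detection 1

- In Cartesian coordinates a line is determined by two parameters (intercept b, slope k)

- In polar form it is determined by two parameters (distance r, angle θ)

- Figure notes: three curves intersect at one point, and the r and θ of that point identify a unique line.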

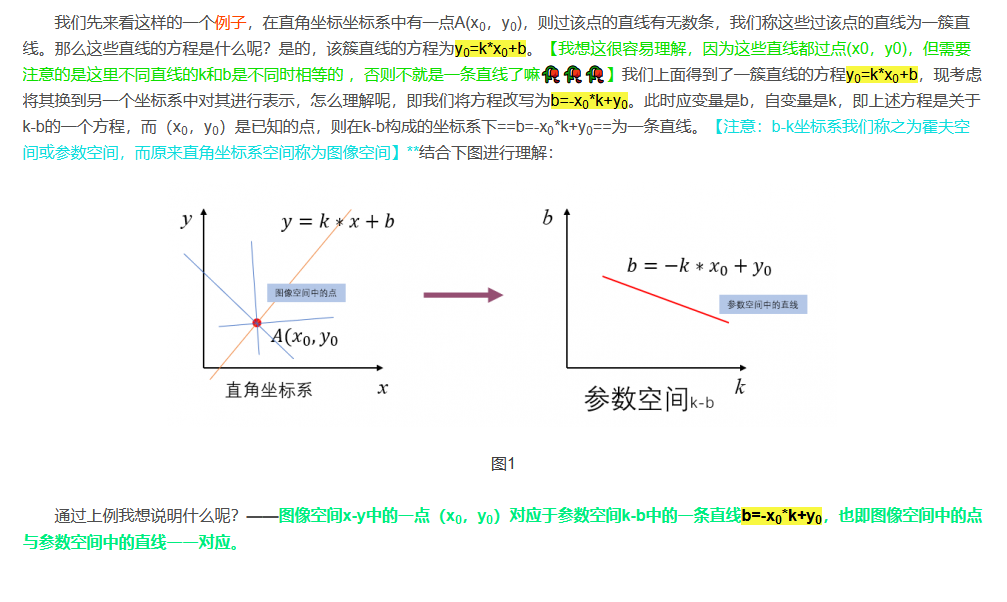

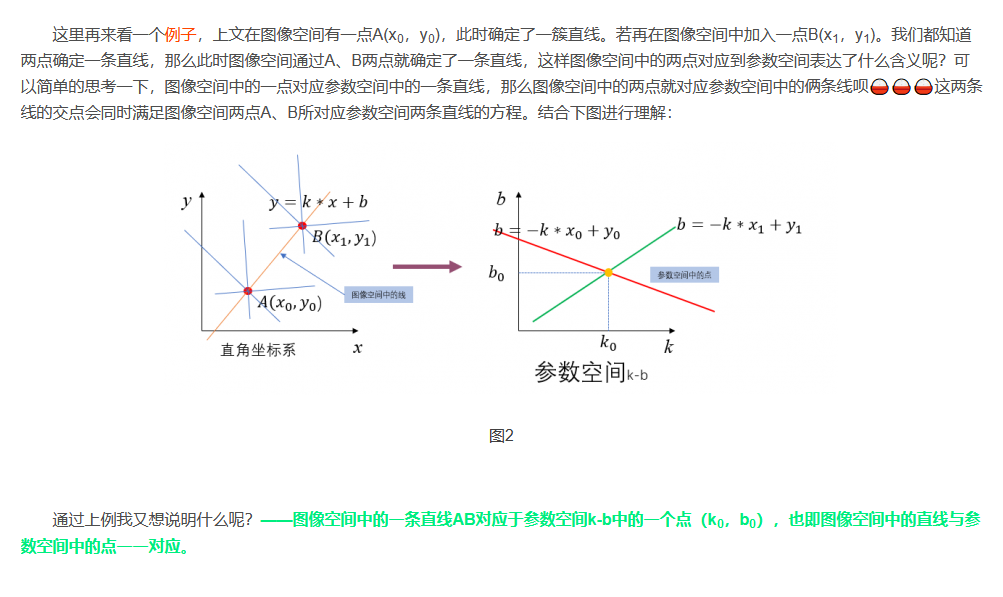

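Further notes on the principle:

- 1. A point in the image space x-y corresponds to a line in the parameter space k-b.

- 2. A line in the image space corresponds to a point in the parameter space k-b.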

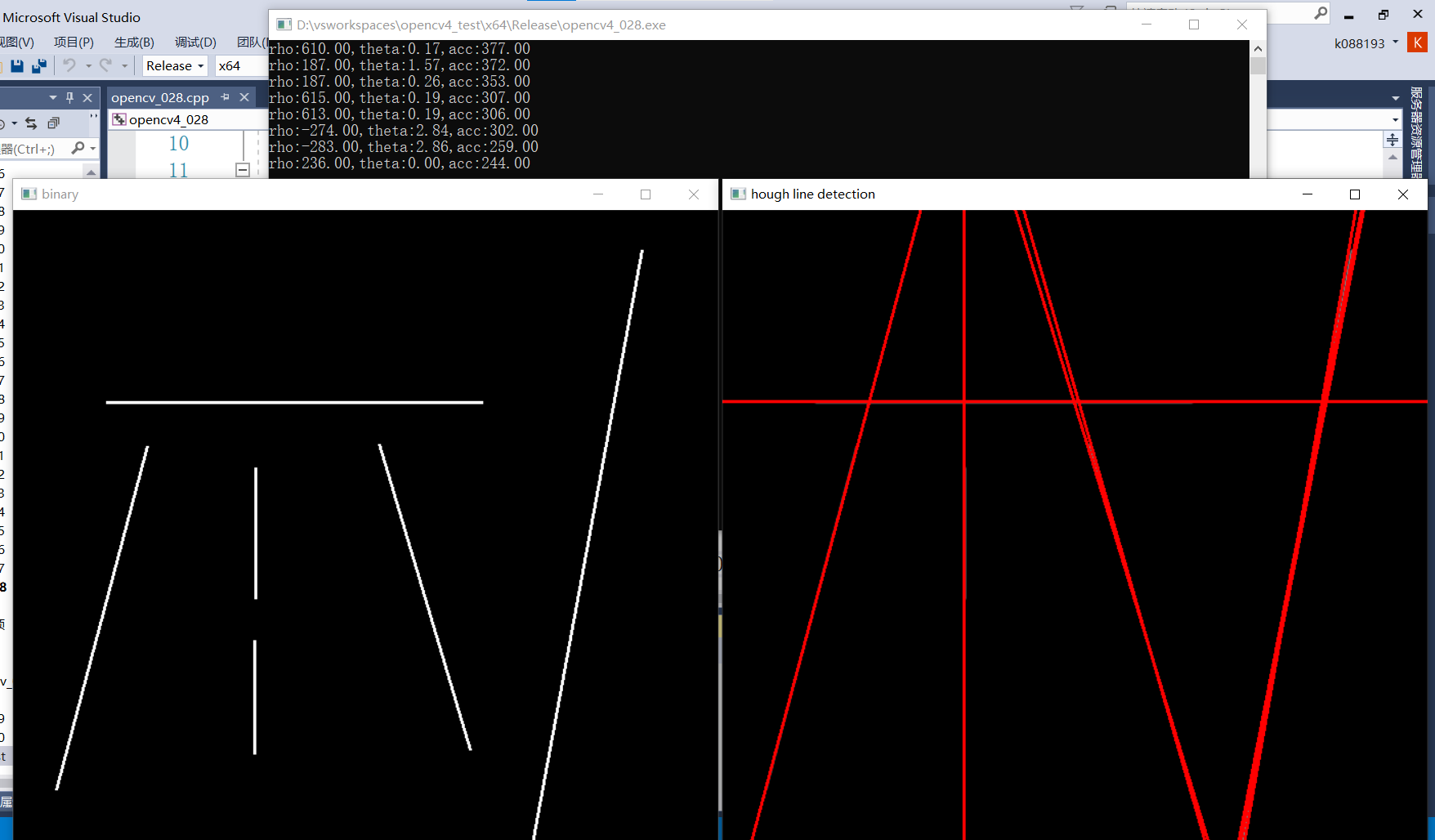
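The voting idea behind the (r, θ) parameterization can be sketched without OpenCV: every foreground point votes for all lines r = x·cos θ + y·sin θ that pass through it, and the accumulator cell with the most votes identifies the line. The names `Pt`, `Line`, and `houghPeak`, along with the 1-degree and 1-pixel resolution, are assumptions for this example.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

struct Pt { int x, y; };
struct Line { int rho; int thetaDeg; int votes; };

// Vote in (theta, rho) space and return the strongest cell.
// theta is sampled in 1-degree steps over [0, 180); rho is rounded to pixels.
Line houghPeak(const std::vector<Pt>& pts, int maxRho) {
    assert(maxRho > 0);
    const double kPi = 3.14159265358979323846;
    const int nTheta = 180;
    const int nRho = 2 * maxRho + 1;  // rho ranges over [-maxRho, maxRho]
    std::vector<int> acc(static_cast<std::size_t>(nTheta) * nRho, 0);
    Line best{0, 0, 0};
    for (const Pt& p : pts) {
        for (int t = 0; t < nTheta; ++t) {
            double theta = t * kPi / 180.0;
            int rho = static_cast<int>(std::lround(p.x * std::cos(theta) + p.y * std::sin(theta)));
            if (rho < -maxRho || rho > maxRho) continue;
            int& cell = acc[static_cast<std::size_t>(t) * nRho + (rho + maxRho)];
            ++cell;
            if (cell > best.votes) best = Line{rho, t, cell};
        }
    }
    return best;
}
```

For 100 points on the horizontal line y = 5, the peak lands at θ = 90° and r = 5, which matches the "angle == 90 means horizontal" test used in the listing below.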

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src = imread("D:/images/tline.png");

if (src.empty()) {

printf("could not find image file");

return -1;

}

//Binarize

GaussianBlur(src, src, Size(3, 3), 0); //Denoise with a Gaussian blur before segmentation

Mat gray, binary;

cvtColor(src, gray, COLOR_BGR2GRAY);

threshold(gray, binary, 0, 255, THRESH_BINARY | THRESH_OTSU);

namedWindow("binary", WINDOW_AUTOSIZE);

imshow("binary", binary);

//Hough line detection

vector<Vec3f> lines;

HoughLines(binary, lines, 1, CV_PI / 180.0, 200, 0, 0);

//Draw the lines

Point pt1, pt2;

for (size_t i = 0; i < lines.size(); i++) {

float rho = lines[i][0]; //Distance

float theta = lines[i][1]; //Angle

float acc = lines[i][2]; //Accumulator votes

printf("rho:%.2f,theta:%.2f,acc:%.2f\n", rho, theta, acc);

double a = cos(theta);

double b = sin(theta);

double x0 = a * rho, y0 = b * rho;

pt1.x = cvRound(x0 + 1000 * (-b));

pt1.y = cvRound(y0 + 1000 * (a));

pt2.x = cvRound(x0 - 1000 * (-b));

pt2.y = cvRound(y0 - 1000 * (a));

//line(src, pt1, pt2, Scalar(0, 0, 255), 2, 8, 0);

int angle = round((theta / CV_PI) * 180);

if (rho > 0) { //Leaning right

line(src, pt1, pt2, Scalar(0, 0, 255), 2, 8, 0);

if (angle == 90) { //Horizontal line

line(src, pt1, pt2, Scalar(0, 255, 255), 2, 8, 0);

}

if (angle < 1) { //Vertical line

line(src, pt1, pt2, Scalar(255, 255, 0), 4, 8, 0);

}

}

else { //Leaning left

line(src, pt1, pt2, Scalar(255, 0, 0), 2, 8, 0);

}

}

imshow("hough line detection", src);

waitKey(0);

destroyAllWindows();

return 0;

}

Result:

1. Detected lines

2. Detecting lines at different angles

37. Line Types and Line Segments (Hough Line Detection 2)

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

void hough_linesp_demo();

int main(int argc, char** argv) {

hough_linesp_demo();

waitKey(0);

destroyAllWindows();

return 0;

}

void hough_linesp_demo() {

Mat src = imread("D:/images/tline.png");

Mat binary;

Canny(src, binary, 80, 160, 3, false);

imshow("binary", binary);

vector<Vec4i> lines;

HoughLinesP(binary, lines, 1, CV_PI / 180.0, 80, 30, 10);

Mat result = Mat::zeros(src.size(), src.type());

for (int i = 0; i < lines.size(); i++) {

line(result, Point(lines[i][0], lines[i][1]), Point(lines[i][2], lines[i][3]), Scalar(0, 0, 255), 1, 8);

}

imshow("hough linesp demo", result);

}

Result:

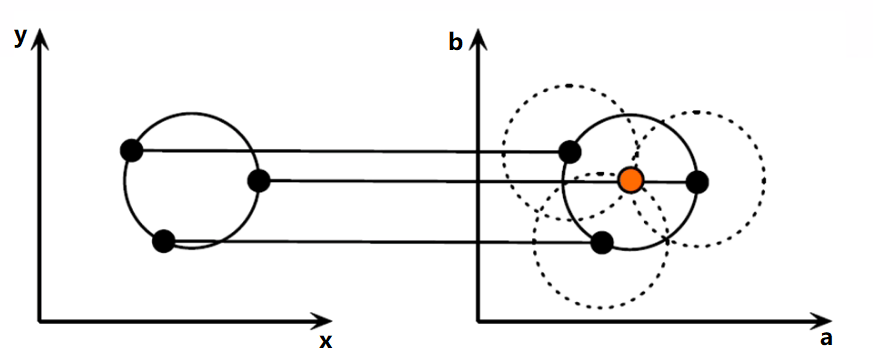

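38. Hough Circle Detection

Principle:

Detecting circles works much like detecting lines. A line y=kx+b has only two degrees of freedom, k and b, so its parameter space is two-dimensional. The general equation of a circle is (x-a)²+(y-b)²=r², which has three degrees of freedom: the center coordinates a, b and the radius r. This means more computation. The HoughCircles() function in OpenCV lets you set the range of the radius r, which acts as a prior: for each candidate r, only a and b have to be searched in a two-dimensional space, which reduces the computation.

The steps are:

- Run edge detection on the input image to obtain the boundary points, that is, the foreground points.

- If the image contains a circle, its outline must be among the foreground points (ignoring the accuracy of the edge extraction for now).

- As with the Hough transform for lines, rewrite the circle equation as a coordinate transform from the x-y coordinate system to the a-b coordinate system, in the form (a-x)²+(b-y)²=r². A point on the circle boundary in x-y then corresponds to a circle in a-b.

- A circle boundary in x-y contains many points, so it maps to many circles in a-b. Because all those points lie on the same circle in the original image, the true a, b must satisfy the equations of all these circles in a-b. In other words, there is one pair a, b such that every point lies on the circle centered there. Visually, the circles generated by these points all intersect at one point, and that intersection is the likely center (a, b).

- Count the number of circles passing through each local intersection and take every local maximum to obtain the center coordinates (a, b) of the circles in the original image. Once a circle is detected for some r, the value of r is determined as well.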

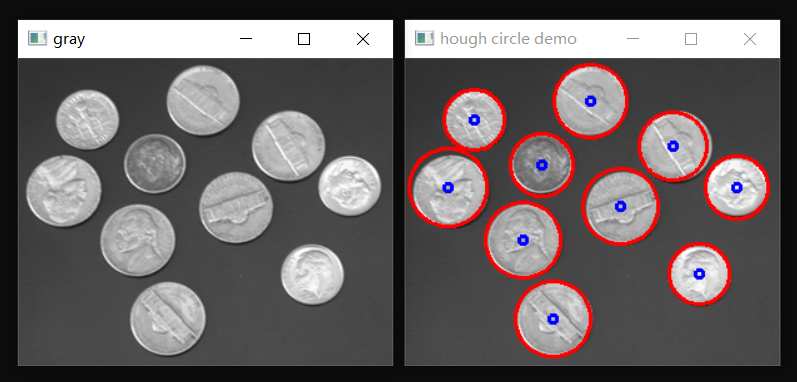
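For a single known radius, the voting scheme described above fits in a short sketch: each edge point votes for every candidate center lying at distance r from it, and the most-voted cell is taken as the center. This is a simplified illustration, not the gradient-based method that `HOUGH_GRADIENT` uses. `PtD`, `Center`, `sampleCircle`, and `houghCircleCenter` are hypothetical names.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

struct PtD { double x, y; };
struct Center { int a, b, votes; };

const double kPi = 3.14159265358979323846;

// Helper for the demo: points on a circle, one every stepDeg degrees.
std::vector<PtD> sampleCircle(double cx, double cy, double r, int stepDeg) {
    assert(stepDeg > 0);
    std::vector<PtD> pts;
    for (int d = 0; d < 360; d += stepDeg) {
        double t = d * kPi / 180.0;
        pts.push_back(PtD{cx + r * std::cos(t), cy + r * std::sin(t)});
    }
    return pts;
}

// Each edge point votes for all centers (a, b) with (a-x)^2 + (b-y)^2 = r^2.
Center houghCircleCenter(const std::vector<PtD>& edge, double r, int width, int height) {
    assert(r > 0.0 && width > 0 && height > 0);
    std::vector<int> acc(static_cast<std::size_t>(width) * height, 0);
    Center best{0, 0, 0};
    for (const PtD& p : edge) {
        for (int d = 0; d < 360; ++d) {
            double t = d * kPi / 180.0;
            int a = static_cast<int>(std::lround(p.x - r * std::cos(t)));
            int b = static_cast<int>(std::lround(p.y - r * std::sin(t)));
            if (a < 0 || a >= width || b < 0 || b >= height) continue;
            int& cell = acc[static_cast<std::size_t>(b) * width + a];
            ++cell;
            if (cell > best.votes) best = Center{a, b, cell};
        }
    }
    return best;
}
```

Running this once per candidate radius in the `min_radius` to `max_radius` range turns it into the three-parameter search described in the steps.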

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src = imread("D:/images/test_coins.png");

Mat gray;

cvtColor(src, gray, COLOR_BGR2GRAY);

imshow("gray", gray);

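GaussianBlur(gray, gray, Size(9, 9), 2, 2); //Denoising raises the detection rate because Hough circle detection is sensitive to noise; tune for a high detection rate and filter out extra circles afterwards

vector<Vec3f> circles;

//Tune the radius range and other parameters to improve the detection rate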

double minDist = 10;

double min_radius = 10;

double max_radius = 50;

HoughCircles(gray, circles, HOUGH_GRADIENT, 3, minDist, 100, 100, min_radius, max_radius); //The input is a grayscale image

for (size_t t = 0; t < circles.size(); t++) {

Point center(circles[t][0], circles[t][1]);

int radius = round(circles[t][2]);

//Draw the circles

circle(src, center, radius, Scalar(0, 0, 255), 2, 8, 0);

circle(src, center, 3, Scalar(255, 0, 0), 2, 8, 0);

}

imshow("hough circle demo", src);

waitKey(0);

destroyAllWindows();

return 0;

}

Result:

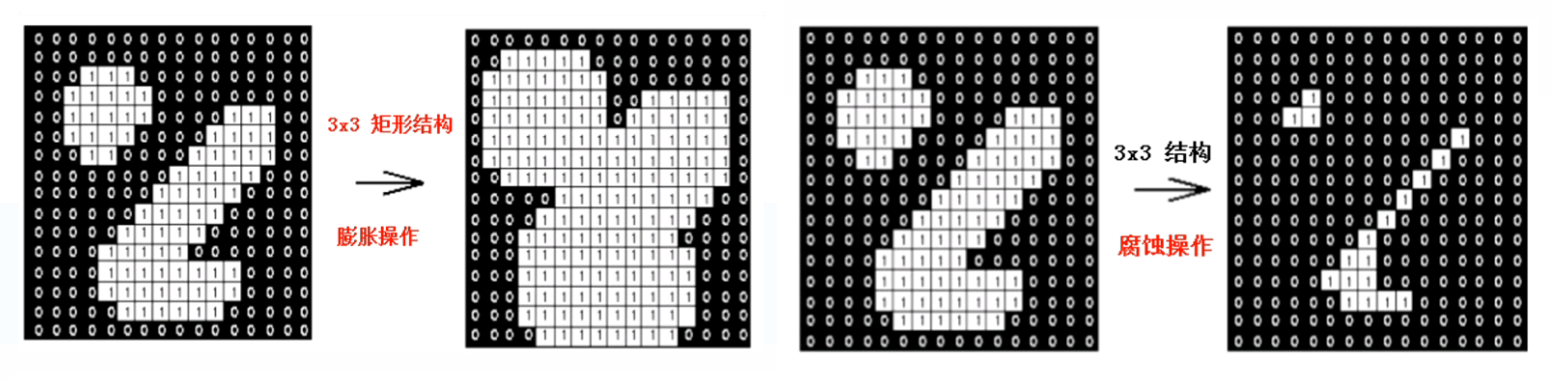

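39. Morphological Operations

Introduction to image morphology:

- A separate branch of image processing

- An important tool for processing grayscale and binary images

- Developed from set theory and related areas of mathematics

- Plays an important role in machine vision, OCR, and similar fields

Erosion and dilation:

- Dilation (the center pixel is replaced by the maximum under the structuring element)

- Erosion (the center pixel is replaced by the minimum under the structuring element)

Structuring element shapes:

- Line - horizontal or vertical

- Rectangle - w*h

- Cross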
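The minimum/maximum rule is easy to see on a small grid. The sketch below applies a 3x3 rectangular structuring element and ignores neighbors that fall outside the image; `Image`, `Op`, and `morph3x3` are illustrative names rather than OpenCV API.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

using Image = std::vector<std::vector<int>>;
enum class Op { Erode, Dilate };

// 3x3 rectangular structuring element: erosion takes the neighborhood
// minimum, dilation the neighborhood maximum. Out-of-image neighbors are skipped.
Image morph3x3(const Image& src, Op op) {
    assert(!src.empty() && !src[0].empty());
    const int rows = static_cast<int>(src.size());
    const int cols = static_cast<int>(src[0].size());
    Image dst(rows, std::vector<int>(cols, 0));
    for (int y = 0; y < rows; ++y) {
        for (int x = 0; x < cols; ++x) {
            int v = src[y][x];
            for (int dy = -1; dy <= 1; ++dy) {
                for (int dx = -1; dx <= 1; ++dx) {
                    int yy = y + dy, xx = x + dx;
                    if (yy < 0 || yy >= rows || xx < 0 || xx >= cols) continue;
                    v = (op == Op::Erode) ? std::min(v, src[yy][xx])
                                          : std::max(v, src[yy][xx]);
                }
            }
            dst[y][x] = v;
        }
    }
    return dst;
}
```

Applying `Op::Erode` and then `Op::Dilate` removes an isolated white pixel but restores a solid 3x3 white block, which is the intuition behind using an opening to delete small specks.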

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat src = imread("D:/images/morph.png");

imshow("input", src);

/*

Mat gray, binary;

cvtColor(src, gray, COLOR_BGR2GRAY);

threshold(gray, binary, 0, 255, THRESH_BINARY | THRESH_OTSU);

*/

Mat dst1, dst2;

Mat kernel = getStructuringElement(MORPH_RECT, Size(3, 3), Point(-1, -1)); //Create the structuring element

erode(src, dst1, kernel);

dilate(src, dst2, kernel);

imshow("erode demo", dst1);

imshow("dilate demo", dst2);

waitKey(0);

destroyAllWindows();

return 0;

}

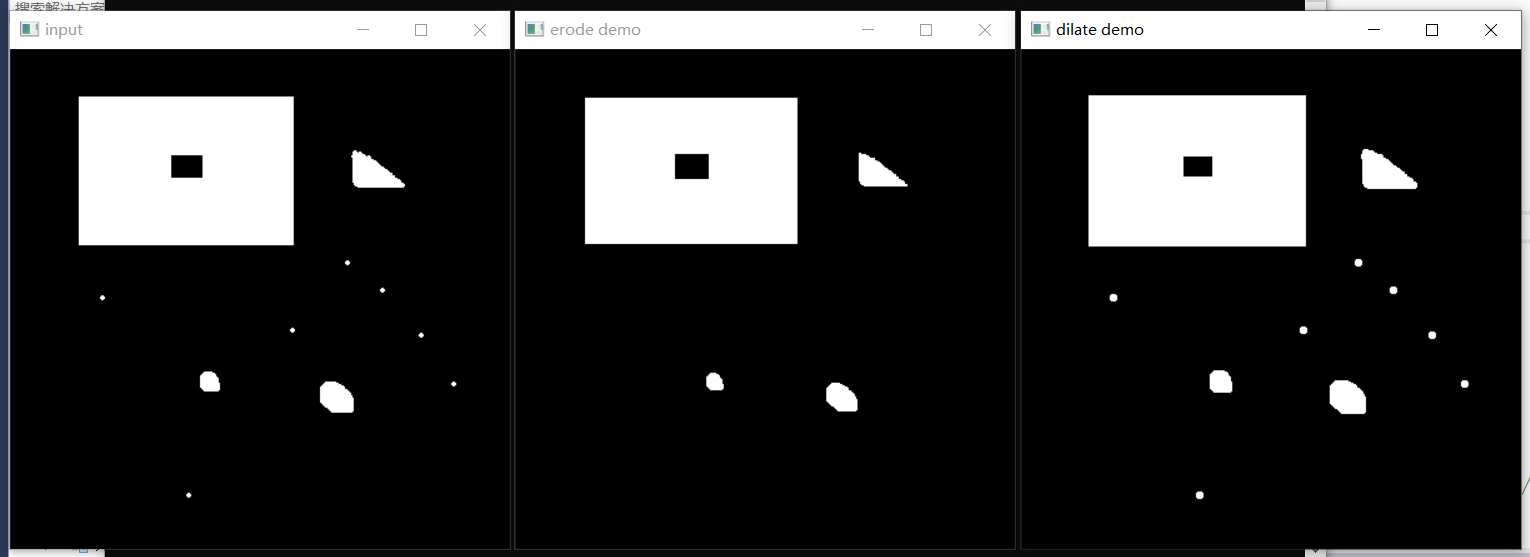

Result:

1. Erosion and dilation on a binary image

2. Erosion and dilation on a BGR color image

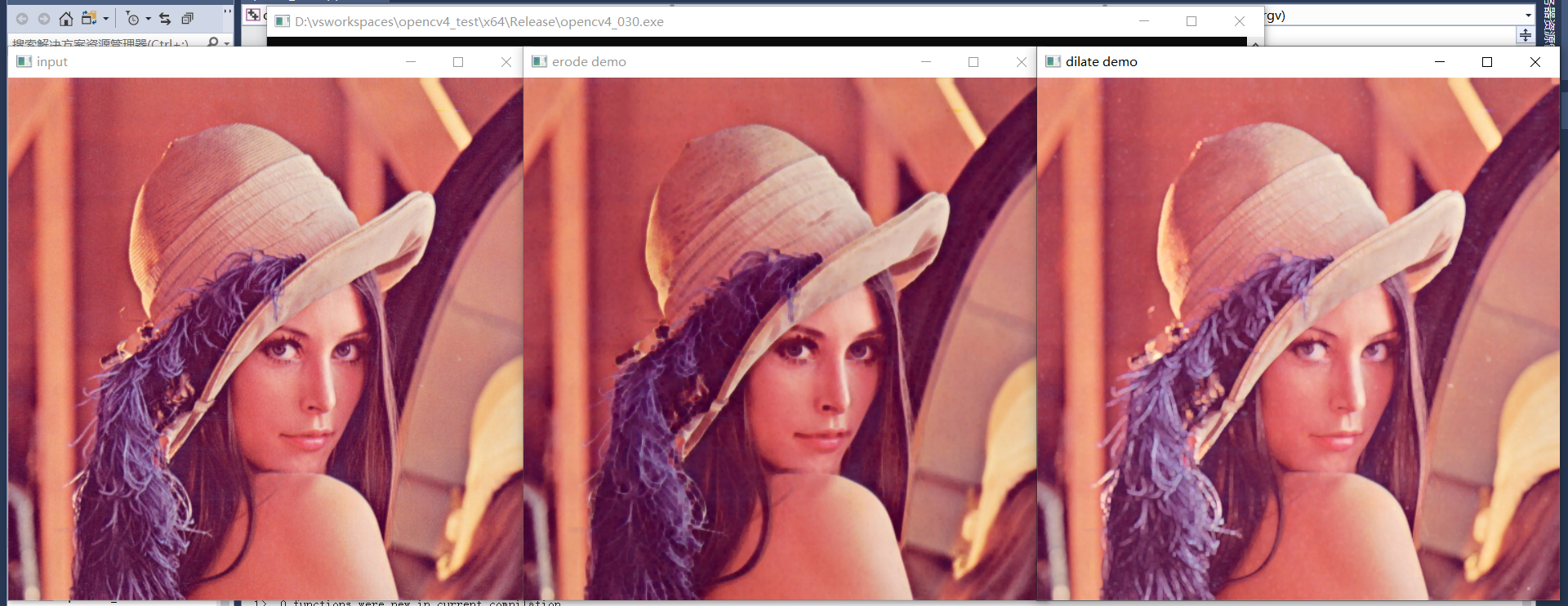

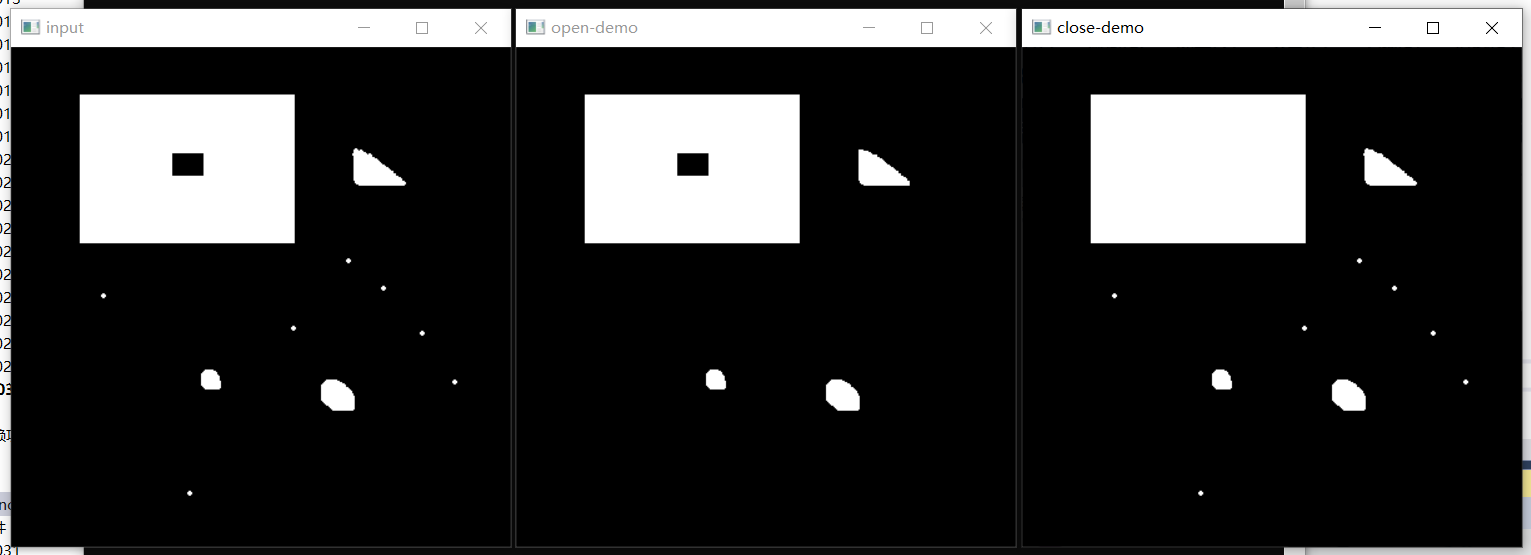

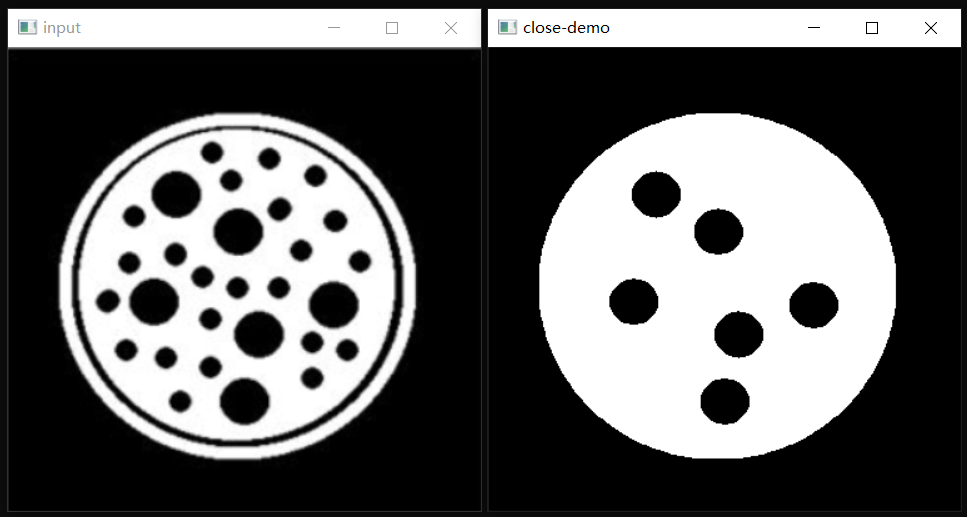

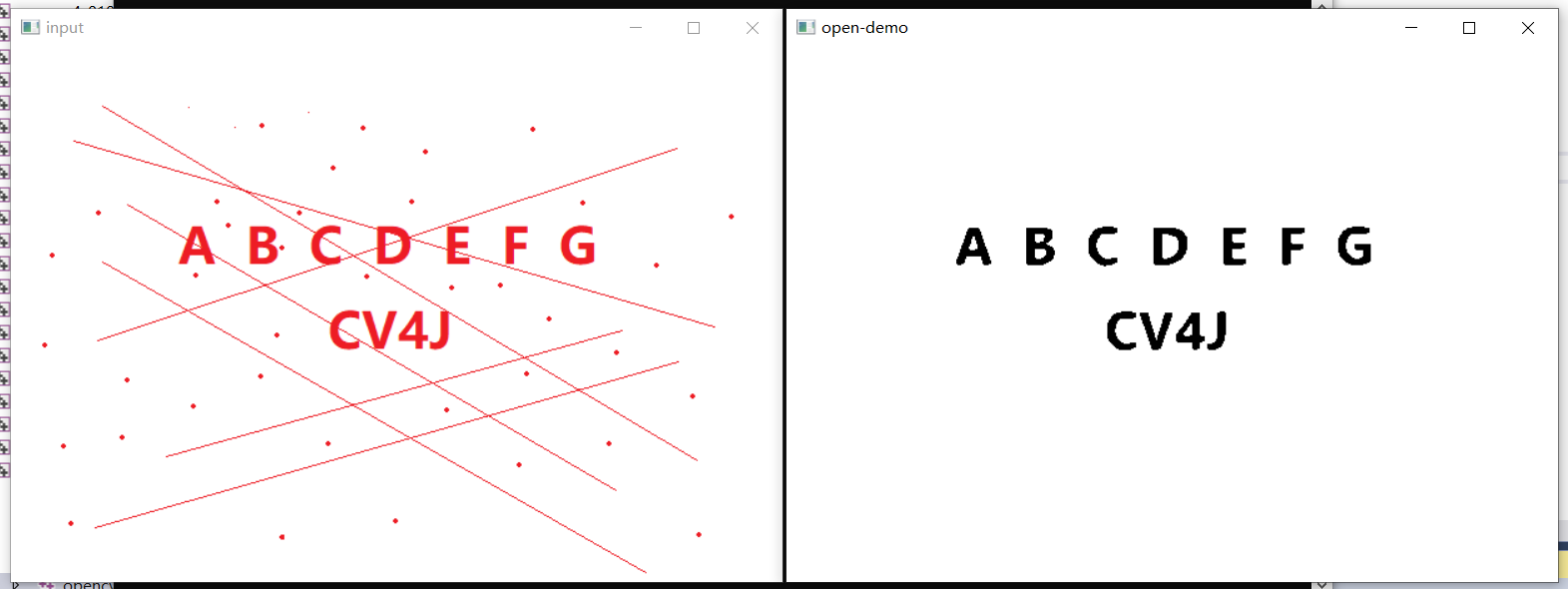

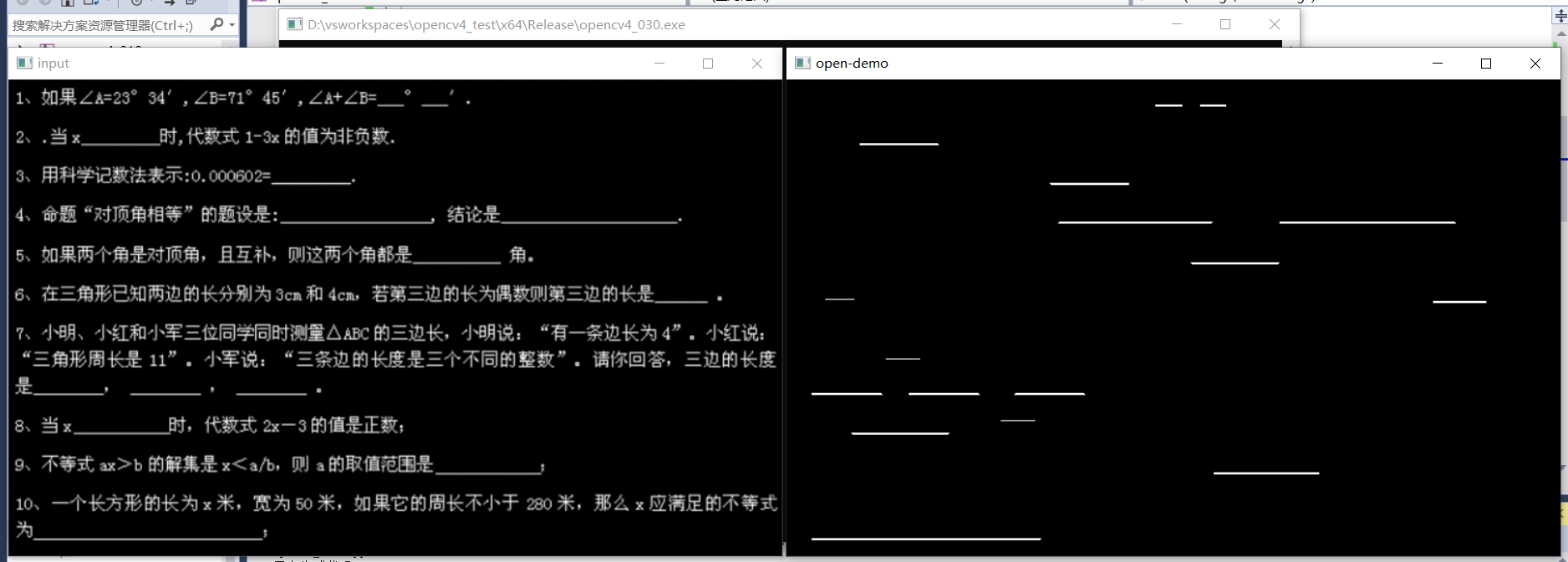

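40. Opening and Closing

Opening:

- Opening = erosion followed by dilation

- Opening → removes small clutter blobs

Closing:

- Closing = dilation followed by erosion

- Closing → fills enclosed gaps and holes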

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

//Mat src = imread("D:/images/morph.png");

//Mat src = imread("D:/images/morph02.png");

Mat src = imread("D:/images/fill.png");

imshow("input", src);

Mat gray, binary;

cvtColor(src, gray, COLOR_BGR2GRAY);

threshold(gray, binary, 0, 255, THRESH_BINARY | THRESH_OTSU);

Mat dst1, dst2;

//Mat kernel01 = getStructuringElement(MORPH_RECT, Size(25, 25), Point(-1, -1));

Mat kernel01 = getStructuringElement(MORPH_RECT, Size(25, 1), Point(-1, -1)); //Horizontal kernel: extracts horizontal lines with an opening, or closes gaps horizontally with a closing

//Mat kernel01 = getStructuringElement(MORPH_RECT, Size(1, 25), Point(-1, -1)); //Vertical kernel: extracts vertical lines with an opening, or closes gaps vertically with a closing

//Mat kernel01 = getStructuringElement(MORPH_ELLIPSE, Size(25, 25), Point(-1, -1));

//Mat kernel02 = getStructuringElement(MORPH_ELLIPSE, Size(4, 4), Point(-1, -1));

//morphologyEx(binary, dst1, MORPH_OPEN, kernel02, Point(-1, -1), 1);

//morphologyEx(binary, dst2, MORPH_CLOSE, kernel01, Point(-1, -1), 1);

morphologyEx(binary, dst2, MORPH_OPEN, kernel01, Point(-1, -1), 1);

//imshow("open-demo", dst1);

imshow("open-demo", dst2);

waitKey(0);

destroyAllWindows();

return 0;

}

Result:

1. Opening and closing on simple shapes

2. Closing on circles

3. Opening to remove clutter around text

4. Opening to extract lines

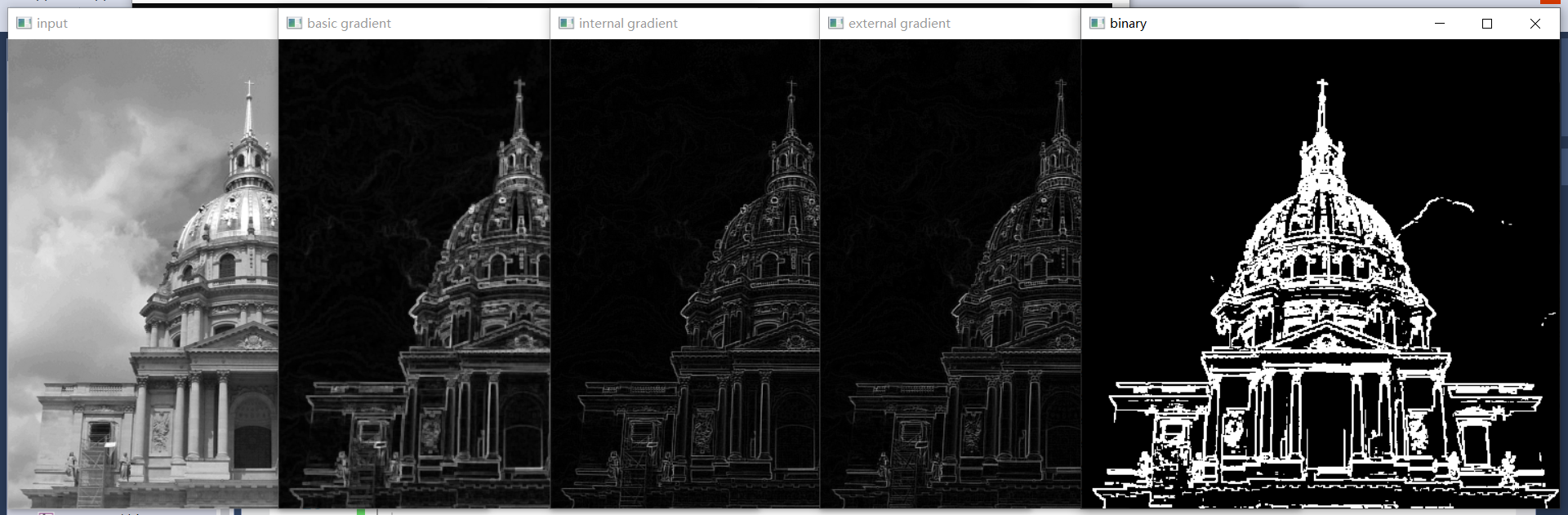

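41. Morphological Gradient (Contours)

Definitions of the morphological gradient

- Basic gradient - dilation minus erosion; this is the morphological gradient that OpenCV supports directly

- Internal gradient - the original image minus its erosion

- External gradient - the dilation minus the original image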

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

//Mat src = imread("D:/images/yuan_test.png");

Mat src = imread("D:/images/building.png");

Mat gray, binary;

cvtColor(src, gray, COLOR_BGR2GRAY);

imshow("input", gray);

Mat basic_grad, inter_grad, exter_grad;

Mat kernel = getStructuringElement(MORPH_RECT, Size(3, 3), Point(-1, -1));

morphologyEx(gray, basic_grad, MORPH_GRADIENT, kernel, Point(-1, -1), 1);

imshow("basic gradient", basic_grad);

Mat dst1, dst2;

erode(gray, dst1, kernel);

dilate(gray, dst2, kernel);

subtract(gray, dst1, inter_grad);

subtract(dst2, gray, exter_grad);

imshow("internal gradient", inter_grad);

imshow("external gradient", exter_grad);

//Compute the basic gradient first, then binarize it

threshold(basic_grad, binary, 0, 255, THRESH_BINARY | THRESH_OTSU); //Binarize

imshow("binary", binary);

waitKey(0);

destroyAllWindows();

return 0;

}

Result:

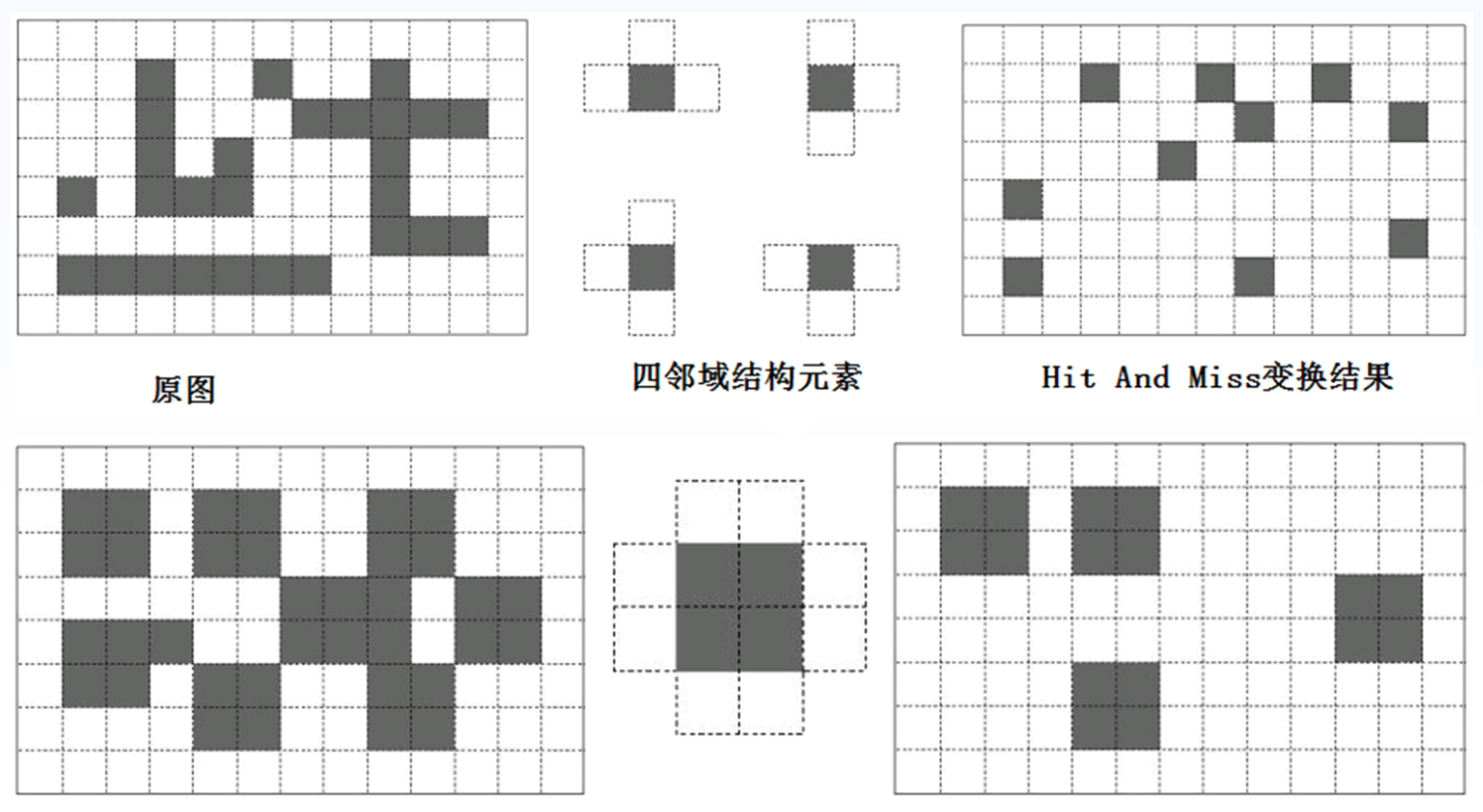

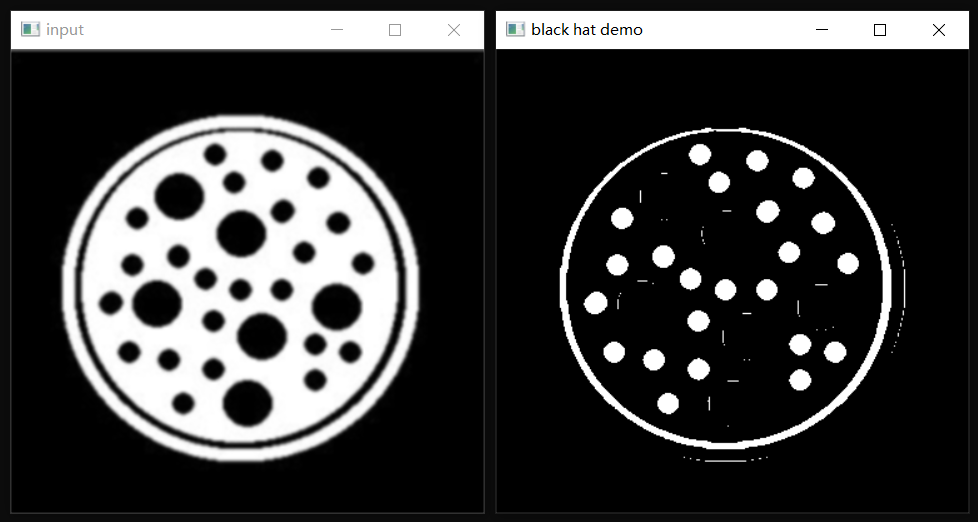

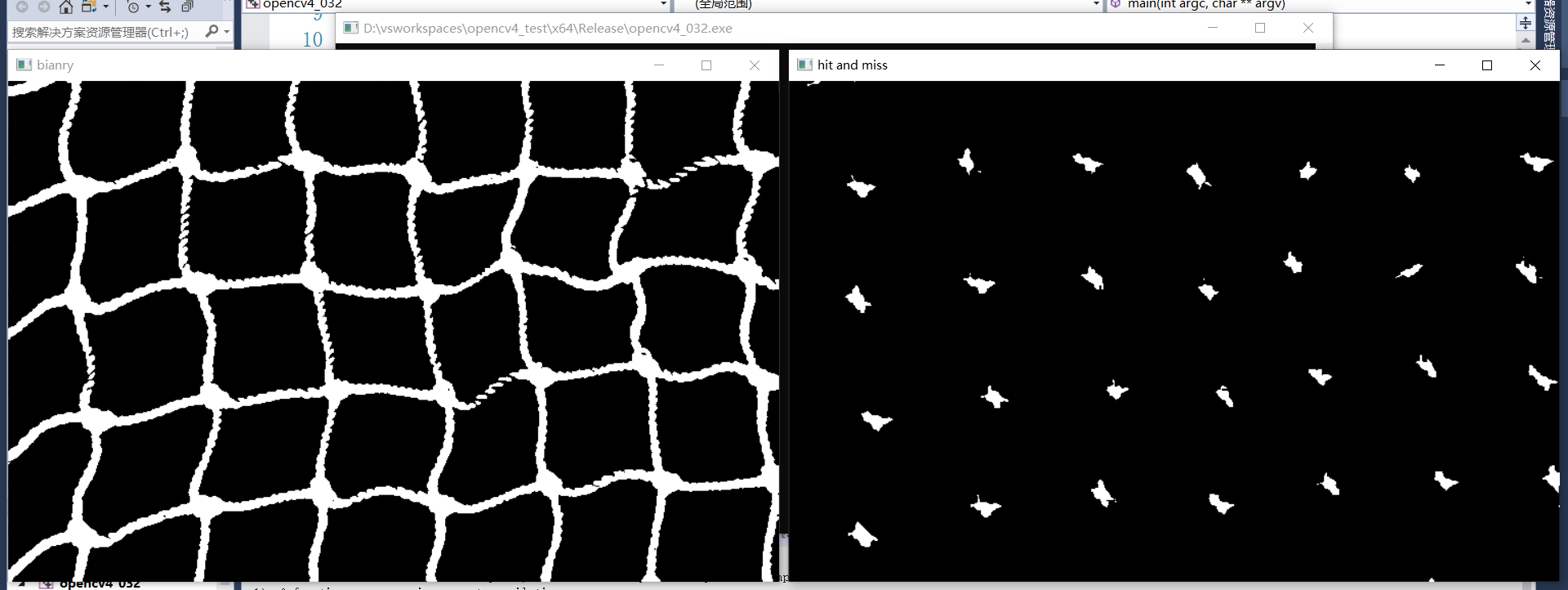

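42. More Morphological Operations

Top hat and black hat

- Top hat: the original image minus its opening

- Black hat: the closing minus the original image

- Both are used to extract small but useful details from an image

Hit-or-miss (extracts regions that exactly match the structuring element)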

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

//Mat src = imread("D:/images/morph.png");

//Mat src = imread("D:/images/morph3.png");

Mat src = imread("D:/images/cross.png");

GaussianBlur(src, src, Size(3, 3), 2, 2);

Mat gray, binary;

cvtColor(src, gray, COLOR_BGR2GRAY);

imshow("input", gray);

//Binarize with an inverted Otsu threshold

threshold(gray, binary, 0, 255, THRESH_BINARY_INV | THRESH_OTSU); //Binarize

imshow("binary", binary);

//Mat tophat, blackhat;

//Mat k = getStructuringElement(MORPH_RECT, Size(3, 3), Point(-1, -1));

//Mat k = getStructuringElement(MORPH_ELLIPSE, Size(18, 18), Point(-1, -1));

//morphologyEx(binary, tophat, MORPH_TOPHAT, k);

//imshow("top hat demo", tophat);

//morphologyEx(binary, blackhat, MORPH_BLACKHAT, k);

//imshow("black hat demo", blackhat);

Mat hitmiss;

Mat k = getStructuringElement(MORPH_CROSS, Size(15, 15), Point(-1, -1));

morphologyEx(binary, hitmiss, MORPH_HITMISS, k);

imshow("hit and miss", hitmiss);

waitKey(0);

destroyAllWindows();

return 0;

}

Result:

1. Top hat (the clutter removed by the opening)

2. Black hat (the regions filled in by the closing)

3. Hit-or-miss

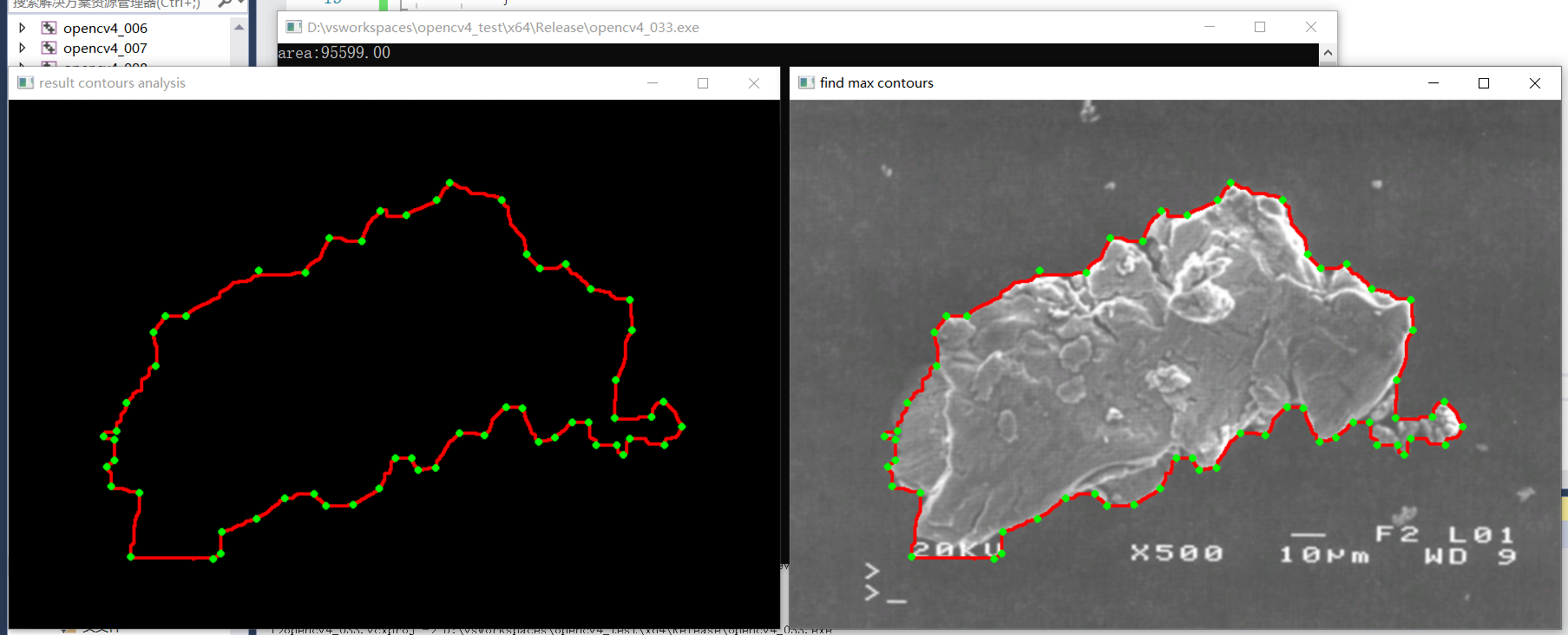

43. Image Analysis: Case Study 1

- Extract the largest contour (by area) of the nebula

- Extract its encoding points / extreme points

#include<opencv2/opencv.hpp>

#include<iostream>

using namespace cv;

using namespace std;

RNG rng(12345);

int main(int argc, char** argv) {

Mat src = imread("D:/images/case6.png");

if (src.empty()) {

printf("could not find image file");

return -1;

}

namedWindow("input", WINDOW_AUTOSIZE);

imshow("input", src);

//Binarize

GaussianBlur(src, src, Size(3, 3), 0); //Denoise with a Gaussian blur before segmentation

Mat gray, binary;

cvtColor(src, gray, COLOR_BGR2GRAY);

threshold(gray, binary, 0, 255, THRESH_BINARY | THRESH_OTSU);

//Closing

Mat se = getStructuringElement(MORPH_RECT, Size(15, 15), Point(-1, -1));

morphologyEx(binary, binary, MORPH_CLOSE, se);

imshow("binary", binary);

//Find contours

int height = binary.rows;

int width = binary.cols;

vector<vector<Point>> contours;

vector<Vec4i> hirearchy;

//RETR_EXTERNAL retrieves only the outermost contours (contours nested inside them are skipped); RETR_TREE retrieves all contours with the full hierarchy

findContours(binary, contours, hirearchy, RETR_EXTERNAL, CHAIN_APPROX_SIMPLE, Point());

double max_area = -1;

int cindex = -1;

for (size_t t = 0; t < contours.size(); t++) {

Rect rect = boundingRect(contours[t]);

if (rect.height >= height || rect.width >= width) { //Skip the contour of the image border

continue;

}

double area = contourArea(contours[t]); //Area

double length = arcLength(contours[t], true); //Perimeter

if (area > max_area) {

max_area = area;

cindex = t;

}

}

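if (cindex < 0) { //No usable contour found; contours[cindex] below would be invalid

printf("no contour found\n");

return -1;

}

drawContours(src, contours, cindex, Scalar(0, 0, 255), 2, 8);

Mat pts;

approxPolyDP(contours[cindex], pts, 4, true); //Find the extreme points through polygon approximation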

Mat result = Mat::zeros(src.size(), src.type());

drawContours(result, contours, cindex, Scalar(0, 0, 255), 2, 8);

for (int i = 0; i < pts.rows; i++) {

Vec2i pt = pts.at<Vec2i>(i, 0);

circle(src, Point(pt[0], pt[1]), 2, Scalar(0, 255, 0), 2, 8, 0);

circle(result, Point(pt[0], pt[1]), 2, Scalar(0, 255, 0), 2, 8, 0);

}

imshow("find max contours", src);

imshow("result contours analysis", result);

printf("area:%.2f\n", max_area);

waitKey(0);

destroyAllWindows();

return 0;

}

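Result:

1. Find and draw the largest-area contour

2. Find and draw the extreme points of the largest contour

44. Video Reading and Writing

- Reading and writing video files

- Cameras and video streams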

#include <opencv2/opencv.hpp>

#include <iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

//VideoCapture capture(0); //Open the default camera

//VideoCapture capture("D:/images/vtest.avi"); //Open a video file

VideoCapture capture;

capture.open("http://ivi.bupt.edu.cn/hls/cctv6hd.m3u8");

if (!capture.isOpened()) {

printf("could not open the video source...\n");

return -1;

}

namedWindow("frame", WINDOW_AUTOSIZE);

int fps = capture.get(CAP_PROP_FPS);

int width = capture.get(CAP_PROP_FRAME_WIDTH);

int height = capture.get(CAP_PROP_FRAME_HEIGHT);

int num_of_frames = capture.get(CAP_PROP_FRAME_COUNT);

int type = capture.get(CAP_PROP_FOURCC); //Codec (FOURCC) of the source video

printf("frame size(w=%d,h=%d),FPS:%d,frames:%d\n", width, height, fps, num_of_frames);

Mat frame;

VideoWriter writer("D:/images/test.mp4", type, fps, Size(width, height), true); //Output video: path, codec, frame rate, and frame size

while (true) {

bool ret = capture.read(frame);

if (!ret) break;

imshow("frame", frame);

writer.write(frame); //Write each frame

char c = waitKey(50);

if (c == 27) { //ESC

break;

}

}

capture.release(); //Release resources once reading and writing are finished

writer.release();

waitKey(0);

destroyAllWindows();

}

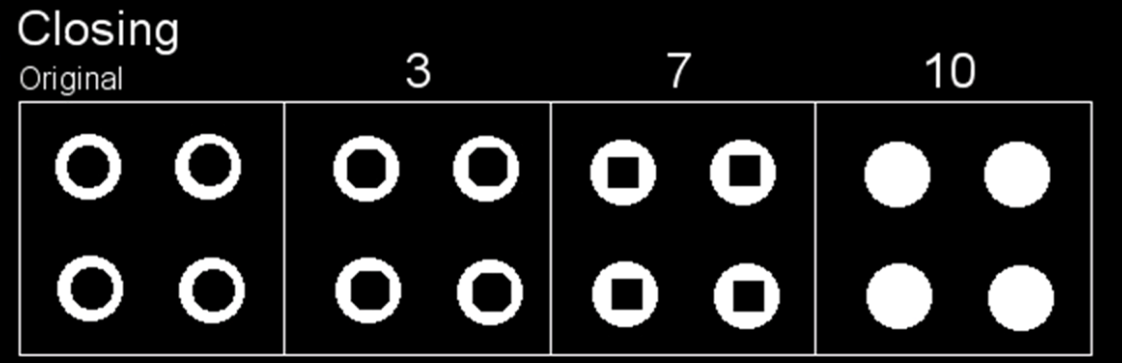

Result:

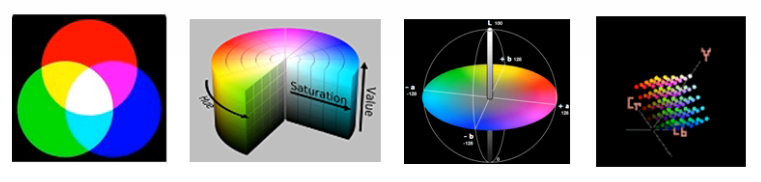

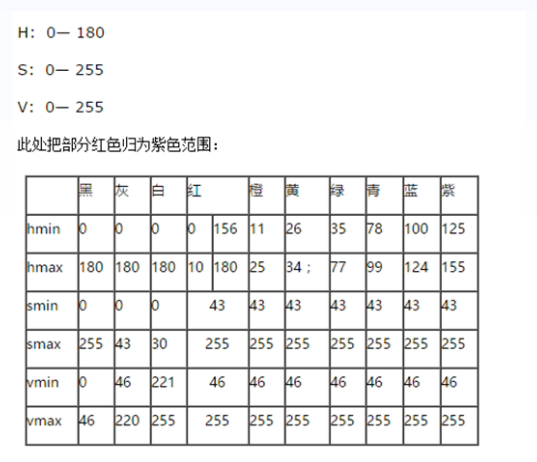

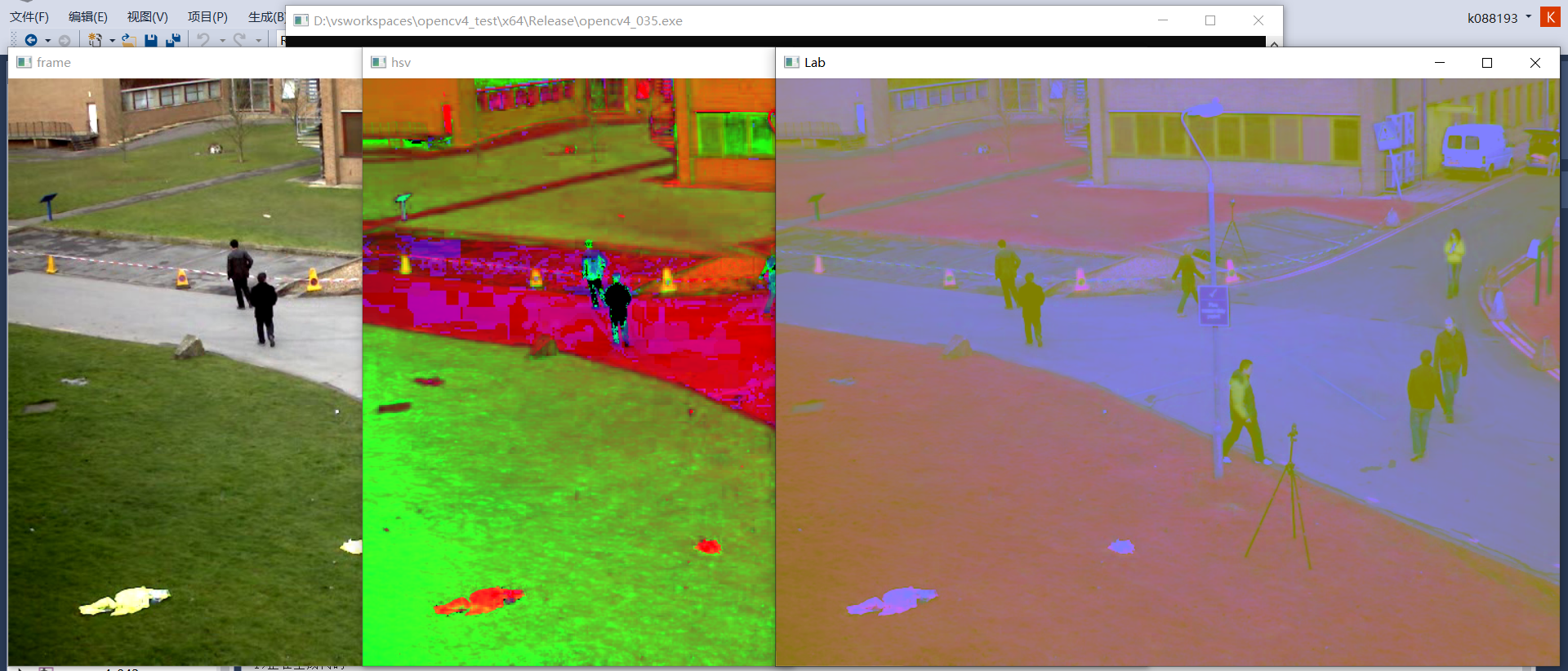

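45. Color Space Conversion

Color spaces

- RGB color space (device-dependent unless tied to a standard such as sRGB)

- HSV color space

- Lab color space

- YCbCr color space

Conversions

- BGR2HSV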
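To show what BGR2HSV produces for 8-bit images, the sketch below converts one pixel using OpenCV's 8-bit convention, where H is stored as degrees divided by 2 (0 to 179) and S and V span 0 to 255. The names `Hsv`, `bgrToHsv8`, and `inGreenRange` are assumptions; the green range matches the `inRange` bounds used in this section's listing.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

struct Hsv { int h, s, v; };

// Convert one 8-bit BGR pixel to HSV with H in [0, 180), S and V in [0, 255].
Hsv bgrToHsv8(int b, int g, int r) {
    assert(b >= 0 && b <= 255 && g >= 0 && g <= 255 && r >= 0 && r <= 255);
    int v = std::max(b, std::max(g, r));
    int mn = std::min(b, std::min(g, r));
    int diff = v - mn;
    int s = (v == 0) ? 0 : static_cast<int>(std::lround(255.0 * diff / v));
    double h = 0.0;  // hue in degrees; stays 0 for gray pixels
    if (diff != 0) {
        if (v == r)      h = 60.0 * (g - b) / diff;
        else if (v == g) h = 120.0 + 60.0 * (b - r) / diff;
        else             h = 240.0 + 60.0 * (r - g) / diff;
        if (h < 0.0) h += 360.0;
    }
    int h8 = static_cast<int>(std::lround(h / 2.0)) % 180;  // halve to fit 8 bits
    return Hsv{h8, s, v};
}

// Same bounds as inRange(hsv, Scalar(35, 43, 46), Scalar(77, 255, 255), mask).
bool inGreenRange(const Hsv& p) {
    return p.h >= 35 && p.h <= 77 && p.s >= 43 && p.v >= 46;
}
```

Pure green maps to H = 60, in the middle of the 35 to 77 band, which is why that band selects green pixels regardless of how bright they are.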

#include <opencv2/opencv.hpp>

#include <iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

VideoCapture capture("D:/images/vtest.avi"); //Open a video file

if (!capture.isOpened()) {

printf("could not open the camera...\n");

}

namedWindow("frame", WINDOW_AUTOSIZE);

Mat frame, hsv, Lab, mask, result;

while (true) {

bool ret = capture.read(frame);

if (!ret) break;

imshow("frame", frame);

cvtColor(frame, hsv, COLOR_BGR2HSV);

//cvtColor(frame, Lab, COLOR_BGR2Lab);

imshow("hsv", hsv);

inRange(hsv, Scalar(35, 43, 46), Scalar(77, 255, 255), mask); //Select the pixels inside the given color range

bitwise_not(mask, mask); //Invert the mask so that the selected (green) pixels are the ones removed

bitwise_and(frame, frame, result, mask);

imshow("mask", mask);

imshow("result", result);

//imshow("Lab", Lab);

char c = waitKey(50);

if (c == 27) { //ESC

break;

}

}

capture.release(); //Release resources once reading is finished

//writer.release();

waitKey(0);

destroyAllWindows();

}

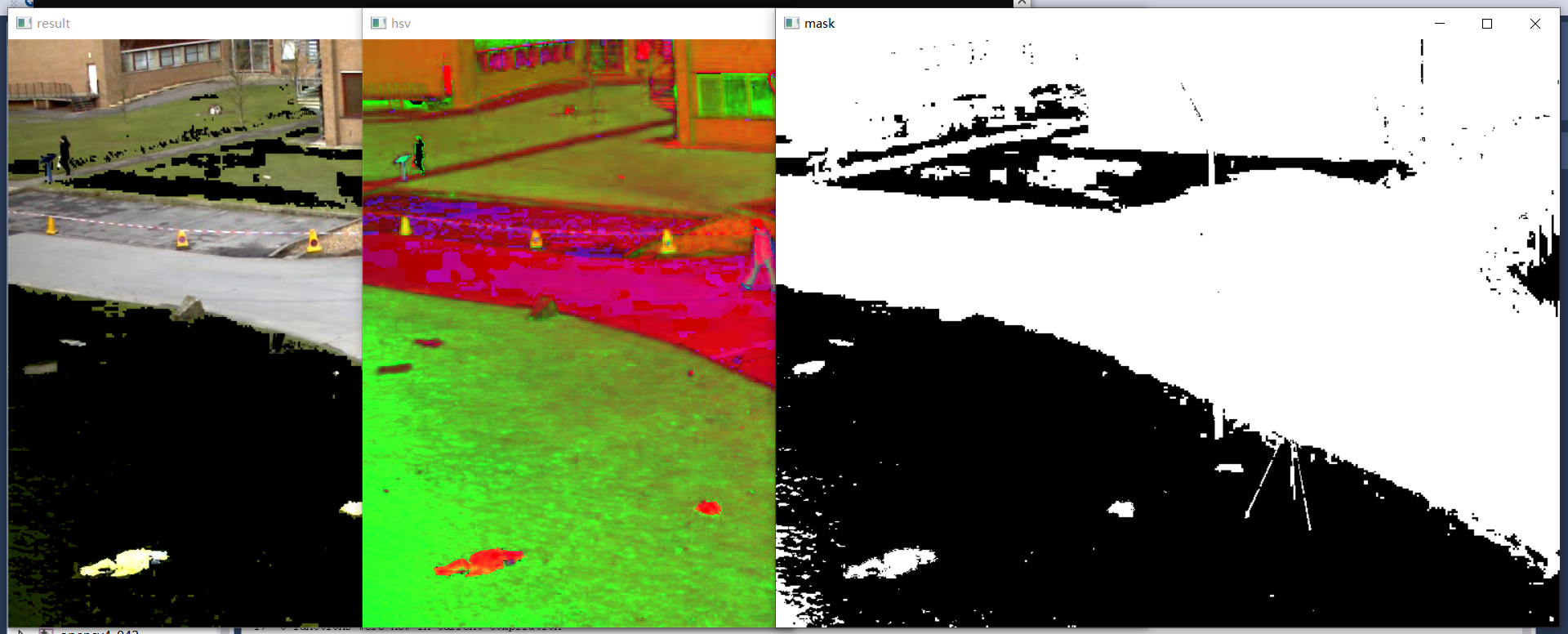

Result:

1. BGR image converted to the HSV and Lab color spaces

2. Removing the green regions selected in HSV

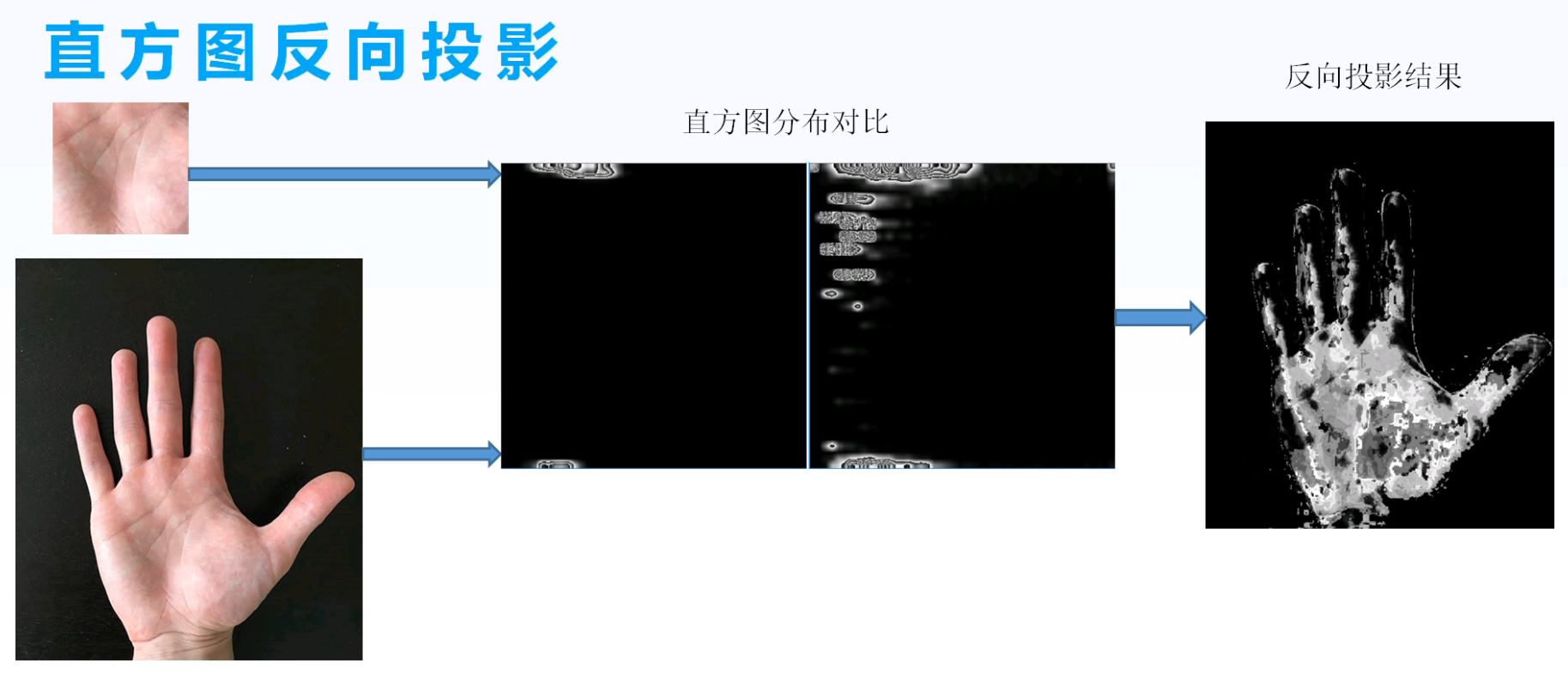

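46. Histogram Back Projection

Basic principle

- Compute the histogram

- Compute the ratio

- Blur by convolution

- Back-project to the output

Example of the search process:

Suppose we have a 100x100 input image and a 10x10 template image. The search proceeds as follows:

(1) Starting from the top-left corner (0,0) of the input image, cut out a temporary image from (0,0) to (10,10);

(2) Compute the histogram of the temporary image;

(3) Compare the histogram of the temporary image with the histogram of the template image, and call the comparison result c;

(4) The comparison result c is the pixel value at (0,0) in the result image;

(5) Cut out the temporary image from (0,1) to (10,11) of the input image, compare histograms, and record the result in the result image;

(6) Repeat steps (1)~(5) until the bottom-right corner of the input image is reached.

The back projection result therefore contains the histogram comparison result for every starting pixel of the input image. It can be viewed as a two-dimensional floating-point array, a two-dimensional matrix, or a single-channel floating-point image.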
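The per-pixel lookup that `calcBackProject` performs in the listing below can be sketched in one dimension, using hue only. This is a simplified illustration: the histogram is scaled by its maximum bin, which matches a 0 to 255 min-max normalization only when at least one bin is empty, and the names `hueBin` and `backProjectHue` are invented for the example.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// Map a hue value in [0, 180) to its histogram bin.
int hueBin(int hue, int bins) {
    assert(hue >= 0 && hue < 180 && bins > 0);
    double binWidth = 180.0 / bins;
    return std::min(bins - 1, static_cast<int>(hue / binWidth));
}

// Build a hue histogram from the model, scale it to 0..255, and replace every
// image pixel by the scaled count of the bin its hue falls into.
std::vector<int> backProjectHue(const std::vector<int>& modelHue,
                                const std::vector<int>& imageHue, int bins) {
    std::vector<int> hist(bins, 0);
    for (int h : modelHue) ++hist[hueBin(h, bins)];
    int maxCount = *std::max_element(hist.begin(), hist.end());
    assert(maxCount > 0);
    std::vector<int> out;
    out.reserve(imageHue.size());
    for (int h : imageHue) {
        out.push_back(hist[hueBin(h, bins)] * 255 / maxCount);
    }
    return out;
}
```

Pixels whose hue is common in the model come out bright and pixels whose hue never occurs in the model come out black, so the output behaves like a probability map for the model's colors.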

#include <opencv2/opencv.hpp>

#include <iostream>

using namespace cv;

using namespace std;

int main(int argc, char** argv) {

Mat model = imread("D:/images/sample.png");

Mat src = imread("D:/images/target.png");

if (src.empty() || model.empty()) {

printf("could not find image files");

return -1;

}

namedWindow("input", WINDOW_AUTOSIZE);

imshow("input", src);

imshow("sample", model);

Mat model_hsv, image_hsv;

cvtColor(model, model_hsv, COLOR_BGR2HSV);

cvtColor(src, image_hsv, COLOR_BGR2HSV);

int h_bins = 24, s_bins = 24;

int histSize[] = { h_bins,s_bins };

int channels[] = { 0,1 };

Mat roiHist;

float h_range[] = { 0,180 };

float s_range[] = { 0,255 };

const float* ranges[] = { h_range,s_range };

calcHist(&model_hsv, 1, channels, Mat(), roiHist, 2, histSize, ranges, true, false);

normalize(roiHist, roiHist, 0, 255, NORM_MINMAX, -1, Mat()); //Normalize to 0-255

MatND backproj;

calcBackProject(&image_hsv, 1, channels, roiHist, backproj, ranges, 1.0);

imshow("back projection demo", backproj);

waitKey(0);

destroyAllWindows();

return 0;

}

Result:

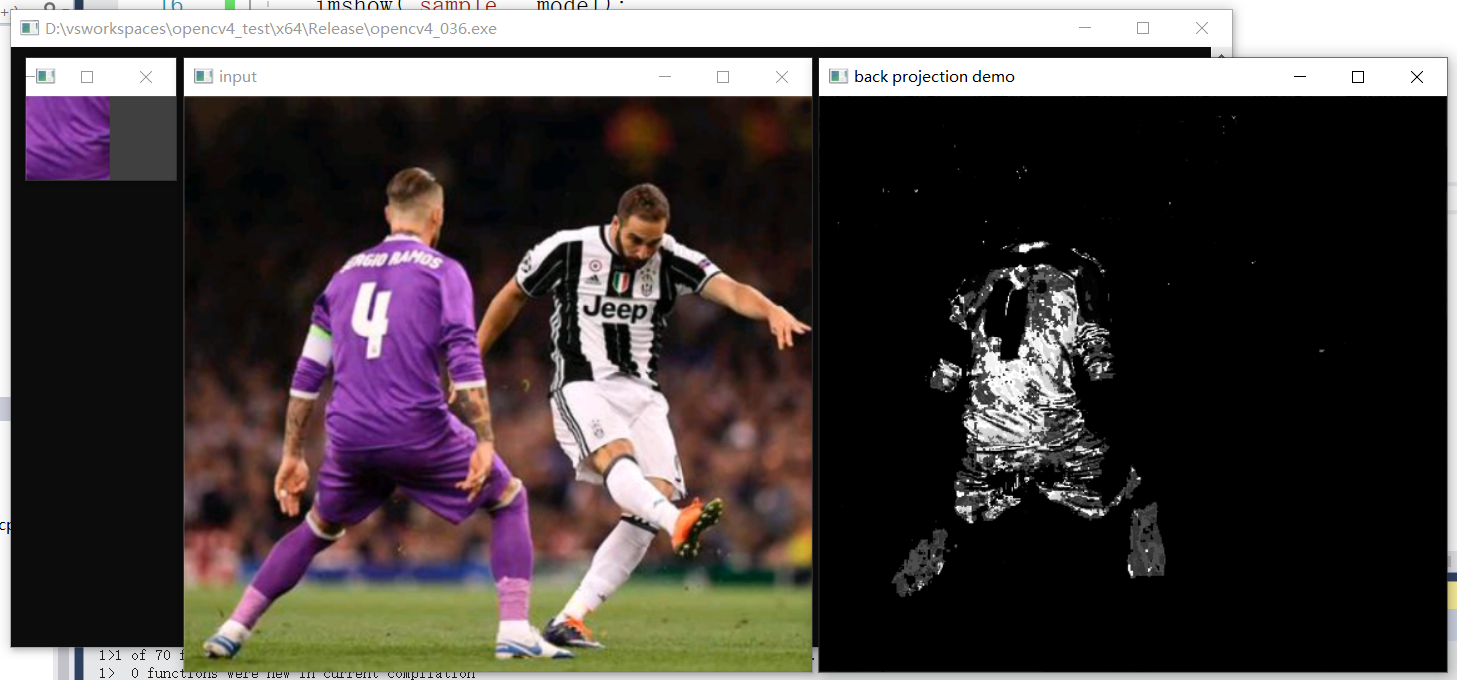

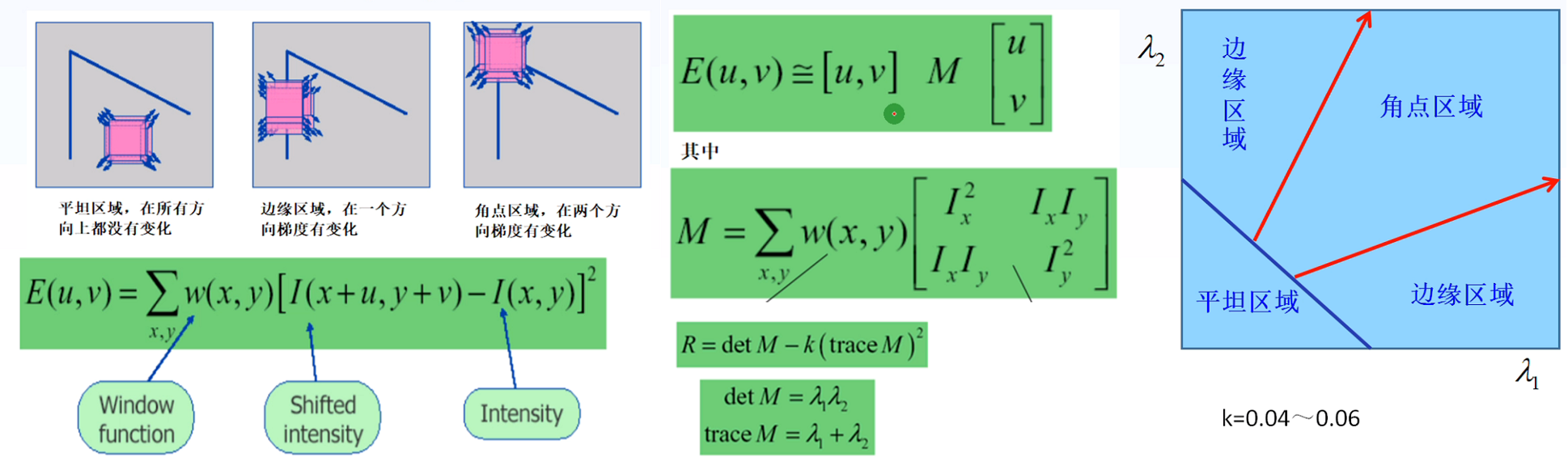

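47. Harris Corner Detection

Corner: a pixel where the gradient changes strongly in both the x and y directions. Edge: a pixel where the gradient changes strongly in only one direction.

Principle of Harris corner detection: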

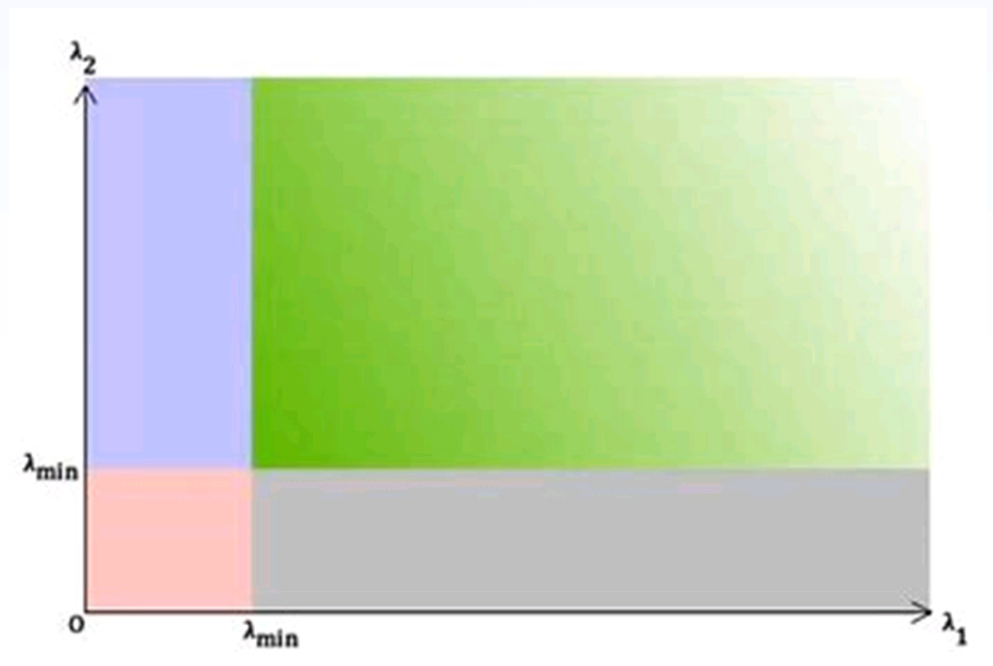
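The corner/edge distinction above can be made concrete with the structure tensor of a window. The following is a minimal sketch, assuming the gradients `ix` and `iy` of the window pixels are already available. The names `StructureTensor`, `accumulateTensor`, `harrisResponse` and `shiTomasiResponse` are hypothetical helpers for illustration, not OpenCV API. The Harris score uses the usual form R = det(M) - k * trace(M)^2, and the Shi-Tomasi score is the smaller eigenvalue of M.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Sums of gradient products over one window: M = [xx xy; xy yy].
struct StructureTensor {
    double xx;
    double xy;
    double yy;
};

// Accumulate Ix*Ix, Ix*Iy and Iy*Iy over the window pixels.
StructureTensor accumulateTensor(const std::vector<double>& ix, const std::vector<double>& iy) {
    assert(ix.size() == iy.size());
    StructureTensor m{0.0, 0.0, 0.0};
    for (std::size_t i = 0; i < ix.size(); ++i) {
        m.xx += ix[i] * ix[i];
        m.xy += ix[i] * iy[i];
        m.yy += iy[i] * iy[i];
    }
    return m;
}

// Harris response: positive for corners, negative for edges, near zero for flat regions.
double harrisResponse(const StructureTensor& m, double k = 0.04) {
    const double det = m.xx * m.yy - m.xy * m.xy;
    const double trace = m.xx + m.yy;
    return det - k * trace * trace;
}

// Shi-Tomasi response: the smaller eigenvalue of the 2x2 symmetric matrix M.
double shiTomasiResponse(const StructureTensor& m) {
    const double half = 0.5 * (m.xx + m.yy);
    const double diff = 0.5 * (m.xx - m.yy);
    return half - std::sqrt(diff * diff + m.xy * m.xy);
}
```

A window with gradients in both directions gives a positive Harris response and a large minimum eigenvalue, while a window whose gradients all point the same way gives a negative Harris response and a minimum eigenvalue of zero.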

Shi-Tomasi corner detection:

#include <opencv2/opencv.hpp>

#include <iostream>

using namespace cv;

using namespace std;

void harris_demo(Mat &image);

int main(int argc, char** argv) {

VideoCapture capture("D:/images/vtest.avi"); //open a video file (pass a device index such as 0 to use a camera)

if (!capture.isOpened()) {

printf("could not open the video file...\n");

return -1;

}

namedWindow("frame", WINDOW_AUTOSIZE);

Mat frame;

while (true) {

bool ret = capture.read(frame);

if (!ret) break;

imshow("frame", frame);

harris_demo(frame);

imshow("result", frame);

char c = waitKey(50);

if (c == 27) { //ESC

break;

}

}

capture.release(); //release the capture once reading is finished

waitKey(0);

destroyAllWindows();

return 0;

}

void harris_demo(Mat &image) {

Mat gray;

cvtColor(image, gray, COLOR_BGR2GRAY);

Mat dst;

double k = 0.04;

int blocksize = 2; //size of the neighborhood (scan window)

int ksize = 3; //aperture size of the Sobel operator

cornerHarris(gray, dst, blocksize, ksize, k);

Mat dst_norm = Mat::zeros(dst.size(), dst.type());

normalize(dst, dst_norm, 0, 255, NORM_MINMAX, -1, Mat());

convertScaleAbs(dst_norm, dst_norm); //convert to 8UC1 data

//draw corners

RNG rng(12345);

for (int row = 0; row < image.rows; row++) {

for (int col = 0; col < image.cols; col++) {

int rsp = dst_norm.at<uchar>(row, col);

if (rsp > 150) {

int b = rng.uniform(0, 255);

int g = rng.uniform(0, 255);

int r = rng.uniform(0, 255);

circle(image, Point(col, row), 5, Scalar(b, g, r), 2, 8, 0);

}

}

}

}

Result:

48. Shi-Tomasi Corner Detection

#include <opencv2/opencv.hpp>

#include <iostream>

using namespace cv;

using namespace std;

void shitomas_demo(Mat &image);

int main(int argc, char** argv) {

VideoCapture capture("D:/images/vtest.avi"); //open a video file (pass a device index such as 0 to use a camera)

if (!capture.isOpened()) {

printf("could not open the video file...\n");

return -1;

}

namedWindow("frame", WINDOW_AUTOSIZE);

Mat frame;

while (true) {

bool ret = capture.read(frame);

if (!ret) break;

imshow("frame", frame);

//harris_demo(frame);

shitomas_demo(frame);

imshow("result", frame);

char c = waitKey(50);

if (c == 27) { //ESC

break;

}

}

capture.release(); //release the capture once reading is finished

waitKey(0);

destroyAllWindows();

return 0;

}

void shitomas_demo(Mat &image) {

Mat gray, dst;

cvtColor(image, gray, COLOR_BGR2GRAY);

vector<Point2f> corners;

double quality_level = 0.01; //minimum accepted corner quality, relative to the strongest corner

RNG rng(12345);

goodFeaturesToTrack(gray, corners, 200, quality_level, 3, Mat(), 3, false);

for (size_t i = 0; i < corners.size(); i++) {

int b = rng.uniform(0, 255);

int g = rng.uniform(0, 255);

int r = rng.uniform(0, 255);

circle(image, corners[i], 5, Scalar(b, g, r), 2, 8, 0);

}

}

Result:

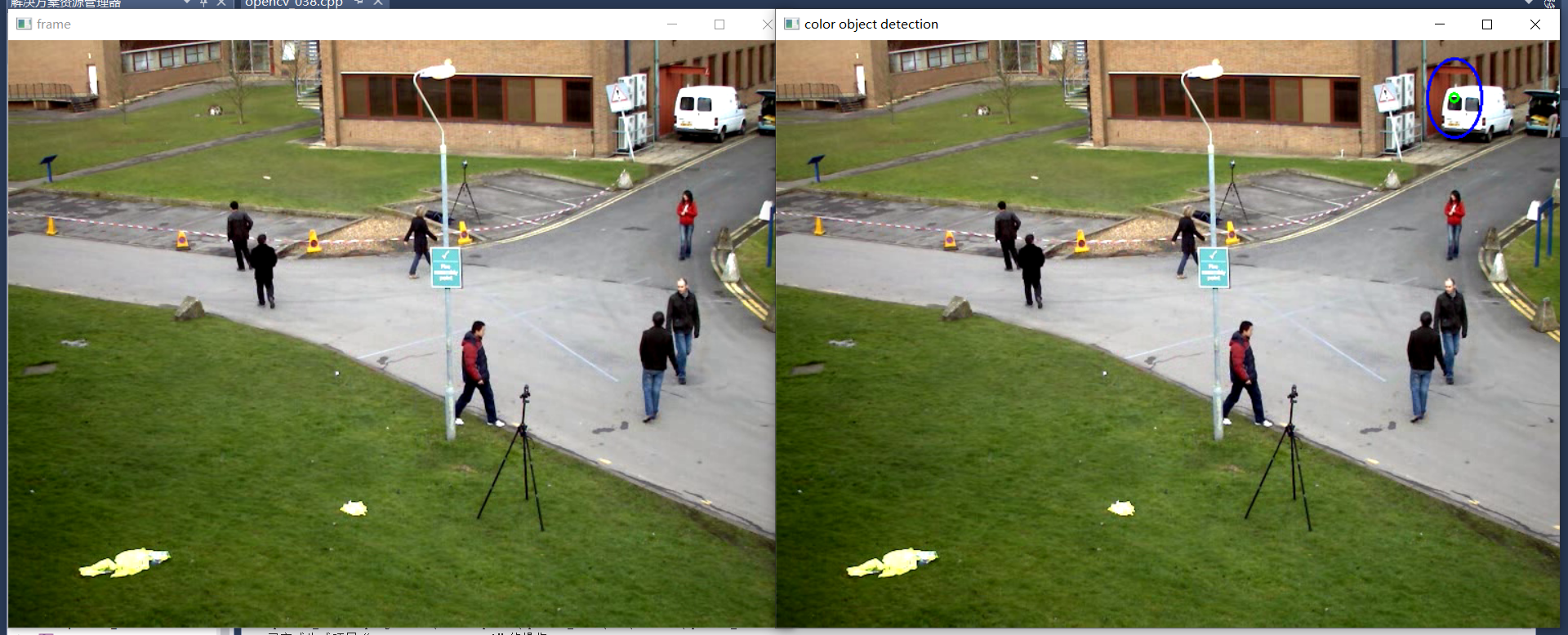

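49. Color-Based Object Tracking

Color analysis and extraction on video frames

- Inspect the pixel value distribution of a frame (Image Watch)

- Set the correct range to obtain the mask

- Run contour analysis on the mask image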
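The range-to-mask step above can be sketched in plain C++. This is a minimal sketch under assumptions: the `Hsv`, `HsvImage` and `Mask` aliases and the functions `inRangeMask` and `maskCentroid` are hypothetical stand-ins, and the centroid of all mask pixels is used here as a simplified substitute for real contour analysis (the listing that follows uses `findContours` and `minAreaRect` instead).

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical types for this sketch: one {H, S, V} triple per pixel,
// with H in 0..179 as in OpenCV's 8-bit HSV representation.
using Hsv = std::array<std::uint8_t, 3>;
using HsvImage = std::vector<std::vector<Hsv>>;
using Mask = std::vector<std::vector<std::uint8_t>>;

// Set a mask pixel to 255 when all three channels lie inside [lo, hi], else 0.
Mask inRangeMask(const HsvImage& hsv, const Hsv& lo, const Hsv& hi) {
    assert(lo[0] <= hi[0] && lo[1] <= hi[1] && lo[2] <= hi[2]);
    Mask mask(hsv.size());
    for (std::size_t r = 0; r < hsv.size(); ++r) {
        mask[r].assign(hsv[r].size(), 0);
        for (std::size_t c = 0; c < hsv[r].size(); ++c) {
            const Hsv& p = hsv[r][c];
            bool inside = true;
            for (std::size_t ch = 0; ch < 3; ++ch)
                inside = inside && lo[ch] <= p[ch] && p[ch] <= hi[ch];
            mask[r][c] = inside ? 255 : 0;
        }
    }
    return mask;
}

// Centroid (cx = column, cy = row) of all non-zero mask pixels.
// Returns false when the mask is empty, in which case cx and cy are untouched.
bool maskCentroid(const Mask& mask, double& cx, double& cy) {
    double sumX = 0.0, sumY = 0.0;
    std::size_t count = 0;
    for (std::size_t r = 0; r < mask.size(); ++r)
        for (std::size_t c = 0; c < mask[r].size(); ++c)
            if (mask[r][c] != 0) {
                sumX += static_cast<double>(c);
                sumY += static_cast<double>(r);
                ++count;
            }
    if (count == 0) return false;
    cx = sumX / static_cast<double>(count);
    cy = sumY / static_cast<double>(count);
    return true;
}
```

With the range `{0, 43, 46}` to `{10, 255, 255}` used in the listing, only reddish pixels end up in the mask, and the centroid gives a rough position for the tracked object.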

#include <opencv2/opencv.hpp>

#include <iostream>

using namespace cv;

using namespace std;

void process_frame(Mat &image);

int main(int argc, char** argv) {

VideoCapture capture("D:/images/vtest.avi"); //open a video file (pass a device index such as 0 to use a camera)

if (!capture.isOpened()) {

printf("could not open the video file...\n");

return -1;

}

namedWindow("frame", WINDOW_AUTOSIZE);

Mat frame;

while (true) {

bool ret = capture.read(frame);

if (!ret) break;

imshow("frame", frame);

process_frame(frame);

char c = waitKey(50);

if (c == 27) { //ESC

break;

}

}

capture.release(); //release the capture once reading is finished

waitKey(0);

destroyAllWindows();

return 0;

}

void process_frame(Mat &image) {

Mat hsv, mask;

cvtColor(image, hsv, COLOR_BGR2HSV);

inRange(hsv, Scalar(0, 43, 46), Scalar(10, 255, 255), mask);

//imshow("mask", mask);

Mat se = getStructuringElement(MORPH_RECT, Size(3, 3));

morphologyEx(mask, mask, MORPH_OPEN, se);

//imshow("result", mask);

vector<vector<Point>> contours;

vector<Vec4i> hierarchy;

findContours(mask, contours, hierarchy, RETR_EXTERNAL, CHAIN_APPROX_SIMPLE, Point());

int index = -1;

double max_area = 0;

for (size_t t = 0; t < contours.size(); t++) {

double area = contourArea(contours[t]);

if (area > max_area) {

max_area = area;

index = t;

}

}

//fit the largest contour and draw the result

if (index >= 0) {

RotatedRect rrt = minAreaRect(contours[index]);

ellipse(image, rrt, Scalar(255, 0, 0), 2, 8);

circle(image, rrt.center, 4, Scalar(0, 255, 0), 2, 8, 0);

}

imshow("color object detection", image);

}

Result:

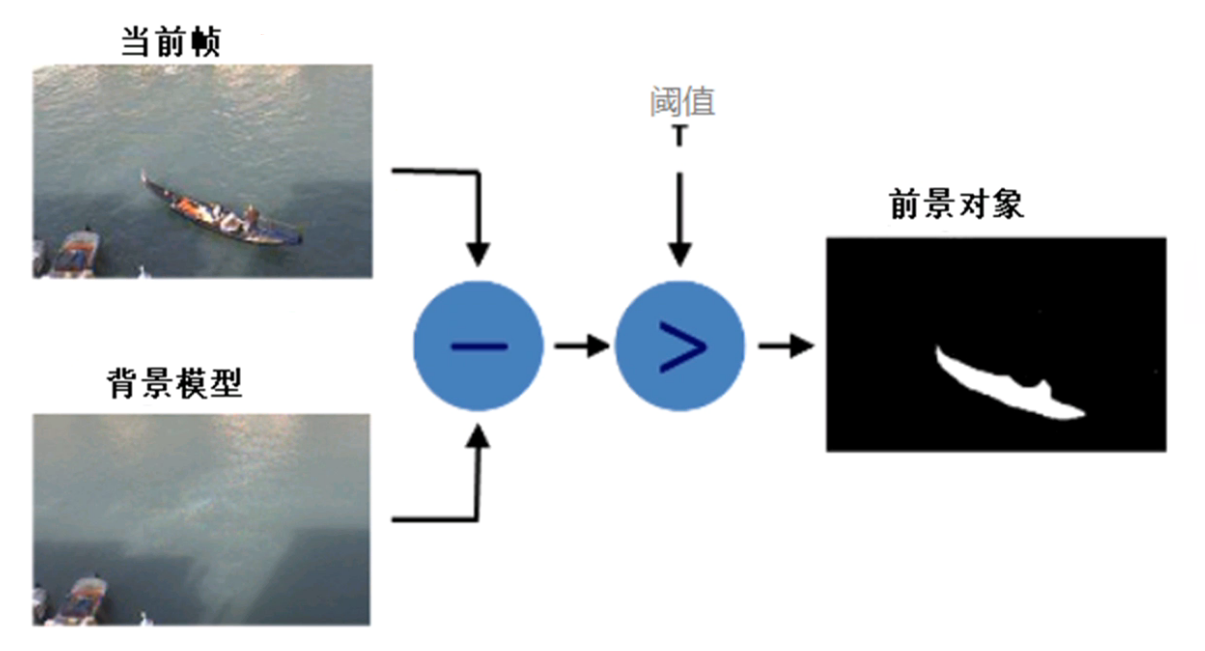

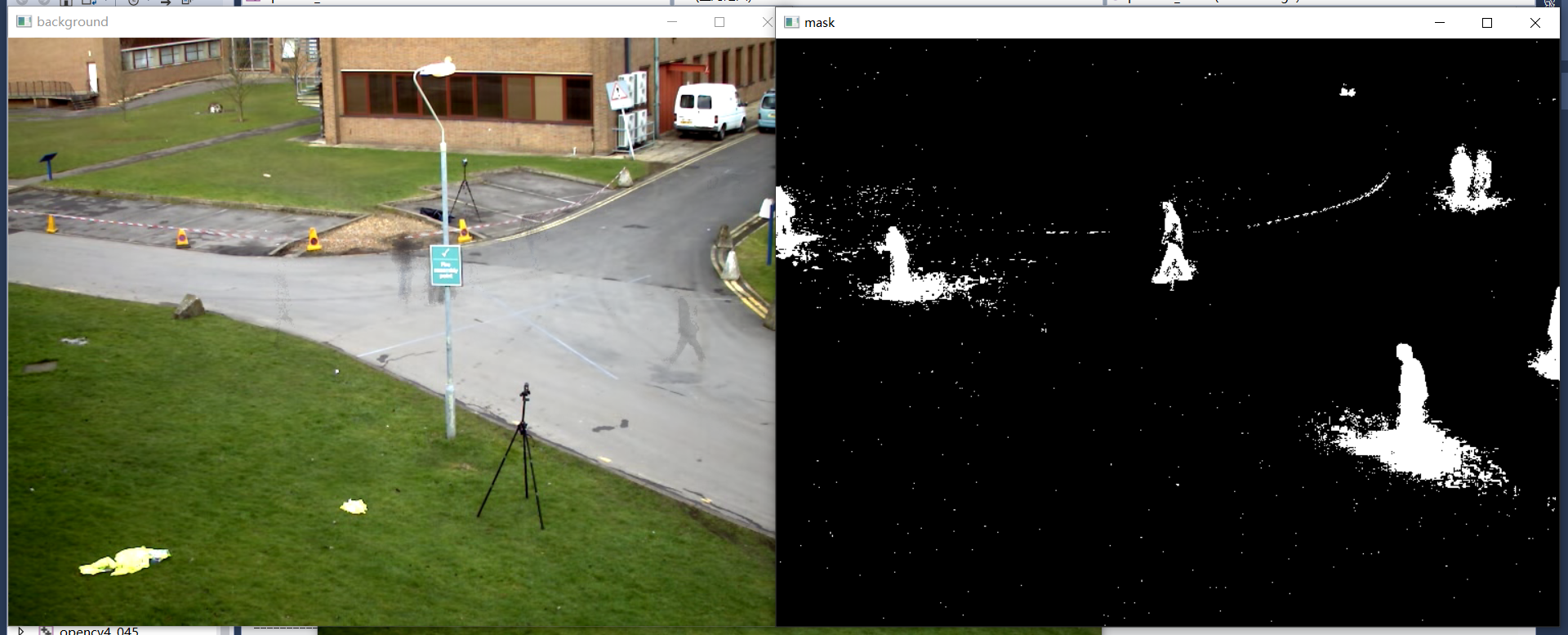

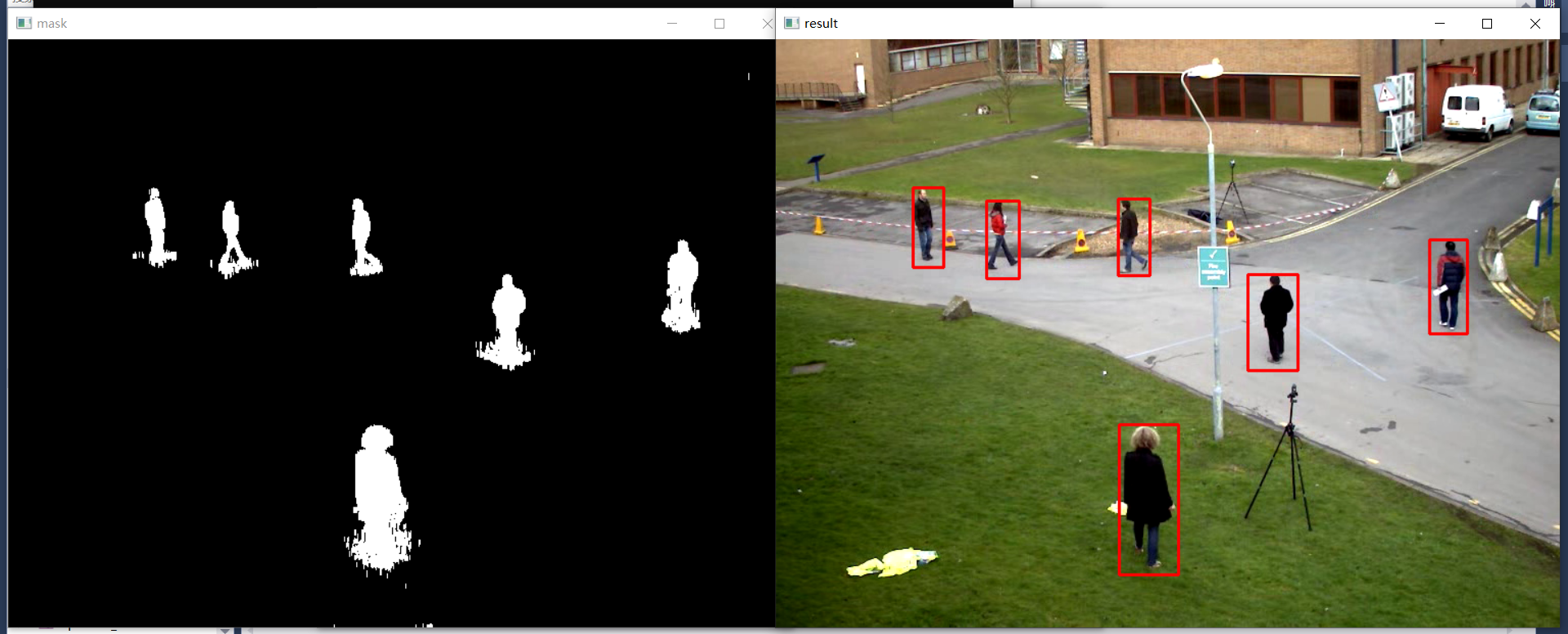

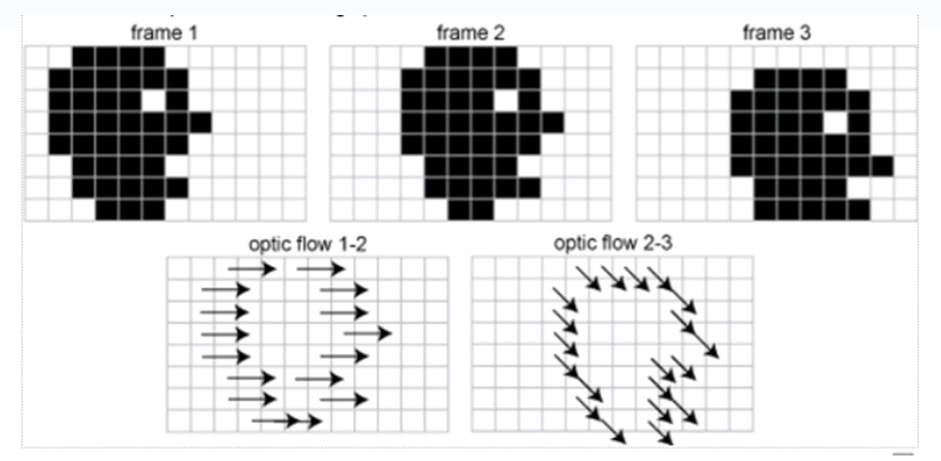

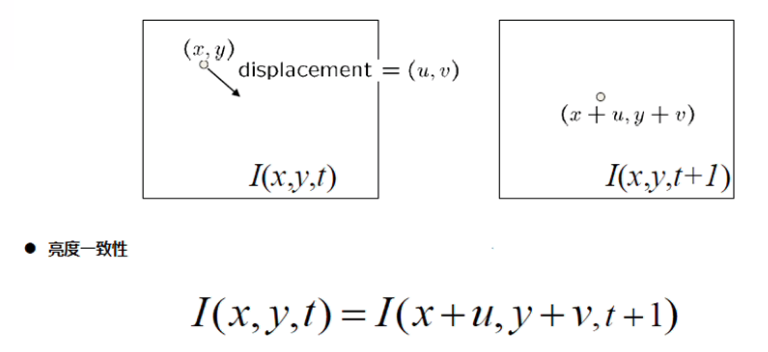

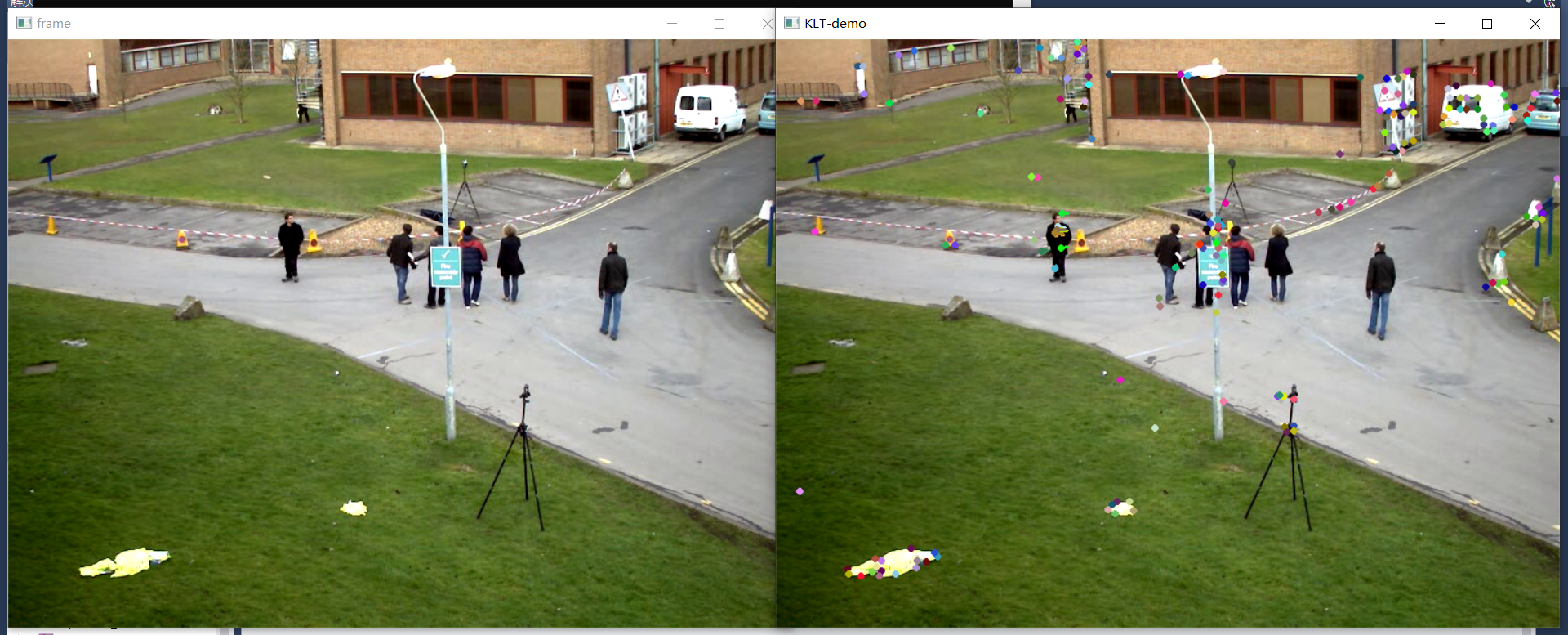

50. Background Analysis

- Common algorithms

  - KNN

  - GMM

  - Fuzzy Integral

- Basic workflow

  - Background initialization

  - Foreground detection

  - Background update/maintenance
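The three stages of the workflow above can be illustrated with a much simpler model than KNN or GMM. The sketch below uses a per-pixel running average, which is an assumption made purely for illustration and is not what `createBackgroundSubtractorMOG2` does internally. The class name `RunningAverageBackground` and its parameters `alpha` (update rate) and `threshold` (foreground cutoff) are hypothetical.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Simplified background model: one running-average value per pixel.
// Frames are flat grayscale buffers of equal size.
class RunningAverageBackground {
public:
    RunningAverageBackground(double alpha, double threshold)
        : alpha_(alpha), threshold_(threshold) {}

    // Returns a mask with 255 for foreground pixels and 0 for background pixels.
    std::vector<std::uint8_t> apply(const std::vector<std::uint8_t>& frame) {
        // Stage 1: background initialization from the first frame.
        if (background_.empty()) {
            background_.assign(frame.begin(), frame.end());
            return std::vector<std::uint8_t>(frame.size(), 0);
        }
        assert(frame.size() == background_.size());

        std::vector<std::uint8_t> mask(frame.size(), 0);
        for (std::size_t i = 0; i < frame.size(); ++i) {
            const double value = static_cast<double>(frame[i]);
            // Stage 2: foreground detection by thresholding the difference.
            if (std::fabs(value - background_[i]) > threshold_) mask[i] = 255;
            // Stage 3: background update/maintenance.
            background_[i] = (1.0 - alpha_) * background_[i] + alpha_ * value;
        }
        return mask;
    }

    const std::vector<double>& background() const { return background_; }

private:
    double alpha_;
    double threshold_;
    std::vector<double> background_;
};
```

A larger `alpha` lets the background adapt faster to lighting changes, at the cost of absorbing slow-moving objects into the background sooner.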

#include <opencv2/opencv.hpp>

#include <iostream>

using namespace cv;

using namespace std;

void process_frame(Mat &image);

void process2(Mat &image);

auto pMOG2 = createBackgroundSubtractorMOG2(500, 16, false);

int main(int argc, char** argv) {

VideoCapture capture("D:/images/vtest.avi"); //open a video file (pass a device index such as 0 to use a camera)

if (!capture.isOpened()) {

printf("could not open the video file...\n");

return -1;

}

namedWindow("frame", WINDOW_AUTOSIZE);

Mat frame;

while (true) {

bool ret = capture.read(frame);

if (!ret) break;

imshow("frame", frame);

//process_frame(frame);

process2(frame);

char c = waitKey(50);

if (c == 27) { //ESC

break;

}

}

capture.release(); //release the capture once reading is finished

waitKey(0);

destroyAllWindows();

return 0;

}

void process2(Mat &image) {

Mat mask, bg_image;

pMOG2->apply(image, mask); //extract the foreground mask

//morphological operations

Mat se = getStructuringElement(MORPH_RECT, Size(1, 5), Point(-1, -1));

morphologyEx(mask, mask, MORPH_OPEN, se);

imshow("mask", mask);

vector<vector<Point>> contours;

vector<Vec4i> hierarchy;

findContours(mask, contours, hierarchy, RETR_EXTERNAL, CHAIN_APPROX_SIMPLE, Point());

int index = -1;

double max_area = 0;

for (size_t t = 0; t < contours.size(); t++) {

double area = contourArea(contours[t]);

if (area < 200) {

continue;

}

Rect box = boundingRect(contours[t]);

RotatedRect rrt = minAreaRect(contours[t]);

//rectangle(image, box, Scalar(0, 0, 255), 2, 8, 0);

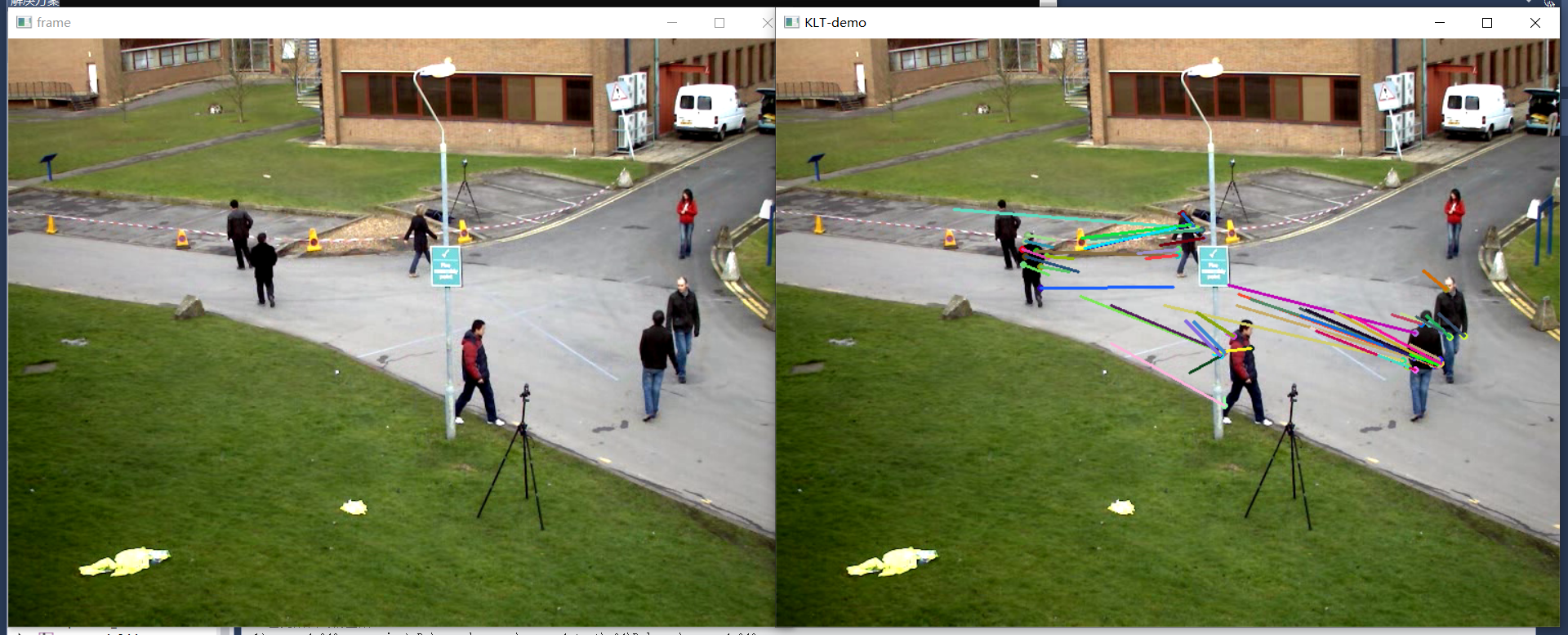

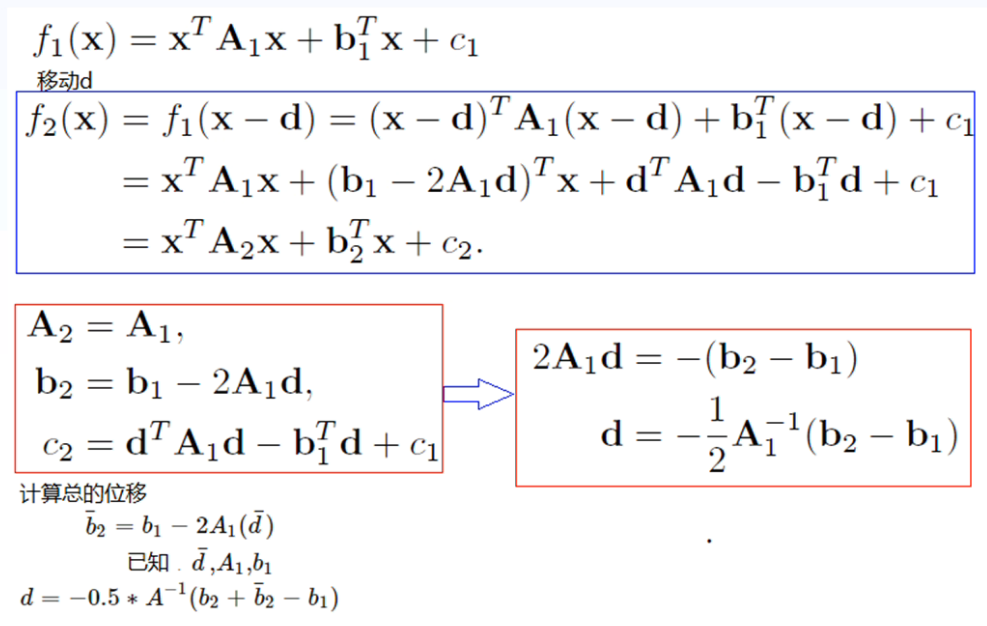

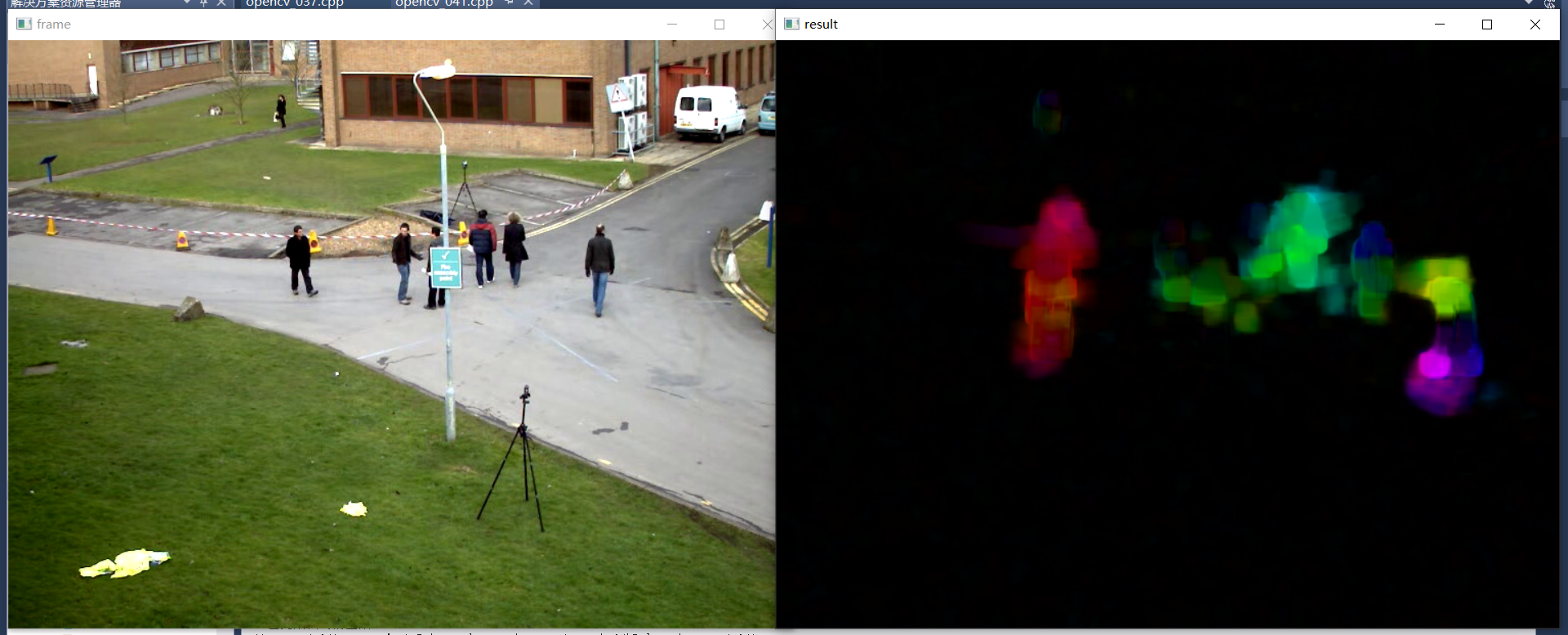

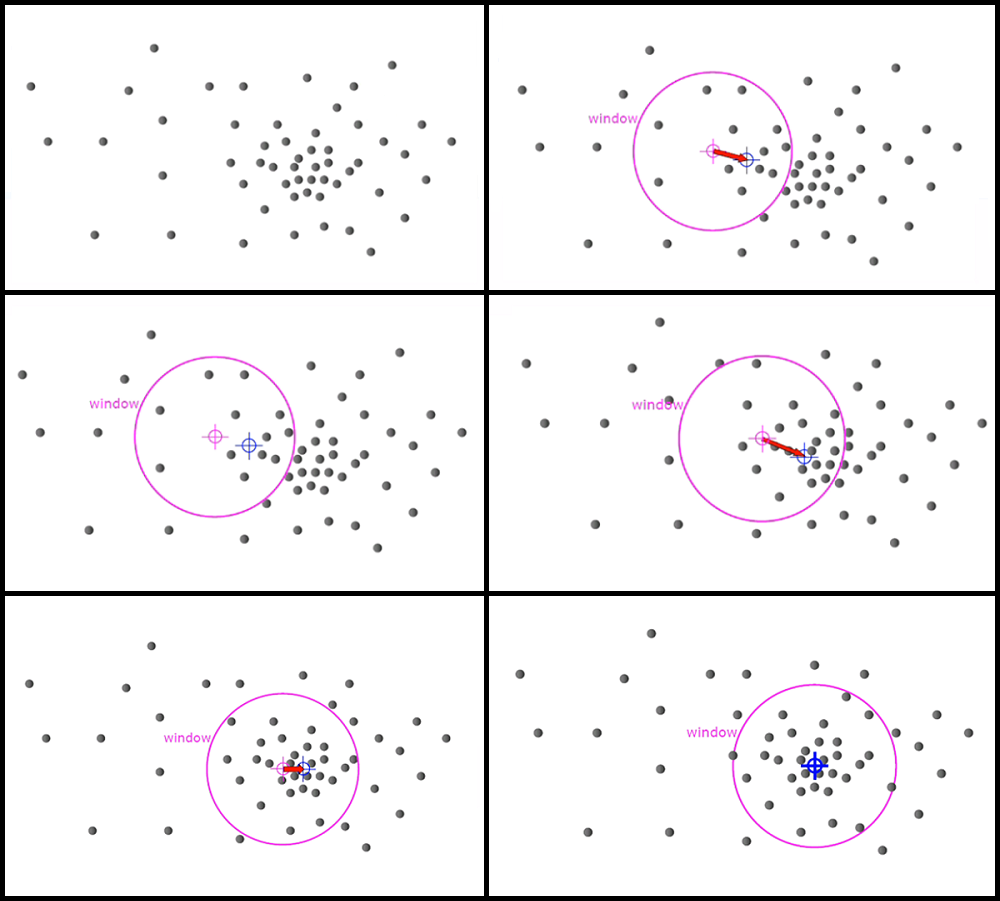

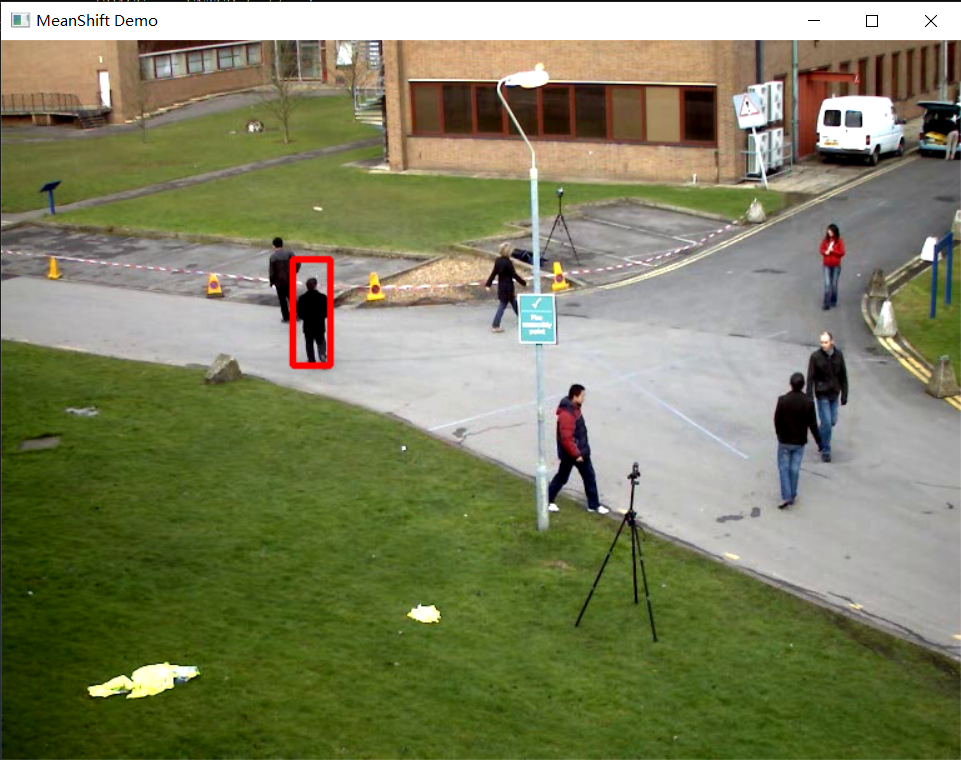

circle(image, rrt.center, 2, Scalar(255, 0, 0), 2, 8);