Quickly deploying a distributed Redis cluster with Docker Swarm

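When I first tried to deploy a Redis cluster with Swarm, I read many posts online and found that most of them describe a single-machine cluster: several Redis containers are started on one server, and the cluster is then created by hand from inside one of the containers. After some research I arrived at a setup that needs only a docker-compose.yml file and a single start command: the Redis nodes are placed on different machines and the cluster is created automatically.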

Environment

Four virtual machines:

- 192.168.2.38 (manager node)
- 192.168.2.81 (worker node)
- 192.168.2.100 (worker node)
- 192.168.2.102 (worker node)

Time synchronization

Run the following on every machine:

yum install -y ntp

cat <<EOF >>/var/spool/cron/root
00 12 * * * /usr/sbin/ntpdate -u ntp1.aliyun.com && /usr/sbin/hwclock -w
EOF

## List the scheduled jobs
crontab -l

## Run the sync once by hand
/usr/sbin/ntpdate -u ntp1.aliyun.com && /usr/sbin/hwclock -w
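Appending to the crontab file with a heredoc adds the same entry again every time the step is repeated. A minimal sketch of an idempotent variant follows; the helper name add_cron_line is my own and not part of the original setup.

```shell
# Hypothetical helper: append LINE to FILE only if it is not already there.
add_cron_line() {
    file="$1"
    line="$2"
    # -x matches the whole line, -F treats the text as a fixed string
    # (cron lines contain * and other regex characters).
    if ! grep -qxF -- "$line" "$file" 2>/dev/null; then
        printf '%s\n' "$line" >> "$file"
    fi
}

# Example call, commented out so that running this sketch changes nothing:
# add_cron_line /var/spool/cron/root \
#   '00 12 * * * /usr/sbin/ntpdate -u ntp1.aliyun.com && /usr/sbin/hwclock -w'
```

Running the helper twice with the same arguments leaves a single entry in the file.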

Docker

Install Docker

curl -sSL https://get.daocloud.io/docker | sh

Start Docker

sudo systemctl start docker

Building the Swarm cluster

Open the firewall ports that Swarm needs

- On the manager node, open port 2377:

# manager
firewall-cmd --zone=public --add-port=2377/tcp --permanent

- On all nodes, open the following ports:

# all nodes
firewall-cmd --zone=public --add-port=7946/tcp --permanent
firewall-cmd --zone=public --add-port=7946/udp --permanent
firewall-cmd --zone=public --add-port=4789/tcp --permanent
firewall-cmd --zone=public --add-port=4789/udp --permanent

- On all nodes, reload the firewall and restart Docker:

# all nodes
firewall-cmd --reload
systemctl restart docker

- For a quick test setup, the firewall can simply be turned off instead.
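The port rules differ only in the port/protocol pair, so they can be generated in a loop. The sketch below only prints the commands (a dry run); the function name swarm_firewall_cmds and the role argument are assumptions of mine, and the port list is taken from the steps above.

```shell
# Print (do not run) the firewall-cmd lines for a node role: "manager" or "worker".
swarm_firewall_cmds() {
    role="$1"
    ports="7946/tcp 7946/udp 4789/tcp 4789/udp"   # opened on every node
    if [ "$role" = "manager" ]; then
        ports="2377/tcp $ports"                   # 2377 is opened on the manager only
    fi
    for spec in $ports; do
        echo "firewall-cmd --zone=public --add-port=${spec} --permanent"
    done
    echo "firewall-cmd --reload"
}

swarm_firewall_cmds manager
```

Once the printed commands look right, the output can be piped to sh to apply them; Docker still has to be restarted afterwards as described above.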

Create the Swarm

docker swarm init --advertise-addr your_manager_ip

View the join token

[root@manager ~]# docker swarm join-token worker

To add a worker to this swarm, run the following command:

docker swarm join --token SWMTKN-1-51b7t8whxn8j6mdjt5perjmec9u8qguxq8tern9nill737pra2-ejc5nw5f90oz6xldcbmrl2ztu 192.168.2.61:2377

[root@manager ~]#

Join the Swarm (run on each worker node)

docker swarm join --token SWMTKN-1-51b7t8whxn8j6mdjt5perjmec9u8qguxq8tern9nill737pra2-ejc5nw5f90oz6xldcbmrl2ztu 192.168.2.38:2377

# List the nodes

docker node ls

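Service constraints

Add labels. The compose file further down uses the redis2, redis3 and redis4 labels to pin one Redis service to each worker node.

sudo docker node update --label-add redis1=true <manager node name>
sudo docker node update --label-add redis2=true <worker node name>
sudo docker node update --label-add redis3=true <worker node name>
sudo docker node update --label-add redis4=true <worker node name>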

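Single-machine cluster

Drawback: all containers run on one machine, so if that machine goes down, the whole cluster goes down with it.

Create the containers

Tip: these commands could be wrapped in a script, but since this approach is rarely used, no script is given here.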

docker create --name redis-node1 --net host -v /data/redis-data/node1:/data redis --cluster-enabled yes --cluster-config-file nodes-node-1.conf --port 6379

docker create --name redis-node2 --net host -v /data/redis-data/node2:/data redis --cluster-enabled yes --cluster-config-file nodes-node-2.conf --port 6380

docker create --name redis-node3 --net host -v /data/redis-data/node3:/data redis --cluster-enabled yes --cluster-config-file nodes-node-3.conf --port 6381

docker create --name redis-node4 --net host -v /data/redis-data/node4:/data redis --cluster-enabled yes --cluster-config-file nodes-node-4.conf --port 6382

docker create --name redis-node5 --net host -v /data/redis-data/node5:/data redis --cluster-enabled yes --cluster-config-file nodes-node-5.conf --port 6383

docker create --name redis-node6 --net host -v /data/redis-data/node6:/data redis --cluster-enabled yes --cluster-config-file nodes-node-6.conf --port 6384
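The six commands differ only in the node index and the port, so a loop can produce them. This is a sketch of my own rather than the author's script; gen_create_cmds is a hypothetical name, and it prints the commands instead of running them.

```shell
# Print the docker create command for each of COUNT nodes, starting at BASE_PORT.
gen_create_cmds() {
    count="$1"
    base_port="$2"
    i=1
    while [ "$i" -le "$count" ]; do
        port=$((base_port + i - 1))
        echo "docker create --name redis-node${i} --net host -v /data/redis-data/node${i}:/data redis --cluster-enabled yes --cluster-config-file nodes-node-${i}.conf --port ${port}"
        i=$((i + 1))
    done
}

gen_create_cmds 6 6379
```

Piping the output to sh would create the containers; printing first makes it easy to check the ports before anything is created.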

Start the containers

docker start redis-node1 redis-node2 redis-node3 redis-node4 redis-node5 redis-node6

Enter a container and create the cluster

# Enter one of the nodes
docker exec -it redis-node1 /bin/bash

# Create the cluster
redis-cli --cluster create 192.168.2.38:6379 192.168.2.38:6380 192.168.2.38:6381 192.168.2.38:6382 192.168.2.38:6383 192.168.2.38:6384 --cluster-replicas 1

# --cluster-replicas 1 means one replica per master

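Distributed cluster

A Redis cluster needs at least three master nodes, so this setup builds a cluster with three masters and three replicas. Because only four machines are available, the compose file places the first three nodes on the same machine (the manager).

Deployment

On the Swarm manager node, create the working directory and the compose file:

mkdir /root/redis-swarm
cd /root/redis-swarm
vi docker-compose.yml

docker-compose.yml

Notes:

- The first six services are the Redis nodes. The last one, redis-start, uses the redis-cli client to create the cluster and stops by itself once the cluster has been built.
- redis-start has to wait until the six Redis nodes are up before it can create the cluster. depends_on is ignored by docker stack deploy, so the wait-for-it.sh script is used for this.
- Because redis-cli --cluster create does not accept network aliases, a separate script, redis-start.sh, resolves them to IPs first.

The same files can also deploy a single-machine cluster: start them without Swarm and comment out the network mode line driver: overlay in docker-compose.yml.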

version: '3.7'

services:
  redis-node1:
    image: redis
    hostname: redis-node1
    ports:
      - 6379:6379
    networks:
      - redis-swarm
    volumes:
      - "node1:/data"
    command: redis-server --cluster-enabled yes --cluster-config-file nodes-node-1.conf
    deploy:
      mode: replicated
      replicas: 1
      resources:
        limits:
          # cpus: '0.001'
          memory: 5120M
        reservations:
          # cpus: '0.001'
          memory: 512M
      placement:
        constraints:
          - node.role==manager

  redis-node2:
    image: redis
    hostname: redis-node2
    ports:
      - 6380:6379
    networks:
      - redis-swarm
    volumes:
      - "node2:/data"
    command: redis-server --cluster-enabled yes --cluster-config-file nodes-node-2.conf
    deploy:
      mode: replicated
      replicas: 1
      resources:
        limits:
          # cpus: '0.001'
          memory: 5120M
        reservations:
          # cpus: '0.001'
          memory: 512M
      placement:
        constraints:
          - node.role==manager

  redis-node3:
    image: redis
    hostname: redis-node3
    ports:
      - 6381:6379
    networks:
      - redis-swarm
    volumes:
      - "node3:/data"
    command: redis-server --cluster-enabled yes --cluster-config-file nodes-node-3.conf
    deploy:
      mode: replicated
      resources:
        limits:
          # cpus: '0.001'
          memory: 5120M
        reservations:
          # cpus: '0.001'
          memory: 512M
      replicas: 1
      placement:
        constraints:
          - node.role==manager

  redis-node4:
    image: redis
    hostname: redis-node4
    ports:
      - 6382:6379
    networks:
      - redis-swarm
    volumes:
      - "node4:/data"
    command: redis-server --cluster-enabled yes --cluster-config-file nodes-node-4.conf
    deploy:
      mode: replicated
      replicas: 1
      resources:
        limits:
          # cpus: '0.001'
          memory: 5120M
        reservations:
          # cpus: '0.001'
          memory: 512M
      placement:
        constraints:
          - node.labels.redis2==true

  redis-node5:
    image: redis
    hostname: redis-node5
    ports:
      - 6383:6379
    networks:
      - redis-swarm
    volumes:
      - "node5:/data"
    command: redis-server --cluster-enabled yes --cluster-config-file nodes-node-5.conf
    deploy:
      mode: replicated
      replicas: 1
      resources:
        limits:
          # cpus: '0.001'
          memory: 5120M
        reservations:
          # cpus: '0.001'
          memory: 512M
      placement:
        constraints:
          - node.labels.redis3==true

  redis-node6:
    image: redis
    hostname: redis-node6
    ports:
      - 6384:6379
    networks:
      - redis-swarm
    volumes:
      - "node6:/data"
    command: redis-server --cluster-enabled yes --cluster-config-file nodes-node-6.conf
    deploy:
      mode: replicated
      replicas: 1
      resources:
        limits:
          # cpus: '0.001'
          memory: 5120M
        reservations:
          # cpus: '0.001'
          memory: 512M
      placement:
        constraints:
          - node.labels.redis4==true

  redis-start:
    image: redis
    hostname: redis-start
    networks:
      - redis-swarm
    volumes:
      - "$PWD/start:/redis-start"
    depends_on:
      - redis-node1
      - redis-node2
      - redis-node3
      - redis-node4
      - redis-node5
      - redis-node6
    command: /bin/bash -c "chmod 777 /redis-start/redis-start.sh && chmod 777 /redis-start/wait-for-it.sh && /redis-start/redis-start.sh"
    deploy:
      restart_policy:
        condition: on-failure
        delay: 5s
        max_attempts: 5
      placement:
        constraints:
          - node.role==manager

networks:
  redis-swarm:
    driver: overlay

volumes:
  node1:
  node2:
  node3:
  node4:
  node5:
  node6:

wait-for-it.sh

Create the start directory and the two scripts inside it:

mkdir /root/redis-swarm/start
cd /root/redis-swarm/start
vi wait-for-it.sh
vi redis-start.sh

#!/usr/bin/env bash
# Use this script to test if a given TCP host/port are available

cmdname=$(basename $0)

echoerr() { if [[ $QUIET -ne 1 ]]; then echo "$@" 1>&2; fi }

usage()
{
    cat << USAGE >&2
Usage:
    $cmdname host:port [-s] [-t timeout] [-- command args]
    -h HOST | --host=HOST       Host or IP under test
    -p PORT | --port=PORT       TCP port under test
                                Alternatively, you specify the host and port as host:port
    -s | --strict               Only execute subcommand if the test succeeds
    -q | --quiet                Don't output any status messages
    -t TIMEOUT | --timeout=TIMEOUT
                                Timeout in seconds, zero for no timeout
    -- COMMAND ARGS             Execute command with args after the test finishes
USAGE
    exit 1
}

wait_for()
{
    if [[ $TIMEOUT -gt 0 ]]; then
        echoerr "$cmdname: waiting $TIMEOUT seconds for $HOST:$PORT"
    else
        echoerr "$cmdname: waiting for $HOST:$PORT without a timeout"
    fi
    start_ts=$(date +%s)
    while :
    do
        # Opening /dev/tcp/HOST/PORT succeeds only if the port accepts connections
        (echo > /dev/tcp/$HOST/$PORT) >/dev/null 2>&1
        result=$?
        if [[ $result -eq 0 ]]; then
            end_ts=$(date +%s)
            echoerr "$cmdname: $HOST:$PORT is available after $((end_ts - start_ts)) seconds"
            break
        fi
        sleep 1
    done
    return $result
}

wait_for_wrapper()
{
    # In order to support SIGINT during timeout: http://unix.stackexchange.com/a/57692
    if [[ $QUIET -eq 1 ]]; then
        timeout $TIMEOUT $0 --quiet --child --host=$HOST --port=$PORT --timeout=$TIMEOUT &
    else
        timeout $TIMEOUT $0 --child --host=$HOST --port=$PORT --timeout=$TIMEOUT &
    fi
    PID=$!
    trap "kill -INT -$PID" INT
    wait $PID
    RESULT=$?
    if [[ $RESULT -ne 0 ]]; then
        echoerr "$cmdname: timeout occurred after waiting $TIMEOUT seconds for $HOST:$PORT"
    fi
    return $RESULT
}

# process arguments
while [[ $# -gt 0 ]]
do
    case "$1" in
        *:* )
        hostport=(${1//:/ })
        HOST=${hostport[0]}
        PORT=${hostport[1]}
        shift 1
        ;;
        --child)
        CHILD=1
        shift 1
        ;;
        -q | --quiet)
        QUIET=1
        shift 1
        ;;
        -s | --strict)
        STRICT=1
        shift 1
        ;;
        -h)
        HOST="$2"
        if [[ $HOST == "" ]]; then break; fi
        shift 2
        ;;
        --host=*)
        HOST="${1#*=}"
        shift 1
        ;;
        -p)
        PORT="$2"
        if [[ $PORT == "" ]]; then break; fi
        shift 2
        ;;
        --port=*)
        PORT="${1#*=}"
        shift 1
        ;;
        -t)
        TIMEOUT="$2"
        if [[ $TIMEOUT == "" ]]; then break; fi
        shift 2
        ;;
        --timeout=*)
        TIMEOUT="${1#*=}"
        shift 1
        ;;
        --)
        shift
        CLI="$@"
        break
        ;;
        --help)
        usage
        ;;
        *)
        echoerr "Unknown argument: $1"
        usage
        ;;
    esac
done

if [[ "$HOST" == "" || "$PORT" == "" ]]; then
    echoerr "Error: you need to provide a host and port to test."
    usage
fi

TIMEOUT=${TIMEOUT:-15}
STRICT=${STRICT:-0}
CHILD=${CHILD:-0}
QUIET=${QUIET:-0}

if [[ $CHILD -gt 0 ]]; then
    wait_for
    RESULT=$?
    exit $RESULT
else
    if [[ $TIMEOUT -gt 0 ]]; then
        wait_for_wrapper
        RESULT=$?
    else
        wait_for
        RESULT=$?
    fi
fi

if [[ $CLI != "" ]]; then
    if [[ $RESULT -ne 0 && $STRICT -eq 1 ]]; then
        echoerr "$cmdname: strict mode, refusing to execute subprocess"
        exit $RESULT
    fi
    exec $CLI
else
    exit $RESULT
fi
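The core of wait-for-it.sh is a single probe: writing to /dev/tcp/HOST/PORT succeeds only when the port accepts a connection. The sketch below isolates that idea with a bounded number of attempts; probe_port and its attempts argument are hypothetical names of mine, not part of wait-for-it.sh.

```shell
# Try to open a TCP connection to HOST:PORT up to ATTEMPTS times, one second apart.
# Returns 0 as soon as one attempt succeeds, 1 if all of them fail.
probe_port() {
    host="$1"
    port="$2"
    attempts="${3:-5}"
    n=0
    while [ "$n" -lt "$attempts" ]; do
        # /dev/tcp is a bash feature, so the probe itself is run under bash.
        if bash -c "echo > /dev/tcp/$host/$port" >/dev/null 2>&1; then
            return 0
        fi
        n=$((n + 1))
        # Pause only when another attempt will follow.
        if [ "$n" -lt "$attempts" ]; then
            sleep 1
        fi
    done
    return 1
}

# Example: probe_port redis-node1 6379 30 && echo "redis-node1 is reachable"
```

Unlike --timeout=0, which waits forever, a bounded probe lets the calling script give up and report which node never came up.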

redis-start.sh

getent hosts xxx resolves a hostname to its IP address through the system's name lookup (/etc/hosts and DNS). The script uses it to turn each service name into the IP that redis-cli needs.

#!/usr/bin/env bash
cd /redis-start/

# Block until every Redis node accepts connections
bash wait-for-it.sh redis-node1:6379 --timeout=0
bash wait-for-it.sh redis-node2:6379 --timeout=0
bash wait-for-it.sh redis-node3:6379 --timeout=0
bash wait-for-it.sh redis-node4:6379 --timeout=0
bash wait-for-it.sh redis-node5:6379 --timeout=0
bash wait-for-it.sh redis-node6:6379 --timeout=0

echo 'redis-cluster begin'

# Answer the confirmation prompt automatically and pass resolved IPs instead of names
echo 'yes' | redis-cli --cluster create --cluster-replicas 1 \
`getent hosts redis-node1 | awk '{ print $1 ":6379" }'` \
`getent hosts redis-node2 | awk '{ print $1 ":6379" }'` \
`getent hosts redis-node3 | awk '{ print $1 ":6379" }'` \
`getent hosts redis-node4 | awk '{ print $1 ":6379" }'` \
`getent hosts redis-node5 | awk '{ print $1 ":6379" }'` \
`getent hosts redis-node6 | awk '{ print $1 ":6379" }'`

echo 'redis-cluster end'
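If one of the names fails to resolve, the backtick form silently drops that argument and redis-cli is called with fewer nodes. A sketch of a stricter variant follows; build_endpoints, resolve_ip and the RESOLVER and PORT variables are hypothetical names introduced here for illustration.

```shell
# Default resolver: the first address getent reports for the name.
resolve_ip() {
    getent hosts "$1" | awk 'NR == 1 { print $1 }'
}

# Build "ip:port ip:port ..." for the given names.
# RESOLVER can be overridden (for example in a test); PORT defaults to 6379.
build_endpoints() {
    resolver="${RESOLVER:-resolve_ip}"
    port="${PORT:-6379}"
    endpoints=""
    for name in "$@"; do
        ip="$("$resolver" "$name")"
        if [ -z "$ip" ]; then
            echo "cannot resolve $name" >&2
            return 1
        fi
        endpoints="${endpoints:+$endpoints }${ip}:${port}"
    done
    echo "$endpoints"
}

# Example, commented out because it needs the overlay network and redis-cli:
# nodes="$(build_endpoints redis-node1 redis-node2 redis-node3 redis-node4 redis-node5 redis-node6)" \
#   && echo 'yes' | redis-cli --cluster create --cluster-replicas 1 $nodes
```

The function stops at the first unresolved name, so the cluster is never created from a partial node list.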

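Startup

Directory layout

├── docker-compose.yml
└── start
    ├── redis-start.sh
    └── wait-for-it.sh

Run on the Swarm manager node:

cd /root/redis-swarm
docker stack deploy -c docker-compose.yml redis_cluster

Check the log of the redis-start service; output like the following means the cluster was created successfully. The sample was captured from a stack named redis-swarm, hence the redis-swarm_ prefix on each line.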

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | wait-for-it.sh: waiting for redis-node1:6379 without a timeout

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | wait-for-it.sh: redis-node1:6379 is available after 18 seconds

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | wait-for-it.sh: waiting for redis-node2:6379 without a timeout

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | wait-for-it.sh: redis-node2:6379 is available after 13 seconds

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | wait-for-it.sh: waiting for redis-node3:6379 without a timeout

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | wait-for-it.sh: redis-node3:6379 is available after 0 seconds

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | wait-for-it.sh: waiting for redis-node4:6379 without a timeout

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | wait-for-it.sh: redis-node4:6379 is available after 0 seconds

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | wait-for-it.sh: waiting for redis-node5:6379 without a timeout

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | wait-for-it.sh: redis-node5:6379 is available after 0 seconds

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | wait-for-it.sh: waiting for redis-node6:6379 without a timeout

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | wait-for-it.sh: redis-node6:6379 is available after 0 seconds

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | redis-cluster begin

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | >>> Performing hash slots allocation on 12 nodes...

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | Master[0] -> Slots 0 - 2730

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | Master[1] -> Slots 2731 - 5460

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | Master[2] -> Slots 5461 - 8191

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | Master[3] -> Slots 8192 - 10922

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | Master[4] -> Slots 10923 - 13652

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | Master[5] -> Slots 13653 - 16383

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | Adding replica 10.0.5.6:6379 to 10.0.5.17:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | Adding replica 10.0.5.9:6379 to 10.0.5.16:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | Adding replica 10.0.5.8:6379 to 10.0.5.18:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | Adding replica 10.0.5.12:6379 to 10.0.5.19:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | Adding replica 10.0.5.11:6379 to 10.0.5.3:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | Adding replica 10.0.5.5:6379 to 10.0.5.2:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | M: 6ce90be6daabc0c700471d03deb3c6bd88c9f0e1 10.0.5.17:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | slots:[0-2730] (2731 slots) master

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | M: 6ce90be6daabc0c700471d03deb3c6bd88c9f0e1 10.0.5.16:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | slots:[2731-5460] (2730 slots) master

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | M: ea9b45ec64c08c17283239f8b8e5405b2d182428 10.0.5.18:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | slots:[5461-8191] (2731 slots) master

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | M: ea9b45ec64c08c17283239f8b8e5405b2d182428 10.0.5.19:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | slots:[8192-10922] (2731 slots) master

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | M: 935c177308232de05b5483776478020de51bc578 10.0.5.3:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | slots:[10923-13652] (2730 slots) master

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | M: 935c177308232de05b5483776478020de51bc578 10.0.5.2:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | slots:[13653-16383] (2731 slots) master

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | S: 1c99e42bcfb28a9fe72952d4e4cc5cd88aded0f9 10.0.5.5:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | replicates 935c177308232de05b5483776478020de51bc578

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | S: 1c99e42bcfb28a9fe72952d4e4cc5cd88aded0f9 10.0.5.6:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | replicates 6ce90be6daabc0c700471d03deb3c6bd88c9f0e1

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | S: 73cf232f232e83126f058cc01458df11146d8537 10.0.5.9:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | replicates 6ce90be6daabc0c700471d03deb3c6bd88c9f0e1

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | S: 73cf232f232e83126f058cc01458df11146d8537 10.0.5.8:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | replicates ea9b45ec64c08c17283239f8b8e5405b2d182428

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | S: ca3c50899d6deb04e296c542cd485791fb3e8922 10.0.5.12:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | replicates ea9b45ec64c08c17283239f8b8e5405b2d182428

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | S: ca3c50899d6deb04e296c542cd485791fb3e8922 10.0.5.11:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | replicates 935c177308232de05b5483776478020de51bc578

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | Can I set the above configuration? (type 'yes' to accept): >>> Nodes configuration updated

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | >>> Assign a different config epoch to each node

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | >>> Sending CLUSTER MEET messages to join the cluster

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | Waiting for the cluster to join

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | .

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | >>> Performing Cluster Check (using node 10.0.5.17:6379)

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | M: 6ce90be6daabc0c700471d03deb3c6bd88c9f0e1 10.0.5.17:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | slots:[0-5460] (5461 slots) master

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | 1 additional replica(s)

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | S: ca3c50899d6deb04e296c542cd485791fb3e8922 10.0.5.12:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | slots: (0 slots) slave

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | replicates 935c177308232de05b5483776478020de51bc578

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | M: ea9b45ec64c08c17283239f8b8e5405b2d182428 10.0.5.19:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | slots:[5461-10922] (5462 slots) master

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | 1 additional replica(s)

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | M: 935c177308232de05b5483776478020de51bc578 10.0.5.3:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | slots:[10923-16383] (5461 slots) master

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | 1 additional replica(s)

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | S: 1c99e42bcfb28a9fe72952d4e4cc5cd88aded0f9 10.0.5.6:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | slots: (0 slots) slave

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | replicates 6ce90be6daabc0c700471d03deb3c6bd88c9f0e1

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | S: 73cf232f232e83126f058cc01458df11146d8537 10.0.5.9:6379

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | slots: (0 slots) slave

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | replicates ea9b45ec64c08c17283239f8b8e5405b2d182428

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | [OK] All nodes agree about slots configuration.

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | >>> Check for open slots...

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | >>> Check slots coverage...

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | [OK] All 16384 slots covered.

redis-swarm_redis-start.1.6xawjqf5shfw@hyx-test3 | redis-cluster end

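Removing the deployment

docker stack rm redis_cluster

To redeploy the cluster, the data volumes must be cleared first: each Redis node keeps its data and its cluster state file (nodes-node-N.conf) in its volume, and stale state would be inconsistent with the new cluster.

# Run on every node
docker volume prune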
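docker volume prune removes every unused volume on the host, not only the Redis ones, and on recent Docker releases it reportedly skips named volumes unless --all is passed. A narrower option is to remove the stack's volumes by name. The sketch below only prints the names; it relies on docker stack deploy prefixing each named volume with the stack name, and stack_volume_names is a hypothetical helper.

```shell
# Print the volume names created for this compose file under a given stack name.
stack_volume_names() {
    stack="$1"
    count="$2"
    i=1
    while [ "$i" -le "$count" ]; do
        echo "${stack}_node${i}"
        i=$((i + 1))
    done
}

stack_volume_names redis_cluster 6
# To remove them on a node: docker volume rm $(stack_volume_names redis_cluster 6)
```

Each node only holds the volumes of the services that ran on it, so docker volume rm will report the other names as missing; that is expected.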

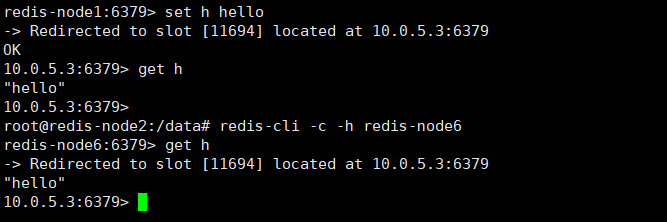

Testing

Enter one of the node containers and check the cluster information

docker exec -it xxx bash
redis-cli -c -h redis-node1 info

Test reading and writing data
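For a scripted check, the cluster_state field reported by the CLUSTER INFO command is easier to test than the full info output. A minimal sketch, assuming the output is piped in on stdin; cluster_state_ok is a hypothetical helper name.

```shell
# Read `redis-cli cluster info` output on stdin; succeed only if cluster_state is ok.
cluster_state_ok() {
    # Redis terminates each line with \r\n, so strip the carriage returns first.
    state="$(tr -d '\r' | awk -F: '$1 == "cluster_state" { print $2 }')"
    [ "$state" = "ok" ]
}

# Example, run inside one of the node containers:
# redis-cli -h redis-node1 cluster info | cluster_state_ok && echo "cluster is up"
```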

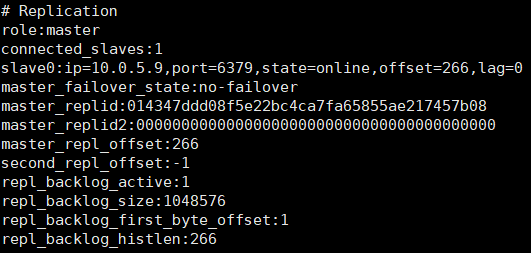

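Test the failure of a master node. Here master node 1 is removed; its replica is node 4, and after node 1 goes down node 4 becomes a master. The service name is prefixed with the stack name used at deploy time.

docker service rm redis_cluster_redis-node1

# Check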

root@redis-node2:/data# redis-cli -c -h redis-node1

Could not connect to Redis at redis-node1:6379: Name or service not known

not connected>

root@redis-node2:/data# redis-cli -c -h redis-node4

redis-node4:6379> info
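To confirm the failover without reading the whole info output, the role field of the replication section can be extracted. A small sketch with a hypothetical helper name, redis_role, reading the output on stdin:

```shell
# Print the value of the role field from `redis-cli info replication` output on stdin.
redis_role() {
    tr -d '\r' | awk -F: '$1 == "role" { print $2 }'
}

# Example, run inside one of the remaining node containers:
# redis-cli -h redis-node4 info replication | redis_role
```

After node 1 has been removed, node 4 should report master once the failover has completed.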

Known issue

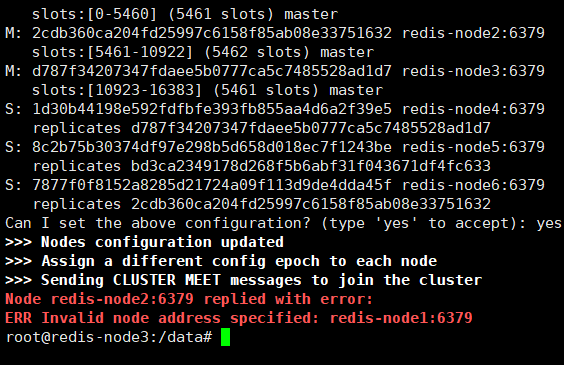

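redis-cli --cluster create redis-node1:6379 ... (remaining arguments omitted)

When the cluster is created with redis-cli inside a container, container names cannot be used, only container IPs, because redis-cli does not support aliases here.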

Script download and quick start

Link: https://pan.baidu.com/s/11ITDFls2UXgjZhdWmhVMFA

Extraction code: mvfj