CMU15445 (Fall 2019): Project #3

Preface

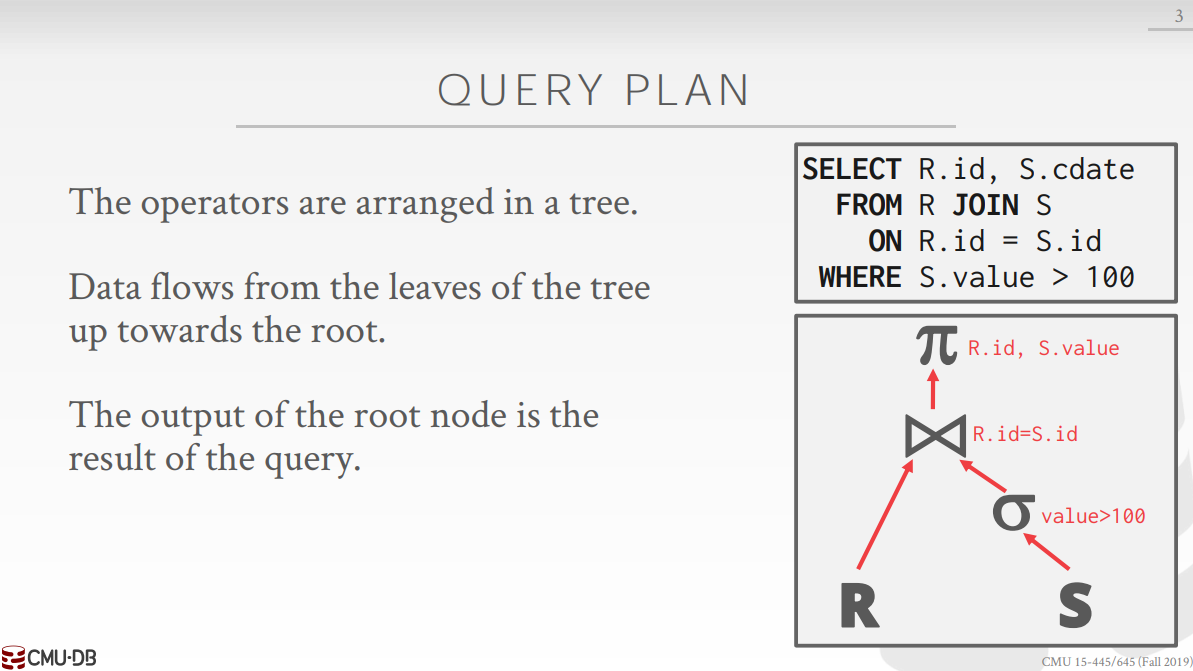

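The first two projects laid the groundwork, and now it is finally time to teach the database system how to execute query plans. The operators in a SQL statement can be organized into a tree. Each parent node pulls tuples from its children and transforms them into whatever form its operator requires. The root node \(\pi\) in the figure below outputs the final query result.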

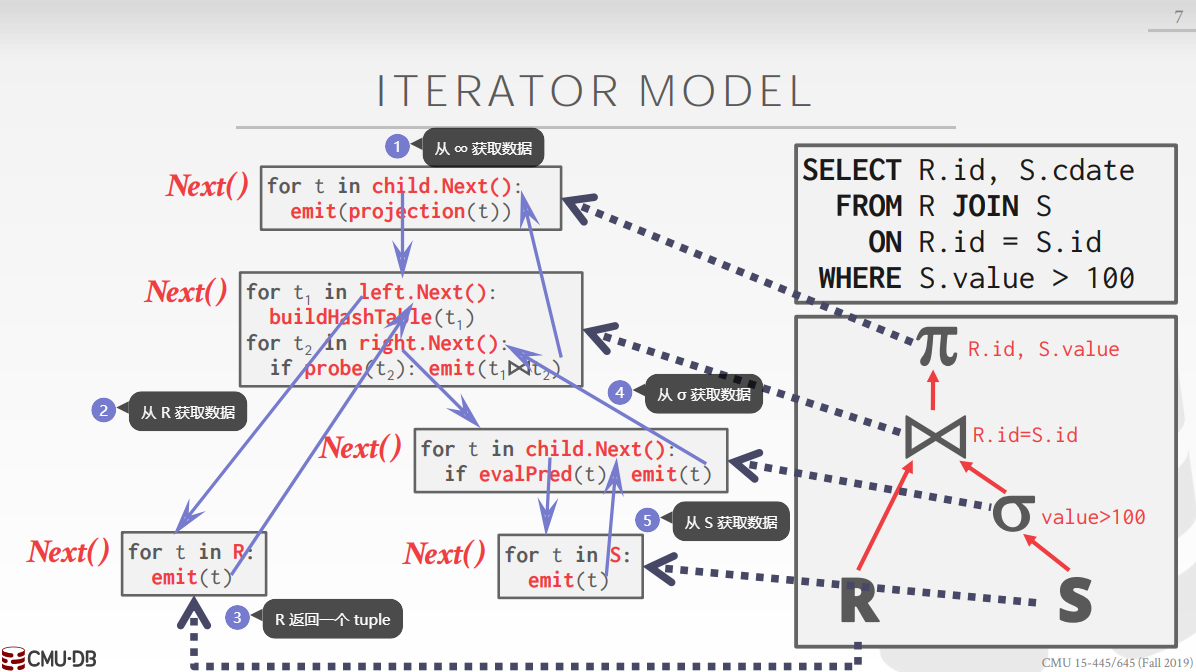

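There are several ways to get the result out of such a tree: the iterator model, the materialization model, and the vectorized model. This project uses the iterator model. Every node implements a Next() function that hands one tuple to its parent. Starting from the root, each parent repeatedly asks its child for one tuple, processes it, and emits the result.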
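The sketch below makes the pull-based control flow concrete. It is a minimal model of the iterator approach and is independent of BusTub. Row, ValuesExecutor, ProjectionExecutor and RunToCompletion are hypothetical names, and a row is assumed to be a plain std::vector<int>. ProjectionExecutor plays the role of the root \(\pi\) node: each call to its Next() pulls exactly one row from its child.

```cpp
#include <cassert>
#include <cstddef>
#include <memory>
#include <utility>
#include <vector>

// Hypothetical row type: a row is just a vector of ints.
using Row = std::vector<int>;

// Minimal iterator-model interface, mirroring Init()/Next() in the text.
class Executor {
 public:
  virtual ~Executor() = default;
  virtual void Init() = 0;
  // Writes the next row into *out; returns false when the operator is exhausted.
  virtual bool Next(Row *out) = 0;
};

// Leaf operator: emits rows from an in-memory vector, one per Next() call.
class ValuesExecutor : public Executor {
 public:
  explicit ValuesExecutor(std::vector<Row> rows) : rows_(std::move(rows)) {}
  void Init() override { pos_ = 0; }
  bool Next(Row *out) override {
    if (pos_ >= rows_.size()) {
      return false;
    }
    *out = rows_[pos_++];
    return true;
  }

 private:
  std::vector<Row> rows_;
  std::size_t pos_ = 0;
};

// Projection (the pi node): pulls one row from its child and keeps only the chosen columns.
class ProjectionExecutor : public Executor {
 public:
  ProjectionExecutor(std::unique_ptr<Executor> child, std::vector<std::size_t> cols)
      : child_(std::move(child)), cols_(std::move(cols)) {}
  void Init() override { child_->Init(); }  // a parent initializes its child
  bool Next(Row *out) override {
    Row in;
    if (!child_->Next(&in)) {
      return false;
    }
    out->clear();
    for (std::size_t c : cols_) {
      out->push_back(in[c]);
    }
    return true;
  }

 private:
  std::unique_ptr<Executor> child_;
  std::vector<std::size_t> cols_;
};

// Drives the tree from the root until it is exhausted, collecting every produced row.
std::vector<Row> RunToCompletion(Executor *root) {
  std::vector<Row> result;
  root->Init();
  Row row;
  while (root->Next(&row)) {
    result.push_back(row);
  }
  return result;
}
```

In a pipeline like this one, no operator holds its child's full output at once. The materialization model would instead pass the complete result up in a single call.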

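Implementation

The project has three tasks: the catalog, the executors, and a reimplementation of the hash join executor on top of a linear probe hash table. The sections below walk through each task in turn.

Catalog

Given a table_oid or a table_name, the catalog returns that table's metadata. The most important field is table_, the TableHeap that represents the table. It is used to query, insert, update, and delete tuples: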

```cpp
using table_oid_t = uint32_t;
using column_oid_t = uint32_t;

struct TableMetadata {
  TableMetadata(Schema schema, std::string name, std::unique_ptr<TableHeap> &&table, table_oid_t oid)
      : schema_(std::move(schema)), name_(std::move(name)), table_(std::move(table)), oid_(oid) {}
  Schema schema_;
  std::string name_;
  std::unique_ptr<TableHeap> table_;
  table_oid_t oid_;
};
```

The catalog class SimpleCatalog has three methods we must implement: CreateTable, GetTable(const std::string &table_name), and GetTable(table_oid_t table_oid). The first creates a new table, and the other two look up an existing one:

```cpp
class SimpleCatalog {
 public:
  SimpleCatalog(BufferPoolManager *bpm, LockManager *lock_manager, LogManager *log_manager)
      : bpm_{bpm}, lock_manager_{lock_manager}, log_manager_{log_manager} {}

  /**
   * Create a new table and return its metadata.
   * @param txn the transaction in which the table is being created
   * @param table_name the name of the new table
   * @param schema the schema of the new table
   * @return a pointer to the metadata of the new table
   */
  TableMetadata *CreateTable(Transaction *txn, const std::string &table_name, const Schema &schema) {
    BUSTUB_ASSERT(names_.count(table_name) == 0, "Table names should be unique!");
    table_oid_t oid = next_table_oid_++;
    auto table = std::make_unique<TableHeap>(bpm_, lock_manager_, log_manager_, txn);
    tables_[oid] = std::make_unique<TableMetadata>(schema, table_name, std::move(table), oid);
    names_[table_name] = oid;
    return tables_[oid].get();
  }

  /** @return table metadata by name */
  TableMetadata *GetTable(const std::string &table_name) {
    auto it = names_.find(table_name);
    if (it == names_.end()) {
      throw std::out_of_range("The table name doesn't exist.");
    }
    return GetTable(it->second);
  }

  /** @return table metadata by oid */
  TableMetadata *GetTable(table_oid_t table_oid) {
    auto it = tables_.find(table_oid);
    if (it == tables_.end()) {
      throw std::out_of_range("The table oid doesn't exist.");
    }
    return it->second.get();
  }

 private:
  [[maybe_unused]] BufferPoolManager *bpm_;
  [[maybe_unused]] LockManager *lock_manager_;
  [[maybe_unused]] LogManager *log_manager_;

  /** tables_ : table identifiers -> table metadata. Note that tables_ owns all table metadata. */
  std::unordered_map<table_oid_t, std::unique_ptr<TableMetadata>> tables_;

  /** names_ : table names -> table identifiers */
  std::unordered_map<std::string, table_oid_t> names_;

  /** The next table identifier to be used. */
  std::atomic<table_oid_t> next_table_oid_{0};
};
```
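Without the BusTub types, the catalog is just two hash maps and an id counter. The sketch below uses a hypothetical TableInfo in place of TableMetadata, with no Schema and no TableHeap. It also uses a plain counter instead of std::atomic, so it assumes single-threaded use. The point it shows is that a lookup by name first resolves the oid and then reuses the lookup by oid.

```cpp
#include <cassert>
#include <cstdint>
#include <memory>
#include <stdexcept>
#include <string>
#include <unordered_map>

using table_oid_t = uint32_t;

// Hypothetical stand-in for TableMetadata: only the name and the oid.
struct TableInfo {
  std::string name;
  table_oid_t oid;
};

// Same two-map layout as SimpleCatalog: name -> oid, and oid -> owned metadata.
class MiniCatalog {
 public:
  TableInfo *CreateTable(const std::string &name) {
    assert(names_.count(name) == 0 && "table names must be unique");
    table_oid_t oid = next_oid_++;
    tables_[oid] = std::make_unique<TableInfo>(TableInfo{name, oid});
    names_[name] = oid;
    return tables_[oid].get();
  }

  // Lookup by name resolves the oid first, then delegates to the oid lookup.
  TableInfo *GetTable(const std::string &name) {
    auto it = names_.find(name);
    if (it == names_.end()) {
      throw std::out_of_range("table name does not exist");
    }
    return GetTable(it->second);
  }

  TableInfo *GetTable(table_oid_t oid) {
    auto it = tables_.find(oid);
    if (it == tables_.end()) {
      throw std::out_of_range("table oid does not exist");
    }
    return it->second.get();
  }

 private:
  std::unordered_map<table_oid_t, std::unique_ptr<TableInfo>> tables_;
  std::unordered_map<std::string, table_oid_t> names_;
  table_oid_t next_oid_ = 0;  // plain counter: single-threaded use only
};
```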

The test results are as follows:

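Executors

Executors carry out query plans. This project asks us to implement the following four executors:

- SeqScanExecutor: the sequential scan executor walks a table and returns the tuples that satisfy the predicate. For example, the result of SELECT * FROM user WHERE id = 1 is produced by this executor.
- InsertExecutor: inserts any number of tuples into a table, for example INSERT INTO user VALUES (1, 2), (2, 3).
- HashJoinExecutor: the hash join executor performs inner joins, for example SELECT u.id, c.class FROM u JOIN c ON u.id = c.uid.
- AggregationExecutor: performs aggregations, for example SELECT MIN(grade), MAX(grade) FROM user.

Every executor derives from the abstract class AbstractExecutor and must implement the pure virtual functions Init() and Next(Tuple *tuple). GetOutputSchema() is pure virtual too. Init() initializes the executor. For example, HashJoinExecutor builds the hash table over the left (outer) table in its Init(). AbstractExecutor also holds an ExecutorContext member, which carries context for the query such as the BufferPoolManager and the SimpleCatalog implemented in the previous task: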

```cpp
class AbstractExecutor {
 public:
  /**
   * Constructs a new AbstractExecutor.
   * @param exec_ctx the executor context that the executor runs with
   */
  explicit AbstractExecutor(ExecutorContext *exec_ctx) : exec_ctx_{exec_ctx} {}

  /** Virtual destructor. */
  virtual ~AbstractExecutor() = default;

  /**
   * Initializes this executor.
   * @warning This function must be called before Next() is called!
   */
  virtual void Init() = 0;

  /**
   * Produces the next tuple from this executor.
   * @param[out] tuple the next tuple produced by this executor
   * @return true if a tuple was produced, false if there are no more tuples
   */
  virtual bool Next(Tuple *tuple) = 0;

  /** @return the schema of the tuples that this executor produces */
  virtual const Schema *GetOutputSchema() = 0;

  /** @return the executor context in which this executor runs */
  ExecutorContext *GetExecutorContext() { return exec_ctx_; }

 protected:
  ExecutorContext *exec_ctx_;
};
```

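Each executor holds a data member of an AbstractPlanNode subclass that describes the plan to execute. Those plan nodes in turn hold AbstractExpression subclasses, which are used for tasks such as checking whether a predicate holds.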
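The sketch below is a hypothetical miniature expression tree. It gives a rough idea of how a predicate such as id = 1 can be represented and evaluated. Expr, ColumnValue, Constant, Equals and MakeColumnEquals are made-up names. Also, BusTub's AbstractExpression returns a Value, not the int 1/0 used here.

```cpp
#include <cassert>
#include <cstddef>
#include <memory>
#include <utility>
#include <vector>

using Row = std::vector<int>;

// Hypothetical expression node, loosely modeled on AbstractExpression::Evaluate.
struct Expr {
  virtual ~Expr() = default;
  virtual int Evaluate(const Row &row) const = 0;
};

// Leaf: reads one column of the input row.
struct ColumnValue : Expr {
  explicit ColumnValue(std::size_t idx) : idx_(idx) {}
  int Evaluate(const Row &row) const override { return row.at(idx_); }
  std::size_t idx_;
};

// Leaf: a literal constant.
struct Constant : Expr {
  explicit Constant(int v) : v_(v) {}
  int Evaluate(const Row &) const override { return v_; }
  int v_;
};

// Inner node: equality comparison, returning 1 for true and 0 for false.
struct Equals : Expr {
  Equals(std::unique_ptr<Expr> l, std::unique_ptr<Expr> r) : l_(std::move(l)), r_(std::move(r)) {}
  int Evaluate(const Row &row) const override { return l_->Evaluate(row) == r_->Evaluate(row) ? 1 : 0; }
  std::unique_ptr<Expr> l_;
  std::unique_ptr<Expr> r_;
};

// Builds the predicate tree for "WHERE column[idx] = value".
std::unique_ptr<Expr> MakeColumnEquals(std::size_t idx, int value) {
  return std::make_unique<Equals>(std::make_unique<ColumnValue>(idx), std::make_unique<Constant>(value));
}
```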

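Sequential scan

The provided code already includes a TableIterator class for iterating over a TableHeap. In Next() we only need to advance the iterator and check whether the tuple it pointed to satisfies the predicate. If it does, we return that tuple; otherwise we keep iterating: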

```cpp
class SeqScanExecutor : public AbstractExecutor {
 public:
  /**
   * Creates a new sequential scan executor.
   * @param exec_ctx the executor context
   * @param plan the sequential scan plan to be executed
   */
  SeqScanExecutor(ExecutorContext *exec_ctx, const SeqScanPlanNode *plan);

  void Init() override;

  bool Next(Tuple *tuple) override;

  const Schema *GetOutputSchema() override { return plan_->OutputSchema(); }

 private:
  /** The sequential scan plan node to be executed. */
  const SeqScanPlanNode *plan_;
  TableMetadata *table_metadata_;
  TableIterator table_iterator_;
};
```

The implementation:

```cpp
SeqScanExecutor::SeqScanExecutor(ExecutorContext *exec_ctx, const SeqScanPlanNode *plan)
    : AbstractExecutor(exec_ctx),
      plan_(plan),
      table_metadata_(exec_ctx->GetCatalog()->GetTable(plan->GetTableOid())),
      table_iterator_(table_metadata_->table_->Begin(exec_ctx->GetTransaction())) {}

void SeqScanExecutor::Init() {}

bool SeqScanExecutor::Next(Tuple *tuple) {
  auto predicate = plan_->GetPredicate();
  while (table_iterator_ != table_metadata_->table_->End()) {
    // copy the current tuple and advance, so the next call resumes after it
    *tuple = *table_iterator_++;
    if (!predicate || predicate->Evaluate(tuple, &table_metadata_->schema_).GetAs<bool>()) {
      return true;
    }
  }
  return false;
}
```
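The same scan logic can be sketched over an in-memory table. This sketch assumes a table is a std::vector of rows and the predicate is a std::function. Both are hypothetical stand-ins for TableHeap and AbstractExpression. The cursor advances before the predicate check, so the next call resumes right after the last row it examined.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <utility>
#include <vector>

using Row = std::vector<int>;
using Predicate = std::function<bool(const Row &)>;

// Hypothetical in-memory sequential scan; cursor_ plays the role of TableIterator.
class SeqScan {
 public:
  SeqScan(const std::vector<Row> *table, Predicate pred) : table_(table), pred_(std::move(pred)) {}

  // Rewinding here lets the same scan be re-run after another Init().
  void Init() { cursor_ = 0; }

  bool Next(Row *out) {
    while (cursor_ < table_->size()) {
      const Row &row = (*table_)[cursor_++];  // advance first, then test
      if (!pred_ || pred_(row)) {             // an empty predicate means "no WHERE clause"
        *out = row;
        return true;
      }
    }
    return false;
  }

 private:
  const std::vector<Row> *table_;
  Predicate pred_;
  std::size_t cursor_ = 0;
};
```

One difference is deliberate. This sketch rewinds the cursor in Init(), while the SeqScanExecutor above positions its iterator in the constructor, so calling its Init() a second time would not restart the scan.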

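Insert

There are two kinds of insert:

- raw inserts: the values come directly from the insert plan itself, for example INSERT INTO tbl_user VALUES (1, 15), (2, 16)
- not-raw inserts: the tuples come from a child executor, for example INSERT INTO tbl_user1 SELECT * FROM tbl_user2

The insert plan's IsRawInsert() tells the two apart by checking whether its list of child plans is empty: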

```cpp
/** @return true if we embed insert values directly into the plan, false if we have a child plan providing tuples */
bool IsRawInsert() const { return GetChildren().empty(); }
```

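For a raw insert, we build tuples directly from the values stored in the insert plan and insert them into the table. Otherwise, we call the child executor's Next() to obtain tuples and insert those: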

```cpp
class InsertExecutor : public AbstractExecutor {
 public:
  /**
   * Creates a new insert executor.
   * @param exec_ctx the executor context
   * @param plan the insert plan to be executed
   * @param child_executor the child executor to obtain insert values from, can be nullptr
   */
  InsertExecutor(ExecutorContext *exec_ctx, const InsertPlanNode *plan,
                 std::unique_ptr<AbstractExecutor> &&child_executor);

  const Schema *GetOutputSchema() override;

  void Init() override;

  // Note that Insert does not make use of the tuple pointer being passed in.
  // We return false if the insert failed for any reason, and return true if all inserts succeeded.
  bool Next([[maybe_unused]] Tuple *tuple) override;

 private:
  /** The insert plan node to be executed. */
  const InsertPlanNode *plan_;
  std::unique_ptr<AbstractExecutor> child_executor_;
  TableMetadata *table_metadata_;
};
```

The implementation:

```cpp
InsertExecutor::InsertExecutor(ExecutorContext *exec_ctx, const InsertPlanNode *plan,
                               std::unique_ptr<AbstractExecutor> &&child_executor)
    : AbstractExecutor(exec_ctx),
      plan_(plan),
      child_executor_(std::move(child_executor)),
      table_metadata_(exec_ctx->GetCatalog()->GetTable(plan->TableOid())) {}

const Schema *InsertExecutor::GetOutputSchema() { return plan_->OutputSchema(); }

void InsertExecutor::Init() {
  // a not-raw insert pulls tuples from its child, so the child must be initialized first
  if (!plan_->IsRawInsert()) {
    child_executor_->Init();
  }
}

bool InsertExecutor::Next([[maybe_unused]] Tuple *tuple) {
  RID rid;
  auto txn = exec_ctx_->GetTransaction();
  if (plan_->IsRawInsert()) {
    // raw insert: build each tuple from the values embedded in the plan
    for (const auto &values : plan_->RawValues()) {
      Tuple insert_tuple(values, &table_metadata_->schema_);
      if (!table_metadata_->table_->InsertTuple(insert_tuple, &rid, txn)) {
        return false;
      }
    }
  } else {
    // not-raw insert: insert every tuple the child produces
    Tuple child_tuple;
    while (child_executor_->Next(&child_tuple)) {
      if (!table_metadata_->table_->InsertTuple(child_tuple, &rid, txn)) {
        return false;
      }
    }
  }
  return true;
}
```
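The two insert paths can be sketched without BusTub as follows. This sketch assumes a table is a std::vector of rows with a fixed capacity. InsertRow stands in for TableHeap::InsertTuple, and an empty child function marks a raw insert. All of these names are hypothetical. As in the executor above, the first failed insert makes the whole call return false.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <vector>

using Row = std::vector<int>;
// Hypothetical child interface: fills *out and returns true while rows remain.
using ChildNext = std::function<bool(Row *)>;

// Appends one row; returns false once the hypothetical capacity is reached,
// standing in for TableHeap::InsertTuple failing.
bool InsertRow(std::vector<Row> *table, std::size_t capacity, const Row &row) {
  if (table->size() >= capacity) {
    return false;
  }
  table->push_back(row);
  return true;
}

// Sketch of InsertExecutor::Next: with no child (raw insert) the rows come from the
// plan's values; otherwise rows are pulled from the child until it is exhausted.
bool InsertAll(std::vector<Row> *table, std::size_t capacity, const std::vector<Row> &raw_values,
               const ChildNext &child) {
  if (!child) {  // mirrors IsRawInsert(): no child plan
    for (const Row &values : raw_values) {
      if (!InsertRow(table, capacity, values)) {
        return false;
      }
    }
    return true;
  }
  Row row;
  while (child(&row)) {
    if (!InsertRow(table, capacity, row)) {
      return false;
    }
  }
  return true;
}
```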

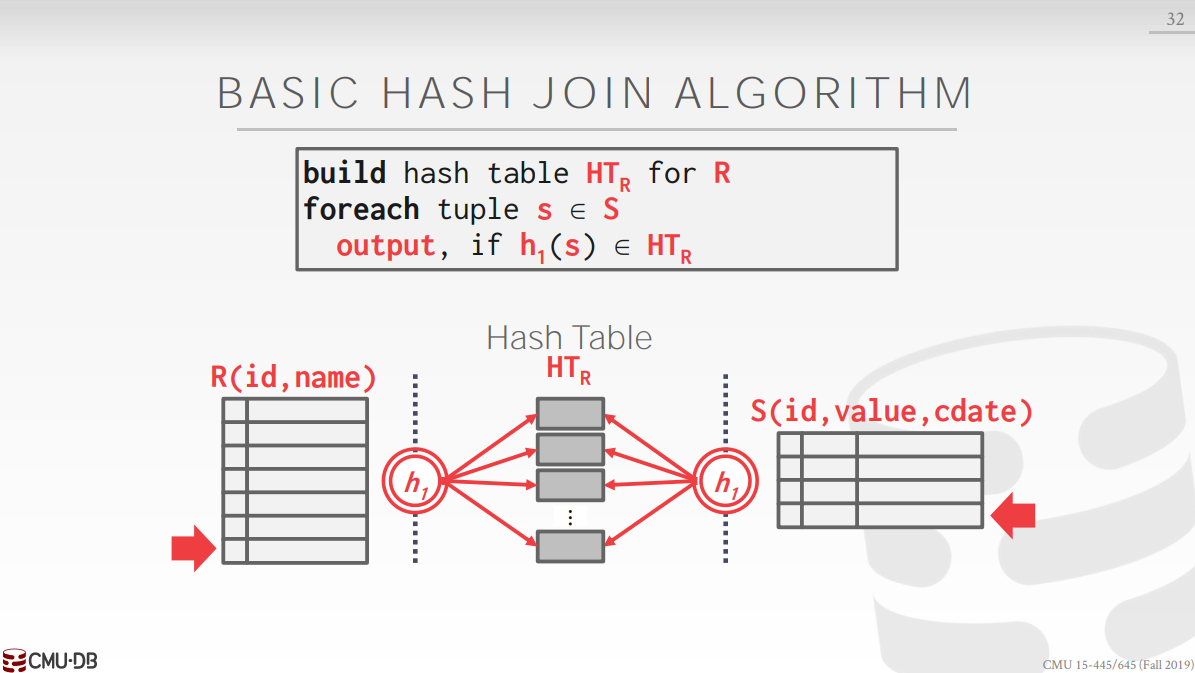

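Hash join

The hash join executor uses the most basic hash join algorithm, without optimizations such as Bloom filters. The algorithm has two phases:

- Build: for every tuple of the left table, hash the values of the columns referenced by the join condition and insert the result into a hash table, with the hash as the key and the tuple (or its row id) as the value. Several tuples with the same hash value may be inserted.
- Probe: for every tuple of the right table, hash the values of its join columns in the same way and look up all left tuples with that hash value. Then evaluate the join condition to keep only the exact matches.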
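The two phases can be sketched in a self-contained way with std::unordered_multimap. This illustrates the algorithm only and is not the BusTub executor. Rows are std::vector<int>, the join is assumed to be a single-column equi-join left[lk] = right[rk], and the output simply concatenates the left and right columns. Every match of a right row is emitted, and the key is compared again after the hash lookup.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <unordered_map>
#include <utility>
#include <vector>

using Row = std::vector<int>;

// Hypothetical in-memory hash join on left[lk] = right[rk].
std::vector<Row> HashJoin(const std::vector<Row> &left, std::size_t lk, const std::vector<Row> &right,
                          std::size_t rk) {
  std::hash<int> hasher;
  // Build phase: hash value -> left row; duplicate hashes are kept side by side.
  std::unordered_multimap<std::size_t, Row> table;
  for (const Row &l : left) {
    table.emplace(hasher(l[lk]), l);
  }
  // Probe phase: look up every left row sharing the right row's hash.
  std::vector<Row> out;
  for (const Row &r : right) {
    auto [begin, end] = table.equal_range(hasher(r[rk]));
    for (auto it = begin; it != end; ++it) {
      const Row &l = it->second;
      if (l[lk] != r[rk]) {
        continue;  // same hash but a different key: not a real match
      }
      Row joined = l;  // output row = left columns followed by right columns
      joined.insert(joined.end(), r.begin(), r.end());
      out.push_back(std::move(joined));  // keep every match, not only the first
    }
  }
  return out;
}
```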

The function that hashes a tuple is:

```cpp
/**
 * Hashes a tuple by evaluating it against every expression on the given schema, combining all non-null hashes.
 * @param tuple tuple to be hashed
 * @param schema schema to evaluate the tuple on
 * @param exprs expressions to evaluate the tuple with
 * @return the hashed tuple
 */
hash_t HashJoinExecutor::HashValues(const Tuple *tuple, const Schema *schema,
                                    const std::vector<const AbstractExpression *> &exprs) {
  hash_t curr_hash = 0;
  // For every expression,
  for (const auto &expr : exprs) {
    // We evaluate the tuple on the expression and schema.
    Value val = expr->Evaluate(tuple, schema);
    // If this produces a value,
    if (!val.IsNull()) {
      // We combine the hash of that value into our current hash.
      curr_hash = HashUtil::CombineHashes(curr_hash, HashUtil::HashValue(&val));
    }
  }
  return curr_hash;
}
```
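The null-skipping combine step can be mimicked in isolation. In this sketch std::optional<int> stands in for a nullable Value. Combine uses the well-known Boost-style hash_combine formula as a substitute; it is not BusTub's HashUtil::CombineHashes.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <optional>
#include <vector>

using hash_t = std::size_t;

// Boost-style hash_combine, used here as a stand-in for HashUtil::CombineHashes.
hash_t Combine(hash_t seed, hash_t h) { return seed ^ (h + 0x9e3779b9 + (seed << 6) + (seed >> 2)); }

// Hashes the join-key values of one tuple. std::nullopt plays the role of SQL NULL and is
// skipped, like the !val.IsNull() check in HashValues.
hash_t HashKeyValues(const std::vector<std::optional<int>> &values) {
  hash_t curr = 0;
  for (const auto &v : values) {
    if (v.has_value()) {
      curr = Combine(curr, std::hash<int>{}(*v));
    }
  }
  return curr;
}
```

The tests show one consequence: the keys (1, NULL) and (1) hash identically. That is one more reason the probe phase must re-check the join predicate.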

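To make testing easier, the project provides a simple hash table, SimpleHashJoinHashTable, for inserting (hash, tuple) pairs. It lives entirely in memory, so with enough tuples it will no longer fit. That is why Task 3 replaces it with the LinearProbeHashTable implemented in the previous project.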

```cpp
using HT = SimpleHashJoinHashTable;

class HashJoinExecutor : public AbstractExecutor {
 public:
  /**
   * Creates a new hash join executor.
   * @param exec_ctx the context that the hash join should be performed in
   * @param plan the hash join plan node
   * @param left the left child, used by convention to build the hash table
   * @param right the right child, used by convention to probe the hash table
   */
  HashJoinExecutor(ExecutorContext *exec_ctx, const HashJoinPlanNode *plan, std::unique_ptr<AbstractExecutor> &&left,
                   std::unique_ptr<AbstractExecutor> &&right);

  /** @return the JHT in use. Do not modify this function, otherwise you will get a zero. */
  const HT *GetJHT() const { return &jht_; }

  const Schema *GetOutputSchema() override { return plan_->OutputSchema(); }

  void Init() override;

  bool Next(Tuple *tuple) override;

  /** Defined out of class; see HashJoinExecutor::HashValues above. */
  hash_t HashValues(const Tuple *tuple, const Schema *schema, const std::vector<const AbstractExpression *> &exprs);

 private:
  /** The hash join plan node. */
  const HashJoinPlanNode *plan_;
  std::unique_ptr<AbstractExecutor> left_executor_;
  std::unique_ptr<AbstractExecutor> right_executor_;
  /** The comparator is used to compare hashes. */
  [[maybe_unused]] HashComparator jht_comp_{};
  /** The identity hash function. */
  IdentityHashFunction jht_hash_fn_{};
  /** The hash table that we are using. */
  HT jht_;
  /** The number of buckets in the hash table. */
  static constexpr uint32_t jht_num_buckets_ = 2;
  /** Probe state kept across Next() calls: the current right tuple and its candidate left tuples. */
  Tuple right_tuple_;
  std::vector<Tuple> left_tuples_;
  size_t left_idx_{0};
};
```

Following the algorithm above gives the implementation below. One right tuple can match several left tuples, so the probe state lives in members. That way each call to Next() returns one joined tuple without discarding the remaining matches:

```cpp
HashJoinExecutor::HashJoinExecutor(ExecutorContext *exec_ctx, const HashJoinPlanNode *plan,
                                   std::unique_ptr<AbstractExecutor> &&left, std::unique_ptr<AbstractExecutor> &&right)
    : AbstractExecutor(exec_ctx),
      plan_(plan),
      left_executor_(std::move(left)),
      right_executor_(std::move(right)),
      jht_("join hash table", exec_ctx->GetBufferPoolManager(), jht_comp_, jht_num_buckets_, jht_hash_fn_) {}

void HashJoinExecutor::Init() {
  left_executor_->Init();
  right_executor_->Init();
  // build phase: hash every left tuple on the left join keys
  Tuple tuple;
  while (left_executor_->Next(&tuple)) {
    auto h = HashValues(&tuple, left_executor_->GetOutputSchema(), plan_->GetLeftKeys());
    jht_.Insert(exec_ctx_->GetTransaction(), h, tuple);
  }
}

bool HashJoinExecutor::Next(Tuple *tuple) {
  auto predicate = plan_->Predicate();
  auto left_schema = left_executor_->GetOutputSchema();
  auto right_schema = right_executor_->GetOutputSchema();
  auto out_schema = GetOutputSchema();
  while (true) {
    // first drain the remaining candidates of the current right tuple
    while (left_idx_ < left_tuples_.size()) {
      const Tuple &left_tuple = left_tuples_[left_idx_++];
      // equal hashes do not imply equal keys, so re-check the join predicate
      if (predicate != nullptr &&
          !predicate->EvaluateJoin(&left_tuple, left_schema, &right_tuple_, right_schema).GetAs<bool>()) {
        continue;
      }
      // create the output tuple column by column
      std::vector<Value> values;
      for (uint32_t i = 0; i < out_schema->GetColumnCount(); ++i) {
        auto expr = out_schema->GetColumn(i).GetExpr();
        values.push_back(expr->EvaluateJoin(&left_tuple, left_schema, &right_tuple_, right_schema));
      }
      *tuple = Tuple(values, out_schema);
      return true;
    }
    // probe phase: fetch the next right tuple and all left tuples with the same hash value
    if (!right_executor_->Next(&right_tuple_)) {
      return false;
    }
    auto h = HashValues(&right_tuple_, right_schema, plan_->GetRightKeys());
    left_tuples_.clear();
    left_idx_ = 0;
    jht_.GetValue(exec_ctx_->GetTransaction(), h, &left_tuples_);
  }
}
```

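Aggregation

The aggregation executor maintains a hash table, SimpleAggregationHashTable, together with an iterator over it, aht_iterator_. Each key-value pair inserted into the hash table immediately updates the aggregate results, and the final query results are read from that same table: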

```cpp
AggregationExecutor::AggregationExecutor(ExecutorContext *exec_ctx, const AggregationPlanNode *plan,
                                         std::unique_ptr<AbstractExecutor> &&child)
    : AbstractExecutor(exec_ctx),
      plan_(plan),
      child_(std::move(child)),
      aht_(plan->GetAggregates(), plan->GetAggregateTypes()),
      aht_iterator_(aht_.Begin()) {}

const AbstractExecutor *AggregationExecutor::GetChildExecutor() const { return child_.get(); }

const Schema *AggregationExecutor::GetOutputSchema() { return plan_->OutputSchema(); }

void AggregationExecutor::Init() {
  child_->Init();
  // initialize aggregation hash table
  Tuple tuple;
  while (child_->Next(&tuple)) {
    aht_.InsertCombine(MakeKey(&tuple), MakeVal(&tuple));
  }
  aht_iterator_ = aht_.Begin();
}

bool AggregationExecutor::Next(Tuple *tuple) {
  auto having = plan_->GetHaving();
  auto out_schema = GetOutputSchema();
  while (aht_iterator_ != aht_.End()) {
    auto group_bys = aht_iterator_.Key().group_bys_;
    auto aggregates = aht_iterator_.Val().aggregates_;
    if (!having || having->EvaluateAggregate(group_bys, aggregates).GetAs<bool>()) {
      std::vector<Value> values;
      for (uint32_t i = 0; i < out_schema->GetColumnCount(); ++i) {
        auto expr = out_schema->GetColumn(i).GetExpr();
        values.push_back(expr->EvaluateAggregate(group_bys, aggregates));
      }
      *tuple = Tuple(values, out_schema);
      ++aht_iterator_;
      return true;
    }
    ++aht_iterator_;
  }
  return false;
}
```
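The idea behind InsertCombine can be sketched as a map from group key to a running aggregate. This sketch assumes a single integer GROUP BY column and one integer aggregated column. AggState and AggTable are hypothetical, and std::map replaces the hash table only so that the output order is deterministic.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>
#include <functional>
#include <map>
#include <utility>
#include <vector>

// Hypothetical running aggregate of one group: COUNT(*), SUM, MIN and MAX of one column.
struct AggState {
  int64_t count = 0;
  int64_t sum = 0;
  int min = 0;
  int max = 0;
};

class AggTable {
 public:
  // Like InsertCombine: fold each input row into its group right away; raw rows are not stored.
  void InsertCombine(int group_key, int value) {
    auto [it, inserted] = groups_.try_emplace(group_key);
    AggState &s = it->second;
    if (inserted) {
      s.min = value;
      s.max = value;
    } else {
      s.min = std::min(s.min, value);
      s.max = std::max(s.max, value);
    }
    s.count += 1;
    s.sum += value;
  }

  // Emits the groups that satisfy the HAVING predicate (all groups if it is empty).
  std::vector<std::pair<int, AggState>> Scan(const std::function<bool(const AggState &)> &having) const {
    std::vector<std::pair<int, AggState>> out;
    for (const auto &[key, state] : groups_) {
      if (!having || having(state)) {
        out.emplace_back(key, state);
      }
    }
    return out;
  }

 private:
  std::map<int, AggState> groups_;  // std::map only for a deterministic output order
};
```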

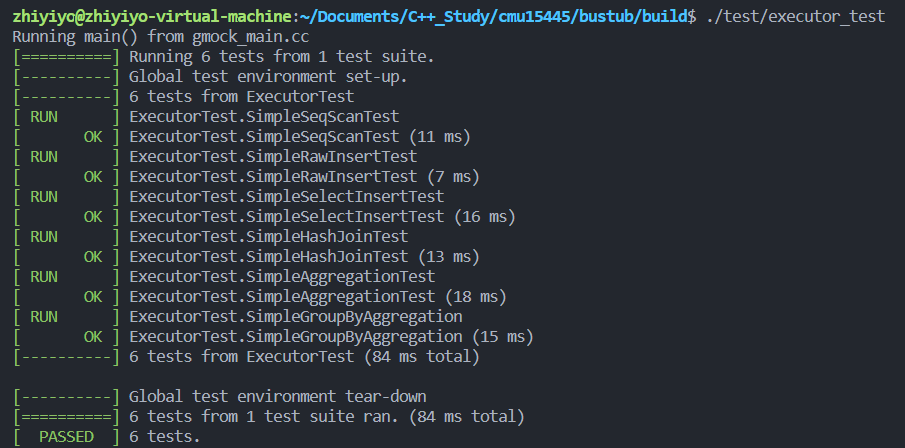

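Testing

The test results are shown in the figure below; all test cases pass:

Linear probe hash table

This task replaces the SimpleHashJoinHashTable in the hash join with LinearProbeHashTable, so that the hash table over the left table can be stored on disk. The handout also suggests implementing TmpTuplePage to hold the left table's tuples. The existing TablePage would serve the same purpose, but TmpTuplePage has a leaner structure and pairs nicely with Tuple::DeserializeFrom. Implementing it also deepens one's understanding of how tuples are stored.

TmpTuplePage has the following layout: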

```
-----------------------------------------------------------------------------------------------------------
| PageId (4) | LSN (4) | FreeSpace (4) | (free space) | TupleSize2 | TupleData2 | TupleSize1 | TupleData1 |
-----------------------------------------------------------------------------------------------------------
\------------------V-------------------/              ^
                 header                               free space pointer
```

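The first 12 bytes are the header. They record the page id, the LSN, and the free space pointer. The free space pointer is an offset from the start of the page, where the page id is stored. If the page holds no tuples and its size is PAGE_SIZE, the free space pointer equals PAGE_SIZE. Tuples are inserted from the end of the page toward the header, and each tuple's data is preceded by its 4-byte size. Inserting one tuple therefore consumes tuple.size_ + 4 bytes.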
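The layout arithmetic can be checked with a stand-alone byte buffer. This sketch assumes a 4096-byte page, BusTub's default PAGE_SIZE, and uses hypothetical helper names. It writes the tuple bytes from the end of the page and puts the 4-byte length directly below them. It then reads a tuple back in the [size][data] order that Tuple::DeserializeFrom consumes.

```cpp
#include <array>
#include <cassert>
#include <cstdint>
#include <cstring>
#include <string>

constexpr uint32_t kPageSize = 4096;      // assumption: BusTub's default PAGE_SIZE
constexpr uint32_t kHeaderSize = 12;      // page id (4) + LSN (4) + free space pointer (4)
constexpr uint32_t kFreeSpaceOffset = 8;  // where the free space pointer is stored
constexpr uint32_t kSizeField = 4;        // each tuple is prefixed by its 4-byte length

using PageBuf = std::array<char, kPageSize>;

uint32_t GetFsp(const PageBuf &p) {
  uint32_t v;
  std::memcpy(&v, p.data() + kFreeSpaceOffset, sizeof(v));
  return v;
}

void SetFsp(PageBuf *p, uint32_t v) { std::memcpy(p->data() + kFreeSpaceOffset, &v, sizeof(v)); }

// An empty page: zeroed header, free space pointer at the very end of the page.
void InitPage(PageBuf *p) {
  p->fill(0);
  SetFsp(p, kPageSize);
}

// Writes the tuple bytes, then their length just below them. Returns the offset of the
// length field (what TmpTuple stores), or 0 if the page is full (0 is inside the header).
uint32_t InsertBytes(PageBuf *p, const std::string &bytes) {
  const auto size = static_cast<uint32_t>(bytes.size());
  if (GetFsp(*p) - kHeaderSize < size + kSizeField) {
    return 0;
  }
  uint32_t fsp = GetFsp(*p) - size;
  std::memcpy(p->data() + fsp, bytes.data(), size);
  fsp -= kSizeField;
  std::memcpy(p->data() + fsp, &size, kSizeField);
  SetFsp(p, fsp);
  return fsp;
}

// Reads a tuple back from an offset: first the length, then that many data bytes.
std::string ReadBytes(const PageBuf &p, uint32_t offset) {
  uint32_t size;
  std::memcpy(&size, p.data() + offset, kSizeField);
  return std::string(p.data() + offset + kSizeField, size);
}
```

For example, a 10-byte tuple in an empty page lands at offset 4096 - 10 - 4 = 4082, and the free space pointer moves to 4082.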

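With that in mind, implementing TmpTuplePage is straightforward: just mimic TablePage. TmpTuplePage must be declared a friend of Tuple, because it reads Tuple's private size_ and data_ fields: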

```cpp
class TmpTuplePage : public Page {
 public:
  void Init(page_id_t page_id, uint32_t page_size) {
    memcpy(GetData(), &page_id, sizeof(page_id));
    SetFreeSpacePointer(page_size);
  }

  /** @return the page ID of this temp table page */
  page_id_t GetTablePageId() { return *reinterpret_cast<page_id_t *>(GetData()); }

  bool Insert(const Tuple &tuple, TmpTuple *out) {
    // determine whether there is enough space to insert tuple
    if (GetFreeSpaceRemaining() < tuple.size_ + SIZE_TUPLE) {
      return false;
    }
    // insert tuple and its size
    SetFreeSpacePointer(GetFreeSpacePointer() - tuple.size_);
    memcpy(GetData() + GetFreeSpacePointer(), tuple.data_, tuple.size_);
    SetFreeSpacePointer(GetFreeSpacePointer() - SIZE_TUPLE);
    memcpy(GetData() + GetFreeSpacePointer(), &tuple.size_, SIZE_TUPLE);
    out->SetPageId(GetPageId());
    out->SetOffset(GetFreeSpacePointer());
    return true;
  }

 private:
  static_assert(sizeof(page_id_t) == 4);

  static constexpr size_t SIZE_TABLE_PAGE_HEADER = 12;
  static constexpr size_t SIZE_TUPLE = 4;
  static constexpr size_t OFFSET_FREE_SPACE = 8;

  /** @return pointer to the end of the current free space, see header comment */
  uint32_t GetFreeSpacePointer() { return *reinterpret_cast<uint32_t *>(GetData() + OFFSET_FREE_SPACE); }

  /**
   * Set the pointer of the end of current free space.
   * @param free_space_ptr the pointer relative to data_
   */
  void SetFreeSpacePointer(uint32_t free_space_ptr) {
    memcpy(GetData() + OFFSET_FREE_SPACE, &free_space_ptr, sizeof(uint32_t));
  }

  /** @return the size of free space */
  uint32_t GetFreeSpaceRemaining() { return GetFreeSpacePointer() - SIZE_TABLE_PAGE_HEADER; }
};
```

Insert() fills in the TmpTuple passed to it. TmpTuple serves as the value in the left table's hash table. The tuple itself lives in a TmpTuplePage and is retrieved using the offset stored in the TmpTuple:

```cpp
class TmpTuple {
 public:
  TmpTuple(page_id_t page_id, size_t offset) : page_id_(page_id), offset_(offset) {}

  inline bool operator==(const TmpTuple &rhs) const { return page_id_ == rhs.page_id_ && offset_ == rhs.offset_; }

  page_id_t GetPageId() const { return page_id_; }
  size_t GetOffset() const { return offset_; }
  void SetPageId(page_id_t page_id) { page_id_ = page_id; }
  void SetOffset(size_t offset) { offset_ = offset; }

 private:
  page_id_t page_id_;
  size_t offset_;
};
```
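Why store a TmpTuple in the hash table instead of the Tuple itself? The hash table's entries live in pages that may be written to disk and read back, so only plain bytes survive. A Tuple owns a heap buffer through a pointer, while a (page id, offset) pair is fixed-size and trivially copyable. The sketch below illustrates this with a hypothetical TmpTupleHandle that mirrors TmpTuple's two fields. The round trip through a byte slot stands in for a page being flushed and fetched again.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <type_traits>

using page_id_t = int32_t;

// Hypothetical copy of TmpTuple's fields: a fixed-size (page id, offset) handle.
struct TmpTupleHandle {
  page_id_t page_id;
  std::size_t offset;
};

// Only plain bytes survive a trip through a disk page, so the value type must be trivially copyable.
static_assert(std::is_trivially_copyable_v<TmpTupleHandle>);

// Copies the handle into a raw byte slot and back, as a flush and re-fetch of the page would.
TmpTupleHandle RoundTrip(const TmpTupleHandle &h) {
  char slot[sizeof(TmpTupleHandle)];
  std::memcpy(slot, &h, sizeof(h));
  TmpTupleHandle back;
  std::memcpy(&back, slot, sizeof(back));
  return back;
}
```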

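Next, switch the hash table to LinearProbeHashTable. Its template members are defined in linear_probe_hash_table.cpp, so that file needs an explicit instantiation for the new key and value types:

```cpp
template class LinearProbeHashTable<hash_t, TmpTuple, HashComparator>;
```

HashTableBlockPage also needs an explicit instantiation, in hash_table_block_page.cpp:

```cpp
template class HashTableBlockPage<hash_t, TmpTuple, HashComparator>;
```

Then update HT:

```cpp
using HashJoinKeyType = hash_t;
using HashJoinValType = TmpTuple;
using HT = LinearProbeHashTable<HashJoinKeyType, HashJoinValType, HashComparator>;
```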

Because there may be many tuples, setting jht_num_buckets_ to 1000 reduces the number of resizes. Finally, the implementation:

```cpp
void HashJoinExecutor::Init() {
  left_executor_->Init();
  right_executor_->Init();
  // create the first temp tuple page; as TableHeap does with TablePage, cast the Page itself
  auto buffer_pool_manager = exec_ctx_->GetBufferPoolManager();
  page_id_t tmp_page_id;
  auto tmp_page = reinterpret_cast<TmpTuplePage *>(buffer_pool_manager->NewPage(&tmp_page_id));
  tmp_page->Init(tmp_page_id, PAGE_SIZE);
  // build phase: copy each left tuple into a temp page and hash its TmpTuple handle
  Tuple tuple;
  TmpTuple tmp_tuple(tmp_page_id, 0);
  while (left_executor_->Next(&tuple)) {
    auto h = HashValues(&tuple, left_executor_->GetOutputSchema(), plan_->GetLeftKeys());
    // insert the tuple into the page; if the page is full, unpin it and create a new one
    if (!tmp_page->Insert(tuple, &tmp_tuple)) {
      buffer_pool_manager->UnpinPage(tmp_page_id, true);
      tmp_page = reinterpret_cast<TmpTuplePage *>(buffer_pool_manager->NewPage(&tmp_page_id));
      tmp_page->Init(tmp_page_id, PAGE_SIZE);
      // retry on the empty page (assumes any single tuple fits in one page)
      tmp_page->Insert(tuple, &tmp_tuple);
    }
    jht_.Insert(exec_ctx_->GetTransaction(), h, tmp_tuple);
  }
  buffer_pool_manager->UnpinPage(tmp_page_id, true);
}
```

```cpp
// Next() keeps its probe state in three extra HashJoinExecutor members, so that a right
// tuple with several matching left tuples produces all of them:
//   Tuple right_tuple_;                 // the right tuple currently being probed
//   std::vector<TmpTuple> tmp_tuples_;  // left entries sharing its hash value
//   size_t tmp_idx_{0};                 // next entry to check
bool HashJoinExecutor::Next(Tuple *tuple) {
  auto buffer_pool_manager = exec_ctx_->GetBufferPoolManager();
  auto left_schema = left_executor_->GetOutputSchema();
  auto right_schema = right_executor_->GetOutputSchema();
  auto predicate = plan_->Predicate();
  auto out_schema = GetOutputSchema();
  while (true) {
    while (tmp_idx_ < tmp_tuples_.size()) {
      // convert the TmpTuple handle back into the left tuple
      const TmpTuple &tmp_tuple = tmp_tuples_[tmp_idx_++];
      auto page_id = tmp_tuple.GetPageId();
      auto tmp_page = buffer_pool_manager->FetchPage(page_id);
      Tuple left_tuple;
      left_tuple.DeserializeFrom(tmp_page->GetData() + tmp_tuple.GetOffset());
      buffer_pool_manager->UnpinPage(page_id, false);
      // equal hashes do not imply equal keys, so re-check the join predicate
      if (predicate != nullptr &&
          !predicate->EvaluateJoin(&left_tuple, left_schema, &right_tuple_, right_schema).GetAs<bool>()) {
        continue;
      }
      // create the output tuple
      std::vector<Value> values;
      for (uint32_t i = 0; i < out_schema->GetColumnCount(); ++i) {
        auto expr = out_schema->GetColumn(i).GetExpr();
        values.push_back(expr->EvaluateJoin(&left_tuple, left_schema, &right_tuple_, right_schema));
      }
      *tuple = Tuple(values, out_schema);
      return true;
    }
    // fetch the next right tuple and the left entries with the same hash value
    if (!right_executor_->Next(&right_tuple_)) {
      return false;
    }
    auto h = HashValues(&right_tuple_, right_schema, plan_->GetRightKeys());
    tmp_tuples_.clear();  // reset before each lookup
    tmp_idx_ = 0;
    jht_.GetValue(exec_ctx_->GetTransaction(), h, &tmp_tuples_);
  }
}
```
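The page-rollover logic in Init() above can be modeled without a buffer pool. In this hypothetical sketch, only each page's free space pointer is tracked, and every page is assumed to have the same 12-byte header. TmpPageSpill and Handle are made-up names. A tuple that does not fit starts a new page, just as the executor unpins the full page and calls NewPage().

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical handle, analogous to TmpTuple: (page index, offset of the size field).
struct Handle {
  std::size_t page_id;
  uint32_t offset;
};

// Models the temp pages built in HashJoinExecutor::Init(): each tuple takes size + 4 bytes,
// allocated from the end of the current page; a full page triggers a fresh page and a retry.
class TmpPageSpill {
 public:
  explicit TmpPageSpill(uint32_t page_size) : page_size_(page_size) { fsp_.push_back(page_size_); }

  Handle Insert(uint32_t tuple_size) {
    const uint32_t need = tuple_size + kSizeField;
    if (fsp_.back() - kHeaderSize < need) {
      fsp_.push_back(page_size_);  // current page is full: start a new, empty page
    }
    // the retry assumes one tuple always fits in an empty page
    assert(fsp_.back() - kHeaderSize >= need && "tuple larger than an empty page");
    fsp_.back() -= need;
    return Handle{fsp_.size() - 1, fsp_.back()};
  }

  std::size_t PageCount() const { return fsp_.size(); }

 private:
  static constexpr uint32_t kHeaderSize = 12;  // page id + LSN + free space pointer
  static constexpr uint32_t kSizeField = 4;    // length prefix stored before each tuple
  uint32_t page_size_;
  std::vector<uint32_t> fsp_;  // free space pointer of every page allocated so far
};
```

With 64-byte pages, two 20-byte tuples fit in the first page at offsets 40 and 16. A third one no longer fits (16 - 12 < 24), so it opens a second page.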

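The test results are as follows:

Summary

This project deepens one's understanding of the catalog, query plans, the iterator model, and the layout of tuple pages. All in all, a very rewarding project. That's all!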